
From YouTube: Kubernetes SIG Storage - bi-weekly meeting 20210128

Description

Meeting of Kubernetes Storage Special-Interest-Group (SIG) - 28 January 2021

Meeting Notes/Agenda: -

Find out more about the Storage SIG here: https://github.com/kubernetes/community/tree/master/sig-storage

Moderator: Xing Yang (VMware)

A

A

So

if

you

have

a

feature

that

you

want

to

get

into

this

release

make

sure

that

you

pin

the

leads

to

get

the

caps

track

tracked

in

the

this

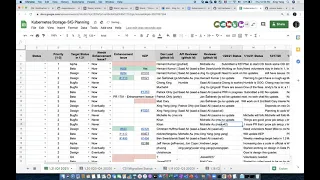

spreadsheet,

and

then

you

must

make

sure

that

your

caps

are

in

the

implementable

state

and

you

need

to

do

the

production

readiness

review.

So

there

are

a

few

requirements

for

that

for

the

cup

okay.

So,

let's

go

to

spreadsheet.

A

B

Yeah, sorry, I was trying to find mute. Okay, so we have a KEP. I opened a KEP, there's the enhancement file, it's an alpha feature, and it's being reviewed. David Eads did the PRR, the production readiness review, for it, and I am addressing his comments. Then there's a parallel CSI proposal to make the changes.

B

B

B

So the current status is that Sung, Abhishek, and Neha, who are folks from Microsoft, are helping us out. We are fixing some of the issues in the 1.21 time frame, but the big things that require a KEP, like the recovery from resize failure, I think we may not be able to get in time for 1.21.

A

B

A

A

E

A

A

A

F

G

Sorry for that. So I've restarted the discussion around the KEPs. The PRR, the production readiness review, was still missing, and I have now got the attention of my reviewer, so we have made good progress on the storage capacity one. The next one, generic ephemeral inline volumes, will come soon, technically.

G

G

So I'm waiting for that, and then, if that's agreeable, I'll start the implementation work. But the change itself is entirely in external-provisioner, which means that we are a bit less constrained by the Kubernetes deadlines. We still want to have it ready, of course, but...

G

G

It's currently ambiguous in the CSI spec, and I think we wanted to clarify whether CSI drivers can be made more precise by returning two values, or by clarifying in the spec what the value is that we return. Based on that, we'll probably update the in-tree representation of that information in the CSIStorageCapacity object. So that'll happen next week, I hope. Okay.

H

G

A

H

H

A

H

A

A

K

Yes, an issue has been filed, and a KEP has been pushed which outlines the temporary design and largely mirrors the PVC namespace transfer, which has not yet been fully merged, and it relies on the same API. So the design is still being tentatively discussed and the KEP needs to be reviewed, but it's not imperative for this quarter. Basically, we have a design and we're iterating on it.

A

A

So I attended one of the SIG Node meetings and asked them for some input. They mentioned something called the node problem detector, which is actually a DaemonSet that does some monitoring on the node and then either exports that to Prometheus or to an event, or, if it's a permanent error, they actually put that as a condition on the node. So, well, we are actually sending those events to your pod.

A

A

A

J

A

A

A

A

Okay, so the next one is changed block tracking. So, a design is being worked on. We had another meeting and got some input from Microsoft on the API. I think we still need to get some input from the EBS side. We don't really have anyone working on that, and their API is a little different from the rest. So that's still one remaining thing we want to resolve.

A

L

A

L

There are more discussions around this, and I don't think I can talk through all of them, but feel free to join the CSI migration meeting or just look at the notes. Regarding the core GA, I had three PRs related to the core GA out for review. I think two of them are already there, and one of them is related to the migration metrics.

L

It's still a work in progress, and Michelle and Patrick are trying to help review that. I guess that's all about it. One thing I wanted to know is whether there is anyone from AWS on the call, because I wanted to make them aware of this timeline. I don't know if anyone from AWS attended the last meeting.

L

L

I'm sorry, by the way, one thing I also wanted to mention about the core CSI migration: I had a PR which is, what's it called, it's called "replace the CSI migration complete flag with the in-tree plugin unregister flag". That means the existing CSI migration complete flags will just be removed directly, because they are still in alpha, and we will replace them with a new flag called in-tree plugin something unregister.

L

So basically the idea is that, with this new flag, we are decoupling unregistering the in-tree plugin from CSI migration. Previously, if we wanted to disable the in-tree plugin, we had to enable the CSI migration complete flag to do that. That means you had to have the CSI migration feature turned on. But say there's a cluster that wants to go directly with CSI and not support in-tree volumes at all, it can do so directly.
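
Editor's illustration, not part of the meeting: the decoupling described above can be sketched as two small predicates. This is a deliberately simplified model under stated assumptions, not Kubernetes code; the function and parameter names are hypothetical stand-ins for the flags mentioned by the speaker.

```python
def in_tree_registered_before(csi_migration: bool, migration_complete: bool) -> bool:
    """Old coupling (simplified): the in-tree plugin is dropped only when
    CSI migration is turned on AND the 'complete' flag is set."""
    return not (csi_migration and migration_complete)


def in_tree_registered_after(plugin_unregister: bool) -> bool:
    """New flag (simplified): unregistering the in-tree plugin no longer
    depends on the migration flags at all."""
    return not plugin_unregister


# A CSI-only cluster that never turns migration on:
# under the old coupling the in-tree plugin stays registered ...
print(in_tree_registered_before(csi_migration=False, migration_complete=True))  # True
# ... while the new, independent flag can unregister it directly.
print(in_tree_registered_after(plugin_unregister=True))  # False
```

The point of the sketch is only that the second predicate takes no migration input, which is the decoupling the speaker describes.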

L

A

A

B

So the one more item was, there were three things, I think. One was the disk format. The second one was that we should explicitly deprecate older versions of vSphere in this release so that we can meet the 1.24 timeline. And the third thing was that Michelle pointed out the hardware version. If we are not going to support, let's say, 15, then we should...

B

A

A

C

A

C

A

H

C

L

H

A

A

H

M

A

H

A

D

D

I haven't been able to find anybody in my company who knows the upstream OpenStack Cinder CSI driver, and there are some tasks in the migration that we discussed last Friday: we need to update the upstream tools, the upstream CI, and this kind of stuff, and we don't have the expertise here. So if there is anybody on this call or in the CSI community who knows about the OpenStack external cloud provider and the CSI driver upstream, like the install tools and the CI, we need help there. Otherwise we can...

D

D

B

I actually looked at the contribution history and pinged the owners of the SIG OpenStack cloud provider, about three people, and I think Dims this morning. I didn't get anything from the cloud provider owners, but Dims was asking what the specific tasks for migration are. The answer is things like install tools and CI, but I think it would help if we can provide more accurate guidance on what we need.

B

B

C

I

A

A

A

J

C

A

A

A

Okay, I don't see this in the tracking sheet yet; maybe they just didn't add it. I don't see anything from the SIG in that tracking sheet. Okay, so the next one is immutable Secrets and ConfigMaps. This one is already done. That's really quick. Okay, the next one is "PVCs created by StatefulSet will not be auto-removed", and I think we are losing track, because I don't know if KK is still working on that.

A

A

A

Okay, thanks. The next one is volume expansion. I think this one is still in design, and it's also KK, probably. I think he's working on the PVC deletion first. So the next one is ContainerNotifier. Yes, I'm working with Xiangqian. He is actually updating the KEP, and he will try to schedule a meeting with Tim to discuss the API changes, so hopefully we can get that one done soon. And the next one.

A

A

C

A

L

A

Okay, thanks. I think that's all we have on this spreadsheet, so we'll go back to the agenda doc. We have a few items. I think this is from the last meeting, but it looks like Divyen is not on the call. He has this one, but I'd like to actually look at it. It's this issue that he opened: basically, the volume leaks when the PVC is deleted while the associated PV is in the Terminating state. So basically what happened is this: he created a PVC.

A

It has the PV and PVC bound, with the reclaim policy set to Delete. Now, if you go ahead and try to delete the PV first, it's going to be stuck in this Terminating state, so he just canceled that one. Then he went on to delete the PVC, and then the PVC and PV were both deleted. However, the underlying volume was not deleted, because the CSI driver didn't get any call to delete it.
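
Editor's illustration, not part of the meeting: the ordering problem described above can be reproduced in a toy model. This is a minimal sketch under stated assumptions, not the Kubernetes controllers' logic; the `ToyCluster` class and all of its fields are hypothetical and only mimic the sequence reported in the issue.

```python
from dataclasses import dataclass, field


@dataclass
class ToyCluster:
    """Hypothetical, heavily simplified model of one bound PVC/PV pair."""
    reclaim_policy: str = "Delete"
    pvc_exists: bool = True
    pv_exists: bool = True
    pv_terminating: bool = False
    backend_volume_exists: bool = True
    log: list = field(default_factory=list)

    def delete_pv(self) -> None:
        # A bound PV is not removed right away; it is only marked Terminating.
        if self.pv_exists and self.pvc_exists:
            self.pv_terminating = True
            self.log.append("PV marked Terminating (still bound)")

    def delete_pvc(self) -> None:
        if not self.pvc_exists:
            return
        self.pvc_exists = False
        self.log.append("PVC deleted")
        if self.pv_terminating:
            # The PV object disappears once it is unbound, so the reclaim
            # policy is never acted on and the driver is never called.
            self.pv_exists = False
            self.log.append("PV removed without a driver delete call")
        elif self.reclaim_policy == "Delete":
            self.backend_volume_exists = False
            self.pv_exists = False
            self.log.append("driver deleted volume, PV removed")
        else:
            self.log.append("PV retained")

    def leaked(self) -> bool:
        # A leak: the storage still exists but no PV object points at it.
        return self.backend_volume_exists and not self.pv_exists


# Usual order: delete only the PVC; the Delete policy is honored.
normal = ToyCluster()
normal.delete_pvc()

# Reported order: delete the PV first (it hangs), then delete the PVC.
reported = ToyCluster()
reported.delete_pv()
reported.delete_pvc()

print(normal.leaked(), reported.leaked())  # False True
```

In this model the only difference between the two runs is whether the PV was already marked Terminating when the PVC was deleted, which is the order dependence the speakers go on to discuss.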

A

A

A

C

C

I think that was the intended behavior. It's definitely a bit confusing. I think we could consider potentially adding a new finalizer or something to help with this. I think there were attempts to add it in the past. I don't remember exactly why we did not end up going with it, but I mean, it's something we can look into. But I think it's definitely, right now, working as expected.

A

C

A

D

A

D

C

A

J

D

H

A

C

A

J

A

But this is really confusing. I even thought that's not possible. How come you have this bug and it's still there? Because I thought, if you have the reclaim policy set to Delete, then delete means delete. But in this case, it depends on which one you try to delete first, right? You...

O

A

Delete the PV first, then this reclaim policy is useless, but if you delete the PVC first, then the reclaim policy is useful. So I think that's the confusion. Because if you look here, we actually did delete the PVC next, right? We tried the PV first and canceled it, and it was still kind of in Terminating. But then we did delete the PVC. It's not like we didn't really delete the PVC. So that's very, very confusing.

A

C

A

Does it say that anywhere? If you really want to delete just the PVC and keep your PV, then you need to set the reclaim policy to Retain or something; otherwise both will be deleted. Everything will be deleted, right? So I think it's not clear. If you say, okay, you delete the PVC first, how can we prevent the PV from also being deleted if we delete the PVC first?

C

A

A

C

C

A

A

N

N

A

A

J

A

A

A

O

N

N

A

A

J

Yeah, I think that makes sense. I think the only thing we want is that we don't abandon a bunch of users here and say, "Oh sorry, you can't actually do CSI migration." That would be pretty bad. But it sounds like, at least with the backport, there's a potential lower hardware version. So let's find out what that version is.

A

L

A

Let's see. Oh, sorry, I think I already mentioned this one. It's basically the changed block tracking proposal. We need to get some input from EBS, from someone who understands the EBS APIs. So yeah, that's it. Anything else? All right, if not, that's it for today. Thanks, everyone.