
Description

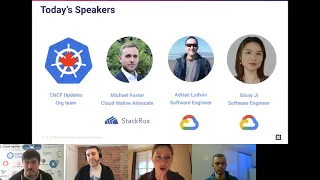

Intro and CNCF Update

Talk #1: The Kubernetes Security Specialist Exam is Here! What to Know and How to Get Started

By Michael Foster, StackRox

Talk #2: What's new in Hierarchical Namespaces: now with less hierarchy!

By Adrian Ludwin and Ginny Ji, Google

A

B

A

So we are live and recording, and I'm super excited about this as usual, because it's that time of the month when we get to come together. So welcome, everybody, to our Kubernetes and Cloud Native meetup for the Eastern Canadian cities. We have some exciting news on that front that we will share in a moment, but welcome to our communities in Montreal, Quebec, Toronto, Kitchener-Waterloo, Ottawa and our newest member, Halifax, which you will hear about in a moment. So welcome.

A

A

Today we have a full agenda on the docket. We're going to do a bit of an intro, which is usually done by us, the organizing committee. We'll go through a few things: our organizing committee, which is growing, what's happening in our ecosystem, our speakers of course, and how to stay connected with us and submit talks. Then we will have two presentations.

A

So the first one is by our dear friend Mike Foster, who's going to be talking about the new CKS exam from the CNCF, and then we will have another talk about hierarchical namespaces (say that fast ten times) by Adrian and Ginny. That's going to be our second talk, and then at the end, as usual, we have some really awesome prizes. This month they're brought to us by StackRox.

A

So our wonderful hosts today are myself, Julia (so hi, I work at a company called CloudOps, based in Montreal), and these are my co-hosts for today. We're all going to have different roles. Of course, most of us know Archie already: he's our fearless leader and CNCF ambassador. Then we have Anthony and Suvro, who are also going to be helping with moderating questions, the prize draw and all that fun stuff.

A

And we want to give a shout-out to our colleagues on our committee. Our committee is growing, and we're really excited because we have a lot of representation from the different cities: we now have six cities in our crew. It's been really nice to have representation across the board. So thank you to all of you who are on our committee, and a special shout-out to Johan, who's joined our committee from the actual east of Eastern Canada, in Halifax.

A

So we're super excited to have you on board, and it's always such a pleasure working with all of you. Typically, we take turns hosting these meetups, so you'll get to know each of us over the course of the next months, however long we're going to be doing this online.

A

And, of course, we have to give a shout-out to our wonderful sponsors. We could not do these without them. Obviously, being online is a bit of a different ball game, but we're very grateful to the sponsors who help us, because the funds they give us allow us to create other events, hopefully in person one day, and to have prizes and swag to give out to everybody. As I mentioned earlier, StackRox has donated a couple of gift cards to us.

A

So thank you to them and, of course, to our other wonderful sponsors. If anyone works at a company, or you're just feeling generous and want to sponsor our meetups, there is a QR code here. Please feel free to scan it; it will send you to some information about what a sponsorship package looks like.

A

So, as mentioned, we have some really exciting talks today. We're always very honored to have people from across, I guess, the world, now that we're in this virtual reality, come and support our community. As mentioned, our first little intro here will be CNCF updates from us, and then Michael will be chatting about the CKS, and Adrian and Ginny from Google will be talking about hierarchical namespaces.

A

A

And with that, I will pass the baton over to Archie. Oh sorry, I think I skipped a slide. Sorry: we want to hear from all of you, so these are the ways to be in touch with us and to follow what's happening. We have recently created a LinkedIn page, which sometimes gets a little neglected, but I'm definitely trying to be more active there; I enjoy being on LinkedIn.

A

I know, don't hate. But if anyone wants to join us on LinkedIn, there is a page there, Cloud Native Canada, and we're on Twitter as well: the Twitter handle is posted here, it's Cloud Native Canada. There is also this QR code again to join our Kubernetes Canada Slack channel. That's a different Slack channel from the main Kubernetes one, which has many, many people. Ours is growing quite steadily as well, and it's a really wonderful community.

A

A

And here as well, we are always looking not only to hear from you but also for talks. We really rely on our community. Of course our sponsors help us, but we definitely rely on our community for content. We have two types of talks. One is a story: a success or failure story of what I tried to do and how it went.

A

Was it a massive success, or did it fail miserably? Either way, here's what I learned. We want to hear from you about those stories, and we'd love to have something like that presented at our meetups. There's also the option of a tech talk, similar to what Adrian and Ginny will be doing today: one that is more around "this is the topic, this is how it works", more of a deep dive into a tool or technology, ideally something that is open and accessible to everybody.

A

So these are the types of talks and content we're looking for. If you have any ideas or suggestions, or want to join, please don't be shy. Our team is really wonderful and supportive and will help guide you through the CFP process. If you have any doubts about your proposal or you're not sure, we're here to help you, so please don't be shy. And as we all know, this all started off with talking about just Kubernetes, and we have expanded that, of course, because we've gone through a gamut of topics around it.

A

So anything that's related to the CNCF, any of the projects or tools involved there, is welcome. As you can see, Mike is going to be talking about exams that the CNCF is offering. So yeah, we really welcome you with open arms and would love to hear from you. And with that, without further ado, I will pass it to Archie, and he will take it away with the rest of the updates.

B

A

Yeah, actually, I think in this one the questions go directly in the chat. I know on Zoom there's a Q&A section, but I will be monitoring the chat, as I think a few of us will be. So if you have any questions for us, I will take a look at the chat when I'm done talking, and if you have any questions for any of the speakers, please post them in there and we will make sure that they get answered.

A

I didn't see that. If you're in the chat, at the top there's General, there's Q&A and there's DMs, so feel free to add any questions into the Q&A section there; there are three little tabs at the top. Thanks for pointing that out, I hadn't seen that, so that's perfect. If you have questions for our speakers, it's easier for us to queue them up in the Q&A area, so feel free to put those in there, along with any other comments or feedback.

B

All right, thanks Julia. So yeah, hi, my name is Archie. I'm a CNCF ambassador from Canada. I'm happy to be here organizing this Saint Valentine's edition of the CNCF meetup. We really love CNCF and Kubernetes technologies and, most importantly, we love you, our community. So I'm happy to be here again, and we have some exciting announcements and news around our meetup. I don't know if this picture is familiar to you; maybe you're playing Assassin's Creed Valhalla. We're kind of expanding our territories to the east.

B

So it's not going to be Essex this time, but we're going to Nova Scotia. We have a new member, a new chapter in our meetups: we're starting a new meetup in Halifax. So if you have friends or companies from Halifax, please share this news with them. So now we have a local chapter there.

B

B

We're also taking care of a Cloud Native Canada chapter. We're starting a new chapter, which for now is going to be used mainly for central events. In the future, you'll see that promotion will be done through Cloud Native Canada, and we're going to be using this Cloud Native Canada chapter for future virtual events.

B

Once we go post-COVID, we'll continue to organize events in each city as usual, but we will have this virtual link in case we have some virtual meetups. So I hope you like this idea. We know that we keep introducing new changes all the time, but I think this will be a good addition to our meetup. All right, with that I'm going to go to the CNCF announcements. I hope you're excited about them.

B

B

Last year, 35 projects were added to the sandbox, and I'm sure you're using some of them; we've spoken about some of them already. If you want to share your journey with CNCF projects, our meetup welcomes you, and you can always have a chance to present there. All right, and that's on top of the sandbox projects.

B

We had five graduated projects, Helm, TiKV, etcd, Harbor and Rook, which we covered pretty extensively in our meetups, and a few other new projects have been added to the CNCF landscape. This is all possible thanks to these people, the Technical Oversight Committee, who are working hard to bring in new projects every year. They do the voting and prepare the plan for new projects to join the CNCF.

B

B

We already have some news about 2021: a very popular project in our community, Open Policy Agent (OPA), has recently graduated in the CNCF. For the people who love Kubernetes, you're probably using Gatekeeper today, and we see more and more projects starting around OPA. I'm actually wearing my OPA hoodie right now.

B

So if you have some interesting stories to share, please let us know. Open Policy Agent has obviously become a very, very popular tool. If, for example, you're trying to use pod security policies, which are deprecated in Kubernetes, that can be done today through OPA. That's one of the ways to use it, but there are many, many policies available right now for Kubernetes in OPA.
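Real Gatekeeper policies are written in Rego as ConstraintTemplates, so the following is only a language-neutral illustration of the kind of rule such a policy encodes, similar to one of the checks PSPs used to perform. It is a minimal sketch, assuming the pod manifest has already been parsed into a Python dict; the function name `deny_privileged` is hypothetical.

```python
from __future__ import annotations

from typing import Any


def deny_privileged(pod: dict[str, Any]) -> list[str]:
    """Return one violation message per privileged container (empty list = admit)."""
    violations = []
    spec = pod.get("spec", {})
    # Regular and init containers can both request privileged mode.
    for key in ("containers", "initContainers"):
        for container in spec.get(key, []):
            ctx = container.get("securityContext") or {}
            if ctx.get("privileged", False):
                kind = key[:-1]  # "container" or "initContainer"
                violations.append(
                    f"{kind} '{container.get('name', '?')}' must not be privileged"
                )
    return violations


if __name__ == "__main__":
    pod = {"spec": {"containers": [{"name": "app", "securityContext": {"privileged": True}}]}}
    print(deny_privileged(pod))
```

An admission controller would reject the pod whenever the returned list is non-empty, which mirrors how a Gatekeeper constraint reports violations rather than mutating the request.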

B

C

Yeah, sure. So, buildpacks: they've actually been around for a long time. Maybe some of you already know them from back in the day with Heroku, a platform as a service, and then Cloud Foundry also started using buildpacks. If you used Heroku, you may remember that you had to git push to Heroku and then, magically, your project was built, because it would detect what kind of project it was.

C

If it was a Java, Python or Node.js project, it would choose the right environment and actually build it for you. So that's what buildpacks were back in the day, but now buildpacks are more generic. They joined the CNCF as a sandbox project in 2018, and now they are in incubation, meaning they are getting traction, users and contributors from the community.

C

C

They're going to detect whether they apply to a project. For example, if it's a Java project, the Java buildpack will probably try to build it. Once it's detected, the next step of course is to build the project, and then, optionally, the pack CLI can also push your image to a registry. That's what pack does by default.

C

That's the default user experience you would encounter using buildpacks, but it's a rich ecosystem with many builders: a lot of vendors provide their own builders. Of course you have Heroku, because historically they were kind of the first, but you also have Cloud Foundry, which renamed their effort to Paketo Buildpacks, as well as Google Cloud. So if you deploy to Heroku, you would choose the buildpacks from Heroku.

C

If you go to Google Cloud, you would use the buildpacks from Google. And there are not only builders; there are also different implementations. The pack CLI is the default one, but you can encounter buildpacks in many tools, such as Tekton, VMware's kpack or Waypoint, which know how to apply buildpacks to your project. So pack is not the only tool you can use buildpacks with.

C

You can already use them in a lot of CI tooling. So yeah, that was it for this quick focus on buildpack technology. I believe it's a great technology, and it's really getting a lot of traction. If you go to Pivotal talks, or also in the Java community, you will see that it's getting more and more traction. So thanks for listening.
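The detect-then-build flow Anthony describes can be modeled in a few lines. This is a toy sketch only: the real Cloud Native Buildpacks lifecycle runs each buildpack's own detect executable, whereas here detection is reduced to checking for marker files, and the `DETECT_ORDER` table and `detect` function are hypothetical simplifications.

```python
import tempfile
from pathlib import Path
from typing import Optional

# Hypothetical, simplified detection rules: (buildpack name, marker files).
# Order matters: the first buildpack whose marker file exists "passes detect"
# and would then go on to the build step.
DETECT_ORDER = [
    ("java", ("pom.xml", "build.gradle")),
    ("nodejs", ("package.json",)),
    ("python", ("requirements.txt", "pyproject.toml")),
]


def detect(app_dir: str) -> Optional[str]:
    """Return the first buildpack that applies to app_dir, or None if none does."""
    root = Path(app_dir)
    for name, markers in DETECT_ORDER:
        if any((root / marker).is_file() for marker in markers):
            return name
    return None


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        (Path(d) / "package.json").write_text("{}")
        print(detect(d))
```

Ordering the rules mirrors the idea that a builder tries buildpacks in a defined order; in practice you would simply run `pack build` against the source directory and let the builder decide.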

B

C

B

I'm really excited about this project because you're skipping one extra step in the pipeline. Before, we had to create a Dockerfile and build the image. Now you can completely skip this step: you have your source code in GitHub, and you can create an image from it automatically and deploy it to Kubernetes, for example, or to Knative or some other project.

B

So it simplifies a lot of the steps, and I think developers especially will be the ones who really take advantage of that, because they don't necessarily want to learn Docker or Kubernetes. They want to write code, so for them it definitely simplifies the steps a lot.

C

B

Cool, thanks a lot, Anthony. I'm going to continue. Usually we cover the latest Kubernetes release, and this release actually came out in 2020, but after our last meetup, which we organized from KubeCon. So I want to say thank you to the whole community that contributed to this release, and congratulate the release team on leading the best release of Kubernetes, 1.20.

B

It's incredible that we've already lived through all 20 of these releases together and organized so many meetups around Kubernetes. This release has 42 enhancements, and a lot of work has been done on moving CronJobs to beta and CRI to GA, which will happen shortly in the upcoming 1.21 release.

B

A few features have graduated, like volume snapshots, runtime classes, and support for containerd on Windows. In terms of new features, I think graceful node shutdown will be greatly appreciated by people running Kubernetes in production, along with better pod resource request metrics and container-resource-based pod autoscaling. So if you're using the HPA, it will also be able to make decisions based on a specific container, not just the whole pod, so it's kind of an easier way.
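To make the per-container autoscaling concrete, here is a minimal sketch. The metric entry follows the `ContainerResource` metric type (autoscaling/v2beta2, alpha in 1.20 behind the `HPAContainerMetrics` feature gate), and the function implements the HPA's documented core rule, desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), including the default 10% tolerance. The container name `app` is hypothetical, and the sketch deliberately ignores min/max replica bounds and pod readiness handling.

```python
import math

# Metric entry for an HPA spec: scale on the CPU utilization of one named
# container ("app" is a hypothetical name) instead of the pod as a whole.
CONTAINER_CPU_METRIC = {
    "type": "ContainerResource",
    "containerResource": {
        "name": "cpu",
        "container": "app",
        "target": {"type": "Utilization", "averageUtilization": 60},
    },
}


def desired_replicas(current_replicas: int, current_value: float,
                     target_value: float, tolerance: float = 0.1) -> int:
    """Core HPA rule: ceil(current * current_value / target_value), skipped within tolerance."""
    ratio = current_value / target_value
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: leave replicas alone
    return math.ceil(current_replicas * ratio)


if __name__ == "__main__":
    # The "app" container averages 90% CPU against a 60% target on 4 replicas.
    print(desired_replicas(4, 90, 60))
```

With a container metric, `current_value` is the utilization of that one container, so a busy sidecar no longer skews the decision the way a pod-wide average would.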

B

It's not easier, but it's a more flexible way to do HPA and it covers more use cases. But I think the major change for everybody, which was discussed a lot on Twitter and in the media, was the dockershim deprecation. We got to the point where Docker was deprecated from Kubernetes, and there was a lot of fear; people were scared. Should I learn a new tool? Should I stop using Docker?

B

The answer is no. You're still going to be running your Docker containers and Docker images on Kubernetes. But dockershim itself, which was essentially there to run Docker as the runtime, takes a lot of toil to maintain, so it's been deprecated. You have until 1.23 to move to containerd or CRI-O. Also, Mirantis, the company that acquired Docker Enterprise, will be adding Docker support through the Container Runtime Interface, and they will be supporting it.

B

So there isn't much change for many people. It's more work for the cloud providers, or maybe the vendors, that are using dockershim today, but in terms of change there are no major changes for end users with this behavior, and you can still run your Docker containers with Kubernetes. The next release, 1.21, we're expecting on April 8th, so hopefully we can cover it at the next meetup.
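If you want to check whether your own nodes still run on dockershim, each node reports its runtime in `status.nodeInfo.containerRuntimeVersion` (values look like `docker://19.3.13` or `containerd://1.4.3`), which `kubectl get nodes -o wide` also shows. Here is a minimal sketch that reads the JSON from `kubectl get nodes -o json`; the function name and sample node names are hypothetical.

```python
from __future__ import annotations

import json


def nodes_using_docker(nodes_json: str) -> list[str]:
    """Return names of nodes whose container runtime is Docker (i.e. via dockershim)."""
    data = json.loads(nodes_json)
    result = []
    for node in data.get("items", []):
        runtime = node.get("status", {}).get("nodeInfo", {}).get("containerRuntimeVersion", "")
        if runtime.startswith("docker://"):
            result.append(node["metadata"]["name"])
    return result


if __name__ == "__main__":
    # In practice, pipe in the output of: kubectl get nodes -o json
    sample = json.dumps({"items": [
        {"metadata": {"name": "node-a"},
         "status": {"nodeInfo": {"containerRuntimeVersion": "docker://19.3.13"}}},
        {"metadata": {"name": "node-b"},
         "status": {"nodeInfo": {"containerRuntimeVersion": "containerd://1.4.3"}}},
    ]})
    print(nodes_using_docker(sample))
```

As the talk points out, nodes flagged here still run ordinary images after a switch to containerd or CRI-O; the migration is about the node runtime, not about rebuilding images.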

B

Some of the important events coming up: on May 4th, we're going to the next KubeCon EU. It's still going to be online, and we have a code for our community which provides a 25% discount. Unfortunately, the price went up a few days ago: it was ten dollars per ticket and is now 75, but you still have this discount. KubeCon will also host a lot of co-located events that will be sold separately.

B

D

Hi everyone, my name is Suvro. I hang out with Archie and the team once in a while. Thank you. So Michael today will be talking about the new certification, which came out at the last KubeCon. Michael is a cloud native advocate at StackRox. Feel free to enjoy his talk. Michael, you're there? Awesome, thank you.

F

It is somewhat of a unique test compared to the CKA and CKAD, so I think it's worth covering. Again, here's what we're going to cover: I'm just going to intro why these certifications are useful, talk about the exam structure and exam format, and break down the individual sections without giving away too much. I see Magnus posted some good resources, along with Waleed, who's in the chat; so the CKS people are here, which is great, and he's always worth pinging.

F

I'll give you resources, not talk too much, and then talk about some general tips that I found really useful for the exam. I'm sure other people can chime in as well; feel free to throw some questions in the chat too, because we have some Q&A time at the end. So yeah, a little bit about me: as was just touched on, I'm a cloud native advocate at StackRox. I've done the CKA and the CKS. Actually, I'm taking the CKAD.

F

I've been slacking on it a little bit, but I got a very nice discount when I was at KubeCon. Again, I think part of the early sign-up bonus for KubeCon EU is something like ten dollars for full access, and they normally have a lot of discounts on these certs, because 300 for a cert is kind of a lot.

F

F

The CKS exam is two hours long, and you have to get 67% to pass. This is pulled straight from the documentation, which I recommend you read. It says 15 to 20 performance-based tasks, but it kind of gives away how many questions there are at the end, so it's basically 15 or 16 questions. As for the version, they just updated it to 1.20, and it normally trails the release: whenever a release happens, I think it's about six or eight weeks later that they update the exam version.

F

That's worth knowing so that when you use your study tools, you're using the correct version. It costs 300 USD, and you get a free exam retake, which I think is awesome and really useful. The cert is valid for two years. The CKA and the CKAD, I believe, are both valid for three years; correct me if I'm wrong on that, Archie. One of the reasons for the two years is that there are a lot of moving parts in security.

F

For example, pod security policies just became deprecated, and they were originally in the CKS. So I think it's a quicker-moving ecosystem, and they want to make sure people are staying updated. When it says 12-month exam eligibility, they mean after you sign up: if you sign up in November, you basically have until the next November to take your two attempts, since you also get a free retake if you fail. And a valid CKA cert is required; I'll touch on that.

F

I'll explain why it's required as I go through the sections, but it's a good policy to have it as a requirement; I think they did a good job with that. As for the format, you'll have seen it already, since you have to take the CKA to take the CKS, but I'll talk about it a little bit to make sure everybody has the same context.

F

You basically log in, go through a bunch of checks to make sure you're not cheating, obviously, and then you get into your exam environment. The exam environment comprises basically a terminal window on your right and your questions, a sort of navigation pane, on your left. You're allowed two tabs open, so I recommend two monitors. You'll go through each question: you'll get a cluster for each question, and there'll be some sort of task you need to perform, sometimes on the node.

F

Sometimes it's within Kubernetes; sometimes you're debugging the master node, or the control plane node, sorry, and the worker node. You'll get an info box that walks you through exactly what you need to do. Every single question means you have to change the context, because you do have 16 clusters, so make sure you're aware of that. When you go into each node to debug or look at some of the different security tools, you can access it using the node name, and you can use kubectl when you SSH in.

F

So you have access to all of these applications when you SSH into control plane or worker nodes, which is really useful: if you're debugging something in Kubernetes while you're working on the node, you can use kubectl from the node. It's a very insecure environment that you're testing in, but it's worthwhile so that you know how to move through the test in two hours. You do have elevated privileges by default, which is great, because you basically need to do whatever you need on the node.

F

One thing I will warn people of is: don't delete files. There are configuration files and things like that present, so always create or copy new ones; otherwise, you're in for a rough one. I think that should be a self-evident slap on the wrist if you're deleting files on the node. Also, you must return to the base node: if you SSH in, make sure you come back out, because nested SSH is not supported. And you can use kubectl to work on any of the clusters.

F

Like I said before, once you're inside, you can use kubectl. I need to update this slide because it says 1.19; it's actually 1.20 now, since the quarterly update has just been pushed, and I'm a little late on this. Vi/vim is the default editor. One thing to note: if you like using gedit, Emacs or nano, they're not there. You do have privileges, so you can go install them, but let's say you're working through every single node.

F

You don't want to be installing an application on every single node, so it's worth just getting used to vi and vim, especially if you're using the kubectl edit function to edit configuration and YAMLs on the fly. Vi/vim is definitely worth it. All right, exam sections: I'm just going to break down each of the six sections, talk about what you need to focus on, and hopefully not give away too much.

F

If you have any questions, throw them in the chat. So yeah, section number one, cluster setup: network policies to restrict cluster access. Network policies are a must-know if you're going to enforce security across the networks in your Kubernetes clusters. There are multiple different functionalities; there's the to/from functionality, which I think is new as of 1.17, but really you're going to need to know the fundamentals.

F

You need to know how to do default denies and default allows, how to manage ingress, how to manage egress, how to manage traffic to pods, and how to manage it within namespaces. You need to understand the permissions and how to configure them so that pods can or cannot talk to each other. It's also definitely worth knowing the CIS Kubernetes Benchmark.
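The default-deny and targeted-allow patterns mentioned above can be sketched as NetworkPolicy manifests. To keep every example in one language, this sketch builds them as Python dicts and prints JSON, which `kubectl apply -f` accepts as well as YAML. The namespace `demo`, the labels and the port are hypothetical. Note that denying all egress also blocks DNS lookups until you allow them explicitly.

```python
import json


def default_deny(namespace: str) -> dict:
    """Deny all ingress and egress for every pod in the namespace."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-all", "namespace": namespace},
        "spec": {
            "podSelector": {},  # empty selector = every pod in the namespace
            "policyTypes": ["Ingress", "Egress"],  # no rules listed = nothing allowed
        },
    }


def allow_from(namespace: str, target: dict, source: dict, port: int) -> dict:
    """Allow TCP ingress on one port to pods labeled `target` from pods labeled `source`."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "allow-ingress", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": target},
            "policyTypes": ["Ingress"],
            "ingress": [{
                # A bare podSelector matches pods in the policy's own namespace.
                "from": [{"podSelector": {"matchLabels": source}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


if __name__ == "__main__":
    print(json.dumps(default_deny("demo"), indent=2))
    print(json.dumps(allow_from("demo", {"app": "web"}, {"app": "frontend"}, 8080), indent=2))
```

Because policies are additive, applying the allow on top of the default deny opens exactly one path (frontend to web on 8080) while everything else in the namespace stays blocked.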

F

So you want to review all the Kubernetes components. One of the tough things about working in something like GKE is that you don't see the master nodes. The exam uses kubeadm, I believe, or Kubespray, to set things up, but either way the Kubernetes components' configuration lives on the node, rather than being hidden away as in a managed service or RKE's containerized setup: with kubeadm, for example, the control plane runs as static pods whose manifests are in /etc/kubernetes/manifests, so you'll be able to go and see their configuration. So it's worthwhile understanding those components and how the open source configuration sets them up.
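As a small taste of what a CIS review of the control plane checks, here is a minimal sketch that audits a kube-apiserver command line. On kubeadm clusters that command list sits in the static pod manifest `/etc/kubernetes/manifests/kube-apiserver.yaml`; to stay stdlib-only, the sketch takes the list directly instead of parsing YAML. The three checks are a hypothetical subset modeled on CIS recommendations (the flag defaults are real: anonymous auth and profiling are on, and authorization mode is `AlwaysAllow` when unset); for real audits, use a tool such as kube-bench.

```python
from __future__ import annotations


def parse_flags(command: list[str]) -> dict[str, str]:
    """Turn ['--a=b', '--c'] into {'a': 'b', 'c': ''}."""
    flags = {}
    for arg in command:
        if arg.startswith("--"):
            key, _, value = arg[2:].partition("=")
            flags[key] = value
    return flags


def audit_apiserver(command: list[str]) -> list[str]:
    """Return findings for a few CIS-style kube-apiserver checks (hypothetical subset)."""
    flags = parse_flags(command)
    findings = []
    if flags.get("anonymous-auth") != "false":  # defaults to true when unset
        findings.append("--anonymous-auth should be set to false")
    if flags.get("profiling") != "false":  # defaults to true when unset
        findings.append("--profiling should be set to false")
    modes = flags.get("authorization-mode", "AlwaysAllow").split(",")
    if "AlwaysAllow" in modes or not {"Node", "RBAC"} <= set(modes):
        findings.append("--authorization-mode should include Node and RBAC, not AlwaysAllow")
    return findings


if __name__ == "__main__":
    cmd = ["kube-apiserver", "--authorization-mode=Node,RBAC", "--anonymous-auth=false"]
    for finding in audit_apiserver(cmd):
        print(finding)
```

Treating an unset flag as its default matters: a manifest that simply omits `--profiling` still has profiling enabled.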

F

Reviewing that should just be a regular occurrence. So, section two, cluster hardening: restricting Kubernetes API access, with attacks like the Billion Laughs attack in mind. We want to make sure that our API access is vetted and secure. We want to make sure we know how we're applying role-based access control, and RBAC really is the core functionality for applying all of our policies. Then there's using caution with default service accounts: one of the issues with pod security policies was the default accounts associated with them.
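Least-privilege RBAC with a dedicated service account can be sketched as follows. The bundle grants read-only access to pods through a namespaced Role, binds it to its own ServiceAccount instead of `default`, and turns off automatic token mounting. The names (`pod-reader`, `reporter`, `demo`) are hypothetical, and `role_allows` is a simplified model of RBAC rule matching that ignores `resourceNames` and subresources.

```python
def least_privilege_bundle(namespace: str, sa_name: str) -> list:
    """ServiceAccount + read-only Role + RoleBinding, as manifest dicts."""
    service_account = {
        "apiVersion": "v1",
        "kind": "ServiceAccount",
        "metadata": {"name": sa_name, "namespace": namespace},
        # Don't mount an API token into pods unless they actually call the API.
        "automountServiceAccountToken": False,
    }
    role = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "Role",
        "metadata": {"name": "pod-reader", "namespace": namespace},
        "rules": [{"apiGroups": [""], "resources": ["pods"], "verbs": ["get", "list", "watch"]}],
    }
    binding = {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "RoleBinding",
        "metadata": {"name": "pod-reader-binding", "namespace": namespace},
        "subjects": [{"kind": "ServiceAccount", "name": sa_name, "namespace": namespace}],
        "roleRef": {"apiGroup": "rbac.authorization.k8s.io", "kind": "Role", "name": "pod-reader"},
    }
    return [service_account, role, binding]


def _matches(value: str, allowed: list) -> bool:
    return value in allowed or "*" in allowed


def role_allows(role: dict, verb: str, resource: str, api_group: str = "") -> bool:
    """Simplified RBAC check: rules only ever grant access; there are no deny rules."""
    return any(
        _matches(api_group, rule.get("apiGroups", []))
        and _matches(resource, rule.get("resources", []))
        and _matches(verb, rule.get("verbs", []))
        for rule in role.get("rules", [])
    )
```

Because RBAC is purely additive, anything not granted by some rule is denied, which is why a narrow Role is safer than reusing a broad one.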

F

And then I think this is great: they snuck "update Kubernetes frequently" in here. I believe when this came out at KubeCon, most people were still using 1.15. We're at 1.20 now, and 1.15 isn't even supported anymore by the Kubernetes community, so the cloud providers have done a good job of trying to push people to adopt the later versions, even though I know 1.16 removed a number of deprecated APIs. But it's worthwhile.
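Why is 1.15 out of support once 1.20 ships? The Kubernetes project maintains release branches for the three most recent minor releases, so with 1.20 as the latest, only 1.18 through 1.20 get patches. Here is a minimal sketch of that rule; the function names are hypothetical, and it only compares major.minor, ignoring any vendor's extended support.

```python
def supported_minors(latest: str, window: int = 3) -> list:
    """The `window` most recent minor versions, newest first (e.g. 1.20, 1.19, 1.18)."""
    major, minor = (int(part) for part in latest.split(".")[:2])
    return [f"{major}.{m}" for m in range(minor, minor - window, -1) if m >= 0]


def is_supported(version: str, latest: str) -> bool:
    """True if the version's major.minor falls inside the upstream support window."""
    major_minor = ".".join(version.split(".")[:2])
    return major_minor in supported_minors(latest)


if __name__ == "__main__":
    print(supported_minors("1.20"))
    print(is_supported("1.15.12", "1.20"))
```

Managed providers may keep older versions running longer, but they generally follow the same upstream window for patches, which is part of why the exam treats frequent upgrades as a hardening practice.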

B

B

F

It's really interesting how they try to incorporate the whole lifecycle, including what's outside of Kubernetes, into the exam as much as they could. Obviously you're not really creating your base image, but realistically you'd want a secure base image that you can vet, with a minimal attack surface. Managing IAM roles: that wording throws some confusion. We don't necessarily mean Amazon IAM roles or something like that; we just mean RBAC and how it's applied within Kubernetes. Then there's minimizing external access to the network.

F

For that, you can use open source tools: maybe something like AppArmor to control access for different processes, or network policies as well, and again a service mesh where it makes sense. It mentions AppArmor and seccomp, so there are other open source tools, and you're allowed to use their documentation. I'd recommend you become aware of those tools; you really are not going to be a security expert, so to speak.

F

Not unless you understand those tools and their place within the ecosystem. All right, we've got three more left. Set up appropriate OS-level security domains: so PSPs, OPA, security context. I always get a bunch of questions about this, especially because PSPs have become deprecated as of 1.21. PSPs enforce security context, right? We're talking security context at the container and pod level, and then we're using PSPs or OPA to enforce that security context across our clusters.

F

So whatever the mechanism for enforcing those security contexts is, that might change, but the core component doesn't change: security contexts are here to stay. That being said, you need to know how to apply these different mechanisms, as there is still a conversation about how best to move forward with applying them. So, like Archie mentioned, Open Policy Agent is worth knowing, specifically Gatekeeper.
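To see what PSPs or OPA Gatekeeper end up enforcing, here is a toy security-context check over a pod manifest. It is not PSP's or Gatekeeper's actual logic: the three rules (run as non-root, no privileged mode, no privilege escalation) are an assumed baseline, and `violations` is a hypothetical function. In a cluster, this kind of check would live in an admission controller, for example as a Rego policy for Gatekeeper.

```python
def violations(pod: dict) -> list[str]:
    """List security-context problems in a pod manifest (dict form)."""
    problems = []
    spec = pod.get("spec", {})
    pod_ctx = spec.get("securityContext", {})
    for c in spec.get("initContainers", []) + spec.get("containers", []):
        ctx = c.get("securityContext", {})
        name = c.get("name", "<unnamed>")
        # A container-level runAsNonRoot overrides the pod-level value.
        run_as_non_root = ctx.get("runAsNonRoot", pod_ctx.get("runAsNonRoot", False))
        if not run_as_non_root:
            problems.append(f"{name}: runAsNonRoot is not true")
        if ctx.get("privileged", False):
            problems.append(f"{name}: privileged container")
        # allowPrivilegeEscalation defaults to true when unset.
        if ctx.get("allowPrivilegeEscalation", True):
            problems.append(f"{name}: allowPrivilegeEscalation is not false")
    return problems
```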

F

F

All right, my favorite section was supply chain security, because this is really the core issue that's cropping up nowadays. So, you know: minimizing base image footprint, securing your supply chain. Right now, Kubernetes by default pulls from Docker Hub. I don't know how long that's going to be the default, Archie, or you can fill me in on that one, but for now it's still the default that it pulls from Docker Hub.

F

So, you know, setting up your supply chain means basically setting an allow list of the registries you can pull from. Then there's static analysis of user workloads.
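An allow list of registries first needs to know which registry an image reference resolves to, including the Docker Hub default. The sketch follows the Docker image-reference convention: the first path component is a registry host only if it contains `.` or `:` or is `localhost`, so `nginx` or `bitnami/redis` is pulled from `docker.io`. `registry_of`, `check_images` and the allowed set are illustrative; in a cluster the check would typically run in an admission controller rather than a script.

```python
def registry_of(image: str) -> str:
    """Return the registry host an image reference would be pulled from.

    The first path component is a registry only if it contains '.' or ':'
    or is 'localhost'; otherwise the image comes from Docker Hub.
    """
    first, sep, _rest = image.partition("/")
    if sep and ("." in first or ":" in first or first == "localhost"):
        return first
    return "docker.io"


def check_images(images: list[str], allowed: set[str]) -> list[str]:
    """Return the images whose registry is not on the allow list."""
    return [img for img in images if registry_of(img) not in allowed]
```

For example, `check_images(["nginx", "gcr.io/my-project/app:v1"], {"gcr.io"})` flags only `nginx`, because it silently resolves to Docker Hub.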

So Trivy, for example, is mentioned, and Falco is mentioned, as open-source tools, so enforcing at runtime and using those tools on the nodes. For example, with Trivy, scanning for known vulnerabilities: you need to know how to use an image scanner, how to apply it, and how to actually make assessments based off of some of the recommendations. And, lastly, monitoring, logging and runtime security.
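For making assessments from scanner output, here is a sketch that triages a Trivy JSON report (for example from `trivy image --format json --output report.json <image>`) by severity. It assumes the layout of recent Trivy versions, a top-level `Results` list whose entries hold a `Vulnerabilities` list with `VulnerabilityID`, `PkgName` and `Severity` fields, and also accepts the bare list that older versions emitted; verify against your Trivy version. `findings_at_or_above` is a hypothetical helper, and Trivy can also filter directly with its `--severity` flag.

```python
SEVERITY_ORDER = ["UNKNOWN", "LOW", "MEDIUM", "HIGH", "CRITICAL"]


def findings_at_or_above(report, threshold="HIGH"):
    """Return (vulnerability ID, package, severity) tuples at or above `threshold`."""
    min_rank = SEVERITY_ORDER.index(threshold)
    # Recent reports wrap results as {"Results": [...]}; older ones are a bare list.
    results = report.get("Results") if isinstance(report, dict) else report
    hits = []
    for result in results or []:
        # "Vulnerabilities" may be missing or null for targets with no findings.
        for vuln in result.get("Vulnerabilities") or []:
            severity = vuln.get("Severity", "UNKNOWN")
            rank = SEVERITY_ORDER.index(severity) if severity in SEVERITY_ORDER else 0
            if rank >= min_rank:
                hits.append((vuln.get("VulnerabilityID", "?"), vuln.get("PkgName", "?"), severity))
    return hits
```

Load the report with `json.load` on the file Trivy wrote and pass the result in.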

F

This is the largest slot I have. So you're gonna perform behavioral analytics; like I said, that's what a tool like Falco does, right? You're gonna detect threats within physical infrastructure and apps. This is kind of just a general topic for: hey, we're applying all of these to detect threats, detect all phases of an attack regardless of where it occurs, and perform deep analytical investigation.

F

Basically, what this is trying to get at is audit logging, container scanning, and runtime enforcement: applying it, and understanding that when you apply runtime enforcement with something like Falco, it gives you feedback saying, "Hey, you're running a process as root." How would you enforce that in Kubernetes? How would you go and figure out which pod that is, and make an adjustment accordingly, right? A lot of these questions are like that.
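To answer "which pod is that?" after a runtime alert, one approach is to match the container ID from the alert against pod status. The sketch assumes you have the parsed output of `kubectl get pods -A -o json` and an ID, or an ID prefix, since tools such as Falco often report a shortened container ID; `find_pod_for_container` is a hypothetical name. Pod status reports IDs in the form `<runtime>://<id>`, for example `containerd://...`.

```python
def find_pod_for_container(pod_list: dict, container_id: str):
    """Return (namespace, pod, container) for the container whose runtime ID
    starts with `container_id`, or None if no pod matches.

    `pod_list` is the parsed output of `kubectl get pods -A -o json`.
    """
    if not container_id:
        return None
    for pod in pod_list.get("items", []):
        meta = pod.get("metadata", {})
        status = pod.get("status", {})
        # Check init containers too; they run code on the node as well.
        statuses = status.get("initContainerStatuses", []) + status.get("containerStatuses", [])
        for cs in statuses:
            # containerID looks like "containerd://<id>" or "docker://<id>".
            runtime_id = cs.get("containerID", "").split("://")[-1]
            if runtime_id and runtime_id.startswith(container_id):
                return meta.get("namespace"), meta.get("name"), cs.get("name")
    return None
```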

F

F

All right, general tips. I think I'm getting close to time, so I'll try to move through this, and if anybody has any questions they can hit me up in the Q&A period. But read the documentation: that's my number one recommendation. A lot of the stuff I'm talking about is there, and it gets updated regularly, so I recommend you do that. Take your time, read the question, and manage your time: bite off the questions that you understand, the ones you can read through.

F

My biggest recommendation is to go through and understand it: get hands-on experience with a lot of these tools, and then bookmark what you find to be the most valuable points. You can use bookmarks. You can only use the documentation that they allow, but you can use bookmarks in your browser, and I found that really useful, because you don't really have a lot of time to be searching through web pages.

F

Recording task progression: the exam has a little flag option, so you can flag questions to return to. I actually found it more useful to flag the questions that I was confident in, and then I returned to the questions that I wasn't confident in, but either way it's useful to help you keep track of time. And yeah, you actually have autocomplete: there is autocomplete for kubectl.

F

Like I said, the CKA is a precursor to the CKS, and one of the reasons for that is that in the CKA you do things like creating YAML files on the fly and using dry run: basically a lot of these workflow things in Kubernetes. The CKS sort of gives you some of those, so it might give you template files or other starting points, because it assumes you already know how to work with Kubernetes YAML files and objects.

F

The point is: do you understand the security aspects of them? So it is a little bit different from the CKA in that respect, and you should take advantage of that to cut down on typos. Be proficient with vi/vim, and pay attention to the question's context, that kubectl config context: make sure that you're not in the wrong environment,

F

looking at the wrong question, because you can delete some things that you might not have wanted to delete. Never write a YAML file from scratch. Learn how to sort through JSON output, right? You do have jq; there's a list of applications that are available to you on every single node. And again, review the systemd basics and review those Kubernetes

F

config files. Understand what the open-source Kubernetes application looks like, and yeah, try to avoid doing all your practice on something like GKE, because you're never really going to get that experience with the node and its resources.
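As an example of sorting through JSON output, the jq filter `.items[].spec.containers[].image` applied to `kubectl get pods -A -o json` lists every container image. The sketch does the same in Python, grouped by namespace; `images_by_namespace` is an illustrative name, and it only looks at regular containers, not init containers.

```python
def images_by_namespace(pod_list: dict) -> dict[str, list[str]]:
    """Group container images by namespace, sorted and de-duplicated.

    Similar to:
      kubectl get pods -A -o json | jq -r '.items[].spec.containers[].image' | sort -u
    but keeping the namespace for each image.
    """
    grouped: dict[str, set[str]] = {}
    for pod in pod_list.get("items", []):
        namespace = pod.get("metadata", {}).get("namespace", "default")
        for container in pod.get("spec", {}).get("containers", []):
            grouped.setdefault(namespace, set()).add(container["image"])
    # Sort namespaces and images so the output is stable between runs.
    return {ns: sorted(images) for ns, images in sorted(grouped.items())}
```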

Just finishing up: I got a chance to go into killer.sh. I actually looked at it after my CKAD; I didn't use it for the CKS, but I really liked it as a resource.

F

F

B

F

I believe that there is some sort of sub-scoring now, but don't quote me on this. One of my biggest gripes, and Archie, don't hit me, is that there is not as much visibility into the scoring as I would like. When you do the CKA, a lot of it has to be exact: the name of the pod you create has to be exact. I'd recommend that even when you're creating manifest files, you are very specific in what you write.

F

D

F

I think that's because people come in thinking they have a hold on security in Kubernetes, but they might not have an understanding of, let's say, all of the security tools that are available and how you would use them to then interact back with Kubernetes, right? A lot of people might be used to working in something like GKE or AKS, where they go to Cloud Logging and it says: hey, here's the log. But do they really go and set up the logs themselves?

F

If you were to use something like audit logging, like an audit policy in K8s, do you actually go and work with that config file, and change it so that you get different audit logs coming out?
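On changing the audit policy: the sketch builds an `audit.k8s.io/v1` Policy with per-resource levels, plus a simplified evaluator showing that rules are matched in order and the first match sets the level. The specific rules are assumptions for illustration, and `level_for` ignores the user, namespace and non-resource-URL matchers a real policy can use. The policy file is passed to the API server with `--audit-policy-file`, alongside a log backend such as `--audit-log-path`; since JSON is valid YAML, the printed output can be saved as that file.

```python
import json


def audit_policy() -> dict:
    """Build an audit.k8s.io/v1 Policy; rules are checked in order and the
    first matching rule sets the level of an event."""
    return {
        "apiVersion": "audit.k8s.io/v1",
        "kind": "Policy",
        # Don't emit a separate event when a request is first received.
        "omitStages": ["RequestReceived"],
        "rules": [
            # Record who accessed Secrets and ConfigMaps, but not their contents.
            {"level": "Metadata",
             "resources": [{"group": "", "resources": ["secrets", "configmaps"]}]},
            # Keep full request and response bodies for changes to RBAC objects.
            {"level": "RequestResponse",
             "verbs": ["create", "update", "patch", "delete"],
             "resources": [{"group": "rbac.authorization.k8s.io",
                            "resources": ["roles", "rolebindings",
                                          "clusterroles", "clusterrolebindings"]}]},
            # Everything else: metadata only.
            {"level": "Metadata"},
        ],
    }


def level_for(policy: dict, group: str, resource: str, verb: str) -> str:
    """Simplified first-match evaluation; ignores user, namespace and URL matchers."""
    for rule in policy["rules"]:
        if "verbs" in rule and verb not in rule["verbs"]:
            continue
        if "resources" in rule and not any(
            gr.get("group", "") == group
            and (not gr.get("resources") or resource in gr["resources"])
            for gr in rule["resources"]
        ):
            continue
        return rule["level"]
    return "None"  # no rule matched: the event is not recorded


if __name__ == "__main__":
    print(json.dumps(audit_policy(), indent=2))
```

Reading an RBAC Role falls through to the catch-all `Metadata` rule, while creating one is logged at `RequestResponse`.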

So there are a lot of different use cases that I think highlight all the security around Kubernetes really well, and that, I think, is probably what makes it the most challenging. And to be fair, the resources also haven't necessarily caught up. I know, you know...

F

B

B

B

C

G

It's your turn now. Thanks! So I'm Adrian Ludwin. I have become accustomed to saying the word "hierarchical" a whole lot over the past year, and I'm from Google Waterloo, which of course is in Kitchener. I personally am in Toronto today, as I have been most of the time since the pandemic started, and so is Ginny. Most Waterloo Googlers are spread out through the GTA and the Kitchener-Waterloo corridor. So yeah, I'm an engineer.

G

I work on GKE, Google Kubernetes Engine, and I'm gonna talk a little bit about hierarchical namespaces now. Some of you may have heard about this before: I presented at KubeCon EU, and I gave a seminar last summer about this as well, so I'm gonna kind of skip over some of the older stuff, or maybe go through it quickly, and Ginny will be talking about some of the new features that are part of hierarchical namespaces.

G

So why do we have this feature? Well, it's all about multi-tenancy. Just for those who don't know, let's talk a little bit about why you would want to use multi-tenancy. So, Kubernetes at a glance. Many of you probably know this: you've got a user, you use something like kubectl or the UI to talk to the control plane, and the control plane has a bunch of nodes with kubelets on them.

G

Now, it's usually not just you. Usually there's a team, and we'll often call that team a tenant; it's kind of like a generalization