A

B

Okay, thanks Ramon. Good morning or good evening to everyone listening in, based on where you are. The topic for today is deploying virtual network functions using KubeVirt VMs. I'm Pooja Ghumre, and my co-speaker is Ashutosh Tiwari. We both work at Platform9, and we will be presenting this topic today. So let's get started and look at the agenda first. In short, I will cover the basics for anybody who's new: what exactly the NFV architecture is, and what a virtual network function means.

B

The second mechanism we're going to talk about is Open vSwitch with DPDK. Again, we'll get into the details of what exactly OVS is, what the role of DPDK is, and the Userspace CNI that it utilizes. Then we'll look at the configuration steps that are required for utilizing OVS-DPDK in a Kubernetes cluster and, lastly, get to the VM deployment using KubeVirt. At the end, Ashutosh will give us a brief demo of both kinds of applications.

B

So when we talk about virtual network functions, you'll often hear the term NFV, or network function virtualization. Network function virtualization is basically the name of the architecture that is used by all the telco clouds today, and this is the one that has primarily revolutionized the telecom industry in the past few years. I mean, this has been a drastic change if you compare 3G to 4G to 5G infrastructures today, and this is one of the core components of that evolution.

With NFV, the core goal is that you don't need to have dedicated hardware for every network function in your telco cloud. That's the main goal, and it also gives you the added benefits of scalability, more agility, and faster turnaround time if you have to make any changes to your NFV stack.

B

So that's the overall framework. If you look at the core components of that architecture, the first one would be the network function virtualization infrastructure, or NFVI in short. This is primarily your infrastructure components, the core components like compute, storage, and networking that are needed for any platform to run your software. In this case, the software we are talking about is your KubeVirt hypervisors, which utilize the Kubernetes platform.

B

So these three components together form the MANO component. Then, lastly, your network services themselves, which are running as software applications on this infrastructure, are called your virtualized network functions. We will look at that in detail now. Next slide, please. So what are VNFs? As I briefly alluded to, these are basically network services that are now going to...

B

For instance, if you take a virtual router, it's basically a software function that replicates what you would have achieved with hardware-based L3 or IP-based routing, replacing the dedicated hardware for it. Now you have a software function for it. As I said, most of them run inside VMs, either for legacy reasons or just because you are transitioning from a VNF model to a container network function, or CNF, model, right.

B

You already have the infrastructure in place, and what you're doing is essentially deploying a new software application, so it is far more scalable and you can run it on commodity hardware for the different services that you want to utilize. Because you're running it as a software application, you can obviously pack them more densely on your commodity hardware, and the net result is more efficient usage of your existing hardware. That also means you have reduced power consumption.

B

It gives you overall better security policies that you can configure separately for different services. You obviously also save on any physical space that you would have needed to house that hardware in a data center. And because it's no longer reliant on a specific hardware type for running that service, you get hardware interoperability: you can replace the underlying hardware without actually impacting your software applications.

B

Now, if you look at a virtual network function, this is an app which we are using in a 5G environment, right. So you can imagine the amount of data that's flowing through these apps, and all of them need very low latency. How do you achieve that using your traditional hardware, when you are running virtual machines on a cluster node, for example?

B

In this case, you want to make the best use of your networking hardware and make sure that the packet switching happens at a very fast rate compared to your traditional apps that are not really VNFs. Also, when you have an NFV environment, you are typically not going to use a network function in isolation. You're going to chain together different virtual network functions, because they cater to different services, utilizing service chaining.

B

So, for example, if you have a host with two NICs, you can make it seem like you have 10 of those and create 10 virtual machines which have independent, isolated access to those 10 NICs. So imagine you're just multiplying your hardware at a virtual level. To actually run SR-IOV, you're going to need some support at the hardware level, you're going to need to configure certain BIOS settings, and you'll also need to make some OS configuration changes.

B

A virtual function, on the other hand, is a more lightweight PCI function, which means that you can create multiple virtual functions out of a single PF, but you don't really have the same configuration resources available here. It's essentially the one that allows you to create virtual slices that can be allocated to independent VMs that are running your VNFs.

B

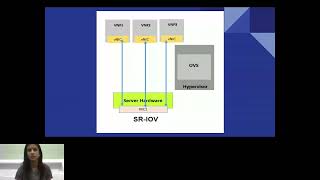

So this is a good pictorial view of what you're really trying to achieve with SR-IOV. As you can see here, there is a single NIC card, and that's the hardware that you have. On the right-hand side you'll see that the hypervisor has an Open vSwitch. That's just an example of a switch that you can have at the software layer. What it's trying to achieve is to provide direct DMA access to our VNF applications by exposing each of these virtual functions as an independent vNIC to the VM.

B

There are three different plugins here that have been worked on in collaboration by Red Hat and Intel: Intel's SR-IOV device plugin, the SR-IOV CNI plugin, and then there is also the Multus meta plugin. First off, let's talk about the SR-IOV device plugin. This is the one which is actually responsible for discovering the available SR-IOV resources on your...

B

So when you create a cluster with a certain set of nodes, it's going to query each of them and see which of them are actually SR-IOV capable and how many virtual functions you want to create. Once it detects those, it's going to advertise them to the Kubernetes scheduler. The resource manager will keep track of how many VFs exist on each cluster node, and it will accordingly allocate those to any containers or VMs that you create on that cluster.

B

Okay. So you can think of this as more of a read-only plugin: it's not going to modify anything on your host, it's only going to discover and allocate those resources. It achieves that by updating the capacity section. If you look at each Kubernetes node definition, you would see them show up as available or allocatable resources there. With SR-IOV and KubeVirt, the plugin relies on the vfio-pci driver being used with each of these virtual functions.
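As a rough illustration of the capacity section described above, the sketch below reads a trimmed node status and reports how many VFs are advertised. The node data is invented for the example, and the resource name `intel.com/intel_sriov` is the one mentioned later in the talk; on a real cluster this structure would come from the Kubernetes API rather than a hard-coded dictionary.

```python
# Hypothetical, trimmed node status; real data would come from the Kubernetes API.
SAMPLE_NODE_STATUS = {
    "capacity": {"cpu": "32", "memory": "131072Ki", "intel.com/intel_sriov": "8"},
    "allocatable": {"cpu": "31", "memory": "130048Ki", "intel.com/intel_sriov": "8"},
}


def advertised_vfs(node_status, resource_name):
    """Return (capacity, allocatable) counts for an extended resource.

    Both values are 0 when no device plugin has advertised the resource.
    """
    capacity = int(node_status.get("capacity", {}).get(resource_name, "0"))
    allocatable = int(node_status.get("allocatable", {}).get(resource_name, "0"))
    return capacity, allocatable


if __name__ == "__main__":
    cap, alloc = advertised_vfs(SAMPLE_NODE_STATUS, "intel.com/intel_sriov")
    print(f"capacity={cap} allocatable={alloc}")
```

A node where the device plugin is not running simply lacks the key, which is why the helper falls back to zero instead of raising.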

B

So that's the primary goal of how the SR-IOV CNI works. Now, if you were to work with just these plugins, you would also want KubeVirt to actually know the PCI address of each device that you're exposing to the pod, and that's not something that's very user friendly. That's where the Multus meta plugin comes into play. It is a meta plugin in the sense that it works with other CNI plugins on the Kubernetes side and allows you to attach multiple secondary interfaces to your virtual machines.

B

So that's the goal of the Multus meta plugin here. In this case it would interact with the SR-IOV CNI plugin and pass over any information that you configure via a NetworkAttachmentDefinition CRD object. Using annotations on your VM pod, it would pass over that information for use by KubeVirt. That basically allows you to use the network with just a resource name annotation rather than worrying about individual PCI addresses.

B

In terms of configuring SR-IOV on the host, there are certain steps that you need to take. First, obviously, you need to make sure that your hardware is capable of supporting SR-IOV. Then, when it comes to BIOS settings, these can differ a little between different hardware: some hosts will have global SR-IOV or per-NIC SR-IOV flags that you need to enable.
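One way to check the hardware-capability step on a Linux host is to look at the SR-IOV attributes the kernel exposes in sysfs. The sketch below is only an illustration: the interface name is a placeholder, and the `sysfs_root` parameter exists purely so the function can be exercised against a fake directory tree.

```python
from pathlib import Path


def sriov_vf_counts(interface, sysfs_root="/sys/class/net"):
    """Return (total_vfs, current_vfs) for a NIC, or None if it lacks SR-IOV.

    The kernel exposes sriov_totalvfs and sriov_numvfs under the PCI device
    directory only for physical functions that support SR-IOV.
    """
    device_dir = Path(sysfs_root) / interface / "device"
    total_file = device_dir / "sriov_totalvfs"
    if not total_file.exists():
        return None
    total = int(total_file.read_text().strip())
    current = int((device_dir / "sriov_numvfs").read_text().strip())
    return total, current


if __name__ == "__main__":
    import sys

    # "eth0" is only an example default; pass your own interface name.
    name = sys.argv[1] if len(sys.argv) > 1 else "eth0"
    print(name, sriov_vf_counts(name))
```

A result of `None` means the attribute is absent, which can also happen when the feature is disabled in firmware, so it is a hint to revisit the BIOS flags rather than proof that the NIC cannot do SR-IOV.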

B

So if you look at the spec of a VM or a VMI instance in KubeVirt, it will have a domain interfaces section, and there you would typically have at least the default pod network. This is the Kubernetes pod network that you get using the default network backend. It could be Flannel or Calico that gets utilized here, and that's the first one that you see here. There are two types of modes that you can specify.
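The slide itself is not captured in the transcript, so the following is a hedged sketch of what such a spec fragment typically looks like, written as a Python dictionary. The names `default` and `sriov-net` are placeholders, and the use of `masquerade` for the pod network is an assumption (the two modes referred to are presumably the `bridge` and `masquerade` bindings). The helper checks the one rule that is easy to get wrong: every interface needs a network entry with the same name.

```python
import json

# Hypothetical VMI spec fragment; names such as "sriov-net" are placeholders.
VMI_SPEC = {
    "domain": {
        "devices": {
            "interfaces": [
                {"name": "default", "masquerade": {}},  # default pod network
                {"name": "sriov-net", "sriov": {}},  # secondary SR-IOV interface
            ]
        }
    },
    "networks": [
        {"name": "default", "pod": {}},
        {"name": "sriov-net", "multus": {"networkName": "sriov-net"}},
    ],
}


def unmatched_interfaces(spec):
    """Return interface names that have no network entry of the same name."""
    network_names = {net["name"] for net in spec.get("networks", [])}
    interfaces = spec["domain"]["devices"]["interfaces"]
    return [i["name"] for i in interfaces if i["name"] not in network_names]


if __name__ == "__main__":
    print(json.dumps(VMI_SPEC, indent=2))
    print("unmatched:", unmatched_interfaces(VMI_SPEC))
```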

B

So this is what the network definition, or rather the network attachment definition, looks like. The main thing to note here is the annotations field: that is the resource name assigned by the SR-IOV device plugin that we saw. You can set that in the ConfigMap when you configure the device plugin. As we'll see in the demo, you can specify a resource prefix and a resource name. In this case, it's intel.com/intel_sriov.
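Since the manifest on the slide is not part of the transcript, here is a minimal sketch of a network attachment definition carrying that resource name annotation, built as a Python dictionary. The network name, namespace, and CNI version are assumptions chosen for the example; only the annotation key and the resource name follow what is described in the talk.

```python
import json

# Annotation that ties the network to a device plugin resource.
RESOURCE_ANNOTATION = "k8s.v1.cni.cncf.io/resourceName"


def sriov_network_attachment(name, resource_name, namespace="default"):
    """Build a NetworkAttachmentDefinition manifest as a plain dict."""
    cni_config = {
        "cniVersion": "0.3.1",  # assumed version for the example
        "name": name,
        "type": "sriov",  # delegate to the SR-IOV CNI plugin
    }
    return {
        "apiVersion": "k8s.cni.cncf.io/v1",
        "kind": "NetworkAttachmentDefinition",
        "metadata": {
            "name": name,
            "namespace": namespace,
            "annotations": {RESOURCE_ANNOTATION: resource_name},
        },
        # The CNI configuration is embedded as a JSON string.
        "spec": {"config": json.dumps(cni_config)},
    }


if __name__ == "__main__":
    nad = sriov_network_attachment("sriov-net", "intel.com/intel_sriov")
    print(json.dumps(nad, indent=2))
```

The point of the annotation is that a VM only has to reference the network by name; the resource name lets the scheduler and the plugins work out which VF to hand over.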

B

Sorry about that. So it basically shows you an alternative to that where you have DPDK, which is the Data Plane Development Kit. You are doing the packet switching in user space instead of doing it in kernel space, and for doing so it utilizes what is called a poll mode driver. There are different types of drivers available that can be used based on your hardware. The main advantage you get from combining DPDK with OVS is faster packet processing, because it avoids those kernel interrupts.

Now, comparing this to SR-IOV: there are some very good performance studies that have been done here. If you look at any east-west traffic that's not exiting a specific server, you'll get better performance utilizing OVS than you would get with SR-IOV. SR-IOV is basically a better fit if you want to run VNF applications across different servers. In that case, you are crossing the hypervisor layer. Next slide, please.

B

So, like I mentioned earlier, you'll see that the forwarding module on the left-hand side is implemented in kernel space, and only the switching program is in user space. In this case, any packets are going to cross your kernel space driver. On the right-hand side, where you see the DPDK forwarding module, that is the one which is implemented in user space using the poll mode drivers, and in this case you get much better performance and higher throughput.

B

This work was done by sir ramnan from Red Hat, and we basically just tried to cherry-pick those changes from that older commit onto the latest version of KubeVirt, and we were able to make that work with KubeVirt VMs. As against the SR-IOV device plugin, here you utilize a Userspace CNI. Again, you do use the Multus meta plugin, because you're essentially adding secondary interfaces to your VMs, and obviously the OVS package on your cluster nodes needs to be DPDK compatible.

C

So we have used some feature gates, like NUMA and CPU manager. This was required for PMD CPU pinning, so that it can use this PMD driver and allocate some CPUs to it for the poll mode drivers. It's isolated for PMD, and it gives better performance. Also, we have to change some...

C

So we have used anof 2 and 4 as a NIC for this bridge, our dpdk0. In our configs for this bridge, we have dpdk-init set to true, the socket memory config, and the CPU mask, which says what cores to use. So basically, we have taken care of the NUMA awareness we need in this case. So now this NAD, or network attachment definition, uses this bridge.
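The exact values used in the demo are not in the transcript, so the sketch below only shows how the three settings mentioned above fit together. It computes a hex CPU mask from a list of core IDs and builds the corresponding `ovs-vsctl` commands as strings without running them. The option names `dpdk-init`, `dpdk-socket-mem`, and `pmd-cpu-mask` are standard Open vSwitch settings; the core IDs and memory sizes are made-up examples.

```python
def core_mask(cores):
    """Return a hex bitmask with one bit set per CPU core ID."""
    mask = 0
    for core in cores:
        if core < 0:
            raise ValueError(f"invalid core id: {core}")
        mask |= 1 << core
    return hex(mask)


def ovs_dpdk_commands(pmd_cores, socket_mem_mb):
    """Build (but do not run) ovs-vsctl commands for the three settings."""
    # One memory value per NUMA node, in megabytes.
    socket_mem = ",".join(str(mb) for mb in socket_mem_mb)
    settings = [
        "other_config:dpdk-init=true",
        f'other_config:dpdk-socket-mem="{socket_mem}"',
        f"other_config:pmd-cpu-mask={core_mask(pmd_cores)}",
    ]
    return [f"ovs-vsctl set Open_vSwitch . {s}" for s in settings]


if __name__ == "__main__":
    # Example values only: pin PMD threads to cores 2 and 3, 1 GB per NUMA node.
    for command in ovs_dpdk_commands([2, 3], [1024, 1024]):
        print(command)
```

For NUMA awareness, the cores in the mask would normally be chosen from the same NUMA node as the NIC, with socket memory allocated on that node.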

B

Because, as you can imagine, it's a huge network service chain, and moving it is going to take maybe more than a year, definitely, for companies to even start transitioning to it. So that's the core use case that we are looking at here with KubeVirt, because that way you can run CNFs and VNFs on the same platform using Kubernetes, and that's the beauty of KubeVirt here.