►

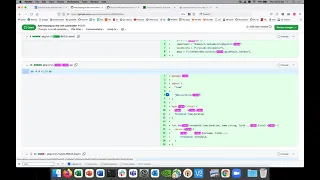

From YouTube: SIG - Performance and scale 2021-11-11

Description

Meeting Notes: https://docs.google.com/document/d/1d_b2o05FfBG37VwlC2Z1ZArnT9-_AEJoQTe7iKaQZ6I/edit#heading=h.yg3v8z8nkdcg

A

All right, welcome to SIG Scale, November 11th. [unclear] Okay, we probably have a short agenda today. The first thing is just an announcement: I'm going to be away next Thursday. I don't know if you want to do what we did last time. David, do you want to host a meeting? I can leave it up to you, if we have agenda items. What do you think?

A

Where is it... this pull request. So this pull request merged from last time: I want to add a count of the number of VMIs in each phase, which you can see here. It merged this morning, but I'm not sure it's been run yet. Let me just check. Looks like there's a new job. Let's see.

Yeah, so what was not clear to me, and what I want to get some clarity on, is: what about VMs that went to Failed? Last week we saw some irregularities in these numbers, not only the performance numbers but also some of the API calls. There was a lot of variation, so I wanted to see whether it had anything to do with the phases the VMIs were in.
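A minimal Go sketch of the per-phase tally described above. It assumes a simplified, hypothetical `VMI` struct with a string `Phase` field rather than the real KubeVirt API types; in the actual test the list would come from the cluster, and it has to be taken before the test namespace is torn down.

```go
package main

import (
	"fmt"
	"sort"
)

// VMI is a hypothetical, trimmed-down stand-in for a VirtualMachineInstance;
// only the status phase matters for this sketch.
type VMI struct {
	Name  string
	Phase string // e.g. "Pending", "Scheduling", "Scheduled", "Running", "Failed"
}

// countByPhase returns how many VMIs are currently in each phase.
func countByPhase(vmis []VMI) map[string]int {
	counts := make(map[string]int)
	for _, v := range vmis {
		counts[v.Phase]++
	}
	return counts
}

func main() {
	vmis := []VMI{
		{Name: "vmi-1", Phase: "Running"},
		{Name: "vmi-2", Phase: "Running"},
		{Name: "vmi-3", Phase: "Failed"},
		{Name: "vmi-4", Phase: "Scheduling"},
	}
	counts := countByPhase(vmis)

	// Print phases in a stable order so results from different runs are easy to compare.
	phases := make([]string, 0, len(counts))
	for p := range counts {
		phases = append(phases, p)
	}
	sort.Strings(phases)
	for _, p := range phases {
		fmt.Printf("%s=%d\n", p, counts[p]) // Failed=1, Running=2, Scheduling=1 on separate lines
	}
}
```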

B

This is running after the test tears down, and our exit condition tears down that namespace and everything for the functional test. I could be wrong, and it doesn't hurt to add it; I'm just not sure it's going to give you what you're hoping to see. I think, more likely, we need to have the functional test fail if VMIs unexpectedly crash or don't reach a Running phase.
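One way the suggested check could look, as a sketch: poll the VMIs, fail immediately if any reaches `Failed` (a final phase, so waiting longer will not help), and fail on timeout if any never reaches `Running`. The `VMI` type and the `checkVMIs` and `waitAllRunning` helpers are hypothetical; a real KubeVirt functional test would use its own client and test helpers instead.

```go
package main

import (
	"fmt"
	"time"
)

// VMI is a hypothetical, trimmed-down stand-in for a VirtualMachineInstance.
type VMI struct {
	Name  string
	Phase string
}

// checkVMIs reports whether every VMI is Running. A Failed VMI is returned as a
// fatal error, because Failed is a final phase and the VMI will not recover.
func checkVMIs(vmis []VMI) (allRunning bool, fatal error) {
	allRunning = true
	for _, v := range vmis {
		switch v.Phase {
		case "Running":
			// good, keep checking the rest
		case "Failed":
			return false, fmt.Errorf("VMI %s unexpectedly failed", v.Name)
		default:
			allRunning = false // still pending, scheduling, etc.
		}
	}
	return allRunning, nil
}

// waitAllRunning polls list until every VMI is Running, any VMI fails, or the
// timeout passes. In a functional test, a non-nil error would fail the test.
func waitAllRunning(list func() []VMI, timeout, interval time.Duration) error {
	deadline := time.Now().Add(timeout)
	for {
		done, err := checkVMIs(list())
		if err != nil {
			return err
		}
		if done {
			return nil
		}
		if time.Now().After(deadline) {
			return fmt.Errorf("VMIs did not reach Running within %v", timeout)
		}
		time.Sleep(interval)
	}
}

func main() {
	vmis := []VMI{{Name: "vmi-1", Phase: "Running"}, {Name: "vmi-2", Phase: "Failed"}}
	err := waitAllRunning(func() []VMI { return vmis }, time.Second, 10*time.Millisecond)
	fmt.Println(err) // VMI vmi-2 unexpectedly failed
}
```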

B

Locally, just use `make cluster-up` and `make cluster-sync`, and then run that functional test. I would suggest lowering the count: go into the functional test, and I'm sure there's some number that sets how many VMIs get created. I think it's probably 100 or so; just make it five and you'll be able to...

A

Okay, okay, that's fine. I think, then, maybe that needs to be the next step here, because yeah, I think you're right: if this runs well after the teardown, we're not going to get the count I'm looking for. Okay, I think that kind of settles that. So I guess it's really a matter of whether I can make any progress on that before next Thursday; then we might at least have some more numbers, or at least something we can learn from in the results. Otherwise, until then, for this action item we're just going to wait until we have a little more information before we can at least start defining some thresholds.

B

You have some custom stuff in your environment, some custom environment variables. If you do a `printenv` (which I wouldn't do in front of me or on a recording, because it might have something like a credential in there), you probably have some custom KubeVirt-related ones; I can see there's Docker-registry-related stuff. So it's picking that up. I would start from a clean setup.

So here's what I would suggest, just to make all this go away: start fresh. You have two code changes, in vmi.go and vm.go. Make those two changes on a fresh branch, then run `make generate` and see what happens. You might have to run `make deps-update` as well, or at least `make deps-sync`; I think that might do it.

B

What you aren't guaranteed is that two separate VMIs won't be processed in parallel. That's where threadiness comes into play: you can have multiple keys being acted on at the exact same moment, but a single key will only be acted on once at any given moment. You can't have the same key being acted on multiple times at once.
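To make the per-key guarantee concrete, here is a self-contained sketch loosely modelled on the dirty/processing bookkeeping in client-go's workqueue (not its actual code). `threadiness` is the number of worker goroutines; the `keyQueue` type and the `run` helper are hypothetical, and to stay short `Get` returns immediately on an empty queue instead of blocking like a real workqueue.

```go
package main

import (
	"fmt"
	"sync"
)

// keyQueue sketches the guarantee described above: different keys may be processed in
// parallel, but a key is never handed to two workers at once. Get does not block.
type keyQueue struct {
	mu         sync.Mutex
	queue      []string
	dirty      map[string]bool // keys waiting to be processed
	processing map[string]bool // keys currently held by a worker
}

func newKeyQueue() *keyQueue {
	return &keyQueue{dirty: map[string]bool{}, processing: map[string]bool{}}
}

// Add queues key once; if the key is in flight, Done re-queues it afterwards.
func (q *keyQueue) Add(key string) {
	q.mu.Lock()
	defer q.mu.Unlock()
	if q.dirty[key] {
		return
	}
	q.dirty[key] = true
	if !q.processing[key] {
		q.queue = append(q.queue, key)
	}
}

// Get hands out the next key and marks it in flight; ok is false if the queue is empty.
func (q *keyQueue) Get() (key string, ok bool) {
	q.mu.Lock()
	defer q.mu.Unlock()
	if len(q.queue) == 0 {
		return "", false
	}
	key, q.queue = q.queue[0], q.queue[1:]
	delete(q.dirty, key)
	q.processing[key] = true
	return key, true
}

// Done releases key and re-queues it if it was added again while in flight.
func (q *keyQueue) Done(key string) {
	q.mu.Lock()
	defer q.mu.Unlock()
	delete(q.processing, key)
	if q.dirty[key] {
		q.queue = append(q.queue, key)
	}
}

// run processes five keys with `threadiness` workers; each key re-adds itself mid-pass
// until it has had three passes. Returns total passes and same-key overlaps (expected 0).
func run(threadiness int) (passes, overlaps int) {
	q := newKeyQueue()
	for i := 0; i < 20; i++ {
		q.Add(fmt.Sprintf("default/vmi-%d", i%5)) // duplicates are collapsed
	}
	var mu sync.Mutex
	active := map[string]bool{}
	count := map[string]int{}
	var wg sync.WaitGroup
	for w := 0; w < threadiness; w++ {
		wg.Add(1)
		go func() {
			defer wg.Done()
			for key, ok := q.Get(); ok; key, ok = q.Get() {
				mu.Lock()
				if active[key] {
					overlaps++
				}
				active[key] = true
				count[key]++
				passes++
				again := count[key] < 3
				mu.Unlock()
				if again {
					q.Add(key) // simulates an update event arriving mid-reconcile
				}
				mu.Lock()
				delete(active, key)
				mu.Unlock()
				q.Done(key)
			}
		}()
	}
	wg.Wait()
	return passes, overlaps
}

func main() {
	fmt.Println(run(4)) // 15 0
}
```

Re-adding a key while it is in flight only marks it dirty; it goes back on the queue when `Done` is called, which is why the overlap count stays at zero no matter how many workers run.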

B

So you can't get the fine granularity you're looking for, but you can at least know which key it belongs to. It's not very helpful, really, but you'd know what happened. So it would all be local: that trace would be local to the execute function, and you wouldn't have to worry about it being a global variable.
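A sketch of keeping the trace local to the execute function: one trace is created per call, so each trace belongs to exactly one key and no global map is needed. The `trace` type and this `execute(key)` signature are hypothetical illustrations; Kubernetes' `k8s.io/utils/trace` package provides a production version of the same idea.

```go
package main

import (
	"fmt"
	"strings"
	"time"
)

// trace is a hypothetical, minimal step tracer. A new one is created inside every
// execute call, so it never needs to live in a global variable or shared map.
type trace struct {
	key   string
	start time.Time
	last  time.Time
	steps []string
}

func newTrace(key string) *trace {
	now := time.Now()
	return &trace{key: key, start: now, last: now}
}

// Step records msg together with the time elapsed since the previous step.
func (t *trace) Step(msg string) {
	now := time.Now()
	t.steps = append(t.steps, fmt.Sprintf("%s (%v)", msg, now.Sub(t.last)))
	t.last = now
}

func (t *trace) String() string {
	return fmt.Sprintf("key=%s total=%v steps=[%s]",
		t.key, time.Since(t.start), strings.Join(t.steps, ", "))
}

// execute is a hypothetical reconcile pass for one queue key. It returns the
// trace so the caller can log it; a real controller might log only slow passes.
func execute(key string) *trace {
	tr := newTrace(key) // local to this call: concurrent keys never share a trace
	// ... look up the object for key in the informer cache ...
	tr.Step("fetched object")
	// ... compute and apply the required changes ...
	tr.Step("synced")
	return tr
}

func main() {
	fmt.Println(execute("default/vmi-1"))
	fmt.Println(execute("default/vmi-2"))
}
```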

A

The piece was that I was doing the tracing by... I think I was taking it all over the place. I basically took the trace and imported it everywhere, and I created a map keyed by the key, just like you're saying, and kind of moved it around. I think I even moved it outside of this function; that's why I used it that way, or something. Okay, I'll play around with this.

B

So I don't want to... I want to make sure that I'm actually picking VMs that can be removed, or scaled in. Otherwise it would just be less efficient: scaling in would eventually occur, but I might do a few iterations of scaling in rather than efficiently targeting only the ones that are eligible to be scaled in. The end result would be the same, though.
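A minimal sketch of picking only eligible VMs during scale-in. The `VM` type and `pickScaleInCandidates` are hypothetical, and treating "eligible" as "deletion not already requested" is an assumption based on the discussion that follows, not the pool controller's actual rule.

```go
package main

import "fmt"

// VM is a hypothetical stand-in for a pool member. Deleting stands in for
// "metadata.deletionTimestamp is set", i.e. deletion was already requested.
type VM struct {
	Name     string
	Deleting bool
}

// pickScaleInCandidates returns up to n VMs to delete, skipping VMs that are
// already being deleted (the eligibility rule is an assumption of this sketch).
func pickScaleInCandidates(vms []VM, n int) []VM {
	if n <= 0 {
		return nil
	}
	picked := make([]VM, 0, n)
	for _, vm := range vms {
		if vm.Deleting {
			continue // requesting deletion again would not shrink the pool any further
		}
		picked = append(picked, vm)
		if len(picked) == n {
			break
		}
	}
	return picked
}

func main() {
	vms := []VM{{Name: "vm-0", Deleting: true}, {Name: "vm-1"}, {Name: "vm-2"}}
	for _, vm := range pickScaleInCandidates(vms, 1) {
		fmt.Println("deleting", vm.Name) // deleting vm-1
	}
}
```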

B

So let me first address the orphan adoption. Orphan adoption and attach are the same thing. I need to remove that logic; it doesn't do anything today. It won't attach anything. It would just queue the pool so attachment could occur, but attachment doesn't occur anymore, so it's just useless logic. All right, line 496.

A

Yeah, yeah. So when I imagine it: let's say we're scaling in, and the deletion succeeded for some VMs. We attempted to delete them and succeeded in that, and the deletion timestamp ended up on the VMI, so we're actually attempting to delete them. But what if they never get deleted for some reason? They're just not removed. Then let's say we do another scale-in, and we now have these ones with deletion timestamps. Do we just keep deleting them? The state I'm wondering about is: can we get into a place where we just keep deleting the same VMs and they never get deleted?

B

I'd have to look directly at the logic to be certain we're not going to get into that state, but let's come up with a hypothetical scenario. We have a replica count of 10, we scale it down to nine, and the VM that's getting deleted is just stuck with the deletion timestamp and isn't going away for whatever reason. If you then scale down to eight, a different VM is going to be picked for deletion. We aren't going to get stuck on that first one.

A

It's just that we don't want to keep trying to delete the same one over and over again and report that we're scaling in when we're actually not, because we keep filtering for these deleted VMs when they're actually just not being deleted, for whatever reason. In that case, we let the user clean them up. Okay, it sounds like that's the case.

B

Cool, so here's the other thing. If you look at the line right under that, line 498: when I come up with the count of how many VMs we need to remove when scaling in, I'm counting the ones that are already being deleted in that count, so we're always going to be scaling accurately.

If a VM takes a really long time to scale in, it's not going to cause anything wonky; it just means we know we're attempting to scale in, but it's taking some time for that to happen. If we scale in further, that's just going to be in addition to that. It's not going to cause us to overshoot scale-in or scale-out or anything like that. We always understand what the declared state is, and we understand what's currently in action: whether a VM is being created or is in the process of being deleted. We take that into account when we decide what actions need to occur next.

So in my hypothetical scenario, we had a VM pool of size 10 and went down to size nine, so we have one VM that's in deletion and just not going away, and then we go down to ten... I'm sorry.
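The counting rule can be sketched as simple arithmetic. This is an assumption about how the rule described above might be expressed, not the pool controller's code: `total` counts every VM the pool owns, `deleting` counts those that already have a deletion timestamp, and `desired` is the replica count.

```go
package main

import "fmt"

// scaleInCount is a hypothetical sketch of the counting rule described above: VMs
// already being deleted are subtracted before comparing against the desired
// replica count, so a slow or stuck deletion is neither repeated nor
// compensated for with extra deletions.
func scaleInCount(total, deleting, desired int) int {
	remaining := total - deleting // VMs left once in-flight deletions complete
	if n := remaining - desired; n > 0 {
		return n
	}
	return 0
}

func main() {
	// Pool of 10 scaled to 9: one VM is asked to delete, then gets stuck.
	fmt.Println(scaleInCount(10, 0, 9)) // 1
	// Scaled to 8 while that VM is still stuck: exactly one more VM is removed.
	// Ignoring in-flight deletions would ask for 10 - 8 = 2 more and could leave 7.
	fmt.Println(scaleInCount(10, 1, 8)) // 1
}
```

Paired with skipping VMs that already carry a deletion timestamp when choosing what to delete, the one extra deletion lands on a different VM, which matches the 10, 9, 8 walk-through earlier in the discussion.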

A

Okay, cool. Okay, so we won't get into that situation. That's very good. Okay, all right! I can resolve this... oh, I can't, but okay, you can ignore that. I think that's good. Okay, yeah, that's all I had for now. I'm still making my way through this file; I think I stopped at about line 496.

B

Yeah, it's really, really tedious. And if you just want to understand the general behavior of how things work, the functional test exercises some of these edge cases. So the test file in the tests directory is a good resource, I guess, just to see how this is exercised.

A

No, I think... I don't know. Yeah, I think we're good. All right. And then, Dave, I'll leave it to you to decide. I'll mark it here, but if you decide there's nothing, send out an email. And I guess one thing I'll do: I'm going to make sure that the meeting time is correct, since...

A

I was told that our time might be a little off, so I'll get with Chris and get that sorted out. But as you said, David, send an email once you decide, whatever the case, whether there's a meeting or not. Okay, that's good. All right, all right, thanks! Thanks, guys. Talk to you later, have a good day. Bye.