►

From YouTube: 02 - Introduction to Neural Networks I - Mustafa Mustafa

Description

Deep Learning for Science School 2019 - Lawrence Berkeley National Lab

Agenda and talk slides are available at: https://dl4sci-school.lbl.gov/agenda

A

Again,

Brenda's

done

a

great

job

with

setting

the

stage

on

general

taxonomy

of

methods

and

solutions

in

in

machine

learning,

but

obviously

the

workshop

is

around

deep

learning

for

science.

So

most

of

us

gonna

really

take

a

deep

dive

on

on

deep

learning

methods

so

most

ways

a

machine

learning

engineer

in

the

in

the

data

and

analytics

services

group

at

a

desk

and,

as

I

mentioned

earlier,

he's

really

the

the

mastermind

behind

the

deep

learning

summer

school.

So

really

any

feedback

that

you

have.

B

B

The

second

talk

to

tomorrow's

morning

session

by

then

you

will

be

deep

in

the

trenches,

so

Joel

will

talk

about

training,

very

large-scale,

deep

learning,

models

on

large-scale

data

sets

and

all

the

old

also

to

practical

questions

and

concerns

that

arise.

So

it's

a

steep

curve

from

here

but

till

tomorrow

morning,

essentially

at

the

end

of

the

morning

session,

and

then

after

that,

most

of

the

topics

will

be

essentially

a

tour

de

force,

so

they

all

the

methods

and

the

applications

where

people

have

applied.

B

Deep

learning,

so

it

will

border

somewhat

between

applications

and

also

latest

research.

During

my

talk,

please,

if

you

have

any

question,

just

raise

your

hand

and

ask

I'll,

try

to

repeat

the

question

or

you

won't

having

a

chance

to

pass

microphone

during

their

talk,

but

please

interrupt

me

and

ask

any

question.

You

have

okay.

So

before

on

with

my

talk,

you

can

come

here

and

attend

a

week

of

talks

and

lectures

or

you

can

take

an

online

class

on

you

know

there

are.

There

is

no

shortage

of

online

classes

for

teaching

deep

learning.

B

However,

I

really

think

that

if

you

want

to

understand

the

all

the

intricacies

of

everything

that

goes

into

doing

deep

learning

in

practice,

you

really

need

a

solid

undergraduate

level

course

in

deep

learning.

There

is

one

such

course

online

and

I

think

if

you

have

done

deep

learning,

you

probably

know

this

course.

It's

the

stanford

cs

231

course.

The

videos

are

from

spring

2017

the

lectures

the

lecture

slides

have

been

updated

with

spring

2018.

I

think,

but

the

videos

the

videos

are

are

great.

B

So

if

you

really

want

to

do

deep

learning

for

a

living,

take

some

time

to

actually

go

through

these

lectures

and

probably

the

hormones

as

well,

there

are

about

fourteen

lectures.

There

are

less

lectures,

then

you

will

take

this

during

this

week.

So

it's

not

really

a

lot

of

work

and

during

making

my

slides

for

today

and

and

tomorrow,

I

made

actually

great

use

these

lectures.

You

will

see

a

lot

of

snapshots

from

doors.

I

also

make

good

use

of

deep

learning

books.

B

These

are

two

excellent,

deep

learning

books

that

you

probably

have

seen

these

before

and

there

are,

but

there

are

many

others.

Another

thing

is

engaging

with

research,

I

think

there

is,

you

know

if

you

want

to

do

deep

learning.

Nowadays,

you

really

have

to

be

up-to-date

on

a

lot

of

the

research

that

is,

that

is

happening,

especially

if

you're

using

a

cutting-edge

sort

of

model.

I

do

remember

that

we

ever

talked

in

November

last

year

about

the

scaling,

training,

deep

learning

scaling

at

scale

and

by

January.

B

We

were

about

to

give

another

talk

and

we

had

to

update

the

slides

with

the

latest

research

that

happened

in

December.

So

a

lot

of

stuff

are

happening,

especially

if

you're

working

on

cutting-edge

methods,

the

still

dot

up

is,

is

a

it's

essentially

a

journey.

The

pedagogical

journal,

where

they

try

to

to

essentially

expose

a

lot

of

important

machine

learning

and

deep

learning

concepts.

B

Some

of

them

are

latest

research

sort

of

concepts,

and

some

of

them

are

fundamental

to

everything

that

we're

doing

like,

for

example,

why

does

gradient

descent

with

momentum

work

so

to

make

sure

to

check

out

this

when

you

have

some

time?

Okay,

so

that

aside,

the

talk

for

today

I'll

try

to

essentially

talk

about

the

neural

network

and

neural

networks-

basics,

ok,

trying

to

find

this

new

networks-

basics

Brenda-

did

talk

about

this

I

want

to

go

through

those

basics.

B

Again,

after

that,

we

will

talk

about

how

do

we

optimize

these

neural

networks

and

how

essentially,

how

do

we?

How

do

we

construct

this

problem

of

optimizing,

a

neural

network

and

then

how

do

we

actually

find

those

parameters

of

the

neural

networks

we'll

talk

about

in

practice?

What

we

do

to

monitor

that

the

training

or

the

learning

process

of

those

networks

and

then

at

the

end,

we'll

get

into

convolutional

neural

networks,

basics

I'll!

Try

to

keep

this

talk

at

a

conceptual

level,

so

you

won't

see

a

lot

of

math.

B

You

won't

see

a

lot

of

practical

tips

and

and

nitty-gritty

details

of

actually

King

these

things

work.

Those

will

defer

them

to

tomorrow

morning.

It's

inevitable

that

there

will

be

one

equation

or

another.

So

if

you

look

back

at

the

history

of

of

gira

networks,

you

immediately

realize

that

a

lot

of

the

terms

that

we're

using

right

now

did

appear

before

right.

So

we

talked

about

perceptrons

there.

Nineteen

fifties,

you

probably

have

seen

back

propagation

somewhere.

This

is

nineteen

seventies

lsdm

and

of

the

1990s.

B

So

people

have

been

working

on

this

for

a

while

a

lot

of

the

technologies

that

we

use

right

now,

they're,

not

new

right.

However,

it's

only

the

this

explosion

of

results,

of

applying

deep

learning

or

and

successes

and

deep

learning

has

only

happened

recently,

as

Brandon

I

mentioned.

There

are

factors

for

why

this

has

happened.

First

of

all,

we

do

have

data.

B

We

have

a

lot

of

lot

more

data

than

we

had

before,

something

that

distinguishes

the

performance

of

shallow

learning

sort

of

methods,

the

ones

that

Brenda

talked

about

like

SVM

clustering

methods

and

all

those

sorts

of

sorts

of

problem

models

from

deep

learning

is

that

shallow

learning

methods

they

turn

to

Plateau

their

performance

tends

to

plateau

after

a

certain

amount

of

data

beyond

that.

Actually,

beyond

that,

it

becomes

very

expensive

to

to

evaluate

them

and

train

them.

B

For

example,

in

clustering

and

clustering,

you

might

have

N

squared

sort

of

algorithms,

but

they

also

tend

to

plateau

in

performance.

Deep

learning

is

very

data

hungry.

You

will

see

tomorrow

in

Jules.

Talk

that

deep

learning

performance,

their

models

tend

to

have

a

power-law

dependence

on

the

amount

of

data

that

you

train

on,

and

they

continue

all

the

way

until

an

irreducible

error,

where

you

can't

get

more

performance

than

that.

B

So

the

available

availability

of

such

datasets

to

actually

build

models

on

is

an

essential

component

of

why

deep

learning

now

has

worked.

The

other

thing

is

being

able

to

calculate

all

of

these

big

matrices

very

quickly,

and

that

has

happened

thanks

to

all

the

gamers

by

GPUs

right.

If

you

look

at

the

a

plot

like

this,

for

example,

this

is

the

error

of

the

winning

imagenet

competition,

winning

algorithm

and,

and

then

the

number

of

users

in

blue

the

error

is

in

is

in

red.

B

You

see

that

2011

was

the

last

time,

then

that

non

deep

learning

methods

was

was

used,

and

that

was

the

era

was

about

26%,

deep

learning,

the

first

time

that

it

won

this

competition.

It

reduced

the

error

from

about

by

about

10%

from

26%

to

16%.

You

see

that

this

was

a

tremendous

jump

right,

and

that

was

the

very

first

time

the

GPUs

were

used

for

such

algorithms.

B

Yes,

this

is

the

error

rate

of

the

winning

algorithm

in

the

competition

so

being

able

to

calculate

to

build

bigger,

neural

networks,

bigger

functions

and

optimize

them

is

an

essential

component

and

why

this

works

now

and

last

is

that

that

a

succession

of

algorithms

that

we

have

seen

on

the

on

the

previous

plot

has

finally

actually

has

finally

worked.

So

people

were

thinking

of

better

optimizers,

better

regularizer,

third

normalization

methods,

all

of

these

algorithms.

Without

them

things

don't

work,

and

you

will

see

tomorrow.

B

A

B

That's

it

I

want

to

get

into

talking

more

about

deep

learning.

So

this

is

the

long

story

short.

This

is

essentially

everything

that

we'll

be

talking

about

today.

So

what

deep

learning

is

is

a

family

of

parametric,

nonlinear

and

hierarchical

representation

learning

functions,

so

they

try

to

learn,

representations

and.

A

B

Way

that

we

we,

we

optimize

them

they're

massively

optimized

with

stochastic,

gradient

descent

and

their

objective

is

to

encode

domain

knowledge.

How

do

I

look

at

the

data

and

try

to

learn

a

certain

task

from

this

data

set

and,

of

course,

the

domain

knowledge

can

be

a

variety

of

things

like

domain

and

variances

stationarity,

and

a

lot

of

other

stuff

will

try

to

decode

this

statement

in

this

talk

so

neural

networks-

basics,

as

Brandon

mentioned

this

morning,

what

we

try

to

do

with

we're

trying

newer

networks

and

generally

we

try

to

build

a

neural

network.

B

Generally,

we

try

to

build

these

models

that

try

to

approximate

relationships

that

we

have

in

the

data

right.

So

there's

we

have.

We

make

an

assumption

that

there

is

some

relationship

between

an

input,

X

or

an

observation.

X

and

an

output

Y

can

be

a

label

can

be

an

action,

can

be

whatever

sort

of

things

that

you

want

to

associate

with

the

X

we're

making

this

assumption

that

this

relationship

exists

and

then

we're

trying

to

learn.

What

is

that

relationship

we're

trying

to

make

them?

B

The

model

essentially

learn

that

relationship

so

that

we

can

take

that

model

and

then

apply

it

in

real

life

right.

The

simplest

way

of

doing

this

is

to

think

of,

instead

of

trying

to

build

them,

to

find

the

exact

right

answers

of

of

what

that

function

can

be,

we

can

think

of

a

sample.

We

can

think

of

it

in

a

simpler

way.

Right.

We

can

break

that

down

that

function

into

atomic

functions.

B

We

can

think

of

those

atomic

functions,

what

they

could

be

and

then

try

to

essentially

build

a

hierarchy

of

these

functions,

all

the

way

from

the

input

to

Y,

and

we

try

to

optimize

to

to

find

the

parameters

of

those

atomic

functions.

The

simplest

way

of

doing

this

is

to

think

of

affine

transformations

over

the

simplest

function.

You

can

think

of.

Is

a

linear

function

right,

you

take

an

input,

X

multiply

it

by

a

bunch

of

parameters,

and

that's

it.

That's

your

output.

B

We

add

the

bias

here

because

we

need

like

what,

if

the

input

X

is

not

centered

around

zero

right,

so

you

need

the

bias,

but

this

is

so.

This

is

linear

with

the

biases

and

affine

function.

We

stack

these

functions

so

the

the

output

of

the

first

hidden

layer

of

the

output

of

the

first

operation.

We

call

it

the

hidden

layer.

One

goes

into

the

next

one.

The

output

of

the

next

one

goes

into

the

next

one,

all

the

way

until

the

output.

B

However,

if

we

do

this,

if

we

just

stack

a

bunch

of

linear

functions

after

each

other,

the

global

result,

the

final

result

will

be

a

very

big

linear

function.

Right

so

and

that's

not

very

useful.

We

are

trying

to

learn

very

complex

relationship

between

X

and

the

output

Y,

so

we

do

have

to

have

some

non-linearity

in

there.

So

what

we

do

is

we

take

the

output

of

the

first

layer.

B

We

pass

it

through

a

non-linearity,

and

then

we

call

that

the

activation

I'll

talk

a

little

bit

a

lot

about

that,

but

that

will

be

your

output

of

the

first

day

or

at

the

first

hidden

layer

and

then

that

one

goes

into

the

next

one.

So

essentially

we

have

atomic

functions.

We

pass

the

outputs

by

atomic

conscience

into

some

non-linearity

and

then

we

build

a

hierarchy

of

such

operations

and

that's

what

we

call

a

neural

network.

So

in

this

there

are

a

few

terminology

here

to

remember

this

is

the

input

layer

called

an

input

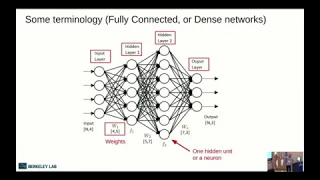

layer.

B

B

Each

hidden

layer

has

a

bunch

of

parameters,

these

are

weights

and

they

also

have,

and

then

there

is

an

output,

their

activations.

So

the

idea

of

activations

is

essentially

that

idea

of

having

a

non-linearity

and,

and

then

your

network

and

the

way

that

it

works,

is

that,

as

you

saw

before,

we

have

a

number

of

features,

those

number

of

features

they

are

weighted.

We

calculate

their

weighted

sum,

which

is

essentially

by

multiplying

them

by

W.

We

add

a

bias.

We

pass

that

through

an

activation

function

and

we

call

that

the

output

of

the

activation

function.

B

This

is

where

the

analogy

to

real

neurons

comes

in

the

ideas

you

have

done,

the

writes

those

dendrites,

they

collect

signal

from

different

places

and

then

the

neuron

decides

whether

to

fire

or

not.

And

then

you

have

the

output

signals

which

goes

into

other

neurons

bit

of

terminology

here.

The

number

that

we

calculate

the

weighted

sum

is

called

a

pre

activation.

The

output

of

the

activation

function

is

called

the

activation

of

that

neuron.

B

So

how

do

these

activations?

Look

like

not

the

activations

the

activation

functions,

so

there

is

a

variety

of

them

and

if

you

look

at

papers

right

now,

you'll

see

that

only

a

few

of

these

appear.

We

will

talk

about

them

in

in

details,

some

of

them

at

least

in

details.

So

if

you

look

at

most

of

the

recent

papers,

you

will

see

that

riilu

is

the

most

common.

A

rectified

linear

unit

is

the

most

common

non-linearity.

B

Essentially

it

takes

the

input

and

if

the

input

is

positive,

it

passes

it

linearly

at

the

end,

but

is

negative.

It

just

chops

that

out

we'll

see

if

this

is

a

good

idea

or

not

a

leak,

you

riilu

has

the

same

thing,

but

it

leaks.

Some

part

of

the

negative

input

and

the

exponential

linear

unit

is

is

similar

to

the

to

the

raloo

and

and

in

the

positive

regime.

So

essentially

it's

near

in

there,

and

then

it

has

an

exponential

in

the

in

the

negative

region.

B

The

reason

that

we

use

any

of

them

or

one

of

them

or

the

other

is

mainly

because

of

computational

efficiency

and

also

for

optimization

ease.

Is

it

easier

to

optimize

a

neural

network

with

one

of

these

versus

the

other?

Sometimes

you

want

to

try

in

your

own

network

and

see

that

one

of

them

works

better

for

you

they

can

the

tantor

the

hyperbolic,

hyperbolic

tangent

you

you

see

this

mostly

in

recurrent

models

nowadays,

I

think

you

will

hear

more

about

this

later

this

week.

Sigmoids

and

Contra

are

also

used

in

as

output

layers.

B

B

Hypertension

hyperbolic

goes

from

minus

1

to

1.

This

also

is

a

nice

property

that

you

might

be

looking

for.

Ok,

so

that's

a

that's!

What

a

neural

network

is

now

we

said

that

we

want

to

build

this

mirror

network

to

try

to

approximate

some

relationship

in

the

right,

but

what

sort

of

relationships

can

we

approximate?

B

There

is

a

theorem

that

appeared

in

early

90s.

It's

called

a

universal

approximation.

Theorem

theorem

says

essentially

this

that

if

you

have

a

neural

network

with

one

hidden

layer,

it

can

approximate

any

continuous

function.

That

is

there,

given

that

you

can

have

as

many

neurons

or

hidden

units

in

that

layer

as

possible,

essentially

in

their

neural

network

with

a

linear

output

unit,

can

approximate

can

approximate

any

continuous

function,

arbitrary,

well,

given

enough

hidden

units.

B

So

the

reason

that

this

is

an

important

result

is

that

we

have

a

theoretical

guarantee

that

if

we

have

the

right

architecture-

and

if

you

have

the

right

capacity,

we

will

be

able

to

approximate

that

Easterns

principle

we'll

be

able

to

approximate

the

relationship

that

we

have

in

data

now.

Of

course,

this

theorem

doesn't

mention

anything

about

how

easy

it

is

to

find

the

parameters

of

of

such

a

network

right.

B

So

you

can,

you

can

have

random

parameters,

but

you

don't

necessarily

have

a

method

finding

the

right

parameters

to

approximate

your

function,

and

it

also

doesn't

mention

anything

about

it.

Also

missa

Tate's

here

an

arbitrary

number

of

hidden

units

and

that's

not

practical

right,

so

you

might

not

have

enough

hidden

units

to

actually

represent

the

relationship

that

you

have

okay,

so

we

talked

about

neural

networks

as

essentially

function,

approximator

z--.

B

B

I'm

sure

you

have

come

across

this

trick

before

you

build

the

cost

function

right,

so

the

basic

idea

is

that

you

want,

if

you

have

a

certain

target-

and

this

is

all

of

this-

will

be

talking

in

the

supervised

learning

I

just

set

up

just

for

illustration,

because

it's

easier

to

list

right

here,

but

it's

the

same

thing

and

unsupervised

learning.

You

will

have

some

target

that

you

want

to

achieve.

So

the

basic

idea

is

that

you

have

some

function.

B

B

We

assume

that

the

last

function,

essentially,

if

it's

high,

then

it's

bad.

If

it's,

if

it's

small,

that

means

the

output

of

the

neural

network

is

very

close

to

the

real

target,

and

then

you

average

all

of

that

over

all

of

your

data

set.

We

call

that

the

cost

function,

so

the

cost

function

is

essentially

the

average

over

many

examples

of

their

real

training

data

set.

B

Okay,

there

is

a

framework

called

empirical

risk

minimization.

So

if

you're

looking

at

any

sort

of

introductory

course

and

machine

learning

or

in

deep

learning,

you

will

see

this

this

framework.

The

basic

idea

is

that

what

we

really

want

to

achieve,

we

don't

want

to

have

our

network

do

very

very

well

on

the

training

data

set,

but

we

are

really

trying

to

do

is

to

have

it

do

well

on

a

data

set

up.

It

hasn't

seen

before

right.

This

is

what

we

call

the

generalization

error.

B

We

want

it

to

actually

generalize

beyond

the

data

set,

that

we

have

the

two

concepts.

So,

if

we're

only

trying

to

make

it

work

on

the

training

data

set,

that

would

be

called

optimization

if

we're

trying

to

make

it

work

on

an

unseen

data

set.

That

would

be

called

learning

right.

That's

the

goal

of

learning,

so

the

real

goal

is,

is

to

actually

have

the

cost

function

on

the

entire

data

set

to

be

really

really

low.

The

entire

data

set.

B

This

is

the

actual

the

data

generation,

Distasio

generation

distribution,

the

original

source

of

your

data

set,

but

we

don't

have

access

to

this

one.

This

would

be

called

the

true

risk,

that's

what

we

are

trying

to

minimize,

but

what

we

end

up

minimizing.

We

end

up

minimizing

the

empirical

risk,

which

is

the

same

quantity,

but

averaged

over

the

training

data

set

that

we

have,

and

we

call

this

the

empirical

risk.

B

The

reason

that

I

wanted

to

point

this

out

is

because

this

is

generally

the

at

least

a

theoretical

framework

from

where

all

of

this

starts

we're

trying

to

minimize

the

real

risk

on

data

generation

distribution,

but

we

end

up

doing

an

optimization

over

the

training

data

set,

and

then

we

hope

that

it

will

do

well

on

an

unseen

data

set.

Okay.

This

is

a

great

principle,

but

it

doesn't

in

reality,

it's

not

very

it's

good

to

to

think

about,

but

it's

not

how

we

build

these

cost

functions.

B

For

many

reasons,

it

turns

out

that

most

of

the

of

their

losses

that

we're

interested

in

this

empirical

risk

or

the

risk

that

that

we're

interested

in

most

of

the

time

it's

not

smooth,

so

you

can

think

of

the

risk

if

you're

trying

to

classify

cats

and

dogs.

What

you're

really

trying

to

to

say

is

this

image

a

cat

or

a

dog?

So

it's

a

zero

one

sort

of

risk

you

either

it's

either

a

dog

or

a

cat.

There's

no,

like

you

don't

give

me

problems

like

the

real

risk,

is

not

probabilities.

B

B

B

B

B

B

Normally,

that's

an

assumption,

so

you

say

that

the

difference

between

y

and

the

function

and

the

output

of

your

neural

network

I

want

that

to

be

distributed

normally

or

I,

assume

that

the

real

errors

and

the

data

sets

are

distributed

normally,

and

this

is

an

a

good

assumption

right.

It

says

that

if

the

if

the

output

of

the

neural

network

is

very

close

to

the

real

output,

it's

okay,

but

if

it's

very

very

far,

I

want

you

to

penalize

strongly

right.

So.

B

If

I

have

P

model

is

a

normally

normal

distribution,

I

can

plug

that

into

the

log-likelihood

and

then

P.

Remember

the

normal

distribution

is

exponential

to

the

power

of

the

mean

minus

the

F

here,

which

is

the

x

squared

and

then,

when

you

have

the

log,

it

cancels

the

exponential

and

you

end

up

with

there

l2

loss,

and

this

is

essentially

how

you

think

of

this

is

using

the

maximum

likelihood

to

build

the

l2

loss

in

a

similar

fashion.

B

B

Gradient

descent

right

like

this

is

the

oldest

trick

in

the

book.

You

have

a

function

you're

trying

to

minimize

that

function.

How

do

you

do

that?

You

take

the

derivative

at

the

point

where

you

are

and

then

the

derivative

points

in

the

direction

where

the

function

is

increasing

negative,

that

that

will

be

the

descent

direction,

and

then

you

take

one

step

in

the

direction

of

your

descent

and

you

update

your

parameters

all

right.

So

mathematically,

you

have

your

WK

and

then

you

take

the

gradient.

B

Okay.

You

need

to

remember

that

we're

talking

about

the

learning

great

or

the

step

size

where

this

is

where

it

comes

in.

We

will

talk

a

bit

about

this

later.

In

reality,

this

gradient

gradient

descent.

When

we're

talking

about

just

gradient

descent,

we

mean

take

your

entire

training

data,

set,

evaluate

the

gradient

on

the

entire

training

data

set

and

then

make

one

step

this

is

it

doesn't

work

really

in

reality

right.

Your

dataset

can

be

millions

of

images,

it's

extremely

expensive

to

actually

evaluate

your

last

function

on

the

entire

data

set.

B

Another

thing

is

that

you

don't

want

your

training,

the

complexity

of

your

of

training

or

optimizing.

Your

network

to

grow,

as

your

data

set,

is

growing

right

if

I

am,

if

I'm,

essentially

increasing

that

I'm

evaluating

the

entire

gradient

on

the

entire

data

set.

That

will

be

all

n

complexities,

linear

complexity,

but

you

don't

want

that.

So

in

practice

we

use

the

gradient

that

stochastic

gradient

descent.

B

We

say

instead

of

using

the

full

gradient,

let's

evaluate

the

gradient

approximate

that

or

with

just

a

small

number

of

of

examples

from

the

dataset,

and

we

hope

that

that

is

you

know

it's

good

enough.

It

will

give

me

a

good

idea

of

which

direction

to

go,

but

the

one

I

rely

too

much

on

on

the

gradient.

This

is

what

we

call

stochastic

gradient.

Descent

is

stochastic

because

those

examples

are

presumably

random,

so

you're

picking

them

randomly.

B

You

don't

want

to

have

a

lot

of

correlations

in

the

in

the

randomness

of

your

gradient,

so

this

came

in

the

beginning.

It

came

out

as

an

idea

for

how

to

do

this

iterative

process

of

doing

gradient

descent

or

optimization

much

faster

in

practice.

What

we

realized

is

that

the

noise

that

you

get

from

the

stochastic

nature

of

this

gradient

estimate,

essentially

the

difference

between

this

gradient

value

from

small

small

number

of

examples

and

the

full

data

set.

It

turned

out

that

that

noise

in

itself

is

extremely

important

to

optimize

these

neural

networks.

B

We

will

see

and

we'll

see,

a

plot

later

of

how

the

last

function,

the

surface

of

this

last

function,

might

look

like,

so

essentially

that

noise,

at

least

intuitively.

It

helps

to

kick

your

network

or

your

parameters

out

of

local

minimums,

so

that

it

goes

to

a

more

a

global

minima

and

in

fact

it

turns

out

that

the

larger

the

batch

that

we

use

the

more

problems

we

have

in

finding

there

are

a

good

many

minimizer

of

the

entire

network.

B

So

you

will

see

I

think

during

this

week

you

will

see

a

lot

of

discussions

of

large

batch

training.

How

do

I

do

training

with

a

larger

batch

okay,

so

two

things

to

point

out

here

is

that

the

learning

rate

and

the

mini

batch

size.

How

many

examples

do

you

want

to

use

in

every

step?

These

are

hyper

parameters,

and

these

are

examples

of

two

hyper

parameters

that

are

extremely

important

to

find

good

parameters

to

train

your

network

and

then

we'll

talk

more

about

this.

B

In

my

talk

and

then

also

in

other

talks,

there

is

a

hpo

talk

later

in

the

week.

Hyper

parameter,

optimization

talk

that

discusses

just

how

to

do

this.

This

stuff,

generally

right

now

to

first-order

using

a

small

batch

somewhere

between

1

and

32

and

powers

of

2,

is

reasonable.

This

is

what

you

will

see

in

most

in

practice.

Once

we

have

once

the

community

has

experience

with

a

certain

network,

you

start

seeing

larger

and

larger

batch

sizes,

for

example

ResNet.

You

will

see

that

most

of

the

time

people

train

with

256

batch

size.

Yes,.

B

B

Actually

there

is

a

lot

of

research

recently

that

is

doing

just

that,

but

so

essentially

it

says

that

when

I

am

when

I

start

from

random

parameters

at

the

very

early

stage,

I

want

to

have

as

much

noise

in

my

gradient

as

possible,

and

then

I

use

a

small

batch

size

to

make

my

steps

I'm

still

exploring

trying

to

kick

myself

out

of

all

the

local

mini

months,

but

once

I

get

to

a

flat

region

and

the

last

surface

I

can

take

a

more

confident.

There

are

less

problems.

B

B

Okay.

So

how

does

this

look

in

practice?

I

just

want

to

emphasize

that

yeah.

When

you

use

learning

great

that

are

off,

you

will

get

differently

lost,

curves

or

learning

curves,

and

you

will

need

to

really

need

to

find

the

right

learning

rate

okay.

So

how

do

we

find

the

the?

How

do

we

actually

do

this

in

practice?

So

if

you,

if

you

look

at,

if

you

look

at

examples

trying

to

visualize,

there

are

a

lot

of

these

we're

trying

to

visualize

the

last

surface

of

a

real

neural

network

on

a

real

data

set.

B

You

will

see

examples

like

this.

So

I

think

this

is

for

vgg

56,

which

is

one

of

the

winners

of

the

imagenet

competition

standard

model.

People

have

done

a

lot

of

stuff

with

it,

and

this

is

a

visualization

of

the

lost

surface

at

a

certain

point,

during

the

training

the

way

that

they

do

this,

they

try

to

find

two

directions

in

which

the

last

changes

the

most

and

then

try

to

visualize

it,

because

you

know

these

networks

have

tens.

B

If

not

hundreds

of

millions

of

parameters,

you

want

to

choose

two

directions

to

visualize,

to

make

a

surface

like

this.

Unfortunately,

we

can

plot

in

more

than

more

dimensions.

So-

and

you

get

something

like

this,

you

can

immediately

see

that

sort

of

trouble

that

you

can

run

into

right.

You

can

get

stuck

in

a

lot

of

local

minimis

if

you're,

if

you're

learning

great,

doesn't

doesn't

essentially

kick

you

out

of

these

local

minimums.

If

you

don't

have

enough

noise,

you

will

not

get

to

a

location

like

this

right.

B

You

can

also

see

that

you

can

get

stuck

optimizing

like

and

in

certain

places

you

can

get

stuck

in

saddle

points

right

instead,

just

like

you

know

in

your

in

your

pace,

you

can

also

see

immediately

here

that,

if,

if

you're,

for

example,

your

parameters

are

somewhere

on

the

surface

and

then

you're

you're

lost

you're,

your

learning

rate

is

very

small.

You

won't

travel

far

from

where

you

have

started

right,

but

if

your

learning

rate,

it

is

very

large,

it

can

essentially

catapult

you

all

the

way

to

arrive

in

somewhere

far

away.

B

B

There

is

a

range

of

optimizers

people,

don't

use

just

gradient

descent

in

practice.

The

first

thing

that

you

can

think

of

is

that

you

can

have

a

momentum

right.

So

if

you

have

a

ball

rolling

down

surface,

you

can,

instead

of

trying

to

do

only

locally

trying

to

make

your

step

based

on

the

local

gradient.

You

can

accumulate

your

speed

right

while

you're

coming

down

and

then

use

that

to

kind

of

give

you

a

sense

for

where

you

should

go.

The

general

direction

right,

so

stochastic,

gradient,

set

with

momentum,

would

be.

B

The

very

first

thing

to

do.

Nesterov

is

a

variation

over

this,

which

is

essentially

UI.

First

update

my

location

based

on

my

velocity

and

then

evaluate

the

gradient

or

or

evaluate

the

gradient

update,

and

there

is

a

range

of

other

things

like

other

grad

and

rmsprop.

Essentially,

they

try

to

use

the

size

of

the

of

the

gradient

along

the

way

to

estimate

by

the

size

of

the

step

that

you're

taking

once

you

get

into

other

grad

and

rmsprop.

We

start

having

different

learning

rates

for

different

crimes.

B

Different

updates

scales

for

different

parameters-

and

then

you

have

Adam

Adam,

is-

is

essentially

doing

somewhat

of

Armas

prop

Plus

momentum,

so

it

combines

the

two

ideas

and

then

it

also

tries

to

eliminate

any

bias

in

the

estimates

of

the

estimates

of

the

the

gradient

mean

and

and

variance.

This

is

very

high-level,

I'm

gonna,

probably

if

we

have

time

we

can

get

into

the

details

of

these

different

optimizers

tomorrow,

you

can

see

in

a

plot

like

this,

that

some

optimizers,

for

example,

pure

as

GD,

gets

stuck

there.

B

Of

course,

this

is

kind

of

a

diagram

just

to

show

in

principle

how

this

happens.

If

the

learning

rate

is

different,

it

might

not

get

stuck

right

if

there

is

some

noise

and

the

gradient,

it

also

might

not

get

stuck.

But

this

is

just

to

illustrate

the

idea

right

and

you

can

see

that

other

sort

of

optimizers.

B

B

That's

true,

so,

in

practice,

what

we

have

realized

is

that

a

lot

of

these

optical

parameters,

these

optimizers,

they

make

it

easy

to

optimize

network.

If

you

don't

know

what

parameters

to

use

so,

but

in

practice

the

best

sort

of

generalization

error

comes

when

you

use

as

gd+

momentum,

but

it

takes

a

lot

of

hyper

parameter,

optimization

to

be

able

to

find

the

right

parameters.

B

So

I

think

this

is

also

something

that

Brenda

I

mentioned.

Is

that

when

you

use

something

like

Adam,

you

don't

worry

a

lot

about

the

exact

value

of

your

learning

rate,

but

the

best

value.

At

least

you

can

start

experimenting

with

the

rest

of

your

model

without

having

to

worry

so

much

about

this

being

completely

off.

It's

less

sensitive

to

the

exact

value

of

the

learning

rate.

But

if

you

look

at

most

of

the

the

state-of-the-art

results

like

models

like

resonate,

for

example,

you

see

that

they

actually

use

SVD

plus

Compton.

B

B

The

other

question,

okay,

so

I

said

we

have

a

loss

function.

We

take

the

gradient

of

that

loss,

function

with

a

certain

parameter

and

then

the

parameter

of

the

network,

and

then

we

take

one

step

opposite

to

the

gradient

right.

But

we

have

a

lot

of

parameters

in

these

networks.

How

do

we

get

the

parameters

to?

How

do

we

get

to

the

parameters

inside

the

network

themselves?

Not

at

the

very

last

layer

and

the

output

layer?

B

For

example,

we

use

again

the

oldest

trick

in

the

book,

which

is

the

chain

rule

of

calculus

to

propagate

the

errors

from

the

last

function,

all

the

way

to

the

parameters

that

were

trying

to

update.

So

imagine

this

is

the

output,

Z

and

you're

trying

to

get

you're

trying

to

update

W.

You

need

to

actually

pass

through

the

entire

network.

Partial

C

by

partial

W

would

be

partial,

X,

partial

W,

partial

Y,

partial

X,

partial

Z,

but

partial

Y.

B

This

is,

if

you're

taking

a

class

like

CS

231.

You

will

see

that

they

spend

at

least

a

whole

lecture

an

hour,

15

minutes

talking

only

about

back

propagation,

how

you

do

actually

this

in

practice,

there

are

a

lot

of

things

that

you

want

to

to

take

care

of.

Essentially

how

do

you

do

it

efficiently

on

linear

ax,

on

on

modern

ax

aerators,

but

for

the

conceptual

understanding

of

how

things

work?

B

All

you

need

to

remember

is

that

there

is

a

chain

rule

and

your

gradients

are

actually

propagating

to

all

the

other

factors

that

you

have

in

your

network,

and

this

is

very

important

because

imagine

that

one

of

these

is

zero.

Imagine

that,

like

one

of

these

is

zero

or

one

of

them

is

extremely

small.

You

will

not

have

any

gradient

signal

going

back

to

area

layers

right,

which

would

kill

the

update

to

2

W

immediately

can

also

think

of.

If

this

is

extremely

large,

it

will

also

support

the

whole

thing

off.

B

B

Is

the

most

common

now

activation

function

and

the

networks

that

you

see

around

and

the

basic

form

of

the

function?

If

it's

linear

the

input

is

linear.

If

the

input

is

positive,

you

go

to

a

linear

response.

If

the

input

is

negative

at

zero,

there

are

a

lot

of

good

properties

about

this.

First

of

all,

it's

computationally

cheap.

It's

extremely

cheap

right.

B

The

other

and

compare

this

to

the

sigmoid

that

has

an

exponential,

we'll

talk

about

this

in

a

bit

it

has

exponential,

are

very

expensive

to

calculate.

Initially,

people

thought

that

sigmoid

would

be

a

good

way

to

do

it.

The

other

thing

is

that,

when

it's

positive,

the

slope

of

this

function

does

not

alter

the

the

slope

of

the

actual

output

of

the

neuron.

It

can

give

very

strong

gradient

signals

right.

B

You

see,

it's

not

dying

like

other

functions

right,

so

if

the

slope

here

is

good

to

for

a

gradient

propagation,

one

of

the

issues

with

riilu

is

that

if

the

output

is

negative

of

your

neurons

negative

it

essentially,

it

kills.

The

EO

kills

the

output,

but

also

kills

the

gradient

right.

We

just

said

that

if

this

has

slope

0,

so

there's

nothing

will

propagate

back,

so

this

leads

to

dead

neurons.

B

B

So

one

way

to

get

around

this

is

to

use

something

called

leaky

riilu,

which

is

essentially

keep

some.

You

use

some

some

part

of

the

negative

negative

part

of

the

of

your

input,

so

you

have

Alpha

X

alpha

times.

X

X

would

be

negative

here

right

and

then

alpha

between

0

&

1,

which

is

essentially

you

keep

some

leakage

in

your

function

so

that

gradients

can

propagate

back,

and

this

is

this

is

very.

This

is

very

important

in

practice.

B

You

will

see

that

one

of

the

ways

to

actually

monitor

if

your

network

is

doing

well

or

not,

is

to

look

a

lot

on

how

many

neurons

are

not

dead.

Okay,

two

other

activations

I

want

to

talk

about

the

first

one

is

sigmoid

so

sigmoid.

We

don't

use

it

inside

the

neural

networks

in

the

head

and

after

hidden

layers,

so

inside

the

neural

networks

anymore,

you

might

find

it

that's

it.

You

might

find

a

lot

of

things,

but

in

practice

we

use

it

to

represent

probabilities.

B

So

if

the

output

of

my

neural

network

has

to

be

somewhere

between

zero

and

one,

it's

very

easy

to

just

take

the

output

of

the

neural

network.

It

should

be

on

the

x-axis

and

then

that

will

give

me

it

will

squash

that

x-axis

into

0

to

1-

and

this

is

great

right

for

representing

Bernoulli

distribution-

it's

expensive

to

you

to

compute.

However,

if

you're

only

using

it

at

the

very

last

layer.

It's

ok

right.

B

One

thing

that

I

want

to

mention

about

sigmoid.

Is

that

actually

they

only

say

so

a

lot

of

what

you

will

see

right

now

and

stuff

that

you

think?

Oh,

this

is

great,

and

this

is

what

happens

to

all

of

us.

You

understand

the

different

details,

but

after

you

get

into

deep

learning

in

practice,

especially

if

you

are

in

application,

applying

deep

yearning

for

science,

not

doing

deep

learning

research,

you

get

into

the

practice

of

doing

neural

networks

as

plug

and

play.

So

you

say:

oh,

this

is

I.

B

Want

this

output

I'm

gonna

try

with

the

output,

which

is

between

0

and

1,

and

that

is

a

sigmoid

function,

gives

me

0

1,

that's

very

nice

and

then

you're

gonna

say:

oh

I'm

gonna

try

different

classes,

I'm

gonna,

try

maximum

likelihood,

I'm

gonna,

try,

l1

I'm

gonna,

try

l2,

and

there

are

fundamental

reasons

for

why

that's

a

bad

idea

to

use

any

random

loss

with

any

round

activation

function.

One

of

them

is

this

one,

so

you

can

see

here

that

the

output

of

a

sigmoid

it

has

extremely

vanishing

like

this

is.

B

B

B

B

B

Yes,

I

see

your

question,

so

the

question

is

release.

They

seem

to

be

linear

because

they

always

output

everything

linearly

right,

except

when

it's

positive,

when

it's

negative

and

the

answer

and

how

come

this

is

a

nonlinear

function,

the

answer

it

is

nonlinear,

because

the

fact

that

you're

actually

killing

the

negative

part

produces

all

of

these

farce

representations

when

you

compose

a

lot

of

them

after

each

other

you're

creating

you

know,

nonlinear

big

nonlinear

functions

and

the

idea

of

riilu

by

the

ways

it's

also

connected

or

inspired

by

nuance.

B

You

have

done

the

writes

and

then

they

either

respond

or

they

don't

respond

some

time

the

whole

neuron

might

respond

or

not.

And

then,

if

it's

just

a

zero

one

sort

of

response

you

still

create,

you

can

create

nonlinear

functions.

Okay,

so

the

point

I

was

trying

to

make

here

if

you're,

using

a

sigmoid.

Remember

that

you

have

an

exponential

in

there.

You

remember

that

you

have

this

vanishing

gradient

and

then

remember

that

that

needs

a

log

to

undo

the

exponential.

B

B

B

So,

in

this

case,

like

Bernoulli,

we

are

assuming

that

the

data

is

the

the

actual

output

is

distributed

according

to

her

knowledge

distribution,

we're

trying

to

match

that

it's

it's

much

better

for

learning,

it's

easier

to

actually

optimize

networks

with

these

distributions,

rather

than

to

try

to

optimize

on

the

original

distribution

that

it's

like

0

1,

for

example,

that

we're

looking

for

I.

Think

it's

it's

easier

to

illustrate

this

with

the

softmax,