►

From YouTube: 23 - Scaling NNs Training (Hands on) - Steven Farrell

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

A

Basically,

what

we

have

for

you

is

some

additional

hands-on

things

that

we

put

together.

This

is

part

of

a

deep

learning

at

scale

tutorial

that

we've

been

doing

at

a

number

of

conference.

Menus

I,

think

provide

had

mentioned

that

we

do

this

Torsten

lecture

earlier

is,

is

a

big

part

of

that,

so

that

and

these

hands-on

things,

the

examples

that

we

have

here

kind

of

make

up

the

meat

of

that

tutorial.

A

A

We

have,

and

unlike

the

unlike

the

tutorial

examples

from

earlier

in

this

week,

where

you're

just

lazy-

and

we

just

took

those

from

tensorflow

this-

we

actually

did

prepare

ourselves-

it's

not

using

Jupiter

notebooks,

but

it

has

kind

of

a

code

base.

You

can

submit

things

on

from

from

the

terminal

and

we

have

a

reservation

on

Cori,

so

you're

gonna

be

able

to

to

run

things,

but

there

will

be

some

peculiarities,

some

specific

things,

that

you

need

to

keep

in

mind.

A

A

Let

me

just

get

through

some

some

slides

first

and

then

you'll

have

some

time

to

play

with

things

the

school

ends

at

two

should

be

enough

time,

for

you

at

least

run

something

simple

on

some

number

of

nodes

on

Cori.

You

can

also

do

other

things.

If

you

don't

want

to

do

this

hands-on.

Of

course

you

can

go

back

and

do

some

of

the

other

notebooks

from

the

week

I

mean

there's

more

stuff

that

we've

heard

that

actually

was

relevant

to

some

the

examples.

Since

the

last

time

you

had

a

chance

to

do

hands-on.

A

I

will

also

call

out

that,

if

you're

interested

in

the

hyper

parameter

optimization

stuff

that

Ben

had

talked

about

earlier

this

week,

you

can

come

talk

to

him

and

he

can

help

you

I

think

he

can

help.

You

get

an

example

that

you

can

run

and

that's

another

another

option

for

you:

okay,

so

a

distributed

training

hands-on.

A

So

we've

seen

a

handful

of

these

really

kind

of

simple

data

sets

that

are

open

and

popular

for

benchmarking.

This

is

yet

another

one

I,

don't

think

you've

played

with

sigh

fartin

yet,

but

in

this

hands-on,

what

we're

going

to

do

we're

gonna

use

a

convolutional

neural

network

model.

We're

just

gonna.

Do

image

classification

you've

seen

this

already,

but

we're

gonna

show

you

kind

of

how

to

do

distributed

training

so

we're

gonna

use

a

resonant

architecture.

A

Resident

was

mentioned

earlier

in

the

week,

but

now

you

can

actually

look

at

some

code

and

play

with

it.

You

can

run

it

yourself

on

this

Syfy

10

data

set

so

sort

of

like

it's

not

too

different

from

M

NIST.

If

you

take

a

look

at

this,

so

it

has

some

natural

kind

of

image

things.

These

classes,

like

airplane

cat,

dog,

etc

only

ten

classes

fairly,

small

images

RGB,

that's

one

thing:

a

little

more

complicated

than

M

NIST,

which

is

only

single

channel

grayscale

right.

A

So

now

these

have

three

color

channels

slightly

bigger,

and

then

the

number

of

samples

is

basically

the

same

as

M

NIST.

So

this

dataset

in

this

problem

doesn't

really

require

large

scale.

You

don't

need

a

supercomputer

to

train

a

classifier

to

work

on

these

images

at

all,

but

this

is

something

that

you

know

we'll

be

able

to

kind

of

run

something

in

a

reasonable

time.

A

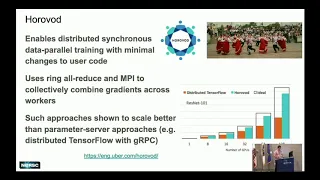

There

are

other

options,

but

that's

just

the

one

with

the

fewest

lines

of

code

you

have

to

change

Torsten

mentioned

about

earlier.

I'm

not

going

to

go

through

very

many

details,

but

just

sort

of

recap,

a

high-level

recap

so

horrible,

it's

this

library

produced

by

uber.

It's

named

after

this

kind

of

Russian

dance

where

he

danced

in

a

ring.

That's

because

you

have

these

kind

of

ring

based

I'll,

reduce

communications,

so

Harvard

enables

distributed

synchronous

data

parallel

training

with

minimal

changes

to

use

your

code.

A

So,

just

refresh

from

what

Torsen

was

talking

about

what

this

means,

the

synchronous

data

parallel

training.

So

we

have

some

number

of

workers,

let's

say

across

different

nodes

of

a

system.

Each

one

has

a

the

same

version

of

a

model,

the

same

set

of

weights

and

architecture

and

as

we're

sampling,

mini

batches

of

data

and

stochastic

gradient

descent.

We're

sort

of

distributing

these

mini

batches

across

those

workers,

so

each

worker

would

have

a

different

subset

of

the

overall

mini

batch.

They

process

a

mini

batch

in

parallel

and

then

there's

an

all

reduced.

A

That

happens

to

kind

of

collectively

kind

of

combine

the

gradients

to

get

the

overall

gradient

and

then

every

worker

can

do

the

same

update

to

their

model

parameters.

So

that's

the

synchronous,

data

parallel

training,

it's

the

most

common

way

to

parallel

eyes

and

speed

up

the

training

of

neural

networks.

A

Yeah.

So

just

then

one

thing,

I

think

I

think,

or

some

probably

mentioned

where

this

kind

of

comes

from,

but

using

all

reduce

an

MPI

has

been

you

know,

common

in

in

HPC

since

forever,

but

when

people

first

started

doing

distributed

training

of

neural

networks

like

tensorflow,

they

were

focusing

more

on

this

parameter

server

based

approach

where

all

the

workers

send

their

updates

to

one

special

process

called

the

parameter

server,

and

then

it

computes

the

updates

and

sends

everything

back,

and

this

just

sort

of

this

plot

that

uber

shows

basically

just

kind

of

hi.

A

It's

that

that's

a

potential

bottom

that

can

can

limit

scalability.

So

these

kinds

of

MPI

based

approaches

are

all

reduce.

Based

approaches

are

really

what's

popular

now,

so

this

example

code

that

we

have

isn't

going

to

show

you

all

the

really

fancy

cutting-edge

stuff

the

torus

didn't

talked

about

when

you

really

need

to

go

huge

scale

and

how

to

solve

all

these

large-scale

convergence

issues

batch

size.

You

know

I

captive,

batch

size

that

kind

stuff.

A

We

don't

have

any

of

that,

but

we

have

basically

here

the

the

basic

stuff

which

can

work

for

reasonable

scales

for

a

large

number

of

problems

and

people

okay.

So

what

we

use

specifically

is

so

again

synchronous

data,

parallel

training

and

your

weak

scaling,

which

what

we

mean

by

weak

scaling

here

is

weak

scaling

of

the

batch

size.

So

let's

say

you

have

a

single

node

batch

size

of

32

or

something

as

we

want

to

scale

up

to

multiple

nodes.

A

When

we

say

we

scaling,

we

mean

we're

keeping

that

local

batch

size

to

be

that

fixed

number,

so

32,

but

if

I

have

10

nodes

now

doing

computation,

the

global

actual

batch

size

is

32

times

10

yep,

so

using

horeb

odd,

we're

gonna

use

this

linear

learning

rate

warmup.

So

I

guess

I

forget

to

always

change

the

order

of

these

bullets.

Let

me

see

the

learning

rate

scaling

first,

so

we're

also

gonna.

Do

this

linear

learning

rate

scaling.

A

A

So

in

scaling

always

you're

trying

to

push

up

the

batch

size,

you

can

have

more

work

to

paralyze

and

then

correspondingly,

you

want

to

take

larger

steps

in

optimization

if

you're

using

more

data

to

take

the

same

step,

size

you're,

not

necessarily

gonna,

get

to

an

optimum

any

faster

you're,

just

wasting

more

computation.

So

always

the

goal

is

to

maximize

both

of

these

things

batch

size

and

learning

rates,

so

we're

gonna.

Do

the

linear

rate

saw

again.

A

10

workers

would

be

10

times

whatever

learning

rate

you

had

for

a

single

node,

and

then

we

have

learning

ripped

a

linear

warm-up

of

the

learning

rate.

So

it

was

shown

that,

starting

with

a

really

large

learning

rate

right

at

the

beginning

can

be

bad,

it's

better

to

start

with

a

small

learning

rate

and

then

ramp

up

to

your

large

ones,

so

to

kind

of

get

out

of

the

whatever

crazy

space

and

the

loss.

The

lost

space

that

you

that

you

start

out

with

okay.

So

that's

what

the

code

is

going

to

do

for

you.

A

This

gives

you

a

you

know

the

kind

of

minimal

description

of

what

you

need

to

do

to

enable

data-parallel

training

with

four

Avadh

in

your

code.

So

you

have

a

training

script

that

runs

on

a

single

node.

Now

you

want

to

go

multi

node.

You

basically

just

need

these

few

things

with

some

additional

small

caveats.

So

it's

basically

in

MPI

applications.

You

need

some

sort

of

initialization

at

the

beginning,

so

you're

gonna

have

some

four

vaad

in

it.

That's

going

to

do

the

rendezvous

between

all

the

workers,

so

they're

ready

to

communicate.

A

You're

gonna

have

an

optimizer

from

let's

say:

Karis

like

SGD

in

this

case,

stochastic

gradient

descent

optimizer.

This

is

things

to

work

yeah,

but

now

to

do

this

in

a

data

parallel

fashion.

All

you

really

have

to

do

is

wrap

it

in

this

class

provided

by

horribad

this

distributed

optimizer

very,

very

simple,

and

then

one

other

thing

you

do.

Is

you

add

a

callback

to

Kharis

that

does

the

synchronization

at

the

very

beginning,

before

training,

to

make

sure

all

the

workers

have

the

same

model?

A

At

the

start,

okay,

so

you

don't

do

that

synchronization

later,

all

you

do.

Is

you

synchronize

all

the

weights

at

the

very

beginning

and

then

through

training

you're

just

doing

the

I'll

reduce

on

the

gradients,

so

that

ensures

that

every

worker

is

doing

the

same.

Update,

therefore,

always

having

the

same

model.

The

same

set

of

parameters

in

the

model.

A

Yeah

then

that's

mostly

it

I

mean

you

got

to

pop

past

your

callbacks

into

the

model.

Dot

fit

function

like

you've

done

you've

used

this

before,

and

you

know

you

probably

some

examples

where

you

had

callbacks

and

then

you

want

to

launch

this

script

with

MPI.

So

you

want

to

say:

I

want

to

launch

10

ranks

10

workers

to

do

this

in

parallel

and

we're

on

Cori,

so

we're

gonna

use

slurm.

So

you

just

submit

that

with

s

ron

and

it's

gonna

work.

A

Ok,

so

we

have

a

separate

repository

for

for

these

examples.

It's

here

these

slides

were

sent

I

linked

these

slides

on

the

slack,

but

also

on

the

agenda

is

the

link

to

slides

through

that

you

can

come

to

this

slide

and

you

can

click

the

link

to

the

repository

or

you

can

just

navigate

to

github

nurse

can

find

it

that

way.

A

So

we

have

a

reservation

as

I

said,

so

you

can

try

the

example

out

on

quarry.

I'll

walk

you

through

it

briefly,

but

you

can

use

Jupiter.

You

can

actually

also

just

use

your

training

accountant

ssh

to

quarry.

If

you

want

I,

don't

know

if

I

have

the

exact

command

in

the

in

the

readme,

but

if

you

sort

of

already

know

how

to

SSH,

then

you

probably

know

how

to

do

that

or

we

can

help

you,

but

we

encourage

you

to

use

Jupiter,

but

we're

not

going

to

actually

run

notebooks.

A

A

We're

gonna

do

a

git

clone

of

the

repository

and

then

just

gonna

work

through

the

instructions

that

are

in

the

readme,

so

I

think

what

it's

gonna

do

is

gonna

suggest

you

can

run

on

a

single

node

to

get

a

feel

for

how

slow

it

is,

and

then

you

can

run

on

a

tour.

You

know

you

can.

You

can

probably

do

more,

but

I

suggest

you

start

with

eight

nodes.

Do

training

and

then

see?

A

Oh,

it's

faster

and

it's

still

converging

to

a

good

result

and

then,

after

that,

they're

kind

of

a

lot

of

things

you

can

play

with.

So

you

can

try

to

go

in

there

and

change

the

optimizer.

That's

used.

You

can

change

the

initial

learning

rate.

You

can

change

kind

of

how

it's

scaled.

You

can

change

the

amount

of

epochs

used

for

the

learning

rate,

warm

up

how

the

learning

rate

is

decayed

the

scaling.

A

So

actually

just

via

the

configuration

file,

you

can

do

linear

learning,

right

scaling

or

you

can

do

that

square

root,

scaling

that

Torsten

mentioned

and

then

the

the

basically

the

the

conclusion

or

the

takeaway

is

that

with

this

example,

with

the

configuration,

as

you

scale

up

and

notes,

you

do

see

training

time

go

down,

but

the

losses

still

converge

the

same

way.

So,

basically,

if

we

look

at

thirty

two

here

we're

getting

through

thirty

two

epochs

much

faster

when

we're

running

on

you

know

like

thirty

two

nodes,

but

we're

still

getting

the

same

result.

A

So

basically

we're

getting

we're

getting

to

our

answer

much

much

faster.

So

that's

good!

That's

the

kind

of

regime

that

you

want

to

be

in

question

this

one

scale

perfectly.

Ideally

you

would

have

that

linear

scaling

and

yeah.

There

are

some

particular

issues

why

this

codebase

is

not

perfectly

scaling,

but

it's

it's

not

too

bad.

Okay,.

A

So

there

can

be

some

performance

hits,

for

example,

because

we're

using

Cara's

here-

and

it's

not

today

the

most

optimal

but

yeah.

If

you,

if

you

know,

if

you

really

push

on

things,

to

use,

maybe

sort

of

lower

level,

tensorflow

and

and

kind

of

work

on

improving

the

do

some

optimizations

there's.

No

reason

why

you

can't

get

really

basically

linear

scaling

up

to

thirty-two.

That

should

be

easy

yeah,

but

if

you

really

want

to

go

up

to

like

thousands

of

nodes

like

Torsten

showed,

you

tend

to

have

to

do

some

additional

tricks.

A

Okay,

so

that's

that's

all

there

is

for

the

slides.

Let

me

just

show

you

a

little

bit

here,

so

this

is

what

the

repository

looks

like,

hopefully

you're

all

there

already

sort

of,

like

the

other

repository

I've

set

it

up.

So

there's

some

links.

That

should

be

the

right

link

to

go

to

Jupiter

using

the

same

Jupiter,

URL

I

already

got

kicked

out

so

now

we

can

see

this

GPU

note

is

what

we

used

before.

Don't

click

that

one

CPU

node

is

what

we

want

today.

A

So

if

you

use

GPU

node,

probably

you

just

won't

be

able

to

submit

the

jobs.

Probably

I

think

what

happen

is

when

you

try

to

do

the

S

batch

it'll

just

say:

I

can't

do

it

because

you're

in

like

a

different

kind

of

slurm,

the

whole

different

setup

for

the

system,

but

the

CPU

node.

So

this

is

gonna,

be

on

a

shared

node,

so

there

will

be

other

people

on

the

node

we're

not

going

to

run

an

extensive

computation

on

that

node

we're

just

using

Jupiter

to

launch

our

work

to

the

Corey

batch

system.

A

Okay,

yeah!

So

again,

when

you

click

this

you're

not

going

to

see

so

many

kernels

and

things,

but

that's

what

I

have

you'll

start.

The

terminal

like

we

did

before

and

we'll

clone

the

repository.

So

again,

I

have

the

nice

thing

here.

You

can

just

copy

paste

unless

you're

one

of

these

four

people

with

the

Windows

laptop

or

some

other

browser,

you

can't

copy

paste

into

Jupiter

laughs.

A

You

don't

have

to

use

Jupiter

yeah,

so

you,

if

your

windows

and

you

have

putty,

you-

can

just

use

SSH

because

again

we're

only

using

Jupiter

here

to

basically

get

a

terminal

well

and

the

file

browser

thing

is

kind

of

nice

right.

So

now,

I

see

it

over

here.

I

can

actually

look

at

the

repository

and

now

I've

got

the

scaling

tutorial.

Okay,

so

I've

got

everything

in

here

now.

A

If

you

look

at

the

instructions,

I

do

sort

of

describe

a

bit

the

contents

of

the

repository,

so

in

additional

takeaway

you

might

get

from

this-

is

it

kind

of

gives

you

an

example

of

how

you

can

set

up

a

project,

a

code

base

for

doing

deep

learning

with

Kara's

like

this?

How

this

is

I

mean

you

don't

have

to

do

it

this

way.

A

Obviously,

but

this

is

like

an

example

of

how

you

can

structure

things,

how

you

can

have

directories

and

configuration

files

that

are

written

in

gamal

to

make

things

nice

and

readable

and

configurable

and

flexible

yeah.

So

so

I

described

a

little

bit

here.

You

can

kind

of

take

a

take,

a

look

at

that

and

see

see

if

you

like

things

and

then

in

the

instructions

I

have

you

know

a

bit

of

stuff,

you

don't

have

to

go

through

it

in

detail.

It's

really

as

much

as

you

want,

but

I

do

suggest

you.

A

You

know

you

take

a

look

at

the

ResNet

code

because

I

don't

think

you've

seen

ResNet

code

yet

this

week,

so

you

can

kind

of

see

how

it

looks

in

Kara's,

it's

a

bit

more

complicated

than

when

we

define

a

sync

when

shield

and

just

added

layers.

So

it's

more

complex

than

that.

That's

probably

somewhat

obvious.

You

can

take

a

look

at

how

things

are

set

up

in

the

optimizer,

how

I

wrap

the

thing

in

the

horrible

optimizer,

but

you

also

already

saw

that

on

the

slide.

A

You

can

look

at

the

main

training,

script

and

kind

of

identify

the

key

points

that

I

mentioned

on

the

slides

of

where

we

do.

You

know

mod

in

it

where

we

put

in

that

callback

to

do

the

initial

broadcast

and

stuff

like

that.

These

config

files,

so

can't

just

show

you

the

easy

ammo

files.

This

is

just

a

really

nice

way

to

kind

of

configure

your

deep

learning

in

general.

You

can

kind

of

define

things

nicely

with

a

nice

hierarchy

here.

A

So

if

you

want

to

tweak

things

for

these

models,

you

can

come

in

here

and

change.

For

example,

the

learning

rate

you

can

change

the

learning

rate

scaling.

You

could

change

this

to

s

qrt,

then

my

code

will

interpret

that

as

a

square

at

scaling

and

so

on.

Okay,

hopefully

it's

all

self-explanatory,

but

we

can,

of

course

they

answered

questions

as

they

come

up

and

then

I

say

you

know

you

can

try

to

run

a

single

node

job.

You

might

want

to

change

the

number

of

epochs

before

you

do

that.

A

Just

maybe

set

it

to

one.

So

you

can

see

really

just

see

how

slow

it

is.

This

actually

I

fixed

this.

So

this

bullet

is

irrelevant.

You

can

ignore

that

now

you

don't

have

to

worry

about

running

a

multi,

node

job

right

away

and

then

trying

to

download

the

data.

It's

it's

safe

now

and

then

I

suggest

that

you

try

the

multi

node

thing,

so

you

can

take

a

look

at

these

scripts.

Basically,

we

we

just

made

a

very

convenience.

A

You

can

run

a

very,

very

simple

command

on

the

command

line,

but

you'll

be

those

scripts.

That's

where

it's

actually

going

to

do

that

s

run

command

to

just

start

up

the

training,

so

cipher

ResNet

I

mean

they

were

just

loading,

the

software.

This

is

actually

the

thing

I

added

that

makes

it

safe,

so

it'll

make

sure

the

data

is

downloaded

before

it

launches

all

the

processes.

And

then

you

just

do

something

like

this.

So

s

run

Python

training,

config

file

and

you're

off.

Okay,

so

that's

all

I

have

for

you.

A

You

can

try

these

out

question

yeah,

yeah,

so

running

on

K&L

we're

gonna.

Do

one

rank

per

node

in

this

case,

yeah

I

guess

yeah

you

could

so

you

could

actually

do

it

even

without

touching

the

script,

but

just

at

the

command

line

instead

of

s

patch

capital,

n,

8,

yeah,

but

I

do

have

a

fixed

kind

of

like

number

of

threads

configuration

you.

You

know

in

principle.

A

A

A

A

This

is

okay,

just

filled

148

days,

but

hopefully

that's

not

really.

Okay,

it's

already

running.

So

since

we

have

a

reservation,

you

shouldn't

have

to

wait

in

the

queue

it

should

start

right

away.

Unless

there's

somebody

in

here

who's,

not

nice

and

submits

a

thousand

node

thing.

It

uses

the

whole

reservation.

Okay,

be

nice.

We

do

have

a

thousand

twenty-four

nodes,

but

we

have

to

share

them

so

start

with

8

or

1

or

8.

Ok,

then,

the

lighter

you

can

you

can

try

more

I

mean

if

you

try

like

128.

A

It's

like

just

the

settings

that

are

here.

It

might

not

converge

again.

It's

a

simple

problem.

So

far

it

doesn't

scale

that

well

it's

hard

to

really

do

a

really

large-scale

32

nodes.

You

should

still

get

decent

convergence

and

it

should

be

fast.

Ok,

let's

start

with

8.

So

let's

see,

if

I

have

anything

in

the

log

file,

yet

not

really

using

tensorflow.

So

it's

it's

at

least

running,

but

you'll

start.

Oh

there

we

go.

A

You

start

to

see

it

saying

Oh

initialize

these

ranks

it's

gonna

print

out

some

stuff

and

eventually

it'll

start

printing

out

the

training.

You

know

you're

not

going

to

see

the

progress

bar

like

we

had

in

the

two

Paterno

books,

but

just

say

epoch

one,

and

then

it

will

print

out

the

lost

validation

loss

and

at

the

end

it

should

say

this

is

the

best

validation

loss.

I

got

okay,

so

we

have

45

minutes

so

feel

free

to

play

with

this

have

some

fun.

Try.

Other

examples

talk

to

Ben.

B

Okay,

so

some

people

are

having

problems

with

running

this

script:

hello,

yeah,

so

that

so

the

problem,

the

problems

are

them

so,

okay,

so

so

the

problem

is

because

you,

the

problem,

is

because,

if

you

go

in

by

Jupiter

it

doesn't

actually

make

this

scratch

directory,

where

it's

trying

to

save

the

files,

and

that's

because

these

are

all

fresh

accounts

and

the

fresh

accounts.

They

don't

make

this

directory

until

you

log

in

and

obviously

logging

by.

B

B

A

A

Ls

scratch:

that's

an

environment

variable

just

like

so

dollar

sign

and

then

all

capital

scratch.

If

you

don't

see

anything

in

there,

if

it

says,

doesn't

exist

or

whatever,

then

you

are

afflicted

by

this

issue.

So

if

you

just

do

a

normal

SSH

on

to

Corey,

with

your

training

account,

it

will

automatically

create

the

directory

and

apparently

that's

the

only

way

to

do

it.

So

to

do

that.

Ssh,

your

your

username

first

train

one,

two,

three:

whatever.

B

A

A

Thing

from

the

terminal,

SSH

Corey

yay

you

can

exit,

then,

if

you

want,

then

it's

all

good

I

think

you

might

be

related

to

the

kind

of

model

compilation.

It's

doing

we're

not

running

TF

2.0

in

this

hands-on

we're

running

the

old

tensorflow.

So

it's

not

all

dynamic,

eager

eager.

It's

doing

some

like

compilation

of

the

computation

graph,

and

then

we

run

it.