►

From YouTube: Getting Up to Speed on OpenMP 4.0 (Part 4)

Description

(4/5) Ruud van der Pas, Distinguished Engineer in the Architecture and Performance Group, SPARC Microelectronics, Oracle, also a co-author of the book "Using OpenMP" (published by MIT press), presented this tutorial on OpenMP 4.0 for NERSC users.

A

Ready

in

2

I'm

ready

to

go

again.

Thank

you

for

coming

back,

I

feel

a

million

pages

so

that

cooks,

a

good

sign

being

either

us

questions,

but

if

it

echoing

it

is

to

have

a

commercial

break,

but

at

the

start

of

this

this

show.

But

if

you

have

to

be

technical,

commercial

break

and

we'll

be

very

brief,

the

reason

is

that

I'll

be

showing

performance

numbers

on

our

spark,

processors

and

I.

Guess

very

few

of

you

are

familiar

with

what

that

is

about

so,

but

again

I'll.

A

Keep

this

very

brief

for

pushing

in

the

break

or

anytime.

I

can

always

talk

about

more,

so

we

currently

have

what

we

call

the

t5

processor.

The

team

5

processor

has

16

cores.

Each

core

is

running

at

Lincoln.

Six

echo

hoops

the

core

go

as

a

twist,

so

in

total

one

ship

isn't

28

feds

and

the

chip

is

designed

to

have

an

egg

way

directly

connected

system.

So

you

have

an

eight

socket

thousand

2930

system

or

point-to-point

connection

you

can

do

bigger,

and

then

you

have

a

second

layer

of

metal

and

visit

them.

A

This

is

a

simple

architecture,

a

simple

architecture

diagram.

It's

not

a

simple

architecture.

It's

a

very

interesting

in

my

system,

which

are

because

each

HT

five

has

national

memory

controller,

but

it's

a

fairly

flat

teaching

in

my

system.

The

ratio

is

about

think

about

forty

percent

when

you

go

off

your

socket,

but

it

still

pays

off

to

welcome.

A

Now,

what's

on

the

way

to

you

that

you

haven't,

we

talked

about

it,

so

this

is

disease

of

the

inflammation,

but

we

we

have

the

noun

system.

Yet

this

is

spike

m7,

that's

the

new

processor

and

we

have

a

new

court

redesigned

core

compared

to

the

team

5

32.

Now

on

the

chip,

the

speed

is

faster.

I

can't

give

them

the

final

Steve,

yet

again,

eight

sets

per

court.

That

part

is

pretty

much

the

same.

In

total,

give

you

250

six

cents

per

socket

and

again

this

system

can

go

a

gray

glueless.

A

What

I

want

to

mention

after

after

this

I'll

show

a

little

bit

about

the

architecture

but

again

64

divided

l3

cache

in

control,

so

the

strength

of

this

chip

is

actually

in

the

bandwidth

together.

Awful

lot

of

bandwidth.

The

measured

bandwidth

is

about

one

hundred

and

fifty

gigabytes.

A

second

family

member

and

we

can

go

too

much

bigger

systems.

The

question

is:

do

you

want

to

do

that?

How

many

people

actually

are

willing

to

pay

for

it?

It's

a

matter

of

business

side.

Technically

it

can

go

much

further.

A

What

I

find

interesting

is

this

is

a

first

ship

where

we

started

having

accelerate

but

not

lot

like

your

common

GPU.

These

are

integrated

and

and

have

specific

purposes

that

what

we

call

Dax

the

data

and

a

Linux

accelerators,

are

really

primarily

targeting

the

database.

To

celebrate.

Local

databases

doesn't

come

as

a

surprise

and

I

won't

say

anything

more

about

that.

But

it's

an

interesting

trend

in

what

you

do

when

you

can

put

more

things

on

the

chip,

is

a

little

bit

more

detail

on

how

it

how

it

works.

A

So

you

have

only

call

these

all

clusters

and

the

connected

digitally

connected

between

the

cheek.

What

the

last

thing

I

want

to

show

is

what

I

think

is

really

interesting:

has

nothing

to

do

with

performance

with

correctness,

and

it

is

the

underhood

name

has

been

changed

a

bit

in

generally,

we

settled

for

application,

data,

integrity

or

ABI,

which

is

kind

of

a

little

bit

of

a

funny

name.

But

basically

what

you

do

is

you

memory?

You

have

your

data

and

you

have

your

addresses

and

in

addition

to

that,

data

now

has

a

specific

color.

A

That's

the

way

to

see

it,

and

you

can

just

summarize

this

in

just

a

few

words,

but

it

is

I

think

pretty

nice.

If

you

try

to

access

data

that

does

not

have

your

collar

at

the

track

will

be

generated

and

that

prevents

at

hardware

speed

things

like

memory,

pepper,

overcoat,

overrun

problems.

If

you

try

to

Malik

outside

of

try

to

access

outside

of

what

you

Malik's,

all

the

nasty

memory

problems

that

have

been

available

in

tools

before

and

now

detected

a

hardware

speed.

So

we

have.

A

We

have

our

own

tool

to

do

this,

checking

into

called

discover

and-

and

that

takes

advantage

of

that.

So

the

huge

performance

penalty

that

you

usually

have

with

these

kind

of

checks

is

pretty

much

gone

with

it

and

I

think

that's

interesting,

who

had

a

correctness

feature

instead

of

always

you're

going

for

so

very

simple

idea.

If

you

try

to

load

from

something,

that's

not

your

color

and

a

signal

is

dead.

Ok,

that

was

the

commercial

I

hope

it

was

not

too

painful.

A

All

right

I'll

talk

about

the

mint

and

I

guess

you

can

guess

what

it

is.

Then

I'll

talk

about

some

of

the

darker

corners

of

the

openmp

building,

then

also

some

cases

and

there's

a

better

record.

The

myth

so

what's

amiss

is

something

widely

believed,

vaults

and

I.

Guess

you

can

can

imagine

what

that

is.

Let's

see

it

make

sure

works

and

commit

this

opening.

It

does

not

scale

and

you'll

find

that

in

too

many

places

people

saying

it

and

once

you

start

asking

it

turns

out

to

be

different.

A

So

what

I'm

going

to

talk

about?

What

I'm

going

to

show

you

now

is

got

to

be

a

little

like

wait

after

a

plan

is

I'll.

Show

you

an

imaginary

discussion

with

an

imaginary

person

who

comes

to

me

and

says

open

and

Peter's

not

together,

and

I

will

show

you

my

side

of

the

discussion

only,

but

I

think

it

will

be

clear

what

the

answers

are

from

the

others.

So

what

are

you

saying?

Opening

theme

doesn't

care

what

what

does

that

really

really

mean

think

about

it?

A

The

programming

model

and

not

not

scare

many

things

cannot

scalp

to

start

with.

The

implementation

could

be

very

poor

at

X

2

day

82

to

create

under

threats.

Then

it

doesn't

get

that

could

be

the

implementation

that

doesn't

scale.

It

could

be

that

you're

running

on

kind

of

the

wrong

type

of

hardware,

for

your

application

requirements

like

your

application

or

certain

needs,

and

you

pick

an

architecture-

that's

not

really

suitable

for

that

that

happens

to

or

it

could

be

kind

of

you.

A

You

wrote

something

in

not

knowing

what's

going

on,

and

that

turns

out

to

be

a

button

and

that's

what

I'll

be

talking,

how

how

you

can

prevent

a

big

balls

in

the

some

questions.

I

could

ask

so

my

first

question

will

be

so

you

acapella

program,

you

use

OpenMP

and

it

doesn't

perform

I.

Think

that's

that's

what

you're

really

saying

with

what

we

have

and

ok

I

see.

A

So

did

you

make

sure

the

program

is

very

well

optimizing

in

sequential

mode,

because

if

it

doesn't

run

well

on

one

core

or

do

you

think

will

happen

on

one

hundred

two

hundred

thousand,

it

won't

get

any

better.

It

actually

got

worse

very,

very

quickly,

so

you

didn't.

Why

do

you

expect

the

program

to

scaled

it?

A

We

just

think

it

should

you

use

all

the

course.

Thank

you.

Maybe

this'll

speed

up

estimate

using

Amdahl's

law.

No,

that's

not

the

new

EU

financial

bailout

program.

That's

something

else!

I

know

you

can't

know

everything,

but

you'll

need

use

a

tool

to

find

out

where

you're

spending

most

of

your

time,

profiler

you

didn't

you

just

paralyze

all

the

loops

in

the

program.

Okay!

Well,

having

done

that

that

your

boy

trying

to

avoid

that

your

voice

try

to

paralyze

the

inner

loop

in

the

most

loop

in

a

Leutnant,

it

didn't.

A

Did

you

minimize

the

number

of

fellow

Regents?

Then

you

didn't,

he

just

was

fine.

The

way

it

was.

Did

you

look

at

a

no

wait

close

to

minimize

the

use

of

the

barrier

we

never

heard

of

a

barium?

Maybe

maybe

should

read

a

little

bit,

so

all

threads

roughly

perform

the

same

amount

of

work.

You

don't

know

you

think

it's

okay,

okay,

well,

I,

hope

you're

right.

A

Did

you

maximize

the

usual

private

data

you

just

shared

all

of

it.

I

am

sharing

it

easier.

Ok,

judging

and

looks

like

he's

using

a

cc

Newman

system

that

you

take

that

in

to

become

neighborhood

of

that

either

making

someone

unfortunate

because

could

perhaps

before

sharing

the

affecting

your

performance,

never

heard

of

that

either.

Maybe

Mary

should

learn

a

little

more

about

those

things.

A

So

what

did

you

do

next

to

address?

Clearly

you

have

a

performance

problem.

So

what

is

your

doing?

The

next

to

address

that

you

switch

to

MPI?

Okay?

Does

that

perform

any

better

than

you

don't

know,

you're

still

debugging

the

code?

Well,

while

you're

waiting

for

that

debug

Roger,

let's

look

at

an

opening

p.m.

performance

in

there

we

go.

A

Definitely

the

ease

of

use

of

opening

p

is

a

mixed

convention.

I!

Think

for

those

of

you

new

to

open

up,

you

have

some

experience.

You'll

find

that

it's

not

so

hard

to

get

going.

The

problem

is,

it

may

be

terrible

for

performance

work,

while

in

other

models

you

go

through

it,

possibly

deep

learning

curve,

but

then

you

get

the

reward

with

open

appeals,

more

subtle.

You

have

different

ways

to

parallel

license

and

it

turns

out

that

only

one

of

them

is

deficient.

A

Well,

then,

how

can

you

tell

so

that's

what

I'm

going

to

talk

about

the

things

to

do

in

the

things

not

to

do

so.

The

ease

of

use

is

an

extra

touching

I,

still

like

it,

but

there's

something

that

you

need

to

be

aware

of

and

I

think

not

much

written

down

about

that.

We

don't

start

searching

through

its

not

recur.

Mvi

is

very

well

in

the

student

documented,

like

nobody

in

their

right

mind

will

send

one

salient

one

byte

messages

to

not

know

you.

Could

you

just

don't

do

that

same

things?

A

Equivalent

thing

is

arturo

can

appeal,

though,

the

rules

are

different

and

people

do

that

because

they

don't

know.

So,

let's

look

at

it

and

two

of

the

nasty

things

that

are

kind

of

silently

happening

are

ceasing

numa

and

for

sharing

the

funny

thing

is

and

they're

real.

I

mean

that

happens

and

I'll

show

you

examples,

but

it

has

nothing

to

do

with

open

Abby.

A

It's

the

way

shared

memory

systems

are

designed

and,

in

particular

things

like

cash

go

hands

which

is

really

nice,

but

it

can

hurt

you

if

you

don't

use

it

in

the

right

way.

So

again,

nothing

to

do

will

open

in

v1

one

time.

I

I

hope

that

some

time

to

show

you

exactly

the

same

nasty

things

in

a

pudding,

/

application,

as

in

opening

p.

But

if

you

happen

to

use

open

it

be

and

that's

sweet,

that's

very

natural

thing.

A

Not

so

many

that's

what

I

was

afraid

of,

because

it's

a

pretty

evil

evil

thing

to

happen

and

I

won't

go

into

much

of

the

detail

of

the

underlying

things

that

are

happening

but

uses

one

slide

that

tries

to

explain

it.

They

have

this

cache

line

and

whenever

you

need

data,

that

data

will

come

unless

it's

extremely

large

but

will

come

as

part

of

a

line.

A

cache

line

is

the

size

of

the

cache

line

is

designed

by

us.

We're

architects

could

be

32,

x,

64,

bytes,

maybe

hundreds

and

28.

A

There

are

very

good

reasons

to

have

very

low

on

cash

lines

and

are

equally

different,

good

reasons

to

a

showcase.

So

there's

no

one-size-fits-all.

That's

why

different

systems

have

given

design

choices

made,

but

it's

it's

a

chunk

of

data

more

than

you

typically

need.

So,

if

you

need

like

one

double

precision

element,

you

gotta

cash

like

when

it's

possible

these

elements

and

somebody

else

who

may

need

a

different

element

that

happens

to

be

the

same

time

will

get

a

car,

but

it's

very

natural

to

have

multiple

copies

floating

around

of

the

equation.

A

So

far,

that's

good!

As

long

as

you

read

it's

good,

but

what,

if

you

modify

Natalie

what,

if

this

court

decides

to

modify

the

yellow

element,

now,

I

have

an

inconsistency.

So

this

is

this.

Is

this

is

now

stale

data

and

that's

getting

access

to

the

right

data?

Is

handled

by

cache

coherence,

that's

the

underlying

system.

So

what

will

happen

is

great

vibe,

which

are

the

state

states

of

that

line,

there's

a

like

clean

or

dirty,

or

whatever.

They

have

different

states

in

the

cash

transfer.

A

They'll

change,

so

that

anybody

who

attempts

to

get

the

cache

line,

but

that

wait

a

minute

I

have

an

old

coffee.

I

need

to

get

a

new

one,

someone-

and

that

includes

this

one.

Although

we

know

that

the

blue

element

wasn't

modified,

it

can

tell

it

will

see

a

dirty

cash

sighing

said:

okay,

I

gotta

get

a

new

woman.

Now

that

happens

all

the

time.

That's

fine!

Unless

it's

in

the

heart

of

your

laundry.

A

If

you

hit

this

all

the

time

in

the

middle

of

your

innermost

loop

than

and

the

decorative

patient

is

feedback

is

really

bad.

I

used

to

have

some

slides

show

how

bad

was

the

damage

to

depression.

I

took

on

it

is

really

bad,

so

it

is

something

to

take

into

account

and

it's

got

full

sharing

and

the

reason

really

to

that

to

exist

is

that

these

statements

they

keep

track

of

the

status

on

the

on

the

cache

line

basis

not

on

a

bike.

Wait,

that's

cost.

A

The

occasions

are

very

large

if

you

have

to

keep

track

of

every

single

bite,

that

would

add

a

lot

of

infrastructure

and

the

design

and

that's

expensive.

So

long

time

ago

somebody

decided

we'll

do

that

on

cache

line.

Yes

like

mother,

so

that's

in

a

nutshell,

is

what

false

sharing

care

needs.

So

one

of

the

red

flags.

A

We

have

free

conditions

for

Krista

Boehm,

it

happens

when

you

modify

data

technically

it

happens

on

the

store

instruction.

So

as

long

as

you

read

it

doesn't

matter,

it

read

as

much

data

as

you

want

no

for

sharing.

As

soon

as

you

modify

an

element

that

line

gets

invalidated.

So

the

bad

things

happen

when

you

have

multiple

threads,

they

hit

the

same

cache

line

over

and

over

again.

That

line

will

travel

throughout

the

system

because

of

each

same

effect,

I

need

it

or

wait.

A

minute.

A

I

have

an

old

copy,

I

need

to

get

a

new

one

and

that's

that's

fairly

expensive.

So

when

that

happens

very

often,

and

at

the

same

time,

then

all

sharing

is

going

to

happen

at

us

that

we

scalability

and

the

recommendation

use

local

data

where

you

can,

because

you

meet

Annie,

violate

rule

number

one.

It's

not

shared

anymore

and

they're

all,

and

they

all

find

the

way

you

do

that

in

practice.

Is

that

often

what

you

can

do

is

you

can

do

something

local

updates

and

only

way

you're

done

even

has

to

be

shared.

A

Give

you

for

sharing,

but

it's

like

an

order

of

magnitude

less

so

that's

the

general

idea

and

how

to

avoid

false,

sharing,

there's

other

ways,

but

something

to

keep

a

friend

again.

We

don't

use.

So

that's

one

of

them

dark,

very

dark

dungeons

of

the

building

government.

Of

this

thanks.

It's

nice

to

get

all

the

scalable

bandwidth,

but

as

I

talked

about

in

the

morning

session,

which

is

a

new

comes

in

sort

of

responsibility

to

make

sure

you

get

rough

and

close

to

your

data

and

the

burden

is

on

you,

that's

one

of

them.

A

One

of

the

painful

thing

I've

shown

this,

like

we

thought

of

rank

this

morning,

but

I

put

it

back

in

again

because

I'm

not

sure

everybody

dialing

for

that

session.

So

how

do

you

bear

with

you

this

ain't

it

again

already

you

got

two

CPUs

could

be

multi-core

whatever

Woonsocket

and

each

socket

has

its

own

when

we

control

the

talks

to

its

own

memory,

but

thanks

to

a

cache,

coherent

internet,

everybody

else

or

no

one's

going

on,

you

know

exactly

what's

going

on

where

you

need

a

variable,

you'll

get

it.

A

You

don't

have

to

do

anything

to

that.

The

only

thing

is

the

time

to

get

it

could

be

vastly

different.

This

is

only

two

socket

system,

religion,

local

memory,

access,

that's

the

best

you

can

do

it

wouldn't

be

so

good.

If

this,

which

we

move

next

and

as

I

said

this

morning,

this

is

hard

to

avoid

hundred

percent,

but

you

don't

want

to

have

this

kind

of

remote

access

happening.

Ninety

percent,

so

it's

always

a

bit

of

a

trade-off

to

decide

where

your

data

go

and

again.

A

So

this

is

definitely

important

on

zee

cinema

systems

as

since,

even

to

socket

systems

or

CC

new

man.

These

days,

it's

pretty

much

affects

everyone,

and

so

the

cost

is

not

only

longer

memory

access

time.

If

they

all

hit

on

to

the

same

memory

controller,

you

can

consider

a

tab

memory

controller

and

get

a

texture

performance

possibility.

Luckily,

open

mp4

todo

provide

support

position,

one

that

was,

but

this

morning

extensively

talked

about.

The

only

places

don't

be

profane

you.

A

What

I'll

I'll

show

you

later

is

in

general,

how

you

can

handle

optimized

for

season,

numeral

and

I

think

it

would

pretty

much

all

the

OS

has

used

its

first

touch

a

principle

to

replace

the

data.

So

what

is

research

and

see

this

before

you

got

to

so

Casey's

with

our

many

and

in

other

loop

and

I'm,

just

initializing

a

vector

20,

and

if

I

don't

do

anything

one

cor?

One

thread

will

execute

that

and

for

the

rest

of

the

lifetime

of

that

data.

A

A

The

solution

is

pretty

straightforward.

You

paralyzed

this

loop

and

for

demonstration

purposes.

I,

are

you

got

on

two

threads

here

and

will

happen

both

will

initialize

half

of

that

vector

and

each

will

get

half

of

that

vector

into

their

memory

and

hopefully

that's

the

way

you

that's

going

to

be

close

to

the

thread,

meaning

which

isn't

always

the

case.

I

mean

this

is

I'm

talking

about

the

ideal

world

view

and

I'll

show

a

little

bit

more

of

them.

The

gory

real

world

that

can

sell

them

happen

like

this

is

to

go.

A

A

Those

are

two

things

for

sharing

teaching

newman

and

I'm

not

going

to

go

through

some

case.

The

problem,

the

case

studies

are

always

kind

of

specific,

so

I

try

to

pick

something.

That's

general

enough

to

have

some

more

general

message

than

only

applicable

to

a

very

small

subset

of

users,

but

they

are,

by

definition

specific.

So

that's

the

title

of

the

first

a

study

and

it

shows

my

fave

is

elaborated

because

it's

so

simple,

it

actually

has

so

many

interesting

things

in

it.

It's

multiplying

a

matrix

with

a

vector

straight

from

the

text.

A

Hundred

what

you're

doing

here

in

see

you

take

dot

product

of

the

rows

of

the

matrix,

multiply

that

with

a

vector-

and

that

gives

you

result-

that's

pretty

much

embarrassingly

parallel,

because

all

these

dog

products

are

you

pendant

and

actually

any

self-respecting

automatically

paralyzing

compiler

will

do

this

for

you,

but

if

you

would

do

it

yourself

with

opening

be

you

know

you

want

to

do

we

want

to

paralyze

this

outer

loop

I.

Look!

That's

to

look

over

the

rows

of

this

openness

matrix

so

using

OpenAPI.

A

A

No

surprise

that

the

babe

small

matrix

there's

not

enough

work

to

amortize

the

cost

of

what

I'm

doing-

and

this

is

where

this,

if

clause

comes

in

handy,

you

could

say

if

the

matrix

is

whatever,

then

don't

go

palace,

so

your

performance,

this

line

here

would

be

all

these

roughly

the

same,

you

can

get

any

improvement,

but

you

wouldn't

get

a

slow

down

either.

That's

how

you

can

use

their

clothes

send

in

a

certain

range

of

the

memory

yoki.

We

actually

get

very

good

performance

here.

Even

got

super

linear

scaling.

A

This

one

right

here

is

more

than

twice

as

fast

and

that's

because

that's

quite

common

on

shared

memory

systems,

you

get

additional

cash

space

available.

As

you

add

threads.

That

course

cores

have

cash

paid.

So

all

of

a

sudden,

the

data

they

did

fit

in

the

cache

is

now

complete

because

you

have

more

guessing

quite

natural

nice.

That

happens,

but

not

always

like

that,

and

this

shows

want

to

go

to

large

amazingness.

It

came

over

no

matter

how

many

things

I

throw

at

it,

but

the

best

peter

is

only

two

thirds

in

about

1.6

remember.

A

This

was

an

embarrassingly

parallel

algorithm,

that's

not

very

impressive,

so,

what's

going

on,

sorry

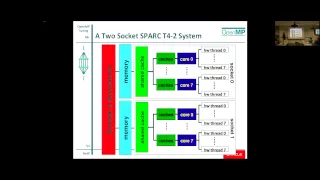

again

will

be

technical

and

again

you've

seen

this

this

picture

over

and

over

again

and

there

we

are

again

with

this.

Is

it

in

this

case

the

specifics

of

that

chip

that

Kip

had

eight

course

each

coil

has

to

Hardware

threads,

but

the

way

I

draw.

It

is

very

similar

to

what

I

showed

earlier.

This

is

the

CC

Numa

books.

I,

hope

that

came

as

small

as

it

is.

It

is

a

cinema

box.

A

They

interconnect

a

quick

bad

name

to

connect

any

cash

go

hand

in

connect,

but

each

circuit

has

a

portion

of

the

memory,

so

we're

talking

about

this

issue.

So

what

do

we

do?

Well,

what

you

need

to

do

is

you

need

to

figure

out

where

the

data

is

the

algorithm

itself

paralyzed.

But

where

was

the

data

hidden

well,

I

was

very

sloppy

with

the

state

initialization

that

was

sequential,

so

on

soccer

didn't

have

any

the

other

one

at

all.

A

A

So

straight

forward

again

from

no

borders

on

the

textbook,

what

I

need

to

do

and

again

this

is

why

I

said

you

go

back

to

the

drawing

board.

What

is

it

out

rhythm

to?

It,

takes

the

dot

product

of

the

roles

in

matrix

times

the

vector

so

and

if

I

run

this

on

two

sets

saying

one

thread

will

access

the

top

half

of

the

array,

the

other

one,

the

bottom

app

if

I

use

the

static

schedule

doing

so?

What

I

need

to

make

sure

is.

A

This

part,

is

in

the

memory

of

the

bed

that

executes

this

part

of

your

day,

the

other

one

under

them.

There's

a

little

catch

here

is

vector,

is

shared

by

all.

All

of

them

have

to

be

so

there's

no

optimal

placement

for

that

either

I

could

copy

it

to

all

the

memories,

but

that's

pretty

expensive.

You

really.

You

really

should

try

to

avoid

copying

data.

That's

the

cost

of

that

gets

out

of

hand

very

quickly.

I

rather

rely

on

some

caching.

A

When

you

read

in

that

vector

that,

hopefully

some

of

my

data,

all

of

it,

is

still

in

a

higher

level

cache.

That's

usually

better

think

big,

be

very

careful

with

copying,

but

that

could

be

a

solution

here.

I

went

the

eating

way

now,

so

what

I

do

I?

First

of

all,

I

take

that

vector

that

was

see

and

I

do

paralyze

the

initialization

telling

these

half

a

minute

in

the

right

place

on

to

third,

we

can

order

is

on

the

right

place

on

Portland.

It's

about

the

moments

about

the

best

I

can

do

it.

A

The

key

part

is

in

the

matrix,

so

I

am

going

to

initialize

your

matrix

and

I'm

going

to

initialize

it

in

parallel

over

the

Rose.

Exactly

as

my

algorithm

access

I

was

lucky

that

that

was

the

case.

I

mean

like

I

said.

This

is

the

best

case

you

can

get

with

the

way

you

initialize.

The

data

in

the

way

of

you

is

pretty

much

the

same.

The

one

thing

in

red

here

is

that

it

got

a

little

bit

Katie

way,

but

the

same

observations

are

to

putting

output

vector

before

you

can

write

your

result.

A

The

cache

line

has

to

be

your

cash

before

we

can

write

into

the

same

locality,

rules

hold

or

their

destination,

not

only

being

so.

Okay,

probably

kind

of

measure

the

difference,

but

I

couldn't

resist

to

do

this

one's

about

this

with

demonstration

and

then

I

got

better

scalability.

It's

about

to

exercise

still

not

very

high,

but

hate.

The

2x

improvement

that

I

get

x

by

x.

We

would

King

my

deck

initialization.

It

wasn't.

My

algorithm

can

with

the

database

to

show

you

this

is

generic

I

took

a

spark

system.

A

That's

an

older

p

for

system,

it's

kind

of

a

smaller

scale

version

of

the

g5.

You

got

a

bunch.

Of

course.

You've

got

your

threads

and

I

really

try

to

draw

it

to

make

it

look

as

similar

as

the

other

one

same

kind

of

memory.

Here,

every

idea

have

your

local

memory

and

you

have

some

indie

connect

that

loose

it

all

together.

So

I

took

the

same

code

and

initially

again

and

I

didn't

get

any

speed

up

beyond

two

threads

and

I.

A

I

use

my

initialization

trick

and

again

I

get

a

roughly

get

a

factor

of

two

performance

improvement,

and

when

you

put

that

in

one

charge,

you

see

that,

although

in

absolute

sense,

the

performance

is

different

that

doesn't

matter,

the

improvement

is

roughly

about

2x,

and

this

is

a

small-scale

thing.

These

things

start

with,

where

more

as

you

go

to

larger

ship

memory

systems,

with

that

with

more

and

more

clothes

right,

selects

a.

A

Fortran

example:

oh

please

original

array

update

I'm

obtaining

an

array

X

and,

as

it

turns

out,

first

I

had

rule

number.

One

always

make

sure

the

program

runs

reasonably

well

in

single

phase,

no

point

in

trying

to

paralyze

a

program

that

runs

like

a

dog

and

a

reason

for

that.

I

didn't

say

that

yeah,

it's

running

like

a

dog,

usually

means

you've

used.

A

The

memory

system

to

go

to

the

memory

is

the

way

too

often

and

when

you

do

that

in

parallel,

you're

overloading

the

integral

and

no

matter

how

fast

is

in

the

connector,

none

of

them

can

saturate

alone,

like

that

all

threads

goal

means

connecting

that

data

from

somewhere

else.

Oh,

so,

first

make

sure

that

the

program

performs

really

well

single

bit

play

with

some

compiler

options

or

whatever

you

like,

a

philippic

addition

to

that

and

then

viola.

So

this

one

is

okay.

This

is

written

in

the

right

way

for

420

access

is

three

dimensional

array.

A

The

loops

are

qualities,

but

that's

fine,

but

unfortunately

they're

too

dependent

people

ikk

depends

on

I

da

k,

minus

1

so

that

the

defendants

in

the

third

dimension

and

here's

the

defendants

in

the

second

image,

so

it

can't

just

simply

paralyzed

I-

may

be

able

to

have

some

way

from

a

sober,

but

as

written

I

cannot

have

a

parallel

do

on

the

cake

for

your

change.

So

if

you

do

it

like

this,

what

is

the

problem?

A

Well,

the

problem

is:

is

that

there's

this

implied

barrier

here

that

will

cost

you

the

performance,

as

you

add

threads,

certainly

going

to

affect

you.

So

that's

because

of

this

dependence,

I'm

stuck

with

only

one

dimension,

so

I

meant

it

and

he

needed

performs

like

a

dormer

and

eight

fences

who

came

over

the

phone

stops

by

very

quickly

and

I

was

not

surprised.

Let

me

show

you

how

this

was

I

know.

A

This

is

not

different

way

to

do

it,

but

I

way

to

the

sanity

check

any

of

these

hidden

performer

and

then

use

our

profile

from

the

performance

analyzer

and

call

it

to

compare

the

single

thread

run

in

the

tooth

bedroom.

As

I

said

good

morning,

whenever

I

look

at

performance

problems,

I

start

with

comparing

one

and

two-thirds,

because

only

if

I

understand

that

I

can

try

to

go

bigger.

So

here

I'm

comparing

side

by

side.

A

If

the

one

thread

the

two

thread-

and

I

look

at

the

user

CP

0

time,

the

work

time

in

opening

p

and

the

wait

time

is

open.

That's

that's

one

of

the

things

our

profiler

spits

out.

She

tells

you

how

much

time

you

spend

doing

some

some

sort

of

hopefully

useful

work

and

overhead

like

waiting

and

it's

a

little

small.

But

what

is

seeing

here

is

when

you

look

at

this

functional

block

3d

from

2.7

seconds.

It

goes

to

two,

not

very

impressive,

but

it

is

a

little

bit

faster.

A

The

thing

that

literally

sticks

out

is

the

wait

time

that

almost

nothing

goes

to

over

two

seconds

already

into

exam.

That's

like

very,

very

strange,

because

why

would

there

be

so

much

weight

I

when

I

had

a

regular

vector

operation

that

I

cut

in

pieces?

I

didn't

understand

the

wait

time

and

I

look

at

the

source

level,

and

it

confirms

way

it

is

anything

it

shows

that

that

loop,

having

a

way

time

from

80

milliseconds

to

2.3

seconds,

that's

a

huge

jump,

I

really

couldn't

figure

it

out

and

no

idea

what

was

going

on.

A

I

switched

our

tool

has

and

I

need

to

explain

that,

because

I'll

be

showing

mortgage

or

you

call

the

timeline,

the

timeline

is

shown

for

each

thread

and

you

can

load

multiple

experiments

as

I'm

doing

here.

This

was

an

experimental

two

threads,

and

this

always

I

think,

of

hate

and

when

it

shows

it

time

from

left

to

right

and

each

color

represents

a

state

in

the

u.s.

A

here

and

when

you

look

so

anything

but

green

is

bad

news,

like

blue

green

systems,

I'm

distant,

I'm

here

means

that

was

initializing

the

pages

for

them

for

the

day.

So

that

was

all

that

interesting

at

the

application

level.

Each

function

has

a

different

color

and

we

do

that

for

every

snapshot

that

we

make

and

what

they're

doing

I

highlight

the

bad

ones

enemy

at

the

legend.

The

bad

ones

here

are

red.

That's

the

barrier

and

the

blue,

which

is

the

idle

time

of

the

feds.

A

So

what

you're

seeing

here

this

was

on

the

master

fled.

This

is

on

the

second

fret.

What

I

see

is

I

think

the

barrier

cost

that's

what

I

expected,

but

they

also

see

that

idle

time

here

the

bloom

and

that's

not

what

I

expected.

So

it

confronts

the

food

you

what

I

already

saw

and

when

I

zoom

in

I,

see

except

the

master,

that

this

is

a

single

thread,

that's

kind

of

boring,

but

the

master

sent

here

is

to

the

master

that

is

very

active.

A

Occasionally

you

have

the

spike,

but

if

his

idol

time

I

want

to

go

to

more

search,

it

gets

worse

and

worse,

and

this

a

little

bit

more

of

the

same

when

I

go

to

16,

says

I,

see

that

really

getting

out

of

hand

so

for

too

long

I

couldn't

figure.

When

is

the

idol

segment?

How

can

I

be

on

a

vector

operation?

Another

realize

this

is

because

when

I

I

have

one

thread,

there's

nothing

to

share,

but

what

I

have

to

say

is

or

what?

A

If

this

is

the

access

part

of

the

first,

this

is

of

the

second

place

and

Hispanic

ashland.

I

have

one

cache

line:

descent

only

one,

but

then

I

go

to

for

I

got

three

enter

food

and

especially

when

you

have

short

pieces

of

data,

that's

very

very

likely

to

happen

because

that

the

impact

of

that

is

very

large

relative

to

the

other.

Things

are

doing.

How

do

you

find

out?

Actually,

as

far

as

I

know,

detecting

for

sharing

is

still

like.

A

I've

is

a

big

crystal

ball

or

wishful

thinking,

but

luckily

we

have

counters

now

that

can

help

you

point

that

and

counters

are

process,

a

specific

each

processor

that

you

use.

You

need

to

figure

out

what

the

name

is,

which

can

be

very

cryptic

unclear,

but

it's

not

an

easy

thing

in

general,

but

the

information

is

there

so

on

on

ship.

I

looked

at

the

counter

that

shows

me

how

many

cache

line

invalidations.

A

I

have

remembered

a

picture

where

you

valid

invalid

end

line

of

this

is

exponential

growth,

as

I,

as

I

increase

in

a

group

until

that's

really

does

smoking

smoking

gun.

You

know

over

two

hundred

times

higher

or

just

32

yeah,

so

this

is

definitely

for

sharing

at

work

before

she.

Okay,

there's

several

ways

you

can

tackle

or

sharing

this

case,

I

found

something

that's

more,

this

generic,

because

you

have

the

defendants

in

the

two

dimensions

here,

but

I

don't

have

luckily

don't

have

a

dependence

in

the

I

direction.

A

That's

shown

here

just

don't

get

the

code

year

and

in

the

next.

Basically,

what

you

do

is

you

have

each

side

work

on

its

own

three-dimensional,

silver

and

then

you're

running

to

what

I

call

the

plus

or

minus

one

problem.

You

need

to

figure

out

what

is

hardened

n

manual,

which

certain

I

usually

get

it

right.

Almost

the

first

timer

but

a

pleasant

one

is

one

missing:

it's

not

hard.

A

Is

it

bookkeeping,

and

this

is

what

I

come

up

with

for

the

code

and

now

I

actually

I

killed

two

birds

with

one

stone,

because

I

have

one

big

parallel

region,

there's

no

very

very

more

than

one

at

the

ends.

Each

thread

will

add.

First,

I

scoid

threadid

and

then

I

think

about

they

start

and

end

value

at

the

size

that

the

position

in

the

block

that

you

work

on

I

mean

it's

not

hard.

It's

got

to

be

careful

and

make

sure

you

get

anything

right,

but

nothing

difficult

about

it.

A

So

all

I

need

to

do

is

adapt

this

thing

to

accept,

start

and

end

value,

and

they

all

call

this

in

parallel

and

any

job.

I

can

wait.

Wait

a

moment:

I

got

the

new

dick,

so

that's

help

loading

balance

so

that

you

know

that

that's

because

it

doesn't

always

usually

divide

the

number

of

thread,

so

you

get

to

load

imbalance

overall,

I

get

about

4x

performance

improvement

with

a

with

a

very

simple

exchange

and

always

do

the

sanity

check

now.

A

This

timeline

looks

very

well

behaved

like

here

in

this

was

I,

don't

know

or

offense

I

go

only

one

barrier.

No,

not

all

that

idle

time

in

between

and

16

there's

more

of

the

same

with

this

confirms

that

this

was

one

little

thing

that

I

never

looked

into.

It's

still

a

little

bit

of

bogging

down

when

you

zoom

in,

but

it's

so

small

I

didn't

really

care

anymore

and

when

I

we

measure

those

sketch

lining

validations.

That's

the

blue

one

now

versus

the

red

one.

Is

it

pretty

much

which

call

it

again?

A

It

confirms

that

there's

still

a

little

bit

of

invalidations,

but

that's

hard

to

avoid

and

that's

why

so.

That

was

one

thing

I

did,

but

that's

another

thing

you

can

do

yeah

is

that

this

is

a

funny

use

of

opening

and

once

you've

seen

it

a

few

times

he

started

get

used

to

it,

but

I

want

to

carefully

explain

it.

The

problem

was

that

I

had

this.

This

whole

parallel

region

embedded

here

remember.

The

parallel

region

is

expensive,

so

that

cost

is

log

n

square

times,

that's

really

high.

A

What

does

that

mean?

That

means

that

all

threads

will

execute

this

whole

to

so

all

execute

is

due

and

then

they'll

split

the

work.

So

this

is

some

toothpaste

how

this

would

execute.

They

all

start

with

a

close

to

Chris,

refrigeration

and

Jacobs

to

then

they

hit

that

inner

loop-

and

this

was

a

work

sharing,

do

so

they'll

split

that

work,

the

link

of

NJ

and

in

that

way

it

works.

A

So

now

by

now,

I

have

actually

have

four

different

versions.

I

had

the

bad

one.

Of

course

I

try

to

compile

our

dresses.

This

is

relatively

new

compiler

to

analyze.

I

have

my

box

version

with

a

single

pamela

region

and

the

last

one,

which

is

just

a

different

implementation,

and

all

of

them

were

significantly

better

than

the

original

version.

A

What

was

kind

of

planning

surprise

is

that

the

paralyzing

compiler

is

very

well.

It

turns

out

and

generate

the

same

code

as

I

did

my

hand,

but

I

was

quite

impressed.

I

mean

for

more

than

I

expected.

So

the

only

do

that

kind

of

funny

version

wins

first,

but

eventually

loses

out

and

since

I'm

only

interested

in

five

seconds,

I

never

looked

into

what

was

going

on

here.

So

that's.

That

was

what

I

thought

was

the

end

of

this

story,

but

some

things

never

end

so

I,

don't

know

why.

A

But

I

started

playing

with

software

prefetch,

which

we

have

on

our

compiler.

The

hardware

does

automatic,

prefetch

and

there's

an

addition.

You

can

add

the

compiler

to

insert

software

prefetch

instructions,

and

what

I

saw

was

that

without

the

software

prefetch,

initially,

it's

slower

because

many

cases

of

the

page,

which

is

a

good

idea

and

then

come

to

me

again,

no

idea

what

we're

going

on

here

of

that.

Oh

this

is

when

these

Hardware

counters

can

be

incredibly

useful.

I

got

some

suspicion

because,

when

I

did

that

first

work,

I

didn't

really

I

difficulties

in.

A

Oh,

my

god,

Oh

data

locality-

and

this

is

another

counter.

If

niches

it

measures,

how

often

I

had

a

local

dedication

is

but

a

when

the

data

was

somewhere

remote.

We

also

have

a

counter

to

tell

you

I

missed

it,

but

it

was

somewhere

else

in

the

memory

a

locally,

that's

kind

of

not

that's

a

nice

one

to

happen.

This

is

the

bad

one.

A

This

is

the

one

you

don't

want

to

seem

too

high

and

what

you

see

for

up

to

eight

threats,

velo,

because

this

processor

has

eight

cores

another

go

beyond

that

and

that's

when

I

start

seeing

my

remote

by

remote,

mrs.

and

what

I

see

and

not

surprising,

is

why

so

nicely?

Optimized

version

is

his

works

in

terms

of

CC

new,

so

I

gained

on

one

side

and

then

I

lost

on

another

side.

I

mean

that's

real

life.

Is

this?

A

Many

of

my

scenes

are

with

one

memory

related.

So

let's

look

at

how

that

array

X.

That

three-dimensional

array

is

stored

in

them.

It's

a

three

dimensional

array

and

all

four

times

people

know

or

should

know

photo

and

arrays

are

stored

by

the

columns

first,

so

you

got

the

first

column

in

memory

and

the

next

one,

the

next

one.

So

this

is

like

all

imaginary

sizes,

for

this

is

the

first

column.

A

The

problem

is

how

about

how

it

links

that

to

the

page

size,

what

if

I,

have

some

very

unfortunate

combination

of

things

that

I

have

a

page

size

that

spends

more

than

one

column.

That

can

happen,

so

is

one

column

plus

a

little

bit

or

doesn't

matter

so

it's

not

nicely

a

mind

and

cut

off

at

the

right

sizes.

A

So

that's

how

things

are

laid

out

in

memory

and

they're

a