►

From YouTube: ExaFEL project port to GPU

Description

Johannes Blaschke of LBNL presents a talk on ExaFEL project port to GPU. Recorded live via Zoom at GPUs for Science 2020. https://www.nersc.gov/users/training/gpus-for-science/gpus-for-science-2020/ Session Chair: Muaaz Awan

A

They

are

all

members

of

nick's

group

at

lbl

and

they

are

the

ones

who,

like,

oh,

except

hugo,

but

hugo

also

worked

extensively

with

this

gpu

port

and

therefore

I

I

think

it's

only

fair

to

acknowledge

all

the

people.

Who've

done

the

actual

work,

and

so

the

things

the

the

technique

that

nick

presented

just

before

lunch

is

this

technique

of

crystallography

and

just

in

in

the

most

basic

physical

terms.

A

Imagine

you

have

a

crystal

in

in

the

sense

that

it's

a

lattice

of

scatterers

and

what

will

happen

is

whereas

an

x-ray

beam

comes

in,

there

was

the

extras.

The

coherent

x-rays

will

scatter

of

these

scatterers

that

are

arranged

in

a

regular

lattice,

some

physics

happens

and

then

on

a

detector.

You

will

see

bright

peaks

with

dark

regions

in

between

and

here's

a

sketch

for

a

one-dimensional

array

of

scatterers.

You

would

see

these

localized

peaks

and

some

some

maybe

a

little

bit

of

in

structure

in

between,

and

in

fact

we

can.

A

A

A

That

needs

to

be

filtered

out,

and

essentially,

why

are

we

interested

in

this

sort

of

thing?

Well,

sim?

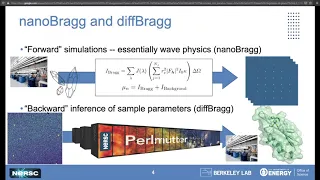

The

previous

slide

shows

what

we

sort

of

like

the

forward

direction

of

this

kind

of

simulation.

Essentially,

if

we

start

off

with

a

crystal

and

the

parameter

is

associated

with

a

beam,

we

can

produce

interference

patterns.

But

this

is

the

much

more

exciting

problem

is

to

flip

it

around.

A

So,

essentially,

we

could

have

a

bunch

of

diffraction

patterns.

These

are

real

diffraction

patterns

and

what

we

want

to

know

is

what

are

the

crystals

responsible

for

for

producing

that

kind

of

pattern,

and

in

fact

we

can't

just

like

disentangle

the

crystals

from

from

the

properties

of

the

beam

themselves,

and

so

the

the

vision

of

all

of

this

is.

A

We

will

take

a

massive

amount

of

these

images

and

we

will

cram

them

all

into

pearl

matter,

and

out

comes

some

interesting

signs,

some

unknown

structures

that

that

we

are

interested

in

and

so

the

way

we

achieve

this

using

cctbx

is

essentially

a

hierarchy

of

different

codes.

You

can

take

a

look

at

cctbx

here

and

essentially

the

the

the

overall

structure

of

these

simulations

is

roughly

the

same,

and

on

the

top

on

the

user

facing

level.

We

have

python

that

sort

of

acts

as

a

as

the

glue

code.

A

We

we

use

boost

python

as

an

api

to

expose

a

c

plus

plus

back

end

to

python,

and

this

c

plus

plus

backend

it

it

orchestrates

all

the

the

I

o,

the

data

structures

or

the

logic

that

you

might

need

for

crystallography

and

in

as

part

of

our

port

to

gpus.

We

have

started

to

take

the

most

expensive

components

out

of

the

c

plus

plus

backend

and

we've

started.

Writing

cuda

implementations

for

these

selected

functions

and

this

and

this

basically

the

these

sort

of

cuda

implementations.

A

A

So,

for

instance,

we

will,

we

might

be

wanting

to

resolve

some

pixel

details,

the

detectors

themselves.

They

they.

There

are

some

physics

associated

with

how

they

absorb

x-rays

and

therefore

we

can

loop

over

like

detector

thickness,

essentially

the

thicker

the

more

easily

it

absorbs

a

photon

and

the

photons

can

also

come

from

different

sources

and

can

have

different

angles,

and

the

crystal

muds

also

have

not

the

perfectly

regular

structure,

but

there

might

be

different

mosaicity,

and

so

we

might

need

to.

A

Iterate

over

those

domains,

and

so

finally,

once

we

have

so

once

we

have

computed

this

on

the

gpu,

we

haven't

ported

the

background,

the

noise

yet

to

the

cpu.

So

on

the

cpu

we

will

add

this

random

noise,

and

so,

let's

just

see

what

what

the

end

result

of

such

a

nanobrag

simulation

is

so

nanobrag

by

the

way

refers

to

this

forward

stimulation,

and

so

what

a

nanobreak

does

is.

A

It

will

take

some

parameters,

some

details

of

the

crystal

that

you're

simulating

and

the

wavelengths

and

the

beam,

and

it

will

produce

black

spots.

So

there

are

several

things

I

want

you

to

be

aware

of

here.

Brag

spots

are

fairly

localized.

Nick

did

show

that

they

do

have

a

structure

in

this

simulation.

I've

actually

zoomed

in

here

into

the

center.

You

can

see

they're

sort

of

fuzzy

blobs,

essentially

so

they

they

are.

A

They

are

not

points

in

the

true

mathematical

sense,

and

you

can

see

that

they

all

have

varying

intensities,

and

there

is

a

background

here

and

now

now

to

to

get

maybe

a

handle

on

the

inverse

problem.

I

thought

it

might

be

instructive

to

look

at

what

happens

when

I

simulate

changing

the

the

the

crystal

position

or

distance

from

this

detector.

A

If

you

know

what

kind

of

crystal

you're

looking

at,

then

you

can

just

look

at

the

positions

of

the

pixels

themselves

and

you

can

say,

oh

well,

it

was

at

that

distance

from

the

detector.

So

let's

look

at

the

more

complicated

situation,

let's

rotate

the

crystal

the

z-axis,

and

we

see

something

much

more.

You

know

trippy

happening

here,

so

we're

really

only

rotating

the

the

the

crystal.

So

it's

actually

creating

a

completely

different

looking

pattern.

A

A

We

want

to

start

with

a

lot

of

simulated

image.

Well,

what

we

want

to

do

is

we

want

to

tell

based

on

forward

simulations

like

these.

We

want

to

tell

what

is

the?

What

are

the

crystal

parameters

of

this

measured

image

here?

You

can

see

some

bright

spots

over

here.

Doesn't

look

like

anything

like

that,

but

the

idea

is,

let's

iterate

over

different

forward

simulations

that

minimizes

the

mismatch

between

the

forward

simulation

and

the

measured

data.

A

In

fact,

we

can

do

this

intelligently

with

quasi-newton,

optimization

and

essentially

the

idea

is

we'll

use

a

forward

simulation

and

the

measured

pixels

to

guide

our

next

parameters

for

the

forward

simulations

and

to

iteratively

decrease

the

mismatch

and

and

the

lowest

mismatching

parameters.

That

will

be

our

best

estimate

for

the

crystal

parameters.

A

So

I

also

want

to

take

maybe

a

minute

or

two

just

to

point

out

that

with

these

kinds

of

codes

there

it's

it's

there's

an

important

aspect

that

sometimes

is

overlooked

when

we

just

want

to

accelerate

kernels

in

that

code,

and

that

is

the

full

software

stack

can

matter,

and

this

is

just

a

small

example

of

what

happens

when

we

process

a

large

batch

of

images

stored

on

disk.

So

here

we

have

time

on

the

x-axis

and

the

mpi

rank

on

the

y-axis

and

red

is

bad

here.

A

Red

means

I

o

and

data

movement

over

the

network,

and

so

over.

Here

we

had

a

problem

with

mpi,

so

you

can

see

things

bunch

up

and

there's

a

lot

of

red,

so

that's

very

bad,

and

then

we

and

then

over

here

we've

reduced

this.

I

o

time

by

optimizing

the

the

way

we

actually

schedule

file

access,

but

none

of

this

is

happening

on

the

cuda

level,

and

so

I

want

to

plug.

A

I

I

just

want

to

plug

jonathan

madison's

temory

utility

and

here

because

it

allows

us

to

build

profilers

that

are

able

to

profile

across

the

python

c,

plus

class

and

cuda

boundaries,

and

so

just

a

very

quick

basic

example

in

python,

we'll

just

import

this

object

here.

This

wall

clock

object

and

we

can

surround

the

python

constructor

for

our

nanobreak

simulator

and

then

in

c

plus.

A

We

can

also

use

temori

to

decorate

the

same

constructor

actually,

but

here

I've

changed

the

label

to

cpp

versus

pi

right,

so

I've

sandwiched

the

vc

profiler

with

the

python

profiler.

And

now,

if

you

just

profile

this

whole

thing,

you

can

see

what's

happening

here.

We're

calling

nanobrag

in

python

that

dispatches

a

call

to

c

plus,

and

the

only

thing

that

sits

in

between

is

the

python

c,

plus

plus

api.

A

And

then

when

we

look

at

the

differences

in

the

time

taken,

you

can

see.

In

this

example,

we

can

pick

up

on

the

python

api

call

time

spent,

and

so

this

is

so.

I

I

will

be

con

will

be

essentially

expanding

the

use

of

temporary

to

not

only

profile

cuda

but

but

to

essentially

to

get

a

snapshot

of

the

full

software

stack,

your

highness,

you

have

30

seconds

left.

Can

you

wind

up?

That's

perfect.

I

am

already

done.

I

just

wanted

to

say

that

all

work

is

work

in

progress.

A

Our

nanograph

cuda

port

has

already

resulted

in

a

decent

speed

up,

but

if

we,

if

we

improve

our

data

movement

and

our

api

based

on

what

we

are

observing,

we

can

definitely

get

way

more

out

of

that.

One

next

on

our

plate

is

optimizing,

the

diff

brag

iteration

itself

and

then

finally,

we

want

to

look

at

openmp

offloading

but,

for

example,

there's

some

little

issues

associated

with

the

fact

that

python

and

our

software

stack

likes

gcc,

which

has

some

problems

playing

nice

with

openmp.