►

From YouTube: Current State of LLVM Compiler

Description

Johannes Doerfert (HPE)

Current State of LLVM Compiler

A

This

is

actually

going

to

be

a

kind

of

a

a

short

version

of

a

three

hour

tutorial

I

gave

that

I

want

that

really

goes

into

details

about

tips

and

tricks

for

application

developers.

What

to

do

in

what

situations?

What

cool

features

are

there

and

so

on

so

forth?

So

we

I

give

you

the

cliff

notes

here,

but

that

link,

which

is

for

the

until

the

end

of

the

year

for

i1

participants,

will

get

you

to

the

three

hour

tutorial

with

lots

of

details.

A

So

it

might

be

a

little

too

long,

but

it's

certainly

detailed,

okay,

cool.

Let's

start

if

you

are

looking

at

llvm

llvm

client,

probably

is

is

what

you

would

start

nowadays

and

you

wanted

to.

You

want

to

build

it

yourself,

because

you

wanna,

you

know

the

newest

features,

the

newest

bug

fixes

or

you

want

to

play

with

it.

A

A

single

cmake

command

usually

suffices

to

get

llvn

from

the

GitHub

and

be

able

to

do

GPU

offloading

with

it.

So

this

this

cmake

command

will

probably

get

you

a

client

compiler

that

can

do

Cuda,

offloading,

openmp,

offloading

and

so

on

and

so

forth.

There's

a

lot

of

useful

flags

that

are

described

on

these

web

pages

down

there,

and

this

is

the

first

out

of

a

few

slides

that

you

might

want

to

just

screenshot

instead

of

me

going

over

all

of

it.

A

A

Similarly,

this

is

my

cheat

sheet

for

just

using

technically

any

c

plus

compiler.

It

always

show

you

a

fast

Linker

use

things

like

CC,

cache

and

and

an

alternative

to

make.

That

is

faster,

consider,

link

time,

optimization

and

we'll

get

to

that

later.

There's

a

lot

of

good

Tooling

in

the

C

plus

plus

World

C

world

that

you

should

consider

always

make

sure

you

get

the

right,

optimization

flags

and

the

right

architecture

set

and

then

on

the

llbm

specifics

site

here.

The

online

documentation

is

often

not

that

bad.

A

So

there's

a

lot

of

documentation

to

a

lot

of

topics

there.

We

have

a

lot

of

sanitizers

that

are

really

useful,

and

if

you

built

your

own

llvm,

a

release

in

a

search

build

is

probably

sufficient

for

you,

rather

than

a

full

debug

build

okay,

this

one

is

openmp

specific,

llvm,

openmp

specific

again

cheat

sheet.

So

take

a

screenshot

of

this

we'll

get

into

details

of

some

of

these

as

we

go

along

so

I

will

not

talk

about

them.

Now.

Take

a

screenshot

and

we'll

go

on

all

right.

So

first

things.

A

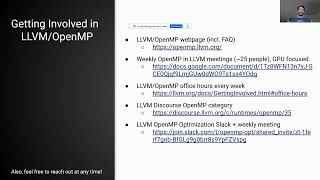

First,

if

you

have

any

questions

about

llbm

about

llbm

openmp,

any

other

subtopic

go

and

ask

the

community

I

mean

llbm

is

is

mainly

Community

Driven.

So

there

is

no.

You

know

there

is

a

lot

of

companies

behind

it,

but

the

companies

have

different

interests

and

so

on

so

forth,

and

we

all

come

together

in

this

community

and

we

have

various

ways

for

you

to

get

involved,

but

also

to

answer

your

questions

go

and

ask

your

questions.

It's

free.

There

is

like

a

forum

mailing

list

which

is

called

discourse.

There

is

a

persistent

Discord

chat.

A

That

is

an

IRC

chat.

They

are

sync

up

meetings

on

various

subtopics,

including

ALS

analysis,

mlir,

machine

learning,

openmp

and

so

on

so

forth,

there's

even

office

hours

nowadays,

where

you

can

talk

to

experts

and

get

ask

them

questions

so

really

make

use

of

this

like

there's

a

lot

of

a

lot

of

information

out

there.

A

A

The

in

addition

to

this

new

driver

or

with

this

new

driver

we

add

a

lot

of

support

one

is

we

get

multi-architecture

binaries,

so

you

can

have

a

single

library

or

executable

that

will

run

on

all

kinds

of

platforms

that

will

run

on

amds

on

Nvidia

platforms

that

will

run

on.

You

know

an

sm30

and

an

sm80

and

a

gfx

908.

So

it

will

you

can

bundle

all

of

them

into

one

library

or

less

static

library

or

all

of

them

into

one

executable,

and

it

will

just

work.

A

We

have

link

time

optimization

in

while

llvm

had

that

for

longest

time.

We

now

have

link

time

optimization

for

device

code.

So

if

you

go

to

the

GPU,

you

can

now

enable

lto

on

the

GPU

side

only

if

you

use

the

new

driver.

So

if

you

use

openmp

with

the

new

driver

or

Cuda

with

the

new

driver,

you

can

do

link

time

optimization

for

your

device

code

and

a

lot

of

times

that

actually

gener

like

gives

you

quite

a

performance

boost.

A

As

I

said,

static,

Library

support

is

now

in

there

all

ways

of

generating

a

static

library

that

has

device

code

in

it

and

then

using

it

should

work

if

there

is

a

way

that

doesn't

work.

Let

us

know

openmp

with

the

new

driver

becomes

interoperable

with

Cuda

and

hip,

which

we'll

see

later

a

little

bit,

and

we

have

a

lot

of

additional

Flags

in

that

release

to

improve

performance

in

in

situations

where

the

compiler

cannot

argue

what

is

correct

or

what

what

static?

What

transformation

is

correct?

A

You

can

help

the

compiler,

so

I

always

have

this

community

efforts

like

with

a

lot

of

names

from

a

lot

of

Institutions.

So

you

see

this

is

you

know

me

giving

a

talk,

but

the

work

is

done

by

a

lot

of

people

and

there's

probably

more

people

that

and

I

should

update

this

slide

to

just

to

give

you

an

idea

on

the

top

left

corner.

You

see

a

link

to

our

weekly

meeting.

We

meet

once

a

once

a

week

for

llvm

openmp

and

feel

free

to

join.

A

These

feel

free

to

join

any

meetings

and

they're

all

in

this

llvm

calendar.

Okay,

I

mentioned

this

before,

but

there's

various

ways

to

get

involved.

We

have

right

now

we

have

an

FAQ

for

the

lbm

openingp

webpage

on

telegram

openmp

webpage.

We

have

these

meetings

where

we're

like

25

people-ish

every

week.

It's

mostly

GPU

Focus,

but

you

get

also

the

people

from

all

the

vendor

companies

such

that

you

know.

A

We

have

office

hours,

we

have

the

discourse

openmp

category

and

we

have

a

slack

and

a

weekly

meeting

for

openmp

specific

optimization.

So

if

there's

something

you

know

you,

you

want

to

see

the

following:

optimization

done

or

the

following

feature:

prototyped

in

llvm.

That

might

be

a

good

place

to

to

find

people

to

help

you

to

do

so

and

now,

as

a

kind

of

case

study

of

people

that

actually

reached

out

and

worked

with

us

on

their

code.

A

So

they

came

to

us

and

said:

okay,

we

are

really

interested

in

using

llvm

openmp,

but

our

cook,

our

performance,

is

really

bad.

So

we

looked

at

their

code,

and

so

you

don't

have

to

read

all

of

this.

The

the

main

idea

here

is

on

the

right.

The

highlighted

numbers,

so

all

of

the

numbers

that

are

in

the

green

oval

are

speed

up

numbers

from

the

different

optimizations

that

we

performed

while

working

with

them.

We,

like

the

first

thing

we

did.

A

We

made

a

cmake

Unity,

build

which

effectively

is

copy

together

all

the

files

into

one

big

file

and

then

compile

it.

We

don't

need

to

do

that

anymore,

because

we

now

have

device

site,

LinkedIn,

optimization

device

set

lto

which

allows

us

to

not

do

this,

but

still

get

the

same

benefit.

But

it's

much

faster

and

then

we

help

them

with

determining

what

what

optimization

Flags

they

can

use

and

then

we

help

them

like.

A

We

worked

with

them

and

found

a

performance

bug

in

llvm,

and

then

we

helped

them

with

improving

their

code

and

at

the

end

of

the

day,

you

know

if

you,

if

you

multiply

all

these

factors

together

the

speed

up

that

they

got

just

from

you

know,

working

together

with

us

on

the

compiler

application.

Development

was

like

a

100x

or

something

like

that.

So

it's

it's

really.

You

know

it

adds

up

and

we're

usually

really

happy

to

work

with

app

teams

as

well

in

close

collaboration

to

make

their

code

faster

and

learn

in

the

process.

A

What

optimizations

were

missing,

where

we

are

like

having

insufficiencies

in

our

runtime

and

in

the

compilation

and

so

on

and

so

forth?

So

so

this

was

really

a

good

experience

and

if

people

are

interested,

you

really

should

you

know

reach

out

so

shout

out

to

John

and

the

openmc

team

who

did

and

I

hope

they

had

a

good

time

as

we

did.

A

A

Not

all

of

it

is,

you

know,

goes

back

into

llvm.

So

if

you

download

lvm,

you

don't

get

all

of

this,

but

a

lot

of

it

feel

free

to

take

a

picture

in

case

you

ever

like.

You

know

want

to

know

more

about

things,

especially

if

you're

you

know

in

a

scientific

world,

but

this

shows

you

I'm,

showing

this

mainly

to

convince

you

that

there

is

a

lot

of

you

know,

research

efforts

going

in.

A

A

Okay,

let's

look

at

some.

You

know

end

user,

end

user

improvements,

so

for

one

nowadays,

if

you

turn

on

minus

G

or

minus

G

line

tables

minus

the

line

tables,

only

you

get

information

about

where

a

crash

happens.

If

that

one

happens,

it

tells

you

to

visit

this

web

page

that

has

more

debugging

options

and

so

on

and

so

forth.

So

the

the

error

messages

that

come

out

of

openmp

offload

is

are

much

better

than

before.

A

At

the

same

time,

if

you

use,

if

you

go

to

this

webpage

and

you

used

it

the

what

is

explained

there

to

do

debugging

you

get

these

environment

variable

Flags,

so

lip

balm,

Target

info

is

is

highlighted.

Here

is

one

of

them

that

allows

you

to

see

what

the

compiler

did

with

your

code.

So

how

did

the

compiler

execute

your

kernels,

your

target

regions?

What

data

is

copied

and

when

and

why

and

how

did

the

compiler

map

you

know

implicit

arguments

and

so

on

and

so

forth?

A

So

this

really

helps

you

to

understand

what

is

going

on

under

the

hood

and

you

can

get

various

kinds

of

information

at

a

like

fine

granularity.

At

the

same

time,

we

have

a

debug

mode,

which

is

which

you

have

to

enable

at

compiler

time

and

at

runtime

together,

and

once

you

enable

it

at

both

times.

You

get

these

debug

checks

in

baked

into

your

program

and

these

debug

checks.

A

Oh

yeah

I

mentioned

this

before

we

have

these

assumptions

to

help

the

compiler

to

do

better

optimizations

of

your

program

to

because

you

don't

really

know

what

the

compiler

needs.

We

also

have

remarks

so

optimization

remarks.

You

can

turn

them

on

with

the

commands

in

the

upper

left

corner

here.

So

our

past

basically

turns

on

just

generic

remarks.

Our

plasma

turns

on

things

about

missed,

optimizations

and

Analysis

about

analysis

results

that

we

collected

throughout

and

then

it

these

remarks

also

tell

you

what

to

do

about

them.

Like

is

this

a

good

thing?

A

Is

this

a

bad

thing

on?

How

do

you

kind

of

interact

and

act

upon

this?

Now

and

usually

you

have

like

three

different

ways

you

can

write.

Prakma

OMP

assumes

you

can

write,

attributes

assume

or

you

can

use

command

line

Flags,

and

we

have.

We

have

standard

assumptions

in

the

openmp

specification.

We

have

llvm

specific

assumptions

that

we

that

we

recognize

and

we

have

by

now

at

least.

A

You

know

five

I

think

by

now

more

than

that

command

line

flags

that

that

provide

assumptions

to

the

compiler

one

more

thing

is

we

even

have

you

know,

environment

variables

that

you

can

use

to

improve

performance

if

you

do

not

need

certain

guarantees

in

all

of

these

are

kind

of

explained

on

our

lovm.openmp

sorry

openmp.lvm.org

webpage,

where

we

explain

all

the

environment

variables

and

so

on

and

all

the

remarks

and

so

on.

So

let's

look

at

an

example.

This

is,

you

know,

Classic

vectorization,

on

the

left.

A

A

A

There's

also

more

information

about

how

to

record

these

remarks,

how

to

filter

them

based

on

your

hotness

in

your

code,

so

you

can

see

only

remarks

that

are,

you

know

relevant

to

Performance,

and

this

also

helped

with

regards

to

how

to

show

remarks

inside

of

your

source

code

rather

than

on

the

command

line

and

so

on

and

so

forth.

So

there's

a

lot

of

tooling

there

that

you

can

utilize

now,

I

mentioned

this

briefly

before

we

have

multi-architecture

binaries.

A

So

when

you

now

nowadays,

if

you

download

a

client,

you

can

say

offload

architecture,

and

then

you

put

the

sub

architecture

there

and

openmp

is

going

to

do

all

the

rest

for

you.

So

if

you

look

at

the

example

here

in

the

in

the

the

second

command

clang,

you

don't

have

the

First,

Command

and

the

second

command

are

equivalent.

A

A

I

mentioned

the

link

time.

Optimization

really,

really.

This

helps

you

to

get

better

performance,

even

if

you

only

have

a

single

translation

unit-

and

it

usually

isn't

going

to

make

your

code

that

much

slower

to

compile,

because

we

only

do

it

for

the

device

code,

so

minus

F,

offload,

lto,

minus

F,

offload

minus

lto

turns

it

on,

and

you

have

to

run

it

both

at

the

compile

and

at

the

Linker.

So

this

is

kind

of

a

little

odd

for

now,

but

this

is

right

now.

A

So

when

you

offload

your

gpus

static,

libraries

are

now

fully

supported,

so

no

matter

how

you

build

your

static

libraries,

it

should

just

work.

You

can

also

you

know

again

analyze

them

and

see.

What

is

what

off-road

images

are

in

there

and

then,

if

you,

you

can

use

this

to

build

static

libraries

that

only

contain

device

code,

for

example.

A

So

and

then

you

can

basically

ship

static

libraries

that

are

only

for

the

device

and

if

you

use

lto

so

the

link

time

optimization,

there

is

no

overhead

in

you

know:

bundling

your

device

code

in

static

libraries

and

then

merging

it

with

your

kernels

later

on.

If

you

do

not

use

lto,

you

would

have

these

call

edges

and

other

overheads

that

that

you

might

not

want,

and

as

you

see

here

when

you

do

the

offload

art

command,

you

can

have

a

lot

of

sub

architecture.

A

A

Now,

with

the

new

driver,

so

here,

if

you

see

the

minus

minus

offload

new

driver

option

and

the

fgpu

RDC

option,

when

you

turn

them

on,

you

can

actually

link

together,

openmp

and

Cuda

code

or

open

a

p

and

hip

code

so

which

is

which

is

great,

because

now

you

know

you

can

mix

and

match

your

program.

You

can.

You

can

use

Cuda

libraries

that

you

find

on

internet

or

Cuda

codes,

and

you

can

call

them

from

your

openmp

code

technically

also

vice

versa.

A

A

A

However,

there

is

also

the

minus

F

openmp

offload

mandatory

flag,

which

effectively

disables

the

host

fallback

in

openmp

offload

and

basically

says:

okay

I

only

create

Target

regions

for

the

device,

which

is

sometimes

really

useful,

especially

if

you

have

you

know,

Cuda

functions

or

so

on

that

only

exist

on

the

device,

and

you

want

to

call

them

and

you're

not

interested

in

ever

running

your

target

regions

on

the

host.

So

this

is

so

you

don't

have

to

create.

A

You

know:

host

Alternatives

or

host

fallback

codes

for

device

only

code-

oh

yeah,

we'll

we'll

skip

this

now.

A

couple

of

small

tools

that

are

that

are

interesting

to

people.

So

when

you

build

lrvm

or

if

it's

installed,

yes,

there's

a

binary

called

lvm

openmp

device

info,

which,

if

you

run

it,

will

tell

you

what

lovem

knows

about

your

your

devices

on

your

system.

A

So

the

first

four

devices

are

going

to

be

CPU

devices

and

then

no

matter

how

many

CPUs

you

have

that's

just

an

artifact

for

now

and

then

afterwards,

it

will

show

all

the

gpus

in

what

we

know

about

the

gpus.

So

you

know

about

like

what

kind

of

compute

capabilities

you

have

What

GPU.

It

is

memory,

size

and

so

on

and

so

forth.

So

this

gives

you

an

idea

if

lovm

actually

is

able

to

see

your

gpus

and

also

gives

you

an

idea

about

what

the

GPU

properties

are.

A

You

can

turn

on

target

profiling

with

llbm

since

12

by

just

setting

an

environment.

Variable

lip

On,

Target

underscore

profile

and

what

you

get

out

of.

There

is

a

Json

file

that

you

can

load

into

Chrome

tracing

or

a

lot

of

other

tools

that

support

the

Chrome

tracing

format,

and

that

gives

you

you

know

a

trace,

a

very

simple

trace

of

what

is

happening

and

with

llbm

16.

A

So

the

next

release

we're

actually

going

to

give

you

the

capability

to

either

automatically

or

manually

profile

parts

of

your

kernel

code

so

of

your

device

code

and

embed

that

into

this

profile

as

well.

So

this

was

not

going

to

be

as

good

as

you

know,

these

big

profiling

libraries,

but

it

gives

you

something

that

is,

that

comes

with

llvm

by

default

and

if

you

just

want

to

do

some

local

profiling

or,

like

you

know,

some

quick

checks.

This

is

really

useful.

A

Okay,

because

I'm

running

out

of

time,

quick

summary

with

llvm

openmp,

you

can

offload

to

remote

gpus

if

you

want,

if

you

want

to

so

this

is

upstream

and

available

and

currently

we're

looking

at

adding

an

MPI

backend

as

well.

So

if

you

want

to

program

only

openmp

and

you

want

to,

but

you

program,

multiple

gpus

or

technically,

even

one

GPU-

you

can

utilize

Hardware,

that

is

in

the

cloud

or

on

on

distributed

systems

purely

with

openmp.

So

on

the

user

Level

side

there

is

no

difference

whatsoever.

A

A

You

can

use

all

the

host

tooling,

but

it's

the

original

Cuda

code

and

it

kind

of

runs

in

the

same.

You

know

GPU

fashion.

Similarly,

you

can

take

on

the

list.

You

can

take

Cuda

code

and

you

can

compile

Cuda

code

with

clang

onto

other

Hardwares,

not

only

the

host,

but

also

you

can

take

that

Cuda

code

and

run

it

on

AMD

Hardware,

and

it's

not

only

about

Cuda

code.

A

But

what

we're

showing

here

is

that

openmp

gives

us

this

target

independent

runtime,

and

we

can,

if

we

use

it,

we

can

merge

and

interoperate

Cuda,

openmp,

hip,

sickle

and

so

on

and

so

forth.

So

that

you

can

mix

and

match

in

your

application,

whatever

you

like

best

and

behind

the

scenes,

everything

will

work

together

and

be

portable

as

well,

which

is

great

and

the

results

here.

Long

story

short

we

generally

perform

just

as

well

as

the

native

programming

language

in

the

native

compiler.

A

A

As

part

of

this

work,

we

literally

take

entire

programs,

no

modification,

and

we

run

them

on

the

GPU

and

while

the

the

paper

which

you

see

at

llvmhpc

is

going

to

show

really

bad

performance

numbers,

but

it

shows

proof

of

concept.

Our

news

performance

numbers

look

pretty

good

so,

and

this

effectively

gets

rid

of

all

the

porting

whatsoever.

We

just

run

the

entire

thing

on

the

GPU,

which

also

works

in

an

environment

where

you

do

not

have

unified

sheet

memory

by

the

way

which

is

kind

of

fun.

A

B

A

A

You

can

just

get

a

subset

of

the

host

openmp,

but

people

are

working

on

this

and

how

about

the

benefits

we

see

benefits

if

you

do

these,

you

know

fancy

things

like

take

Cuda

code

and

translate

it

into

host

code

where

you

do

complex

transformations

in

order

to

make

it

to

run

fast,

but

we're

not

actually

like.

We

haven't

explored

anything

beyond

that.

So

there

isn't.

No

any

benefits

in.

You

know

just

simple

same

device:

same

Target

optimizations.

A

On

the

last

topic

on

running

the

program

on

a

GPU

and

going

to

the

host

only

when

needed,

what

is

the

state

of

the

art

doing

I

O

directly

from

the

GPU

meaning

without

having

to

use

explicit

device

telescopics

and

using

device

storage,

libraries,

okay,

cool

as

far

as

I

can

as

far

as

I

know,

the

state

of

the

art

is

there.

There

is

no

production

working

solution

or

even

research

solution

that

I'm

aware

of

that.

A

Does

it

but

I'm

actually

trying

to

get

someone

to

work

on

it,

because

there

was

some

Nvidia

folks

have

shown.

You

know

proof

of

concept

that

you

can

do

I

owe

from

the

GPU

and

I

would

really

like

to

have

an

open

library

to

do

that

and

then

provide

effectively

things

like

f,

open,

F,

read

and

so

on

on

the

device

through

through

direct

communication

with

the

kernel

rather

than

RPC

sysc

codes

and

so

on.