
From YouTube: 12. Deep Learning

Description

Learn about the many deep learning tools available at NERSC.

Slides for all sessions can be downloaded from here: https://www.nersc.gov/users/training/events/new-user-training-june-21-2019/

So, just in case you've not been tracking the media for the last month or so: the Turing Award this year, which is the biggest prize you can get in computer science, was made to these three gentlemen, Yoshua Bengio, Geoffrey Hinton, and Yann LeCun, who are the grandfathers of the field of deep learning. It's really much like the Nobel Prize in science.

For us here at NERSC, and for the field of high-performance computing: last year at Supercomputing 2018, our team, in collaboration with Oak Ridge, NVIDIA, and UC Berkeley, was awarded the Gordon Bell Prize, which is the top prize in the field of high-performance computing. We succeeded in taking a deep learning application and scaling it across all of Summit, which is the number one machine in the world at this point in time, and for the first time, two applications succeeded in reaching the exaflop mark.

So again, we've talked about exascale computing for about a decade now, and this is one of the two applications that were able to exceed that mark last year. That's just in case. Maybe you don't care about the hype, and you really want to understand what deep learning is and whether it's good for your applications, so I did want to call out that we are still very early on that.

Deep learning is part of a much broader analytics toolbox that you should be aware of. If you've been doing classical statistical analysis, such as significance tests, please continue; there's no particular need for you to do deep learning. If you've been doing classical linear algebra for solving large matrix problems and so forth, then you should continue doing that.

But perhaps you care about artificial intelligence, or you've already dabbled in machine learning. When Roland asked for a show of hands, none of you raised your hand, so it's maybe a safe assumption that you haven't been using machine learning so far. But there are classes of applications that machine learning and AI are well suited for, and for those classes of applications it is certainly worth considering whether you should use deep learning. So, in particular, just to be very concrete:

This is a chart of use cases: different kinds of problems are laid out along the rows, and different science areas along the columns. Perhaps you care about pattern classification problems: I give you an image, and you're supposed to tell me whether it's an image of a star or a galaxy. Those kinds of problems are really well suited for machine learning, and deep learning can certainly achieve state-of-the-art performance.
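To make the classification task concrete, here is a minimal pure-Python sketch of a binary classifier trained by gradient descent. It is logistic regression, the single-unit building block of a neural network rather than a deep network, and the two input features and their values are hypothetical stand-ins for whatever measurements separate stars from galaxies.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_logistic(xs, ys, epochs=2000, lr=0.5):
    """Fit weights w and bias b so that sigmoid(w.x + b) approximates P(label = 1)."""
    w = [0.0] * len(xs[0])
    b = 0.0
    n = len(xs)
    for _ in range(epochs):
        grad_w = [0.0] * len(w)
        grad_b = 0.0
        for x, y in zip(xs, ys):
            p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
            err = p - y  # gradient of the cross-entropy loss w.r.t. the logit
            for i, xi in enumerate(x):
                grad_w[i] += err * xi / n
            grad_b += err / n
        w = [wi - lr * gi for wi, gi in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def classify(w, b, x):
    p = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)
    return "galaxy" if p > 0.5 else "star"


# Hypothetical features: (apparent size, ellipticity); label 1 = galaxy, 0 = star
xs = [(0.1, 0.0), (0.2, 0.1), (0.15, 0.05), (0.9, 0.6), (0.8, 0.7), (1.0, 0.5)]
ys = [0, 0, 0, 1, 1, 1]
w, b = train_logistic(xs, ys)
```

A deep network stacks many such units with nonlinear layers in between, but the training loop (predict, measure the loss, step against the gradient) has the same shape.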

If you care about regression problems, you don't care about class labels; you care about predicting a continuous-valued quantity. There, too, deep learning is proving to be very successful.
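For contrast with classification, a regression model outputs a number rather than a label. The sketch below is the classical baseline, ordinary least squares for a straight line, on hypothetical data; a deep network would replace the straight line with a learned nonlinear function, but is commonly trained on the same mean-squared-error objective shown in `mse`.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y ~ slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var = sum((x - mean_x) ** 2 for x in xs)
    slope = cov / var
    intercept = mean_y - slope * mean_x
    return slope, intercept


def mse(xs, ys, slope, intercept):
    """Mean squared error of the fitted line on (xs, ys)."""
    return sum((slope * x + intercept - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


# Hypothetical data lying exactly on y = 2x + 1
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]
slope, intercept = fit_line(xs, ys)  # -> (2.0, 1.0)
```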

There are many other use cases. Take clustering: I give you a bunch of points, and you need to tell me the most natural clustering structure in this data set. Deep learning can help there.
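As a reference point for the clustering task, here is a minimal pure-Python sketch of k-means, the classic non-deep baseline, on a hypothetical two-blob data set. Deep-learning approaches to clustering often run a similar assignment step on learned feature representations instead of raw coordinates; that variant is not shown here.

```python
import random


def kmeans(points, k, iters=20, seed=0):
    """Lloyd's algorithm: alternate nearest-center assignment and center updates."""
    rng = random.Random(seed)
    centers = rng.sample(points, k)  # start from k distinct data points
    clusters = []
    for _ in range(iters):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k),
                          key=lambda c: sum((a - b) ** 2 for a, b in zip(p, centers[c])))
            clusters[nearest].append(p)
        for c, members in enumerate(clusters):
            if members:  # keep the old center if a cluster ends up empty
                centers[c] = tuple(sum(coord) / len(members) for coord in zip(*members))
    return centers, clusters


# Hypothetical data: two well-separated groups of three points each
points = [(0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (10.0, 10.0), (10.0, 11.0), (11.0, 10.0)]
centers, clusters = kmeans(points, k=2)
```

Note that k-means needs the number of clusters `k` up front; finding the "most natural" structure usually means comparing several values of `k`.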

If you care about dimensionality reduction, say you have very high-dimensional data, perhaps a million dimensions, and you would like to understand the intrinsic dimensionality of this data set, then potentially deep learning can help there too.
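One way to see what "intrinsic dimensionality" means: the linear baseline is principal component analysis, where a few components carrying nearly all the variance indicate the data effectively lives in a low-dimensional subspace. Autoencoders are the deep-learning analogue that can also capture nonlinear structure. The minimal sketch below computes the share of variance on the top principal component via power iteration; it assumes the all-ones starting vector is not orthogonal to the top eigenvector, and the data sets are hypothetical.

```python
def covariance(points):
    """Population covariance matrix of a list of equal-length tuples."""
    n = len(points)
    d = len(points[0])
    means = [sum(p[j] for p in points) / n for j in range(d)]
    centered = [[p[j] - means[j] for j in range(d)] for p in points]
    return [[sum(c[i] * c[j] for c in centered) / n for j in range(d)] for i in range(d)]


def top_eigenvalue(matrix, iters=100):
    """Power iteration for the largest eigenvalue of a symmetric PSD matrix."""
    d = len(matrix)
    v = [1.0] * d
    for _ in range(iters):
        w = [sum(matrix[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = sum(x * x for x in w) ** 0.5
        v = [x / norm for x in w]
    # Rayleigh quotient v^T M v for the unit vector v
    return sum(v[i] * sum(matrix[i][j] * v[j] for j in range(d)) for i in range(d))


def top_component_share(points):
    """Fraction of total variance (the trace) explained by the top component."""
    cov = covariance(points)
    total = sum(cov[i][i] for i in range(len(cov)))
    return top_eigenvalue(cov) / total


# Hypothetical 3-D points that actually lie on a 1-D line
line_points = [(t, 2 * t, 3 * t) for t in range(10)]
share = top_component_share(line_points)  # close to 1.0: intrinsically 1-D
```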

There are many, many other use cases. Deep learning is not well suited to every single row in this chart, but I would say that if you have labeled data, meaning examples of classes or examples of regression quantities, then for these first two rows, what we are seeing across the board is that deep learning is certainly working really well.

So that's just to give you a flavor of the kinds of problems that deep learning is well suited for. We could spend a whole hour just chatting about use cases, but this is meant to be a very practical tutorial: if you care about deep learning and you'd like to use it, what can you do about it at NERSC? Again, software is something that we provide.

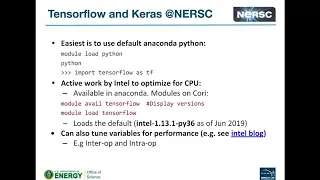

If you are a user and you'd like to use deep learning, the four frameworks you can use are Keras, TensorFlow, PyTorch, and Caffe. Those are the technologies we've hand-selected for you after a lot of deliberation and thought. There is always going to be a long tail in terms of the number of frameworks. About a year ago, new deep learning frameworks seemed to be emerging every single month, but I think things have slowed down a little these days; there may not be as much activity.
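How these frameworks are provided at NERSC (modules, containers, and so on) is not covered here, so the minimal sketch below only checks which of the four are importable from whatever Python environment you currently have active. The import names (`keras`, `tensorflow`, `torch`, `caffe`) are the packages' usual module names; the helper `available_frameworks` is hypothetical.

```python
import importlib.util

# Usual import names for the four frameworks mentioned in the talk
FRAMEWORKS = {"Keras": "keras", "TensorFlow": "tensorflow", "PyTorch": "torch", "Caffe": "caffe"}


def available_frameworks(frameworks=FRAMEWORKS):
    """Map each framework name to whether its top-level module can be found."""
    return {name: importlib.util.find_spec(module) is not None
            for name, module in frameworks.items()}


if __name__ == "__main__":
    for name, ok in available_frameworks().items():
        print(f"{name:<10} {'found' if ok else 'not found'}")
```

`find_spec` locates a module without importing it, so the check is cheap even for large frameworks.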

Nevertheless, we do expect a lot of people to develop a lot of solutions in this layer. Now, Rebecca and others chatted about hardware, what sort of systems we have at NERSC right now. We do have CPUs, KNL as well as Haswell, and in the future our next machine is going to feature GPUs. So if you want to use this hardware, we at NERSC need to work with vendors and make sure that all of the intervening layers in the stack are working well.

In particular, for CPUs we work with Intel to make sure that MKL-DNN supports deep learning software running on CPUs, and we are working with NVIDIA right now on enhancing cuDNN so that it works well on GPUs. Now, it is very likely that future systems will have accelerators or special-purpose hardware for computing, and some of the best accelerators at this point in time are in the area of deep learning. So if these show up tomorrow, we will again work with the relevant vendors so that you can write your application once in those frameworks, and we will continue to make sure that the same applications work well on emerging hardware.

All right, that's just a word on software frameworks. Again, Roland chatted about Python and Jupyter and how those are interesting technologies that are evolving very fast.

I would say that ten years ago, if you had asserted that Python was going to be important in an HPC center, not many people would have listened to you. Similarly, all of these frameworks have emerged in the last three or four years. This is just a plot over time of how many users in the community, broadly, not just at NERSC, have been using these frameworks.

Here are just some comments on what we think about all of these frameworks. If you are a novice deep learning user and you want to get up and running really fast, and maybe you don't care about the specifics of deep learning architectures, then Keras is really the way to go. That's the go-to tool: it's probably the most productive and needs the fewest lines of code. In perhaps two dozen lines of code, you can get your deep learning app up and running.

Jupyter is definitely the preferred front end for developing analytics capabilities. You can bring up kernels, both TensorFlow and PyTorch kernels, in Jupyter, and you can run small-scale exploratory jobs on the Jupyter nodes. In the future we will be enabling deep learning jobs to run on the compute nodes, that is, the KNL nodes and the Haswell nodes. We did talk about the Cori GPU rack that's available now, so soon we will also be able to support running deep learning jobs on the GPU racks.

So you're going to stick with your Jupyter notebook front end, and all of the computation will run on the back end. In the Jupyter notebook you can essentially follow how your deep learning jobs are converging and training, and so on. Some examples of launching these notebooks are all available here; you can check those out later if you like. It's a tutorial at the end of the day, so I did want to show you some code.

The details are not as important; I think the point here is that within just two slides, about two dozen lines of code, you can get a deep learning job up and running. This is a Keras example. The task here is to look at handwritten digits and classify which is a zero, which is a nine, and so on, and the steps are fairly simple: you import a bunch of stuff right at the beginning, you create your network, you fit your network, and then you use your trained network to make predictions.

Now, in 20 minutes we can't do an end-to-end tutorial on deep learning, but those are essentially the key steps: load all of the modules that you need, load your data set, do whatever pre-processing or cleaning of the data set you need, create the network, train it, and use it to make predictions.
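The load / pre-process / create / fit / predict steps above can be mirrored without any framework installed. The sketch below is a hypothetical stand-in, not Keras: `TinyMLP`, its methods, and the synthetic grid data are illustrative assumptions. It is a one-hidden-layer sigmoid network trained by full-batch gradient descent on mean squared error, and its `fit` and `predict` names simply echo the `model.fit` / `model.predict` pattern Keras uses.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


class TinyMLP:
    """Hypothetical one-hidden-layer network; NOT the Keras API."""

    def __init__(self, n_in, n_hidden, seed=0):
        rng = random.Random(seed)
        self.w1 = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_hidden)]
        self.b1 = [0.0] * n_hidden
        self.w2 = [rng.uniform(-1, 1) for _ in range(n_hidden)]
        self.b2 = 0.0

    def _forward(self, x):
        h = [sigmoid(sum(w * xi for w, xi in zip(row, x)) + b)
             for row, b in zip(self.w1, self.b1)]
        out = sigmoid(sum(w * hj for w, hj in zip(self.w2, h)) + self.b2)
        return h, out

    def predict(self, xs):
        return [self._forward(x)[1] for x in xs]

    def loss(self, xs, ys):
        """Mean squared error over the data set."""
        return sum((p - y) ** 2 for p, y in zip(self.predict(xs), ys)) / len(ys)

    def fit(self, xs, ys, epochs=2000, lr=0.5):
        """Full-batch gradient descent with hand-written backpropagation."""
        n = len(xs)
        for _ in range(epochs):
            g_w1 = [[0.0] * len(row) for row in self.w1]
            g_b1 = [0.0] * len(self.b1)
            g_w2 = [0.0] * len(self.w2)
            g_b2 = 0.0
            for x, y in zip(xs, ys):
                h, out = self._forward(x)
                d_out = 2.0 * (out - y) * out * (1.0 - out) / n  # dLoss/d(output pre-activation)
                g_b2 += d_out
                for j, hj in enumerate(h):
                    g_w2[j] += d_out * hj
                    d_h = d_out * self.w2[j] * hj * (1.0 - hj)  # backpropagate into hidden unit j
                    g_b1[j] += d_h
                    for i, xi in enumerate(x):
                        g_w1[j][i] += d_h * xi
            # apply the accumulated gradients only after the full pass
            for j in range(len(self.w2)):
                self.w2[j] -= lr * g_w2[j]
                self.b1[j] -= lr * g_b1[j]
                for i in range(len(self.w1[j])):
                    self.w1[j][i] -= lr * g_w1[j][i]
            self.b2 -= lr * g_b2
        return self


# 1. "Load" a data set: hypothetical grid points, label 1 when x1 + x2 > 1
xs = [(i / 4, j / 4) for i in range(5) for j in range(5)]
ys = [1.0 if x1 + x2 > 1.0 else 0.0 for x1, x2 in xs]
# 2. Pre-process: nothing to do here, the inputs already lie in [0, 1]
# 3. Create the network
model = TinyMLP(n_in=2, n_hidden=4, seed=0)
initial_loss = model.loss(xs, ys)
# 4. Fit (train) it
model.fit(xs, ys, epochs=2000, lr=0.5)
final_loss = model.loss(xs, ys)
# 5. Predict
labels = [1 if p > 0.5 else 0 for p in model.predict(xs)]
```

In a real framework, steps 3 to 5 collapse into a few library calls, and the backpropagation loop is generated for you.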

There are a lot of details around optimizing deep learning on CPUs and running on multiple nodes. I don't think I'm going to have time for that today, but you can check out this webpage, where we've been systematically tracking the performance of TensorFlow and PyTorch on a range of standard benchmarks used in the community, like AlexNet, GoogLeNet, and so forth. We run performance regression tests periodically, and we try to make sure that the TensorFlow and PyTorch builds on our systems are performant.
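The idea behind periodic performance-regression tracking can be sketched in a few lines: measure training throughput in samples per second and flag any benchmark that falls noticeably below a stored baseline. This is a hypothetical illustration, not NERSC's actual tooling; the function names, the 5% tolerance, and the baseline numbers are all made up.

```python
import time


def throughput(step_fn, batch_size, steps=10):
    """Samples per second achieved by calling step_fn() `steps` times."""
    start = time.perf_counter()
    for _ in range(steps):
        step_fn()
    elapsed = time.perf_counter() - start
    return batch_size * steps / elapsed


def find_regressions(baseline, current, tolerance=0.05):
    """Names of benchmarks whose throughput fell more than `tolerance` below baseline."""
    return sorted(name for name, base in baseline.items()
                  if name in current and current[name] < (1.0 - tolerance) * base)


# Hypothetical samples/s numbers, not measured results
baseline = {"alexnet": 1000.0, "googlenet": 400.0}
current = {"alexnet": 990.0, "googlenet": 340.0}
flagged = find_regressions(baseline, current)  # -> ["googlenet"]
```

In practice `step_fn` would run one training step of a benchmark model, and several warm-up steps would be excluded from timing.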

Now, once you've made sure that deep learning software is working well on a single node, the obvious next step is to run on multiple nodes, and the simplest strategy you can adopt for running deep learning on multiple nodes is something called data parallelism. Essentially, you boot up the same network on multiple nodes and break your data set into pieces, so every node looks at a slightly different part of the data. Then what you need to do is a reduction.

After each node has looked at its local data, there is a reduction phase wherein everybody shares their gradient updates, or shares their parameter estimates, so that they all converge on a single network at the end of the day.
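The reason this works can be shown with a toy single-process simulation: when each simulated node computes the mean-loss gradient on an equal-sized shard, averaging those local gradients gives exactly the full-batch gradient, so every replica applies the same update and stays in sync. The one-parameter model `y ~ w * x` and the data are hypothetical simplifications.

```python
def grad_mse(w, data):
    """Gradient of mean squared error for the model y ~ w * x over `data`."""
    return sum(2.0 * (w * x - y) * x for x, y in data) / len(data)


def shard(data, n_workers):
    """Split the data set into equal contiguous pieces, one per worker."""
    assert len(data) % n_workers == 0, "this sketch assumes equal-sized shards"
    size = len(data) // n_workers
    return [data[i * size:(i + 1) * size] for i in range(n_workers)]


def data_parallel_step(w, data, n_workers, lr):
    """One synchronous step: local gradients, then a reduction (average)."""
    local_grads = [grad_mse(w, piece) for piece in shard(data, n_workers)]
    avg_grad = sum(local_grads) / n_workers  # an allreduce on a real cluster
    return w - lr * avg_grad                 # every replica applies this same update


# Hypothetical data generated by y = 3x
data = [(float(x), 3.0 * x) for x in range(1, 9)]
w = 0.0
for _ in range(100):
    w = data_parallel_step(w, data, n_workers=4, lr=0.01)
```

With unequal shards, a plain average of per-shard means would weight the samples unevenly; real data-parallel setups typically use the same per-node batch size to avoid this.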

That particular mode of parallelism, data parallelism, is supported through two means: one is Horovod, which comes from Uber, and the other is a Cray plugin. Both of them can easily support that mode of parallelism, and again, we've done a lot of work on scaling.
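The reduction step in data parallelism is an allreduce: every node ends up with the sum (or average) of all nodes' gradients. Horovod's design is built around the ring-allreduce algorithm; the internals of the Cray plugin are not described here. The sketch below is a single-process simulation of ring-allreduce on plain lists, assuming lossless simultaneous sends, not code from either library.

```python
def ring_allreduce(vectors):
    """Simulate ring-allreduce: every worker ends with the elementwise sum."""
    n = len(vectors)
    length = len(vectors[0])
    bufs = [list(v) for v in vectors]                # each worker's local buffer
    bounds = [length * k // n for k in range(n + 1)]  # split into n chunks

    def chunk(c):
        return range(bounds[c], bounds[c + 1])

    # Reduce-scatter: after n-1 steps, worker i owns the full sum of chunk (i+1) % n
    for step in range(n - 1):
        sends = [((i - step) % n, [bufs[i][k] for k in chunk((i - step) % n)])
                 for i in range(n)]
        for i, (c, data) in enumerate(sends):
            dst = (i + 1) % n
            for k, val in zip(chunk(c), data):
                bufs[dst][k] += val

    # All-gather: pass the completed chunks around the ring, overwriting
    for step in range(n - 1):
        sends = [((i + 1 - step) % n, [bufs[i][k] for k in chunk((i + 1 - step) % n)])
                 for i in range(n)]
        for i, (c, data) in enumerate(sends):
            dst = (i + 1) % n
            for k, val in zip(chunk(c), data):
                bufs[dst][k] = val
    return bufs


grads = [[1, 2, 3, 4, 5], [10, 20, 30, 40, 50], [100, 200, 300, 400, 500]]
summed = ring_allreduce(grads)  # each worker: [111, 222, 333, 444, 555]
averaged = [x / len(grads) for x in summed[0]]
```

Each worker only ever talks to its neighbor and sends about 2(n-1)/n of its buffer in total, which is why the ring variant scales well with node count.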

All right, I think I'm going to skip this particular snippet, but I did want to bring up the very last slide, which is on support. That gives you a sense of what software frameworks you can use and what exists at NERSC right now, but really this is an emerging area. It's moving extremely fast, so we at NERSC are trying to do our best to make sure that the software is up to date, but we're also learning more and more about network architectures, what works best, and so on.

So while you might hopefully have an easy time just getting up and running at NERSC, chances are that you're going to have a lot of sophisticated questions: Is deep learning really best suited for my problem? What deep learning architectures make sense for this particular data set? If my model doesn't converge, or doesn't give me the accuracy that I need, what do I do next?
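One common first thing to check when training does not converge is the learning rate. The toy example below is an illustration rather than a diagnosis recipe: gradient descent on f(w) = w² multiplies w by (1 − 2·lr) at every step, so it converges only for 0 < lr < 1 and diverges beyond that. The function and values are hypothetical.

```python
def gradient_descent(lr, w0=1.0, steps=50):
    """Minimize f(w) = w**2 (gradient 2w) with a fixed learning rate."""
    w = w0
    for _ in range(steps):
        w -= lr * 2.0 * w  # each step multiplies w by (1 - 2 * lr)
    return w


small = gradient_descent(lr=0.1)  # factor 0.8 per step: shrinks toward the minimum at 0
large = gradient_descent(lr=1.1)  # factor -1.2 per step: oscillates and blows up
```

Real loss surfaces are not quadratics, but the same pattern (loss that oscillates or explodes early in training) often points to a step size that is too large.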

And obviously, if you have any issues with the existing production software that we have up and running, feel free to send an email to consult@nersc.gov, as you always do. We now have, I think, reasonable documentation in place for machine learning, so you can check out all of the instructions for the software. We have a range of use cases there, and we've certainly been making an effort to present day-long tutorials.

So again, I only had 20 minutes for this, but we do have day-long tutorials on deep learning at Supercomputing, at International Supercomputing, and also at the Cray User Group meeting, so feel free to check out that material. All right, I think that's all I have for deep learning. Do you have any questions on any of that?