►

From YouTube: 05 Running Jobs

Description

Part of the NERSC New User Training on June 16, 2020.

Please see https://www.nersc.gov/users/training/events/new-user-training-june-16-2020/ for the training day agenda and presentation slides.

A

So

my

name

is

Helen:

he

works

at

nurse

user

engagement

group

talking

about

running

jobs

on

Cory

today

is

my

outline.

I'm

gonna

talk

about

some

introductions

and

give

back

script

examples

and

then

I'll

mention

more

advanced

or

any

jobs

options

and

then

we'll

talk

about

affinity

and

how

do

you

monitor

jobs

and

what

are

some

best

practices

for

running

jobs.

A

So

nurse

we

have

different

types

of

jobs.

Korea

is

a

massive

simulation

and

also

data

intensive

system,

so

it

has

mostly

power

of

jobs

using

small

number,

of

course,

up

talk

to

the

for

capacity

and

also

lots

of

small

sort

of

Serio

type

of

jobs.

Presently,

parallel

simulation

analysis

jobs.

We

have

a

batch

scheduler,

it's

not

first-in

first-out,

there's

some

schedulers

based

on

priority

and

other

combinations.

A

A

The

way

is

that

so

it

could

be

fair

to

our

to

support

over

seven

thousand

users,

jobs,

the

two

types

of

nodes:

logging,

those

and

compute

nodes

logging,

those

mostly

for

editing,

compile,

submit

your

batch

jobs

and

sometimes

you're

wrong,

very

small

short

serial

utilities

and

replications,

and

they

are

as

well

has

one

type

of

logging

them

compute

nodes

that

also

have

has

well

not

and

type,

and

also

can

L

type

at

two

different

architectures.

So

if

you

build

a

binary

right

fork

as

well,

it

does

run

on

care

now,

but

not

vice

versa.

A

I'm

going

to

show

you,

how

does

it

compute

node?

How

does

the

slurm

recognize

a

compute

note

was

the

number

of

course

numbering

and

also

just

getting

some

background

of

the

numa

node

and

different

memory,

access,

look

distances

cetera

so

for

house.

Well

note:

there

are

32

physical

cores

into

sockets,

and

each

socket

is

a

new

metal

man,

so

we

call

them

new

modem

in

zero

and

minimum

1,

and

in

nuuma

domain

0

there

are

16

cores

numbered

0

to

15,

but

also

number

32

to

47,

so

the

green

numbers

are

actually

we

call

them.

A

0

and

32

are

on

the

same

physical

core,

but

they

are

two

logical

cores,

meaning

they

are

actually

two

hyper

threads

per

core

each

physical

core.

There

are

a

few

ways:

all

right

site

can

tell

you

how

to

find

out

this

information

so

that

we

get

to

plot

this

diagram.

For

you

and

when

there

are

memories

attached

to

each

socket

or

each

human

domain

and

a

core

access

to

memory,

local

to

itself

it's

faster

than

it

has

to

access

memory

on

the

remote

human

domain.

A

A

Here's

a

example

k

now

compute

node

there

are

different

modes

on

the

mode.

We're

using

by

default

now

is

called

quad

cache.

There

are

68

physical

cores

and

each

core

has

four

hyper

threats.

So

these

terms

I

it

has

272

cpus

per

node,

there's

a

DDR

memory,

but

also

some

high

bandwidth

memory.

It's

called

h,

km,

h,

BM

or

MCD

ram

in

general,

ladies

in

the

quad

cache

mode

that

is

considered

as

a

cache

for

the

memory.

So

in

this

mode,

actually

there

is

only

one

you,

madam

in

there

are

other

types

of

k.

A

Now

knows

that

you

have

can

resume

by

reservation.

Only

do

you

have

different

number

of

human

domain,

then

also

have

to

worry

about

memory

access,

but

in

quad

cache.

Basically,

you

can

sort

of

view

it

as

a

memory

puking

homogenious

on

the

note,

but

except

it

has

4

cores,

4,

hyper

stress

per

core

and

some

of

the

numbers

here:

0

68,

136,

204

on

the

same

physical,

core

and

all

the

way

down

to

here

and

some

numbers

here,

because

we're

gonna

use

it

later

to

show

you

the

affinity

stuff

as

well.

A

So

when

you're

submitting

a

batch

job,

you

would

have

a

batch

script

prepared

in.

It

has

some

directives

to

ask

for

resources

to

schedule

your

job.

You

asked

for

how

long

your

job

needs

and

what

type

of

knows

who

type

of

qos

etc,

and

they

would

submit

to

you

the

batch

system,

either

vs

batch

and

then

with

s

vac

she's,

submitting

the

queue

and

then

you

come

back

forget

about

it.

Come

back

and

check

your

results

or

you

to

s

Alec,

which

is

interactive.

A

You

would

get

a

prompt

back

on

a

computer

and

you

can

watch

your

job

real-life.

So

many

more

like.

So

here

is

a

simple

illustration:

that's

logging,

node

England.

You

submit

to

assess

matram

as

Alec,

and

we

ask

you

not

to

run

big

executables

on

login.

It's

getting

shared

resources,

you're

gonna

impact,

other

people's

responses,

interactive

response

and

don't

do

a

swan

directory

on

logging

nodes.

We

would

like

you

to

do

this

after

us

version

as

a

lock

you

get

onto

our

head.

Node

you

get

onto

the

head,

compute

node

of

the

compute

nodes.

A

Pour

that

you

job

out

is

allocated

to

on

this

head.

Compute

nodes,

everything

in

your

batch

script,

except

the

s

wrong

line,

is

wrong

on

this

head,

compute,

node

and

then,

when,

after

you,

you

know,

do

the

pre-staging.

You

set

up

your

input

at

such

her

work

and

then

you

as

wrong

command.

This

s1

command

will

start

two

parallel

jobs

on

all

the

compute

nodes,

including

this

pad

compute

node.

Then

you

distribute

your

work

onto

the

computer

and

then,

after

that's

for

IP

do

something

else

in

run

on

the

head.

A

Compute

node

again,

so

show

you

some

bad

script

examples.

My

very

first

hello

world

example

I'm

a

shell

and

then

lots

of

s

patch

options

and

an

s

wrong

command.

I'm!

Not

talking

about

details

yet

I'll

show

it

later

slides,

so

s

patch

and

you

have

into

the

queue

or

you

to

ask

Alec

wait

for

accession

and

you

generous

wrong

common

word

in

this

session.

A

So

now,

let's

just

go

to

some

details

of

the

sample

batch

scripts.

The

first

line

here

is:

you

have

to

have

a

shell,

because

everything

other

than

as

wrong.

You

want

a

shell

to

be

able

to

execute

those

commands,

so

in

special

special

I

would

show

and

also

slurm

has

a

default

default

feature

that

it

dissolve

a

ceccolini.

You

have

a

way

by

is

by

our

configuration.

A

A

Here

is

some

other

flags.

People

talk

about

QoS,

you

would

say

which,

which

which

cures

wants

me

to

use

a

regular

premium,

low,

etc.

How

many

nodes

you

want

and

how

long

I

need

for

one

hour

here

and

what

type

of

nodes

I

want

here

is

a

as

well.

You

see

the

the

flags

here,

it's

called

long

name

for

the

options

and

we're

going

to

show

you

short

names

in

the

later

slides,

as

well

they're,

equivalent.

A

So

here

I

didn't

use

short

names

now,

so

it

originally

was

qos.

Now

I

can

use

this

q.

The

difference

is

short

with

between

short,

its

Q

and

a

space

regular

in

a

long

name

is

qo

as

eCos

regular,

since

this

also

always

says

and

echo

for

the

long

name

and

one

and

space

for

the

short

name

and

an

example.

In

this

example,

I

have

another

few

options

here

there

are

the

optional,

so

for

them,

I

want

L,

meaning

license

for

scratch.

A

File

system

by

specific

specific

find

us

when

the

scratch

file

system

has

is

having

some

issues.

It

will

block

your

job

from

starting

so

that

you

won't

fail

immediately

and

you

could

get

of

your

job,

a

name

J

my

job,

a

job

name,

it's

easier

for

you

to

recognize

with

what

this

is

about,

and

you

could

also

specify

other

things

like

specific

names

just

in

and

out

file,

which

account

to

charge

whether

you

want

receive

some

emails,

etc.

A

Here's

an

MPI

example,

so

I

asked

for

40

nodes

and

my

s4

online

has

little

n1

and

1280.

So

if

we

divide

by

that,

it

means

I'm,

running

32,

MPI

tasks

per

node

in

this

example,

Michael

DX

e

here

and

notice.

I

have

some

other

flags

besides

middle

end

of

MPI

tasks,

here,

C

and

the

CPU

bind

so

C

means

how

many

logical

course

I

want

to

be

allocated

for

each

MPI

task.

So

for

the

house,

one

node,

as

we

mentioned

about

there's

a

32

physical

chorus

and

two

hyper

strands.

A

So

there

are

64

pi,

personalized,

high

64,

large

Coast

rads

per

node

when

I'm

running

32m

kite.

As

per

note,

I

would

like

to

give

two

logical

cores

per

MPI

task.

So

here

is

the

C

value

about

this

is

2,

and

we

also

find

out

that

we

need

sleepy-bye

equals

course,

especially

when

the

node

is

not

fully

occupied.

A

A

Here

this

example

is

still

an

MPI

code.

Sometimes

your

source

code

may

have

openmp,

enabled

and

or

some

libraries

were

used

on

openmp

by

default,

but,

for

example,

you

want

to

run

a

pure

MPI

code.

You

want

to

make

sure

you're

running

on

it

one

openmp

thread.

You

would

like

to

include

this

box

setting

or

Machinima

as

equals

one

here

so

that

you

are

not

accidentally

running

many

many

threads,

that

you

don't

intend

to.

A

A

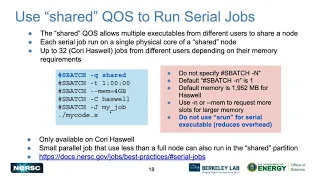

So

so,

for

example,

I

talked

about

MJ

jobs.

What,

if

I

have

a

serial

job?

I

only

want

to

run

on

one

physical

core.

So

if

I

submit

your

regular

TOS,

then

it'll

I

would

be

charged

by

the

whole

note,

but

also

I'm

also

wasting

others

only

one

physical

course

on

as

well,

so

we

provided

as

shared

qsr

in

in

that

QRS

you

or

your

jobs,

are

some

sharing

note

with

other

users.

A

By

default,

you

get

one

court,

one

physical

core,

but

if

and

by

default,

if

it's

one,

then

you

get

total

number

of

memory,

Q,

OS

memories

and

then

divide

by

32,

which

is

the

available

memory

for

the

applications.

It's

about

2

gigabytes

per

share

job,

for

example.

What

if

you

want

your

around

like

4

gigabytes

of

memory,

but

still

on

one

physical

core?

You

can

ask

in

your

own

script

memory

a

little

bit

more

and

it'll

calculate

and

allocate

a

physical

course

for

you.

You

still

run

on

one

core.

A

You

get

to

chords

of

memory

worse

for

yourself

and

so

the

whole

note

or

a

schedule,

fewer

jobs

total.

So

this

QRS

is

only

available

on

as

well

and

also

you'll

be

charged

less,

which

is

good.

You

could

run

some

small

parallel

jobs

there

as

well

and

you're

putting

s

wrong.

You

know

asking

for

a

little

bit

more

memory

or

asking

for

and

for

a

bit

more

number

of

course,

then

you

could

put

Esther

on

there

to

learn

some

smaller

power

jobs

also

charged

only

for

the

number.

A

But

if

you

do

run

a

serial,

a

job,

we

suggest

not

to

putting

s

1

in

front

of

it,

because

there's

an

overhead

I

mention

about

debug

and

intact

interactive

jobs.

We

do

reserve

notes

for

these

two

key

OSS

so

that

you

can

get

jobs

throughput

much

faster

for

the

debug

there.

You

can

ask

for

a

maximum

512

notes,

but

only

for

30

minutes.

A

This

limit

of

run,

limit

and

submit,

submit

limit

per

user,

and

you

can

do

ask

Alec

to

cue

debug

or

you

could

do

a

provide

put

together

a

batch

script

with

QoS

each

equals

debug,

and

then

us

patch

your

script

and

wait

for

your

answer

for

the

interactive

QoS.

You

can

only

do

as

outlook

as

much

won't

allow

you

it's

supposed

to

be

as

completely

interactive.

A

We

highly

recommend

this

because

it

either

gives

you

a

note

within

five

minutes

or

it

all

reject

to

tell

you

tells

you

that

there's

no

notes

available

for

you

there's

also

round

limits

of

my

limit.

The

good

thing

is

that

it

has

max

more

time

for

four

hours

instead

of

debug

there's

only

30

minutes,

but

you

can

only

run

use

up

to

64

nodes

which

has

more

and

Cana

combined

for

project,

not

per

user.

A

So

if

some

of

your

colleagues

are

using,

you

may

not

be

able

to

get

notes,

while

some

people

from

other

projects

can

still

get

nodes,

there

are

so

some

documentation

and

some

commands

there.

You

can

find

out

who,

in

your

project,

is

using

these

nodes,

and

you

can,

you

know,

talk

to

them

and

collaborate.

A

Ok,

next

I'm

gonna

talk

about

advanced

running

jobs

options,

so

many

other

ways

to

run

precisely

that

we

cite

the

basic

batch

script

with

1s,

finding

that

these

are

serial

jobs

or

parallel

jobs.

So

we

could,

you

know,

run

though

jobs

you

can.

This

is

the

list

of

things

here.

I'm

gonna

actually

have

at

least

one

slide

per

thing.

So

just

quickly

skip

this

one,

all

the

jobs.

A

There

are

two

ways

you

can

either

bundle

them

with

multiple

strands

run.

Sequentially

here

is

left

side

sequentially

or

you

could

run

them

simultaneously.

So

the

difference

here

is

that,

with

sequential

a

strands

Eugene

what

restaurants

there,

then

you

ask

for

number

of

nodes,

the

maximum

of

nodes.

That

is

a

strong

need,

but

you

also

ask

for

the

combined

four

hours

of

these

as

well

in

your

bedskirt.

For

simultaneously,

you

ask

the

number

of

nodes

you

would

ask

for

is

the

total

number

of

nodes

that's

needed,

and

ours

is

longest

hour

of

this

decimal.

A

So

you

in

order

to

for

this

children

simultaneously

simultaneously,

and

you

need

to

put

an

ampersand

at

the

end

and

wait

so

that

once

as

finally

submitted,

you

know,

process

other

estarán

and

was

the

wait.

It

would

also

not

exit

immediately

once

the

sales

jobs

are

submitted

and

a

wait

until

your

jobs

finish.

Some

of

notes

here

just

remember

at

least,

and

each

s

run-

is

exclusive

under

notes,

so

they

don't

share

the

course.

A

A

The

job

erase,

sometimes

you

have

many

jobs

that

was

very

similar-

needs

that

so,

instead

of

submitting

ten

of

these

jobs

individually

rather

submit

a

job

array,

contend

those

ten

jobs

and

it's

a

convenient

environment

variable

for

you

to

use

s

alarms

as

slurm

or

a

job

ID.

Then

you

can

have

in

your

plate.

You

know

preparing

events

for

each

of

the

small

jobs,

put

your

input

and

things

in

each

subdirectories.

Then,

when

you

submit

just

one

and

they

don't

want

your

monitor

or

manage,

you

can

still

treat

them.

A

A

A

Variable

time

jobs

because

of

the

batch

scheduler,

we

have

limitations

of

maximum

or

hours.

The

HQs

is

allowed

right

now

is

48

except

some

special

queues,

mostly

48

here,

a

nurse.

So

if

you

want

to

run

multiple

jobs,

this

way

allow

you

to

do

this.

Is

you

could?

If

your

jobs

have

the

check

point

week,

schedule

checkpoint,

restarting

capability,

then

you

could

split

your

jobs

into

smaller

chunks

and

the

variable

times

the

way

to

run

it.

A

You

would

have

lots

of

special

flags

here,

for

example,

for

this

job

I

say

I

say,

for

example,

I

need

my

job

to

run

actually

for

total

of

96

hours

and

and

for

each

of

the

little

chunks,

I'm

okay,

with

as

long

as

it's

two

hours

to

two

hours,

a

truck

like

between

two

hours

and

then

maximum

of

each

individual

job

is

48

hours.

So,

if

I

put

that

in

my

script,

I

may

get

chunks

of

any

time

anywhere

between

2

hours

and

48

hours.

A

A

So

that

you

know,

I'm

also

remembers

how

many

hours

your

jobs

are

already

around

and

then

continued

for

you.

So

there

are

others,

some

of

some

special

scripts

in

there

as

wealth

and

flags.

Here

signal

means,

like

my

job,

would

be

interrupted.

60

seconds

and

I

give

you

at

60

seconds

to

do

the

checkpoint

after

each

little

chunk

of

job.

The

checkpoint

command.

Is

your

script

to

do

checkpointing

or,

if

it

there's

nothing

to

do.

You

can

leave

it

empty

as

well.

Checkpoint

equals

empty

is

fine

and

there's

a

setups

as

script.

A

A

So

with

this

capability,

because

you're

asking

for

time,

minimum

equals

two

hours,

we'd

have

a

special

cure.

That's

it

not

has

to

be

tied

to

the

variable

job,

but

it's

very

beneficial

to

do

it

because

you

add

in

cute

flats

qos.

We

give

you

a

huge

amount

of

charging

discount

seventy-five

percent

now,

so

you

only

try

to

run

a

quarters

of

hours

used

the

because

of

this.

You

know

minimum

time

minimum.

A

You

can

only

ask

for

time

minimum

up

to

two

hours

so

that

these

jobs

like

when

scheduler,

would

try

to

find

lots

of

scheduling

homes.

We

call

them

back

field

house

when

scheduling

those

small

chunks

of

jobs.

It

won't

affect

the

starting

of

bigger

jobs,

so

your

jobs

made

much

easier

to

get

through.

This

is

beneficial

to

you

and

for

us

it's

also,

you

know,

help

us

to

improve

our

system

utilization,

so

flux,

to

ask

to

the

American

time.

Jobs

is

a

good

thing

to

do.

A

I'll

check

out

these

documentation,

please

we

talked

about

over

on

us

earlier

as

well.

When

your

project

zero,

an

active

balance,

you

have

to

sum

you

can't

submit

to

the

Q

over

round.

Q

us

or

over

our

shared,

if

you

want

to

use

shared

nodes,

lowest

priority,

zero

charge,

you

and

you

have

to

do

dash

Q

over

R

explicitly

with

time.

You

also

have

to

have

to

include

time

minimum

of

up

to

four

hours

in

your

script.

A

The

workflow

managed

management

tools

if

I

have

a

large

number

of

similar

jobs

and

if

I,

some

other

you

know,

I

want

some

automation,

I'm

moving,

Dana,

multi-step

processing.

This

is

think

the

tools

you

should

use.

One

such

example

is

I,

have

say

ten

thousand

of

one

core

jobs.

Please

don't

do

for

I

equals.

One

did

one

tends

out.

As

from

my

eight

out

out,

this

is

really

inefficient

and

also

because

of

the

continuous

as

from

with

no

time

space

interval

and

really

overwhelms

our

scheduler.

A

The

few

tools

Cooper

wrote

a

spammer,

a

fireworks,

let

cetera

so

there's

a

talk

or

workflow

to

work.

Those

explicitly

this

afternoon

for

details

course

buffer

is

another

thing

that

for

especially

for

users,

jobs

with

large

IO

need

it's

very

good

for

you.

You

improve

your

IO

backwards,

because

the

these

nodes

are

first

buffer,

are

located

directly

next

to

your

computer's,

with

more

metadata

servers

as

well.

So

there's

also

a

special

talks.

A

This

optional

outburst

buffer

for

usage

examples:

shifter,

is

a

like

similar

compatible

to

the

popular

docker

container,

so

user

can

run

docker

containers

on

earth

systems

which

allows

you

to

use

your

own

custom

software

environment

so

that

you

can

maintain.

You

know

your

you

don't

have

to

reboot

your

applications.

You

can

manage

reproduce

abilities

lots

of

benefits.

We

also

have

a

separate

shift

to

talk

this

afternoon.

A

That's

for

jobs.

Sometimes

you

have

a

big

big

big

production

round,

but

also

your

data

is

not

on

quarry

on

scratch.

There

are

actually

on

tapes

in

the

archive

storage.

So

if

you

put

those

preparing

transferring

data

and

before

and

after

jobs

into

your

large

simulation

ground,

it's

really

cost

to

you

lots

of

allocation

hours.

So

the

way

we

we

created

this

expert

QoS,

which

actually

runs

on

some

of

the

logging

nodes.

That's

why

it's

on

a

different

scale,

our

scheduler

named

es

quarry.

A

So

you

have

to

add

capital

M,

yes,

quarry

in

your

batch

scheduler

batch

script,

then

you

could

run

regular

etched

or

HSI

stuff

to

get

your

data

to

your

art,

to

the

location

of

the

quarry

local

file

systems

and

few

later

on,

you

actually

want

to

monitor

those

jobs.

You

would

also

Marge

module

load,

yes

quarry

and

then

do

the

regular

and

queue

monitor

on

scripts

to

see

these

jobs.

A

A

No

actually,

there's

been

a

few

answered.

Thank

you.

We

go

always

the

affinity

and

their

process

thread

and

memory

infinity.

So,

as

I

mentioned,

the

affinities

is

actually

because

of

the

where

your

memories

with

your

memory

is

relative

to

your

physical

core

with

your

and

threads

are

CPUs.

Are

it

will

affect

your

job

performance,

so

process

memory

affinity

means

how

to

find

your

MPI

tasks.

Cpus

cement

memory,

a

thread

affinity,

means

how

two

bytes

words

two

CPUs

allocated

to

each

of

the

MPI

process

in

memory.

A

Panini

means

how

to

allocate

memory

from

specific

Numa

domains

and

with

the

correct

affinity.

It's

it's

a

low-hanging

fruit

as

I

understand

it,

but

also

without

it.

Your

performance

could

hurt

many

many

phones.

So

it's

it's

it's

existential

to

do

this

as

also

it's

the

base

for

you.

If

you

want

to

do

further

performance

optimizations,

we

also

want

to

promote

OpenMP

standard

settings

such

as

om

teep,

rockbind

ont

places.

Instead

of

some

of

the

vendor

specific

settings,

there's

a

inhale

setting.

There

are

three

settings

that

we

try

to

promote

our

standard

settings.

A

There's

a

page

docs

in

the

documentation,

jobs,

slash

affinity

with

lots

of

details

and

best

practice,

lists

and

explanations.

There.

Please

check

it

out.

So

what

if

I

just

draw

a

naive

as

well,

especially

saying:

okay,

no

nodes

around

sixteen

MPI

tasks

and

eight

open,

and

please

read

on

sixty

eight

core.

Ok,

now

I

do

set

my

own

piece

rats

I

do

some

of

my

oil

and

cheese

settings.

Then

I

just

want

and

and

16

by

executable

it.

It

turns

out,

it's

really

a

mess.

A

It

gets

you

the

threat,

zero

on

rank,

zero

and

spreads

we're

on

rank

one,

the

they

are

overlapping

on

the

same

physical

core.

So

these

numbers

are

the

the

the

numbering.

I

showed

you

earlier

so

zero

and

zero

on

the

same

physical

core,

of

course,

and

144

is

physical,

core

eight

and

a

lot

of

it

again,

but

basically

without

the

other

C

and

their

CPU

bind

beginning

list

yourself

into

completely

overlapping

MPI

rack

ranks

on

the

same

course,

which

is

bad.

A

So

the

reason

is

because

68

is

not

divisible

by

number

of

MPI

tasks,

which

is

sixteen

here.

So

what

we

do

is

we,

let's

say:

let's

make

it

more

even

divisible

by

wasting

four

cores

so

now

we're

dealing

with

only

64

cores

and

with

64

cores.

We

have

64

times

4,

which

is

256,

logical,

CPUs,

and

then

you

can

just

distribute

these

250

logical,

CPUs

too

young

above

tasks

per

node,

which

is

16.

In

this

case,

then

you

do

n,

16,

C

16.

A

Here,

n

16

is

16

MPI

tasks

per

node,

such

C

16

is

16

CPU

logic,

Oct

16,

not

casillas

per

MPI

tasks,

then

you're

also

adding

the

CPU

bind

equals

course

was

that

it's

now

you're

spreading

out

your

tasks

and

threads

so

I

have

this.

How

it

shows

that

it

spreads

out

so

an

MPI

rank

0

is:

are

these

4

physical

cores

and

there

are

8

OpenMP

threads?

Therefore,

here

and

four

here,

these

numbers

are

the

logical

cores

on

the

same

physical

core.

So

six

to

eight

136

204

is

on

the

same

physical,

core,

zero.

A

A

So

here's

an

example

of

the

course

of

68

course

here

and

in

this

example,

we

have

64

mph

as

per

node

I'm,

asking

for

two

nodes

and

128,

so

64

open

MPI

tasks.

Each

has

4

logical

cores,

so

M

0

is

on

this

core

0,

because

for

our

logical

cpus

and

with

my

own

Kannamma

stress,

equals

4.

Also

the

binding

I'm

getting

the

threads

also

find

nicely

on

each

of

the

logical

cores.

A

There's

a

few

ways

to

check

affinities

we

buy.

We,

we

actually

compiled

some

the

binaries

for

the

Intel

compiler,

to

check

how,

if

you

want

to

check

on

curry

for

the

NPI

course

codes

or

for

hybrid

codes

for

different

for

different

compilers,

for

example.

So

what

you

do?

Is

you

replace

this

little

binary

with

replace

your

own

application

excusable

with

this

little

binary

and

run

it

and

I'll?

Tell

you

your

affinity

so

like

in

your

XK?

A

In

this

case,

you

have

32

mph:

ask

you

can

check

whether

MPI

tasks,

the

affinity

settings,

are

correct

because

with

this

s

wrong,

you

also

have

all

these

s.

Patch

flies

basically

will

need

to

check

if

your

s,

patch

flags

and

along

with

this

s,

wrong

option

is

giving

you,

whatever

the

correct

affinity,

you

can

get.

A

Also,

we

do

have

a

JavaScript

generator

you

can

put

in

which

some

of

the

parameters

for

your

job

and

it's

available

on

miners

gov

under

jobs,

job

script

generator,

you

know,

give

you

a

template

of

your

JavaScript

and

you

can

just

add

more.

You

know

other

things,

email,

your

output

or

you

know,

change

your

job,

executables,

etc.

So

this

is

also

very

good

for

you,

so

on

to

monitoring

jobs,

the

jobs

in

jobs

are

scheduled

by

your

priority

and

other

combination.

How

many

jobs

you

some

in

it?

A

Because,

for

example,

you

submit

a

siren

jobs,

it

would

not

block

the

other

user

after

you

submit

one

job.

There

are

a

few

commands

to

use.

I'm

gonna

have

some

slides

about

these

and

then

there's

on

the

web

minor

stuck

up.

You

can

check

Cori,

cues

and

curry

backlogs

and

thank

you

wait

times.

I

think

Steve's

shoulders

earlier,

there's

also

life

status

and

in

the

iris

you

can

also

check

click,

the

jobs

tab

and

you

can

see

all

your

own

jobs

and

how

much

each

job

was

charged.

A

The

nurse

has

a

custom.

Sq

wrapper,

it's

called

sqs

I've

seen

the

transition

right

now.

So

it's

currently

you

going,

you

see,

you

have

sqs

and

SQS

they're.

Pretty

similar

sqs

has

some

you

know

scheduled

start.

We

decide

not

to

use

this

anymore,

because

I've

also

configuration

tuning

a

nurse.

It's

not

needed

anymore,

but

also

the

sqs.

A

We

are

trying

to

recommend

we're

trying.

We

are

I'm

just

going

to

rename

it

to

sqs

in

July.

If

it's

it's,

no,

no

no

issues

reported.

It

is

more

flexible.

It

takes

all

the

allowed

flags

in

the

native

sq

and

you

can

also

put

in

we.

We

gave

you

a

default

option,

but

you

can

override

it,

and

so

it

is

more

flexible.

A

It

won't

implement

everything

else

in

SQL,

some

of

the

things

you

can

use

other

a

strong

command

to

achieve

that,

but

with

sqs

being

flexible

is

the

goal

and

simple,

simple

inflexible

is

I'll:

go

for

go

I'm,

going

storm

monitoring

as

control.

You

can

there's

a

one

particularly

useful

command

as

control

show

job

and

your

job

ID.

So

if

you

have

made

multiple

jobs

in

the

queue

you

want

to

remind

yourself

which

each

job

is

in,

which

that

command

you

can

find

out

which

queue

I

submit

to

you.

A

When

did

you

submit

and

where

is

the

job

submit

from

and

your

command

is

your

job

script?

This,

which

is

useful

s,

account,

is

for

checking

your

jobs,

complete

jobs

and

also

jobs

current

in

the

queue

and

you

can

put

in

lots

of

flag

start

time,

end

time

and

some

of

the

flags.

You

interested

promise

interesting

to

find

out

all

your

job.

A

Yeah

so

now,

I'm

gonna

talk

about

some

best

practices

for

running

jobs.

So

first

question

is:

where

should

I

run

my

jobs,

which

you

know

has

world

okay

now

and

which

QoS

etc?

So

the

factors

to

consider

is

whether

how

long

each

wait

time

is.

You

know

like

it

current

about.

If

you

check

the

backlogs

up

in

on

my

nurse

you'd

see,

backlog

on

Cana

is

much

smaller

than

our

house

walk,

especially

kay.

Now

is

four

times

bigger

than

house.

A

Well,

and

also

you

want

to

check

the

you

know

your

own

throughput,

depending

on

what

type

of

job

submitting

and

you

want

to

check

on

the

charging.

Okay

now

is

cheaper

per

node

wise,

then

house

well,

and

also

there's

a

large

jobs

discount

for

1024,

then

flux

and

low.

Also

is

discounts

are

only

also

available

on

KL,

on

the

other

hand,

shared

and

real

time.

Real

time

is,

by

special

permission

by

shared

it's

only

available

on

house

well

nodes.

A

The

these

are,

this

is

the

current

queue

policy

you

can

see,

which

is

a

list

up

qos

and

there

are

maximum

nodes

allowed

maximum

or

time

allowed

how

many

jobs

you

can

submit

up

to

this

number

when

it's

wrong

time

limit

no

limit

means

in

some

exchanges,

running

jobs

and

those

their

relative

priorities

and

they're

charging

factor.

Most

of

them

are

one

on

our

house

wall

and

premium

is

two

here.

A

A

The

charging.

How

do

you

charge

the

unit?

Is

nurse

hours

and

different

architectural

charting

factor?

The

QoS

charging

factor

and

little

discount

here

I

give

an

example

it

charged

by

the

hours

used

by

your

job,

not

by

the

wall,

clock,

request,

time

and

charged

by

entire

duration

of

the

all

the

nodes

you

allocated

for,

even

if

you

don't

use

them

so

no

mundos

time

time

times,

chart

architecture

charge

factor

time,

skewers

charge

factor

is

what

is

charged

for.

A

Job

scheduling

I

mentioned

job

it.

They

are

not

first

in

first

out.

What

are

some

considerations

are.

What

are

how

we

scheduling

here.

First,

just

to

mention

everything

is:

do

you

know

we

do

constant

monitoring

and

tuning

for

best

utilization

and

also

for

the

fairness

for

the

product

for

the

users,

the

each

type

has

its

priority

value

by

mostly

by

which

QoS

is,

and

by

job

age

and

very

small

area

of

fair

share.

The

two

schedule:

when

is

the

main

scheduler,

the

other

ones,

called

backfield

scheduler.

A

So

every

few

minutes,

when

scheduled,

were

checked

the

jobs

and

scheduled

them

in

order

of

this

priority

and

for

a

few

days

to

into

the

future,

not

too

long,

because

it

takes

too

long

to

schedule,

but

also

it's

dynamic.

If

you

schedule

too

far

away

too

far,

it's

not.

It's

not

gonna

be

useful

because

it's

gonna

change

and

we

just

have

a

thrush

prior

prior

to

threshold.

The

jobs

below

that

will

wait

and

the

scheduler

won't

consider

those.

A

Yet

until

they

age

until

they

reach

the

threshold,

there

will

be

considered

for

scheduling,

there's,

also

a

policy.

We

do

only

consider

two

jobs

per

queue

as

per

user,

so

if

you

have

like

ten

jobs

in

regular,

you

know

only

schedule

two

of

them

until

these

two

starting

to

run,

then

you

can't

the

other

two

will

be

out

consider

for

scheduling.

On

the

other

hand,

the

backfield

scheduler

doesn't

have

any

of

these

restrictions.

A

What

it

does

is

comes

in

and

check

when

the

all

these

the

main

scheduler

has

scheduler

jobs,

and

there

are

some

holes

that

would

allow

opportunities

for

some

smaller

and

shorter

jobs

to

start

the

backfield

scheduler

or

fill

those

holes

and

run

them

as

well

as

the

starting.

The

in

small

jobs

won't

affect

start

time

of

the

jobs

already

scheduled

by

the

main

scheduler,

so

some

tips

to

get

better

throughput.

A

Obviously,

if

your

job

is

shorter

and

smaller,

it's

easier

to

get

scheduled

so

just

consider

if

you

can

break

up

your

lawn

jobs,

use

variable

time.

Do

that

and

you

can

submit

your

chores

before.

Mention

is

because

there's

a

the

larger

jobs

long

longer

jobs,

especially

for

gable,

just

want

to

be

able

to

start,

and

if

you

are

able

to

use

last

us

with

time

minimum

it's

easy,

especially

easier

for

your

jobs

to

be

scheduled

also

to

not

request

more

time.

A

If

your

job

ask

from

compelled

the

maximum

or

time

there's

never

a

chance

to

be

backfield

on

some

of

the

large

jobs

consideration

its

it

takes

time

for

your

job

attitude,

rules,

input,

etc

to

be

staged

on

the

compute

node

to

start

to

round.

So

one

of

the

tip

is

to

do

SB

cast

and

cast

your

excuse

was

on

to

the

compute

nodes.

Then

us

found

that

executable

directly,

which

will

be

faster.

You

can

even

s

back

at

your

input

files

as

well.

A

There's

a

no

link

to

check

this

out

and

also

we

would

like

to

recommend,

if

you

you

prefer,

you

can

be

statically

to

run

large

jobs.

The

reason

is

because

the

shared

libraries

goes

through

an

extra

layer

of

software

called

DVS

to

access

through

master

file

system

and

also

everything

running

from

the

global

file

system,

your

home

directory,

your

community

file

systems,

even

and

also

through

the

DVS.

So

if

you

and

to

access

the

own

computers,

so

basically,

if

you're

thinking

about

your

application

is

statically

in

you

know,

I

don't

avoid

these

a

dynamic

library.

A

A

Now,

although

it

although

high-pass

WAP

I,

need

it

to

run

on

binary,

stay

Rama,

okay,

now

we

disap

recommend

you

build

separately

for

Carol

and,

let's

say

running

out

from

global

homes

is

strongly

discouraged

because

IO

is

not

optimized,

it

needs

to

access

the

on

computers

with

DVS

and,

and

so

it's

slower,

then

scratch

and

once

I

hold

over

homers

is

busy.

Isn't

it

will

cause

negative

impact

for

other

users

even

doing

an

LS

or

an

bi

and

slow?

It

can

slow

down

the

system

there's

a

place.

A

You

can

put

also

put

it

up

and

consider

to

put

your

projects

shared

library

into

a

global

common

software.

We

mounted

right

being

only

on

compute

nodes,

so

nobody

is

writing

into

it

on

compute

nodes.

It

makes

it

faster,

cheat

your

application

and

for

a

lot

lots

of

large,

similar

number

of

similar

jobs

and

workflows.

You

should

consider

to

adopt

workflow

tools

for

better

managing

your

jobs.

A

More

information

is

talks,

there's

curve,

slash

jobs,

there's

also

another

different

set

of

documentation.

I'm

not

covering

in

this

talk.

It's

special

for

quarry

GPU

nodes.

Some

users

may

have

access

to,

but

it's

not

for

all

users

at

all.

It's

only

various

one

of

them

users,

but

if

you

do

need

to

run

GPU

nodes

on

the

chip,

he

knows

check

out

this

documentation.

And

finally,

if

you

have

questions

you

always

welcome

to

fire

or

service

ticket,

we

are

help

portal

help

toddlers

go.