►

From YouTube: NERSC's Ten Year Plan

Description

NERSC's Ten Year Plan, Sudip Dosanjh, NERSC Director

A

Everybody

to

guess

is

technically

the

third

day

but

day

two

of

nug

2014

start

off

today

with

nurse

director,

sudeep

dosanjh,

sudeep

and

says

that

is

rector

of

nurse.

Previously

he

had

an

extreme

scale

computing

at

Sandia

National

Laboratories

was

code

directory

of

Los

Angeles

sandy

Alliance

for

computing

extreme

awesome.

A

B

Okay,

thank

thank

you

Richard

night.

Thank

you.

All

for

coming,

I

was

heading

back

from

DC

last

night

and

my

flight

got

canceled

and

I

told

him

well,

I've

got

to

make

it

back

no

matter

what

so

I

was

happy

to

get

on.

Another

flight

I

was

scrunched

in

the

middle

seat

for

about

six

hours,

but

I

was

happy

too

happy

to

arrive

here

in

time

to

make

it

here

and

meet

all

of

you

I

guess.

You

know

this

is

a

very

special

meeting

for

us.

B

This

is

kind

of

the

kickoff

as

you've

heard

for

a

40th

year.

Our

anniversary,

and

we

do

have

some

faces

from

the

past

who

have

joined

us.

So

michael

mccoy

is

here

and

he

was

a

heavily

involved

with

with

nurse

get

Livermore

when

it

first

started,

and

you

were

the

deputy

director

for

a

while

at

the

end,

okay

and

then

where's

bill

Kramer,

it

bill

step

out

so

bill.

Kramer

is

here

and

and

and

so

he

certainly

has

a

very

long

history

with

nurse

Caswell

when

once

it

was

at

Brooke

Leigh,

so

so.

B

B

B

So

I

just

wanted

to

give

you

kind

of

an

overview

of

kind

of

where

we're

at

with

Narcy

and

where

we're

headed

over

the

next

over

the

next

the

next

decade,

and

so

as

I

mentioned

you

know,

nurse

was

established

originally

at

livermore

in

1974.

So

so

2014

will

write

it

right

at

40

years

it

had

a

long

history.

A

lot

happened

while

it

was

at

Livermore

in

in

96

it

moved

to

to

berkeley

lab

there's.

Also

a

name

change

became

the

national

energy

research

scientific

computing

center.

B

A

lot

of

our

if

you

notice

these

are

just

kind

of

highlights,

but

but

one

of

the

notable

things

that

was

going

on

while

nursed

moved

to

deburr

play

was

the

transition

from

vector

supercomputers

to

massively

parallel

computers,

and

that

was

actually

a

very,

very

challenging

time

for

a

lot

of

the

users

at

nurse.

Certainly

we

have

lots

of

even

back,

then

there

were

lots

and

lots

of

users

and

lots

of

codes

and

managing

that

transition.

That

was

actually

a

you

know.

B

Very

major

transition

for

the

for

the

community

was

was

definitely

a

challenge

and

and

in

some

ways

we're

facing

kind

of

a

similar

challenge.

Now,

as

we

go

to

multi-core,

we

have

again,

we

have

lots

of

users,

and

so

we

can't

perhaps

make

a

very

radical

shift,

but

we

recognize

that

we

need

to

start

making

a

shift

with

our

next

generation

systems.

B

And

so

so

we

do

right,

like

in

kind

of

the

you

know,

kind

of

what's

going

on

now,

with

the

move

to

multi-core

and

perhaps

a

new

programming

model

and

and

the

changes

that

are

going

to

be

required

in

the

software

to

what

we

had

successfully

managed

back

in

the

late

90s.

And

of

course

there

are

lots

of

lessons

learned

from

then

as

well

that

we

can

apply

to

now.

B

We

deployed

a

facility

wide

file

system

in

2006

and

we

started

a

collaboration

with

the

joint

genome

Institute.

So

we

provide

all

the

computing

for

jgi,

and

so

so

I'll

talk

more

about

this.

But

there's

been,

you

know,

there's

lots

of

talk

about

big

data,

but

we

deal

with

lots

of

big

data

at

nurse

as

well.

It's

more

scientific

data.

So

it's

it's

not

the

type

of

data

that

Google

deep

deals

with,

but

it's

still

very

large

quantities

of

data

that

we're

we're

dealing

with

now.

B

So

we

do

collaborate

with

computer

companies

to

deploy

advanced

HPC

and

data

resources.

I

was

at

as

Richard

mentioned.

I

was

at

La

Salle

with

Sandia

and

Los

Alamos

working

on

CL

0

it.

At

the

same

time,

nurse

was

working

on

hopper

and

those

were

sister

systems

that

were

deployed.

At

the

same

time

they

were

the

first

crepe

petascale

systems

with

a

Gemini

interconnect

right

now

we

have

we

have

accepted

and

we're

going

to

have

a

dedication

later

on

for

Edison

and

that's

the

first

Cray

petascale

system

with

Intel

processors,

aries

interconnect

and

dragonfly

topology.

B

It

was

a

serial

number

one

system

for

the

HP

CS

program

right

now,

we're

working

with

with

aces

again

they're

going

to

deploy

Trinity

in

the

2015-2016

time

frame

and

we'll

be

deploying

a

sister

sister

system,

nurse,

Cade

and

and

those

are

being

jointly

designed

on

ramps

to

exascale.

I'll

talk

a

little

bit

about

that

and

then

we've

architected

and

deployed

data

platforms,

including

the

largest,

do

a

system

focused

on

genomics.

So

with

all

of

these

you

know

these

are

leading

edge

systems.

There's

always

lots

of

challenges

when

you're

deploying

systems

like

this.

B

B

They

allocate

their

base

and

submit

proposals

for

over

target

so

and

then

the

deputy

director

of

science

actually

prioritizes

these

over

target

regret

requests.

So

so

we

directly

support

office

of

sciences

mission.

Each

of

the

center's

also

has

a

ten

percent

directors

reserved,

but

but

ninety

percent

of

our

resources

really

get

allocated

through

the

nurse

program.

The

other

point

is

that

that

our

usage

really

shifts.

So

you

know

we

we

we

can't

pick

our

users,

you

know

we

have

to

serve.

The

people.

B

Were

selected

as

users

and

then

the

usage

really

shifts

as

deal

we

priorities

change.

So

if

you

choose,

if

you

see

changes

in,

do

a

strategic

plan

that

directly

affects

the

problems

that

are

run

at

risk,

and

so,

if

you

look

at

the

changes

from

2002

to

2012,

one

thing

that

we

noticed

was

that

there's

so

in

blue

is

2012

and

in

red

is

in

2002

and

what

you'll

see

our

increases

and

things

like

material

science,

chemistry,

climate

research,

Biosciences

earth

sciences.

B

We

really

focus

on

the

the

scientific

impact

of

our

users,

so

this

is

you

know.

This

is

a

really

bragging

about

all

you.

It's

so

it's

easier

to

do.

I

was

having

a

hard

time

bragging

about

what

we're

doing,

but

this

is

a

bragging

about

our

users

and

we

really

enable

an

amazing

breadth

of

science.

We

have

typically

about

1500

journal

publications

per

year.

More

than

10

journal

cover

stories

per

year

there

were

17

this

past

year

there

were

three

recent

Nobel

Prize

winning

projects

that

use

nursing

physics,

magazines,

2013

breakthrough

of

the

year

users.

B

Resources

to

identify

the

first

high-energy

cosmic

neutrinos,

finding

earth-like

planets

are

not

uncommon,

was

recognized

by

Wired

magazine

as

a

top

scientific

discovery

and

covered

in

the

New

York

Times

MIT

researchers

developed

a

new

approach

for

desalinating

sea

water,

and

that

was

one

of

Smithsonian

magazines.

Fifth,

surprising

scientific,

milestone

of

2012

for

of

science

magazines.

Last

insights

of

the

last

decade,

three

in

genomics

and

one

related

to

Cosmic

Microwave

Background,

those

were

all

enabled

by

nurse

resources,

help

enable

those,

and

so

so,

as

I

mentioned

there

were

17

journal

covers

in.

B

B

Just

got

a

new

Edison

t-shirt,

but

but

I

have

a

hopper

t-shirt

now

its

kind

of

falling

apart,

so

I

can't

do

it

anymore,

but

every

time

I've

gotten

on

an

airplane

with

my

hopper

t-shirt

I've

had

someone

approached

me

saying:

oh,

do

you

work

at

nurse,

and

so

so

it

has

always

been

done

so

so

I

guess.

Maybe

that

means

our

users.

Not

only

do

we

have

a

lot

of

them,

but

they

seem

to

fly

a

lot

they're

geographically

distributed.

We

have

47

states

so

in

dark,

blue

or

states

with

over

100.

B

So

we

have

many

states

that

have

over

100

users

there's

lots

with

with

over

50.

So

it

really

is

a

nurse

really

is

a

national

resource

and

there's

a

guy

could

have

also

over

late

late,

an

international

map,

and

so

we

get

lots

of

users

from

really

all

around

the

world.

We

have

lots

of

international

projects,

and

so

so

it

really

is.

It's

amazing

to

see

who's

logged

on

every

every

day.

So

so

one

of

the

consequences

of

this

is

that

we

do

have

a

very

diverse

workload.

B

So

we

have

lots

of

users,

but

we

have

over

600

different

codes

and

and

algorithms

shown

in

the

upper

right

or

is

kind

of

the

breakdown

of

the

for

algorithms,

and

so

the

slices

are

the

codes

and

overlaid

on.

There

are

the

different

algorithm.

So

you

can

see

that

that

lots

of

people

do

fusion

pick

codes,

lattice,

qcd

density,

functional

theory,

climate

molecular

dynamics,

quantum

chemistry,

so

there's

lots

of

different

different

applications

and

algorithms

that

we

need

to

support.

B

B

So

so

you

do

have

to

be

capable

of

running

at

scale,

but

then

we

also

have

to

be

able

to

support

what

we

call

high-throughput

computing,

these

these

massive

massive

numbers

of

smaller

simulations

for

statistics

or

ensembles

that

people

need

to

do

and

we

want

to

be

able

to

do

that.

You

know

seamlessly

so

that

you

don't

have

to

go

to

a

different

system

just

depending

on

the

size

of

the

job

that

you

want

to

run.

You

know

our

operational

priority

is

providing

highly

available.

B

Hpc

resources

with

really

exceptional

user

support

will

try

to

maintain

a

very

high

availability

of

users.

So

so

we

we

do

chart

the

satisfaction

rating.

You

all

have

probably

gotten

an

email

for

me

asking

you

to

complete

our

user

surveys,

but

we

do

track

those

very

seriously.

We

look

at

all

the

issues

if

you

write

down

something,

someone

actually

will

go

and

look

at

it

and

we'll

we'll

try

to

figure

out.

B

What's

what's

going

on

so,

if

you're

having

issues

you

know

you

can,

you

can

call

directly

or

you

know

directly,

but

but

the

user

survey

is

actually

a

very,

very

useful

resource

for

us.

In

figuring

out

what

help

you

need,

we

also

want

to

maximize

the

productivity

of

our

users.

We've

had

some

conversations

with

a

date

good

one

hour

program

that

that

nurse

staff

has

actually

been

at

about

the

same

number

of

ftes

for

a

long

period

of

time,

and

and

during

that

time

our

number

of

users

has

grown

very

dramatically

and

so

shown

on.

B

The

left

is

the

number

of

users

and

and

and

the

the

red

line

is

the

users,

and

you

can

see

this

very

dramatic

increase

from

2000

to

where

we

were

perhaps

around

a

thousand

to

close

to

5,000

now,

and

so

we've

done

that

with

roughly

the

same

number

of

staff,

and

so

so

one

of

the

keys

here

is

is

keeping

the

number

of

tickets

per

user

down,

and

so

so.

This

is

a

very

important

metric

for

us.

B

So

we

we

have

to

have

a

plan

to

if

you,

if

you

generate

a

ticket

for

us

we

within

three

days.

We

have

to

have

some

kind

of

plan

for

trying

to

resolve

that,

and-

and

so

if

we

get

really

high

in

the

number

of

tickets

for

users-

and

we

have

more

and

more

users,

you

can

see,

we

can

really

very

easily

get

dwarfed

and

so

keeping

this

down.

So

we

threw

a

lot

of

hard

work

through

the

user

services

group.

B

B

A

that's

a

huge

change.

That's

really

been

necessary

necessary

by

this

dramatic

growth

in

the

number

of

users,

and

so

how

will

we

done

that?

So,

as

I

said,

you

know,

we

work

very

hard

to

make

the

systems

as

reliable

as

possible.

We

also

try

to

have

training

as

much

training

as

we

can

do.

We

try

to

have

very

useful

web

pages.

People

work

really

hard

on

those.

B

They

understand

that

if

you

don't

put

something

in

the

web

page

that

that

you're

going

to

get

a

phone

call

or

you

going

to

get

lots

of

phone

calls,

and

so

so

we

try

very

hard

to

do

things

that

are

scalable,

and

this

is

going

to

be

some

some.

This

has

to

be

part

of

our

strategy

also

for

moving

to

multi-core,

because

we

have

so

many

users.

We

have

to

do

things

that

are

scalable,

so

we

can't.

B

B

So

if

we

look

at

nurse

today,

this

is

the

these.

Are

the

large

systems

we've

just

just

deployed

Edison

we're

going

to

have

the

dedication

for

Edison

and

I

talked

a

little

bit

more

about

that.

We

have

hopper.

We

have

a

number

of

production

clusters

viz

and

analytics

data

transfer

nodes

all

connected

by

by

very

high

speed

networking.

We

have

a

local

scratch

on

both

of

our

large

systems,

and

then

we

have

a

global

file

system

as

well

as

well

as

archival

storage.

We

just

went

to

100

gigabit

connection

to

the

outside.

B

So

this

just

shows

the

large

systems

within

within

Office

of

Science.

Currently,

as

I

said,

we

always

have

to

at

the

same

time.

So

when

we

bring

on

nurse

aide

will

will

have

retired

hopper

by

that

time,

and

at

that

time

our

systems

will

be

north

gate

and

end

and

Edison.

But

we

really

I

was

actually

out

of

meeting

we're

talking

about

redefining

the

Linpack

benchmark,

but

we

really

don't

focus

on

the

top

line.

Peak

flops.

B

We're

really

focused

on

scientific

productivity

and

deploying

systems

that

are

that

are

useful

to

all

of

you

and

what's

happening

partially.

Is

that

the

benchmark

that

people

use

that

lint

linpack?

Even

the

developers

recognize

that

it's

becoming

more

and

more

divergent

from

the

everyday

applications

that

we

see?

B

So

we

really

focus

on

other

things,

and

one

of

the

things

we

focus

on

is

is

its

memory

having

lots

of

memory

per

node

lots

of

memory

bandwidth

for

node,

more

and

more,

the

performance

of

applications

is

limited

by

your

your

ability

to

transfer

data,

not

not

as

much

as

as

your

ability

to

compute

people

will

say

that

that

floating

point

operations

are

for

free

and

that's

because

you're

often

just

waiting

waiting

for

for

data

to

arrive.

So

you

may

you're

lucky.

B

You

know

if

you

do

a

calculation,

every

eight

or

ten

clock

cycles,

you're,

probably

actually

doing

pretty

well

so

so

so

we

work

very

hard

to

make.

The

the

in

collaboration

with

Cray

and

Intel

the

memory

bandwidth

is

per

per

node

is

very

high.

The

peak

bisection

bandwidth

of

the

new

interconnect

that

Cray

is

deployed.

B

B

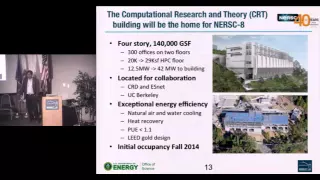

So

if

you,

if

you

drove

up

or

came

up

in

the

shuttle,

you

probably

saw

our

new

you

building

and

you

probably

have

heard

something

about

it,

but

but

that

CRT

on

the

top.

That's

the

artist's

rendition,

and

this

is

a

little

bit.

It's

there's

been

every

every

few

days.

You

see

significant

progress,

but

but

it's

looking

more

and

more

like

a

building,

it's

pretty

imposing

as

you

look

up.

B

The

big

thing

for

us

is

that

it's

going

to

give

us

a

lot

more

space

for

the

systems

and

a

lot

more

power,

and

the

other

thing

is

that

it

will

bring

nurse

back

to

currently

we're

in.

For

those

of

you

visited

us

we're

in

downtown

Oakland,

so

it

will

bring

us

back

onto

the

hill

and

so

will

be

co-located

with

the

research

division

and

with

es

net

and

and

we'll

have

certainly

be

much

closer

to

UC

Berkeley.

B

So

so

there'll

be

a

lot

of

collaborations

that

this

this

will

foster,

but

it

will

also

give

us

lots

of

floor

space

for

44

nurse

gate

and

nurse

9.

The

building

is

being

designed

for

exceptional

energy

efficiency,

so

it's

natural

air

and

water

pooling

there

are

no

no

chillers

we're

trying

to

do

heat

recovery.

The

of

course

you

don't

get

I.

Guess

we

in

California

we

get

cold

when

it's

50

degrees,

but

but

we

can't.

B

So

we're

going

to

try

to

make

it

as

as

painless

as

possible

for

all

of

you,

you

can't

make

a

move

of

the

size

without

some

disruption.

We

work

very

closely

with

some

of

the

folks

from

Livermore.

Actually

who've

done.

Some

similar

moves

to

understand

some

of

the

issues

that

that

we

might

be

facing

as

we

as

we

make

this

change.

B

We're

going

to

move

the

systems

and

phases,

hopper

will

stay

actually

in

in

oakland

and

will

be

retired

there,

but

Edison

will

move

and

will

deploy

nurse

gate

in

the

in

the

new

building.

So

Edison.

You

know

we're

trying

to

keep

it

down

for

as

little

time

as

possible,

and

so

so

for

the

smaller

clusters.

You

know

we

can.

We

can

probably

do

the

moves

in

about

two

weeks.

B

So

we've

been

working

very

hard

on

on

nurse

eight,

you

know

Edison,

we

made

a

decision

not

to

deploy

GPUs

within

Edison

would

have

given

them.

A

much

higher

peak

would

have

done

much

better

on

linpack,

but

are

you

know

for

a

majority

of

our

users?

It

didn't

look

like

all

of

our

users

were

quite

ready

for

that

jump.

We

do

think

that

in

the

2015-2016

time

frame,

there's

been

lots

of

work

done

and

I

think

people

are

better

poised

to

make

the

leap

we've

identified.

B

People

who

we

need

to

work

with

and

I

think

it

really

is

necessary

to

you

know,

show

some

some

data

on

that

later,

but

I

think

it

really

is

necessary

to

make

this

change

to

multi-core

and

and

more

energy-efficient

architectures.

So

the

mission

need

for

for

nurse

gate

is

actually

the

users

need

is

much

higher,

but

we

establish

the

mission.

Need

is

at

least

10

*

hopper

on

on

a

representative

set

of

do

ii

benchmarks,

but

but

we

would

like

to

get

that

on

real

deal.

B

We

applications

we

do

need

to

provide

very

high

bandwidth

access

to

existing

data.

There's

lots

of

data

already

stored

here,

and

so

so

so

northgate

needs

to

integrate

within

that

environment

very

seamlessly

and

I

said

it.

As

I

said,

we

need

to

have

a

plan

to

begin

transitioning

our

user

base,

so

this

should

actually

not

say

not

yet

known.

It's

just

not

public.

B

So

we're

hoping

we'll

be

able

to

announce

this.

We

have

an

independent

project

review

later

this

month,

and

so

our

hope

is.

This

was

a

joint

project

with

Los

Alamos

and

Sandia

on

Trinity.

So

it

doesn't

really

make

sense

for

one

of

us

to

announce

it,

because

everyone

will

know

what

the

other

system

is,

and

so

so

we're

going

to

try

to

do

a

joint

announcement,

hopefully

in

early

March-

and

it

looks

like

we're

on

track

for

that.

B

So

so

at

one

of

the

one

of

the

telecoms

in

March

or

April

will

certainly

give

you

all

a

lot

more

detail

about

what

what

we

can

say

about

nurse

gate.

It's

still

going

to

be

an

early

announcement

of

the

technology,

and

so

we

need

to

work

with

the

technology

providers

well,

to

figure

out

exactly

what

we,

what

we

can

say,

but

I

think

it

will

be

evident.

I

mean

I.

Think

people

understand

what

what

changes

that

that

need

to

be

made

to

begin

preparing

for

nurse

gate

and

really

no

matter

what

the

technology

is.

B

There's

a

lot

of

similarity

in

all

the

systems

and

really

multiple

levels

of

code

modification

may

be

necessary.

You'll

need

to

expose

more

on

node

parallelism,

increased

application

vectorization

if

there

was

a

coprocessor

architecture

than

you

have

worried

about

locality

directives,

if

there's

some

local

scratch

or

scratch

path

than

then

something

you'd

have

to

look

at.

As

far

as

how

do

you

most

effectively

use

that?

Can

the

compiler

just

make

use

of

that?

Or

do

we

need

to

add

code

directives

to

be

able

to

effectively

use

that?

B

So

this

is

something

that

will

be

will

be,

as

I

said,

we'll

be

talking

to

you

more

about

this

just

shows

notionally

what

we're

looking

at

we're.

Looking

at

a

system

that,

in

terms

of

system

peak,

would

be

20

to

40

pedo

flops.

The

system

memory

would

be

around

a

petabyte.

A

lot

of

this

depends

on.

There

are

also

lots

of

budget

lots

of

options

in

the

contract,

so

so

part

of

this

is

to

be

a

little

bit

fuzzy

on

what

the

system

is,

but

part

of

it

is

also

that

there

are.

B

There

are

options

to

make

make

changes,

because

again

this

is

still

early,

and

so

so

we

don't

exactly

know

what

Congress

is

going

to

do

over

the

next.

The

next

the

next

couple

of

years,

the

note

performance

will

be

up

by

you-

know

something

like

a

factor

of

five

we're

hoping

to

see

some

increase

in

node

memory,

bandwidth,

you're,

you're,

probably

going

to

you

know

the

higher

number

would

be

is

if

there's

some

some

scratch

pad

or

some

some

some

memory.

B

That's

on

package

we're

going

to

see

a

lot

more

concurrency

in

terms

of

system

size.

We

will

see,

you

know

some

increase,

it's

not

going

to

be

a

huge

increase

in

the

number

of

nodes.

The

main

thing

you're

going

to

see

is

a

lot

more

node

concurrency

increasing

the

node

interconnect

connect

bandwidth

in

this

time.

Time

frame

is

really

a

chat.

Is

it

is

a

challenge,

so

it

may

actually

be

nurse

night

or

you

see

really

a

big

big

boost

in

this,

but

but

we

are

working

to

see

if

we

can

improve

that.

B

So

so,

we'll

need

to

use

a

number

of

different

approaches

to

begin

preparing.

The

user

community

we're

having

with

the

vendor

and

the

technology

provider,

will

be

working

with

North

kinases

to

we'll

be

doing

developer

workshops

early

test

beds.

An

early

test

bed

for

users

would

be

engaging

with

the

the

application

teams

want

a

partner

and

within

leverage.

Existing

efforts

are

certainly

a

lot

going

on

with

the

community,

and

so

it's

not

like

nurses

going

to

do

this

all

by

ourselves.

B

So

we

need

to

coordinate

with

with

oakridge

and

argon

and

the

other

centers,

and

we

really

need

to

do

widespread

training.

As

I

said,

you

know,

we

have

lots

of

users

and

so

to

manage

is

transition

for

everyone.

We

really

need

to

do.

Things

are

scalable,

so

we

want

to

post

workshops

online

training

create

easy-to-follow

online

documentation

to

help

users

as

much

as

possible.

B

Ultimately,

all

of

those

are

necessary

to

get

good

performance,

but

what

you

are

seeing

in

all

cases

is

that

the

blue

line,

as

you

do

this

work

becomes

goes

down

as

well,

and

so

so

one

benefit

of

getting

ready

for

these

next-generation

architectures

is

that

actually

your

code

will

run

better

on

the

Xeon

on

xeon

clusters

and

xeon

work

stations

as

well?

So

so

so

that's

that's

a

that's

I

think

that's

a

very

important

part

of

this

as

well.

B

So

we

spend

a

lot

of

time

talking

with

you

all,

partly

through

requirements,

reviews

partly

through

meetings

like

this,

to

figure

out

what

we

need

to

be

deploying

in

the

future.

What

direction

we

need

to

be

heading,

so

we

do

requirements

with

six

program

offices,

so

within

the

Office

of

Science,

we

try

to

do

the

reviews

every

33

years,

so

we're

almost

through

our

second

set

of

second

set

of

reviews.

B

So

we

pick

a

target

so,

like

would

say

2017,

so

we

go

and

have

a

discussion

with

the

program

managers

and

the

scientists

we

try

to

get

a

representative

set

of

the

user.

So

typically

we

want

people

at

the

meeting

who

represent

more

than

fifty

percent

of

the

usage

within

that

within

that

program

office.

We

try

to

really

have

a

discussion

about

science

goals

and

representative

use

cases.

Oftentimes.

B

The

scientists

aren't

really

that

interested

in

flops

and

bites

and

bandwidth

and

all

these

things

they're

really

there's

certain

science

problems

that

they

need

to

solve

in

a

certain

time

frame,

and

then

we

work

with

them

to

go

backwards

based

on

those

so

science

that

requirements

did

to

estimate

what

the

what

the

computational

requirements

are.

And

then

we

do

rescale

the

estimates

to

account

for

users,

not

at

the

meeting.

B

We

aggregate

results

across

the

six

program

offices

and

then

we

try

to

validate

through

in-depth

collaborations

things

like

the

this

meeting

here

and

the

the

user

surveys.

This

does

tend

to

underestimate

the

need,

because

you're

you

know,

sometimes

you

can

anticipate,

who

might

be

a

future

user,

but

oftentimes,

you

don't

know

who's

going

to

be

really

a

new

user

in

five

years.

B

So

so

this

does

try

to

underestimate

things.

But

this

just

shows

in

the

black

line,

is

kind

of

the

overall

trend

and

in

red

are

the

actual

nurse

hours

delivered.

So

you

see

a

slight

dip,

which

is

when

Franklin

was

turned

off

and

now

that

we're

deploying

Edison

you're,

seeing

that

that

green

triangle-

that's

a

that's

hopper,

plus

Edison,

and

you

can

see

that

that,

since

we

went

with

a

Z

on

architecture,

we

didn't

deploy

GPUs,

we

were

actually

falling

significantly

below

the

trend

line.

The

black

arrows

are.

B

These

aggregate

needs

from

these

user

requirements,

workshops

and

those

are

actually

much

higher

than

the

trend

line.

Even,

and

so

you

can

see

that

not

only

were

you

know,

we're

way

short

of

the

user

requirements,

but

we're

also

falling

below

the

trendline

and

that's

why

we

need

to

transition

to

a

more

energy-efficient

architecture,

and

so

so

with

nurse

8.

You

see

a

range,

that's

mainly

driven

by

budget,

but

but

we

need

it

will

help

us

begin

to

get

closer

to

that

that

trend

line

as

well.

B

The

other

thing

is

a

you:

can

you

can

kind

of

project

out

from

these

requirements?

Reviews

and-

and

you

can

see

that

that

they're

increasing

very

rapidly

and

in

the

2018

timeframe,

the

aggregate

needs

of

our

users

will

actually

be

in

the

exascale

regime.

So

so

so

you

hear

lots

of

talk

about

exascale,

but

but

just

looking

at,

what's

going

on

and

trying

to

project

with

our

users,

it's

pretty

clear

that

to

do

the

science

if

they

they

need

to

do

in

2018.

2019

really

requires

exascale

computing,

so

this

just

shows

I

asked.

B

We

always

show

these

things

on

log

plots,

but

if

you

show

it

on

linear

plot,

you

actually

see

it

really

is

a

big

gap

between

between

kind

of

what

we're

looking

at

and

the

user

requirements

or

or

needs

are

much

much

higher,

and

we

also

ask

people

about

about

storage,

networking

other

things,

and

so

so

the

the

needs

that

we're

requiring

that

we're

getting

from

these

requirements.

Reviews

also

pointed

big

shortfalls

and

things

like

archival

storage.

As

far

as

what

the

what

the

users

need.

B

B

This

just

shows

there's

lots

of

different

different

plots

like

this

could

show.

But

this

is

just

one

weekend

last

year

and

you

see

data

traffic.

They

were

doing

a

particular

study

down

at

slack

and

you

could

just

see

the

the

data

traffic

very

high

speed

data

traffic

to

to

nurse

for

the

entire

weekend,

and

so

this

often

happens

is

they're

running

some.

Some

big

experiment

at

some

some

facility

and

they're,

really

in

undated

with

data

and

they've,

got

to

take

that

data

somewhere

to

analyze

and

try

to

get

scientific

understanding.

B

And

so

we

see

these

huge

inflows

of

data

traffic

depending

on.

What's

going

on

at

the

difference,

do

II

experimental

facilities,

and

so

so

I

guess

I

can't

won't

be

able

to

say

this

a

lot

longer.

But

but

you

know

this

is

one

of

the

things

that

surprised

me.

So

I

find

something

else.

That

surprises

me,

but

one

of

the

things

that

surprised

me

is

that

that

nurse

users

actually

import

more

data

than

they

export,

which

is

you

know

you

think

about

yourself

as

a

supercomputing

center.

B

What

you

think

of

is

that

people

will

do

simulations

and

take

data

away,

and

so

they

are

taking

lots

of

data

way

where

it's

exporting

and

this

these

numbers

are

actually

much

higher.

Now

we

need

to

update

them

because

they're

they're,

typically

above

a

petabyte

a

month,

especially

since

we've

gone

100

gigabit

networking,

but

we

were

in

blue,

is

how

much

data

we

were

exporting

and

in

red

is

how

much

we're

importing,

and

so

you

can

see

a

lot

of

data

is

coming

in.

B

So

you

could

say

well

where's

that

coming

from

to

be

honest,

some

of

that

does

come

from

the

other

centers

because

they

have

inside

out

allocations

that

end

and

suddenly,

all

that

data

ends

up

coming

to

nurse.

But

a

lot

of

that

data

is

also

experimental

data

coming

from

from

cosmology

or

high

energy

physics

or

from

for

from

daya

Bay,

or

get

lots

of

traffic

from

jgi.

So

lots

of

different

facilities

are

always

transferring

data

from

from.

E

B

The

advanced

light

source

here

from

slack

and

so

so

you'd

expect

that

well

with

all

this

data

coming

here,

that

people

must

be

doing

something

with

it

right

and

we

have

lots

of

examples

of

a

scientific

discovery.

That's

kind

of

the

traditional

uses

of

hpc,

but

we're

also

seeing

that

data

analysis

is

playing

a

key

role

in

scientific

discovery.

So

a

lot

of

the

highlights

that

we're

having

here

at

nurse

we're

seeing

more

and

more

examples

where

its

extreme

data

science

or

related

to

extreme

data

science.

So

there's

the

palomar

transient

factory.

B

B

There's

an

example

of

the

one

of

the

the

very

early

discovery

of

type

1a

supernovae

in

the

last

40

years,

so

those

discovered

overnight

and

then

instantly

the

the

scientists

called

collaborators

around

the

world

and

really

telescopes

from

around

the

world

were

refocused

on

that

on

the

on

the

same

supernova.

So

they

could,

they

could

follow

its

birth.

So

that's

resulted

in

lots

of

refereed,

publications

and

nature

articles

and

and-

and

it

really

makes

heavy

use

of

the

science

gateway

nodes

to

share

the

data

among

the

collaboration

as

well.

B

B

Solving

the

puzzle

of

the

neutrino,

with

with

the

daya

Bay,

there

are

lots

of

their

detectors

that

they

transfer

data

tuner

scanned,

analyzed

it

and

they

were

able

to

measure

the

theta

13

neutrino

parameter,

which

is

the

last

and

most

elusive

piece

of

a

long-standing

puzzle.

Why

neutrinos

appear

to

vanish

as

they

travel

and

so

so

that

really

used

a

high-performance

computing

for

simulation

and

analysis.

B

The

the

data

came

in

to

archival

storage

and

use

the

data

transfer

capabilities,

including

es

net

and

I

use

the

the

the

nurse

global

file

system

and

then

science

gateways

for

distributing

the

results,

and

this

was

one

of

science

magazines,

top

10

2012

breakthroughs

the

clock

mission.

There's

a

lot

of

right

up.

It

was

one

of

physics

world's

top

ten

breakthroughs

of

2013,

but

a

european

space

agency

satellite

mission

to

measure

the

temperature

and

polarization

of

the

Cosmic

Microwave

Background,

realizing

the

full

scientific

potential

of

this

required

really

large

computational

resources.

So.

D

B

The

data

nurse

was

the

primary

computing

site

for

for

Punk

and

all

that

data

was

transferred

here

and

analyzed

and-

and

we

have

a

materials

project

where

this

was

recently

cover

a

cover

on

scientific

american.

But

but

we

have

lots

of

users,

lots

of

companies

that

are

using

it,

but

but

we're

we're

providing

as

the

infrastructure

so

that

that

people

can

screen

materials

using

computational

ins,

that's

much

cheaper

than

making

them

in

lab.

We've

had

more

than

35,000

inorganic

materials

calculated

in

two

years

and

those

are

coupled

with

online

design

and

search

search

tools.

B

So

I

could

give

you

a

very

long

talk

on

this.

So

if

you're

interested

sometime,

we

can

talk

about

it.

You

know,

there's

lots

of

discussion

about

exascale

I

was,

as

Richard

mentioned.

I

was

on

the

exascale

initiative,

steering

committee

for

do

II

and

we

developed

a

roadmap

for

do

we

and

identified

some

of

the

key

challenges.

You

know.

There's

lots

of

discussion

of

big

data.

You

know

granted

for

nurse

gets

its

its

scientific

data,

but

there's

lots

of

discussion

of

big

data,

and

sometimes

these

things

are

viewed

as

being

orthogonal

but

they're.

B

Really

they

really

faced

the

same

computing

challenges

so

in

terms

of

things

like

like

energy

efficiency,

in

terms

of

a

lot

more

concurrency,

your

inability

to

move

data

at

the

same

rate

as

you

can,

you

can

perform

the

computations.

All

those

are

really

common

challenges

between

big

data

and

concurrency

and,

like

I,

said

big.

B

B

This

is

kind

of

leveled

off

some,

but

the

cost

per

genome

had

been

going

down

pretty

dramatically

and

when

we

look

at

things

like

the

advanced

light

source

and

and

an

stand

at

slac,

the

data

rate

has

been

growing

and

growing,

and

you

know

we

can

see

that

in

the

future.

You

know

we're

going

to

be

at

terabit

per

second

type

data

rates

and

and

we're

seeing

that

data

sets

are

going

to

be

going

to

hundreds

of

petabytes,

and

so

so.

This

is

something

that

we

do

expect

to

play.

B

B

You

know

we

have

to

do

great

in

operations

because

really

anything

we

do

has

to

be

built

on

that

base,

but

but

on

top

of

our

operational

base,

we

see

that

we

need

to

deploy

exascale,

usable,

exascale,

computing

and

storage

systems

for

our

users.

Just

as

you

look

at

the

demand

for

computing

within

the

Office

of

Science,

it's

really.

It's

really

amazing

to

see

those

those

curves.

We

need

to

start

transitioning

the

codes

and

for

nurse.

B

This

is

a

big

challenge,

because

we

can't

spend

three

ftes

per

code,

helping

them

transition

to

many

core

architectures,

and

we

do

think

we

have

a

critical

role

in

terms

of

influencing

the

computer

industry

to

ensure

that

future

systems

really

meet

the

mission

needs

of

the

office

of

science,

but

really

meet

the

needs

of

our

broad

base

of

users

and

that's

something

of

very

much

of

interest

to

the

computer

companies

as

well.

They

don't

want

to

deploy

systems

just

for

one

application,

it's

hard

enough

to

get

them

to

pay

attention

to

high

performance

computing.

B

E

B

So

so

I

think

with

all

of

these

you

know

we

made

the

decision

to

wait.

Our

preference

would

be

to

have

a

self-hosted

architecture,

so

we

think

that

if

you

avoid

right

now,

people

are

trying

to

connect

accelerators

through

a

PCI

connection

and

that

really

you're

really

being

limited

in

many

cases

by

by

the

very

poor

bandwidth

that

you

have,

and

so

our

hope

with

northgate

would

be

for

it

to

be

a

self-hosted

architecture

and

that

that

should

that

should

help

quite

a

bit

a

bit.

B

But

to

be

frank,

there

is

a

significant

challenge,

so

so

getting

good

performance

on

these

next

generation

systems

isn't

always

going

to

be

easy.

Some

people

are

already

working

on

it,

so

we've

actually

done

some

some

detailed

analysis

of

which

codes

are

more

ready,

which

ones

need

more

help

and

we're

trying

to

target

the

ones

that

we

need

help,

and

we

also

want

to

coordinate

with

oakridge

and

argon.

B

We

don't

want

to

be

working

on

the

same

set

of

codes,

but

then

again

we

also

need

to

make

sure

that

that

that

the

transition

that

we're

doing

is

is

somehow

related

to

the

transition

that

they're

doing

so.

There

is

some

need

for

coordination

among

the

centers

as

well

so

bill.

You

had

a

good

razor

in.

Are

you

okay,.

B

C

B

So

so,

so

that's

why

we

really

have

these

two

strategic

objectives,

these

two,

what

we've

been

calling

initiatives

they're

very

interrelated,

but

you

know

I

think

that

the

top

one

is

really

aimed

at

our

more

of

our

traditional

work.

Workflow,

which

a

lot

of

it

is

is

solving

these

scientific

problems.

These

pdes

or

or

or

other

things,

and

the

bottom

is-

is

at

related

data.

So

we

think

that

both

are

both

are

important

for

our

future.

Whether

you

can

deploy

a

single

system

that

meets

both

sets

of

needs.

That's

a

that's

something

that

we're

exploring.

B

So

I

would

say

that

that

two

thirds

or

three

quarters

of

the

the

issues

as

to

why

people

are

deploying

different

systems

here

have

to

do

with

more

software

as

opposed

to

architectural

differences.

And

so

so

we

are

looking

at

on

our

large

systems.

Can

we

provide

the

same

set

of

software

services

that

people

need?

Thank.

A

B

B

We

are

working

with

jaye

GI

to

see

if

parts

of

their

workflow

can

can

run

on

Edison

and

hopper

they'd

love

to

be

able

to

use

some

of

their

their

cap

allocation

for

some

other

their

computing

right

now.

Gene

pool

is

very

much

tailored

to

their

workflow

right

and

so

so.

Making

that

transition

is

not

not.

B

Not

particularly

easy,

but

it's

something

that

we're

going

to

be

exploring

with

with

JD

I.

We

will

be

moving

gene

pool

into

CRT,

so

I

would

expect

us

to

have

a

you

know:

the

collaboration

with

with

Jean

with

JD

I,

to

continue

for

a

long

period

of

time.

The

collaboration

with

PDS

south

and

the

high

energy

physics

community

is

even

longer,

and

so

I

would

so

we're

certainly

expecting

to

move

so

we'll

certainly

be

moving.

Mendel

will

certainly

be

you

know.

B

Our

expectation

would

be

that

PDS

f

is,

you

know,

will

continue

in

in

a

you

know.

That

would

be

a

longer

you

no

longer

term,

but

we

are

looking

at.

Can

we

can

we

move

some

of

that

workflow

to

northgate,

and

so

some

of

the

things

that

we're

looking

at

is

potentially

having

a

data

partition

on

northgate

and

that's

something

we

can

talk

with.

B

You

will

certainly

be

able

to

talk

with

you

more

about

in

a

few

months,

and

it

would

be

certainly

useful

to

understand

how

useful

that

would

be

for

some

of

these

for

some

of

these

problems,

but

the

idea

would

be

to

have

you

know

you

could

have

a

more

x86

park-based

partition

that

had

more

memory

that

also

had

access

to

the

first

buffer

that

potentially

had

a

different

software

stack.

And

so

could

you

meet

some

of

these

needs?

B

D

So

there's

currently

demand

for

infrastructure

to

host

a

human

related

data,

particularly

with

the

brain

initiative

in

many

people

here

in

Albiol

actually

participate

in

those

initiatives

that

really

to

human

data

is

there?

What's

the