Description

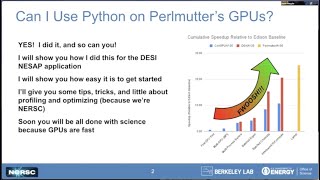

Daniel Margala, a NESAP Postdoc at NERSC, tells of experiences and tips for using CuPy to make use of Perlmutter's GPUs within Python

A

Hi, can you see my slides? Okay?

Yes, looking good.

Okay! Yes, thank you for having me. I'm a NESAP postdoc working with the Dark Energy Spectroscopic Instrument (DESI), and today I'm here to help those of you who have Python applications get started and get off the ground on Perlmutter's GPUs, by telling a little bit of our story of porting the DESI spectral extraction pipeline to use GPUs in preparation for Perlmutter.

A

So, just to give you a bit of quick background on the actual DESI spectral extraction code: it's implemented in Python, it uses a lot of special functions like exponentials, Hermite polynomials, and Legendre polynomials, and it operates on lots of array-like data structures. They get images from their telescope in New Mexico.

A

They send them over to NERSC and process them nightly, and then they also reprocess years' worth of images every few months. So there are a lot of matrix operations and linear algebra going on in their scientific pipeline. And, as I mentioned, it's all implemented in Python, leveraging the NumPy and SciPy libraries for linear algebra, which often wrap lower-level routines from BLAS or LAPACK.

A

We could use those libraries to translate many pieces of their code directly, and since the APIs are compatible, the developers on DESI would be familiar with the APIs of CuPy and Numba CUDA, so they'd be able to further develop and maintain the application, and so on. On the right here, there's a bit of a speedup relative to the Edison baseline that we used to track progress during this work.

A

And I just want to mention that it wasn't a straight, you know, find-and-replace of NumPy with CuPy. It was an iterative process where we'd port pieces of the application, test the code, profile the code, and track our progress. So it's not something that we just hammered out in one weekend or one month or something like that. It's an iterative process of learning about the GPUs and learning what changes we could make to fully utilize them.

A

So, getting started with GPUs, you actually have many options. Some of these were mentioned in the previous talk, like TensorFlow, PyTorch, and JAX. There are a lot of different libraries out there that will give you access to the GPUs in Python, and many of these are actually interoperable. They can work well with each other; there are some standards and some efforts in the community to make sure that these different libraries are able to efficiently share array-like objects.

A

So what is CuPy? Essentially, CuPy is trying to implement the NumPy API, which is the foundation of a lot of scientific computing in Python, but it lets you work with array-like objects on the GPU, and it implements many features of NumPy and SciPy. Under the hood, what CuPy is doing when you call a function is compiling the CUDA kernel on the fly and then caching that result.
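As a rough illustration of the NumPy-compatible API and the on-the-fly kernel compilation described above, the sketch below calls the same element-wise function twice and times both calls. It assumes CuPy is installed and a GPU is visible; if not, it falls back to NumPy so the script still runs. The fallback logic and the `timed_exp` helper are illustrative and not from the talk.

```python
import time

import numpy as np

# Assumption: use CuPy when it is installed and a GPU is visible,
# otherwise fall back to NumPy so the sketch still runs.
try:
    import cupy as cp
    xp = cp if cp.cuda.runtime.getDeviceCount() > 0 else np
except Exception:
    xp = np


def timed_exp(x):
    """Apply exp element-wise and return (result, elapsed seconds)."""
    start = time.perf_counter()
    y = xp.exp(x)
    if xp is not np:
        # GPU kernels launch asynchronously; wait before stopping the clock.
        xp.cuda.Stream.null.synchronize()
    return y, time.perf_counter() - start


x = xp.linspace(0.0, 1.0, 1_000_000)
y_first, t_first = timed_exp(x)    # on a GPU, may include kernel compilation
y_second, t_second = timed_exp(x)  # on a GPU, reuses the cached kernel
print(f"backend={xp.__name__} first={t_first:.6f}s second={t_second:.6f}s")
```

On a GPU the first call is usually the slower one because it includes compiling or loading the kernel; the actual timings depend on the system, so measure rather than assume.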

A

So here's a long list. We don't have to go through all of these, but basically most of the functionality of NumPy is available to you using CuPy. There are some differences, and you'd have to go and look at the CuPy documentation for a list of what those are. But for the most part, many of the features in NumPy are implemented in CuPy and let you leverage a lot of things, especially the special CUDA libraries like cuBLAS and cuSOLVER.
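A minimal sketch of what leaning on those libraries can look like: the same `linalg.solve` and matrix-multiply calls work with either array module, and with CuPy they are, to my understanding, backed by cuSOLVER and cuBLAS routines. The matrix size, the diagonal shift that keeps the system well conditioned, and the NumPy fallback are all assumptions made for illustration.

```python
import numpy as np

# Assumption: use CuPy when a GPU is visible, otherwise NumPy.
try:
    import cupy as cp
    xp = cp if cp.cuda.runtime.getDeviceCount() > 0 else np
except Exception:
    xp = np

# Build a well-conditioned linear system on the host.
rng = np.random.default_rng(0)
n = 500
a_host = rng.standard_normal((n, n)) + n * np.eye(n)
b_host = rng.standard_normal(n)

# Move the inputs to the selected backend (a no-op copy for NumPy).
a = xp.asarray(a_host)
b = xp.asarray(b_host)

# Same call signature as numpy.linalg.solve.
x = xp.linalg.solve(a, b)

# Check the solution: the norm of a @ x - b should be close to zero.
residual = float(xp.linalg.norm(a @ x - b))
print(f"backend={xp.__name__} residual={residual:.3e}")
```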

A

So how do you use CuPy on Perlmutter? For the most up-to-date information, it's best to check the NERSC docs. There's a page that's being actively maintained, the "Using Python on Perlmutter" page that I've linked to at the bottom. If you have trouble, you can always open a ticket at help.nersc.gov, but it's essentially as simple as logging into Perlmutter and making sure that your CUDA and Python modules are loaded.

A

So one of the main things to think about when you're first getting started on the GPU and using CuPy is having this concept that you have objects whose memory resides on the host, or the CPU, and other objects that reside on the GPU.

A

So, is there a question?

Sorry, just a five-minute warning.

Oh, okay, thank you. Yeah, so that's one thing to keep in mind as you get started. One thing to be aware of is that transferring data between the host and the GPU can be expensive performance-wise. So it's important to keep that in mind when you're getting started and, if you can, minimize the amount of data that you're transferring between the host and the device.
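The host/device distinction and the advice to minimize transfers can be sketched as follows: copy the input to the device once, chain several operations there, and copy only the final result back. The array shape, the particular operations, and the helper names `to_device` and `to_host` are hypothetical; the script falls back to NumPy when no GPU is visible.

```python
import numpy as np

# Assumption: use CuPy when a GPU is visible, otherwise NumPy.
try:
    import cupy as cp
    have_gpu = cp.cuda.runtime.getDeviceCount() > 0
except Exception:
    have_gpu = False
xp = cp if have_gpu else np


def to_device(host_array):
    """Copy a NumPy array to the GPU (returns it unchanged without a GPU)."""
    return cp.asarray(host_array) if have_gpu else np.asarray(host_array)


def to_host(array):
    """Copy an array back to host memory as a NumPy array."""
    return cp.asnumpy(array) if have_gpu else np.asarray(array)


host_image = np.random.default_rng(0).random((1024, 1024))

# One host-to-device transfer up front.
image = to_device(host_image)

# Intermediate results stay on the device; no transfers between steps.
image = xp.sqrt(image)
image = image - image.mean(axis=0)

# One device-to-host transfer at the end.
result = to_host(image)
print(type(result).__name__, result.shape)
```

The pattern to avoid is calling the equivalent of `to_host` and `to_device` between every step, which pays the transfer cost repeatedly.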

A

So when should you use CuPy versus NumPy? Here I've just done a simple thing where I create two two-dimensional arrays of random numbers, a and b here, and then we have a simple function that adds them together element-wise. The blue line here shows the amount of time it takes to run this operation in NumPy for various sizes of 2D matrices, and the orange line is the equivalent but using CuPy. And so for smaller sizes.
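A comparison along these lines can be reproduced with a small timing script. This is a sketch under assumptions rather than the benchmark from the slides: the matrix sizes, the repeat count, and the `time_add` helper are all illustrative, and the CuPy measurements are skipped when no GPU is visible.

```python
import time

import numpy as np


def time_add(xp, n, repeats=10, sync=None):
    """Average time of an element-wise add of two n-by-n random arrays."""
    a = xp.random.rand(n, n)
    b = xp.random.rand(n, n)
    xp.add(a, b)  # warm-up call (on a GPU this triggers kernel compilation)
    if sync is not None:
        sync()
    start = time.perf_counter()
    for _ in range(repeats):
        xp.add(a, b)
    if sync is not None:
        sync()  # wait for asynchronous GPU work before stopping the clock
    return (time.perf_counter() - start) / repeats


sizes = [16, 64, 256, 1024]
numpy_times = {n: time_add(np, n) for n in sizes}

cupy_times = {}
try:
    import cupy as cp
    if cp.cuda.runtime.getDeviceCount() > 0:
        gpu_sync = cp.cuda.Stream.null.synchronize
        cupy_times = {n: time_add(cp, n, sync=gpu_sync) for n in sizes}
except Exception:
    pass  # no usable GPU; only the NumPy timings are reported

for n in sizes:
    print(n, numpy_times[n], cupy_times.get(n))
```

The explicit synchronization matters: without it, the GPU timing would measure only the kernel launches, not the work itself.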

A

So again, it's not that simple. You don't want to just replace all NumPy operations with CuPy. It's important to be aware of when CuPy will be useful performance-wise, and that's typically at larger array sizes. So it's always important to measure these sorts of things as you start to use the GPU as well. And then here's just another example.

A

And then, as I mentioned before, you can mix and match and combine these different libraries. So here I have an example of combining code that's using the CuPy API, Numba CUDA just-in-time compilation, and also a really cool feature of NumPy.

A

This array function protocol allows you to just use the NumPy API on objects that support NumPy's array function protocol, which the CuPy ndarrays do. So on the right here, I'm initializing an array on the GPU device using CuPy, then we're passing that array to a kernel that's compiled using Numba CUDA, and then we're using the NumPy API on the result of that, the Numba CUDA result.
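The combination described above might look roughly like the sketch below. It is not the code from the slide: the kernel `add_one`, the array size, and the launch configuration are hypothetical. It relies on Numba accepting CuPy arrays through the CUDA array interface and on `np.sum` dispatching to CuPy through NumPy's `__array_function__` protocol. When no GPU is available, a plain NumPy path produces the same number so the script still runs.

```python
import numpy as np


def gpu_available():
    """True only if CuPy and Numba are importable and a CUDA device is visible."""
    try:
        import cupy
        from numba import cuda
        return cuda.is_available() and cupy.cuda.runtime.getDeviceCount() > 0
    except Exception:
        return False


def run(n=1024):
    if not gpu_available():
        # CPU fallback (assumption): same arithmetic with NumPy only.
        return float(np.sum(np.zeros(n) + 1.0))

    import cupy as cp
    from numba import cuda

    @cuda.jit
    def add_one(arr):
        i = cuda.grid(1)       # global thread index
        if i < arr.size:       # guard threads past the end of the array
            arr[i] += 1.0

    x = cp.zeros(n, dtype=cp.float64)   # array allocated on the GPU by CuPy
    threads = 128
    blocks = (n + threads - 1) // threads
    add_one[blocks, threads](x)         # Numba kernel operating on the CuPy array

    total = np.sum(x)   # NumPy API call, dispatched to CuPy; stays on the GPU
    return float(total)


print(run())
```

The data never leaves the device until the final `float(...)` conversion, which copies a single scalar back to the host.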

A

So we can label the regions that are of interest to us, and there's a bunch of different ways to do that using the handles. And then here I just have an example of how to actually run nsys profile with your Python application, and here's quickly what the Nsight Systems profile looks like. The area marked in the orange box is what we get out of the NVTX markers.
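One way to label regions, sketched under assumptions: CuPy ships a `cupyx.profiler.time_range` context manager that emits NVTX ranges, which is used here when a GPU is visible; otherwise a no-op stand-in keeps the script runnable. The function `extract`, the range names, and the script name in the comment are hypothetical, and the talk may have used a different NVTX mechanism.

```python
import contextlib

import numpy as np

# Assumption: use CuPy's NVTX helper when a GPU is visible; otherwise
# substitute a no-op context manager and fall back to NumPy.
try:
    import cupy as xp
    from cupyx.profiler import time_range
    if xp.cuda.runtime.getDeviceCount() < 1:
        raise RuntimeError("no CUDA device visible")
except Exception:
    xp = np

    def time_range(message):
        """No-op stand-in for cupyx.profiler.time_range."""
        return contextlib.nullcontext()


def extract(n=256):
    # Each labelled block shows up as a named range in the profiler timeline.
    with time_range("setup"):
        a = xp.ones((n, n))
    with time_range("matmul"):
        c = a @ a
    return float(c[0, 0])


print(extract())

# To collect a profile, a script like this would be launched under the
# profiler, for example: nsys profile python my_app.py
# (my_app.py is a placeholder name).
```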

A

And so we can go and study that and figure out what we want to do next after profiling our application, trying to figure out where we should focus our development efforts. There are many more topics in CuPy. You can go to the CuPy documentation to dive into some of those more advanced topics, and that's all I have.