►

Description

Hamiltonian Simulation via Qubitization using a Tensor Network Quantum Simulator - Nathan Fitzpatrick (Quantinuum)

A

So

I

want

to

say

thank

you

for

that

incredible

work

to

allow

me

to

do

this

research,

okay.

So

how

am

I

talking

about

simulation

by

accumulation?

It's

very

enticing

because

it's

it's

known

to

have

optimal

complexity

in

terms

of

hamiltonian

simulation

when

you

compare

it

to

Trotter

and

kind

of

truncated,

Taylor,

series

methods

and

furthermore,

it's

kind

of

it's

opened

up

a

new

pathway

for

algorithms

research

generally,

so

you

might

have

heard

of

this

Grand

unification

of

quantum

algorithms

method.

This

is

all

based

upon

this

accumulization

framework.

A

A

So

if

you

start

with

a

single

a

single

keyword

example,

so

we

have

the

the

signal

rotation-

and

this

is

an

art

just

a

simple

RX,

for

example-

and

you

can

see

if

we're

going

to

measure

just

this.

This

D

here

is

kind

of

is

the

measurement

on

the

Zero.

If

we

just

measure

the

zero

of

this,

this

unitary

U,

which

is

the

RX

we

kind

of

you'll,

see

we

get

this

kind

of

cosine

squared,

and

if

you

were

to

plot

this

as

an

as

an

angular

Theta,

you

would

see

this

nice

sinusoid

squared.

A

There,

essentially

right

into

leaving

these

RZ

these

RZ

and

keeping

the

original

signal

rotation,

RX

and

interleaving

it

with

him

within

with

that

arbitrary

set

of

RZ

rotations

in

such

a

way

that

you

end

up

with

this

kind

of

two

by

two

matrix

product,

it's

essentially

allows

you

to

implement

any

Championship

polynomial

of

cosine

future

essentially

and

the

championship

polynomial,

acting

on

kind

of

on

the

Zero

element

of

this

of

this

matrix

product.

This

is

Pa

and

the

this

the

essentially

for

any

set

of

these

it.

A

It's

shown

that

any

essentially

any

check

any

Championship

from

anyone

which

can

then

approximate

most

polynomials

most

functions

on

a

matrix

can

be

expressed

with

these

fights.

So

it's

very

very

powerful

and

the

the

famous

theorem

from

Quantic

processing

of

constantly

processing

is

expressed

here

and

I.

Think

the

main

point

to

take

away

here

is

that,

in

the

initial

kind

of

canonical

implementation

of

quad

signal

processing,

we

can

only

implement

functions

which

have

definite

priorities

so

essentially

odd

and

even

functions.

A

Okay,

but

it's

been

shown

that

you

might

say

how

can

how

can

all

of

the?

How

can

we

know

how

to

implement

the

files?

Well,

there's

algorithms,

which

have

been

worked

on

by

the

groups

of

linlin

and

and

also

Archer

trans

people

as

well,

which

can

find

as

long

as

you

haven't

function

input

we

can

find

any

set

of

advice

to

implement

that

function

Okay.

So

we've

worked

on

a

single

key

bit.

A

So

if,

if

we're

now

going

to

generalize

this,

to

kind

of

a

matrix

kind

of

the

the

grand

unification

case

and

to

implement

any

polynomial

transform

on

a

on

a

matrix,

you

kind

of

you

kind

of

have

this

similar

structure.

But

instead

now

you

have

this

kind

of

this

interleague

RZ.

With

this,

with

this

lce

block

here

and

then

repeated

D

times

in

the

same

way,

so

right,

so

you

might

see

that

this

structure

is

kind

of

looks

quite

complicated,

but

I'll

try

and

break

it

down

to

kind

of

simplify

it.

A

So

we

have

the

RZ,

which

are

these

signal.

Processing

files,

which

we

know

from

the

previous

theorem,

can

Implement

any

at

any

function

and

any

any

function

with

respect

to

the

kind

of

the

theorem,

but

that's

it

generalizes

to

a

matrix

function.

What

we

do

is

we

use

this

technique

called

lcu

and

LCD

is

essentially

the

way

of

it's

called

blocking

coding,

a

matrix

and

essentially

what

we

do

is

we.

We

take

a

major

The

Matrix.

A

A

And

by

picking

up

by

post,

selecting

it

we're

kind

of

picking

up

post,

selecting

on

the

Zero

of

this

register,

we're

picking

out

this

h

and

then

we

can

see

by

doing

that.

We

can

then

get

this

green

Matrix

here,

which,

which

can

then

be

which

can

then

be.

This

is

the

signal

Matrix

now

like

before

with

the

single

qubit

case,

but

we

then

now

have

this.

A

We

essentially

have

to

use

the

Euler

equation

to

break

apart

the

time

Evolution

unit

tree

and

and

essentially

do

the

cosine

and

the

sign

separately,

but

then

we

can

recombine

them

using

this.

The

simple

one:

keyword,

linear

combination

of

unit,

trees,

primitive,

okay,

and

if

we

go

then

obviously

once

we

once

if

it

once,

we've

got

this

kind

of

the

signal,

processed

cosine

function

of

the

Matrix

and

the

sine

function

of

the

Matrix.

A

We

will

then

get

a

new

set

of

fives

for

every

single

time,

so

for

this

this

HT

and

then

we

can

then

measure

the

the

operator

that

we're

simulating

over

time.

So

this

could

be

average

magnetization

or

some

dipole

moment

if

you're,

a

chemist,

for

example.

Okay.

Now

the

the

obvious

thing

here

is

that

the

the

lcu

this

blocking

coding

here,

which

gets

the

hap,

gets

the

Matrix

into

onto

the

signal

processing

qubits

here.

So

you

have

this

s.

This

s

register

the

this.

This

is

clearly

the

really

expensive

kind

of

mysterious

step.

A

So

the

aim

I

guess

the

first

step

in

implementing

this

kind

of

being

a

full

quality

processing.

Implementation

is

to

kind

of

is

to

con

to

try

and

build

and

compile

the

circuits

for

lce

and

then,

furthermore,

to

kind

of

test

the

contractions

without

actually

simulating

them

on

Perl

matter,

and

then

once

we've

done

that

we

can

apply

the

D

blocks

in

this.

In

the

same

way

as

this

this

kind

of

framework,

then

we

can

do

the

signal

processing.

A

By

doing

this,

it

allows

us

to

implement

these

non-permission

and

non-neutry

Matrix

matrices

onto

onto

a

States

register.

So

I'll

walk

you

through

a

kind

of

a

simple

example.

So

say

we

have

some

hamiltonian

or

submission

operator

which

can

be

expressed

in

sometimes

it's

a

non.

So

some

in

this

example

we'll

keep

things

hermitian.

A

Besides

to

say,

we

have

some

kind

of

some.

An

operator

that

can

be

expressed

as

a

weighted

sum

of

power

leaves

the

norm

is

kind

of.

This

is

the

we

can.

We

can

normalize

the

obviously

the

the

operator

is

given

by

this

equation

here

and

then,

obviously,

what

we

do

is

we

we

wait.

We

have

this

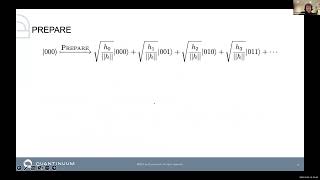

registry

called

the

prepare

register

and

we

wait

the

for

each

weighted

power

string.

A

This

can

be

problematic,

so

complex,

State

preparation

is

quite

difficult,

but,

as

I

would

say

later

we

can

absorb

the

imaginary

coefficient

in

into

the

select

part

of

the

algorithm,

so

typically

prepares

done

with

kind

of

these

kind

of

these

in

series,

but

I

mean

the

most

basic

way

of

doing.

The

Preparatory

is

kind

of

doing

State

preparation

in

series

with

with

a

rotation

for

every

single,

so

these

Alphas

are

encoding.

The

the

coefficients

here.

A

A

A

You

can

see

now.

We've

got

a

prepare

register

going

in

which

we

prepared

before,

but

now

we

can

selectively

apply

our

power

strings

to

the

the

weights

that

we

encoded

to

the

unique

bit

strings.

So

you

can

kind

of

see

here

we're

building

up

this

linear

commission

entries

right

now,

so

there

there's

multiple

ways

to

do

this.

Select

register

kind

of

the

most

naive

way

would

just

be

to

do

a

kind

of

what

we

call

c.

I

call

serial

party

compilation.

We

just

do

lots

of

more

to

control.

A

A

The

problem

is,

obviously

you

have

lots

of

in-series

multi-control,

so

this

motivated

us

to

kind

of

think

about

some

other

ways

of

doing

things

and

then

so.

There's

this

work

by

by

Peter

loves

group

where

it

kind

of

uses

a

parody,

Gadget

structure.

So

obviously,

for

for

those

not

familiar

with

power

gadgets,

you

have

it's

a

it's

essentially

a

it

allows

a

it

allows

us

to

implement

any

powerly

string.

A

We

want

by

having

a

bit

basis,

change

and

then

the

RZ

in

the

middle,

and

then

it

basically

turned

on

the

outside,

and

because

you

have

this

similarity

transform

structure,

it

allows

us

to

kind

of

only

control

on

the

U

in

the

center

here.

So

so

we

can

form

this

kind

of

quite

complicated.

We

can.

We

can

take

this

quite

complicated,

multi-control

thing

and

recompile

it

to

kind

of

have

a

single

multi

control

onto

the

Z

here

so

and

then

using

kind

of

the

power

lead

multiplication

rules

we

can

on

the

parity

qubit.

A

We

can

take

we.

If

we,

if

we

had

to

have

a

complex

coefficient

in

our

lcu,

we

can

use

the

piling

implications

to

encode

the

complex,

the

the

complex

element

multiplication

rather

than

the

state

preparation

site.

Typically,

what

would

that

you

then

do?

Is

you

then

unprepare

the

the

lcu

which

projects

everything

back

onto

the

zero

state

here,

so

you

can

now

see

everything's

back

onto

the

zero

as

we've

rotated

it

back.

A

These

complicated

fault

currents,

things

that

you

can

kind

of

see

for

the

Hubbard

model,

we're

kind

of

for

a

20,

qubit

Hogwarts

system,

we're

kind

of

working

about

50,

000

Gates,

and

we

have

these

two

different

kind

of

compilation

strategy

you

can

see.

One

has

I

mean

they're

quite

similar

right,

but

then

you

can

see,

obviously,

when

we're

doing

height

like

chemistry,

problems

with

hydrogen,

for

example.

We

have

like,

because

your

hamiltonian

has

a

lot

more

terms

turned

on

in

the

keyboard

interactions.

A

You

end

up

with

many

many

more

prepare

registers

needed,

correct

keywords

needed

which

requires

a

much

larger

circuit

there.

So

this

is

there's

lots

of

room

for

improvement

here.

Okay,

so

I've

spoken

about

kind

of

the

certain

compilation

stuff,

but

how

do

we

actually

run

these

on

the

permission?

So

these

are

the

kind.

These

are

the

circuit

structures

that

we're

dealing

with.

So

we

have

a

one

register

with

zeros

and

we

have

a

state

Register

and

then

we

would

do

an

lcu

and

we

measure

on

the

Zero

register.

A

So

obviously,

but

this

post

selection

step

is

not

not

kind

of

a

trivial

step

with

it's

not

what

it's

an

unusual

thing

to

do

with

tensor

networks,

because

most

of

the

time

you

just

work

typically

with

expectation

values.

But

it's

quite

easy.

You

can

just

essentially

you

just

essentially

project

onto

the

zero

vectors

here

and

then

just

build

your

typical

tensor

Network

operator

sandwich,

but

with

a

post

selection

onto

the

zero

registers.

A

So

so

we've

implemented

this

in

pi

ticket.

It's

getting

convert

to

circuit

and

invents

it

as

a

tensor

network

with

post-selection

in

the

under

this

kind

of

framework,

and

then

we

have

a

few

benchmarks

here

so

essentially

I'm

just

sticking

with

the

Hubbard

model,

with

the

kind

of

power

Gadget

compilation

strategy,

you

can

kind

of

see

we

have

so

this

is

this

is

quite

promising

I

mean

I.

A

I

have

a

model

with

22

cubits,

using

29

cubits

for

the

lcu

in

3

000

seconds,

which

is

quite

nice

for

a

primitive

because

considering

only

uses

2.5

megabytes-

that's

quite

nice,

so

you

can

kind

of

see

this.

This

is

I've

almost

finished

now,

so

you

can

kind

of

see

here

that

this

is

memory

for

for

the

lcu.

And

then

you

have

this

kind

of

two

three,

four,

five,

six,

seven,

eight

nine

ten

eleven

site,

Hubbard

models.

A

You

can

see

here

it's

kind

of

what

else

you

you

can't

once

you

get

enough

Hamilton

in

turn,

you

have

to

add

an

extra

Cuba

into

the

repair

register.

So

that's

what

the

stepwise

model

is

here.

So

as

long

as

you're

in

the

same

qubits

kind

of

regime

in

your

prepare

register,

you

get

linear

scaling

with

your

memory,

which

seems

to

be

quite

nice

and

then

obviously

the

contraction

time

is

kind

of

is,

is

kind

of

you

can

see.

A

We've

got

log

10

here

on

the

scale,

so

it's

kind

of

you

can

see

that

it's

exponential

with

conjunction

time,

but

this

is

kind

of

not

using

any

of

the.

This

is

just

all

the

default

methods

within

q10.net,

so

I

would

just

finish

so

what

we've

done

is

built

a

pie

ticket

the

tensor

Network

converter,

with

post

selection,

implemented

lcu

contractions

on

permutter.

A

We

still

have

to

apply

the

LCD

times

and

do

the

quad

signal

processing.

We

need

to

look

further

compilation

strategies

that

might

leverage

more

on

silvers,

which

might

be

more

suitable

for

intense

Network

contraction

and

then.

Finally,

it's

a

question

to

the

Nvidia

guys.

Does

the

repeating

structure

of

quad

signal

processing

allow

for

a

simple

contraction

path?