
Description

Talk given (remotely from Dublin, Ireland) at the HTM Community Meetup, Numenta, Redwood City, CA on November 13th, 2015. Paper now published at http://arxiv.org/abs/1512.05245

Using 30 years of results from Complex Dynamical Systems, Fergal Byrne describes how Jeff Hawkins' Hierarchical Temporal Memory (HTM) can be extended to describe neocortex as a Universal Dynamical Systems Computer.

A

Okay, so hi everybody, and thanks for turning up at this. This is just something I've been working on for the last year or year and a half or so, and it kind of finally came to fruition in early summer. It's still work in progress, but I think it's in a state where I can explain it to people. Bear with me one second. Okay, so just to really start off.

A

A

So what you just saw there was, I don't know, several thousand starlings in a flock being attacked by a peregrine falcon, and there's a couple of things. First of all, each of those starlings is just paying attention to about seven to ten of its neighbors, and yet they're able to react as a group of several thousand individuals to a single attacker. That's the first thing; that's what's known as a complex adaptive system.

A

The other thing is that when we watch that video we're able to see what's going on, so we can actually interpret this blob of dots as if it was a single organism, reacting dynamically to the particular attack and emergently forming some sort of a strategy. So there are actually several things going on, and this is kind of the hierarchy of these dynamics. It's not just what the birds themselves are doing.

A

This is the fact that, when we look at that video, we can understand and interpret it: even though we're looking at a couple of thousand dots, we know exactly what's going on and why they're doing it, even though individually they're just reacting in a certain sort of pre-programmed way. That's the first thing. The second thing, then, is from the world of humans. This is a sport that we're very proud of, that's a couple of thousand years old. I just want to show this highlights clip of some hurling.

A

A

Just for this simple problem of a serve from a standing-still position, you need to take into account these things, and these are the equations that you need. These are coupled differential equations that you need in order to figure out what the effect of spin is going to be on where the ball ends up. So possibly we're solving these differential equations, but it's highly unlikely, seeing as these things were only developed in the last 50, 20 years. So what we actually do is stuff like this, and stuff like this, and stuff like this.

A

So luckily there is a whole field of applied mathematics that has been developed since about the 1960s, mainly because we now have computers that can do all these calculations. They don't require understanding differential equations, or if they do, you just plug them in and these libraries just solve them for you. There is an example of a robot which basically rides a bike, but it doesn't solve any equations. What it does is just react to what's happening to itself and the bike, so it effectively just corrects for errors.

A

B

This is the Lorenz attractor. The Lorenz system is an example of a coupled dynamic system consisting of three differential equations, where each component depends on the state and the dynamics of the other two components. You can think of each component, for example, as being species (foxes, rabbits, grasses), and each one changes depending on the state of the other two.

B

So these components, shown here as the axes, are actually the state variables, or the Cartesian coordinates, that form the state space. Notice that when the system is in one lobe, X and Z are positively correlated, and when the system is in the other lobe, X and Z are negatively correlated. The manifold M consists of the set of all trajectories, and phi is the flow on M defined by the coupled equations. m(t) is a point on the manifold.
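Editor's sketch: the three coupled equations described in the video can be stepped numerically to trace out the butterfly. This is a minimal illustration, not anything shown in the talk; it assumes the textbook Lorenz parameters (sigma = 10, rho = 28, beta = 8/3), a fourth-order Runge-Kutta step, and hypothetical function names.

```python
def lorenz_step(state, dt=0.01, sigma=10.0, rho=28.0, beta=8.0 / 3.0):
    """Advance the Lorenz system by one Runge-Kutta (RK4) step.

    The parameter values are the classic textbook ones (an assumption;
    the talk does not state which values the video used).
    """
    def deriv(s):
        x, y, z = s
        # Each derivative depends on the other components: the system is coupled.
        return (sigma * (y - x), x * (rho - z) - y, x * y - beta * z)

    def shift(s, k, h):
        return tuple(si + h * ki for si, ki in zip(s, k))

    k1 = deriv(state)
    k2 = deriv(shift(state, k1, dt / 2))
    k3 = deriv(shift(state, k2, dt / 2))
    k4 = deriv(shift(state, k3, dt))
    return tuple(
        s + dt / 6 * (a + 2 * b + 2 * c + d)
        for s, a, b, c, d in zip(state, k1, k2, k3, k4)
    )


def lorenz_trajectory(n_steps, start=(1.0, 1.0, 1.0), dt=0.01):
    """Return a list of (x, y, z) points m(t) along one trajectory."""
    traj = [start]
    for _ in range(n_steps):
        traj.append(lorenz_step(traj[-1], dt))
    return traj


if __name__ == "__main__":
    points = lorenz_trajectory(5000)
    xs = [p[0] for p in points]  # projecting onto the X axis gives a time series
    print(len(points), min(xs) < 0 < max(xs))
```

Taking one coordinate of every point, as `xs` does, is the "projection onto an axis" that the narration goes on to describe.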

B

We can view a time series, then, as a projection from that manifold onto a coordinate axis of the state space. Here we see the projection onto axis X and the resulting time series recording displacements of X. This can be repeated on the other coordinate axes to generate other simultaneous time series. So these time series are really just projections of the manifold dynamics onto coordinate axes. Conversely, we can recreate the manifold by projecting the individual time series simultaneously back into the state space to create the flow.

B

In this panel we can see the three time series X, Y and Z, each of which is really a projection of the motion on that manifold, and what we're doing here is the opposite: we are taking the time series and projecting them back into the original 3D state space to recreate the manifold, that butterfly attractor.

B

B

There's a very powerful theorem, proven by Floris Takens, that shows generically that one can reconstruct a shadow version of the original manifold simply by looking at one of its time series projections. For example, consider the three time series shown here. These are all copies of each other: they are all copies of variable X, each displaced by an amount tau. So the top one is unlagged.

B

The second one is lagged by tau, and the blue one on the bottom is lagged by 2 tau. Takens' theorem says that we should be able to use these three time series as new coordinates and reconstruct a shadow version of the original butterfly manifold. So, let's see how this works. This is the reconstructed manifold produced from lags of a single variable, and you can see that it actually does look fairly similar to the butterfly attractor.

Each point in the three-dimensional reconstruction can be thought of as a time segment, with different points capturing different segments of the history of variable X, and the reconstructed manifold is then the library, or collection, of the historical behavior of X. The reconstruction preserves essential mathematical properties of the original system, such as the topology of the manifold and its Lyapunov exponents. More importantly, this method represents a one-to-one mapping between the original manifold, the butterfly attractor, and the reconstruction M_X, allowing us to recover states of the original dynamic system by using lags of just a single time series.
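Editor's sketch: the lagged-copies construction described above (X, X lagged by tau, X lagged by 2 tau used as new coordinates) is usually called delay embedding. The helper below is a minimal illustration under that reading; the name `delay_embed` and its parameters are hypothetical, not from the talk or the video.

```python
import math


def delay_embed(series, dim=3, tau=1):
    """Build delay vectors (x[t], x[t - tau], ..., x[t - (dim - 1) * tau]).

    Each returned tuple is one point of the reconstructed "shadow" manifold,
    i.e. a short segment of the history of the single variable.
    """
    start = (dim - 1) * tau  # first index with a full history available
    return [
        tuple(series[t - k * tau] for k in range(dim))
        for t in range(start, len(series))
    ]


if __name__ == "__main__":
    # Toy series standing in for variable X (an assumption for illustration).
    x = [math.sin(0.1 * t) for t in range(200)]
    shadow = delay_embed(x, dim=3, tau=5)
    print(len(shadow), shadow[0])
```

Plotting the three components of each tuple against one another gives the kind of reconstructed picture the narration refers to; the choice of `dim` and `tau` is left to the user here.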

B

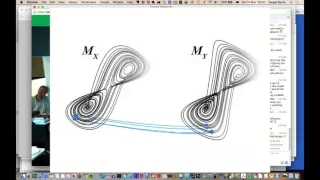

Takens' theorem gives us a one-to-one map between the original manifold and the reconstructed shadow manifolds. Here we will explain how this important aspect of attractor reconstruction can be used to determine if two time series variables belong to the same dynamic system and are thus causally related. This particular reconstruction is based on lags of variable X. If we now do the same for variable Y, we find something similar.

B

Here we see the original manifold M, as well as the shadow manifolds M_X and M_Y created from lags of X and Y respectively. Because both M_X and M_Y map one-to-one to the original manifold M, they also map one-to-one to each other. This implies that points that are nearby on the manifold M_Y correspond to points that are also nearby on M_X.

B

We can demonstrate this principle by finding the nearest neighbors in M_Y and using their time indices to find the corresponding points in M_X. These points will be nearest neighbors on M_X only if X and Y are causally related. Thus, we can use nearby points on M_Y to identify nearby points on M_X. This allows us to use the historical record of Y to estimate the states of X and vice versa, a technique we call cross mapping. With longer time series the reconstructed manifolds are denser, nearest neighbors are closer, and the cross map estimates increase in precision.
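Editor's sketch: the cross-mapping procedure just narrated (find nearest neighbors on the shadow manifold of Y, reuse their time indices to estimate X) can be illustrated as follows. This is a simplified reading, not the published algorithm in full: the exponential distance weighting, the choice of dim + 1 neighbors, and all names are assumptions made for the example.

```python
import math


def delay_embed(series, dim, tau):
    """Delay vectors (x[t], x[t - tau], ...) for every t with a full history."""
    start = (dim - 1) * tau
    return [tuple(series[t - k * tau] for k in range(dim))
            for t in range(start, len(series))]


def cross_map(source, target, dim=2, tau=5):
    """Estimate `target` from the shadow manifold built on lags of `source`.

    Returns (estimates, actual) aligned in time, so the two lists can be
    compared directly.
    """
    shadow = delay_embed(source, dim, tau)
    offset = (dim - 1) * tau  # shadow[i] corresponds to time index i + offset
    estimates = []
    for i, point in enumerate(shadow):
        # Nearest neighbors of this point on the source manifold,
        # leaving out the point itself.
        nearest = sorted(
            (math.dist(point, other), j)
            for j, other in enumerate(shadow) if j != i
        )[: dim + 1]
        scale = max(nearest[0][0], 1e-12)
        weights = [math.exp(-d / scale) for d, _ in nearest]
        # Use the neighbors' time indices to look up the target variable.
        estimate = sum(w * target[j + offset]
                       for w, (_, j) in zip(weights, nearest)) / sum(weights)
        estimates.append(estimate)
    return estimates, target[offset:]


if __name__ == "__main__":
    # Two observations of the same toy oscillator (illustrative data only).
    x = [math.sin(0.1 * t) for t in range(500)]
    y = [math.sin(0.1 * t + 1.0) for t in range(500)]
    est, actual = cross_map(y, x)
    error = sum(abs(a - b) for a, b in zip(est, actual)) / len(est)
    print(round(error, 4))
```

A small average error here only shows that, for this toy pair, neighbors on one manifold point at neighbors on the other; testing for causality on real data would also require checking how the estimate improves as the series gets longer, as the narration notes.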

C

A

A

Now, what the theorem in the middle of that video talks about, which is Takens' theorem, mathematically proves that this must be true of certain types of well-behaved systems. But since nineteen eighty or so, in every maths department in the world, in every university, if you go down and look at what they're studying, they're studying this, right? They're studying the fact that these things can actually be managed in a non-analytic way.

A

And so this is the important thing. There was actually a professor of applied mathematics at the New York City meetup, and I went through this entire thing with him, and he said, yeah, that's what we've been doing for the last 20, 25 years. So he guarantees that this is completely valid, and this is what all these guys have been doing. The problem is that the mathematics of it is very complicated, so practically nobody else knows about it, but this is what all these guys are studying all the time.

A

So just to give you an example: this is the same sort of thing as you saw in the previous video. It's a time-lag X, Y, Z plot of the hot gym data, and I've done a similar thing where I've projected off graphs of the... I'm not able to see what you're seeing now, so I'm presuming you see it. So there are graphs going off on the X, Y, Z axes where you can see what looks like the familiar hot gym waveform.

A

But essentially, this is the same sort of thing. The theorem says that if you can basically project that data into a high enough dimensional space (and certainly a region of neocortex is going to give you a high enough one: the SDRs are high enough dimensional vectors), then you can essentially take in that data, absorb it, merge it with the dynamics of the region itself, and perform this operation that these guys have been saying is vital to how coupled dynamical systems work.

A

A

C

A

Okay, so just to put that into perspective, I'm going to go through this very quickly. This requires the prediction-assisted CLA, which is this type of column, where every cell has its own proximal dendrite. That is the one thing that is required for this. So this is the vector version of pattern memory, or spatial pooling, and this is important because essentially what each column is trying to do, when it gets feed-forward input, is approximate that input.

So the green vectors here are close to the input, so they have a lot of overlap with it in the HTM sense, while these vectors here have much less overlap, or are perpendicular to it. And these are the ones that participate in the SDR. So you can imagine that, given an input, there's a kind of cluster of column representations that closely match that input.
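Editor's sketch: the "overlap in the HTM sense" mentioned above can be read as a dot product between a binary input vector and each column's binary connection vector, with the best-matching columns forming the SDR. The code below is an illustrative reduction under that assumption; it is not NuPIC's implementation, and the function names and toy vectors are hypothetical.

```python
def overlaps(input_bits, connections):
    """Dot product of a binary input with each column's connection vector."""
    return [sum(i * c for i, c in zip(input_bits, column))
            for column in connections]


def k_winners(scores, k):
    """Indices of the k highest-scoring columns (ties go to the lower index)."""
    ranked = sorted(range(len(scores)), key=lambda j: (-scores[j], j))
    return sorted(ranked[:k])


if __name__ == "__main__":
    input_bits = [1, 1, 0, 0, 1, 0]
    connections = [
        [1, 1, 0, 0, 1, 0],  # closely matches the input
        [0, 0, 1, 1, 0, 1],  # "perpendicular": no shared active bits
        [1, 0, 0, 0, 1, 1],
        [0, 1, 1, 0, 0, 0],
    ]
    scores = overlaps(input_bits, connections)
    print(scores, k_winners(scores, k=2))
```

Columns whose connection vectors share many active bits with the input score highest, which corresponds to the cluster of closely matching columns described in the talk.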

A

Okay, and then transition memory is the same sort of thing, and when those two are mixed you get basically a current injection that is a mixture of both the feed-forward input and the predicted input from the distal dendrites, and then that's just the equivalent time to fire. And this is really the key diagram about how that works. So, above you see there are a number of columns and, in those columns, three numbers. The green ones are basically where a cell has fired because it's predictive, and the brown ones are bursting columns.

A

So you can see that there's a sequence of the predictive columns firing first, followed by the inhibition, and then followed by bursting columns. If you follow the sequence of those, you can see that there's a list of cells that fire first, the green ones, then there's a list of ones that are bursting, and those form into this kind of sequence here, which is pyramidal predictive cells, inhibitory cells, then bursting cells, and then inhibitory spreading cells. Those form basically a vector which is a predictive vector and a perpendicular bursting vector.

A

And that's the signal that that layer of cortex is going to produce. So when you translate that back into this dynamical systems thing, transition prediction is basically where a set of nearest neighbors is able to predict what's going to happen next in this kind of vector space of these high-dimensional manifolds, and multi-predictions work very similarly. So this is a thing where two predictions are taking place, and basically what they're doing is saying: am I in one regime or am I in the other regime, and how are the dynamics of the inputs changing?

That then feeds through to L5, which again forms a coupled dynamical system with the motor output, and then L6 is controlling all of these different activities by either blocking or actually creating its own version of the inputs. So, for example, L6 can shut off inputs and then drive L4, and drive the entire rest of the region, to simulate forward. I think that's something that Eric has been working on, something very, very similar to that.
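Editor's sketch: the idea mentioned earlier in this turn, that a set of nearest neighbors on the reconstructed manifold can predict what happens next, can be illustrated outside of HTM with a plain nearest-neighbor forecaster on delay vectors. This is a generic time-series sketch, not the cortical mechanism the speaker proposes; the weighting scheme, defaults and names are assumptions.

```python
import math


def delay_embed(series, dim, tau):
    """Delay vectors (x[t], x[t - tau], ...) for every t with a full history."""
    start = (dim - 1) * tau
    return [tuple(series[t - k * tau] for k in range(dim))
            for t in range(start, len(series))]


def forecast_next(series, dim=3, tau=5, k=4):
    """Predict the next value from what followed the k most similar past states."""
    vectors = delay_embed(series, dim, tau)
    offset = (dim - 1) * tau  # vectors[i] describes the state at time i + offset
    query = vectors[-1]       # the current state
    # Only earlier states are candidates, because their successors are known.
    nearest = sorted(
        (math.dist(query, v), i) for i, v in enumerate(vectors[:-1])
    )[:k]
    scale = max(nearest[0][0], 1e-12)
    weights = [math.exp(-d / scale) for d, _ in nearest]
    return sum(w * series[i + offset + 1]
               for w, (_, i) in zip(weights, nearest)) / sum(weights)


if __name__ == "__main__":
    history = [math.sin(0.1 * t) for t in range(400)]
    predicted = forecast_next(history)
    print(round(predicted, 3), round(math.sin(0.1 * 400), 3))
```

No equation of the underlying system is used anywhere: the forecast comes only from looking up how similar past states evolved.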

A

So, for example, the bird flock you saw, when it was on its own, without any predator, had one type of dynamic, whereas when it was being attacked it split into two, or it buckled, or whatever it did, and we can see that and perceive that. That's going to happen in L2: it is going to see that there's going to be a shift of the dynamics in L4.

A

We can also model the evolution of a non-stationary system. That's the same sort of thing, and it involves L2/3 going through a sequence of recognitions of what's going on in L4. We can also extract chaotic signal from noise, and this is something again that comes very much from the applied maths guys. We can also detect and measure causal relationships; I think that was referred to in that video. It's actually called Sugihara causality, this metric, and you can do that directly in a region of cortex. And you can also communicate between regions, and that's just simply by coupling the dynamics of different regions together. So, for example, the default network in the neocortex is basically about these things.

A

These guys didn't really have a computational substrate to base this on, and this is really the thing that I'm doing: coupling the neuroscience, modeled using HTM, to all the stuff that these guys have been talking about. You can also obviously control your limbs, tools and mechanical devices, and you can do this in a very skillful way that involves taking chaos and turning it into limit cycles, making sure that it's much more predictable.

A

OK, so just to sum up: the hypothesis is that, just like a digital computer is a universal symbolic computer, the brain is a universal dynamical systems computer. We now have a really good mathematical model for cortical function: layer 4 models the dynamics, layers 2 and 3 characterize the real world, layer 1 provides top-down context, layer 5 acts on the world, and layer 6, under this model, is the operating system of the brain. So that's very much it.

C

A

D

D

D

So in the first diagram you showed a manifold from which, basically, you're saying you can extract dimensionality, and the dimensionality you extract has a pattern, and maybe those patterns are offset in a window-type fashion, and then those patterns can be replayed back and you can reconstruct a version of the manifold from that. First off, I don't know if that's correct, but you can tell me that in a second. I'm trying to understand how that relates back to prediction.

A

Okay, so the idea is that, of all possible worlds, all possible things that could happen, only a very, very small number of those things really do happen, right? So, for example, if you drop a glass from six feet, it'll smash into millions of pieces. Those pieces will not reassemble, okay?

D

A

The world only works in a small number of ways, out of all the possible ways that it could possibly work. Those things are governed, we now know, by all these mathematical equations and by entropy and all this kind of stuff, but evolution doesn't know that, and our brains don't really understand that. What we do is learn the small subset of things that really happen in the world, and we don't learn them by learning equations.

A

What we do is learn by following how they evolve, how we see them actually happening. What the mathematics shows is that you can just do that. You don't need to understand what the equations driving the dynamical system are. A dynamical system just means it's something that has mathematics ruling it, deciding what happens next, but you don't need to know the mathematics. All you need to do is just watch the thing, and if you have a suitable apparatus in your head, then you can learn to build a model of that thing that works the same way as the thing itself.

That's what this Takens' theorem proves mathematically, for a certain small subset of these types of systems. But it turns out that for these guys, over the last 20 or 25 years, for every system they look at, this works, right? So you don't need to understand the exact details of how those things work. All you need to do is find some time series of metrics and be able to model those transitions, and you'll be able to make forecasts and predictions.

So, for example, in weather forecasting this is the type of thing that they use. The supercomputers doing weather forecasting no longer run these physical models. What they do is this type of prediction: they follow the dynamics, and they just use this time-series reconstruction and coupled dynamical systems to do this. The weather forecasters are doing this because weather is really hard, and this is literally the state of the art in all of these types of systems. But nature discovered this millions of years ago. Nature discovered that it didn't have to solve differential equations.

A

It just had to build some way of soaking up the stream of information coming off these systems, and it just works, and the mathematics now shows that it does work. What I'm saying is that that's why our brains are doing this, and why we have L4 and L2/3 and L5, and L6 controlling it all.

A

So the point is you have to show the mathematics in order for people to believe it, right, and then get some professors and they'll say, yeah, that's all kosher, which I have done, and then you just need to trust it, because I don't understand it any more than you do. Have them watch this.

C

A

C

A

I don't know; a system, it's just something that evolves over time, in some sort of a deterministic way. You know, certainly everything that happens in your brain is one of those, and at every level you look at, it's just that. It's always too complex to model directly mathematically, so there's no doubt about that, and, you know, half of neuroscience is based on treating neuroscience as a dynamic, complex adaptive system.

A

What I'm saying is that it's not about information in this step-by-step way, and analysis, which is what, you know, good old-fashioned AI talked about. That's a construct that we put on top of it; only we're capable of doing that. What every mammal is doing with its neocortex automatically is just this process of using a dynamical system to model a dynamical system, to interact with a dynamical system, to make predictions about it, and then coupling those together to perform cognition.

A

E

A

They are modeled in the formation of SDRs, you know, so they're implicitly modeled in the local inhibition algorithm in NuPIC's HTM, for example. They are modeled, but the dynamics of them are not directly modeled. My dynamics of those are much simpler; they're just a very, very small extension of what NuPIC is doing, for example.

D

A

And I have been working on trying to get that working with HTM.Java, so we might see it in NuPIC quite soon. But that's a very simple thing; it's very, very similar to what NuPIC is currently doing.

A

NuPIC is able to model this stuff directly, right? This is about taking a step-by-step, snapshot-by-snapshot kind of thing, which is a simplification of what's actually happening in real neocortex, but this is good enough to do this. There are discrete versions of this that work just as well. The dynamical modeling of inhibition and excitation is very, very complex. It's like what Jeff was saying before: you don't need to model every single detail of every single process that goes on in the neocortex in order to figure out what's going on computationally.

The plan is to see whether we can show this happening using the step-by-step thing that HTM uses, and I'm very confident I can. There's been a lot of work doing this using RNNs and, you know, a lot of other types of simplified neural networks as well. They don't have the complexity that HTM has, the computational power that HTM has, and I think that's what's going to make the difference in establishing this using HTM.

F

Hi Fergal, this is Mattea. Thank you for your presentation. I actually read your paper on this, and there's a couple of questions that I had. I only got to read your paper once, so I didn't do a really deep dive, but one thing I was wondering was whether you have made any attempts to do any proofs, with the principles that you've introduced, to prove the fidelity of the model.

A

I think that's already been shown. To show that is to provide a mathematical model for HTM, right? The mathematical model is just literally derived from the algorithms, so you can look at the code and you can see that you're doing a vector dot product, for example, when you're doing SP. And when you do the learning on that, all you're doing is just Hebbian learning: you're just adjusting the permanence, and you're increasing the probability of a match between a single column's connection vector and an input vector. You're just improving the match, right? So it's inherently stable, and there are loads of results that show that when you have a system like that and you add in a k-winner-takes-all algorithm (I think that's referred to in part of that first paper), it can do any binary computation you like.

So there are proofs of that, and it's also one of the most efficient ways of doing it in a single layer; otherwise you have to do it in a multi-layer network, and that would have much lower stability. So all those results are well known once you can characterize what the mathematics of HTM are, which is what that first paper is really all about. Other people have proven all of those things.
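Editor's sketch: the Hebbian step described in this answer (adjust the permanences so that a winning column's connection vector matches the input better) might look like the following in its simplest form. The increment, decrement and threshold values are illustrative assumptions, as are the function names; this is not taken from NuPIC or from the paper.

```python
def hebbian_update(permanences, input_bits, inc=0.1, dec=0.05):
    """Raise permanences on active input bits and lower them on inactive ones.

    Values are clipped to [0, 1]. `inc` and `dec` are made-up example rates.
    """
    return [
        min(1.0, p + inc) if bit else max(0.0, p - dec)
        for p, bit in zip(permanences, input_bits)
    ]


def connection_vector(permanences, threshold=0.5):
    """Binary vector of synapses whose permanence has reached the threshold."""
    return [1 if p >= threshold else 0 for p in permanences]


def overlap(input_bits, connections):
    """Dot product between the binary input and the binary connection vector."""
    return sum(i * c for i, c in zip(input_bits, connections))


if __name__ == "__main__":
    input_bits = [1, 0, 1, 0]
    perms = [0.45, 0.52, 0.30, 0.10]
    before = overlap(input_bits, connection_vector(perms))
    perms = hebbian_update(perms, input_bits)
    after = overlap(input_bits, connection_vector(perms))
    print(before, after)
```

Repeating the update on the same input can only keep or increase that column's overlap with it, which is one way to read the remark that learning is "just improving the match".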

A

So I could probably dig up some of those results; there's no doubt. And I just saw a talk by Hinton, who keeps on inadvertently veering towards what we're doing, and he has an entire thing about how his type of model can be replicated with back propagation, using very similar ideas to what I'm talking about, which is the top-down feedback, the stuff that Jeff and co. have developed. There's a closer bridge to it, but Hinton has shown that all of these things work as well.

A

C

We're running late, we're past time. Fergal, we wish you were here. Sorry you couldn't make it. Thanks for connecting with us and giving this talk. The rest of the talks are not going to be remote. They're all going to be here, so I need five minutes to switch my configuration a bit, and we'll probably do Subutai, Felix, and... Subutai's is going to be a whiteboard chalk talk, but he's willing to go over, you know, for 10 or 15 minutes, the current research state. We were to talk about what's happening in research; it wouldn't be bad to do it. Is anyone interested in seeing what's happening in research? Okay, so I'll just pull the whiteboard over here and we'll set him up, and then we'll probably do Felix, Chandan, Sergei, and I'll go last, time permitting.