
From YouTube: Apache TVM Community Meeting, September 23 2021

Description

Featured Agenda:

* Roadmap preRFC

* 0.8 Release News - Junru Shao

Join the TVM Community at http://tvm.apache.org/community

A

All right, with nobody stepping up, I still want to say welcome to everyone. We also have a few announcements. Over the last month, the TVM community has continued to grow, and a lot of its contributors have made significant contributions. We'd like to recognize them for that by promoting them to committer or reviewer status. Giuseppe Rossini is a new committer.

A

They have made a lot of contributions, and they have also demonstrated a commitment to reviewing other people's patches. This is a reminder that open source projects depend on a community coming together and collaborating. That means not just sending pull requests but also participating in the review process. So we want to thank everybody who has been contributing to TVM and who has really shown their commitment to the project and to making it better. A big round of congratulations to everyone on the list.

A

So keep your eyes open over the next few weeks: we're looking for talks, whether short lightning talks, larger feature presentations, or case studies, related not just to TVM but also to machine learning acceleration. If there's any work you're doing on TVM, or on adjacent projects that you think would be of general interest to the TVM community, please submit a proposal to the CFP. It closes in October, and we're building a fantastic schedule this year.

A

It's going to be an entirely free conference again, but if you're interested in sponsoring, please feel free to reach out to me at events@octoml.ai. We're trying to make the event larger, bring in more speakers, and make it a more polished experience, so if you're interested in participating and helping realize that vision, we would love to hear from you. Again, that's events@octoml.ai.

B

I'll talk a little more about that later. What I'm trying to say here is that there's some really fine-grained planning, like the individual task tracking on GitHub, and then there are some higher-level technical visions, like some of the things that TQ posts or some of the RFCs that all of you in the community post. We're figuring out how to unify all of those into one easily readable context.

B

The next goal is to provide everyone with visibility into all of TVM's projects. This is very useful for people who are newer to the project, or for people who focus on one particular area of TVM and want to discover more of it. We're really aiming to make it easy for everyone to discover the different levels of the stack, what we're doing on the community side, and so on. The last goal is to encourage collaborative and open planning.

B

There are so many contributors to the open source community, and we really want to make sure that everyone is working in a synergistic way, that we're all working to solve the same problems, and that we're working together. I think having a roadmap and clear ownership will really help with that.

B

It shows multiple features getting added into versions of TVM. Lastly, on the rightmost side, we have a technical vision: this is where we see the project going. TQ has posted these for the past couple of releases; basically, for each level of the stack there's a short overview of the features to be created. All of these are great, and the roadmap is not meant to replace any one of them. It's meant to unify them and make them more readable and more discoverable for the community.

B

There are four primary areas of this roadmap that I'd like to walk you through today. The first one is the background and motivations. This is really helpful for someone who is a little newer to this particular subproject or this particular piece of TVM: it gives an overview of the key themes for whatever project or subproject the roadmap is covering, in this case CI and testing.

B

Maybe they see something that they would like to change in TVM, and this is a higher-visibility way of showing it than simply filing a GitHub issue. Once we have roadmaps for each of the subprojects in TVM, it'll be really nice to have a backlog for each of those projects, so that people who are new to the project can look at this context and understand exactly the impact they're having on the project as they contribute to the backlog and move those items onto our roadmap.

B

Okay, going into some of the design considerations, I first wanted to talk about content. Content is really key, and I want to make sure that the content here is really useful and easy for people to digest. So I really want to emphasize that we want to give people the background information they need to be able to synthesize any roadmap, whether or not they're an expert.

B

Each of the roadmaps should contain themes, which encapsulate the key focus areas of the roadmap; you saw that in the demo I just presented. The roadmap should then contain items, which describe either features or milestones to be implemented within the timeline of that roadmap. What I didn't show you is the actual content within the items, so I'll describe it briefly here. Each item should have clearly defined motivations, end goals, deliverables, and scope, based on the consensus that everyone in the community has come to. Dependencies and blockers should be easily traceable across roadmaps. The delivery schedule, quarter by quarter, should be estimated based on those priorities, dependencies, and blockers, and it should be updated as our confidence increases, whether that's from PRs merging, RFCs merging, or anything else. The last one I wanted to cover is ownership. This is especially important in a community with so many collaborators.
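The item fields just listed (motivation, end goals, deliverables, scope, ownership, a delivery quarter, and dependencies traceable across roadmaps) can be sketched as a small data structure. This is a minimal illustration under a hypothetical schema: `RoadmapItem`, `trace_blockers`, `schedule_conflicts`, and the `"2022Q1"` quarter format are invented for this example and are not part of any TVM roadmap tooling.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class RoadmapItem:
    """One roadmap item (hypothetical schema, not TVM tooling)."""
    key: str                        # unique ID across all roadmaps
    motivation: str
    end_goal: str
    deliverables: List[str]
    scope: str
    owner: str                      # who is accountable for the item
    quarter: Optional[str] = None   # estimated delivery quarter, e.g. "2022Q1"
    depends_on: List[str] = field(default_factory=list)  # keys of blocking items


def trace_blockers(key: str, items: Dict[str, RoadmapItem]) -> List[str]:
    """Return every item `key` transitively depends on, sorted by key."""
    seen = set()
    stack = list(items[key].depends_on)
    while stack:
        dep = stack.pop()
        if dep not in seen:
            seen.add(dep)
            stack.extend(items[dep].depends_on)
    return sorted(seen)


def schedule_conflicts(items: Dict[str, RoadmapItem]) -> List[Tuple[str, str]]:
    """Find (item, dependency) pairs where a scheduled item would land
    before its dependency, or its dependency is not scheduled at all."""
    conflicts = []
    for item in items.values():
        if item.quarter is None:
            continue  # unscheduled items cannot conflict yet
        for dep_key in item.depends_on:
            dep = items[dep_key]
            # Zero-padded "YYYYQn" strings sort chronologically.
            if dep.quarter is None or dep.quarter > item.quarter:
                conflicts.append((item.key, dep_key))
    return conflicts
```

Keeping dependencies as keys rather than object references is what lets an item in one roadmap point at a blocker in another.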

B

Another design consideration we had for this roadmap is overall usability and maintainability, and there are a few key themes here. We want to encourage sharing and collaboration in the early stages of planning, and I really see the roadmap as something we can start using as a pre-RFC incubator. What I mean by this is that a roadmap can describe what changes are desired.

B

You can describe a problem that you think needs fixing in TVM, or something that you think would be an improvement to TVM, without proposing an immediate solution. The RFC then goes into more detail and describes how the changes will be implemented. This can be a virtuous cycle where things are initially defined in the roadmap: "I may want to work on this in the future, so I'll put it in the backlog." Then you create an RFC, and once that's merged, you can move the item back into the roadmap and then into the particular quarter where it's delivered.

The next key principle is leveraging our existing task-tracking mechanisms, the ones shown in the prior-art slide I showed earlier. This makes maintenance easier for everyday contributors, and it means we can show updated progress toward feature completion automatically as people merge PRs.
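The backlog, RFC, roadmap, and delivery-quarter cycle described above can be modeled as a small state machine. This is a sketch under assumptions: the stage names and allowed transitions are a reading of the talk, and `Stage`, `TRANSITIONS`, and `LifecycleItem` are hypothetical names. A scheduled item may be re-scheduled so its quarter can be updated as confidence changes.

```python
from enum import Enum
from typing import List, Optional


class Stage(Enum):
    BACKLOG = "backlog"      # problem or idea described, no solution yet
    RFC_OPEN = "rfc-open"    # RFC written and under community discussion
    ROADMAP = "roadmap"      # RFC merged; item back on the roadmap
    SCHEDULED = "scheduled"  # assigned to a delivery quarter


# Allowed moves (an assumption based on the talk); anything else is rejected.
TRANSITIONS = {
    Stage.BACKLOG: {Stage.RFC_OPEN},
    Stage.RFC_OPEN: {Stage.ROADMAP, Stage.BACKLOG},  # merged, or sent back
    Stage.ROADMAP: {Stage.SCHEDULED},
    Stage.SCHEDULED: {Stage.SCHEDULED},  # re-estimate the quarter
}


class LifecycleItem:
    """A hypothetical roadmap item moving through the cycle."""

    def __init__(self, title: str) -> None:
        self.title = title
        self.stage = Stage.BACKLOG
        self.quarter: Optional[str] = None
        self.history: List[Stage] = [Stage.BACKLOG]

    def advance(self, new_stage: Stage, quarter: Optional[str] = None) -> None:
        if new_stage not in TRANSITIONS[self.stage]:
            raise ValueError(f"cannot move from {self.stage.value} to {new_stage.value}")
        if new_stage is Stage.SCHEDULED:
            if quarter is None:
                raise ValueError("a scheduled item needs a delivery quarter")
            self.quarter = quarter
        self.stage = new_stage
        self.history.append(new_stage)


item = LifecycleItem("template-free and template-based scheduling")
item.advance(Stage.RFC_OPEN)
item.advance(Stage.ROADMAP)               # RFC merged
item.advance(Stage.SCHEDULED, "2022Q1")
item.advance(Stage.SCHEDULED, "2022Q2")   # confidence changed; re-estimate
```

Rejecting a jump straight from the backlog to a quarter encodes the rule that bigger changes still go through an RFC first.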

B

B

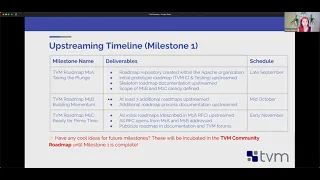

And

so,

lastly,

I

wanted

to

talk

about

some

of

the

upstreaming

timelines.

I

consider

this

to

be

overall

milestone

one

and

so

the

overall

goal

of

milestone

one

is

to

get

the

overall

tvm

roadmap,

as

well

as

the

rest

of

the

sub

component

roadmaps,

upstreamed

and

to

get

all

opens

in

the

rfcs

addressed

and

to

have

documentation

and

public

like

pr

type

things

for

this

roadmap,

and

so

I

split

that

into

three

phases.

B

This one, M1a, is the first one I'll make an RFC on, in a little bit. Basically, I want to upstream the initial prototype that I showed earlier in the demo, and then I'll have some documentation as well as some scoping for the future milestones. In the future milestones, I'll start upstreaming additional content that I've created in collaboration with the community. By the time Milestone 1 is complete, all of the roadmaps will be upstreamed, like I said, and all of the open questions in the RFCs will be addressed. If you have any ideas for how we can improve the roadmapping process itself, we can incubate them in the TVM community roadmap until Milestone 1 is complete; they'll just enter the backlog and we'll work on them eventually.

B

Okay, so with that, thank you so much for your time and for listening to me for ten-ish minutes. All feedback is welcome. I especially want to thank the folks on this page for all their feedback and support as I've worked on this roadmap, in terms of helping me with content and giving me feedback; it's been really helpful.

C

Thanks, Denise, it's a really great presentation. One thing I'm thinking about is that, as a distributed community, we are always working together across different companies. So I'd like to ask what the procedure will look like under the current roadmap if, for example, during a release process, a company comes up and says it wants to upstream features to Apache TVM to support its own chips.

B

Yeah, this is a great question. I think this process is really in its early stages, and we're going to be looking for feedback from the community as it develops. I think it's not going to be too different from the current RFC process, in that you will still make an RFC in the process of trying to merge your code, especially if it's a big feature change. Adding it to the roadmap is simply a way to get more visibility, as well as a place for earlier discussion prior to the RFC.

B

For instance, anyone should be able to file an issue in the roadmap repository and describe something they're interested in working on. If you were trying to describe AutoTIR at a very high level, you might say "I want to improve the scheduling infrastructure to use both template-free and template-based scheduling," and you wouldn't go into the details of how. You would create that item and perhaps go into some of its motivations, and that would be a roadmap item. As you go through the typical RFC and community consensus process, you can add the additional details to the roadmap, and then add the item to a delivery schedule as you build more confidence within the community that you're going to deliver it by a given timeline. Does that answer your question?

C

Yes, yes.

B

That is the plan: to reuse the tracking issues that are created and to unify them with the roadmap items, so that you get the motivations from the start, then some of the details from the RFC, and then, obviously, the tracking from the tracking issue. Unifying it all into a single place is a really important, key piece.

C

Got it. Thank you very much.

D

Overall, I agree with what was already said; I think a lot of this looks good. I would encourage people outside of those of us who work closely together every day to provide feedback, if not in this session, then afterwards. We're trying to improve a lot of the planning process, at the meta level for the project, to give some more transparency.

A

Yeah, I think this is a really exciting and well-thought-out process to encourage larger community collaboration in the project, and I'm really excited to see it move forward and see how it turns out. So thank you, Denise, for all of the work you've put into this. I think it's going to be an important and lasting contribution to the TVM community.

A

Okay, once again, if you have any more questions, please reach out either on the Discuss forum or in the Discord channel. We're open to a lot of feedback on this, and Denise is going to take questions and suggestions from the community seriously. We want this to be a big community process now, around the roadmap and what's happening with TVM.

C

So, some background: the previous release was last October, so it's been about a year since we released anything, and 0.8.0 is expected to be released in October this year. The goal of this release is, first, to introduce many useful major features as experimental features, and second, to stabilize previously experimental features, for example the auto-scheduler.

The release process is pretty simple. First, we do release planning: we had a thread to discuss with the community what features we want to include. For example, we might want to include some MetaSchedule features that are not yet upstream but will land really soon. Anyone who wants a specific commit included can comment on that release planning thread to make sure the commit is included. We also create proposals for the highlights of the release: we need to go over the commits from the previous year, find the major changes for the release, and decide the release cut date. That is the first stage. In the second stage, after we cut the release candidate, it produces a tarball as the artifact of the release, and the tarball is tested by the PMC and the community.
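One routine check on a release-candidate tarball in Apache projects is comparing it against its published checksum (alongside verifying the signature, which this sketch does not cover). A minimal sketch, assuming a `sha512sum`-style checksum file whose first whitespace-separated token is the hex digest; the file names in the usage are placeholders, not TVM's actual artifact names.

```python
import hashlib
from pathlib import Path


def sha512_of(path: Path, chunk_size: int = 1 << 20) -> str:
    """Hex SHA-512 of a file, read in 1 MiB chunks so large tarballs stay cheap."""
    digest = hashlib.sha512()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_sha512(tarball: Path, checksum_file: Path) -> bool:
    """Compare against a sha512sum-style file: '<hex digest>  <file name>'."""
    expected = Path(checksum_file).read_text().split()[0].lower()
    return sha512_of(tarball) == expected
```

For example, `verify_sha512(Path("rc.tar.gz"), Path("rc.tar.gz.sha512"))` returns `True` only if the downloaded bytes match the published digest.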

C

We introduced a lot of new features in microTVM, the Arm and Hexagon backends, operator coverage, the Metal backend, and documentation and tutorials. So that is the proposal for enhancements. We also have previous features stabilized. The major one is the auto-scheduler: it was an experimental feature in the last release, and after a year of testing and bug fixes it is finally production-ready. The Rust bindings have been stabilized as well.

C

I think it is a great question. Yes, it has been almost a year since the previous release, and we are sorting those commits, which run to about 65 pages, into different categories. In the future, it is certainly possible to do minor releases, like every...
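Sorting a year of commits into release-note categories can be partly automated when commit subjects start with a bracketed tag such as `[Relay]`. A sketch, assuming subjects collected with something like `git log --format=%s <previous-tag>..HEAD`; the tag-to-category table `CATEGORY_OF_TAG` is hypothetical and would need to be filled in by whoever prepares the notes.

```python
import re
from collections import defaultdict
from typing import Dict, List

# Hypothetical tag -> release-note category table; keys are normalized tags.
CATEGORY_OF_TAG = {
    "relay": "Relay",
    "tir": "TIR",
    "microtvm": "microTVM",
    "autoscheduler": "Auto-scheduler",
    "docs": "Documentation",
}

# Matches a leading "[Tag]" and captures the text inside the brackets.
TAG_RE = re.compile(r"^\s*\[([^\]]+)\]")


def normalize(tag: str) -> str:
    """'Auto-Scheduler' -> 'autoscheduler', 'microTVM' -> 'microtvm'."""
    return re.sub(r"[\s_-]", "", tag).lower()


def categorize(subjects: List[str]) -> Dict[str, List[str]]:
    """Group commit subjects by the category of their first bracketed tag."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for subject in subjects:
        match = TAG_RE.match(subject)
        tag = normalize(match.group(1)) if match else ""
        groups[CATEGORY_OF_TAG.get(tag, "Misc")].append(subject)
    return dict(groups)
```

Anything landing in `"Misc"` still needs a human pass, so the sketch only shrinks the manual work rather than replacing it.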

A

Yeah, and with regard to why TLCPack versus a TVM-branded release: TVM, especially if you're running on GPUs, depends heavily on proprietary drivers, and releasing open source software that's packaged with proprietary drivers can always lead to licensing problems and other issues. So to help identify software that has been linked against, say, NVIDIA drivers, we use TLCPack, which is almost like a branch of TVM.

F

I haven't looked at the latest contributions, but from what I've seen, the ONNX importer used to not support too many nodes; there were a lot of them missing. So is it going to be compliant with, let's say, opset 13, including some of the Microsoft extensions for quantized nodes?

H

That is active work. We are tracking the ONNX quantized nodes, and we've implemented probably 70 of them. We also now run the opset 13 tests against the importer in CI, and with a few PRs that are currently up, there are maybe a hundred unit tests, out of about a thousand that ONNX ships, that we're still failing. We're actively working on improving that support, so if you want to jump in and add any ops, we'd love the help.

F

Thank you.

D

Just to chime in on that: I think those folks have some tracking issues open. So if you want to synchronize efforts, or if there are some critical ops you need, feel free to reach out to Matt or Andrew; there are a couple of other people who've touched it at OctoML too. I think they'd be happy to help.

D

I was around when we were working on Rust and it went 1.0, and a lot of that process, for the six, eight, nine months beforehand, was really just triaging and figuring out what needed to go in, then maybe holding stuff back or marking things as experimental, and figuring out the backwards-compatibility story. Obviously, I don't think we have to be as rigid as they are; they've got really strong backwards-compatibility guarantees.

A

Okay, moving on to the last bit of the meeting: when we have time available, we like to open up the floor for any last-minute agenda items that people would like to add or ask questions about. Is there anything that folks have been curious about and would like to take this opportunity to discuss with the larger community?

G

Yeah, so I went through the documentation and also the code base to see what the pass manager and pass infrastructure have to offer. Currently, it only has time profiling for the individual passes. But if I want to test out different passes and combinations, like what we have in GCC and LLVM, are we planning to extend it with memory profiling? I'm interested in this area, so I'm just asking.
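To make the question concrete: a pass instrument is a hook that runs around each pass, so memory can be recorded the same way timing is. The sketch below does not use TVM's API; plain Python functions stand in for passes, and the standard `tracemalloc` module records the peak Python-heap allocation during each one. It cannot see native (C++) allocations, which is part of why doing this properly inside a compiler is harder. `TimingMemoryInstrument`, `run_pipeline`, and the toy passes are hypothetical.

```python
import time
import tracemalloc
from typing import Callable, List, Tuple

Module = List[int]  # toy stand-in for an IR module
Pass = Callable[[Module], Module]


class TimingMemoryInstrument:
    """Hypothetical instrument: wall time and peak traced memory per pass."""

    def __init__(self) -> None:
        self.records: List[Tuple[str, float, int]] = []  # (name, seconds, peak bytes)

    def run_pass(self, pass_fn: Pass, module: Module) -> Module:
        tracemalloc.start()  # traces Python-heap allocations only
        start = time.perf_counter()
        try:
            result = pass_fn(module)
        finally:
            elapsed = time.perf_counter() - start
            _, peak = tracemalloc.get_traced_memory()
            tracemalloc.stop()
        self.records.append((pass_fn.__name__, elapsed, peak))
        return result


def run_pipeline(passes: List[Pass], module: Module, inst: TimingMemoryInstrument) -> Module:
    for pass_fn in passes:
        module = inst.run_pass(pass_fn, module)
    return module


def drop_zeros(mod: Module) -> Module:
    """Toy pass: removes zero entries."""
    return [x for x in mod if x != 0]


def duplicate(mod: Module) -> Module:
    """Toy pass that allocates a larger result."""
    return mod + mod


inst = TimingMemoryInstrument()
out = run_pipeline([drop_zeros, duplicate], [0, 1, 2, 0, 3], inst)
for name, seconds, peak in inst.records:
    print(f"{name}: {seconds * 1e6:.1f} us, peak {peak} bytes")
```

Running the same passes in a different order, or dropping one, and comparing `inst.records` is the kind of combination testing the question describes.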

D

One of the things that Tristan did earlier this year was try to unify a lot of the timing and profiling code, because before it was kind of per target, and there was less generic, unified support. The graph runtime profiler, I think, gives some estimates, but improving that kind of stuff, and also improving it for the generated kernels, would definitely be worth doing. And if you have ideas, I think everyone's happy to hear them.

H

Yeah, I think a lot of people would like to get memory usage information from the kernels when profiling, but it's a little tough to do, especially in a cross-platform way. I've been looking at various approaches, but it's not really on my roadmap, and I haven't found a really good way to do it.

G

Okay, thanks. Same here: I'm interested in this, but I don't have much insight into how I could do it. So that is one thing. Apart from that, to measure, say, power consumption and things like that, we'd prefer to use an external profiling tool, right? Am I correct on that?

D

Yeah, I think the thing is, if one knows how to get the profiling data they care about for their platform, we're happy to advise on how to hook that in, in a way that will be generic, with a standard API for everyone, and then iterate. That's where Tris and I ended up the last time we were looking at this stuff: every GPU is slightly different, every accelerator is different, and even the CPU stories are sometimes different. So it comes down to understanding exactly what the best way to do it is.

Another thing that might be useful, if you find yourself using external tools, is contributing back whatever you have for doing this with TVM-generated code, or for understanding whether there are weaknesses that we need to help address.
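The "generic hook with a standard API" idea can be sketched as a small collector interface: each platform supplies its own collector, and the profiler merges whatever metrics they report around a kernel call. This is an illustrative assumption, not TVM's profiler API; `MetricCollector`, `WallClockCollector`, `CallCountCollector`, and `profile_kernel` are hypothetical, and the call counter merely stands in for a vendor power or memory counter.

```python
import time
from abc import ABC, abstractmethod
from typing import Any, Callable, Dict, List, Sequence, Tuple


class MetricCollector(ABC):
    """Hypothetical per-platform hook that measures around one kernel call."""

    @abstractmethod
    def start(self) -> None: ...

    @abstractmethod
    def stop(self) -> Dict[str, float]: ...


class WallClockCollector(MetricCollector):
    def start(self) -> None:
        self._t0 = time.perf_counter()

    def stop(self) -> Dict[str, float]:
        return {"wall_s": time.perf_counter() - self._t0}


class CallCountCollector(MetricCollector):
    """Stand-in for a vendor counter (power, memory, ...): counts calls."""

    def __init__(self) -> None:
        self.calls = 0

    def start(self) -> None:
        pass

    def stop(self) -> Dict[str, float]:
        self.calls += 1
        return {"calls": self.calls}


def profile_kernel(kernel: Callable[..., Any], args: Sequence[Any],
                   collectors: List[MetricCollector]) -> Tuple[Any, Dict[str, float]]:
    """Run `kernel(*args)` with every collector active; merge their metrics."""
    for c in collectors:
        c.start()
    result = kernel(*args)
    metrics: Dict[str, float] = {}
    for c in reversed(collectors):  # stop in reverse order, like nested timers
        metrics.update(c.stop())
    return result, metrics
```

The design choice is that the profiler only knows the two-method interface, so a new accelerator's counters plug in without changing the profiling loop.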

D

I don't know if anyone is explicitly working on it at OctoML right now. The Arm folks are doing a lot of stuff, but it's mostly Ethos-U focused, and maybe someone else has more data on this. One thing is that all the tools are there to start iterating on it: just try it out and see what weaknesses there are in terms of code generation. At least as of a year ago, compared to competing solutions, Arm performance in TVM was very good if you auto-tune. We found that other libraries were not very robust in terms of coverage, or in performance across varying workloads. At least when we wrote up the VM paper, which, again, we can talk about on the Discuss forum, we had very good Arm results on a few important models like BERT and a few other things, so I wouldn't expect you to have much difficulty there.

G

So I posted one question yesterday, and early this morning I was able to fix it. Basically, Relay was giving me a segmentation fault. Fujitsu provides a custom, co-designed TensorFlow, and that was having some environment issues internally. So yes, it was a tough bit, but I could fix it, and I will close that thread on Discourse. These are the few things that I'm facing.