From YouTube: 009 ONNX 20211021 Knight ONNX TVM for dynamic shapes, control flow, quantization compiler OctoML

Description

LF AI & Data Day - ONNX Community Meeting, October 21, 2021

TVM: Dynamic Shapes, Control Flow and Quantization compiler

Speakers: Jason Knight (OctoML) and Andrew Luo (OctoML)

A

So, without further ado: who is OctoML, and what are we doing? Well, we're building the machine learning deployment platform, an automated platform that lets any ML end user take their model in ONNX or other formats, put it into our system, and optimize, benchmark, and package it for a variety of different platforms, both in the cloud and on the edge, for many different devices. We're using a lot of technologies like ONNX and TVM to enable maximum performance and performance portability while keeping it a push-button experience.

A

But the focus of the talk is on the open-source TVM and ONNX side and their interaction, so let's focus back on that. If you haven't heard of TVM, what is TVM? TVM is an open-source project started about five years ago. It's a toolkit designed to enable anyone to achieve high-performance machine learning on any device, in the cloud or on the edge, and also to support future devices as well. So what is the project composed of? Well, at the top...

A

...you know, from CUDA and OpenCL to WASM and WebGPU, SPIR-V, direct c l, LLVM IR, et cetera. And it's AutoTVM, auto-scheduling, and AutoTIR that enable high-performance code to be generated without heavy manual intervention or human expertise involved. So with that, I'm going to pass it over to Andrew to talk about some of our experience using ONNX in production and where we're going from here. Andrew, take it away.

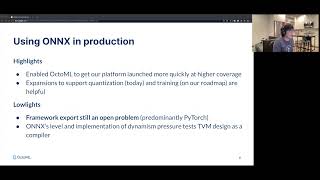

B

Thanks, Jason. So, as Jason said, ONNX remains our preferred way of importing models into TVM. It's probably the most well-developed frontend for use in TVM, and it has helped OctoML launch our platform quickly while guaranteeing high model coverage and letting us talk with a variety of different customers easily.

B

So by dynamism I mean shapes and values of tensors which can only really be inferred at runtime, so you can't look at them at compile time and generate the code properly ahead of time. The one thing we've noticed is that as ONNX gets older, and as the opsets get older, more and more operators are gradually becoming dynamic.

B

For example, ReduceSum takes the sum of a tensor across the provided axes. In older opsets, this axis was a parameter, an integer that you could look at at compile time to generate the proper code easily. Now, in opset 13, the axis is actually an input tensor, which makes that a little bit harder to do. We've also seen that this increasing dynamism in the operator set is sort of being done inconsistently at this point.
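The difference the speaker describes can be pictured with a small sketch. It uses plain Python dictionaries as stand-ins for graph nodes; the field names (`op`, `inputs`, `attrs`) and the helper `axes_source` are assumptions made for illustration, not the real ONNX or TVM data structures.

```python
# Toy node records (hypothetical layout, not onnx.NodeProto).

def axes_source(node):
    """Report where a ReduceSum node's axes come from."""
    # Older-opset style: axes are stored on the node as an attribute,
    # so a compiler can read them directly at compile time.
    if "axes" in node["attrs"]:
        return ("attribute", node["attrs"]["axes"])
    # Opset-13 style: axes arrive as a second input tensor, referenced
    # only by name, so their values may not be known until runtime.
    if len(node["inputs"]) > 1:
        return ("input", node["inputs"][1])
    # No axes given at all.
    return ("default", None)


old_style = {"op": "ReduceSum", "inputs": ["x"], "attrs": {"axes": [1]}}
new_style = {"op": "ReduceSum", "inputs": ["x", "axes_in"], "attrs": {}}

print(axes_source(old_style))
print(axes_source(new_style))
```

In the first case the compiler already holds the value `[1]`; in the second it only holds the name of a tensor, and has to find out separately whether that tensor is a constant.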

B

Luckily, even though I've described all of these problems, a lot of the current models we see which use, quote-unquote, dynamic opsets from the ONNX framework aren't actually dynamic. Things like the axis parameter, or axis input, I described previously aren't actually true black-box tensors that you can only examine at runtime in order to get their values. They're actually constants, which we can fold away by running some additional analyses.
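A minimal sketch of the kind of folding described here, assuming a toy graph representation: nodes are plain dictionaries and `constants` is a hypothetical table of tensors whose values are stored in the model file. This is not TVM's actual pass, only an illustration of the idea that an "input" axes tensor can often be turned back into a static attribute.

```python
def fold_constant_axes(node, constants):
    """Turn a constant axes input back into a static attribute when possible.

    `node` is a toy dict with "op", "inputs" and "attrs" (assumed layout).
    `constants` maps tensor names to values known at compile time.
    """
    if node["op"] != "ReduceSum" or len(node["inputs"]) < 2:
        return node  # nothing to fold
    axes_name = node["inputs"][1]
    if axes_name not in constants:
        return node  # truly dynamic: the value only exists at runtime
    # The axes are a known constant, so bake them into the node.
    return {
        "op": node["op"],
        "inputs": node["inputs"][:1],
        "attrs": dict(node["attrs"], axes=list(constants[axes_name])),
    }


node = {"op": "ReduceSum", "inputs": ["x", "axes_in"], "attrs": {}}

folded = fold_constant_axes(node, {"axes_in": [0, 2]})
still_dynamic = fold_constant_axes(node, {})

print(folded["attrs"])        # axes are now static
print(still_dynamic is node)  # unchanged when axes are not a known constant
```

After folding, the node looks like the older-opset form again, so the compiler can generate specialized code ahead of time.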

B

Right now this is not a super major problem, but it does show potential cracks in the future. So if we go to the next slide, we're going to talk about some of the things that we're trying to do in order to fix this. At TVM and OctoML, we recognize some of the deficits with dynamism in TVM, so we are, of course, trying to come up with projects to fix this and to make various other improvements to the compiler infrastructure to better support these new, more modern workloads.

B

So we call this project Relax, and it has a lot of exciting things that we plan to bring into TVM. For example, we're going to support symbolic shapes, and be able to propagate these symbolic shapes, with computation, deduction, and establishment of invariants, throughout these computational graphs. So if we look at this example, we have a tensor of size n by m, which can now be inferred at compile time, whereas in the past we couldn't do this.
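To make the idea of symbolic shapes concrete, here is a small sketch in plain Python. It is not the Relax API: the helpers `matmul_shape` and `bind` are hypothetical, and a dimension is simply either an `int` or a symbol name such as `"n"`.

```python
def matmul_shape(a, b):
    """Infer the output shape of (n, m) @ (m, k) with symbolic dims.

    Dims are ints or symbol names (str). The invariant that the inner
    dimensions match is checked at compile time.
    """
    (n, m_left), (m_right, k) = a, b
    if m_left != m_right:
        raise ValueError(f"inner dims differ: {m_left!r} vs {m_right!r}")
    return (n, k)


def bind(shape, env):
    """Substitute runtime values for symbols once they are known."""
    return tuple(env.get(d, d) if isinstance(d, str) else d for d in shape)


x_shape = ("n", "m")   # both dims unknown until runtime
w_shape = ("m", 16)    # shares the symbol "m" with x

out_shape = matmul_shape(x_shape, w_shape)
print(out_shape)                              # symbolic result
print(bind(out_shape, {"n": 8, "m": 32}))     # concrete once n is known
```

Even without concrete numbers, the compiler can establish that the inner dimensions agree and that the result has shape `(n, 16)`, which is the kind of invariant the speaker refers to.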

B

So what we see in this graph here is that when we use TVM to execute a training graph, taking a sort of best-of-both-worlds approach, where we take either the fastest TVM kernel or the fastest native executor for whatever GPU we're using, we can actually see major improvements in performance. On the left, we see BERT with one set of parameters, and we get a 40% improvement. On the right, we see something like a 27% improvement.
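The best-of-both-worlds selection can be sketched as picking, per operator, whichever backend measured fastest. The timing numbers below are made-up placeholders, not results from the talk, and the backend names `"tvm"` and `"native"` are labels chosen for this illustration.

```python
def pick_fastest(timings):
    """Map each operator to the backend with the lowest measured time.

    `timings` has the form {op_name: {backend_name: seconds}}.
    """
    return {op: min(cands, key=cands.get) for op, cands in timings.items()}


def total_time(timings, choice):
    """Sum the time of the chosen backend for every operator."""
    return sum(timings[op][backend] for op, backend in choice.items())


# Placeholder measurements, invented purely for illustration.
timings = {
    "matmul":    {"tvm": 1.0, "native": 2.0},
    "layernorm": {"tvm": 3.0, "native": 1.5},
}

choice = pick_fastest(timings)
print(choice)
print(total_time(timings, choice))
```

With these placeholder numbers the mixed plan is faster than using either backend alone, which is the intuition behind the approach described above.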

A

Thanks, Andrew. Hopefully you enjoyed that; I know we compressed a lot into a little time. If you're interested in learning more, definitely check out tvm craft.ai, where our fourth annual conference is coming up just around the corner in December, and we'll be opening registration soon. Check out our website and sign up to learn more, or reach out to me directly if you have any further questions. We'd love to chat. Thanks so much for your time.