►

From YouTube: 008 ONNX 20211024 Krishnamurthy ONNX and Audit Considerations and benchmarking with QuSandbox

Description

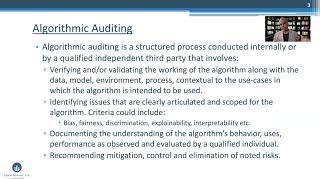

LF AI & Data Day - ONNX Community Meeting, October 24, 2021

Auditing considerations for ONNX models and benchmarking with QuSandbox

Speaker: Sri Krishnamurthy (QuantUniversity)

A

Hello:

everyone,

my

name,

is

shri

krishnamurti

from

quant

university

and

in

today's

presentation

I'm

going

to

be

talking

about

auditing

considerations

for

onyx

models

and

benchmarking

using

the

q

sandbox.

Thank

you

again

for

the

opportunity

to

present

today.

As

you

all

know,

as

the

complexity

of

machine

learning

pipelines

have

grown,

people

are

talking

about.

How

do

we

assess

risks

in

these

models

and

that's

the

problem

we

are

focused

on?

We

are

risk

advisory

based

out

of

boston

and

we

are

focused

on

the

intersection

of

data

science,

machine

learning.

A

We

have

looked

at

a

lot

of

algorithms

and

we

have

audited

a

lot

of

algorithms,

and

one

of

the

challenges

we

see

is:

how

do

we

take

all

these

disparate

components

of

models

and

pipelines

and

assess

the

risk

comprehensively

to

facilitate

that

we

are

building?

What's

called

the

q

sandbox

to

enable

governance

and

risk

management

of

these

models,

and

we

are

also

part

of

lfai.

As

a

member,

we

have

been

able

to

get

a

lot

of

feedback

from

the

community

and

we

very

much

appreciate

that

in

order

to

think

about

algorithmic

auditing.

A

When

we

task

building

these

models,

there

are

so

many

choices.

People

can

build

them

with

enterprise

products

with

open

source

products

and

the

interoperability

becomes

a

major

challenge

and

that's

where

a

lot

of

industries

are

now

thinking

about

using

onyx

models

for

enabling

that

interoperability.

A

Now,

when

we

do

an

an

algorithmic

array,

we

look

at

various

themes

as

a

part

of

the

puzzle,

but

let's

kind

of

just

focus

on

the

model

ps

and

some

of

the

considerations.

I

thought

which

was

important

were

things

like

you

know:

how

do

you

benchmark

a

black

box

model

with

a

white

box

model

which

you

have

potentially

developed?

A

A

So

if

you

are

thinking

about

different

versions

of

the

model

and

making

sure

that

you

are

testing

them

for

different

versions,

what

do

you

think

about

scalability,

especially

if

your

model

is

going

to

be

deployed

in

an

api

environment,

and

then

you

have

to

factor

in

network

latencies,

and

you

also

have

to

factor

in

data

transfers

between

models?

What

kind

of

performance

are

you

going

to

be

potentially

getting,

then?

How

do

you

benchmark

api

behaviors

when

you

think

about

apis

which

are

potentially

going

to

be

leveraging

various

cloud-based

infrastructures?

A

How

can

you

factor

those

nuances

within

your

environment

and

then,

finally,

the

actual

model

testing

itself?

And

how

do

you

stress,

test

scenario

test

and

what

it

matters?

So

these

are

some

of

the

things

we

look

at,

as

we

kind

of

you

know,

try

out

models

and

in

this

demo,

I'm

just

going

to

give

you

a

big

picture

orientation

on

some

of

the

things

we

do

as

a

part

of

this

assessment.

A

So

what

you're

looking

at

is

a

screenshot

of

the

q

sandbox

and

we

take

this

holistic

view

and

we

break

it

down

into

individual

components,

depending

on

the

importance

of

that

particular

audit

and

the

key

components

that

needs

to

be

evaluated,

and

we

have

provided

some

of

the

tooling

so

that

you

can

bring

in

some

of

these

open

source

packages

and

try

out

various

things

in

the

development

environment.

But,

in

the

context

of

you

know,

you

reproducing

some

of

these

aspects

in

a

dockerized,

cloud-based

environment.

A

You'll

understand

like

what

are

the

the

capabilities

of

the

model

for

at

a

granular

level

and

that

auditor

can

potentially

go

in

and

write

reports

on

what

they

saw

and

where

are

the

various

areas

wherein

they

could

potentially

enhance

or

improvise

based

on

the

model.

So

let

me

show

you

a

live

demo

in

case

you're

interested

to

know

more

about

the

platform

happy

to

answer

questions

at

a

later

point,

but

in

today's

presentation

I'm

just

going

to

give

you

a

big

picture

orientation.

So

here

what

we

have

done

is

as

a

problem

setting.

A

We

have

taken

a

simple

mnist

model,

which

is

linux

model

available

in

a

public

repo

and

we're

going

to

be

benchmarking

against

a

python

implementation

of

the

model

and

a

matlab

implementation

of

the

model

and

the

goal

here.

Is

you

know

if

you

are

going

to

be

using

these

models

in

an

api

environment?

How

do

we

test

it

and

how

do

we

kind

of

you

know

factor

your

analysis

and

how

do

you

potentially

audit

these,

based

on

some

of

the

considerations

I

mentioned

to

you

earlier

so

here?

What

you're

seeing

is

the

you

know?

A

It's

a

summary

board.

If

you

will-

and

you

know-

as

we

kind

of

you

know,

look

at

the

the

summary

board

and

we

start

looking

at

the

the

code.

So

I'm

going

to

show

you

you

know

what

we

have

done

is

we

have

taken

the

the

whole

models

and

we

have

created

an

api

which

will

hit

different

api

implementations.

So

one

is

our

implementation

in

python

for

an

mnist

model,

and

these

are

onyx

model

which

are

being

wrapped

up

in

an

api

so

that

you

can

actually

invoke

the

non-response

themselves.

A

A

You'd

want

to

do

various

what-if

analysis,

and

you

will

potentially

also

use

this

as

a

data

generator

so

that

you

can

try

out

different

things

and

then

generate

different

kinds

of

sample

data

sets

so

that

you

can

try

out.

You

know

what

what

the

model

is

going

to

potentially

incorporate.

So

you

can

see

that

there

are

performance

differences

between

the

tf

implementation

and

the

onyx

implementation

for

for

these

kinds

of

models.

A

So

this

is

a

visual

implementation

and

then,

finally,

when

you

run

this,

you

know

jupyter

notebook,

it

generates

reports

and

you

could

potentially

go

in

and

then

look

at

the

actual

reports

themselves

and

you

can

go

in

and

you

know

edit

the

report

and

add

your

id

or

notes

to

it,

and

then

you

could

kind

of

you

know

summarize

the

benchmarking

of

that

particular

report.

So,

as

I

mentioned,

auditing

a

model

is

a

very

involved

effort

and

there

are

various

components

to

the

puzzle.

A

So,

in

addition

to

the

life

cycle

and

the

testing,

there

may

be

aspects

of

specific

algorithmic

assessments

that

need

to

be

done.

Security

reviews

may

need

to

be

done,

especially

if

you're

going

to

be

evaluating

security

for

a

model.

So

there

are

a

lot

of

considerations

to

be

done

and

I

think

the

having

a

comprehensive

effort

to

benchmark

different

models

and

build

a

framework

for

auditing

would

help

kind

of

understand

the

capabilities

of

different

models

and

facilitate

and

foster

the

notion

of

interoperability

and

reproducibility

of

different

models.

I'll

stop

at

that

and

take

any

questions.