►

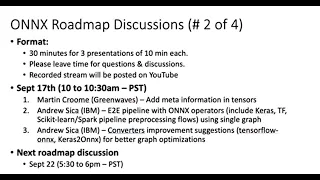

From YouTube: ONNX Roadmap Discussion #2 20210917

Description

1. Martin Croome (Greenwaves) – Add meta information in tensors

2. Andrew Sica (IBM) – E2E pipeline with ONNX operators (include Keras, TF, Scikit-learn/Spark pipeline preprocessing flows) using single graph

3. Andrew Sica (IBM) – Converters improvement suggestions (tensorflow-onnx, Keras2Onnx) for better graph optimizations

B

That's just behind me, yeah. I just wanted to give a little bit of background on GreenWaves. We're a French fabless semiconductor manufacturer, basically doing chips for ultra-low-power signal processing and neural networks. We've got a product in production, and we have a toolchain that processes, quantizes, optimizes, analyzes, and helps with debugging graphs.

B

But there are two areas where we would really like to see some improvements, and we've got some suggestions around them: quantization, and what I'm calling fusion friendliness, but you'll see what it is. So, quantization. At the moment, the current status in ONNX is that you've got lots of different data types, you've got some fake quantization operators, and you've got a few quantized implementations of operators. But I think ONNX should pose itself a question, which is: what's the goal?

B

And there are a lot of different possibilities there, with different types of scaled, asymmetric, and symmetric quantization. Our kernels are all open source, so people can modify them as well, and some customers are doing even more specialized things. It seems to me kind of impossible for ONNX to follow all of that, because you're not going to have quantized operators that can cope with all of these different schemes.

B

Obviously we get the parameters, and mostly that gives us enough statistics, unless they've been pre-quantized into int8 or something like that, at which point we've lost a lot of information. But the activation statistics are definitely not there, so we have no information on the activations: what are their ranges, and so on?

B

TF Lite, at a minimum, now has min/max information on tensors once the graph is quantized, and because they use scaled asymmetric quantization, there's enough information in each tensor to recover the original tensor; not in its entire accuracy, but we can recover it. It would be nice to add this. Basically, what's absolutely necessary would be min/max. It would be, I think, very easy to add standard deviation and mean information to a tensor and, if the tensor is quantized, something on how to get back to the original tensor. Then it would probably be nice to have things like outlier statistics, maybe channel-per-channel information, maybe distribution information.
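To make the min/max point concrete, here is a minimal sketch of scaled asymmetric quantization in plain Python. It is an illustration only, not GreenWaves' toolchain or the TF Lite implementation: the function names (`tensor_stats`, `quant_params`, `quantize`, `dequantize`) and the unsigned 8-bit range are assumptions made for the example. It shows how a recorded min/max is enough to derive a scale and zero point, and how those two values let you recover an approximation of the original tensor.

```python
import statistics


def tensor_stats(values):
    """Collect the kind of per-tensor statistics discussed in the talk (hypothetical layout)."""
    return {
        "min": min(values),
        "max": max(values),
        "mean": statistics.fmean(values),
        "std": statistics.pstdev(values),
    }


def quant_params(t_min, t_max, num_bits=8):
    """Derive (scale, zero_point) for unsigned asymmetric quantization from min/max."""
    # Widen the range so it contains 0.0; that way zero is exactly representable.
    t_min = min(t_min, 0.0)
    t_max = max(t_max, 0.0)
    qmax = (1 << num_bits) - 1
    scale = (t_max - t_min) / qmax or 1.0  # fall back to 1.0 for an all-zero tensor
    zero_point = round(-t_min / scale)
    return scale, zero_point


def quantize(values, scale, zero_point, num_bits=8):
    """Map real values to integers in [0, 2**num_bits - 1], clamping out-of-range values."""
    qmax = (1 << num_bits) - 1
    return [min(qmax, max(0, round(v / scale) + zero_point)) for v in values]


def dequantize(q_values, scale, zero_point):
    """Recover an approximation of the original values: real = scale * (q - zero_point)."""
    return [scale * (q - zero_point) for q in q_values]


activations = [-1.0, 0.0, 3.0]
stats = tensor_stats(activations)
scale, zero_point = quant_params(stats["min"], stats["max"])
recovered = dequantize(quantize(activations, scale, zero_point), scale, zero_point)
```

The recovered values differ from the originals by at most about one quantization step, which matches the remark that the tensor can be recovered, though not in its entire accuracy.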

B

So that's the first suggestion. The second suggestion is around this fusion friendliness, or optimized-kernel friendliness. Currently you have some fused operators like LSTM, RNN, GRU, and some others, and the GRU support is highly appreciated. It's not available in TF Lite, and now the Microsoft team, the tensorflow-onnx team, have very nicely done support for it.

B

It works great and it's really useful for us. There is a move that I sense towards using functions for composed operators, and the problem that we have is that we're working on a platform that is very highly optimized, and we're really interested in microwatts of power consumption.

B

So we really spend a lot of time optimizing kernels, and once you've broken up something like a GRU into a load of loops and if statements and whiles and structures and fundamental operators and multipliers, and so on and so forth, it's extremely difficult to get back to something that's really optimized, particularly if you've got custom hardware and particularly if you're doing quantization.

B

So the idea that I had, and it's just an idea, is to encourage exporters to somehow group what they're exporting with some kind of higher-level container, and maybe that container is a function, and to name that container in the sense of relating it back to the original graph, which was the source for this ONNX export. So have some kind of namespace.

B

Perhaps just the namespace of the original creating node, like tf.keras.experimental.PeepholeLSTMCell, which is one I found today, but it could be anything like that: some kind of relationship back to the thing that actually caused this thing to be created. Then you have the choice of both worlds: you have a function with operators inside it.

B

You can either choose to execute the operators which are inside, so you have a general solution, or you can map from that particular named function onto some kind of optimized kernel that you've got on your particular back end. So that's the second suggestion I have. Both of those things would be highly valuable to us, and that's it. Hopefully that was under 10 minutes.
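The "choice of both worlds" idea can be sketched as a small back-end lowering step. This is a hypothetical illustration, not ONNX's API or any real runtime: the `Node` and `Function` classes, the `OPTIMIZED_KERNELS` table, and the kernel name `fused_peephole_lstm` are all assumptions made for the example. Only the namespace string `tf.keras.experimental` and the name `PeepholeLSTMCell` come from the talk.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A primitive operator inside a function body (hypothetical structure)."""
    op_type: str


@dataclass
class Function:
    """A named container that an exporter emits around a group of primitive ops."""
    domain: str  # namespace of the node in the source framework
    name: str    # name of the original high-level operation
    body: list = field(default_factory=list)


# Hypothetical table of hand-optimized kernels available on one back end.
OPTIMIZED_KERNELS = {
    ("tf.keras.experimental", "PeepholeLSTMCell"): "fused_peephole_lstm",
}


def lower(fn, kernels):
    """Return the list of kernels a back end would run for this function."""
    key = (fn.domain, fn.name)
    if key in kernels:
        # The back end recognizes the origin, so it maps to one fused kernel.
        return [kernels[key]]
    # General solution: execute the primitive operators in the body one by one.
    return [f"generic:{node.op_type}" for node in fn.body]


cell = Function(
    domain="tf.keras.experimental",
    name="PeepholeLSTMCell",
    body=[Node("MatMul"), Node("Sigmoid"), Node("Mul")],
)
plan_optimized = lower(cell, OPTIMIZED_KERNELS)  # one fused kernel
plan_generic = lower(cell, {})                   # falls back to the function body
```

A back end without a matching kernel loses nothing, because the body is still there to execute; a back end with one never has to pattern-match the decomposed graph.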

C

Yeah, so I didn't quite understand the exact problem and ask. What happens in ONNX today is that we decided on this move towards functions because, for any new operator which can be composed from primitive operators, we said that we would add it as a function op instead of a primitive, and the...

B

Perhaps I explained it badly. It's when an exporter starts exporting something from, say, a peephole LSTM cell, and it happens that ONNX doesn't have an implementation for that. I don't know whether that's the case with that particular operator; I'm just using it as an example.

C

So, actually, in the latest ONNX release we added something which may cater to your ask: we added the ability to import model-local functions. Up until now, the functions that we had were schema-defined functions, and those functions needed to be defined in the ONNX standard; that's when the converter understood, okay, this is the function that's available. Now there are model-local functions.

C

So what the converters do today is find a way to figure out what the function body is, what the representative body operators are for this particular op, and then they directly include it in the main graph. Now, with model-local functions, it is possible to do that. We can talk about more details and figure out if there are any missing pieces, but the intention of adding model-local functions was also to solve this kind of problem.

B

It's about saying: okay, we would like converters to label, to kind of comment, what they're exporting in a way that's machine readable. I guess that's what the suggestion is here. It sounds like you're going in the right direction from a technical structure perspective, but I think it also needs to be a guideline as well, because otherwise no one would do it, basically.

A

I think your point is well taken. I think there's a bit of an issue too. I'm not an expert in converters, but I know that they do some layout optimizations, sometimes for convolution, for example. So because the converters also do some optimization, having a name or function like this will have...

G

We do have a Linux operating system, but we also have other operating systems that perhaps don't have the ecosystem that we have on x86, of course, et cetera. So ONNX is really a great fit for us for that, along with the onnx-mlir project. Together, this gives us the ability to deploy something to these different operating systems that is lightweight and doesn't have a huge number of ecosystem requirements.

G

Probably like some of you, we tend to be dealing, as a vendor, with a lot of enterprise clients that are in the financial space. So we tend to see cases around things like fraud detection and other use cases like that, less so around things like object or image types of models.

G

So with that, I've got a couple of broader items here. My team is working on, and can continue to work on, providing more details, but we definitely didn't want to miss the opportunity here to provide some feedback. The first one was, at a high level, this concept of end-to-end pipeline support, and it's an area where we think ONNX has, and can have, a great deal of value.

G

A few of the topics here really are that, one, we like the direction that things are heading and hope to continue to see the direction of enabling ONNX operators, across both ONNX and ONNX-ML, that enable not just the execution of models but also the execution of some of the data preparation stages, and the focus on that not just in the ONNX project proper but in the converters. We're seeing that there's, I think not just for us but I'm assuming for other vendors, a lot of potential value in there.

G

The ability to expand that to cover other types of data preparation operations, whether they're coming from scikit-learn or the TensorFlow experimental preprocessing layers (and I know this branches into the converters), is something that we think creates, in edge use cases and use cases like what I described earlier around the mainframe, a more embeddable and maintainable unit.

G

For clients and other users of the various services here, it also gives the user the opportunity to optimize data preparation without rewriting. What I really mean by that, and one of the things we've encountered, is that we see these cases where latency really matters, and I would imagine, depending on the use case, that could also really matter for edge: cases where we have a data scientist...

G

So we think there's an opportunity for ONNX really to continue to gain mindshare there, because there's the potential of saving not just the effort but also the inconsistency that it can introduce. Like I said, continued focus and work on the preprocessing primitives and converters is, I think, also going to be key.

G

The other thing that something like this could provide, as I hinted at earlier, is the capability of multi-model support, really simplifying deployment artifacts and governance. I understand that with something like ONNX Runtime you do have the ability to chain together multiple ONNX models, but I do think, and we'll be happy to provide more input on this in the future as we start to explore it more, that the ability to simplify to a single or common deployment artifact or ONNX model could also have value.

G

So again, from our standpoint and from what we've seen of the challenges, not just on our platform but also the challenges we've heard from clients on the other platforms they're working with, I think there is potentially a lot of value here to continue on this platform and broaden it.

D

Are there any questions? Oh, is there a question? I'm sorry. Hi, I'm Xavier Dupré. I'm working on the scikit-learn converter, and it's not really a question but just to confirm that I faced a similar issue to the one you mentioned here. I started to propose some options, like merging several ONNX graphs to get a single ONNX graph, or trying to find better ways to help users convert their custom code into ONNX, which is not easy. So, more a comment than a question. Right.

G

I think the most obvious use case that we're seeing is cases where the data preparation pipeline is in something like scikit-learn, for example, maybe doing data preparation for a TensorFlow model. Got it. All right, so you have two things that convert, basically, through separate converter technologies or packages. Right.

G

I think Martin hit on probably some very similar points here. When I propose this, and I know this gets very much more into the converter SIG part of this, what we're seeing in particular with some of the types of models that are really of interest is, first, the focus on a single TensorFlow converter, now that TensorFlow has absorbed Keras.

G

I did notice after the fact, after we suggested this, that at least for something like the tensorflow-onnx converter, for example, there are a couple of issues open that appear to be moving in what we feel is a positive direction, of being able to represent the LSTM operation in ONNX stemming from a TensorFlow graph that had it.

G

But again, just in general, I'll say I echo a lot of Martin's comments, and I think it would be a general movement in the right direction. I don't have a feel for what the best implementation would be. What I mean by that is, during the discussion with Martin, the idea of namespacing came up, and I think something like that would probably also be a really good path towards making it easier on the back ends to optimize some of this.

G

I did put out an example of a fraud detection model that's, I'll say, a proxy for something we've seen. I included a link here, just so there's an example to point to that uses some open data, and it's really a relatively simple two-layer LSTM model.

G

Okay, great. It could very well be the case that, like I said, we put in this input probably a couple of months ago, and maybe at that point it wasn't there yet, so we'll take a look at that again. As I said here, we are investigating this further and trying to get some more examples of proxy models, basically of the types of things that...

E

One thing which is different is that in this particular case, GRU and LSTM are both supported in the ONNX spec as single ops, and the reason we were not converting them correctly was just that, whenever we're working in TensorFlow, Keras splits them down into all the compositions. GRU and LSTM are the only two cases, to my knowledge, where that really occurs.

E

I don't really think that should happen too much in other areas. Keras is inherently higher level, but because ONNX has mostly low-level ops anyway, most of the time those things are getting broken down. LSTM and GRU are the two cases where ONNX has big high-level ops for them, TensorFlow is representing them at a low level, and Keras is at a high level. We've been trying to close that gap, and we did the GRU for one of the cases.

E

There are a few different cases where TensorFlow splits those out differently, and we'd like to add support for all of them, but I don't know what the timeline is on those. Of course, if you add more GitHub issues, then that is going to add more.

G

Okay, great, so I'll do that. I wanted to wait till after this discussion, but I think, Alex, we did talk about probably opening up issues. And Tom, I think I posted in the project discussions forum and you were nice enough to answer me, so thank you, I appreciate that too. Cool, yeah.

A

I think this is fantastic, to have a connection directly like this. I have a follow-on question for Martin. For the LSTM and the GRU, this is the case where there are basically two representations that are possible, both a high level and a low level. For you, Martin, do you find that often there's a Keras operation but there's not an equivalent ONNX high-level op, so it gets broken down to a low level, but you are able to accelerate it better?

B

I mean, we're working day to day on loads of customer models, so it's sort of yesterday's problem that is uppermost in my mind. Well, in fact, today's problem. I think the reason for my namespacing idea was to give us a way out in that situation, where you know that it is being broken down, because once it's been broken down, it's pretty difficult to go in the reverse direction.

B

It's kind of cow to mincemeat: you can't really go back. That's not very politically correct, but anyway, it's difficult to do, and that's why I was suggesting that. Generally, though, with the work that was done recently on the GRU stuff, it seemed to work very well for a quite complex graph. I've yet to try it with an LSTM, because I've yet to have a customer.

E

But in the other cases where that breakdown occurs in the converter, you do already have an escape hatch, which is to convert with the --custom-ops flag. Then it will leave that TensorFlow op as a single atomic op that will get put into the ONNX graph, and it will just put the domain as TensorFlow. That might work for your purposes, because it will work in cases where there is no equivalent high-level ONNX op.

E

The issue with the optimization, which I think was mentioned, is that whenever it's broken into low-level ops, we're able to optimize through it, and some of those optimizations really break it up, so it does not have the same characteristics as the original thing. It seems to me that it's probably best to explicitly denote, when you're converting, whether you want it broken down or not, because if you say that you want it broken down, we push optimizations through it.

E

Now, at the same time, if you want it broken down, I can see value in just having some way to trace back where that thing came from. It seems like once the optimizations are allowed to go through that thing, there's no way to make it machine parsable such that it will be robustly identical to the original TensorFlow op in terms of semantics, but there is probably a way to make it so that you can at least figure out exactly what op it came from.

E

Currently we are kind of preserving the name, but in tf2onnx we actually don't make it a top priority to keep the names really similar to what they were before, so often you'll just get underscores with different numbers on them. It seems really difficult, unfortunately, to make it completely high-level parsable, with the same op semantics and everything, just because the converter, or whatever does those compositions, sometimes makes decisions based on the properties of that particular op; it will only convert it to a certain...

B

For doing some of the things that you do, I mean things like, for example, a CHW/HWC conversion: because we have kernels that operate on both, we have optimizations that handle that, and in fact handle that through reshapes and things like that, which I don't think you do, apparently.

A

If you embed the TensorFlow name in it, you lose PyTorch or some other framework. So basically, I think we're almost coming to the point that, if you still want to be generic and you have a high-level name, it's almost like it should belong to ONNX, because it needs to be defined and generated by the various different front ends. It's just my opinion, but I definitely see the attractiveness of having...