►

Description

We will be introducing On-Device Training, a new capability in ONNX Runtime (ORT) which enables training models on edge devices without the data ever leaving the device. The new On-Device Training capability extends the ORT-Mobile inference offering to enable training on the edge devices. The goal is to make it easy for developers to take an inference model and train it locally on-device—with data present on-device—to provide an improved user experience for end customers. We will be giving a brief overview of how to enable your applications to use on-device training.

A

A

B

B

So

motivation,

assuming

that

I'm,

an

app

developer

I,

will

have

my

apps

running

on

multiple

devices

across

different

platforms,

maybe

even

using

different

Frameworks

and

I

have

a

lot

of

data

that's

being

created

across

these

devices.

I

would

potentially

want

to

use

this

data

to

enhance

the

End

customer

user

experiences.

B

Also

as

a

developer

want

portable

Solutions

I

want

a

solution

that

works

across

Frameworks

I

want

a

solution

that

potentially

even

works

across

different

platforms

like

Cloud

desktop

mobile

Etc.

So

what

the

on

device

training

solution

provides

is

using

Onyx

runtime.

It

is

an

efficient

framework,

agnostic,

local

trainer,

that

trains

with

Device

data

on

the

on

the

edge.

So

let's

break

this

down

a

little

bit,

it's

efficient

because

we've

strived

to

make

it

both

performance

and

memory

efficient,

including

to

save

battery

life.

B

We've

also

made

a

framework

agnostic,

so

it

starts

with

an

onyx

model.

As

long

as

we

have

an

Linux

model,

maybe

from

if

you're,

using

or

the

inference

you

already

have

an

onyx

model

or

if,

even

if

you're

using

other

Frameworks,

you

can

convert

it

to

Onyx

and

it's

ready

for

use

and

it's

local.

The

data

is

not

leaving

the

device.

It's

all

the

training

happens

on

the

edge.

B

It's

it's

preserving

the

Privacy

for

your

end

customers

and

it

again

it's

it's

training

on

the

edge.

So

this

this

is

using

all

the

resources,

the

limited

resources,

including

CPU.

So

that's

the

as

long

as

you

have

a

CPU

on

your

device.

You

can

train

using

the

the

data

on

the

edge

so

quickly

we'll

touch

on

the

scenarios.

B

The

major

scenarios

are

Federated

learning

and

personalization.

So

personalization

is

where

you

fine

tune

on

the

device.

Typically

you'll

enhance

your

inference,

model

and

train

it

with

local

data,

make

it

serve

your

customers

so

that

it's

it's

serving

it's

just

giving

them

a

richer

user

experience.

We

have

Federated

learning,

which

is

more

training,

Global

model,

so

you

train

on

the

device.

B

We

touched

on

a

lot

of

the

key

benefits

before,

but

I

just

want

to

reiterate

that

this

extends

the

ort

solution

so

or

the

inference

again.

If

you

are

already

in

the

ecosystem.

This

provides

an

end-to-end

flow

from

inference

to

training

and

back

to

an

inference

model

that

you

can

run

on

the

end

user's

device

and

again

give

them

a

better

experience.

C

Now,

let's

delve

into

the

process

of

learning

on

an

edge

device

using

Onyx

runtime,

which

is

divided

into

two

phases.

First,

we

have

the

offline

phase.

In

this

initial

stage,

training

artifacts

are

generated

using

python

in

an

offline

script

or

a

server

or

development

machine.

These

artifacts

include

the

training

on

its

model.

The

evaluation

Onyx

model,

the

optimizer

Onyx

model

and

the

checkpoint

file.

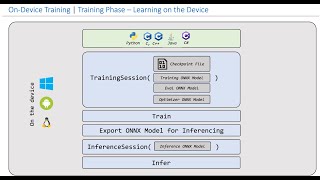

C

Next

comes

the

training

phase,

which

takes

place

directly

on

the

edge

device.

This

is

where

developers

interface

with

Onyx

runtime's,

training,

API

and

Define

the

logic

for

the

training

Loop

to

better

illustrate

these

two

phases.

Let's

work

with

an

example.

Imagine

we

start

with

a

simple

Onyx

model

that

consists

of

a

linear

layer,

followed

by

a

relu

activation,

followed

by

another

linear

layer.

C

C

Another

artifact

is

the

training

Onyx

model,

which

incorporates

the

gradient

graph

of

the

input

on

its

model.

On

top

of

the

evaluation

Onyx

model,

the

training,

Onyx

model,

outputs,

the

gradients

for

each

of

the

trainable

initializers,

allowing

us

to

focus

specifically

on

the

parameters

associated

with

the

second

linear

layer.

In

this

example

to

avoid

duplicate

definitions

within

the

training

and

evaluation,

Onyx

model,

the

checkpoint

file,

abstracts

the

shared

model

initializers

into

a

binary

checkpoint

file.

C

C

Now

that

we

have

these

training

artifacts

in

place,

we

are

ready

to

deploy

them

and

enter

the

second

phase

of

on-device

training.

The

training

phase

Onyx

runtime

offers

a

wide

range

of

language

bindings,

including

python

C,

C,

plus

plus

C,

sharp

and

Java,

with

Objective

C

and

typescript

bindings

currently

in

development

packages

for

Windows,

Android

and

Linux

are

already

available,

while

packages

for

iOS

and

web

can

be

expected

soon.

C

The

entry

point

for

training

is

the

training

session,

which

takes

all

the

training

artifacts

as

inputs

and

exposes

methods

that

enable

developers

to

train

their

models

effectively.

These

methods

include

train

step,

eval,

step,

Optimizer,

step

reset

grad

and

more

once

the

model

has

been

trained

on

the

device.

The

training

session

also

offers

a

convenient

way

to

generate

an

inference

ready

on

its

model

directly

on

the

device

itself.

C

Now,

let

me

demonstrate

the

power

of

on-device

training

through

a

practical

application.

For

this

demo,

we

have

developed

an

Android

application

that

utilizes

the

mobile

net

V2

model.

We

specifically

select

the

last

classifier

layer

to

be

trained

and

we

use

the

model

to

learn

to

classify

celebrity

images.

C

C

C

B

Thanks

Bijou,

so,

as

major

mentioned,

we

have

a

lot

of

resources.

I've

listed

it

here

in

the

slides

and

I

invite

you

to

check

out

the

repo

contribute

reach

out

to

us.

If

you

have

questions

we

again,

as

you

mentioned,

we

have

a

large

number

of

language

Bindings

that

we

support

CC,

plus

plus

C

sharp

python

Java.

We

also

have

JavaScript

Objective,

C

and

Swift

coming

up

pretty

soon.

We

have

support

for

Windows

Linux

Android,

and

then

we

have

IOS

and

oit

web

support

coming

pretty

soon.

So

with

that,

thank

you.

A

C

C

B

B

So

if

your

task,

if

your

model

is

supporting

text

to

speech,

you

can

bring

that

down

to

on

device

training,

it's

it's

mostly

around

the

last

couple

of

layers

of

your

model

that

you

will

be

training

and

as

long

as

you

are

able

to

train

that

offline

and

create

a

text-to-speech

model,

you

can

bring

that

down

to

on

device

and

then

you

can

train

it.

Also

just

it's

just

that

the

examples

to

show

vision

is

more

appealing

to

a

large

audience,

but

but

it's

yeah,

it's

applicable

to

text

as

well.

Yeah,

yes,.

D

B

So

Define,

so

is

the

fine

tuning

done

online

or

you

first

fine

tune

and

then

use

the

personalized

mode.

So

there

are

two

stages:

one

is

the

offline

validation

where

you

essentially

prepare

the

model,

get

it

ready

so

that

you

can

deploy

it.

But

there

is

a

phase

of

validation

online,

where

you

make

sure

your

model

is

ready

for

production

testing

and

then

the

actual

fine

tuning,

where

you

use

the

user's

data

and

you

tune

the

model

to

make

it

ready

for

inference

later

on,

is

done

on

the

edge.

B

E

I

was

asking

whether

all

the

training

node

operators

has

to

be

explicitly

defined

and

included

in

the

Onyx

operators

app.

So

what

if

I

have

some

very

special

data

pre-processing

for

the

training

framework

and

it

cannot

be

represented

by

you-

know

those

Onyx

operators,

specifically

Onyx

training

operators.

How

am

I

going

to

deal

in

this

particular

scenario?

Sure.

B

So

the

question

was

what,

if

I

have

special

training

operators

that

are

not

part

of

my

Onyx

inference

model?

How

am

I

going

to

deal

with

it

right,

so

you

do

not

have

to

have

the

training

operators

in

your

Onyx

model.

You

start

with

an

inference

Onyx

model

and

then,

when

we

have

the

artifact

generation

phase

that

baiju

had

mentioned.

That's

when

the

training

graph

gets

created

so

potentially

most

of

the

operators

standard

operators

for

training

will

be

available.

B

E

B

Are

in

the

process

of

and

we'll

we,

so

we

are

having

a

multi-part

Blog

series,

that's

being

released.

We

are

in

the

Deep

dive

stage

right

now,

but

we

are

also

going

to

release

the

measurements

for

both

performance

and

Battery,

as

well

as

memory

requirements.

So

we'll

let

you

know

soon,

yes,

and

the

question

was:

was

there

any

power

measurements

done?

Sorry

about

that.

D

B

B

The

Federated

learning

is

work

done

by

Microsoft

research

and

there

are

other

companies

as

well,

but

essentially

we

we

transferred

the

model

weight

differences

back

to

the

cloud

rather

than

sending

any

of

the

other

data

specific

differences

and

that's

how

the

global

model

gets

updated.

I

will

share

some

resources,

maybe

in

the

team's

Channel,

and

then

they

can

look

into

it

further.