
A

A

So, this slide: probably everyone in this room knows it and is more appropriate than me to comment on how it works. But basically, you have a synchronous write coming to the ARC and then being committed to the ZIL. At that point, you can confirm to the host the execution of the write, and then the data can go back to the main pool, which can be [unclear], can be HDD, whatever it is. So I will learn it, I promise.
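
The write path described above can be sketched as a toy model. This is only an illustration of the ordering (log first, acknowledge, flush to the pool later), not how ZFS is actually implemented; the class and method names are hypothetical.

```python
class ToySyncWritePath:
    """Toy model of a synchronous write with a separate low-latency log device."""

    def __init__(self):
        self.dirty = {}  # in-memory copy of data not yet written to the pool
        self.log = []    # records persisted on the low-latency log device
        self.pool = {}   # main pool (HDD, SSD, whatever it is)

    def sync_write(self, key, value):
        # Keep the data in memory and persist a record on the log device.
        self.dirty[key] = value
        self.log.append((key, value))
        # Only now is the write acknowledged to the host.
        return "ack"

    def flush_to_pool(self):
        # Later, in the background, data moves to the main pool
        # and the corresponding log records are no longer needed.
        self.pool.update(self.dirty)
        self.dirty.clear()
        self.log.clear()

    def recover_after_crash(self):
        # Memory contents are lost; the log device is replayed into the pool.
        self.dirty.clear()
        for key, value in self.log:
            self.pool[key] = value
        self.log.clear()


path = ToySyncWritePath()
print(path.sync_write("block-1", b"data"))  # acknowledged before reaching the pool
path.recover_after_crash()
print(path.pool)
```

In this sketch the host-visible latency of a synchronous write is the latency of the log device, which is why the rest of the talk focuses on low and predictable write latency for that device.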

A

So, for this ZIL cache we developed a specific product that is called ZeusRAM. It's an SSD, but it's based on DRAM. So it's only 8 gigabytes of DDR3, fully backed up in flash. We have a supercapacitor inside so that we can transfer the data to flash in case of power interruption, and that's the lowest-latency device available in the market, being based on DRAM. So we are writing to the DRAM, fully protected, with extremely low latency. Normally, the latency is in the range of twenty microseconds.

A

We commit to less than 40 microseconds, no matter what. The only limitation of the ZeusRAM is the fact that it is available in 3.5-inch. So for the people who cannot use 3.5-inch, we suggest using our new 12-gigabit SAS Ultrastar SSD, the high-endurance version, which doesn't deliver unlimited endurance like a DRAM-based SSD, but still an extremely high endurance, 9.1 petabytes random, with quite good latency: below 60 microseconds average latency.

A

You probably noticed that here I wrote "average", whereas there it is a committed less than 40 microseconds. The issue with SSDs is that you can find some spikes in the latency. We are pretty good at minimizing the spikes. Most of the all-flash appliance manufacturers are using our SSDs because of this, because of being able to level the spikes. Still, it is not as good as a DRAM-based SSD.

A

So that's for the ZIL. For the L2ARC: there has been a lot of talk today, this morning and in the presentation from Nexenta, about L2ARC, the L2ARC cache for speeding up reads. So what you need from an L2ARC is, for sure, high performance, primarily in read, random read; high endurance, because even though this is a read-intensive implementation, you need to fill the cache with data, so you need to constantly write data into the cache; and obviously high reliability. And this is what we are good at.

A

So the product that we propose for an L2ARC implementation is the Ultrastar SSD, 12 gigabit, in three different flavors: 2 drive writes per day, 10 drive writes per day, 25 drive writes per day. Probably the last one is overkill for an L2ARC, so we see the majority of the implementations still using 10 drive writes per day. Are you familiar with drive writes per day, the meaning? Okay, good, good that I am asking the question.

A

So basically it's how many times you can write the entire capacity of the drive every day for the entire warranty period. So when we say 10 drive writes per day for an 800-gigabyte drive, it means you can write 8 terabytes of random data every day over five years. 2 drive writes per day is the same concept, and it goes up to 25. 25, basically, is the equivalent of a single-level-cell-based SSD.
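
The drive-writes-per-day arithmetic above can be checked with a few lines of Python. This is a minimal sketch assuming decimal units (1 TB = 1000 GB), 365 days per year, and the five-year period mentioned in the talk; the function names are made up for illustration.

```python
def daily_write_budget_tb(capacity_gb: float, dwpd: float) -> float:
    """Terabytes that can be written per day at the rated drive writes per day."""
    return capacity_gb * dwpd / 1000.0


def lifetime_write_budget_tb(capacity_gb: float, dwpd: float,
                             warranty_years: float = 5.0) -> float:
    """Total terabytes written over the warranty period (assumed 365-day years)."""
    return daily_write_budget_tb(capacity_gb, dwpd) * 365 * warranty_years


# The 800 GB drive from the talk at the three endurance ratings mentioned.
for dwpd in (2, 10, 25):
    daily = daily_write_budget_tb(800, dwpd)
    total = lifetime_write_budget_tb(800, dwpd)
    print(f"{dwpd:>2} DWPD: {daily:.1f} TB/day, {total:.0f} TB over 5 years")
```

For the 10 drive-writes-per-day case this gives 8 TB per day, matching the figure quoted by the speaker.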

A

You don't want to have twice as much flash, because there is a lot of over-provisioning that comes into play to achieve such an endurance. But most of the implementations that we see require 10 drive writes per day, and there are brave people who are satisfied with an entry-level 2 drive writes per day, which for a read cache can be quite sufficient, mainly if you consider that today we are already shipping 1.92 terabytes, so 2 drive writes per day over a 1.92-terabyte SSD.

A

What's new? That's something really new!

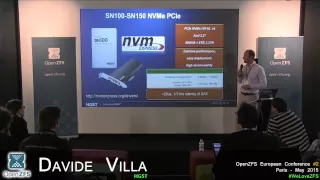

We announced it a few weeks ago, two or three weeks ago: the release of our first NVMe-compatible PCI Express SSD. Are you familiar with NVMe, what it is? It's basically a standardization of the PCI Express protocol for SSD implementation. So that means that today you can use our NVMe-compatible SSD, and tomorrow you can replace it with an Intel NVMe or Samsung, or whoever comes into the market, and you don't require our proprietary drivers anymore.

A

So it makes the product much more usable, easier to use, interchangeable, but it also gives some other advantages. It allows us to release a PCI Express device in a 2.5-inch form factor, and it becomes a hot-pluggable device, similar to what you're familiar with from SAS SSDs or SAS drives in general. So it's much easier to deploy. It's scalable.

A

You don't need to switch off the server if you need to do any maintenance, like you would have to do with a PCIe 2.0 implementation. It's plug and play, hot-pluggable as well, hot-swappable as well. So there are a lot of advantages that make this new protocol the next wave of SSD implementation. And the main advantage of the NVMe protocol, besides the fact that it is a standard protocol, is the latency. The latency is around one third of SAS latency, so that allows us to release products today with latency that stays below 20 microseconds.

A

A

There are not that many options in the market today, but more are coming. We did quite a good job in optimizing the performance, and even though there are not that many suppliers, there are huge differences in terms of performance in what's available. The other advantage of NVMe that I didn't mention before is that you can run with a longer queue depth. You can run with a queue depth of 128 or even higher, and we show in this benchmark that we achieved twice the performance of one of the other suppliers.
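
The link between latency, queue depth and throughput mentioned above can be illustrated with Little's law (outstanding requests = throughput × mean latency). The sketch below is an idealized estimate, not a benchmark: it takes the mean latency as an input, and in practice latency rises as the queue gets deeper. The latencies at queue depth 1 are the figures quoted in the talk; the 200-microsecond value at queue depth 128 is a purely hypothetical input.

```python
def iops_from_littles_law(queue_depth: int, mean_latency_us: float) -> float:
    """Throughput in I/O operations per second implied by Little's law."""
    return queue_depth * 1_000_000 / mean_latency_us


# Queue depth 1: throughput is simply the inverse of the latency.
print(iops_from_littles_law(1, 60))   # roughly a 60-microsecond SAS SSD
print(iops_from_littles_law(1, 20))   # roughly a 20-microsecond NVMe SSD

# Deeper queue with a hypothetical (made-up) mean latency under load.
print(iops_from_littles_law(128, 200))
```

At the same queue depth, one third of the latency corresponds to three times the operations per second, which is the reason lower protocol latency matters even before deeper queues come into play.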

A

A

That's for your exercise of tomorrow. Today, NVMe is compatible with Linux, Windows, FreeBSD, VMware. On Solaris, Oracle has its own implementation of NVMe, but it's not yet compatible with ZFS. Nexenta is working on it. I know there is something going on. I don't know, probably you're already ahead of the game with the implementation of NVMe, but we are more than willing to partner with whoever is interested in developing the driver for a ZFS implementation. Questions?

A

A

We're planning to have dual-port NVMe in the second half of next year, so in our next-generation product in Q3 2016 we plan to have a dual-port device. Today, NVMe, and PCIe in general, is mostly used for accelerating databases, so it's mostly used for server acceleration. So dual port is not a feature that has been highly demanded for such an implementation. But the point is right: NVMe delivers much higher performance, so it's a natural evolution of SAS deployment into storage appliances, and dual port is a feature that is needed.

A

A

It's just as good as the HDD in terms of MTBF: 2.5 million hours. The annualized failure rate is much lower, obviously. I think I recall it's zero point something. We are far lower than the average of the market, with the widest SAS implementation in the market. There are many, many SSD suppliers in the market, but few enterprise SSD suppliers, and in that market we own fifty percent. And we're not the cheapest. It's all about quality.
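
As a rough cross-check of how an MTBF figure relates to an annualized failure rate, the usual conversion can be sketched as follows. It assumes a constant failure rate (exponential model) and continuous operation of 8760 hours per year; these are modelling assumptions, not something stated in the talk, and the function name is made up for illustration.

```python
import math


def annualized_failure_rate(mtbf_hours: float, hours_per_year: float = 8760.0) -> float:
    """AFR as a fraction, assuming a constant failure rate and 24x7 operation."""
    return 1.0 - math.exp(-hours_per_year / mtbf_hours)


afr = annualized_failure_rate(2_500_000)
print(f"AFR for a 2.5 million hour MTBF: {afr * 100:.2f}%")
```

Under these assumptions, a 2.5 million hour MTBF corresponds to an AFR of roughly a third of a percent, which is in the same "zero point something" percent range the speaker recalls.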