
From YouTube: The Next Generation of AI/ML Application Development; Embracing the Container Revolution

Description

In this rapidly changing society we need a way to quickly, efficiently and powerfully experiment with AI/ML concepts and systems. The revolution of Containers has brought a new facet into the equation; the ability to rapidly build, test and repeat AI/ML applications extremely easily while efficiently using compute resources.

This talk will present an overview and demo of the ways in which Containers can be easily used to build AI-centric applications, allowing data-scientists to spend more of their time experimenting and less time setting up complex systems.

A

Morning, all. We'll start slightly early, so I'm gonna waffle for a couple of minutes before we get going on this. My name is Ian Lawson. I'm a solution architect, I think, for Red Hat. In reality, I'm a software developer who does AI, just hiding out in Red Hat. I've been working in the industry for around about 35 years.

A

I come from a Hadoop background. Anybody in the room like Hadoop? The lights are very bright, so I can't see you, so shout or wave. I see one person. I'm going to try and give a demo as well. The network here is not very good, so I apologize profusely in advance. Also, the cluster I'm running is actually in an Intel data center. Those lovely guys at Intel have given us some kit and it's behind a firewall, so I do get a bit of a slow response when I'm talking to the cluster.

A

When I talk to customers normally, I say: please don't think that OpenShift is slow. Please don't think that Intel chips are slow. If you want to blame anybody, blame Chinese intelligence, because they are always smashing that firewall. I've still got four minutes, so I'll talk a little bit about an overview of OpenShift itself. OpenShift is currently on version four. Version four is based on Kubernetes.

A

We did something very nice with the fourth version of OpenShift, in that we actually absorbed a company called CoreOS that had something called the Operator Framework. In English, what we now do with OpenShift is ship it with a number of what we call cluster operators. Cluster operators are little objects that sit on top of Kubernetes and each own an individual object type, so you can extend the object taxonomy of Kubernetes without having to actually change the Kubernetes core.

What this means in real terms, in English, is that when you do an installation of OpenShift, or when you do an upgrade, it's basically zero downtime, because you're updating the individual applications that make up a big application in combination. This ties in a lot to the AI side, but we'll get onto that once I start the presentation. I'm kind of hoping it won't be as hot today. I'd like this talk to be longer than 25 minutes, because this is the coolest room in the entire venue.

A

So I will get dragged off the stage if I go over 25 minutes. Do I start now, or do we just waffle for a couple of minutes? Right, cool. So today's talk should be very, very quick. I've got around about eight to nine hours of information that I'm gonna try and get across in 25 minutes, so I'm going to talk very, very quickly. My voice is gone because I was talking to lots of people yesterday and I was drinking last night, so I apologize if I actually fade out.

A

A

A

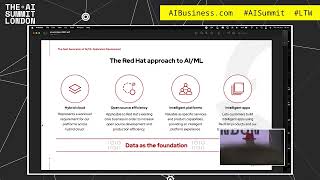

It's all about big data. It's all about huge amounts of data and repetitive tasks. The Red Hat approach to AI and ML can be summed up in four basic clauses. The first one is hybrid cloud, and that's immensely important. The point behind that is that OpenShift runs the same everywhere.

A

If you install OpenShift on Azure, install it on AWS, install it on bare metal, or, if you're brave enough, install it on ARM (yeah, we'll try to squeeze it onto a Raspberry Pi at some point), any workload you deploy to OpenShift behaves identically. It's the same configuration as code. We've abstracted the underlying infrastructure and technology away from the orchestration that's actually done to orchestrate the applications. Then we're talking about open source efficiency. Now, Red Hat is a different kind of company.

A

I've worked for a huge number of companies and I intend to die at Red Hat, hopefully not that soon. But Red Hat is a different type of company. We basically take open source software and we make it production strength. We provide a subscription which gives you support for using our enterprise-strengthened version of our open source software. There's a little clause I always talk about with customers. I call it the half past four in the morning stack trace, and it comes from an example.

A

I was the first person to bring open source software into the government. I brought a project called Lucene (everyone knows that's a search engine) into a government agency. I built a user interface around it and made it nice, so it looked like I'd done something. I delivered it and then I went home, and at half past four in the morning I had a phone call from the agency saying there's a stack trace. So I had to get out of bed, rock into the agency itself, and look at the source code.

A

It was all in the open source bit, which I hadn't written, and I had no clue what was going on within the open source software. But because I'd brought it in, it was mine: I owned it and I supported it. Red Hat provide a breaker. They provide kind of a safety net for that half past four in the morning stack trace. We provide you with the open source products, we provide the enterprise strength, and you can raise support requests on anything that we actually provide you with. The third one is intelligent platforms.

A

This is very important to me because I was a developer for 35 years. I was a developer back in the days before most people here were born, when you had to do everything yourself, and I came up against what I call the 70/30 problem. The 70/30 problem was this: I was getting paid a reasonable amount of money to be a contractor with the government writing software, and I spent 70% of my time not writing software.

A

I spent 70% of my time building machines, downloading frameworks, installing frameworks, and configuring the tools I needed to write the software, so I was only spending 30% of my time writing software. That's hideously inefficient. What we're trying to move towards, especially on the Red Hat side, is to provide tools and technologies that up that to 90 or 95% of your time being able to write the code.

B

A

Artificial intelligence and machine learning workloads: well, the whole point with containers is that decoupling of application and infrastructure. I actually call it taint, and the reason I call it taint is that ops used to hate me, because I used to rock up with a piece of software and say: here's the software you're meant to use. You'll need to install this version of the JVM. You need to install this database. You need to set this configuration.

A

You'll need to, you know, at half past two in the morning, come in and tweak this environment variable, and all those things. With taint, they would actually take the underlying operating system and install my application, and they wouldn't be able to put anything else on that box because it was tied to my JVM. It was tied to my framework. It was tied to my version of the database.

A

The beautiful thing about containers is that you abstract all that taint into the container, and the underlying infrastructure just executes the container. So if you're using a Java application, the JVM travels with the container. If you're running a database, the database code and the database configuration are within the container, not in the core operating system.

Then there's agility of application creation. It's so easy to write stuff in OpenShift, and that sounds mad. But literally, when I do these demos to customers, I rock up, I install OpenShift, I create, let's say, a Node application that's built from source, and I have a running Node application in around about 35 seconds. People are often asking: oh yeah, what have you done? What did you pre-rig? What did you do in advance? It's like, nothing! It's so fast to be able to create these different environments, and that's one of the advantages of it. On-demand execution I'll get into later, because this is the big thing I want to talk about today.

A

We've got the ability now, within OpenShift and within Kubernetes, to execute workloads only when they are required. That's very important, because if you're running containers on a standard Kubernetes system, they have to be active at all times to receive traffic, so that when you actually call them, they are there to respond.

A

A

With the tools and techniques that come with OpenShift out of the box, you get all the things you need to be able to start coding, just like that. You don't have to set up your machine, you don't have to install the libraries, you don't have to install the JVM and all those kind of things. And it's not about scale.

A

This is the key thing, the most important thing: it's about dynamic scale. Artificial intelligence and machine learning apps need to scale up massively, but they don't need to be scaled up all the time. When you're running a Hadoop cluster, that cluster is always up, and it's always there to be able to execute workloads. When people aren't using it at the weekend, it's just sat there ticking: it's using CPU cycles, it's using electricity, and it's not being consumed. In the past, dynamic scale like this was impossible due to the nature of infrastructure, storage and the tight binding of the applications to the machines. Using containers breaks that binding. You have the application in a container, which is a piece of currency you can move around between your platforms. With containers, and specifically with Knative, this has radically changed.

A

A

Containers are literally just file systems, but they're executed in process spaces and they think they're operating systems. In reality, a container is just built from an immutable image, which is just a file system. To take advantage of the actual design features of Kubernetes, applications and experiments need to follow certain design patterns.

A

Kubernetes was designed to be stateless. It was designed to be able to fire up the applications, lose the applications, and recreate the applications. In the old days we used to write applications that would sit on boxes and run for months, literally months. I expect most people here work with companies that have a box in the corner of the room that you're currently supporting using eBay, and you know that if that box dies, your application's gone forever.

A

A

What a persistent volume allows us to do is to stand up a file system and express that file system into the back of the container, while it's actually external to the container. The container sees it as a file system it can write into, but you can destroy the container, recreate it somewhere else, reattach it to the persistent volume, and it can carry on from where it was. That's brilliant, because it introduces that kind of persisted state: the state actually survives between executions of the containers. So, introducing Knative.

A

Normally, you use a deployment. That deployment specifies the number of active replicas you have to have, so by default you'll install a single replica and that application will be running at all times. If you install it using the Knative technology, it actually creates an ingress controller at the front, and it creates a mechanism that allows you to offline it to zero replicas.
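To make the contrast with an always-on deployment concrete, here is a minimal Python sketch of the scale-to-zero idea. It is a toy model, not how Knative itself is implemented: the `ScaleToZeroService` class, the `idle_timeout` parameter and the explicit `now` timestamps are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ScaleToZeroService:
    """Toy model of a service that runs only while it has recent traffic."""
    handler: Callable[[str], str]
    idle_timeout: float                      # seconds of silence before scaling down
    replicas: int = 0                        # starts offline, unlike a one-replica deployment
    last_request_at: Optional[float] = None
    cold_starts: int = 0

    def request(self, payload: str, now: float) -> str:
        # The front door sees the traffic first and spins the workload up
        # if nothing is currently running.
        if self.replicas == 0:
            self.replicas = 1
            self.cold_starts += 1
        self.last_request_at = now
        return self.handler(payload)

    def tick(self, now: float) -> None:
        # Called periodically: go back to zero replicas once the service is idle.
        if (self.replicas > 0 and self.last_request_at is not None
                and now - self.last_request_at >= self.idle_timeout):
            self.replicas = 0


svc = ScaleToZeroService(handler=str.upper, idle_timeout=60.0)
first = svc.request("hello", now=0.0)   # cold start, then handled
svc.tick(now=30.0)
replicas_while_warm = svc.replicas      # still running, traffic was recent
svc.tick(now=61.0)
replicas_after_idle = svc.replicas      # idle for longer than the timeout
```

The point of the sketch is only the shape of the behaviour: nothing consumes resources until the first request arrives, and the workload disappears again once traffic stops.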

A

The first part of Knative is Serving, and that actually creates an input point, an ingress point, on the service. That means that when you push traffic into it, it spins the service up and responds. The second one is more important, and I think much more relevant to AI and machine learning. It's called Eventing, and what Eventing allows you to do is to create a broker that lives within OpenShift, within the namespace itself.

A

It processes a new type of abstract event called a CloudEvent, and I love CloudEvents because they're incredibly simple: they've got a type and they've got a payload. What happens is, when you push one of these CloudEvents into the broker, the broker will look at the CloudEvent type and it will look for triggers within the system. If any of the Knative serverless services are waiting on a trigger of that type, it will pass the event down to them.
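The routing rule described here (match the event type against the triggers, then hand the event to whoever subscribed) can be sketched in a few lines of Python. This is an in-memory illustration only, not the Knative Eventing API; the `Event` and `Broker` classes and the event type names are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Any, Callable, Dict, List


@dataclass(frozen=True)
class Event:
    """The two things the talk highlights: a type and a payload."""
    type: str
    payload: Any


class Broker:
    """Routes each event to the subscribers whose trigger matches its type."""

    def __init__(self) -> None:
        self._triggers: Dict[str, List[Callable[[Event], None]]] = defaultdict(list)

    def add_trigger(self, event_type: str, subscriber: Callable[[Event], None]) -> None:
        self._triggers[event_type].append(subscriber)

    def publish(self, event: Event) -> int:
        # Look up the triggers for this event type and deliver to each one.
        subscribers = self._triggers.get(event.type, [])
        for subscriber in subscribers:
            subscriber(event)
        return len(subscribers)


received: List[Event] = []
broker = Broker()
broker.add_trigger("quarkus-event", received.append)

delivered = broker.publish(Event("quarkus-event", {"payload": "hello"}))
unmatched = broker.publish(Event("some-other-event", {}))  # no trigger, nothing delivered
```

In the real system the subscriber would be a serverless service that is spun up on delivery rather than a Python callable that is already in memory.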

A

The arrival of that event into the actual application will spin the app up, run the processing, and do all those kind of things. The lovely thing about this is that those brokers are, by nature and by default, ephemeral. They live within the actual namespaces, and if you lose the machine and bring it back up, the state is gone. But you can back these brokers with Kafka, so you can set up Kafka to have a topic, and that topic can deliver CloudEvents of certain types to the broker.

A

What that allows you to do is basically have a broker that's generating these events to drive the Knative serverless services, but then you can temporally replay the actual messages by just pushing the buffer back within Kafka. That allows you to replay experiments: you have experimental data being pushed into the Knative serverless services via Kafka, and then you just reset the actual temporal point to rerun that experiment.
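The replay idea rests on one property of a log-backed broker: records are kept in order and the reader's position can be moved backwards. A minimal sketch in Python, assuming a hypothetical `ReplayableLog` class (this is not the Kafka client API, just the same idea in miniature):

```python
from typing import Any, List


class ReplayableLog:
    """Append-only record list with a reader position that can be rewound."""

    def __init__(self) -> None:
        self._records: List[Any] = []
        self._offset = 0

    def append(self, record: Any) -> None:
        self._records.append(record)

    def poll(self) -> List[Any]:
        # Return everything after the current position and advance to the end.
        batch = self._records[self._offset:]
        self._offset = len(self._records)
        return batch

    def seek(self, offset: int) -> None:
        # Move the reader back (or forward) to a chosen point in the log.
        if not 0 <= offset <= len(self._records):
            raise ValueError("offset out of range")
        self._offset = offset


log = ReplayableLog()
for reading in (3, 5, 8):
    log.append(reading)

first_run = sum(log.poll())    # run the experiment once
nothing_new = log.poll()       # no fresh data has arrived since
log.seek(0)                    # reset the temporal point
second_run = sum(log.poll())   # rerun on exactly the same data
```

Because the experimental data stays in the log, rerunning an experiment is a matter of moving the position, not of regenerating the input.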

A

So AI and ML workloads are all about size and repetition. They're all about huge data processing, and they're all about doing things over and over again to generate certain types of results. Most organizations are limited in this resource, either by size or cost. You know, it's very expensive to run up an AWS cluster, and it's very expensive to maintain a Hadoop cluster. Containers and Knative technologies allow for massive experiments in much smaller footprints.

A

A

They can be resident in a chain driven by Knative serverless, with a much lower, much smaller resource footprint, so you can do many more experiments on much less hardware. OpenShift and Kubernetes also have facilities for targeted orchestration, and this is where it gets really sweet. How Kubernetes works is it has a number of (I'll tell a little joke here, I assume there are no Red Hat salespeople in the room) worker nodes, and those are basically buckets where workloads can be executed.

A

So I used to describe it to customers like this: what you do is you stand up all these little buckets within your Kubernetes system, and then you run your workloads over them. I was taken aside by one of the chief salespeople at Red Hat. He said, you can't use the word bucket, it's too technical a term for salespeople. And I was like, right, okay. That just struck me as being amazing. But anyway, what OpenShift allows you to do is specific workload targeting on these buckets.

A

So if you've got, for example, five worker nodes in your system and one of your worker nodes has a huge amount of memory and some GPUs, you can actually have OpenShift orchestrate those workloads that require a GPU to only land on that box. One of the new features we've got in the latest releases of OpenShift is the ability for the boxes themselves to expose the actual hardware capabilities they have. So if you've got a box that's got NUMA zones, or you've got a box that's got GPUs, it can express through the OpenShift system that it has these kind of things. Then, in your orchestration, if you get a workload that says: I must run in this NUMA zone against this CPU, I must have access to this amount of memory, I must run on a GPU, OpenShift can automatically orchestrate that to the appropriate box. That's incredibly powerful. It means you don't have to have a cluster that has identical boxes.
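The matching step described above can be sketched as a simple filter: each node advertises labels and free resources, each workload states what it must have, and only the nodes that satisfy every requirement are eligible. The Python below is an illustration of that rule only. The `Node` and `Workload` classes, the label names and the numbers are all hypothetical, and the real scheduler considers far more than this.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Node:
    name: str
    labels: Dict[str, str]
    free_memory_gib: int
    free_gpus: int = 0


@dataclass
class Workload:
    name: str
    memory_gib: int
    gpus: int = 0
    node_selector: Dict[str, str] = field(default_factory=dict)


def eligible_nodes(workload: Workload, nodes: List[Node]) -> List[str]:
    """Names of the nodes that satisfy every requirement of the workload."""
    return [
        node.name
        for node in nodes
        if all(node.labels.get(k) == v for k, v in workload.node_selector.items())
        and node.free_memory_gib >= workload.memory_gib
        and node.free_gpus >= workload.gpus
    ]


cluster = [
    Node("worker-1", {"tier": "developer"}, free_memory_gib=8),
    Node("worker-2", {"tier": "developer"}, free_memory_gib=8),
    Node("worker-gpu", {"tier": "prime"}, free_memory_gib=512, free_gpus=4),
]

training = Workload("train-model", memory_gib=64, gpus=1)
web = Workload("web-ui", memory_gib=2, node_selector={"tier": "developer"})

training_targets = eligible_nodes(training, cluster)
web_targets = eligible_nodes(web, cluster)
```

With a mixed cluster like this, the GPU job can only land on the one big box, while the small labelled workload is kept on the cheap developer nodes.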

A

So I work with some banks, and what the banks do is they tend to buy a very expensive box for all their top-of-the-range services, and then they go and buy the worst possible boxes they can for their developers, off the back of a lorry. They stand those up as worker nodes and label them as developer, then stand up the prime node and label it as for the best, and OpenShift allows that kind of orchestration.

A

So, let's talk about the thought experiment now, and this is where I get a bit more excited, because I've been working with neural nets for a number of years. I love the concept of neural nets, but I've never been able to build one properly. Any Java programmers in the audience? Any Go programmers in the audience, before I start to insult Go? So I'm a Java programmer, I've been a Java programmer for 25 years, and Go makes my teeth hurt.

A

I don't know why, so I find it very hard to write experiments in Go. Anyway, long story short, what I've been working on is this concept called K-Neural. What it does is allow you to create neural nets using neurons, but the neurons are Knative services that only exist for the duration they're being called. The neuron itself uses Red Hat Data Grid, which is an in-memory data grid, to actually store the state of the neurons. So when a neuron is called via a CloudEvent, it spins up, and the first thing it does is talk to the data grid and pull off its memory state. It looks at the memory state, it looks at the thresholds, and it looks at what it has to generate. If it exceeds a threshold, in the way a neuron normally exceeds a threshold, it will throw a CloudEvent back to the broker, and that CloudEvent will drive other neurons. When the neuron has finished its work, it's offloaded.
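The life cycle of one neuron call can be sketched as a stateless handler: load state by ID, update it, decide whether to fire, and write the state back before the process goes away. The Python below is a rough illustration, not the speaker's implementation. A plain dictionary stands in for the data grid, and the accumulate-then-reset firing rule, the `handle_event` function and the `neuron.fired` event type are assumptions.

```python
from typing import Dict, Optional

# Stand-in for the in-memory data grid: neuron ID -> persisted state.
Grid = Dict[str, Dict[str, float]]


def handle_event(neuron_id: str, signal: float, grid: Grid) -> Optional[dict]:
    """Process one incoming event for one neuron, keeping no local state."""
    # 1. First thing on start-up: pull this neuron's state off the grid.
    state = dict(grid[neuron_id])

    # 2. Apply the incoming signal and compare against the threshold
    #    (assumed rule: accumulate, fire at or above threshold, then reset).
    state["potential"] += signal
    fired = state["potential"] >= state["threshold"]
    if fired:
        state["potential"] = 0.0

    # 3. Persist the state so it survives after this handler is offloaded.
    grid[neuron_id] = state

    # 4. If the neuron fired, return an event for the broker to route onwards.
    if fired:
        return {"type": "neuron.fired", "source": neuron_id}
    return None


grid: Grid = {"n1": {"potential": 0.0, "threshold": 1.0}}
first = handle_event("n1", 0.6, grid)    # below threshold, nothing emitted
second = handle_event("n1", 0.6, grid)   # crosses threshold, event emitted
```

Because all the memory lives in the grid, any number of short-lived handler instances can serve the same neuron, which is what lets the neuron itself scale to zero between calls.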

A

So you can build these hugely complicated systems with very small atomic components, and that's incredible. I mean, I've had some problems writing it, because, you know, I have a day job, so they won't let me do this all the time. I have to come to these events and sell software and things. But I've been working on this a lot and I've actually got to have a brief play with it. As I say, neurons are perfect for this kind of system because they're atomic and simple. You provide the state when the neuron is created.

A

You persist the state when the neuron changes the state, and when the neuron goes away, the state is still persisted. If you keep your neurons simple, if you keep the actual state of the engine simple, and if you keep the thresholding simple, it's a very, very nice way of doing it, and incredibly fast. As I say, I use Infinispan, Red Hat Data Grid, for the memory. It's an in-memory data grid that allows you to store NoSQL objects. Sorry if this gets slightly technical.

A

What I do is that each neuron has a unique ID assigned to it. That unique ID is used as a key into the grid to pull the data off. As I say, each neuron is represented by a tiny container, because you can build containers with very, very small footprints. We've got a technology we ship as part of OpenShift called the UBI, the Universal Base Image, which provides RHEL in a much smaller footprint.

A

A

So what we're looking at here, when it starts up, is basically the standard OpenShift user interface. We've actually provided two user interfaces. One is for the administrators, and that's not actually true, because it's basically an object-level dive into every object that you, as a user, can actually change. So I'll show you very quickly, while this is rendering.

A

If I go to the administration user interface, what it is, basically, is that it allows you to drill down into any component of the system. I can drill down into all the deployments, all the services, the routes, everything I have access to. One of the lovely things (I'll take 30 seconds on this, because it's really sweet) that's part of OpenShift is that we've now expressed the infrastructure on which OpenShift runs as objects within Kubernetes, so you can treat them like other objects. You can change these objects in real time, so you can change the number of nodes you've got. You can change all these kind of really cool things without having to go down into the dirt and rebuild the system and do all that kind of stuff.

A

A

I love Kubernetes. Kubernetes is one of the most elegant pieces of software I've ever seen, but it is painfully complicated. It uses this model which they call eventually consistent, but everyone who actually uses it calls it eventually inconsistent, in that you talk to the Kubernetes control plane and change the object state, Kubernetes says yep, done, and in the back it goes away and actually does the physicalization of that. So you have that wonderful thing about being eventually consistent. But anyway, what we're looking at here is an application I've written.

A

So this is the K-Neural stuff I'm actually running. I've got the data grid active. I've got something called Grid Connect, which is basically my way of actually interacting with the grid itself. The reason for that is I wanted to keep the neurons very small. So rather than having the connectivity information within the neurons themselves, the neurons just send very small packets to Grid Connect, which does the actual physical connection and physical update of the data within the grid. Again, I'm just trying to optimize those things.

A more optimized container, a much smaller container, is much faster to run, much faster to deploy, and much faster to move. Over here, which is the cool bit (I'll make it slightly larger so people can see it), I've got a Quarkus function. Quarkus is a new version of Java. We basically made Java relevant again. As I said, I was a Java programmer for 25 years. I love Java, and Java got this terrible reputation of being slow, so we came up with Quarkus. What Quarkus does is pre-compile all the class files it creates. It makes the startup time of a Java application go from seconds to microseconds to nanoseconds. Beautifully fast.

So what I've got here is a single Quarkus application that's waiting for an event to arrive within the broker. This broker here has actually got two triggers. One trigger is waiting for a Quarkus event, and I've got a subscription for that trigger for this Knative service. The other one is waiting for a tech talk event, and on the end of that I've got a technology called Camel K. Camel K is based on Apache Camel. It's an integration technology which allows you to write some very, very cool, very fast technology in very small amounts of code. What that does is very, very simple: it just pulls the actual event off and logs the fact that it's got an event. And I've also written, and I apologize profusely...

A

A

So what I'm doing is I'm actually going to push an event, a Quarkus event, with a payload that has just a single key, payload, with "hello AI summit" in it, and I'm going to push that into the broker. This is just basically throwing that into the system, and the broker I'm targeting, you'll see over here, is the K-Neural broker in the actual K-Neural namespace. So you can individually target these brokers based on the namespaces themselves, which allows you to fragment the event model.
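For readers who want to see what such an event looks like on the wire, here is a small Python sketch that builds the headers and body of a CloudEvent for an HTTP POST, using the `ce-` prefixed headers from the CloudEvents HTTP binding. The event type string, the source value and the `build_cloudevent_request` helper are hypothetical, and the code deliberately stops short of sending anything, since the broker address in the demo is not shown.

```python
import json
import uuid
from typing import Any, Dict, Tuple


def build_cloudevent_request(event_type: str, source: str,
                             data: Any) -> Tuple[Dict[str, str], bytes]:
    """Return (headers, body) for a CloudEvent sent as an HTTP POST."""
    headers = {
        "ce-specversion": "1.0",
        "ce-id": str(uuid.uuid4()),     # unique per event
        "ce-type": event_type,          # what the triggers match on
        "ce-source": source,            # who produced the event
        "content-type": "application/json",
    }
    body = json.dumps(data).encode("utf-8")
    return headers, body


headers, body = build_cloudevent_request(
    event_type="quarkus-event",         # hypothetical type name
    source="demo/laptop",               # hypothetical source
    data={"payload": "hello AI summit"},
)
```

Posting these headers and this body to the broker's address for a given namespace is what targets that namespace's broker, which is the fragmentation of the event model the talk refers to.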

A

So I'm going to emit that event, and if I'm quick (this isn't easy, because the network isn't very good), you'll see that the application immediately fired up. But you'll also see that this one fires up, and the reason this one fires up is that the first one actually emits an event of type tech talk event. So when that event arrived in the broker, the broker saw there was a Quarkus event, it pushed it down the Quarkus event trigger, and the Quarkus event trigger actually spun up that Quarkus function.

A

A

But if you think about it, what you can do with your systems is break them down into atomic components, into atomic microservices, write each one of those microservices as being driven by a CloudEvent, and install it as a Knative serverless workload. Then you can write extremely complex systems but not consume a huge amount of resource. This is huge. When I talk to customers about this, you know, this is the next generation. The beautiful thing about it is that when you use OpenShift, this comes out of the box. It's not additional configuration. You just install, basically, the operator to install Knative serverless, and away you go. So this thing is now showing flashing numbers at me. I think I've gone beyond. So that was basically the demo. We've got a stand over there. My voice is probably gonna last for another two hours.

C

A

B

A

C

A

What we do normally is we localize the actual networks. So if you're running, let's say, a huge number of pods within a single cluster, we use the internalized SDN, and what we can do is actually have placement. So if you have a number of applications that are very chatty across the network, you can make sure they land on the same nodes, or on nodes that are actually close. But we've also got a new technology called Submariner. Have you heard of Submariner?

A

So what Submariner allows you to do is put an overlay network over multiple clusters. It basically provides an overlay network over the SDNs of multiple clusters, so you can actually spread your workload out. But in answer to your question: you can localize these things.

A

I've got a lot of customers who've got very, very intensive application spaces, and they need the applications to be close to each other to cut down the latency of the call from point to point. What they've done is basically stood up a number of the worker nodes within OpenShift with dark fiber between them, or basically in the same room, to get around that. So you can architect it. The beautiful thing about the OpenShift side is that we're not opinionated in the way you actually install it.

A

This is what I call the box of technical Lego. If you've got a system that has to be massively network efficient, you can design it appropriately. I've got some customers where all they've got is two nodes, and these nodes are massive, sort of dual-socket, 96-core systems, and they do everything. I actually had one (I won't say the name, because it's under NDA) where what they did was create a CSI driver that expressed a PV. You know persistent volumes, which are the way in which you store things offline, on disk? They wrote a CSI driver that actually used memory for persistent volumes, so they could mount a file system into the containers themselves, but when they wrote to that file system, they were actually writing into memory, just to get the speed of actual processing and pushing the messages about. I'm not sure that answered the question. I think I've gone off on one.

A

A

So if you want to scale out your system by having small nodes, but thousands of them, you can do that. If you want to scale out in a more kind of horizontal way, by having just a small number of huge nodes and then scaling up the applications themselves, you can do it that way. So that's a bad answer to a question, but there are so many ways you can do it.

A

The problem we've always had with this is that we try not to be opinionated, because the minute you're opinionated, you're forcing someone to do it that way. The beautiful thing about OpenShift is you can hang the pieces together whatever way you like. Does that make sense?