Description

As part of the “All Things Data” series of briefings, Red Hat’s Jon Cope and Jeff Vance gave an update on the Object Bucket Provisioning Kubernetes Enhancement Proposal (KEP) being worked on upstream and the value it adds to Kubernetes. Also answering questions during the Q&A was Red Hat's Erin Boyd - co-chair of the Kubernetes Storage SIG.

Join the Kubernetes SIG Storage google group: https://groups.google.com/forum/#!forum/kubernetes-sig-storage

Review the Kubernetes Enhancement Proposal: https://github.com/kubernetes/enhancements/pull/1383/

A

Thank you, everybody, for joining us for another OpenShift Commons briefing in the All Things Data series. Today we have Jon Cope and Jeff Vance from the Red Hat CTO office, and they are the key drivers for object bucket provisioning in Kubernetes. So we're very excited to have you here. Please take it away.

B

Hi. My name is Jon Cope and, along with my colleague Jeff Vance, we've been working on object bucket provisioning for quite a while now. It started off as a small project and has grown into a much bigger, community-supported project that we're going to be talking about here today. This initially started off as an in-house solution to the lack of an object storage API in Kubernetes.

B

At the time there wasn't, and there still isn't, an API that allows a normalized interaction with object storage for provisioning buckets across vendors. As a result, administrators often have to go out of band, outside of Kubernetes, to manage their bucket quotas and storage quotas and to manage cost. They have to handle user policy out of band, attaching users and roles to buckets through the vendor's interface.

B

That's an extra layer of complexity in the system, and it creates an inconsistent workflow for users, who have to figure out on their own how to inject connection and credential information into their pods or their workloads. This creates, we feel, some security holes when the user and administrator have to go outside of Kubernetes, get their credentials, and then inject them into the workload manually. And, of course, at scale this becomes incredibly difficult to manage.

B

We want to simplify the onus on them to describe their workloads in their YAML specifications. Just as they can put a PVC or a persistent volume definition in a YAML file along with their pod, we want to make that possible for bucket provisioning, so they can say: don't start my workload until this bucket is ready; create this bucket and then, when it's ready, give me the connection information and inject it into the workload.
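As a rough illustration of the gating behavior described above, the following Python sketch models a workload that is not started until its bucket is ready and that then receives the connection information. It is only a toy model under stated assumptions: every class, field, and environment variable name here is hypothetical and is not taken from the proposal, and credentials are left out for brevity.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class BucketRequest:
    """Hypothetical stand-in for a user's request for a bucket."""
    name: str
    ready: bool = False
    endpoint: Optional[str] = None
    bucket_name: Optional[str] = None


@dataclass
class Workload:
    """Hypothetical stand-in for a pod that depends on one bucket."""
    name: str
    bucket: BucketRequest
    env: Dict[str, str] = field(default_factory=dict)
    started: bool = False


def mark_provisioned(bucket: BucketRequest, endpoint: str, bucket_name: str) -> None:
    """Record what a provisioner would report once the bucket exists."""
    bucket.endpoint = endpoint
    bucket.bucket_name = bucket_name
    bucket.ready = True


def try_start(workload: Workload) -> bool:
    """Start the workload only if its bucket is ready, injecting connection info."""
    if not workload.bucket.ready:
        return False  # keep waiting; the bucket is not provisioned yet
    # Assumed variable names; a real system would also inject credentials.
    workload.env["BUCKET_ENDPOINT"] = workload.bucket.endpoint or ""
    workload.env["BUCKET_NAME"] = workload.bucket.bucket_name or ""
    workload.started = True
    return True
```

Calling `try_start` before `mark_provisioned` leaves the workload untouched; calling it afterwards starts the workload with the endpoint and bucket name in its environment.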

B

So we want to automate the entire process and keep them from having to go out of band, either through the CLI or a web GUI, to create their bucket and get their credentials. We also want to make it possible, to some extent, to make these workload specifications portable, assuming that the backing object store vendor supports the same protocol. So if they're moving from, say, AWS S3 to an on-prem cluster running Ceph, their workload should still run without any modifications. So, to touch on some of the goals of the project.

B

We want to create a control plane within Kubernetes and OpenShift for buckets. I call it control plane here because I want to explicitly say we're not replacing or abstracting the actual object store vendor protocols, that is, S3, GCS, Azure Blob, so users will still be using those SDKs. We want to normalize the experience of provisioning the buckets. We want to provide vendors an easy onboarding experience when they're writing their provisioners. So this will be much like CSI.

B

CSI is written against a gRPC interface with a handful of methods that a vendor would write, and it abstracts away all of the Kubernetes controller framework so that provisioner authors don't have to be Kubernetes experts when they're writing these provisioners. And to touch on non-goals as well: we do not want to define, as I said, a native object store protocol. That is something we're hoping to enable with this project, and it's something that has been talked about as a stretch goal following this project. But currently that's not something we're trying to solve.
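To make the driver-interface idea from this part of the talk more concrete, here is a minimal Python sketch of a small interface that a vendor implements while generic controller logic stays separate. In the proposal this boundary is a gRPC service; a plain abstract class stands in for it here, and the method names (`create_bucket`, `delete_bucket`), the `InMemoryDriver`, and the `reconcile` helper are all hypothetical.

```python
from abc import ABC, abstractmethod
from typing import Dict


class BucketDriver(ABC):
    """Hypothetical vendor-facing interface with a handful of methods."""

    @abstractmethod
    def create_bucket(self, name: str, parameters: Dict[str, str]) -> str:
        """Create a bucket and return a vendor-specific bucket ID."""

    @abstractmethod
    def delete_bucket(self, bucket_id: str) -> None:
        """Delete the bucket with the given ID."""


class InMemoryDriver(BucketDriver):
    """Toy driver that stores buckets in a dict instead of calling a vendor."""

    def __init__(self) -> None:
        self.buckets: Dict[str, Dict[str, str]] = {}

    def create_bucket(self, name: str, parameters: Dict[str, str]) -> str:
        bucket_id = f"mem-{name}"
        self.buckets[bucket_id] = dict(parameters)
        return bucket_id

    def delete_bucket(self, bucket_id: str) -> None:
        self.buckets.pop(bucket_id, None)


def reconcile(driver: BucketDriver,
              desired: Dict[str, Dict[str, str]],
              actual: Dict[str, str]) -> Dict[str, str]:
    """Generic controller step: create missing buckets, delete unwanted ones.

    `desired` maps bucket names to parameters; `actual` maps names to IDs.
    """
    for name, params in desired.items():
        if name not in actual:
            actual[name] = driver.create_bucket(name, params)
    for name in list(actual):
        if name not in desired:
            driver.delete_bucket(actual.pop(name))
    return actual
```

The point of the split is that `reconcile` never needs to know which vendor it is talking to, and a driver author never needs to touch the controller logic.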

B

We're not trying to make it possible, to lean on the first point, to shift workloads between object store protocols. So if you're shifting from Azure to AWS S3 and you're using the Azure SDK, we're not trying to make it possible to magically drop that in AWS and make that work. That's not something that this project is trying to solve. And, lastly, we're not trying to orchestrate the infrastructure of object stores, that is, the spinning up of actual physical storage and the deploying of, say, Ceph Object onto nodes with storage.

B

I'd

recommend,

if

you're

looking

for

something

like

that

checking

out

rook

IO.

That

does

a

very

good

job

of

orchestrating

that

kind

of

stuff

and

they

do

have

support

for

stuff

some

of

the

history

of

this

project.

So,

as

I

said

earlier,

this

start

off

is

a

kind

of

a

small

in-house

solution

has

grown

sense

as

a

result

of

its

origins.

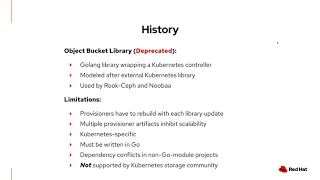

We

opted

for

a

library

that

would

be

imported

into

projects

and

that

library

would

wrap

the

kubernetes

controller

long,

and

we

would

ask

vendors

just

to

write

to

an

interface

that

we

had

to

find.

B

This has some pretty significant limitations that weren't a problem for our in-house solutions but, as we started to see bigger interest in this from the broader Kubernetes community, we started to see some of the real limitations here. Namely, one: every time a library update occurred, so a bug was fixed or a feature was added, those provisioners had to be rebuilt, because it was a code dependency of those provisioners.

B

The design itself used ConfigMaps and Secrets to represent a single bucket, and at scale that becomes pretty unmanageable because you've doubled the number of objects you have to manage per bucket. It was Kubernetes-specific, whereas a CSI-like model with the gRPC interface can actually be implemented for any container orchestration platform. And, of course, as a result of being written in Go, it required that anyone who depended on it write their provisioners in Go, which again wasn't a big issue for us.

B

At the time we were only writing for projects that were already based on Go. And, of course, with Go, especially older Go, dependency management was a real problem prior to Go modules, and we ended up spending an increasing amount of time deconflicting dependencies. And, lastly, we didn't have support for it in the Kubernetes community at the time.

B

Coming to today, with the enhancement proposal, we've got a pull request open against Kubernetes. It's led by Jeff and myself, and we have support for it within SIG Storage and are working closely with some of the chairs of SIG Storage to really get this moved through. What it proposes is a native Kubernetes, community-maintained API, and what that means is that the conversation has been around making this an API that is not external to the core Kubernetes API.

B

It would be part of core. It would be referenceable from within, say, pods and deployments and things like that, and the benefit of that would be pretty large. For one, it would significantly cut down the number of extra API objects required to manage each bucket. We'd have a single bucket object per bucket, rather than a Secret and a ConfigMap, and that bucket could be referenced by a pod.

B

Some of these I've already mentioned before. We separate the Kubernetes orchestration from the provisioner code so that, via this gRPC interface, provisioner authors could write their provisioners in any language that's supported by gRPC, of which there's a list of 20 or more. So they're not tied to Go; they don't have to learn Go to write a provisioner. This significantly simplifies upgrades. The COSI sidecar, just like in CSI, would run in its own container. So whenever an update, a bug fix, or a feature came out for it, there wouldn't be a matter of having to deconflict dependencies, rebuild your provisioner, and then deploy that. It would just be updating your sidecar container version and you're good to go.

And, probably most importantly, as I had said already, we have support within SIG Storage for this, and that's pretty significant support. In fact, we were invited to talk about this at last year's KubeCon. We've gotten a lot of support from the technical chairs on this, and we're holding weekly meetings to move this along and improve on the API.

B

So we can get the KEP merged and begin designing the lower-level implementations. To dive into the design itself a little bit, we're looking at introducing three new API objects: a Bucket, a BucketContent, and a BucketClass. The Bucket is similar to a PVC. It's a user-created object, and it triggers the provisioning of a new bucket by the sidecar and the provisioner-authored driver. The BucketContent is analogous to a persistent volume.

B

It represents a cluster-scoped, administrative side of the bucket, containing bucket metadata that you don't necessarily want users to be privy to. And BucketClasses, very similar to StorageClasses, provide an object-store-tailored API to represent a set of parameters that users would reference from their Bucket object. As an MVP, we want to support three separate cases for buckets: greenfield, being the creation of new buckets on demand by users, so those would be brand-new, empty buckets, and brownfield.
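The relationship between the three objects described above can be sketched with plain Python dataclasses. This is only an illustration of the roles as explained in the talk (user request, administrative record, and class of parameters) for the greenfield case; the field names, the naming scheme for the content object, and the `provision_greenfield` helper are assumptions rather than the proposed API.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class BucketClass:
    """Admin-defined set of parameters, similar in spirit to a StorageClass."""
    name: str
    provisioner: str  # hypothetical: which driver handles this class
    parameters: Dict[str, str] = field(default_factory=dict)


@dataclass
class Bucket:
    """User-created request, similar in spirit to a PVC."""
    namespace: str
    name: str
    bucket_class_name: str


@dataclass
class BucketContent:
    """Cluster-scoped administrative record, similar in spirit to a PV."""
    name: str
    provisioner: str
    parameters: Dict[str, str]
    bound_to: str  # "namespace/name" of the requesting Bucket


def provision_greenfield(bucket: Bucket,
                         classes: Dict[str, BucketClass]) -> BucketContent:
    """Build the admin-side record for a brand-new bucket from the user's request."""
    bucket_class = classes[bucket.bucket_class_name]
    return BucketContent(
        name=f"{bucket.namespace}-{bucket.name}",
        provisioner=bucket_class.provisioner,
        parameters=dict(bucket_class.parameters),  # copy so the class stays untouched
        bound_to=f"{bucket.namespace}/{bucket.name}",
    )
```

The user only ever writes the `Bucket`; the class parameters and the resulting `BucketContent` stay on the administrative side.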

B

So what we see this doing for our customers is enabling a couple of use cases, at least. Hybrid cloud is a big push for Red Hat right now. With these portable APIs, it would make it a lot easier for users to pick their workloads up out of one cloud provider and drop them in another, assuming, of course, that the object store protocol remains the same.

B

The earlier example I gave was picking up from AWS and dropping it on-prem onto Ceph Object. That should be a seamless action that doesn't require any changes to the workload. There has been some talk around backup and disaster recovery operations, and object storage really enables orchestration of periodic backup and disaster recovery. Namely, the fact that it provides versioned, immutable data storage makes it a prime solution for storing backup information. By normalizing object storage provisioning, we enhance those efforts and make them a lot more portable across cloud providers.

And, lastly, it provides a nice streamlined way for applications that are very reliant on object storage now, namely AI/ML and service apps, to be written and deployed on Red Hat products or on cloud providers without having to become an expert in that object store. I just want to say thank you for listening to the webinar. Again, my name is Jon Cope; my colleague is Jeff Vance.

B

We would love for you to reach out to us if you have any questions or comments. If you have user stories that you think we should know about, we'd love to hear from you. We hold weekly meetings on the review that are public. If you join the Kubernetes SIG Storage Google group, you'll automatically get invites for those. And please feel free to jump over to the actual pull request and take a look at the document itself.

C

So, like Jon mentioned, yeah, and you're right: Google Cloud, Microsoft's cloud, Amazon's cloud, they all have a different SDK and therefore a different API that you use if you are outside of Kubernetes and you're trying to create buckets, list buckets, add to buckets, or delete them, and they're not the same. That is the problem with buckets: there's no POSIX, there's no IEEE standard for buckets, and that's why Kubernetes hasn't addressed it yet. That's what Jon mentioned about the data plane, or those protocols.

C

What we're trying to do is manage what we call the control plane, so that you can use kubectl to create resources that will be a request for a bucket, so that there's an abstraction of buckets and a single, consistent way of managing buckets through Kubernetes. Quota could be applied, and the high-level bucket is a first-class Kubernetes resource now. So that's what we're trying to do.

But because there's not a consistent data plane, you can't take an application written using the AWS SDK for your buckets, because that app knows it's using the AWS bucket API. You can't take that app and just stick it on Google Cloud and expect it to work just because the control plane is the same. In other words, you create this claim, this request for a bucket, which is the Bucket CRD that Jon mentioned. That part's the same, but underneath you'd be referencing a different bucket class that would have a different driver; it would be a Google driver, not an Amazon driver, and so forth. So that app can't be portable across different bucket stores.
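The distinction drawn here, that the bucket request looks the same everywhere while the application's data-plane protocol decides whether it can actually move, can be sketched in a few lines of Python. The class names, driver names, and the `bucketClassName` key are made up for illustration; the only facts relied on are that AWS S3 and Ceph's object gateway both speak the S3 protocol, while Google Cloud Storage has its own API.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class BucketClass:
    """Hypothetical bucket class: names a driver and the protocol it exposes."""
    name: str
    driver: str
    protocol: str


# Assumed example classes; none of these names come from the proposal.
CLASSES: Dict[str, BucketClass] = {
    "aws-s3": BucketClass("aws-s3", "example-aws-driver", "s3"),
    "onprem-ceph": BucketClass("onprem-ceph", "example-ceph-driver", "s3"),
    "google-gcs": BucketClass("google-gcs", "example-gcs-driver", "gcs"),
}


def bucket_request(name: str, class_name: str) -> Dict[str, str]:
    """The control-plane request: identical in shape for every vendor."""
    return {"kind": "Bucket", "name": name, "bucketClassName": class_name}


def workload_runs_unmodified(app_protocol: str, request: Dict[str, str]) -> bool:
    """An app moves without code changes only if the data-plane protocol matches."""
    target = CLASSES[request["bucketClassName"]]
    return app_protocol == target.protocol
```

An application written against the S3 SDK passes this check for the `onprem-ceph` class but fails it for `google-gcs`, even though the request differs only in the class name.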

B

And no, I don't believe so. What we're describing, in the same vein as CSI, would be that AWS, or someone supporting AWS, would write their AWS driver. That would likely live within a SIG Storage managed repository or project, but be maintained by the authors of that driver and perhaps other people within SIG Storage. The drivers themselves would not be part of core Kubernetes code.

E

So the object bucket claim, which we introduced last year and which I think a lot of Red Hatters are using, was based on lib-bucket-provisioner, using the idea of an external provisioner just to create that storage. Since then the community has wanted to pivot more towards a CSI implementation, because that's how all data will be accessed within the Kubernetes infrastructure, and with that the community wanted to go with a different naming convention.

E

There was a pretty strong opinion about that, given that, if they could go back in time, they would have changed the PV/PVC nomenclature. So that's why the name is different. The object bucket claim and object bucket were used before; it's going to be somewhat the same, but not tracking as closely to PV/PVC, which is unfortunate in our case. I mean, we liked being able to relate that as an admin, understanding that's the way you consume other types of storage, but we'll take what we can get.

C

To add to what Erin said, and there's a chat conversation on this: the library design had an OBC, which was an object bucket claim. That's what you see in OpenShift documentation and Rook documentation, and people are familiar with that because it sounds like a PVC. But when you really think about it, it's fairly different.

C

The important part is the pod-referenced claim, and we don't have that with an OBC. You have an OBC and it's separate from the pod; there's no place in the pod where it references that OBC. So the analogy falls short with the library design. And, most importantly, as Erin said, SIG Storage did not like that name. In fact, they said that if they were starting all over, they wouldn't have called it a PVC. They don't think it's plain.

A

I mean, that's really interesting. I'm so glad I'm here to listen to this, and it looks like others are as well, going from the chat. So thank you all for joining us and giving us an update on object bucket provisioning and all the great work that you're doing upstream. With that, a quick announcement for everybody: we'll have another session next week, same time, in the All Things Data series. Thank you, everybody, for joining us again.