►

Description

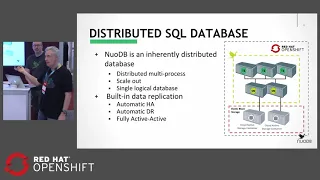

Organizations commonly struggle when designing container applications that use SQL databases. Traditional database management systems aren't built for distributed architectures. However, with a container-native approach such as NuoDB's, it becomes straightforward to deploy an elastically scalable, highly available, SQL database in Red Hat OpenShift Container Platform. Join us to learn how!

A

Excellent

well

welcome

to

our

presentation.

Today

we

are

nuodb

and

today

we're

going

to

be

talking

about

deploying

a

container

native

sequel

database

inside

of

OpenShift.

Okay.

My

name

is

Joe

Leslie

I'm,

a

product

manager

with

nuodb.

This

is

Ben

Higgins.

He

is

our

lead

DevOps,

engineer

extraordinaire

that

is

responsible

for

our

integrations

and

our

delivery

of

our

open

shift

solution.

A

So

let's

go

ahead

and

look

at

what

we

plan

to

present.

Today

we

are

going

to

first

share

a

little

bit

about

nuodb

and

then

we're

going

to

talk

about

some

of

the

traditional

challenges

that

the

companies

are

faced

with

when

deploying

a

traditional

database

in

OpenShift,

and

then

we're

going

to

talk

about

our

container

native

approach

and

how

nuodb

approaches

delivering

a

database

inside

of

OpenShift

and

then

then

Ben's

gonna

have

a

live

demo

for

you,

we're

gonna,

we're

gonna,

show

you

nuodb

running

inside

of

open

ship.

So

let's

go

ahead

and

get

started.

A

So

let's

talk

a

little

bit

about

nuodb

in

our

product.

We

are

nuodb.

We

we

are.

Our

product

is

nuodb,

it

is

a

sequel

distributed

database

and

it

has

several

superpowers

that

I

want

to

cover

before

we

we

get

further

into

the

presentation,

and

one

of

them

is

simplicity

of

scale

out,

not

scale

up

but

scale

out

right.

We

can

scale

out

our

database

across

commodity

Linux

hardware.

We

are

linux,

us

processes

that

can

run

on

prem

in

bare

metal,

virtualized

machines

and

even,

of

course,

containers

which

we're

going

to

demonstrate

today.

A

We

also

provide

continuous

availability,

because

our

database

is

a

process

redundant

database.

As

you

scale

out

your

processes,

you

are

also

protecting

that

environment

and

your

applications

will

continue

to

run,

even

if

you

lose

a

process

due

to

a

hardware

failure

or

a

network

failure,

whatever

it

may

be,

the

database

will

continue

to

run

and

and

the

except

connection

request

and

process

sequel.

A

So

we

do

all

that,

while

providing

transactional

consistency,

we

are

a

sequel

database,

so

we

adhere

to

the

the

acid

properties,

the

acid

database

properties

as

well

as

we

are

a

full

sequel,

ANSI

standard

database.

Okay.

So

when

migrating

sequel

applications

to

nuodb,

it's

actually

quite

easy,

because

all

that

investment

you

made

in

your

sequel

and

your

applications

will

continue

to

run

in

nuodb.

A

So

we

do

all

that

also

providing

the

durability.

You

would

expect

at

the

storage

layer,

creating

multiple

storage,

copies

and

all

that

native,

that

replication

happens

natively

within

nuodb,

and

this

this

is

sort

of

what

we

expect

from

our

cloud

and

container

applications.

Today

we

expect

100%

availability

always-on.

So

now,

let's,

let's

go

ahead

and

look

at

some

of

the

challenges

when

deploying

a

traditional

database

in

OpenShift

or

in

a

containerized

or

micro

services,

type

environment

right.

A

These

traditional

database

products

are

monoliths

with

with

a

very,

very

tightly

integrated

stack

of

software,

and

it's

it's

hard

to

they're,

not

designed

to

break

apart

easily

and

run

those

components

in

micro

services

and

individually

in

containers

makes

them

very

difficult

to

deploy.

It

makes

it.

You

know

more

work,

there's

additional

complexity

and

setting

these

up

in

a

container

model,

and

they

just

don't

work

well

with

this

idea

of

easily

deploying

breaking

down

and

restarting

containers.

A

So

let's

go

ahead

and

see

you

know

just

what

are

the

options

that

the

database

would

have

within

a

containerized

environment?

You

can

run

the

deed,

the

database

outside

of

open

ship

and

that's

the

first

one

we

see

here.

That's

probably

you

know

the

one

that

you

know.

The

database

is

still

the

same

database

and

there's

really,

you

know

very

little

additional.

A

It's

just

poking

a

hole

through

the

openshift

Network

and

looking

outside

to

a

external

network

right

with

a

network

overlay,

but

this

has

problems

of

security,

holes

and

issues

and

would

not

be

the

recommended

way

to

run

a

date

for

an

open

shift

application.

Well,

we

can

improve

upon

that

alil

if

we

could

still

run

the

database

outside,

but

we

could

also

use

a

data.

Caching

mechanism

using

like

a

third

party

data,

cache

you

you

could

achieve

better

performance,

but

yet

you

still

have

the

problems

of

you

know

from

the

application.

A

A

Awesome

great

I'm

getting

we're

gonna

go

ahead

and

pick

up

there.

So

finally,

I

just

want

to

cover

this

last

approach

where

you

could

force

fit

a

monolithic

database

into

a

container

and

you

would

get

sort

of

this

container

bloat

problem

again.

This

is

no

real

advantage

to

using

this

type

of

micro

service

environment.

You

you.

A

This

is

just

yet

another

virtualization

type

of

solution

right

and

if

you

want

another

one

well,

you

would

just

go

ahead

and

stand

up

a

copy

of

it

and

shard

your

database

and

yet

you're

still

just

bloating

containers

you're,

forcing

a

traditional

model

into

a

newer

model

that

offers

so

many

other

benefits

you're,

not

taking

advantage

of.

Let's

look

at

the

new

DB

approach

right,

so

nuodb

is

a

distributed

sequel

database

by

Nature.

It

is

a

set

of

processes

that

you

can

go

ahead

and

distribute

across

your

your

environment

and

so

in

a

containerized

environment.

A

They

run

very

nice

in

each

process.

In

its

own

container,

we

have

multiple

TVs,

multiple

storage

managers.

The

storage

managers

connect

up

to

the

the

PVCs

that

the

persistent

volume

claims

claims

to

attach

to

the

storage.

If

we

should

lose

any

one

of

these

open

ship

will

real

orchestrate

the

replacement

of

that

process

very

quickly,

because

it's

a

single

process

running

in

a

container

using

containers,

just

as

they

were

designed

right

now.

A

The

Storage

Manager

connects

up

to

this

storage,

where,

even

if

we

lose

a

storage

manager

when,

when

that

Storage

Manager

is

replaced,

it

will

pick

up

that

PVC

and

and

and

pick

up

all

of

its

data.

That

was

there.

It

may

take

30

to

60

seconds,

but

then

it's

going

to

resynchronize

with

an

active,

Storage,

Manager,

okay,

and

that

that

new

Storage

Manager

will

be

online

in

about

30

seconds

to

a

minute.

So

that's

our

approach

and

abend

is

gonna.

A

B

B

All

right,

so

what

we're

gonna

start

off

with

is

a

single

zone

deployment

and

then

we're

gonna

show

you

spreading

out

and,

as

Joe

pointed

out,

these

are

basically

micro

services,

so

we've

micro

service

tower

admin,

layer,

art

transaction

engine

layer

which

is

are

cached

of

our

database

and

the

Storage

Manager.

So

all

of

our

writing

out

to

disk

is

done

down

in

another

layer

in

this

environment.

We're

gonna

show

that

we're

using

a

cloud

native

storage

at

the

moment

we're

using

open

EBS,

but

we're

working

here

with

the

storage

OS.

A

B

Well,

okay.

So

what

we're

looking

at

here

is

a

single

cluster,

four

node

nuodb

and

it's

deployed

in

u.s.

East,

2a

and

you'll

see

that

we

have

a

total

of

five

pods

that

are

running

I,

just

scaled

up,

the

TE,

our

transaction

engine

inside

that

transaction

engine

we're

actually

running

a

payload

with

ycs

B.

This

is,

it

sounds

a

little

bit

strange,

but

actually

to

improve

performance.

B

A

A

Here

they

come

to

come

so

that

that

application

is

coming

online

and

we

start

to

see

already

that

it

is

this

single

transaction

engine

is

processing

just

just

about

50000

transactions

per

second,

this

is

a

YC

SB,

95

5

read/write

workload

very

similar

to

what

many

of

your

applications

might

actually

be

driving

as

well.

Okay,.

B

B

Normally,

what

you

would

have

to

do

is

you

would

have

to

deploy

that

box

or

that

image,

and

then

you

would

have

to

do

a

backup,

restore

set

the

slaves,

let

the

master

and

point

the

replicating

fire

off

the

replication

right.

New

DB

doesn't

need

to

do

that.

It

already

has

that

knowledge

inside

of

its

own

framework.

B

B

Alright,

and

that

is

in

u.s.

East

Qi

way,

so

I

don't

need

to

change

anything

else.

Rd

I'm

gonna

reduce

the

number

of

containers

I'm

going

to

fire

up

for

speed

of

delivery.

So

that's

it.

We

already

have

a

secret

store

out

there

and

we

also

already

have

a

new

admin

DNS.

So

we

don't

need

to

reproduce

those.

So

we

have

a

service

that

does

DNS

resolution

for

our

admin

layer.

B

B

B

A

We

can

also

see

that

as

well

from

the

open

shift

in

her

Facebook

right

from

open

ship

you'll

actually

see

those.

We

also

have

a

new

ODB

insights

monitoring

tool

that

will

also

show

when

those

processes

come

online

Ben.

Could

you

look?

Could

you

show

us

what

the

the

monitoring

tool

looks

like?

Oh

look

at

that

there's

our

load

and-

and

so

we

still.

B

A

B

All

right,

we

should

see

them

now,

so

the

other

environment

has

to

have

the

admin

running.

First,

then

the

storage

manager

will

come

along

and

it

will

tag

that

region

so

that

we

can

use

load

balancing

for

our

connection

strings.

So

we

have

a

unique

connection,

JDBC

or

ODBC

connection

string

that

works

in

our

environment,

because

normally

you

would

connect

and

expect

traffic

from

whatever

device

you

connected

to

right.

B

B

So

if

you

want

to

connect

to

the

locus

the

closest

device,

you're

gonna

say

well,

my

application

is

deployed

to

us

to

a

so

then

my

load

balance

priority

will

say

us

east

to

a

and

then

the

second

one

will

say

us

to

be

and

allow

you

to

say,

and

then

it

will

load

balance

you

first

to

a

and,

if

they're

not

available,

it

will

then

send

traffic

to

to

B.

So

you

can

expand

that

out

to

what

four

or

five

different

options.

Oh

yeah,.

B

So

far

we

see

the

us

two-way

still

in

here,

but

we're

gonna

go

back

to

Griffin

and

we're

gonna

see

that

increase

in

transactions

so

we're

doing

closer

to

150,000

transactions

per

second

right

now

and

remember,

this

is

a

distributed

environment.

This

is

using

the

YC

sbu

workload

B,

which

has

95

percent

read

and

five

percent

updates,

so

each

one

of

these

updates

actually

is

replicated

across

the

every

single

storage

manager.

So

this

is

a

full

active

active

database,

so

changes

could

be

made

in

whatever

AZ

or

region

that

year

s/m

has

been

deployed.

A

That

looks

great.

Their

band

looks

like

you

got

near

linear

scale

on

those

transactions.

You

went

from

one

to

three

and

you

jump

from

about

fifty

thousand

transactions

to

one

hundred

and

fifty

thousand

transactions

per

second,

all

with

inside

open

ships,

simply

by

toggling

up

the

number

of

transaction

engine,

pods,

very

impressive

Ben.

So.

B

Our

so

these

are

pods

are

being

managed

by

the

Staple

sets,

mainly

because

we

gotta

have

our

persistent

storage,

and

you

can

see

that

look.

We

may

have

a

affinity

restriction

in

this

environment.

That's

causing

this

one

to

fail

this

morning,

I

scaled

down

our

AWS

environment

and

reduce

the

number

of

pods.

So

we

may

have

crossed

over

and

hit

our

affinity

because

we

want

to

just

before

high

availability

where

we

want

to

deploy

each

container

to

its

own

host.

Therefore,

if

a

host

disappears

or

a

node

disappears,

we

still

have

an

active

environment.

B

B

A

A

Yes

well

well,

thank

you

all

for

for

joining

us

today.

If

you

would

like

to

learn

more

about

nuodb,

the

elastic

sequel

database

that

runs

inside

of

containers

and

is

deployment

agnostic,

you

can

visit

us

over

at

our

booth

and

we'd,

be

glad

to

show

you

more

of

the

demo

and

and

answer

your

questions.

So

thank

you

for

joining

us

today.