From YouTube: Arista and Red Hat OpenShift

Description

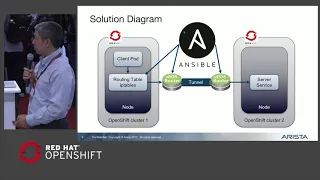

In this talk from the OpenShift partner theater at Red Hat Summit 2018, Fred Hsu from Arista discusses how to connect OpenShift clusters using Arista vEOS and Ansible playbooks. Thanks to the programmable nature of EOS and the automation provided by Ansible, pods in an OpenShift cluster can reach services in a remote cluster, without NAT. By retaining the original IP address of the requesting pod, services are aware of exactly what pods are making the requests, improving security and simplifying application deployment. The clusters can be on-prem, public cloud, or hybrid and are connected with GRE or IPSec tunnels.

A

So our agenda: we're just gonna quickly go over Arista, for those of you who may not be familiar with what Arista does. Then we'll go into the problem that we're trying to solve with OpenShift, we'll explain a little bit about what the OpenShift SDN looks like, and then we'll propose the solution for our problem with OpenShift. Then we'll open it up for Q&A after that.

A

So for those of you who are unfamiliar with Arista, we're a data center networking company. We have 15% of market share, which makes us the number two data center networking equipment maker, right after Cisco. We have over 15 million ports shipped, and we have over 26 new products introduced over the last year. If you look here at the Gartner Magic Quadrant, you can see that Gartner has ranked Arista pretty favorably. We're up there on the top right, so we're both a leader and a visionary.

A

What we recently introduced last year was the Any Cloud platform. So that's taking the same operating system that we have on Arista switches and putting that into a virtual appliance. So this is providing virtualized routing and switching for different applications, such as cloud-native applications. And that's what we'll be talking about here: how we can integrate vEOS, our virtual EOS system, with OpenShift.

A

So the problem we're trying to solve with OpenShift here is: when customers are deploying multiple clusters, how do they interconnect the different clusters together? These clusters could be spread across different clouds. They could be spread across data centers. What we want to provide is a way for customers to access resources across clusters without necessarily having to go through NAT gateways or change their IP addresses. We want pods and services to be able to use the private IP addressing and the cluster IPs to contact each other.

A

So when you're talking from one pod to another pod within the same cluster, that goes over your VXLAN tunnels. Now, once you're trying to talk to something outside of your cluster, you go through a tunnel interface, which is called tun0 on your host, and that's how you speak to the outside world. It's going to get NATed, or go through an egress, and get out to the outside world, so your IP address is going to change as soon as you want to talk to something that's not within your OpenShift cluster.
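
The default behavior described above can be illustrated with a small Python model. This is only a sketch under stated assumptions: the `Packet` class, the `send_default` function, the pod CIDR `10.128.0.0/14`, and the node address `192.0.2.10` are hypothetical and are not taken from the talk. It shows that a packet staying inside the cluster keeps its source address, while a packet leaving through tun0 is seen by the receiver with the node's address instead of the pod's.

```python
from dataclasses import dataclass, replace
from ipaddress import ip_address, ip_network


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str


def send_default(packet, cluster_cidr, node_ip):
    """Return (path, packet as the receiver sees it) for the default behavior."""
    if ip_address(packet.dst) in ip_network(cluster_cidr):
        # Pod-to-pod inside the cluster: VXLAN overlay, addresses untouched.
        return "vxlan", packet
    # Everything else leaves through tun0 and is source-NATed
    # (modeled here as a rewrite to the node address).
    return "tun0+nat", replace(packet, src=node_ip)


if __name__ == "__main__":
    cidr, node = "10.128.0.0/14", "192.0.2.10"  # hypothetical values
    print(send_default(Packet("10.128.1.5", "10.129.2.7"), cidr, node))
    print(send_default(Packet("10.128.1.5", "10.132.0.9"), cidr, node))
```

The second call is the case the talk sets out to change: the remote side can no longer tell which pod made the request.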

A

So this is just a quick, high-level diagram of what we're putting together here. Here we have two different clouds, AWS and Azure. We have your private data center, and we have vEOS Router stitching together these clusters and disparate systems. So I have a cluster running in Amazon, I have a cluster running in Microsoft, and I have my own private cluster, and I want to stitch all these things together.

A

So the first phase of this is we're gonna leverage Ansible playbooks to automate and deploy the clusters, as well as deploy the routing policies in order to interconnect these clusters. So what you're gonna see in the demo is how these Ansible playbooks can be deployed and how we can deploy these configurations automatically, allowing these clusters to interoperate. There are two different playbooks that'll be instantiated. The first one is when you're standing up a new cluster.

A

To give you a little bit more detail: what happens is, when we have the Ansible playbook run, it actually inserts rules into both the routing table of the host and the iptables rules of the host. So we're gonna insert a rule that says, to reach the second cluster, you're gonna go through your vEOS gateway. We're also going to insert some rules into the iptables chain so that we can say, for the traffic that's going to our different cluster, we're not going to NAT. We're gonna insert this rule before the NAT rule takes place. Instead, what we're gonna actually have is a GRE tunnel to connect between the host and the vEOS router. So that's how we're gonna get traffic from your hosts to the vEOS router.

When the new cluster is routed, we do the modifications that I pointed out on the earlier slides, so we'll modify the routing table and we'll modify the iptables rules for your inter-cluster traffic.
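
As a rough illustration of the host-side changes just described, the Python sketch below builds (but does not run) the kind of Linux commands that could implement them. The function name `host_commands`, the device name `gre1`, the addresses, and the choice of the `POSTROUTING` chain in the `nat` table are all assumptions made for this example; the actual playbook and the chain that OpenShift SDN uses for masquerading may differ.

```python
from ipaddress import ip_network


def host_commands(remote_cidr, host_ip, veos_ip, gre_dev="gre1"):
    """Build the commands for one host; nothing is executed here."""
    cidr = str(ip_network(remote_cidr))  # raises ValueError on a bad CIDR
    return [
        # GRE tunnel between this host and the vEOS router.
        ["ip", "tunnel", "add", gre_dev, "mode", "gre",
         "local", host_ip, "remote", veos_ip],
        ["ip", "link", "set", gre_dev, "up"],
        # Route: traffic for the remote cluster goes through the tunnel.
        ["ip", "route", "add", cidr, "dev", gre_dev],
        # Exemption inserted at position 1, ahead of any NAT rule, so that
        # traffic to the remote cluster keeps its source address.
        ["iptables", "-t", "nat", "-I", "POSTROUTING", "1",
         "-d", cidr, "-j", "ACCEPT"],
    ]


if __name__ == "__main__":
    # All addresses below are hypothetical documentation values.
    for cmd in host_commands("10.132.0.0/14", "192.0.2.10", "198.51.100.1"):
        print(" ".join(cmd))
```

Returning argument lists rather than running them keeps the sketch safe to try on any machine; the order matters only for the last entry, which has to sit before the NAT rule to take effect.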

A

It's actually going to exit tun0 and go over the GRE interface. For your intra-cluster traffic, you're still gonna go over your VXLAN overlay, so the same mechanisms that you use for your pod-to-pod traffic within a cluster still remain. For your outbound traffic that's not destined for a remote cluster, that's gonna go out your tun0 and it's gonna get NATed just as usual, so there's been no modification whatsoever to the standard OpenShift SDN platform in this case.
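
The three cases described here can be summarized as a small decision function. This is a minimal Python sketch, assuming hypothetical CIDRs and a hypothetical `choose_path` helper; it models only the outcome (which path, and whether the source address is NATed), not how the kernel reaches it.

```python
from ipaddress import ip_address, ip_network


def choose_path(dst, local_cidr, remote_cidrs):
    """Return (path, natted) for a packet sent by a pod to dst."""
    addr = ip_address(dst)
    if addr in ip_network(local_cidr):
        # Intra-cluster: unchanged, still the VXLAN overlay.
        return "vxlan", False
    if any(addr in ip_network(cidr) for cidr in remote_cidrs):
        # Inter-cluster: exits tun0, then the GRE tunnel, no NAT.
        return "gre", False
    # Any other outbound traffic: tun0 and NAT, as before.
    return "tun0", True


if __name__ == "__main__":
    local, remotes = "10.128.0.0/14", ["10.132.0.0/14"]  # hypothetical
    for dst in ("10.129.2.7", "10.133.4.1", "203.0.113.8"):
        print(dst, choose_path(dst, local, remotes))
```

Only the middle branch is new; the first and last branches are the behavior a standard cluster already has.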

So we'll take you just through a really quick demo. I apologize for the small font here. Oops, let's click this and see if I can get this to play.

A

So this is just running through the Ansible playbook that will connect the two different clusters together and modify all the appropriate rules. So you see that we're gonna run the playbook against my inventory of hosts, and we want to connect the different clusters. We will post this YAML file to GitHub, so that later on you guys can take a look at what the YAML file looks like.

A

You can see that it's going through the different tasks now. First off, it's adding the different IP routing rules to the different OpenShift hosts to point to the vEOS router for the remote cluster. Then we're modifying the iptables rules, so we're inserting that rule before the NAT rule, so that we don't NAT the traffic. And then finally we'll make the changes on the vEOS router.

A

So we're leveraging the Ansible module for vEOS to insert rules into the vEOS router to also route the traffic appropriately between the clusters, and we're gonna do this on both sides of the connection, so on the local cluster as well as the remote cluster. When all that's done, we've got our inter-cluster traffic. If pod 1 is talking to service B in the other cluster, that is going through without having to NAT or do anything.
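
Because the configuration is applied on both sides of the connection, each cluster needs to know the address ranges of every other cluster. The Python sketch below, with a hypothetical `peering_plan` function and made-up cluster names and CIDRs, derives that per-cluster list. It also rejects overlapping ranges, since routing without NAT only works when the clusters' address ranges are distinct; that check is an addition for this example and is not something the talk describes.

```python
from ipaddress import ip_network
from itertools import combinations


def peering_plan(clusters):
    """Map each cluster name to the remote CIDRs it must route and not NAT."""
    nets = {name: ip_network(cidr) for name, cidr in clusters.items()}
    for (a, net_a), (b, net_b) in combinations(nets.items(), 2):
        if net_a.overlaps(net_b):
            raise ValueError(f"{a} and {b} use overlapping address ranges")
    return {
        name: sorted(str(net) for other, net in nets.items() if other != name)
        for name in nets
    }


if __name__ == "__main__":
    # Hypothetical clusters matching the three sites in the diagram.
    plan = peering_plan({
        "aws": "10.128.0.0/14",
        "azure": "10.132.0.0/14",
        "onprem": "10.136.0.0/14",
    })
    for name, remotes in plan.items():
        print(name, "->", remotes)
```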