From YouTube: MicroShift a lightweight OpenShift at the Edge Ricardo Noriega & Miguel Angel Ajo Pelayo (Red Hat)

Description

MicroShift a lightweight OpenShift at the Edge

Speakers: Ricardo Noriega de Soto & Miguel Angel Ajo Pelayo (Red Hat)

OpenShift Commons Gathering Kubecon EU

May 17, 2022 Live from Kubecon EU in Valencia, Spain

Full Agenda here: https://commons.openshift.org/gatherings/OpenShift_Commons_Gathering_at_Kubecon_Europe_2022.html

Learn more at: https://commons.openshift.org

A

We're here to present MicroShift, a new and very exciting project that is getting a lot of attention lately. As we say, more or less, it's a lightweight implementation of OpenShift for the edge. We're going to do a brief introduction, a few slides, and then a cool demo. I hope the demo gods are good to us. First, let me explain the situation a little bit.

A

For over a decade, the IT industry has been trying to move workloads from legacy appliances to the data center, to centralized locations, to the cloud. However, more and more diverse devices are connected to the internet. Those devices are producing huge amounts of data, and it's getting important to put computing power next to where the data is generated, which is near those computing devices and those remote locations.

A

We provide distributed worker nodes, we provide three-node clusters and, lately, we provide single-node OpenShift. On the other side of the spectrum we have RHEL for Edge. RHEL for Edge is a flavor of Red Hat Enterprise Linux that is optimized for edge computing use cases. When I say optimized, it's because we picked certain technologies that are very suitable for these scenarios, like rpm-ostree for immutability, automatic upgrades, and rollbacks in case something goes wrong.

A

And a secure onboarding process for devices. This operating system is very well designed for field-deployed devices; I will explain later what those are. The recommended way to deploy applications on RHEL for Edge is by using Podman, usually for static, containerized workloads. So MicroShift comes in to fill the gap in between, as I mentioned before, to try to manage our workloads consistently from the cloud to the edge. There you go. And what is MicroShift? MicroShift is a small-form-factor OpenShift optimized for field-deployed devices.

A

Our team has been really focused on integrating MicroShift into RHEL for Edge. It provides a minimal OpenShift experience. When I say minimal, it's because we provide a lot of OpenShift APIs, like routes, security context constraints, et cetera, but we don't picture MicroShift as, for example, a platform to build your own container images at the edge. That doesn't make much sense for edge computing use cases.

A

So what are these field-deployed devices? I have something to show here. This is a Jetson Nano from NVIDIA. This is the developer kit, which has some connectivity, and something just fell off. You know, they're very cheap. The question is: why are they so different from servers? What are the characteristics that make them special? We are all kind of used to working with servers. Servers are highly standardized and highly scalable.

A

You can, for example, plug in more memory, more storage, accelerator cards and so on. But these field-deployed devices are usually systems on a board, systems on a chip, or single-board computers that are really pre-integrated, and it's very difficult to put more add-ons on top. The scenarios where you deploy these field-deployed devices are usually remote locations with no physical security barriers, with a network that is unstable or not present.

A

There

is

no

out-of-band

management

system.

Probably

there

is

no

ssh

enabled

even

so.

The

way

that

we

operate,

these

devices

compared

to

servers

is

very

different

and

the

way

that

we

deploy

those

devices

is

is

completely.

You

know

the

opposite

from

from

servers

in

a

centralized

location

in

a

data

center

very

quickly.

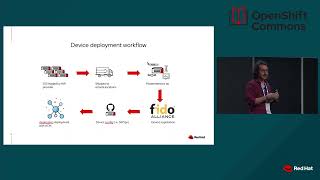

This

is

a

how

we

picture

the

the

deployment

workflow

of

a

field

deployed

device.

A

So I'm a customer and I want to deploy my edge solution, my application. Red Hat has software called Image Builder to create customized images of Red Hat Enterprise Linux, and of RHEL for Edge as well. So I create my own image with my dependencies and, for example, MicroShift. Then I go to my hardware vendor and I say: I want 2,000 devices, and you can put this image on those devices.
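
Editor's sketch: Image Builder takes its input as a blueprint, a small TOML file that names the image and lists the packages to include. The talk does not show one, so the following is only a minimal illustration that renders such a file from Python. The image name, description, version, and the `microshift` package name are assumptions made for the example, and `render_blueprint` is a hypothetical helper, not part of any Red Hat tool.

```python
def render_blueprint(name, description, version, packages):
    """Render a minimal Image Builder style blueprint as TOML text.

    Hypothetical helper for illustration only; all values are caller-supplied.
    """
    lines = [
        f'name = "{name}"',
        f'description = "{description}"',
        f'version = "{version}"',
    ]
    for pkg in packages:
        # One [[packages]] table per package; "*" means any available version.
        lines += ["", "[[packages]]", f'name = "{pkg}"', 'version = "*"']
    return "\n".join(lines) + "\n"


# Assumed values: the talk only says "my dependencies and, for example, MicroShift".
blueprint = render_blueprint(
    name="edge-device",
    description="RHEL for Edge image with MicroShift",
    version="0.0.1",
    packages=["microshift"],
)
print(blueprint)
```

The resulting text is what would be handed to Image Builder; the image built from it is what the hardware vendor would then flash onto the devices.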

A

Finally, when the device registers with my system, it will, for example, get registered against my ACM (Advanced Cluster Management) instance, and I will have 2,000 devices ready to get my applications deployed in a GitOps way. So this is, somehow, the workflow that we picture. How do we compare OpenShift with MicroShift? You can think about OpenShift as this vertically integrated solution.

A

OpenShift ships cluster operators, so those will be able to manage the infrastructure, the operating system, the versions of the different components and so on. MicroShift, however, is designed for the edge, for those customers or users that want to build their own image of the operating system and want to manage those devices the way that they want. It could be with a Red Hat fleet manager or some other orchestrator.

A

As you can see, there is an rpm-ostree image. When you build RHEL for Edge, the rpm-ostree image will contain the list of RPMs that are part of the system, and that is immutable. If you want to upgrade the system, you will have to build a new image, and the upgrade will be done automatically. All that software is completely immutable; you cannot change it, let's say. And finally, this is just to show you that MicroShift is about the glue of the components.
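
Editor's sketch: the image-based upgrade described here, together with the rollback mentioned earlier in the talk, can be pictured with a toy model. This is not rpm-ostree's API or behavior in detail; the `Device` class, the image names, and the health-check callback are all invented for illustration. The only idea it shows is that the device switches to a new image as a whole and falls back to the previous one if the new one turns out to be unhealthy.

```python
from dataclasses import dataclass, field


@dataclass
class Device:
    """Toy model of a device that boots exactly one immutable image at a time."""

    booted: str
    previous: list = field(default_factory=list)

    def upgrade(self, new_image, health_check):
        """Switch to new_image as a whole; fall back if the health check fails."""
        old = self.booted
        self.booted = new_image
        if health_check(new_image):
            self.previous.append(old)  # keep the old image around as history
            return True
        self.booted = old  # rollback: the device is back on the known-good image
        return False


# Hypothetical image names and health checks.
device = Device(booted="edge-image-v1")
ok = device.upgrade("edge-image-v2", health_check=lambda image: True)
bad = device.upgrade("edge-image-v3", health_check=lambda image: False)
print(device.booted, ok, bad)
```

After the second, failing upgrade the device is still on `edge-image-v2`, which is the point of the rollback: a bad image never leaves a remote device in a half-upgraded state.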

B

Sorry. Yeah, you can see the pods running. The bottom ones are MicroShift workloads: we have service-ca, the router, CoreDNS. For now we have Flannel, but we are still in the process of deciding what we are going to use there. And also the KubeVirt hostpath provisioner, but that's still being... yeah.

B

So I'm running an access point, a server for some cameras that we have here and that we are going to connect in a second, then a regular service and an OpenShift route, which is going to be announced via mDNS for the cameras. So the cameras will be connecting to the access point running in MicroShift, and they will connect to the camera server, and then the application in there is going to be analyzing the video from them. Oh, where is my...?
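
Editor's sketch: the demo's manifests are not shown in the talk, so the following only illustrates what a route for the camera server could look like, built as a plain Python dictionary and printed as JSON. The route name, service name, port, and the `.local` host name are assumptions; a `.local` host is used because that is the naming convention mDNS resolves. `make_route` is a hypothetical helper.

```python
import json


def make_route(name, service, host, port):
    """Build an OpenShift Route manifest as a dictionary (illustrative helper)."""
    return {
        "apiVersion": "route.openshift.io/v1",
        "kind": "Route",
        "metadata": {"name": name},
        "spec": {
            "host": host,  # the name the cameras would look up
            "to": {"kind": "Service", "name": service},
            "port": {"targetPort": port},
        },
    }


# Assumed names and port; the talk does not state them.
route = make_route(
    name="camera-server",
    service="camera-server",
    host="camera-server.local",
    port=8080,
)
print(json.dumps(route, indent=2))
```

The regular service mentioned in the talk would be the `camera-server` target here; the route is what gives it a name the cameras can discover on the local network.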

B

So they will look for the MicroShift access point, and they will try to find the application through DNS and then register on the camera server. Then the camera server connects back and gets the video feed. On the server side we have a small application, very simple, not optimized or anything. We are using the face_recognition Python library, which is going to process the video feeds looking for faces, and try to look for Ricardo and me and put a name on them. And yeah.
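
Editor's sketch: the demo application's code is not shown, so this only illustrates the "put a name on a face" step. Libraries of this kind turn each face into a numeric encoding and compare it with known encodings by distance. To stay runnable without any dependency, the example does not import the library and uses three-number toy vectors instead of real encodings; the names, vectors, and the 0.6 tolerance are assumptions chosen for the example.

```python
import math


def distance(a, b):
    """Euclidean distance between two face encodings of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def label_face(encoding, known, tolerance=0.6):
    """Return the closest known name within tolerance, else "unknown"."""
    best_name, best_dist = "unknown", tolerance
    for name, reference in known.items():
        d = distance(encoding, reference)
        if d <= best_dist:
            best_name, best_dist = name, d
    return best_name


# Toy encodings standing in for the two speakers; real encodings are much longer.
known_faces = {
    "Ricardo": [0.1, 0.2, 0.3],
    "Miguel Angel": [0.9, 0.8, 0.7],
}

print(label_face([0.12, 0.19, 0.31], known_faces))
print(label_face([5.0, 5.0, 5.0], known_faces))
```

In a real pipeline the encodings would come from the faces detected in each video frame, and the returned name is what gets drawn next to the face.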

B

So if I look at the logs here, on the camera server, yeah, this camera is already connected to the server, and the server probably connected back, hopefully, to the camera. When I plug in this one, it also connects to the access point running in MicroShift and then to this service. And now we have a video feed here. MicroShift.

B

You can even embed your application containers into the image of the device, so it will not need to download the images of your application containers. Maybe you just manage the image of your device: you tell the device to update, it will do an atomic update, and your application is inside. You can even have offline devices. That is not quite there yet, but you could even do that. And yeah, that's it.