
From YouTube: 2018-06-05 Rook Community Meeting


A

A

So I think we've had some conversations recently about what is essential for the milestone to be completed, and I think we've made some good progress recently on some of those issues. I think it's probably about time now to clear off the to-dos on the project board that we're looking at now, because we need to converge and get the 0.8 release out sooner rather than later, so I want to start making...

C

D

D

There are two issues here. One is the multi-backend API change, which makes it hard to upgrade, in my opinion; upgrading is [unclear]. The second is that the OSDs right now use the device ConfigMap. So if you have 0.7 code in the system and you want to upgrade from 0.7 to 0.8, the API change is one of those things, and then the device...

D

How you map the devices from the existing OSDs to the ones configured in the device ConfigMap will be a significant challenge. The third thing is that the OSDs and mons now use Deployments, versus ReplicaSets in 0.7. So all these things together make me believe that we will probably have breaking changes in 0.8. So how are we going to deal with upgrade, or should we support upgrade at all?

B

I wonder if we can find a way to support 0.7 running in some different mode. Like, those that were using DaemonSets, maybe they could continue using a DaemonSet in some way. But I know there's been a lot of change here, so it's going to be a challenge to support one way or another. Unfortunately, I haven't had a chance to really think about that upgrade path yet.

A

So, just to be clear here: it sounds like what you're saying is that there are some upgrade obstacles that are beyond the scope of just what we need to be concerned with specifically about the OSDs running in their own pods? There are other things beyond that scope? Yeah, so with the upgrade: in the work that was done to support multiple storage providers and types, we updated the upgrade guide, and going through that results in a functional...

A

D

Certain things. Well, one is that the OSDs still continue to use the v1alpha1 API; for anyone upgrading from there, it's going to be some challenge. The second thing is the switch from ReplicaSets and DaemonSets; I haven't figured out how that upgrade would work. And then how the devices being used by the existing OSDs get put into ConfigMaps, that's going to be the third. So...

A

D

A

Let's talk about the first one real quick. I mean, about the types, the rook.io/v1alpha1 ones: we have automatic conversion and migration code in the operator that converts all those types and updates them to the new ones. So are you saying that there's still an issue outstanding?

D

A

Exactly. So for a user scenario here, someone has a 0.7 cluster deployed and follows the steps in the upgrade guide to delete the operator and let it recreate itself. When the operator comes up, it will automatically convert all the v1alpha1 types to v1alpha2 or the ceph.rook.io types, recreating the CRDs and doing all that migration automatically, and I've tested that.
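The conversion step described here can be pictured with a minimal sketch. This is not the operator's actual migration code: the `LEGACY_TO_NEW` mapping, the `migrate_resource` helper, and the `example.invalid/migrated-from` annotation are all hypothetical names chosen for illustration, and the sketch only rewrites the `apiVersion` field of plain dictionaries.

```python
import copy

# Hypothetical mapping from a legacy API version to its replacement.
# The real operator may map types differently; this is illustrative only.
LEGACY_TO_NEW = {
    "rook.io/v1alpha1": "rook.io/v1alpha2",
}


def migrate_resource(resource, mapping=LEGACY_TO_NEW):
    """Return a copy of `resource` with a legacy apiVersion rewritten."""
    migrated = copy.deepcopy(resource)
    old_version = resource.get("apiVersion")
    if old_version in mapping:
        migrated["apiVersion"] = mapping[old_version]
        # Record the original version so the migration can be audited later.
        metadata = migrated.setdefault("metadata", {})
        annotations = metadata.setdefault("annotations", {})
        annotations["example.invalid/migrated-from"] = old_version
    return migrated


def migrate_all(resources, mapping=LEGACY_TO_NEW):
    """Migrate a list of resources, leaving already-current ones unchanged."""
    return [migrate_resource(r, mapping) for r in resources]
```

Resources that are already on a current version pass through unchanged, which is what lets such a step run safely every time the operator starts.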

B

C

G

D

When I rebased to adopt the master code, the YAML I was using was not quite working, so I just updated my YAML with the Ceph API instead of the v1alpha1 API, and then it started working. So my impression was that, with the master code, just using the v1alpha1 API wasn't possible. I should try this again to confirm.

D

So if that's okay, if the API is not a big issue, we can move on to the next one: the device ConfigMap. That, in my opinion, could be some challenge: figuring out what devices are used by the existing OSDs. The question, actually, is should we migrate the existing pods, or should we just let them run like they are right now and then, for new clusters, use the new scheme?

A

A

E

My opinion is that it actually would be okay to say that OSDs that all run in a single pod remain that way, and I don't see why that's a problem. I think over time it converges, but, I mean, as long as people upgrade to 0.8 and things continue to work, it's fine.

E

D

A

The same could be said about what was just being discussed with the mons, the mons changing from ReplicaSets to Deployments. The same could be said for that too. The real goal here is maintaining a functional cluster that can serve data requests, so the important part isn't that everything is running on the latest...

A

...formats of the components to keep the cluster running. As long as it's a functional cluster, that's what I'm really concerned about. But that does have the severe caveat that I do not necessarily want to have a bunch of code paths in the code base where we're having to deal with all these legacy types and components and carry that burden forever.

A

B

E

C

C

The disks for the OSDs are already prepared, and I haven't seen any change to the partition layout that is needed for the OSDs. The only thing is that we right now have this issue that the partitions are not created anymore, the DB and WAL partitions, as far as I understand. So at least allow the person to in some way tell the operator to start a new deployment for the OSDs, or the job to prepare those OSDs, more or less.

C

The operator then sees that the old OSDs already exist and just adds them to the nodes. This could also be done manually, because, well, we don't have fully automated upgrades yet. So it's totally okay if the user has to manually do some stuff. I think we should only go for keeping old code in the codebase if there is no other way to do it.

C

A

D

A

E

E

B

A

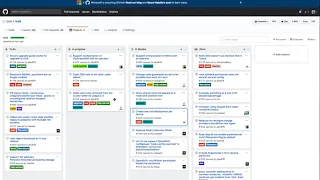

We have an agenda item for that coming up, so we'll get into that discussion. Yeah, that's definitely an issue we want to talk about. But let's try to wrap up the 0.8 discussion: are there other outstanding tickets on this board, included in the 0.8 milestone, that are at risk or that we are concerned about?

B

E

A

C

C

A

C

A

C

H

A

Okay, that sounds good, Tony. So let's move on to the next agenda item today, and Alex, that is exactly what I believe you wanted to talk about. In general, we've had some reliability concerns with our Jenkins continuous integration infrastructure. Some of those have been addressed or made somewhat more reliable, but the biggest new blocking issue that we have right now is the GCE instances we use to run the end-to-end integration tests.

A

So we need to figure out why the GCE nodes cannot come online and start running tests. Ilya was looking at this yesterday and I was helping him, but I don't know exactly what the issue is or what an estimate for a resolution is. I think this is a high priority and we're going to continue working on it, but we don't necessarily have a resolution.

A

E

A

C

B

A

C

C

E

C

A

Okay, I think we had a discussion about upgrade and migration in the context of the OSD pod changes. Just a little bit more on that: Travis, you updated the pull request template to have a checklist item about making sure that each pull request has thought through upgrade and migration impacts, correct? Yes.

A

Cool. So I like that, in general, as a community we are being more focused and mindful of upgrade impacts to our user base, because with each release, and with the growth of our community and more users on the current code base, we have a fair number of people depending on their clusters continuing to run across releases. So as a community, I think that we've done...

A

Probably not yet, Tony, because the support we have for upgrades does have manual steps. There's no fully automated upgrade that's been done in the operator, so an integration test for it would also have to incorporate some of those manual steps, and it might be fairly difficult at this point. But I like that idea, though, absolutely.

A

C

B

A

Yeah, that's a good point, Alex, and it will also be impacted by adding more storage providers as well. Minio integration tests or CockroachDB integration tests will only serve to further increase that build time, so parallelizing some of those efforts, where they can be done that way, is definitely a smart idea.
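As a rough illustration of the parallelizing idea above, the sketch below runs independent test suites concurrently with Python's standard `concurrent.futures` module. It is not how the project's Jenkins pipeline is set up; the `run_suites_in_parallel` helper and the suite names in the usage note are hypothetical, and each suite is assumed to be a callable that returns a truthy value on success.

```python
from concurrent.futures import ThreadPoolExecutor


def run_suites_in_parallel(suites, max_workers=4):
    """Run independent suites concurrently; return {suite_name: passed}."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Submit every suite up front so they overlap in time.
        futures = {name: pool.submit(run) for name, run in suites.items()}
        results = {}
        for name, future in futures.items():
            try:
                results[name] = bool(future.result())
            except Exception:
                # A suite that raises is recorded as failed, not fatal.
                results[name] = False
        return results
```

A caller might pass something like `{"ceph": run_ceph_tests, "minio": run_minio_tests}`, where both functions are placeholders. With this shape, total wall-clock time tends toward the slowest suite instead of the sum of all suites, provided the suites do not share state.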

A

H

E

B

Yeah, there's definitely been interest; I think a few people have liked that ticket or whatever. Right now, in the documentation we have for creating an object store and consuming it, we say: go to the toolbox and run this Ceph command to create a user. So just from a basic walkthrough perspective, we know we need a user, and creating a user with the CRD would make that flow...

B

...a lot better. I think it's not clear what the design looks like for the CRD. Like, if it generates a secret, how do you get that secret back from the user we just created, and how do you consume it? So I think there are some design questions, but right now the documentation is pretty manual as far as creating an object store user, right, something like that.

H

H

So we'd have to tie that user in to every single type of storage. Once the bucket CRD goes in, we'd tie users to the buckets, or just to the file system in Ceph, and that becomes, I mean, a burden really. I don't know. Was that the intention, or was it just to automate the creation of users, and then they would go in and manually deal with the permissions issues?

H

B

H

B

F

H

B

F

B

H

F

A

Yeah, and the way I've looked at this so far is in a fairly simple context, Tony. In general, I see value in automating steps where people have to go and copy and paste things. If it's just that, I think that's still a win, because right now you have to go to the toolbox, then you have to run some commands, and then copy this text and paste it somewhere else, and find the right thing to put it in, and all that. Simple automation around that...

A

A

H

As far as these abstractions go, I'm working on a doc for bucket abstractions that we can use that are tied to Ceph and Minio object storage. Right now it's in a super early phase, and we're unable to tie the actual bucket to the application lifecycle, right, the same way we do with volumes. So what people have to do is manually create a bucket and then go off and create the thing, right, and then there's this kind of janky naming scheme.

H

So it will create the credentials and, like, a binding with a certain naming scheme based on what you name the bucket, and that's how you tie it to your application and propagate the credentials in. But moving forward, that's not really something we should keep doing. So some of the thought around that was going to SIG Storage to see if we can broaden the abstractions from volume, so it's not just volume; it could be bucket or something else, and actually tie it to a pod lifecycle.

H

F

H

C

C

You know Vault from HashiCorp? Yeah, it's the secret storage and stuff like that. That leads to the second part there, with the users. Have you thought about having a generic user CRD which, kind of like role-based access, like RBAC, uses bindings to bind to certain buckets, to certain stores, or even other stuff?

H

I mean, I can look into that, because right now it seems really fragile. Well, not fragile, but limiting, this design, since everything we've done relies on a naming scheme. But if we were able to, I guess, deal with this bucket "mounting", in quotes, via just the secrets that are generated and propagating them into the pods, that would be really nice.

H

H

E

C

H

One is not having to modify a pod spec to accommodate this, and the other is, if we're able to modify the pod spec, what the world would look like. So yeah, users really complicate this. That's why I didn't know what the priority was for having an object store user or things like that, because it would change the design quite a lot: then you have multiple sets of credentials being created, and you've got new configurations and permissions on the buckets, things like that.

F

A

F

F

H

F

F

E

F

H

H

H

A

E

H

H

E

A

B

B

A

C

C

[unclear] I don't really see a downside with it: when the user creates a new issue, having a selection between, for instance, a bug report, a feature request, or something regular, and then getting a different template.

B

C

C

C

A

G

A

G

G

...like seeing what happens when nodes fail, or making sure that we test that Rook is stable on an upgrade from one Kubernetes version to another. And I guess I'm also trying to see: does everyone use Minikube for development? Or do people spin up small Kubernetes clusters?

A

Yeah, that's a fantastic question, Blaine. So Sebastian Han had done some work to create a script that brings up, I believe, a multi-node cluster using, I think, libvirt on Linux machines. So there's support for that. I personally have not used it since I have a Mac, but I think it was working at least for Sebastian's workflows. Has anybody else...

B

In the documentation there's a link to a multi-node development environment. Yeah, it's definitely a great question, because during development I'd say, yeah, Minikube is the common thing, but to really test integration and upgrade and multi-node, you need more than Minikube.

F

G

F

G

A

Yeah, Blaine, I personally haven't used OpenStack really at all. One solution that Travis and I had used in the past, when we were still at Quantum, was using the CoreOS Vagrant repo to bring up multiple virtual machines running CoreOS instances, and then using kubeadm to deploy a multi-node Kubernetes cluster across those. That had some pretty good success for us in terms of being able to run multi-node clusters that have multiple disks, and I do not recall running into any...

A

A

I'd be interested to see what failures you have already run into, because in general having a multi-node solution for developers and their workflow is pretty important to me. So if you're having problems with that, and that's blocking things that you want to make progress on, then I would like to be able to solve those, or at least understand what those issues are. Yeah.

G

Okay, I might start trying to focus again on bringing up a virtualized cluster. I mean, SUSE has the CaaSP product, which is an operating system environment that already has Kubernetes up and running. I don't think there's anything particularly different about that that should be making the environment more difficult.

G

Yeah, I guess it's good to know that having a multi-node development test environment is something that is deemed important. I've been a little cautious to jump totally onto Minikube, just because it seems like somewhat of a degraded environment compared to what will exist in the real world. Yeah.

A

That's an absolutely valid point, Blaine. I've seen Minikube as the quickest possible way to have a Kubernetes cluster that you can test something on, at the sacrifice of more realistic, real-world scenarios. So losing that ability to test in more realistic scenarios is definitely the drawback.

B

C

If we look into the community to see what would be a feasible solution to, yeah, kind of have an environment where I push my pull request, Rook gets built, and the only thing I have to do is connect to some Kubernetes cluster, which maybe even gets started up on request or something, because in my case...

C

C

A

All right, yeah. Blaine, feel free to share some of the issues you're running into on a multi-node test environment. And in general, anyone who has ideas about good ways to do testing in the development workflow on multiple nodes, feel free to share and add those. There's a pretty recent discussion going on in ticket 1544, so I'll add that to the chat as well, because...

C

It depends on what you change and what you want to test in the end. If I want to do stuff with the mon code, most of the time I start a simple Docker-in-Docker Kubernetes cluster, which at least allows multiple nodes; I can just start up more nodes with it. But for smaller changes, like simple stuff, [unclear], it is enough to start a Minikube. We really have to look at the special cases, kind of.

C

A

I was wondering if that works on the Mac at all, because Docker on Mac uses their HyperKit, which has some hypervisor functionality but, I think, doesn't really launch a full-fledged virtual machine. So I don't know whether some kernel operations, like what krbd does, would even be supported in an environment like that.

C

A

Possibly, probably sometime in the near future, but not this morning, because I've got other things on the schedule this morning. Yeah, this is something we can continue making progress on. This has been brought up so many times by so many people, and not just in Rook. And the Minikube people and project in general have stated that, I think, they're not going to support multi-node. So there's kind...