From YouTube: 2018-02-27 Rook Community Meeting


B

C

All right, the recording has started, so let's get going. This is the February 27th, 2018 community meeting, so let's jump right into the agenda. One of the first things I wanted to talk about today is any issues we have open for 0.7, and whether we're going to need to do any releases to address them. I know of one fix so far that has been backported to the 0.7 branch, for being able to publish the Helm charts to the new repo.

C

D

I've not been able to test promote, because that only runs when we actually run the promote build, so I'm hoping it works. It was the wrong repo, or rather the wrong bucket. The one way to find out is to run it, so go ahead. I'd say once we get everything else in, let's build and see if we can deploy it and whether it works.

C

I have two questions about that. First, was the bucket name change associated with the transfer of ownership to the CNCF? Yes? Okay, got it. Second, is it possible to rerun the 0.7 build? Is it idempotent, in the sense that you can do a promotion and it will overwrite the existing 0.7, or do we need a 0.7.1 for this?

D

A

D

D

C

Okay. I tried to catch up on yesterday's conversation about the new Ceph version. Are we picking up 12.2.3 because the new packages were released and the build automatically picks them up, or was there a specific commit to our repo to point it at 12.2.3?

D

One of the problems with how we're building right now, until they make changes upstream, is that we pick up whatever is in their apt repositories. Right now we're using Luminous, and they've just upgraded Luminous to 12.2.3. So every time we build a Docker image, we're going to pick up the latest. There is no way to control which minor version we pick up.
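
For context on the point above: apt can install an exact version with the `name=version` form, but only while the repository still serves that version, which is the part the speakers describe as outside the project's control. The following is a minimal sketch under that assumption; the package names and the version string are hypothetical and not taken from the meeting.

```python
def pinned_install_command(packages, version):
    """Build an apt-get command that requests one exact version for every package."""
    if not packages:
        raise ValueError("at least one package is required")
    # apt accepts `name=version` to request an exact package version.
    pinned = [f"{name}={version}" for name in packages]
    return ["apt-get", "install", "-y", "--no-install-recommends", *pinned]


# Hypothetical package names and version string, for illustration only.
command = pinned_install_command(["ceph-mon", "ceph-osd"], "12.2.2-1xenial")
print(" ".join(command))
```

If the pinned version has already been dropped from the repository, a command like this would fail rather than silently pick up a newer build, which at least makes the drift visible.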

A

D

B

D

C

A

Well, by stopping the agents. An agent will reuse Docker images from previous builds to make builds more efficient. Since we wanted to pick up Ceph 12.2.3, I thought, let's just recycle the build agents. So I recycled them, the Docker cache was gone, and the new build picked that up. Does that answer your question?

C

D

D

D

For 0.8 I think this will all be fixed, and we'll talk about some of the background. If we start using different images, we'll just use the ceph-container one, and those are going to be labeled with specific tags that include the minor versions. I honestly don't know how they're going to solve the problem where there is a security update to the base OS image and the apt repository has moved on. I don't know how they're going to rebuild it with an old version.

D

C

D

B

C

B

B

D

Well, the caches are super safe. The only reason this issue happens is that we don't have the packages for Ceph pinned. If there is a change in upstream Ubuntu, we will pick up the new Ubuntu release when we build, even with the caching, because we build all the base images with pull. That means if there is a change somewhere upstream, we'll pick up the whole chain. So it seems very safe to me, except for this one issue we identified.

B

C

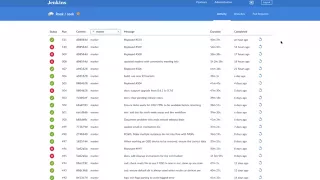

All right, that sounds good for 0.7 to me. I also wanted to discuss the much higher rate of continuous integration pipeline failures we're seeing now. This may or may not be related to the Jenkins agents being reset yesterday, but what we previously believed to be random failures, we're now seeing at a much higher rate. Travis, did you happen to have any insight on that from last night? I did not follow up myself.

A

I was thinking more about this, and we have seen a pattern where agents that are reused, meaning the same EC2 instances, have a higher rate of failure. We had several master builds fail in a row, one hour after the other, which confirmed that. This morning I started the master build again. All the agents had been stopped because no builds had been running, and the build succeeded. So there's something about warm agents that tends to cause these issues. Builds must be leaving things behind or running over each other.

A

C

A couple of questions on that, then. I would have a preference, if it's possible, for figuring out what the stale state is. If it's something that should be the responsibility of the test framework itself to clean up, and it's not cleaning up appropriately, then I would like to fix it there.
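
One way to picture "the test framework cleans up after itself" is a helper that records everything a test creates and deletes it on the way out, even when the test fails. This is only an illustrative sketch, not how the Rook test framework is actually written; `tracked_resources` and the `delete` callback are hypothetical names.

```python
from contextlib import contextmanager


@contextmanager
def tracked_resources(delete):
    """Yield a register() function; delete everything registered on exit."""
    created = []
    try:
        yield created.append
    finally:
        # Delete in reverse creation order, even if the test body raised.
        errors = []
        for name in reversed(created):
            try:
                delete(name)
            except Exception as exc:  # keep going so nothing is left behind
                errors.append((name, exc))
        if errors:
            raise RuntimeError(f"cleanup failed for: {[n for n, _ in errors]}")


# Usage with a stand-in for the real delete call (hypothetical).
deleted = []
with tracked_resources(deleted.append) as register:
    register("operator")
    register("cluster")
print(deleted)
```

Because the cleanup runs in a `finally` block, a failing test still removes what it created, so the next build on a warm agent would not inherit it.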

C

Definitely. I don't know if we know exactly what that is, but it would help if we could figure it out in a reasonable timeframe. The other question is: do you know what the current Jenkins configuration is, in terms of how long an agent instance will sit idle before it is terminated completely, such that the next build that comes up gets a fresh, completely new EC2 instance?

A

For the agents, I don't know if there are types of agent or whatever, but there's the main agent that runs the build, and then there are the other individual agents that run each of the Kubernetes 1.6, 1.7, and 1.8 tests. Those agents seem like they start up and then go away.

A

D

A

And the most common failure when this happens is that it tries to delete the operator or the cluster, or start one, and something exists that shouldn't, or doesn't exist that should, and so it fails. The only way that could happen, it seems, is state from a previous build. I'll look again and see if there's some logging to add to help track it down.

C

D

A

C

C

For that, we'd want to very quickly reach a consensus about how best to approach this, and then we're going to have to do some refactoring. That obviously has to be front-loaded across the repository, to enable this and have other backends besides just Ceph, with one potential backend to start with.

D

I'd also say that Minio has a Helm chart today, and it's fairly limited. It doesn't even do erasure coding, and it doesn't set up Minio to do some of the things that it does well. That's why an operator or controller for Minio, I think, makes a lot of sense. So that seems like an interesting backend to support. As the first other backend to add besides Ceph, that makes it interesting.

A

Part of that design, when I started it, what I felt I was missing, and am now realizing, is the scenario. What would be the motivation in this case for Minio users to come to Rook to then go run Minio? So I guess: here's the what, here's the why, and the motivation.

B

C

Alexander and Travis, one of the points that Bassam just made, I thought, was a fairly persuasive argument for the why of Minio, or the benefit that it brings. Their existing Helm chart, as Bassam said, is very limited, and a lot of the features that Minio is capable of can't even be enabled with it, like erasure coding.

D

Right. I mean, this is a common pattern, and actually a core premise of Rook. Most storage systems and stateful workloads go as far as using StatefulSets but don't go any further today. As a result, you don't get to do things like scaling them, dealing with any consistency requirements while they're scaling, quorums, setting up failure domains, migrating or replicating data across failure domains, and being sensitive to how often that happens.

D

B

C

I realize I was on mute for a while there, sorry. So, the next item in the list. Kubernetes has a couple of different options for extending the platform and defining your own custom resources. Currently, Rook is using custom resource definitions, which are somewhat limited and rigid in what they enable you to do. API aggregation is a much more flexible way of defining and extending the Kubernetes API, and gives you a lot of features, like validation and multi-versioning.

C

Custom logic, things like merging for patches, support for upgrades, stuff like that. So front-loading this work, if we're going to change from using CRDs to API aggregation, would be important as well, because that's part of defining and solidifying our API surface area.

D

I'd argue that our story right now is completely busted. We have to ask the user to literally transform an old CRD if we upgrade it, and we have to write tools that live outside of it. And it looks like, as of 1.7, well, CRDs were introduced in 1.7 and API aggregation was introduced in 1.7, and it looks like older...
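
To make "transform an old CRD" concrete, here is a minimal sketch of the kind of out-of-band conversion tool being described: it takes a custom resource stored under an old version and rewrites it for a new one. The API group `example.io`, the `Pool` kind, and the `replicas` to `replication.size` field move are all hypothetical and chosen only for illustration; they are not Rook's actual schema.

```python
import copy


def convert_v1alpha1_to_v1beta1(resource):
    """Return a converted copy of a hypothetical custom resource."""
    if resource.get("apiVersion") != "example.io/v1alpha1":
        raise ValueError(f"unexpected apiVersion: {resource.get('apiVersion')}")
    converted = copy.deepcopy(resource)
    converted["apiVersion"] = "example.io/v1beta1"
    spec = converted.setdefault("spec", {})
    # Hypothetical schema change: a flat `replicas` count moves under `replication.size`.
    if "replicas" in spec:
        spec["replication"] = {"size": spec.pop("replicas")}
    return converted


old = {
    "apiVersion": "example.io/v1alpha1",
    "kind": "Pool",
    "metadata": {"name": "demo"},
    "spec": {"replicas": 3},
}
new = convert_v1alpha1_to_v1beta1(old)
print(new["apiVersion"], new["spec"])
```

With plain CRDs as discussed here, a step like this has to be run by the user; the appeal of an aggregated API server is that such conversion logic could live on the server side instead.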

D

B

A

D

C

C

So the next item I had on the roadmap here is our existing CI pipelines and build, release, and promotion pipelines. All of that is hosted in a Jenkins instance that we need to migrate over to something hosted and owned by the CNCF. I don't know what all that entails, but I don't think it's entirely trivial, and it's probably going to take a fair amount of work. Is there...

C

Okay. There's still work, I believe, and I don't know how well it's captured, so perhaps somebody can comment on this, for running with least privilege and also security issues in general. I think a lot of these were associated with what we saw on OpenShift. I don't know the full scope of the work that's left there, but I know it's not completed.

D

I think there was a bunch of cleanup, including unit test cleanup, to get the Rook API and CLI out of the tree. That enables the whole security design around namespaces, and removing or reducing the number of service accounts, and all of that. There's already work that started there, and I think it got stuck because there are just so many unit tests that used the Rook API. So it's going to need someone to go...

D

B

A

D

D

C

D

A

D

C

The next one I have here is about running on arbitrary persistent volumes. That's something we've wanted to do for a couple of milestones, but it has been kicked down the road a couple of times. My understanding of this feature is that it would enable Rook in general to be backed by Google persistent disks if you're running in Google, or EBS volumes if you're running in Amazon. Anything that can be surfaced as a persistent volume could be used as the, for lack of a better term...

C

D

Basically, there is a notion of a lower storage substrate that we build storage systems on top of. Usually it's local disk, but it doesn't have to be. I think that ties together running on arbitrary PVs and whatever we end up doing as the storage cluster scope, or whatever ends up using that. So I think this is a very critical feature, honestly. Right now we have this whole hostPath thing.

D

A

D

C

All right. I think we've still got some issues with shutting down nodes, draining nodes, restarting nodes, all that sort of thing. There are still issues floating around there that have not been well addressed. We still see issues about that pop up on Slack with non-zero frequency, so effort on that would go a long way toward helping the user base.

A

C

Then we have some Ceph-specific features and improvements that we'd like to accomplish. One of them is finishing off the work that was started in 0.7. We have support for adding and removing nodes in a storage cluster right now, and we've actually done a lot of work toward supporting individual disks, but that's not officially supported by the operator at the cluster level yet. There are a number of prerequisites for that to happen. One of them would be the architecture change of having a single OSD per pod.

C

There's also the Ceph manager balancer module. In terms of keeping PGs balanced across the cluster, that seems like a cheap way to enable it: just have the operator get that module loaded by the Ceph manager instances, and then we start getting that behavior fairly cheaply.
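
As a rough illustration of what "get that module loaded" might amount to, the sketch below builds the sequence of `ceph` CLI invocations that, to my understanding of the Luminous-era tooling, enable the balancer module and switch it on. The helper name `balancer_commands` is hypothetical, how the operator would actually run these is not covered in the meeting, and the commands should be checked against the Ceph documentation for the release in use.

```python
def balancer_commands(mode="crush-compat"):
    """Return the ceph CLI invocations that turn on the mgr balancer module."""
    if mode not in ("crush-compat", "upmap"):
        raise ValueError(f"unsupported balancer mode: {mode}")
    return [
        ["ceph", "mgr", "module", "enable", "balancer"],  # load the module in ceph-mgr
        ["ceph", "balancer", "mode", mode],               # choose the balancing strategy
        ["ceph", "balancer", "on"],                       # start automatic balancing
    ]


for argv in balancer_commands():
    print(" ".join(argv))
```

The sketch only assembles argument lists; it does not execute anything, so it is safe to run without a cluster.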

A

C

A

D

One thing, Travis, that I'm curious about: I believe there is interest on the Red Hat team in using this in the Mimic time frame, which I believe is May, or at least May-ish. So I'm hoping we can get more people involved in getting the Ceph backend up to production levels in Mimic, if it's going to be deployed with...

D

B

C

It's also important to note that the work around multiple storage backends and refactoring the codebase is going to have parts and pieces of the codebase moving around. So we'll need to do this up front and quickly, to make sure we're not stepping on toes for the specific features we want to do for Ceph.

C

D

D

D

D

C

D

C

C

C

C

Okay, so I was thinking that removing the Rook API and CLI needs to be done soon. That could be done in 0.8, but all the security work that it enables could probably get bumped to 0.9 instead. So we'll start with this and then do the later security work in the next milestone. I don't...

A

B

C

D

C

D

C

D

D

A

A

D

A

C

A

C

A

C

Yeah, and we may need to be okay with that to get to the right architecture. Running the operator with high availability is still an issue; it's a single point of failure. Right now, if the operator dies for some reason, besides the standard Kubernetes support, there is no master/slave setup, I believe.

D

C

We have durability of state as well. That was in a previous milestone. That's important, I know, for supporting local storage and local volumes, and for disaster recovery, making sure the configuration, metadata, and state like that can be regenerated. Didn't somebody hit that recently, Travis, where they accidentally deleted the CRD, the operator, all that sort of stuff, and they had to rebuild their cluster from what existed on disk? Did that go well for them?

A

They didn't end up doing it, actually. They said it was just a test cluster, but they wanted to know if it was possible in case it were a production cluster. I think he opened a ticket so we could document that, but I haven't gotten to actually trying it yet. It should be possible, but I think people would feel better if it was documented and tested.

C

So we'd already discussed moving to API aggregation, which would give us the ability to do some of these features, like richer validation of CRDs, status, progress, all that. We'll get the ability to do those with API aggregation, but there's still work associated with enabling and supporting those types of features, so I'm thinking that just making the switch to API aggregation...

C

B

C

We already talked about snapshots; we moved that from 0.8 to 0.9. Then the Ceph features I have here are to improve data placement and pool configuration. There's been some discussion, which I can't recall right now, from the Red Hat team, I believe, that this fits better in the Ceph platform itself as opposed to at the Rook level. So there may be more discussion there, especially about what's going to happen in the Mimic time frame that may address some of this more appropriately at the Ceph level.

A

C

And then also for CephFS. When using block devices with RBD, we have dynamic volume provisioning through PVCs, persistent volume claims, but we do not have the same experience for CephFS. So enabling that might be something to address. I haven't heard...
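
For readers less familiar with the PVC flow mentioned here, the sketch below builds the kind of PersistentVolumeClaim manifest a user submits to get a dynamically provisioned block volume. The manifest fields are standard Kubernetes; the claim name and the storage class name `example-block` are hypothetical placeholders, not names confirmed in the meeting.

```python
import json


def pvc_manifest(name, storage_class, size="1Gi"):
    """Build a PersistentVolumeClaim that requests dynamic provisioning."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            # The storage class selects which provisioner creates the volume.
            "storageClassName": storage_class,
            "accessModes": ["ReadWriteOnce"],
            "resources": {"requests": {"storage": size}},
        },
    }


# Hypothetical claim and storage class names, for illustration only.
print(json.dumps(pvc_manifest("demo-claim", "example-block"), indent=2))
```

The gap described in the discussion is that no equivalent claim-driven flow existed yet for shared file systems backed by CephFS.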

D

C

C

So that's all I have for 0.9. For 1.0, to get there, a lot of the types that we have defined as custom resources will need to make it to a stable or v1 version. That's obviously a hugely nebulous goal that has a whole lot of smaller tasks to get to that point.

A

C

D

D

A

C

So the next step here is to update ROADMAP.md in the Rook repo with some of this. That'll be part of a pull request, which gives the opportunity for more commentary to solidify it before it gets committed to the repo. I'll take a stab, when I open the pull request, at some of these items about which types could go to beta, and then we can discuss it in the pull request. Does that sound reasonable?