►

From YouTube: Bay Area Rust Meetup January 2016

Description

Bay Area Rust meetup for January 2016. Topics TBD.

Help us caption & translate this video!

http://amara.org/v/2Fhs/

A

Hello,

everybody

welcome

again.

Oh

sorry,

my

computers,

hi

everybody

welcome

again

to

another

rust

meet

up.

This

is

wonderful

to

see

you

all.

It's

been

a

really

long

time

coming

and

I'm

glad

that

we've

had

so

many

people

show

up

here.

So

tonight's

event

is

all

about

some

of

the

really

need

concurrency

and

parallelism

libraries

that

have

started

to

come

out

of

community,

but

you

know

we're

back

yay,

sorry

that

we

had

such

a

long

break.

I've

already

got

things

lined

up

for

you

know

the

next

couple

months.

A

A

Nico

will

be

talking

about

his

library

round

for

doing

very

simple

threading

Aaron

will

be

talking

about

cross

beam

for

writing,

concurrent

data

structures

and

then

Q

on

will

close

out

the

evening

of

with

simple

parallel

and

then

also

just

want

to

mention

that

I've

already

announced

the

next

rust

meet

up

in

February.

You

should

sign

up,

please

sign

up

for

the

wait

list.

We

had

people

50

people

signed

up

for

the

meet

up

before

I

even

announced

it.

A

B

Hello,

everybody:

you

can

see

I'm

calling

in

from

Boston

it's

a

little

bit

chillier

here

than

I

I

think

it

is

probably

over

there,

but

so

right,

I'm

going

to

talk

to

you

about

rayon,

so

I

guess

we

might

as

well

bring

up

the

slides.

Actually,

hopefully

you

can

see

these

look

good,

okay,

loudly

if

something's

wrong.

It's.

B

Right

so

right

so

I'm

going

to

talk

about

rayon,

which

is

a

library

for

doing

data

parallelism

and

basically

what

data

parallelism

means,

at

least

to

me,

is

you

have

a

lot

of

data

and

you'd

like

to

process

it

in

parallel

all

right-

and

this

is

a

little

bit

disjoint

from

like

task

parallelism,

which

is

kind

of

I,

have

a

lot

of

different

things.

I

want

to

do,

and

I

want

to

do

them

in

parallel,

but

they're

not

operating

something

I

have

a

big

array.

I

want

to

subdivide

or

something

like

that

all

right.

B

So

the

goal

of

rayon

really

is

to

be

able

to

take

sequential

code

that

you

wrote

and

make

it

run

in

parallel,

very

easily

a

sort

of

minimal

effort

and

minimal

risk

that

it's

going

to

do

the

wrong

thing

all

right.

So

here's

an

example

of

a

piece

of

sequential

code

that

iterates

over

a

list,

a

slice

or

a

vector

or

whatever

of

stores

and

for

each

store.

It

calls

compute

price,

which

is

going

to

do

some

computation

and

then

I'm

going.

To

sum

up

the

results

writing

this

is

the

quench

alliteration.

B

So

it

seems

like

it's

pretty

clear

what

it's

going

to

do,

but

if

I

happen

to

know

that,

let's

say

all

these

computations

for

computing,

the

price

can

be

done

in

parallel

independently

with

one

another

I'd

like

it.

If

all

it

took

to

to

make

this

run

in

parallels

to

kind

of

change,

this

dot

itter

to

something

like

dot

pirater

any.

A

B

B

All

right

so

I'd

like

to

be

able

to

just

write,

pirater

and

now

I

would

have

the

same

semantics,

but

it

would

run

in

parallel

right.

So

we

do

each

store.

Well,

let's

say

it

would

potentially

run

in

parallel.

Would

divide

and

the

reason

I

say

potentially

is

that

it'll

depend

a

lot

on

the

computer

you're

using

right.

B

If

you

have

some

function,

that's

doing

sequential

work,

I'd

like

to

be

able

to

convert

it

to

do

parallel

work

and

to

do

the

work

in

parallel,

and

none

of

the

caller's

of

that

function

ever

have

to

know

a

signature

of

the

function,

doesn't

change

it's

just

something

that

happens

inside

right.

It

just

gets

done

faster

and

finally,

it's

really

important

to

guarantee

data

race,

freedom

and

what

I

mean

by

this

is

so

I

said

we

want

to

make

pearl,

isn't

easy

and

I

showed

you

an

example

where

it

was

very

ergonomic.

B

You

didn't

have

to

type

a

lot.

That's

not

the

only

factor

in

making

things

easy

right.

You

also

want

to

know

that

when

you

do

this,

your

program

is

not

going

to

suddenly

do

the

wrong

thing

or

undefined

behavior

or

compute

crazy

results.

So

I'd

like

it

that

if

you

say

that

something

is

paralyzed,

abaut

and

you're

wrong,

you

get

a

compilation

error.

That

would

be

the

goal.

B

So,

basically,

if

you

put

all

that

together,

what

it

means

is,

you

should

be

able

to

sprinkle

on

a

willy

nilly,

parallel

hints

and

suggestions

without

a

lot

of

fear

that

something

is

going

to

go

in

this

and

your

program

is

going

to

start

misbehaving.

So

what

ray

on

basically

where

its

current

status

is

I

would

say

it's

still

very

experimental,

I,

don't

think

people

should

build

production

systems

on

it.

However,

it's

pretty

promising

and

I

think

it

delivers

on

these

goals.

B

No

pretty

well,

basically,

and

there's

there's

some

caveats

and

things,

but

basically

it's

able

to

do

all

these

things

that

I'm

talking

about

as

we'll

see

in

this

this

talk,

so

I

showed

you

this

parallel

iterate

PID

grillo

iterator

api,

which

is

something

that's

in

the

parallel

or

in

the

rayon

library.

But

it's

not

the

foundation

around.

It's

actually

just

a

kind

of

layer

built

on

top

of

a

more

primitive

api,

which

is

what

I'm

going

to

spend

most

of

my

time

talking

about

and

that

api

is

called

join.

B

So

the

goal

is,

you

should

build

adjoin.

This

is

kind

of

the

thing

that

you

sprinkle

around

right.

Wherever

parallelism

is

possible

and

then

let

the

library

in

runtime

essentially

decide

when

it's

profitable

to

use

it

so

join,

is

really

well

suited

to

divide

and

conquer

kind

of

algorithms,

you're,

probably

familiar

with

this

term.

B

But

basically

the

idea

is,

you

have

some

big

problem

you

have

to

solve

and

it's

kind

of

intimidating

and

you're

not

sure

how

to

solve

it,

but

you

can

break

it

up

into

two

smaller

problems

and

then

you

can

recursively

try

to

solve

those,

and

if

you

keep

doing

this

eventually,

you

get

down

to

some

really

tiny

problem.

That's

really

easy

right

and

the

idea

is

that

when

you're

doing

this

kind

of

divide

and

conquer

step,

each

time

that

you

you

divide

up

into

two

problems,

you

can

call

join

the

process.

B

Those

two

problems

in

parallel.

So

let

me

show

you

an

example.

This

is

quicksort

my

favorite

sort,

probably

everybody's

favorite

sort

I

imagine

most

of

you

know,

quicksort

or

maybe

more

accurately,

once

new

quicksort

and

since

then

I've

just

used

it

as

a

library.

It's

kind

of

how

I

was,

for

a

long

time,

till

I

wrote

this

blog

post

that

I'm

basing

this

on,

but

so

quick

sort.

Let

me

just

kind

of

briefly

review

how

it

works

real

fast,

it's

a

classic,

divide-and-conquer

kind

of

algorithm.

B

So

when

I'm

done,

I'll

have

first

all

sort

the

left,

half

and

then

I'll

sort

the

right

hand,

okay,

pretty

easy.

So

if

I

wanted

to

paralyze

this

well,

I

can

just

do

what

I

said

and

insert

a

call

to

rayon

join

exactly

at

the

point

where

I

have

two

subproblems.

So

this

is

the

parallel

quicksort.

It

looks

pretty

similar,

except

here,

I'm

calling

join,

and

so

you

see

that

it

took

very

minimal

work

to

do

this,

but

what's

even

more

exciting

in

I.

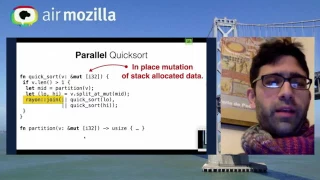

B

Think

very

cool

about

this

is

that

we

didn't

change

the

signature

at

all,

and

so

we're

actually

doing

a

you're,

not

a

completely

trivial

thing

to

paralyze

here,

because

we

have

mutation

going

on

and

we're

also

potentially

working

with

stack

allocated

data.

These

things

are

usually

a

little

bit

tricky

to

get

right,

because

if

you

start

up

a

thread,

it

runs

asynchronously.

B

With

respect

to

this

person,

the

stack

frame

that

started

it

so

working

with

stack

allocated

data

can

be

a

little

risky

if

you

return

or

do

something

wrong,

you

might

pop

that

data,

while

the

other

thread

is

still

active,

but

the

reason

that

all

this

works

with

rayon

is

that

basically

world

because

join

always

waits

until

both

threads

have

finished

before

it

returns.

You

know

that

it

can

have

safe

access

to

stack

allocated

resources

just

fine,

because

basically

the

stack

frame

isn't

going

to

return

until

both

threads

have

done

so.

B

The

library

kind

of

handles

that

aspect

for

you,

and

that

makes

sense

for,

if

you're,

taking

a

sequential

algorithm,

you

were

going

to

do

both

those

things

anyway,

before

you

return,

so

you

might

as

well

wait

for

the

threads

to

finish

right.

It's

the

same

algorithm

from

the

caller's

point

of

view

and

in

terms

of

mutation.

B

This

works

out

very

well

because

of

Russ

basic

type

system

which

guarantees

you

that

if

you

have

a

mutable

reference

to

something

and

you're

the

only

one

that

has

access

to

that

data,

so

it's

okay

to

put

it

to

another

thread

and

let

the

other

thread

mutate

it

there's.

No

one

else

can

be

reading.

It

will

see

a

little

more

on

that

in

a

bit.

B

I

want

to

start

out,

though

I

want

to

focus

instead

on

talking

about

how

how

does

rayon

decide

when

to

run

things

in

parallel

at

all

right

and

the

technique

that

I'm

using

is

something

called

work

stealing,

which

I

did

not

invent

at

all.

It's

been

around

a

long

time

and

I

think

it

was

invented,

at

least

the

first

time,

I

know

of

it

by

silk.

B

It

was

a

project

at

MIT

from

the

90s

and

that

silk

is

actually

now

available

as

part

of

Intel's

c

compiler,

and

there

have

been

a

number

of

projects

they

have

used

it

in

the

meantime.

So

it's

a

really

time-honored

well

well

respected

technique

and

the

basic

ideas

is

like

this.

Rather

traditionally,

when

you

paralyze,

you

might

take

a

bunch

of

work

and

assign

it

to

different

threads,

but

work

stealing

is

more

of

a

dynamic

load.

Balancing

idea,

all

right,

so

I

have

a

bunch

of

threads

in

a

pool.

B

In

this

case

let's

say:

I

have

two

threads

and

they're

going

to

find

work

for

themselves.

Let

me

show

you

and

if

it's

easier

to

illustrate

it

than

to

describe

it,

so

we

start

out

with

two

threads

here

and

thread.

Be

is

basically

Idol.

Initially,

it

has

no

work

to

do

but

thread

a

starts

out

with

the

job

of

the

bigger

the

big

big

problem.

I

started

with.

So

in

this

case

that's

quick

sort.

That's

what

q

S

stands

for,

calling

quicksort

on

a

slice.

B

Let's

say

it's

22

elements,

long

to

start

right,

then

we

saw

that

what

quicksort

does

once

it

starts

working

on

a

particular

problem.

Is

it

subdivides

the

problem

into

two

two

subproblems

and

then

it

calls

join

on

both

of

them

right

and

what

join

is

going

to

do

basically

is

take

these

two

closures

and

it's

going

to

start

executing

the

first

closure,

but

just

before

it

does

that

it's

going

to

post

a

little

a

little

note

into

a

threadlocal

queue

with

the

second

closure.

B

So

this

queue

is

basically

tracking

work

that

we

plan

to

do

later,

but

we

haven't

gotten

to

it

yet

so

we're

starting

in

on

0

to

15,

and

then

we've

put

in

the

queue

of

15

to

22.

So

we

can

pick

it

up

later

and

when

we

process

0

to

15

will

again

sub

divided

into

two

tasks

and

we'll

put

one

in

the

queue

that's

the

1

to

15

and

we'll

start

in

on

021

right

away.

B

This

is

the

one

length

array,

so

it's

going

to

finish

and

when

it

finishes,

what's

going

to

do,

is

go

over

to

this

queue

and

take

off

the

most

recent

thing

that

it

put

in.

So

it's

actually

a

double

ended

queue

or

a

deck.

You

can

use

it

like

a

stack

or

a

queue

it's

a

minor

morning,

but

that

means

we're

going

to

say:

okay.

Well,

we

finished

0

to

1.

B

B

So

what

threat

B's

going

to

do

is

while

whenever

a

threat

is

idle

in

order,

it

tries

to

find

work

for

it

to

do,

and

it

does

that

by

looking

at

all

the

cues

from

all

the

other

threads.

So

now

it's

wet

be,

might

come

over

and

say

you

know:

I've

got

nothing

to

do

and

thread

a

has

this

this

15

to

22

task

just

hanging

out

and

it's

Q,

maybe

I'll

be

helpful

and

I'll.

B

Take

that

work

on

and

we

call

that

stealing

so

threat

be,

is

going

to

steal

this

task

and

execute

it

and

it's

called

stealing,

but

it's

kind

of

a

weird

sort

of

stealing

it's

like.

If

a

thief

came

into

your

house

and

saw

that

you

haven't

finished

your

dishes

and

started

doing

them

for

you

right,

it's

pretty

nice,

it's

a

nice

kind

of

stealing

so

wet

B

is

going

to

execute

15

to

22

and

it'll.

Do

the

same

thing.

B

It

will

subdivide

this

up

into

two

problems

and

post,

one

of

them

into

its

local

q

and

starting

on

the

first

one

and

it'll

do

that

again,

and

so

it

basically

works

exactly

the

same

way

right.

So

now,

when

it

finishes

a

problem,

it'll

take

something

off

of

its

q,

just

like

thread

a

did,

and

so

let's

say

now

it's

processing

here

now.

If

we

come

back

to

thread

a

for

a

second.

B

B

That

means

three

days

idle

and

when

threads

are

idle,

they

go

look

for

other

work

to

do

so

now

we

can

go

look

at

thread,

B's

q,

and

we

can

say:

hey

here's

some

work,

the

thread

bead

had

started

but

hasn't

finished

yet

18

to

22

I

could

steal

that

back

right

and

so

now

thread

a

is

going

to

do

18

to

22,

while

thread

B

is

over

there,

and

you

can

kind

of

imagine

now.

This

proceeds

like

this

until

all

the

work

is

done,

so

everybody

kind

of

just

does

the

work

they

have.

B

They

create

new

work

and

throw

it

on

their

queue,

and

hopefully

somebody

else

takes

it

before

they

can

even

get

to

it

right.

So

it's

kind

of

like

if

that

thief

came

in

and

was

washing

my

dishes

and

I

see

that

they

haven't

got

gotten

to

a

particular

pan.

I

could

take

it

back

and

start

working

line

everyone's

working

together

to

finish

all

the

problems.

So

what's

nice

about

this,

is

you

get

a

kind

of

dynamic

load?

Balancing

effect,

so

it

might

be.

B

Quicksort

is

not

the

best

example

of

that,

but

if

well

even

here,

you

can

see

actually

the

tasks

don't

all

take

the

same

time

right.

Some

of

them

are

for

big

pieces

of

the

array.

Some

of

them

are

for

smaller

pieces

of

the

array,

and

so,

if

you

have

tasks

that

take

a

variable

amount

of

time,

which

is

often

the

case,

then

it's

really

hard

to

pre

allocate

those

two

threads,

because

you

might

end

up

giving

one

thread

a

lot

of

really

big

expensive

tasks

and

another

thread.

B

A

bunch

of

really

easy

tasks,

and

then

the

other

thread

will

finish

really

fast

and

you

just

won't

get

a

very

efficient

use

of

your

resources

but

with

work.

Stealing,

that's

not

a

big

problem,

because

if

one

thread

finishes

fast,

it'll

just

go,

take

work

from

the

other

threads

and

try

to

take

care

of

that.

B

Instead,

so

here's

a

big

wall

of

code,

this

is

kind

of

the

header

of

join

in

a

little

bit

of

pseudocode

and

the

reason

I'm

showing

you

all

this

code

is

that

I

want

to

kind

of

highlight

some

of

the

rust

features

that

let

let

you

write

something

like

join

as

a

library

and

rust

and

have

it

work

pretty

efficiently,

which

I

think

is

pretty

cool.

So

if

you

remember

joint

aches

to

closures

and

closures

in

rust,

here's

the

arguments

to

join

opera

and

opera,

be

those

are

the

two

operations.

B

B

The

reason

that

these

are

like

actually

two

distinct

types,

and

why

that's

really

nice

is

it

means

that

every

time

you

call

join,

you

will

get

a

custom

version

of

join

the

compiler

will

not

amorphous

meaning

it

will

create

a

custom

version

of

join

specialized

to

those

particular

closures.

That

means

that

llvm

can

basically

in

line

and

do

optimization

and

hopefully

in

general,

basically

reduce

the

overhead

of

join

in

the

sequential

case.

So

there's

no

virtual

dispatch

there's

only

static

dispatch

and

so

forth.

B

Now

this

is

the

the

kind

of

pseudocode

for

what

I

just

described.

So,

if

you

remember,

we

always

push

something

on

the

queue

and

then

execute

the

other

task.

So

here's

the

the

push

on

to

the

queue

part-

and

the

thing

I

want

to

highlight

here-

is

that

we

don't

do

any

allocation

when

we

push

on

the

queue

we

don't

necessarily

least

in

the

common

case,

the

operable

closure

that

we're

pushing

on

the

queue.

We

can

just

push

a

reference

to

the

closure

that

lives

on

the

stack.

B

So

we

don't

even

have

to

allocate

any

memory

to

do

that

right.

It's

just

a

few

rights,

and

then

we

can

call

the

first

closure

and

then

execute

the

second

closure,

and

each

of

these

calls,

as

I

said,

are

statically

dispatched,

which

means

they

can

be

in

line

very

effective,

very

easily

by

l'a,

vm

and

analyzed

very

easily,

and

then

finally,

here

this

is

just

a

little

loop

that

says

well,

if,

if

somebody

stole

my

work,

then

I'll

help

out

until

it's

ready.

I

mean

this.

B

B

Okay.

The

last

thing

on

this

slide

that

I

just

sort

of

glossed

over

is

this

sand

trade.

So

what

this

is

saying

is,

if

you,

if

you,

if

you

are

familiar

with

rust,

you'll

know,

but

so

send,

is

basically

or

if

you've

used

multiple

threads

in

rust.

You

probably

encounter

it's

and

I

should

say

and

send

is

basically

the

trait

that

identifies

data

types

which

are

safe

to

send

to

another

thread.

B

So

it's

things

which

can

be

safely

transported

between

threads

and

won't

cause

any

databases

right,

and

so,

when

I,

when

I

say

that

the

closures

have

to

be

send.

What

I

mean

is

all

that

data

that

you

use

from

your

closures.

So

in

the

case

of

quicksort,

that

would

be

the

array

that

we're

sorting

and

so

forth

has

to

be

sunday

ball

to

another

thread.

That

makes

sense

because

join

might

cause

those

two

threads

to

execute

in

parallel

or

it

might

not.

B

But

in

the

case

that

it

does,

the

data

had

better

be

safe

to

go

to

another

thread.

Now,

as

it

happens,

in

rust,

most

types

actually

are

send

and

almost

everything

is

sin

double

by

default.

The

only

exception

is

basically

these.

These

three

types

and

things

that

use

these

three

types.

So

if

you

use

the

RC

type

reference

counter

data,

that's

not

thread-safe,

because

the

reference

count

data

doesn't

use

the

correct

atomic

operations

to

increment

the

rest

count,

which

means,

if

two

different

threads

increment

the

ref

count

at

the

same

time.

B

Suffice

to

say

there

are

thread-safe

equivalents

like

mutex

in

atomic

32,

but

you

have

to

be

a

little

bit

careful.

So

if

you

find

that

you're

getting

compilation

errors

due

to

the

fact

that

you

have

cell

and

ref

cell,

but

that's

kind

of

telling

you

is,

this

code

is

not

going

to

be

trivial

to

paralyze.

So

this

gets

back

to

the

safety

guaranteed.

I

was

talking

about

in

the

first

place,

if

you're,

just

adding

paralyzation

and

you'll

wind

up

getting

errors,

we're

actually

getting

pretty

useful

information.

B

You're

saying

that

this

that

there's

some

shared

state

that

gets

mutated

on

and

you

want

to

think

about

it-

you

could

add

a

mutex

or

some

other

mechanism,

but

at

that

point

it's

no

longer

guaranteed

that

your

parallel

version

is

going

to

behave

the

same

as

your

sequential

version.

So

you

have

to

think

about

it

a

little

bit,

and

so

that's

one

aspect

of

safety.

Basically

that

we

want

to

make

sure

that

you

don't

transport

types

that

are

not

thread-safe

across

threads

and

then

the

other.

B

Is

you

want

to

make

sure

that

you

don't

transport

the

same

data,

especially

mutable

data

across

threads

all

right?

So

this

is

a

version

of

quicksort,

which

is

almost

the

same

as

the

correct

version,

except

that

instead

of

sorting,

though

the

low

and

the

high

on

the

two

different

threads,

it

actually

sorts

the

low

on

both

threads

and.

B

It's

kind

of

it's

kind

of

funny

that

I've

been

using

this

exit

as

an

example

for

for

a

while

and

I

always

thought

well,

I,

don't

know

if

people

really

make

such

a

simple

mistake,

but

then,

when

developing

round

I

actually

made

several

mistakes

exactly

like

this

several

times

and

the

compiler

called

them

for

me

and

I

was

very

happy.

So

what

will

happen?

Basically,

if

you

do

something

like

this,

where

you

accidentally

use

the

same

state

on

both

threads,

is

that

the

compiler

will

complain

and

that's

the

message

you

get

and

what

it's

telling.

B

You

is

basically

that

if

you

want

to

mutate

state

and

rust,

you

have

to

have

unique

access

to

it.

So

here

I

have

two

closures:

they

both

have

access

to

the

same

data,

so

neither

one

has

unique

access

right

and

what's

what's

cool?

Is

that

this?

This

rule

wasn't

designed

with

parallelism

in

mind,

but

it

fits

pearls

and

very

well.

So

the

compiler

isn't

really

doesn't

necessarily

know

that

these

running,

but

it

knows

that

it

needs

to

keep

mutable

access

on

unique,

and

so

it

just

kind

of

falls

out

from

that.

B

So

that's

how

join

works

and

I've

kind

of

dump

through

it

dell

food

in

some

detail.

So

this

api

that

I

showed

you

in

the

beginning

was

a

parallel

iterator

and

I'm

not

going

to

go

into

it

in

this

talk

because

I

don't

want

to

take

a

long

time.

But

basically

you

can

build

up

the

parallel

iterator

in

almost

entirely

safe

code

using

join

and

in

fact

it

was

building

a

car

literary

prize

where

I

made

that

mistake

with

passing

the

same

half

to

both

have

both

closures,

at

least

when

building

around.

B

So

so

that's

pretty

cool

that

the

joint

is

actually

flexible

enough

to

be

used

kind

of

build

up

abstraction

on

top

that

are

that

are

even

easier

to

use

and

apply

to

other

situations.

And

there

is.

I

have

written

up

the

details

of

how

this

works,

at

least

at

a

certain

level,

it's

in

a

blog

post

that

I'll

show

a

link

to

later.

B

So

if

you're

interested,

you

can

go

check

it

out,

but

the

basic

idea

is

that

it

will

divide

up

the

work

that

the

iterator

is

going

to

do

recursively

in

the

same

fashion.

Right

so

in

conclusion,

I

guess:

there's

a

couple

of

points

I

wanted

to

kind

of

bring

across

and

these

the

point

that

I

really

don't

want

to

get

across

here

is

you

should

go

use

rayon.

B

What

I

do

want

to

get

across

is

I

think

there

are

some

some

goals

and

lessons

to

be

learned

from

rayon.

That

I

think

we

should

try

to

incorporate

and

whether

that

I

hope

we

will

end

up

with

a

package

much

like

rayon,

which

is

very

widely

used.

It

doesn't

have

to

be

ran.

I'd

be

perfectly

happy

if

somebody

else

for

a

better

one,

but

I

think

these

are

some

really

important

things

about

it.

B

B

This

kind

of

guarantees

you

want,

but

it's

also

great

for

you.

When

you're

writing

sequential

code,

you're

kind

of

writing

parallel

ready

code

without

even

realizing

it

so

to

speak,

just

because

that's

the

way

that

you

normally

work

to

with

code

and

rust,

and

the

fact

that

we

have

these

lightweight

closures

that

can

be

stack

allocated

that

have

static

linking

and

easy

inlining

also

means

you

can

do

kind

of

just

in

the

compiler.

B

These

are

more

for

people

who

come

and

look

at

the

video

later,

but

this

is

where

the

rayon

project

is

on

github,

and

this

really

long

URL

that

I

had

to

put

in

a

small

font

is

actually

the

blog

post

to

that

was

talking

about

that

kind

of

goes

over

everything

I

said

here,

but

in

somewhat

more

detail,

so

that's

all

I

have

to

say.

Thank

you

very

much.

If

you

have

some

questions,

I

would

love

to

answer

them.

I

guess.

C

B

Well,

you

start

out

with

kind

of

the

queue

has

a

really

big

problems

at

the

top

and

they

get

smaller

and

smaller

as

they

go

down

right

and

so

the

task

when

a

task

goes,

it

always

pulls

from

its

own

cue

from

the

bottom.

So

it

takes

the

smallest

task,

basically

that

the

most

recently

pushed

thing,

which

means

it's

the

most

likely

to

have

the

same

cache

behavior

as

the

thing

you

just

did

right,

so

you

get

kind

of

maximum

locality

that

way.

B

But

when

you

steal

you

steal

from

the

other

side,

which

means

you're

stealing

the

work

that

is

most

remote

from

what

you're

from

what

the

processor

is

doing

right

now

and

thus

the

one

is

kind

of

represents

a

big

chunk

like

this.

The

right

half

of

the

array

that'll

be

kind

of

a

whole

different

cache.

B

You

know

it's

kind

of

just

you

would

get

the

minimum

benefit,

basically

from

from

being

executed

by

the

original

processor.

However,

I

am

not

sure

I

mean

most

of

the

kind

of

papers

and

things

that

I

saw

that

did

measurements.

I

think

we're

pretty

old,

so

I'm

not

sure

like

modern

architectures

are

different

and

I'm

not

sure

if

anyone's

done

any

recent

studies,

but

I

know

that

the

ones

that

I

saw

they

showed

a

quite

good

cache.

Behavior

nice.

D

B

B

By

default,

yes,

I

have

some

code

paths

in

there.

That's

not

very

well

tested

that

lets.

You

create

additional

threat,

pools

and

in

a

kind

of

dynamically

scoped

fashion,

so

you

can

kind

of

enter

into

a

thread

cool

and

then

the

work

that

executes

within

their

will

go

in

that

separate

thread

pool,

but

in

general

it's

probably

not

a

good

idea.

Unless

you

have

a

specific

reason

that

there

should

be

more

zabal

thread

pulls

it's

usually

a

good

idea

to

share

them,

because

I've

only

got

so.

Many

core

is

essentially

so.

E

So

you're

talking

a

bit

about

the

safety

of

rayon

or

that

having

to

do

with

data

races,

but

you

mentioned

in

passing

that

if

you

use

something

like

a

mutex,

you

can

accidentally

reveal

the

fact

that

these

things

are

running

in

parallel.

Have

you

thought

about

a

way

to

rule

that

out

similar

to

send

I.

B

Haven't

mostly

because

I

think

it's

really

useful,

so

there

are

a

lot

of

parallel

or

there

are

a

lot

of

algorithms

where

you

actually

want

to

communicate

between

the

parallel

workers.

So

a

simple

example

is

a

kind

of

branch

and

bound

search

where

your

or

something

like

this,

where

you're

kind

of

searching

through

a

space-

and

you

want

to

know,

what's

the

best

result

that

anyone

has

found

so

far

and

if

you're

running

sequentially,

that's

just

going

to

be.

B

You

know

the

current

what

the

current

weight

is

found

so

far,

but

if

you

do

have

the

opportunity

to

paralyze

you'd

like

to

know

if

someone

else

found

a

better

result

somewhere

else

that

you

can

stop

searching

unproductive

avenues.

So

this

is

used

to

like

solve

Traveling,

Salesman

problem

and

stuff

like

that

right,

so

I'm

not

sure.

That's

a

problem,

basically

that

you

can

reveal

the

Pearl

execution

like.

B

Using

a

mutex

already

you're

already

in

a

parallel

mindset,

you've

already

delimited

your

transactional

boundaries

where

you

acquire

the

lock

and

unlocked

it.

So

I'd

be

surprised

if

it

actually

led

to

bugs

and

code,

but

it

does

seem

like

we

won.

Something

like

send

accepted.

I,

don't

know,

I

haven't

talked

about

it

that

hard,

but

it

could

be

excused.

E

F

B

It

gives

it

gives

all

the

so

this

kind

of

comes

back

to

some

extent

to

the

to

the

shared

threadpool

question

that

was

raised

earlier.

But

so,

if

you

have

multiple

threads

with

different

priorities

and

they're

each

starting

up

parallel

tasks,

we

don't

give

one

thread

pool

higher

part

like

we

don't

give

the

the

tasks

and

the

thread

will

all

have

equal

priority

wherever

they

came

from

it's

an

interesting

idea.

B

Potentially,

the

challenge

is

that

they

can

get

mixed.

They

can

get

mixed

a

like

there's,

there's

one

thread

pool

right,

which

is

basically

totally

different,

set

of

threads

than

the

one

that

are

normally

used

in

your

application.

So

you

can

have

tasks

intermingled

from

different

application

threads

in

the

air

which

may

have

different

priorities,

I

guess

they

could

set

and

unset

the

priority

as

they

go.

E

Hear

me:

cool,

hey

everybody,

I'm

aaron

tron,

another

member

of

the

rest

team

here

at

Mozilla

and

I'm

excited

tonight,

to

tell

you

about

a

library

I've

been

working

on

called

cross

beam,

so

cross

beam

is

targeted

at

sort

of

a

different

layer

of

the

stock

than

the

library

that

we

just

saw

so

Nico's

library

breann,

is

all

about

writing

sort

of

introducing

parallelism

into

your

program

and

it

relies

on

some

underlying

data

structures

like

work.

Stealing

cues

crossbeam

is

targeted

at

those

kinds

of

data

structures.

E

Basically,

so

it's

it's

a

library

for

high

performance,

concurrent

data

structures

and

in

particular

the

the

sort

of

big

ticket

item,

is

locked

free

data

structures

which

also

spend

a

lot

of

time

telling

you

about

tonight,

but

I'm,

also

interested

in

userspace

synchronization,

which

is

closely

related

things

like

semaphores

barriers,

latches

and

so

on,

and

then

a

sort

of

central

topic

that

that

ties

all

this

together,

which

is

concurrent

memory

management.

So

a

lot

of

work

on

lock

free

data

structures

ends

up.

E

This

is

the

amount

of

time

sort

of

perk

you

operation

in

a

multi-threaded

test

case

right.

So

this

is.

This

is

basically

just

showing

like

the

overhead

of

the

memory

management

scheme.

I'm

going

to

show

you

today

is

quite

good

and

in

fact

it's

pretty

easy

to

do

better

than

Java,

so

the

Java

concurrent

link

you

hear

is

highly

tuned.

The

stuffing

crossbeam

is

like

textbook

algorithms,

with

very

little

effort

put

into

them.

Okay,

oh

yeah,.

E

Yeah

I

mean

and

no

yeah

well

well,

we'll

get

into

more

detail

on

this

I

should

say

feel

free

to

ask

questions

throughout.

This

is

definitely

gonna

be

a

little

bit

more

technical

than

the

previous

talk.

Okay,

so

I

realized

that

probably

a

lot

of

you

don't

know

a

lot

about

this

low-level

area

of

concurrency.

So

I

want

to

start

by

talking

about

some

of

the

important

trade-offs

in

that

space,

and

so,

of

course

we

have

to

talk

about

the

cash.

E

That's

that's

always

a

huge

determiner

of

performance

and

the

cash

story

gets

a

lot

more

complicated

once

you

start

talking

about

having

multiple

cores

right,

so

this

diagram

shows

a

fairly

typical

architecture

where

you

have

a

number

of

different

cores

on

the

same

die.

Each

of

them

has

dedicated

l1

cache,

and

then

they

have

a

shared

l2

cache.

E

E

There's

a

protocol

for

doing

this.

A

typical

one

is

the

so-called

messy

protocol

for

cache

coherence,

and

the

idea

is

pretty

simple.

Basically,

each

cache

line

has

a

state

at

any.

Given

time.

Modified

just

means

that

it's

it's

dirty.

Basically,

it

needs

to

be

written

back

to

ram

exclusive

means.

It

hasn't

yet

been

changed,

but

this

core

is

claiming.

It

is

the

only

one

with

a

valid

cache

line

for

this

address.

Shared

means.

Perhaps

multiple

cores

have

this

cache

line

out,

but

none

of

them

is

in

a

position

to

write.

E

So

what

you

want

is

to

be

able

to

access

shared

data

structures

where,

if

you're

accessing

different

parts

of

the

data

structures

say

you

have

a

shared

hash

map

and

one

core

is

looking

up.

One

key

and

another

chord

is

looking

up

a

different

key

and

those

are

in

different

buckets.

Those

cores

should

not

have

to

talk

to

each

other.

They

should

not

be

in

validating

each

other's

cache

lines.

E

Sometimes,

when

you're

working

in

this

space,

you

actually

don't

want

cash

locality,

because

it's

easy

to

have

packed

into

a

data

structure,

some

data

that

is

relevant

to

one

core

and

some

data,

that's

relevant

to

another

they're

sitting

on

the

same

cache

line,

and

now

the

two

cores

are

basically

ping-ponging

the

data

back

and

forth

every

time

they

want

to

write.

This

is

called

false,

sharing.

Okay,

so

that

that's

like

some

sense

of

the

kind

of

space.

E

We

still

up

invalidating

these

cache

lines.

Now,

there's

there

is

a

sort

of

finer

grained

approach

where,

instead

of

having

a

global,

lock

around

the

hash

table,

maybe

you

just

have

locks

around

each

bucket,

but

that's

still

not

as

good

as

you

might

hope.

For

because

again,

when

you're

reading

from

the

hash

table,

you

still

have

to

acquire

those

locks

that

still

involves

a

right

that

can

still

lead

to

cache,

misses.

Ok,

so

I'll

roughly

make

sense.

The

details

here

aren't

super

important.

E

I

just

want

to

give

you

a

flavor

of

the

kinds

of

things

you're

thinking

about.

Ok,

so

all

of

those

concerns

leads

you

in

the

direction

of

something

called

lock

free

data

structures.

So

this

is

basically

I

mean

if

you

think

about

it,

locks

fundamentally

can't

achieve

the

goals

that

we

set

out

in

the

beginning.

They

can't

they

can't

let

you

avoid

doing

a

right

when

all

you

want

to

do

is

read,

so

we

need

some

other

way

to

access

concurrent,

sorry

to

control

concurrent

access

to

a

data

structure

that

doesn't

involve

logs.

E

So

I

want

to

teach

you

briefly

how

to

write

a

lock,

free

data

structure

and

I'm

going

to

use

the

sort

of

hello

world

of

lock

free

data

structures,

which

is

a

stack

and

it's

not

nearly

as

scary

as

you

might

think.

It's

actually

pretty

simple,

so

the

representation

will

use

for

this

stack

is

just

a

simple

linked

list

right.

So

the

the

stack

has

a

head

pointer

pointing

to

the

current

head

and

then

nodes

just

tub

singly

linked

list

all

the

way

to

the

end.

E

So

can

everybody

read

that

it's

kind

of

small?

My

apologies,

if

you

can't

I

so

here's

the

code

for

pushing

on

to

such

a

stack,

so

we

allocate

a

new

load,

a

new

node,

that's

not

real

interesting.

We

turn

that

into

a

raw

pointer,

whatever

those

are

just

some

details

in

rust,

and

then

we

enter

into

a

loop

and

the

idea

with

this

loop

is

we're

going

to

try

to

optimistically

sort

of

install

this

node

onto

the

stack

and

we're

doing

so

in

a

way

that

might

be

racing

with

other

threads.

E

We

might

lose

that

race,

and

so

then

we

have

to

come

back

around

the

loop

and

try

again

and

what

I

mean

by

optimistic

here

is

we're

going

to

take

a

snapshot

of

the

current

head.

So

we

load

the

value

the

current

head

of

the

stock.

That's

that's

some

pointer

and

then

we

go

ahead

and

take

our

node

and

say

its

next

pointer

is

whatever

that

snapshot

was,

and

then

we

try

to

install

that

node

in

the

front

of

the

stock.

E

Basically-

and

we

use

an

operation

called

compare-and-swap

compare-and-swap

is

is

like

the

basic

building

block

of

all

autumn

icity

on

most

processors

and

what

it

says

in

this

case

is

I.

Think

the

current

value

of

head

is

N

and

I

want

the

value

of

head.

Sorry,

I

think

the

current

value

yeah

I've

head

is

head.

Excuse

me,

in

this

case

self

dot

head

is

head

and

I

want

to

update

it

to

N,

and

that

update

should

take

place

in

a

single

atomic

step.

E

As

far

as

all

other

cores

are

considered

right,

so

I'm

not

grabbing

any

lock

here,

I'm

just

doing

the

change

in

place,

but

if

atomically

head

has

some

other

value

than

the

one

I

expected,

then

this

just

won't

do

anything

and

the

result

I

get

back

is

essentially

what

value

did

had

actually

have

so

I

see

I

ask:

is

it

the

value

I

thought?

Is

it

equal

to

head?

If

so,

good

I

succeeded,

I

can

leave

the

loop

I've

actually

installed

my

pointer.

If

not,

then

I

lost

the

race

with

some

other

thread.

E

They

got

their

first

I

have

to

try

again.

Okay.

So

let

me

let

me

show

that

to

you

more

graphically

right

so

say

this

is

the

current

state

of

the

stack

we

have

a

head

pointer.

We've

got

two

nodes,

a

thread

comes

in

and

it

wants

to

push

this

node

with

the

value

seven.

So

it

allocates

that

node.

It

reads

the

current

value

of

head

and

links.

It

links

it

into

the

allocated

note,

but

it

hasn't

yet

changed

the

head

pointer.

Meanwhile,

some

other

thread

comes

along

and

pushes

a

different

node

and

right.

E

So

now

we

have

our

local

node

that

has

seven,

but

the

actual

head

pointer

has

changed

at

this

point.

If

we

do

our

compare-and-swap

it's

going

to

fail,

because

we

think

that

we're

pointing

to

the

node

that

contains

three,

but

actually

that's

changed,

that's

good,

because

if

we

actually

succeeded

we

would

have

just

dropped

the

node

with

five

on

the

floor

right.

That's

what

we're

trying

to

avoid.

So

then

we

have

to

retry

we'll

get

a

fresh

snapshot.

Weary

point,

our

tail

pointer,

we

do

in

other

casts,

and

this

time

we

succeed.

J

E

Right

so

if

we

look

back

at

the

representation,

basically

everything

you

need

to

declare

is

in

this

atomic

pointer

aspect

right.

So

that's

that's

enough

to

tell

rust

and

lvm.

You

know

I'm

going

to

be

modifying

this

in

a

sort

of

concurrent

way

in

a

racy

way

right

and

that

that's

what

makes

what

we're

doing

here,

not

a

date

of

race,

but

actually

a

synchronization

race,

which

is

a

good

kind

of

rates.

E

E

So

let's

look

real,

quick,

then

at

pop,

which

is

pretty

similar.

So

in

this

case

we

we

don't

need

to

do

any

allocation

up

front

right,

we're

just

trying

to

pop

a

note

off.

So

we

enter

right

into

our

retry

loop.

We

grab

a

current

snapshot.

If

we

see

that

the

head

is

actually

pointing

to

null,

then

we

have

nothing

to

do.

We

have

observed

a

moment

in

time

where

the

stack

is

empty,

and

so

we

just

return