Description

A talk from Full Stack Fest 2015 (http://fullstackfest.com/)

http://fullstackfest.com/agenda/rewriting-a-ruby-c-extension-in-rust-how-a-naive-one-liner-beats-c

Recorded & produced by El Cocu (http://elcocu.com).

Full Stack Fest is a conference held by Codegram. We've been running development conferences since 2012 with a goal in mind: inspiring our audience by putting together the best speakers and talks at a privileged location in the beautiful Barcelona area.

Head over to https://conferences.codegram.com/ to see an overview of all our conferences and their content. Visit https://www.codegram.com/blog/ to learn more from our team on related topics.

A

So, hey. I'm here today to talk about Rust, which is maybe weird because we're here at a Ruby conference, although for some reason Rust has been a pretty popular topic, not just among Rubyists but even among Node people and all kinds of stuff. I don't actually have a good explanation for exactly why that is, but I know that people want to hear about it, so I am here to deliver.

A

I actually came up with the title while I was working with Godfrey (I'll talk a bit more about this later) and sort of encountered this phenomenon on my own, without actually planning for it. I had been working on my talk about Rust up until that point, and then I encountered this phenomenon and sort of rebuilt the beginning of the talk to focus on the hook.

A

So, first of all, I work on Skylight, which is a tool that you can use to keep your Rails application healthy and keep an eye on its health. One of the things that we do to make that work is put an agent in your Rails application, just like any of our competitors.

A

Very early on in the lifetime of our product, what we realized was that if we wrote all the code that actually did the instrumentation and talked to our server in Ruby, which was how we initially prototyped it, there was going to be a limit on how many things we could collect. That's something we noticed a lot of competitors ran into as well: there was a limit on what they could collect. And so we decided at some point...

A

But I didn't feel comfortable with me and the rest of the team maintaining this big blob of C++ code. This was about a year and a half ago, when Rust was already on Hacker News a lot but wasn't really anywhere, I think, in terms of completion. I think Servo was really the only project using Rust at the time. And I said, you know, give me a week. I'll try to do this in Rust.

A

The thing that makes Rust really successful, or at least successful for me, is that it takes people who are good programmers, really good programmers even, but who look at a bunch of C code and say, "I understand what this does, but I'm scared of this code." And therefore the idea that "well, my Rails app is too slow, I'll drop down to C" is a good thing that we could pitch, that we could say to people, but it doesn't really end up turning out to be true, right?

A

He came in as a Microsoft shop guy, but Discourse decided at the beginning that they were going to use Rails and Ember as their stack. They did that basically because they wanted to find a way to get more contributors, and they figured that open source software that used Microsoft as a stack was going to result in far fewer open source contributions. So they decided to base it on Rails. But of course, Rails has a well-earned reputation for being slow, and Sam is a speed junkie.

A

So Sam has done a lot of good work to make pieces of Rails faster, either by just reporting slowness, by actually submitting patches, or, in this case, something unorthodox. One day, Sam Saffron was looking at a flame graph (he has some tools that make it easy to look at this), and you can see he somehow inked some circles around the thing that he reported. He said, "It looks to me like `blank?`, the blank method, is taking up a lot of time."

A

It's taking up like 4% of the total time in this whole program. Four percent is a lot of time for a Rails app, right? So maybe your request takes 100 milliseconds; that could be, you know, 40 milliseconds, so that's a lot of time. No, that is totally the wrong thing. That's four milliseconds. I should be able to do percentages, right? I'll blame it on jet lag. Okay, anyway, the point is it's a big chunk. Four percent is a big chunk, and he said, "Let me try to make it faster."

A

This is the changelog entry about this thing. At first, he tried to submit some patches to make it faster in Ruby. If you want, you can follow the rabbit hole; it's interesting reading, where he basically tried to just make the method faster. But after a bunch of efforts (I think the first thing that happened is he submitted a patch and somebody replied "seems legit," and then tenderlove replied "does not seem legit," because it was wrong, it wasn't compatible), eventually Sam just decided, "I'm just going to rewrite it in C."

A

So, to get an idea, this is something I grabbed out of his test suite. This is the regular `blank?`, which is there because he's testing it against his fast_blank in this particular situation. You can see that it's a pretty simple implementation, right? It's a regex that checks for whitespace. Very simple.

A

Actually, you might not look at this and feel like it should obviously be slow, because it's basically all going to run inside of Ruby. But what you should be able to realize by looking at this is that it's going to exercise some very generic parts of the system. It's a regular expression, right? So unless the regular expression engine happens to be very optimized for this scenario, you're not going to get the best performance out of this implementation. So he basically went and wrote a C extension. You do not need to read this.

A

The snip over there represents another 20 lines or something like that, and it's sort of what you come to expect when you look at code that is designed to make things faster, right? So it's a function. It has a bunch of gunk around Ruby stuff; you can see it's pulling out pointers and so on, and then there's just a big switch statement. And, of course, switch statements are expected to be fast.

A

You can also see that the loop is just right in here; it's not using any abstractions for the loop. Literally everything is right here. And, as perhaps expected, if you actually run this code, what you will discover is that it is much faster. So Sam made this and released it as a gem. You can install it as fast_blank, and it will basically take care of itself and make your `blank?` methods much faster, which has an impact on some percentage of your Rails app requests.

A

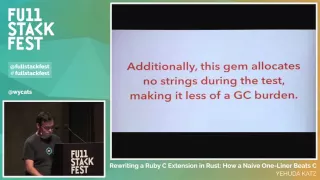

So people should definitely do this. Additionally, Sam said in the README, "This gem allocates no strings during the test, making it less of a GC burden." I actually wrote a blog post about this last week, but this is something that I think people have a hard time really understanding. When you allocate a lot of objects, the allocation itself is usually very cheap.

A

If you benchmark allocating, like, a thousand objects in Ruby, it will be pretty fast. The thing that makes it a problem is that eventually somebody has to clean them up, and the more objects you allocate, the more time the GC has to spend. But, of course, the GC runs kind of randomly throughout the program, so it's very hard to track GC pauses back to the original thing that triggered them. Things like allocating a bunch of strings just to do a match to see if there are any blank characters allocate unnecessary strings.

A

Maybe there are other things like this that we could do; maybe there's a general collection of gems. I think this was pretty low-hanging fruit. This is pretty great, so I personally saw this as a pretty good success story for the whole notion of dropping down to C to solve bottlenecks. Obviously not everyone's going to be able to write that C code, but still, it's pretty great. So when Godfrey started working with me a couple of weeks ago, we discussed the fact that he was really interested in learning Rust.

A

This is Godfrey Chan, on the Rails core team. He had sort of dabbled with hello-world tutorials in Rust, but he was interested in really learning Rust, and he came up with the idea: maybe we should try to reimplement it in Rust. The goal was not actually to do a better job. The goal was just to see what the infrastructure looks like to implement in Rust basically the same thing that Sam implemented in C. And so, just to get an idea...

A

Obviously we're not going to want to use the regular expression engine in Rust. There is a regular expression engine, but we don't want to use it. We want to start out by saying: okay, what does the loop look like at a high level? At a high level, the loop looks like `string.chars`, which could return an enumerator, or maybe it just gives you back an array of characters, and then you loop through all of them and check whether they are whitespace.

A

So the next thing that we did is we basically transcoded that code to Rust. I'm leaving out a bunch of surrounding C extension gunk. I want to say we already posted it publicly, but I think that's not actually true. It's basically the traditional stuff; I left out all the Sam Saffron C extension gunk. It's pretty straightforward to do this, and so we implemented this thing called fast_blank.

A

It takes a buffer, which is basically just a string that you can transfer over the boundary, and then it does pretty much what the Ruby code did. First it converts the buffer into a Rust string, which is called a slice. Then it gets an iterator for all the characters. Then it calls `all` on them and passes a closure which says, "Is it whitespace?" Just to go through what is happening here: first of all, the buffer object has a method on it.

A

So this is a method, like in Ruby, and that method creates a new slice object. Then that slice object has a method on it called `chars`, and that method creates a new iterator object. An iterator is kind of like an Enumerator in Ruby; I would say they're pretty equivalent. Then there's another method called `all`, which you run on the iterator, and that `all` method is actually implemented for all iterators.

A

It's just like how the `all?` method in Ruby is implemented for all Enumerables. Then we pass a closure, which is like a block in Ruby, and finally there's another method called on each character, `is_whitespace`. It's pretty high-level code here, and you might look at it and say, "Well, obviously that's going to be pretty slow." There's a reason I say "naive Rust implementation": this is basically the first thing that you can implement.
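As a rough sketch of the one-liner being described, here is a version that assumes the input has already been turned into a Rust `&str`. The Ruby-to-Rust boundary code is left out, and the function name `is_blank` is a hypothetical stand-in rather than the code shown in the talk.

```rust
/// Returns true when every character in `s` is whitespace.
/// An empty string counts as blank, because `all` is vacuously true
/// on an empty iterator.
fn is_blank(s: &str) -> bool {
    s.chars() // iterator over the characters of the slice
        .all(|c| c.is_whitespace()) // closure runs per character, stops at the first non-whitespace one
}

fn main() {
    println!("{}", is_blank("  \t\n"));
    println!("{}", is_blank("  hello "));
}
```

Every step here (`chars`, `all`, the closure, `is_whitespace`) is an ordinary method call on a standard-library type, which is what makes the benchmark result surprising.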

A

It uses essentially all the high-level niceties that you would expect to use to implement something like this in Ruby. Effectively, Godfrey and I wrote this code just so that we could get to the point where the rest of the infrastructure was working. Then we said, "Okay, now that we have this implementation, let's benchmark it, and then, when it's slow as expected, we'll go and make it faster." So we benchmarked it, and it was faster, which led me to the title of this talk.

A

We have a loop, right? Loops are usually the place where you should really, really be on the lookout for extra costs. Somehow this is not slow. How is that? That's what the rest of my talk is about, so hopefully the mind-numbing parts of the rest of my talk will be well motivated by this surprising result at the beginning. First of all, before I get into the details, I want to talk about abstractions. The goal of an abstraction, of course, is to hide details, right?

A

So if you use a function, the whole point of the function is that you're not looking at the inside of the function to understand how it works. You're looking at the API documentation to understand how it works. And normally abstractions also hide performance details, right? Normally, if you use `all?` on an Enumerable, you're not supposed to think about whether there's a specially optimized `all?` written for Array or anything like that. You're supposed to just say, "I assume that someone did the right thing here; it will do the job."

A

This is actually some of the documentation from a JavaScript library that I work on called Ember. There's a function here called `intern`, in utils, and you can see the top line says, "Strongly hint runtimes to intern the provided string." Interning is kind of like how in Ruby you can use a symbol, and those symbols can be compared very quickly or used as hash keys very quickly.

A

In JavaScript, there is no such thing, but you can basically wink at V8, using a bunch of hacks, to make it do effectively the equivalent thing. And, of course, if you look at this documentation, it explains sort of what's going on, but it also basically says, "Don't use this function. It doesn't really end up mattering." But of course, when you're writing the kernel of something like Ember, it sometimes does end up mattering, and you end up writing this kind of documentation about what exactly is happening behind the scenes in today's implementation of V8.

A

So basically, there are cases where the fact that you're abstracting over performance details ends up being bad. Really, what a systems programming language is, is a programming language that lets you look at an abstraction and, while the abstraction might be hiding implementation details, it does not actually hide performance details. I'll give you a series of examples of what that means. First of all, I showed you that there were a bunch of methods that we used in our example.

A

How does a method work in Rust? I'm going to start by showing you an example. Let me walk through this carefully. First of all, we have a struct called `Circle`. You can sort of think of a struct in Rust as being like a C struct, which lists a bunch of fields, but one that also comes with an implementation, like a class in Ruby or Java or something like that, right?

A

So now, let's talk about how you actually use this. I've made a main function here, and the first thing we do is say `Circle::new(10.0)`, and then we print the diameter. That `println!` is basically how you do string interpolation in Rust. You can see here that we created a new circle and then we printed its diameter.

A

Both `Circle::new` and `diameter` are methods, right, just like methods in Ruby. There's an important difference, though: in Ruby, when you use a method, the performance characteristics sort of don't matter. In Rust, they do. But before I get to that, let me just show a different implementation: `Square`. A square has a side, which is an `f64`. Again, you implement `Square`.

A

We do the same sort of thing with `new` (you should be getting the hang of the `new` method by now), and we have a `diagonal` method, which basically multiplies the side by the square root of two, which you may or may not remember from sixth-grade math. I didn't actually remember; I had to look it up. So now we basically want to do the same thing. We have a function called main.
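The slides themselves are not part of this transcript, so the following is only a reconstruction of what the two structs might look like from the spoken description. The field name `radius` and the variable names in `main` are assumptions.

```rust
use std::f64::consts::SQRT_2;

// A struct lists its fields, like a C struct.
struct Circle {
    radius: f64, // assumed field name
}

// The `impl` block attaches methods, a bit like a class body in Ruby.
impl Circle {
    fn new(radius: f64) -> Circle {
        Circle { radius }
    }

    fn diameter(&self) -> f64 {
        self.radius * 2.0
    }
}

struct Square {
    side: f64,
}

impl Square {
    fn new(side: f64) -> Square {
        Square { side }
    }

    // The diagonal of a square is its side times the square root of two.
    fn diagonal(&self) -> f64 {
        self.side * SQRT_2
    }
}

fn main() {
    let c = Circle::new(10.0);
    println!("diameter: {}", c.diameter());

    let s = Square::new(10.0);
    println!("diagonal: {}", s.diagonal());
}
```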

A

You're calling `new` as a method. The methods look kind of the same, but there's actually a really big difference, which is that in Rust, every single time you call a method, with very minor exceptions that are very explicit, the compiler knows at the time it compiles exactly what method you are talking about. So let me go back there.

A

So when you say `Square::new`, that isn't saying, "At runtime, go figure out what method that is and call it." And when you call `diagonal` on the result, it's not saying, "At runtime, go figure out what that method is and call it." The compiler actually points directly at the place where it's going. At first glance that might seem only a little bit useful, because you have probably heard from people that dynamic dispatch is pretty fast: we have really good techniques these days for making dynamic dispatch fast. And indeed, that's true.

A

So, for example, the Go implementation of dynamic dispatch is a reasonably fast implementation. But there's actually an important detail, which is that when you statically dispatch something, when you know at compile time exactly where it is that you're going, you can also inline the function. You might be thinking, "Well, function inlining seems good, it seems like an important optimization, but maybe it doesn't end up mattering that much." So I went to the function inlining Wikipedia entry, which is, of course, where you go to learn anything.

A

If you read the function inlining section, it has a bunch of hemming and hawing about how inlining may or may not actually be the thing you want to do in any given situation. But if you look at it, it says, "The primary benefit of inline expansion is to allow further optimizations and improved scheduling." Basically, the idea here is that inlining is kind of a gatekeeper optimization. If you have a function and you don't know where you're going, you're basically done optimizing.

A

You have to wait until runtime to know where you're going, and maybe a JIT can sort of recover by figuring things out at runtime. But if you can inline at compile time, you can go further. You can say, "Okay, now I see the whole picture." And maybe, if you inline a bunch of times, you see the whole picture; maybe you see the whole program, and so you can do things that really require more expansive knowledge of the whole program.
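To make the contrast concrete, here is a small sketch of the two kinds of dispatch in Rust. The trait name `HasDiagonal` and both helper functions are hypothetical and introduced only for illustration. The point is that the dynamic case has to be asked for explicitly with `dyn`.

```rust
use std::f64::consts::SQRT_2;

// Hypothetical trait, used only to have something to dispatch on.
trait HasDiagonal {
    fn diagonal(&self) -> f64;
}

struct Square {
    side: f64,
}

impl HasDiagonal for Square {
    fn diagonal(&self) -> f64 {
        self.side * SQRT_2
    }
}

// Static dispatch: the compiler generates a copy of this function for each
// concrete `T`, so it knows exactly which `diagonal` is called and is free
// to inline it.
fn doubled_static<T: HasDiagonal>(shape: &T) -> f64 {
    shape.diagonal() * 2.0
}

// Dynamic dispatch: the explicit `dyn` keyword opts in to looking the
// method up through a vtable at runtime, which usually blocks inlining.
fn doubled_dynamic(shape: &dyn HasDiagonal) -> f64 {
    shape.diagonal() * 2.0
}

fn main() {
    let s = Square { side: 10.0 };
    println!("{}", doubled_static(&s));
    println!("{}", doubled_dynamic(&s));
}
```

Both functions return the same value. The difference is only in how much the compiler knows at the call site.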

A

So again, I think of inlining as a gatekeeper optimization for a lot of other optimizations, and static dispatch is what you need to get inlining. If you don't have static dispatch, you don't have a whole bunch of really important optimizations that end up mattering. When we eventually circle back around at the end to the motivating example from the beginning, we'll see why that ends up being true. So that's step number one: static dispatch in Rust. You write code...

A

So that's one important vector. The second important vector is allocation. You might have heard the word "allocation" a bunch of times in various contexts, and you probably know that you should try to avoid allocation, and that's true: the more allocation, the more time you have to spend deallocating things. So let's look at how it actually works in Rust. First of all, here is the code that I was looking at before, and keep in mind for the next few slides that obviously `Square::new` has to go somewhere, right?

A

We have to actually make a new square and we have to put it somewhere. But before we explain where it goes, let's first look at how you would do perhaps an equivalent thing in Ruby. So here we have a `Square` class. We initialize it. Hopefully people in this room, who are here for a Ruby conference, can read this code.

A

You probably would not have `def main`, but whatever. What happens in Ruby is that when you say that you want to make a new square, it goes on the heap, and internally, in the Ruby C code, that thing is called an `RObject`. So basically you make a new square, you put an `RObject` on the heap, and the way you should think about the heap is that it's just a place where you put stuff that has to be managed.

A

So when you want to get rid of it, you have to do extra work to get rid of it. But the fact that things are on the heap means that you can have many pointers to the same thing, and you don't normally have to worry about exactly where things are. So when you type `Square.new(10.0)` in Ruby, you're basically saying that this is somebody else's job, but it actually gets allocated somewhere in a giant filing cabinet.

A

Basically, then you print out the diagonal, and when you're done, the object is still on the heap so far. Eventually the garbage collector will come around and stop the world, and it will eat up the `RObject`, and then the object is cleaned up. Of course, it takes some amount of time to walk the entire space, etc. But this is basically how garbage collection works. There are various strategies to make it more efficient.

A

Yes, it should be scary. It's very easy to make mistakes. You could forget to free. You can malloc the wrong thing, malloc a pointer instead of the actual thing. You have to remember to line up all the pieces. So now we understand that Ruby does heap allocation, which basically means take it and put it somewhere else, and we've seen how you do that in C.

A

Let's look at an alternative kind of allocation, which is called stack allocation. This is again C code, and what you see here is that we've made a struct which is the equivalent of the struct that we made in Rust. The exact details are not so important here; I'll just walk through the basic idea and then go into more detail in the Rust example. So the first thing that we do is we enter the main function, and here is the key trick with stack allocation.

A

This is one of the main "one weird tricks" of C and C++: when we compile the main function, we can actually look at it and say, "Okay, we actually know how big a square is, and we know what the diagonal function does, and we know how much space we need for it." So what we're going to do is leave some space.

A

We're going to leave some space on the stack when we enter the main function; we basically create some space for local variables. We're going to leave enough space on the stack to hold all those objects. And why does that matter? Remember, before I said that when you put things on the heap, somebody eventually has to go and clean them up. Well, what happens if you put things on the stack? First of all, we make a square, we fill it in, and we call the diagonal function.

A

The diagonal function makes another place on the stack. It basically pushes onto the stack another frame which has enough space (I'm eliding the details) for whatever the diagonal function needs to do. But the important detail here is that when the diagonal function returns, it can just move the stack pointer back up, right? Basically, it's like, "Okay, I'm done with this frame," and then nobody has to free anything.

A

Basically, the fact that we knew ahead of time how big the intermediate variables were means that we don't have to free anything. Simply by exiting that frame, we got rid of all the garbage. So we can basically create the garbage in a place that we know and make cleaning it up part of the process of calling functions. Equivalently, when we're done with the main function, we leave that stack frame, and then all the things that we made for it can go away.

A

So this is a much better way of creating things that are only needed temporarily. Obviously, if you need something for a longer period of time, something that outlives the lifetime of that function, you may still need heap allocation. But under many circumstances, if you look at regular code, if you just look at any function you write, a huge amount of the things that you make are temporary: they're only needed for the lifetime of that function.

A

There's a small caveat, which is that the exact size for each function has to be known at compile time, and this is why it's hard to do this kind of stuff in Ruby or JavaScript without a JIT, right? You may look at a bunch of local variables, but you can't tell just by looking at the local variables in Ruby how much space you need. Maybe you could put enough space for a pointer there, but you can't put enough space for the whole square object, because it has other stuff in it.

A

So, let's go back to Rust. I hope that when I showed you this example in the first place, you thought it looked pretty similar to what you might have written in Ruby. Obviously not the syntax, but the basic memory management part of it, right? And again, just like in the C code that we wrote before, when you make a new square in this case, it's actually going into the space that we created at compile time.

A

So we made some space at compile time for the square, and we put the square into that space. When we call `diagonal`, again, we call that function, and it has scratch space for its stuff. When it returns, it basically pops and all the stuff gets collected. When main returns, it pops and it gets collected. And here's the interesting thing about this, again.

A

If you think about the code that you normally write, a huge amount of it is creating variables that are only used in this function or in functions that it calls directly, and all of those variables could be stack allocated, and it really adds up. So this is another trick. If you can eliminate heap allocation of all temporary things that don't outlive the time that the function is called, you can eliminate not just the garbage collection, right? You can obviously free things manually, and then the freeing will not require a garbage collection pass.

A

But even freeing has some costs, right? If you think about how the allocator has to work, when you free something it has to go find the thing and pull it out, and when you allocate something it has to find a slot to put the next thing in, right? So there's some cost to using malloc that just deals with the fact that putting things into a big filing cabinet and taking them out requires some bookkeeping.
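A minimal sketch of the difference in Rust, assuming the `Square` struct described earlier. The function names `on_stack` and `on_heap` are made up for this illustration. Plain local values live in the function's stack frame, while `Box::new` is the explicit way to ask the allocator for heap space.

```rust
use std::f64::consts::SQRT_2;
use std::mem::size_of;

struct Square {
    side: f64,
}

impl Square {
    fn diagonal(&self) -> f64 {
        self.side * SQRT_2
    }
}

fn on_stack() -> f64 {
    // `s` lives in this function's stack frame; its size is known at
    // compile time.
    let s = Square { side: 10.0 };
    s.diagonal()
} // the frame is popped here; there is nothing to free

fn on_heap() -> f64 {
    // `Box::new` asks the allocator for heap space, so the allocator's
    // bookkeeping is involved when the box is created and dropped.
    let s = Box::new(Square { side: 10.0 });
    s.diagonal()
} // the box is dropped here and its memory is handed back to the allocator

fn main() {
    println!("a Square takes {} bytes", size_of::<Square>());
    println!("{} {}", on_stack(), on_heap());
}
```

An optimizing compiler is free to remove the heap allocation in a case this small, so this shows where values conceptually live rather than serving as a benchmark.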

A

The bookkeeping actually adds up quite a bit, so stack allocation is an important trick. So now we already have two things: we can statically dispatch and inline, and we can stack allocate, and these already are very big improvements over what you might have written in Ruby. Now, you've probably looked at structs at this point and said, "Well, structs are interesting, but I'm used to being able to do inheritance in Ruby. I'm used to being able to share code." I showed you an example of a circle and a square.

A

What,

if

I

want

to

have

some

implementation

for

all

shapes?

So

in

an

object-oriented

language,

a

traditional

object-oriented

language,

you

would

inherit

from

a

shape.

This

is

kind

of

like

the

hello

world

of

OOP,

object-oriented

programming,

and

Rust

recognizes

that

you

want

to

be

able

to

do

this

kind

of

stuff,

but

it

implements

it

in

a

little

bit

of

a

different

way

and

the

way

it

happens

to

implement

it

is

both,

in

my

opinion,

more

powerful

than

simple

object-oriented

programming,

but

also

much

faster.

So,

let's

go

look

again.

We

have.

A

We

have

our

struct

circle,

our

struct

Square,

and

now

we're

going

to

create

a

new

trait.

And

a

trait

is

basically,

you

could

think

of

it

like

a

mixin

in

Ruby,

except

that

it

may

not

come

with

all

the

default

implementations,

so

the

enumerable

mixin

in

Ruby

would

be

easy

to

describe

as

a

shape

in

rust.

A

Sorry,

a

trait

in

Rust,

where

the

each

method

would

be

a

mandatory

method

without

a

default

implementation,

and

then

you

would

have

many

other

methods

that

would

have

default

implementations

that

called

the

each

method

right.

So

a

trait

is

effectively

a

list

of

methods,

some

of

which

are

mandatory

and

some

of

which

are

derived

from

the

things

that

you

provide

as

an

implementation.

A

Typically,

there

are

multiple

implementation

blocks,

so

you

have

an

implementation

block

for

what

are

known

as

concrete

methods

or

sort

of

the

methods

that

are

specific

to

that

struct,

and

then

you

have

implementations

of

various

traits

and

those

traits

again

are

sort

of

like

mix-ins

in

Ruby

in

that

they

represent

cross-cutting

concerns

and

they

aren't

required

to

be

in

a

strict

hierarchy.

Just

like

Ruby

mix-ins

are

not

required

to

be

in

a

strict

hierarchy.
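The slides for this part are not in the transcript, so the following is only a sketch of what the description suggests: two structs, a `Shape` trait with one mandatory method, one method with a default implementation derived from it, and separate `impl` blocks for concrete methods and for the trait. The field names, the `describe` method, and `Circle::new` are assumptions added for illustration.

```rust
use std::f64::consts::PI;

struct Circle {
    radius: f64,
}

struct Square {
    side: f64,
}

trait Shape {
    // Mandatory: every implementor has to provide this.
    fn area(&self) -> f64;

    // Default implementation, derived from the mandatory method
    // (a hypothetical helper, in the spirit of Enumerable building on `each`).
    fn describe(&self) -> String {
        format!("a shape with area {:.2}", self.area())
    }
}

// Inherent impl block: methods specific to this struct.
impl Circle {
    fn new(radius: f64) -> Circle {
        Circle { radius }
    }
}

// Trait impl blocks: one per (trait, type) pair, with no hierarchy required.
impl Shape for Circle {
    fn area(&self) -> f64 {
        PI * self.radius * self.radius
    }
}

impl Shape for Square {
    fn area(&self) -> f64 {
        self.side * self.side
    }
}

fn main() {
    let s = Square { side: 2.0 };
    let c = Circle::new(1.0);
    println!("{}", s.describe());
    println!("{}", c.describe());
}
```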

A

So

now

that

we've

implemented

the

traits,

let's

actually

go

and

use

them

in

our

main

function.

And

here

we

make

a

new

square,

we

make

a

new

circle,

and

we

call

s.area(),

c.area(),

and

again

just

like

before,

because

Rust

knows

that

we

have

a

square

and

a

circle,

it's

going

to

point

at

the

exact

implementation

of

the

trait

that

we

have.

So

it's

not

like

it's

saying,

well,

you

know,

Shape

is

an

interface,

so

we'll

just

dynamically

dispatch

that

interface

at

runtime.

A

It

knows

at

compile

time

exactly

what

thing

we

want

to

dispatch

to,

and

it

dispatches

to

that.

So

far,

so

good.

We

still

have

static

dispatch.

We

still

have

inlining

and

all

the

optimizations,

but

you

might

be

thinking,

well,

you

know,

that

println!

is

sitting

right

there

in

my

main

function.

What

if

I

wanted

to

pull

it

out

to

a

different

function?

If

I

do

that,

doesn't

that

mean

that

I'm

gonna

end

up

with

dynamic

dispatch

again

right?

A

Doesn't

that

mean

that

the

other

function

has

no

idea

what

the

concrete

thing

I'm

actually

calling

it

on

is?

To

handle

that,

Rust

has

a

thing

called

trait

bounds,

and

so

here's

how

you

talk

about

it.

So

it's

a

little

different

than

perhaps

what

you

might

have

done

in

another

language

with

their

interfaces.
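A minimal sketch of a trait bound, assuming a `Shape` trait like the one described earlier; the function names `describe_area` and `describe_area_dyn` are hypothetical. The generic version is compiled separately for each concrete type it is called with, so the call to `area` is statically dispatched. The trait-object version is included only for contrast, as the runtime-dispatched alternative.

```rust
trait Shape {
    fn area(&self) -> f64;
}

struct Square {
    side: f64,
}

struct Circle {
    radius: f64,
}

impl Shape for Square {
    fn area(&self) -> f64 {
        self.side * self.side
    }
}

impl Shape for Circle {
    fn area(&self) -> f64 {
        std::f64::consts::PI * self.radius * self.radius
    }
}

// Trait bound: `T` can be any type that implements `Shape`. The compiler
// generates a specialized copy per concrete `T`, so `shape.area()` is a
// direct call that can be inlined.
fn describe_area<T: Shape>(shape: &T) -> String {
    format!("area: {:.1}", shape.area())
}

// For contrast: a trait object. Here the concrete type is erased and
// `area` is looked up through a vtable at runtime.
fn describe_area_dyn(shape: &dyn Shape) -> String {
    format!("area: {:.1}", shape.area())
}

fn main() {
    let s = Square { side: 2.0 };
    let c = Circle { radius: 1.0 };
    println!("{}", describe_area(&s));
    println!("{}", describe_area(&c));
    println!("{}", describe_area_dyn(&s));
}
```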

A

So,

basically,

you

can

see

that

you

can

start

writing

abstractions

that

are

pretty

high

level

in

the

sense

of

sort

of

Ruby

or

Go,

where

you

can

say:

I

take

anything

that

implements

this

interface,

but

because

of

the

particular

way

that

it's

implemented

in

Rust,

you

still

get

static

dispatch,

so

you

can

start

building

things

up

and

because

of

the

typical

idiomatic

way

you

write

things

in

Rust,

A

You

end

up

with

big

trees

of

things

that

are

very

transparent

to

the

compiler,

and,

in

my

opinion,

traits

are

where

the

magic

is.

Up

until

now,

if

you

just

look

at

structs

and

impls,

before

I

talked

about

traits,

it's

definitely

an

improvement

over

the

equivalent

C

code,

because

the

C

code

is

pretty

gnarly,

no

matter

what,

but

it

might

feel

like,

well,

I

can't

really

write

anything

higher-level.

If

I

start

needing

to

have

multiple

implementations

of

something

I'm

gonna

get

stuck.

I'm

not

gonna

be

able

to

write

the

functions

A

I

need.

I'm

going

to

end

up

writing

very

concrete

code,

but

there's

a

way

to

write

functions

that

take

trait

bounds.

Again,

here

it's

a

function

that

takes

any

implementation

of

Shape.

But

what

this

is

saying

is

not

that

you

should

go

figure

out

at

runtime

what

kind

of

thing

you

need;

it's

saying,

at

compile

time,

figure

it

out.

That

allows

you

to

create

many

abstractions

and,

A

However

deep

you

go,

end

up

with

the

compiler

being

able

to

see

the

whole

picture,

so

you

get

to

write

methods

that

support

many

different

types,

but

you

still

get

static

dispatch

and

inlining,

and

if

you

remember

at

the

beginning,

I

showed

you

some

code

with,

like,

string.chars().all(...),

right?

That

all

method

is

actually

implemented

as

a

generic

method

on

all

iterators,

but

at

compile

time

the

compiler

says:

oh,

I

know

that

you

actually

have

the

chars

iterator

here.
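To make the `chars` and `all` point concrete, here is a small sketch. The transcript only mentions a `string.chars().all(...)` call, so the whitespace predicate and the function name `is_blank` are assumptions for illustration. `all` is a method defined generically on the `Iterator` trait, yet at this call site the compiler knows the concrete iterator type returned by `chars`, so the call can be resolved statically.

```rust
// Hypothetical helper: true when every character is whitespace
// (an empty string counts as blank, since `all` is vacuously true).
fn is_blank(s: &str) -> bool {
    // `chars()` returns the concrete `std::str::Chars` iterator;
    // `all` comes from the generic `Iterator` trait.
    s.chars().all(|c| c.is_whitespace())
}

fn main() {
    println!("{}", is_blank("  \t\n"));
    println!("{}", is_blank(" rust "));
}
```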

A

I

know

that

this

is

not

just

any

random

iterator

in

the

world.

I

know

that

you

have

the

chars

iterator,

and

so

it's

able

to

actually

inline

that

actual

code

directly

into

the

program.

And

maybe

you're

starting

to

get

a

sense

for

where

all

this

is

going.

Now,

if

you

want

to

learn

more

about

how

traits

fit

into

the

abstractions

story,

there's

a

really

great

article

on

the

Rust

blog

called

"Abstraction

without

overhead:

traits

in

Rust."

A

A

Interestingly,

you

actually

know

all

the

things

from

what

I

already

explained

to

understand

how

closures

work

in

Rust,

but

I'll

go

slow.

So,

first

of

all,

Ruby

has

a

thing

called

tap

in

it.

It

started

off

in

Rails,

but

ended

up

in

Ruby,

and

the

basic

idea

is

that

it's

a

method,

that's

on

all

objects,

so

it's

implemented

on

object

and

it

takes

a

block

and

that

block

just

takes

the

object,

but

the

whole

method

tap

returns

the

outer

object.

A

So

the

basic

idea

is

that

it

sort

of

abstracts

over

the

pattern

of,

like,

x

=

something,

do

some

stuff,

and

then

return

x,

which

you've

probably

experienced

a

lot

of

times,

and

it

can

be

much

nicer

to

use

tap,

and

so

you

can

implement

tap

in

rust

and

I'm

not

going

to

go

into

the

details

of

how

it's

implemented.

A

It's

like

five

lines

of

code

and

a

little

dense,

but

you

can

implement

the

equivalent

thing

in

rust

and

the

way

you

use

it

in

rust

is

actually

very

equivalent

to

how

it

looks

in

Ruby.

So

I

made

a

vector,

an

array,

here.

I

started

off

with

the

string

"rails",

I

tapped

it,

then

I

extended

on

all

the

args

from

the

environment.

A

So

that's

like

ARGV,

and

then

at

the

end

I

pushed

another

string

on

it,

which

is

h,

and

then

that

whole

thing,

v,

gets

the

value

of

the

multiple

taps

and

now

has

the

vector

that

I've

been

working

on

the

entire

time.

And

the

reason

why

you

need

tap

here

is

because

extend

and

push

actually

don't

return

the

original

object.
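The talk's own tap implementation is not shown in the transcript, so what follows is one possible sketch rather than the speaker's code. The `Tap` trait, the `build_args` helper, and the `"-h"` string are assumptions (the transcript only says a string "h" was pushed). The longhand function is included to show the three-line pattern that tap abstracts over.

```rust
// One way to write `tap`: a trait with a default method, implemented
// for every sized type via a blanket impl.
trait Tap: Sized {
    fn tap<F: FnOnce(&mut Self)>(mut self, f: F) -> Self {
        f(&mut self); // let the closure mutate the value...
        self          // ...then hand the value itself back
    }
}

impl<T> Tap for T {}

// Hypothetical helper mirroring the example described in the talk:
// start with "rails", extend with the given args, then push one more string.
fn build_args<I: Iterator<Item = String>>(args: I) -> Vec<String> {
    vec!["rails".to_string()]
        .tap(|v| v.extend(args))
        .tap(|v| v.push("-h".to_string()))
}

// The same thing without `tap`: bind, mutate, return.
fn build_args_longhand<I: Iterator<Item = String>>(args: I) -> Vec<String> {
    let mut v = vec!["rails".to_string()];
    v.extend(args);
    v.push("-h".to_string());
    v
}

fn main() {
    // Skip the program name so only the user-supplied arguments are added.
    let v = build_args(std::env::args().skip(1));
    println!("{:?}", v);
    println!("{:?}", build_args_longhand(std::env::args().skip(1)));
}
```

Because the closure type `F` is a generic parameter, each `tap` call is compiled for its specific closure, which is what lets the compiler inline it.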

A

So

if

I

was

to

just

say,

v

=

vector.extend(...).push(...),

somewhere

along

the

line,

at

least

in

Rust,

we'll

get

a

compile-time

error,

but

it's

not

going

to

do

what

you

want,

and

tap

lets

you

do

what

you

want,

basically.

So

that's

great,

but

I

think

if

you're

used

to

these

kinds

of

abstractions

in

Ruby,

you

kind

of

come

to

get

wary

of

them,

because

every

single

time

you

use

an

abstraction

like

this,

you

have

to

say

to

yourself:

is

it

really

worth

it?

Like,

maybe

I

should

have

just

done

A

The

three-liner.

And

you'll

see

blog

posts

out

there

that

say,

like,

don't

bother

with

stuff

like

this.

It's

confusing,

but,

more

importantly,

it's

slow.

So

you

should

just...

the

three-liner's

fine,

everyone

knows

what

it

means,

etc.

But,

interestingly,

in

rust,

this

program

will

actually

perform

equivalently

to

the

longer

version

of

the

program

in

Rust.

This

idea

is

called

zero

cost

abstractions,

which

is

what

that

blog

post

I

showed

before

goes

into

more

detail

about,

and

you

may

already

have

an

intuition

for

how

that

works

with

traits

and

structs.

A

But

how

can

that

work

with

closures?

How

does

what

we

learned

so

far

help

us

understand

how

closures

end

up

being

free

in

Rust?

So

the

first

thing

is:

closures

are

normally

stack

allocated

in

Rust,

because

if

you

think

about

how

you

use

closures

in

Ruby,

99%

of

the

time

you're

making

a

block

to

pass

to

a

function,

that's

just