►

From YouTube: Rust & Tell - April 2021

Description

All details: https://berline.rs/2021/04/27/rust-and-tell.html

00:00:00 Introduction

00:04:00 Xavier Denis - Safer Rust: program verification with Creusot

00:33:20 Bernhard Borges - Using Rust to build open-source distributed networks

01:04:16 Andreas Klostermaier - Cropping.Rocks: a Rust/Rocket/WASM microservice

A

And

as

a

presenter

and

organizer,

my

name

is

evans

and

we

have.

I

have

a

co-organizer

called

scotland.

I

think

he

is

not

available

today,

probably

if

you

have

been

to

roast

and

tell

from

previous

session

on

march,

you

know

a

bit

about

me

now:

I've

been

taking

over

from

quite

few

strong

people

on

the

community

here

in

ross

center.

A

A

A

The

rosentel

is

an

online

meeting

meetup,

it

is

broadcasted

on

zoom

and

we

also

have

zoom

chat

where

you

will

be

able

to

ask

your

question

to

the

speakers.

We

also

have

breakout

rooms.

That

is,

after

the

second

talk

today

we

will

have

breakout

room.

You

will

be

assigned

to

a

room

and

for

a

few

minutes

to

chat

to

other

people

to

other

participants,

and

if

you

feel

alone

in

your

room,

you

are

always

have

the

option

to

come

back

to

the

main

room

and

we

can

assign

you

to

another

room.

A

Also

after

the

breakout

room,

we

will

have

the

last

stop

as

well.

So

just

keep

that

in

mind,

we

also

have

matrix

chart

available.

So

you

can

ask

your

question

on

matrix

chart

or

you

can

ask

your

question

directly

on

the

zoom

this

stream.

Would

this

meetup

will

be

streamed,

live

on

youtube

and

it

will

be

recorded

and

posted

afterwards.

A

So

if

you

are

not

willing

to

to

show

your

face

on

the

video,

probably

this

might

be

the

best

time

to

to

turn

off

the

video.

If

you

have

questions

you

can

type

it.

As

I

said

on

matrix

chat

or

on

zoom,

we

will

be

able

to

pick

it

up

and

ask

the

speaker

at

the

end

of

the

talk

and

also

to

highlight

that

this

community

is

here

for

you,

so

we

need

to

build

it

all

together.

A

A

Today

we

have

three

talks.

The

first

one

will

be

safer,

rust

program,

verification

represent

the

with

salvia

dennis

and

the

second

talk

will

be

using

ros

to

build

open

source

distributed

network

from

bernhard

burgess

and

he's

from

florence,

and

the

last

stop

will

be

cropping

rocks.

Roast

rocket

was

microservice

from

andreas.

B

B

We

often

hear

the

phrase

you

know

formally

verifying

a

program

or

a

formally

verified

code

or

things

like

that.

But

it's

like

what

does

that

actually

mean,

because

when

you

ask

people

to

actually

define

it,

they

give

you

all

sorts

of

different

answers.

It's

things

like

even

unit

tests

to

sort

of

more

advanced

techniques

like

property

tests,

or

you

get

all

the

crazy

types

that

you

find

in

languages

like

haskell

or

sometimes

in

rust

as

well.

B

Where

you

give

it

a

program,

it

tells

you

yes

or

no,

and

if

no,

it

usually

gives

you

a

pretty

decent

error

message

that

sort

of

explains

why

your

program

doesn't

do

whatever

the

type

say

it

does

and

then,

as

we're

willing

to

trade

a

little

bit

more

of

automation

and

ease

of

use.

We

typically

get

a

lot.

B

We

get

interactive

theorem

provers,

where

you

basically

you're

doing

math

on

the

computer,

and

it

just

turns

out

that

you're

doing

math

about

your

programs

and

you

can

do

all

sorts

of

crazy

stuff

there,

but

it

gets

very,

very

complicated,

very,

very

fast,

but

then

in

the

middle

there's.

This

point,

which

I

kind

of

I

kind

of

think

it's

a

sort

of

sweet

spot

in

this

sort

of

verification

area,

which

is

deductive

verification

where

you

have

mostly

automated

techniques,

even

though

I

kind

of

plotted

it

pretty

low.

B

You

have

mostly

automated

techniques,

but

you

still

need

a

good

amount

of

expertise

to

to

get

very

far

and

you're

relatively

expressive

in

what

in

what

you

can

say.

So

what

actually

is

deductive

verification?

Well,

the

idea

is,

as

the

name

sort

of

implies.

We

want

to

deduce

things

about

our

programs.

We

want

to

reason

about

them,

so

the

first

thing

is

going

to

be.

We

need

a

logic

in

which

to

reason

about

our

programs,

and

so

typically,

what

we

use

is

what's

called

a

logic.

B

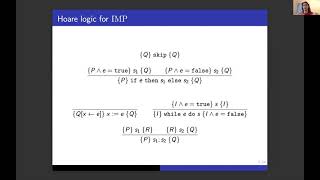

When

we

evaluate

the

code

in

c,

then

we

get

the

post

condition

q,

and

this

is

very

nice

because

it

gives

us

a

bunch

of

simple

rules

that

we

can

then

just

apply

to

the

to

the

code

of

our

program

to

sort

of

reason

about

them,

and

so,

if

we

look

at

this

a

little

bit

more

concretely,

here's

like

a

little

logic

for

a

very

basic

example

language.

That's

often

used

in

like

academic

courses.

B

So

the

idea

is,

we

just

have

if

statements,

while

loops

assignments

down

here

and

like

sequencing,

and

you

can

see

you

can

see

how,

like

the,

how

we

define

these

rules

like,

for

example,

for

skipping,

we

just

say

that:

well,

nothing

happens.

So

if

we

have

a

precondition,

q

after

doing

a

skip,

we

still

have

q

right,

whereas,

for

example,

for

sequencing

to

be

able

to

say

that

we

have

given

p

at

the

beginning.

After

evaluating

these

two

instructions,

we

have

q.

B

We

need

to

know

that

evaluating

s1

is

going

to

give

us

some

logical

phrase,

r

and

then

working

from

r.

When

we

evaluate

s2,

we

get

q,

and

so

we

can.

We

can

define

all

these

rules

and

we

can.

We

can

do

proofs

about

our

programs

with

this,

but

we

can

ask

ourselves

a

very

reasonable

question,

which

is:

how

do

we

know

we

actually

have

the

right

rules

right,

like

these

are

choices

that

we

have

to

make.

So

what

happens?

B

If

we

actually

there's

a

rule

out

there,

that's

a

little

bit

simpler

and

lets

us

do

more

proofs

more

easily,

and

it

turns

out

people

thought

about

this

problem

and

they

came

up

with

this

idea

of

the

weakest

precondition.

Calculus

and

the

idea

is

to

just

take

a

different

view

of

this

problem

of

of

these

rules

and

instead

just

work

from

the

post

condition.

B

B

So

we

don't

have

to

do

a

lot

of

the

busy

work

and

it's

been

applied

to

a

bunch

of

different

languages.

So

you

get

functional

languages

like

o'camel,

but

then

you

also

have

like

you,

have

a

bunch

of

different

deductive

verifiers

for

c

for

java

for

all

sorts

of

different

imperative

languages,

with

varying

degrees

of

success

each

time

and

that

varying

degrees

of

success

tends

to

be

because

of

pointers.

B

So

the

difficulty

in

a

systems,

language,

like

c

or

in

rust,

is

handling

pointers.

It's

handling

pointers

both

when

we're

just

writing

code,

because

you

know

in

c

we

get

the

classic

like

seg

faults

and

use

after

free

errors

and

all

sorts

of

problems

that

are

typically

rooted

in

pointers

and

the

same

way.

That

complicates

our

sort

of

informal

reasoning

and

the

way

we

write

our

programs,

it's

going

to

complicate

the

way

we

prove

and

think

about

our

programs

in

a

more

formal

way.

B

And

so

researchers

have

spent

a

lot

of

time

attacking

this

problem

c

and

have

come

up

with

a

bunch

of

specialized

tools

that

allow

us

to

structure

our

programs

like

formally

in

and

reasons

about

them

more

effectively,

but

it

it.

It

means

that

all

these,

like

a

deductive

techniques,

are

going

to

be

a

lot

less

efficient

because

it's

just

a

fundamentally

more

complicated

thing,

and

so

we

can

see

like

what

I

mean

here,

where

even

in

the

most

simple

c

program,

we

have

a

lot

of

things

that

we

have

to

consider.

B

If

we

want

to

talk

about

what

it

means

for

it

to

be

correct,

something

as

simple

as

incrementing.

Two

pointers

is

actually

hiding

a

lot

of

sort

of

potentially

undefined

behavior

right.

So

if

we

just

want

to

say

like

okay,

well,

does

this

program

increment

not

crash?

Does

it

run?

Does

this

function

call

succeed

well

to

know

that

we

need

to

know

that

a

and

b

are

both

initialized,

that

they

point

to

initialized

memory

and

the

question

of

allegating

here

isn't

important

in

this

example,

but

it

often

is

even

for

fundamental

things.

B

B

It

just

takes

all

these

problems

off

the

table

for

rest

code,

and

so

that's

exactly

like

if

we

translate

the

same

example

into

rus,

that's

exactly

what

we

we

can

conclude

informally

right

like

if

we

have

two

borrows

a

and

b

on

some

integer

well,

we

can

freely

just

increment

the

value

of

a

with

the

value

of

b,

and

we

don't

have

to

worry

about

any

sort

of

memory

problems

right.

We

know

it's

going

to

run.

We

know

it's

going

to

succeed.

B

We

actually,

we

would

like

to

even

say

that

not

only

is

it

going

to

succeed,

but

the

value

of

a

is

going

to

be

the

value

incremented

by

whatever

was

inside

of

b,

and

so

the

idea

is

that

we

want

to

leverage

the

same

properties

of

the

type

system

that

allow

us

to

write,

simple

rust

code,

that

use

pointers

and

not

worry

about

memory

like

memory

issues,

seg

faults,

all

sorts

of

stuff.

We

want

to

leverage

that

in

verification

as

well

right,

we

don't

like.

B

And

so

the

way

it's

going

to

work,

the

way

closure

works

is

that

it

translates

russ

code

into

a

functional

language

and

that

we

can

re-exit.

We

can

reuse

existing

tools

for

functional

language,

verification

and,

and

then

prove

properties

on

that

translation

and

know

that

they

hold

about

our

original

russ

program

and

so

the

key,

the

key

the

key

to

kojo

is

this

technique

developed

by

the

authors

of

a

paper

called

rust

horn,

which

is

a

verifier

for

rust,

where

we

use

a

little

trick

to

represent

mutable

boros.

B

We

use,

what's

called

non-determinism,

where

we're

going

to

guess,

basically

we're

going

to

guess

values

about

the

borrowers

and

turns

out

we're

just

allowed

to

do

that,

so

this

part

might

get

a

little

bit

technical.

So

if

you

don't

follow

fully,

it

doesn't

really

matter

we'll

get

back

to

sort

of

more

concrete

examples

in

a

few

minutes,

but

I

I

just

wanted

to

give

a

little

bit

of

intuition

for

this

translation.

So

the

idea

is

if

we

have

some

value

a

and

we

create

a

borrow

b

from

a

for

some

lifetime

alpha.

B

Well,

as

we

know

in

rust,

when

we

borrowed

a

value,

we

can

no

longer

access

that

original

name.

We

had

for

the

value

right

like

once,

we've

borrowed

a

we

can't

use

a

until

we

give

until

alpha

is

done

until

the

lifetime

of

our

borrow

is

done,

and

that

also

means

every

modification

to

the

value

of

a

has

to

go

through

b

right.

B

B

So

that's

what

we

do

here,

where

we

just

write

like

5

into

b,

and

so

we

in

our

translation,

we're

updating

the

value

that

we

have

in

b,

whenever

we

would

get

to

the

to

the

mo.

That

b

is

dropped

that

our

our

borrow

is

given

up.

We

do

this

sort

of

magical

thing

here,

which

is

an

assume

where

we're

just

gonna

say

well,

it

turns

out

that

the

final

value

of

b

is

exactly

the

value

of

b

here

right

now,

and

so

through

this

sort

of

magic

trick.

B

We

can

conclude,

then,

that

a

must

be

5,

because

we

know

that

a

is

the

final

value

b

and

the

final

value

of

b

is

its

current

value

and

the

current

value

is

5.,

and

so

by

doing

this,

we've

actually

encoded

sort

of

mutable

pointers

into

a

functional

language

without

having

to

do

any

sort

of

complicated

things

like

functional

references.

We

get

this

nice

encoding,

that's

very

easy

to

verify

and

sort

of

sidesteps

all

the

challenges

with

verifying

mutable,

pointer

or

code

using

immutable

pointers.

B

It's

a

it's

a

challenging

proof

to

do,

but

what's

more

interesting,

is

that

we're

working

on

a

new

proof

with

the

authors

of

rust

horn,

which

is

much

stronger

and

much

more

extensible

and

notably,

would

allow

us

to

handle

things

like

unsafe

code?

Like

justify

mixing

unsafe

code

into

your

safe

code

and

then

verifying

all

that

together,

so

that

is

going

to

be

that's

the

current

work

and

should

be

very

interesting.

B

B

We

have

constants,

we

have

all

sorts

of

pure

expressions,

so

arithmetic

comparisons

function

calls.

Even

so

you

can

do

all

these

fun

things

in

your

specifications,

but

we've

also

added

two

sort

of

special

operators

with

this

rather

ugly

syntax,

which

are

t

at

fin

and

t

at

now,

which

we

use

to

refer

to

the

values

of

borrows

in

our

specifications,

and

so

we'll

look

at

that

just

in

a

second

and

what

that

means.

B

We

want

to

be

able

to

prove

what

it's

going

to

do

so

first,

we

have

to

describe

what

it's

going

to

do,

and

so

that's

what

we

write

up

here

and

these

little

pragma

clauses,

where

we

give

two

post

conditions

denoted

by

the

keyword,

insurers

and

we

say

the

final

value

of

a-

is

equal

to

its

original

value.

The

value

at

entry,

along

with

the

original

value

of

b,

whereas

the

final

value

of

b

is

exactly

its

original

value,

so

b

is

unchanged.

B

And

so

it's

a

relatively

concise

specification

that

gives

us

exactly

what

we

want

to

know

about.

That

tells

us

exactly

what

we

want

to

know

about

increment

right,

that

it

it's

a

function

which

calculates

the

increment

and

so

yeah.

So

the

value

of

at

fin

here

is

a

value

that

comes

from

the

from

the

future

of

a

borrowed

right.

B

In

fact,

I

think,

to

want

to

do

this

in

rust,

because

we

want

to

be

able

to

do

things

like

return,

a

borrow

that

is

actually

a

sub

part

of

some

other

bigger,

borrow

and

sort

of

talk

about

how

they're

related

together-

and

so

that's

that's

what

this

constructor

allows

us

to

do

so

yeah.

So

now

we

can

see

sort

of

how

we

actually

apply

this

and

how

we

actually

get

get

something

out

of

our

borrows

and

our

specifications.

B

So

we

have

our

increment

function

with

its

specification

up

here

and

we

just

want

to.

We

want

to

verify.

We

want

to

verify

that

these

two

assertions

are

statically.

True,

we

want

to

prove

that

they're

going

to

always

be

true,

and

so

I

mean

it's

sort

of

intuitively,

true,

of

course,

but

we

can

sort

of

step

through

and

see

what

our

tool

is

going

to

to

see

in

a

formal

sense

and

how

it

can

actually

conclude

that

those

two

assertions

are

true.

B

So

as

we

evaluate

our

code,

we

on

the

on

the

right

hand,

side.

Here

I

have

some

sort

of

logical

environment

which

is

just

sort

of

saying

how

the

tool

understands

the

state

of

the

rust

program

to

be

so.

We

we

evaluate

a

code.

So

for

the

moment

we

just

know

things

about

x

and

y,

and

we

get

to

this

point

and

we

perform

the

two

borrows

for

x

and

y.

So,

as

I

said

way

back

when,

when

we.

C

B

A

borrow

we're

going

to

guess

its

final

value

ahead

of

time

right,

so

we're

going

to

guess

some

value,

v1

and

some

value

v2.

We

don't

really

know

what

value

it

is,

but

we

we

know

it's

going

to

be

the

final

value

and

then

we're

going

to

call

the

increment

function,

and

so,

when

we

call

the

increment

function,

we

actually

we

end

up

recovering

sort

of

logically

the

post

conditions

right

like

we

we

have

when

we

call

a

function.

B

B

B

B

So

first

we

have

to

actually

describe

what

it

means

to

put

zero

in

every

cell

of

our

list.

So

we

have.

We

have

to

define

a

few

helpers

first.

So

we

have

this

little

annotation

logic

which

lets

us

write,

rush

functions

that

we

actually

can't

execute

at

runtime.

These

aren't

real

rush

functions,

but

they

allow

us

to

write

they.

B

They

allow

us

to

write

little

things

to

use

in

our

logic,

so

you

can

note

that

the

return

type

here,

for

example,

is

int

with

a

big

I

and

we're

talking

about

here

about

unbounded

integers

right,

not

like

u64,

u32

or

anything

like

that.

So

we

have

all

the

mathematical

integers

that

are

at

our

disposition.

B

B

When

we

look

up,

I

in

the

final

value

of

the

list

we're

going

to

find

zero

and

also

a

second

thing

we

know

is

that

the

length

of

the

list

is

unchanged,

which

means

that

we

haven't

added

or

removed

any

cells

of

our

list

right.

We

didn't

accidentally

delete

everything,

and

so

hopefully

that's

the

specification

is

satisfactory

to

everyone

and

so

then

to

actually

show

the

specification

to

be

true.

B

So

hopefully

I

didn't

go

too

fast

and

didn't

lose

everyone.

I'm

sorry.

If

I

wasn't

able

to

go

into

enough

detail,

but

I'd

be

happy

to

answer

any

questions

now

ordering

the

breakout

session

and

just

as

a

quick

summary,

some

of

the

ongoing

work

we

have

is

basically

just

extending

this

proof,

so

extending

the

proof

that

our

approach

is

correct.

As

I

said

we

want

to,

we

want

to

actually

formally

verify

our

proof

and

then

extending

the

kinds

of

rest

code

that

we

can

verify.

B

So

what

I

showed

right

now

is

how

we

can

verify

russ

code

that

uses

mutable

borrows,

of

course,

we'd

like

to

do

things

we'd

like

to

verify

code

that

uses

traits,

for

example,

rustcode

that

uses

closures

and

roscode

that

uses

types

like

cell,

which

sort

of

break

our

approach

and

so

that

that's

the

ongoing

work

and

future

work

for

us

right

now

so

yeah.

I

sorry

sorry

if

I

went

too

fast

everyone,

but

yeah

I'd,

be

happy

to

take

any

questions

you

have

now.

A

B

Yeah,

the

conclusion

is

it's

out

of

scope.

For

now

it's

asynchronous

programming

or

the

like

fine

grain,

concurrency

like

that

is

probably

going

to

be

it's

going

to

be

challenging.

I

think,

but

yeah

I

that

I

we

evaluated

to

the

extent

that

we

decided

not

to

evaluate

it

further,

just

because

it's

it's,

it's

probably

going

to

be

a

lot

of

work

on

its

of

it

in

its

own

right.

B

A

B

No,

I

don't

have.

There

is

no

plan

to

do

that,

and

in

fact,

though,

though,

logical

annotations

can

often

dominate

the

code

in

informally

verified

code,

I

think

it

really

does

make

the

most

sense

to

embed

them

alongside

with

the

code,

because

they

often

correspond

to

very

specific

program

points

right

like

you,

you

don't

want

your

assertion

to

be

anywhere

in

your

program.

You

want

it

to

be

exactly

on

line

73

and

same

thing

with

like

loop

invariants.

B

If

you

have

multiple

loops

you,

you

want

to

say

that

this

invariant

is

for

the

first

loop,

not

the

second

one.

So

it

is

it's

true.

It

is

aesthetically

less

pleasing

to

look

at

formally

verified

code

when

it's

90

assertions

and

10

code,

but

I

I

think

that's

that's

sort

of

how

it

has

to

be

for

it

to

make

the

most

sense.

B

A

C

A

D

Hi

thanks

for

having

me

thanks

for

being

here

and

thanks

javier.

This

was

very

interesting

talk

and

he

actually

derailing

me

a

little

bit

because

I

have

a

little

bit

of

high

calculus.

So

now,

I'm

off,

of

course,

thinking

half

of

which

is

that

of

what

I

shouldn't

be

thinking

about,

but

anyway,

so

I'll

talk

today

is

a

program

distributed

system

of

the

act

marine.

D

Let

me

just

give

you

a

little

bit

background.

Fluence

labs

builds

open

source,

peer-to-peer

network

components,

development

tools,

support

systems

and

all

the

development

is

deeply

rooted

in

rust,

for

pretty

much

all

the

reasons

in

terms

of

safety.

Javier

just

pointed

out,

and

we

all

know,

that's

why

we

love

it

and

our

goal

is

really

to

empower

developers

to

efficiently

and

reliably

build

peer-to-peer

applications.

D

So

if

you

haven't

done

much

in

the

distributed

space

or

peer-to-peer

applications,

you

might

just

say

well,

all

I

need

is

slip

p2p

and

everything's

done

and

gone.

Well,

that's

not

really

the

case.

It

gives

you

the

basis

to

build

on

and

if

you

ever

had

to

build

on

lib

p2p

in

terms

of

application

services

back

in

systems,

you

probably

have

not

had

a

very

good

experience

at

all

and

the

failure

rate

seems

to

very

very

high,

and

one

of

the

things

we

offer

is

is

is

a

concept

and

the

tooling.

D

D

Well,

there's

a

variety

of

reasons

for

it,

but

at

the

service

level,

one

of

the

key

aspects

is

that

peer-to-peer

offers

something

that

your

traditional

client

server

architecture

does

not

offer,

and

that's

actually

the

request

response

pattern

in

your

typical

client

server

environment.

You

basically

have

requests

you

process

response.

You

have

a

request

to

process

response,

so,

for

example,

if

you

want

to

do

a

credit

card

authorization-

and

I

don't

know-

send

a

sms

to

to

the

user,

you

basically

call

your

stripe.

Ap

api.

D

So,

on

a

sudden,

you

can

do

very,

very

complex

and

computational

intensive

computation,

computational

intensive

tasks

on

thin

clients

like

iot

devices

and

mobile.

Well,

not

that

they're

weak

these

days,

but

a

particular

iot

devices,

because

you're

really

offloading

the

entire

application

flow

to

the

peers

and

that's

where

ackmarine

comes

in,

and

this

is

very

really

helps

you

as

a

developer

to

to

to

take

advantage

of

peer-to-peer

systems.

So

aquamarine

is

comprised

it's

a

bunch

of

stuff

and

one

is

the

aquascripting

language

which

basically

is

a

high-level

language

to

write

peer-to-peer

applications.

D

D

Okay?

So,

in

order

to

do

this,

you,

you

nee,

you

need

a

foundation,

and

this

is

where

now

it's

timing,

a

little

bit

heavier

aquarine

is

based

on

pi,

calculus

and

and

it's

a

process

calculus,

that's

geared

towards

modeling

concurrent

systems

with

dynamic

topologies,

and

I'm

not

going

to

go

into

detail.

But

one

of

the

big

challenges

in

modeling

and

verifying

systems

in

dynamic

topologies

is

that

that

in

a

peer-to-peer

network

it

is

the

expectation

that

a

peer

drops.

D

It's

not

the

exception,

and

you

need

to

be

able

to

handle

that

and

you

need

to

be

able

to

handle

that

formally

and

you

need

to

be

able

to

handle

that

your

programs

function

when

those

kind

of

events

happen-

and

this

is

where

sort

of

the

the

pi

calculus

foundation

comes

in,

and

the

modeling

of

the

systems

and

anybody

who's

looked

at

any

of

the

calculate.

There's

a

it's

just

a

handful

plus

of

operators

which

are

extremely

powerful

to

to

get

a

lot

done.

D

D

But

it's

basically,

you

know

you

execute

something

either

in

sequential

apparel,

you

iterate

over

results

and

you

have

branching

and

then

you

have

an

identity

and

then,

if

you

look

at

it

from

an

instruction

statement

in

this

particular

light

pink

line,

basically

it

says

we

have

a

service

dht

that

has

a

function

put,

and

we

want

to

call

that

function

of

that

service

on

that

particular

peer,

one,

two

d3

node

and

give

it

the

argument

list,

which

is

key

and

value.

So

we

get

up.

D

Think

of

so

on

the

right.

We

have

the

same

basic

layout

of

the

low-level

language

and

on

the

left.

We

have

an

interaction.

So

the

big

circle

is

a

academia

network.

The

square

ones

are

just

various

nodes

peers

on

in

that

network

and

then

the

little

dots

are

services

one

or

more

for

each

node

on

throughout

the

network.

D

You

want

to

add

a

file,

you

want

to

add

an

image,

you

want

to

add

an

emoji

or

more

than

one,

and

you

could

do

this

through

various

api

services

on

the

client

side

or

you

could

do

it

on

a

peer-to-peer

environment

and

if

you

do

it

on

a

peer-to-peer

environment,

you

basically

start

marking

up.

If

you

will

your

your

message

with

those

very

through

various

services

that

then

eventually

ends

along

the

way

ends

up

at

that

chrome.

Consumer

of

that

message-

and

I

mean

that

was

just

a

very

small

example.

D

So

I

showed

you

the

low

level

language,

because

the

low

level

language

is

first

of

all,

it's

been

long

around

than

aqua

script

and

to

me

it,

it

really

shows

you

from

a

sort

of

almost

like

pseudocode

what

happens,

but

anybody

who's

ever

dealt

with

list

scheme

whatever

and

have

to

wrangle.

Parentheses

and

nesting

knows

that

is

it's

a

highly

ineffective

way

of

writing

large

programs.

It's

not

just

ineffective,

it's

extremely

painful,

especially

if

there

are

change

requirements.

D

So

if

you

look

there

the

service

greeting,

basically

that

that

references,

a

service

generically

by

its

in

by

signatures,

so

we

have

a

greeting

service

out

there

somewhere,

which

is

basically

takes

a

string

and

a

boolean

and

returns

a

string.

So

this

is

not

the

service.

This

is

the

representation.

So

if

you

are

coming

from

a

blockchain

site,

think

of

it

as

as

your

abi

for

a

smart

contract.

D

We

want

to

have

this

computation

through

the

greeting

service

itself,

where

the

function

is

greeting,

taking

the

name

and

greeter

parameters

and

then

returns

the

result,

and

that's

really

compact,

it's

very

easy

and,

as

I

said,

we

want

to

help

developers.

We

want

to

empower

developers

with

aquamarine

to

successfully

build

peer-to-peer

application

and

clearly,

if

you

look

at

the

left,

the

right

kind

of

is

is

not

where

you

want

to

be.

D

An

additional

compiled

target

in

we

have

is,

is

a

text

script.

So

basically

you

can

instruct

the

compiler

to

just

say

you

know

what

I

don't

want,

just

a

low

level

representation.

I

want

this

wrapped

in

in

a

typescript

stub

and

then

you

just

gotta,

integrate

it

in

your

front.

End

application.

It's

super

neat

and

pretty

powerful

and

saves

you

from

a

development

perspective

a

lot

of

time.

D

And

if

you

look

at

it

in

the

two

services

we're

using

for

the

demonstration

here,

we

have

a

echo

service

which

is

basically

a

practice

proxy

service

for

whatever

you

want

it

to

be,

including

it

could

be

a

database

service

and

in

this

particular

call

where

instance,

we

we

give

the

echo

service.

We

give

it

an

array

of

strings

and

we

turn

on

a

vector

of

those

structs

which

include

the

string

and

that's

compiled

to

webassembly.

D

And

then

we

have

a

greeting

service

where

we

want

to

which

was

the

one

which

showed,

and

it

basically

takes

your

your

name.

So

it's

basically

a

hello

world

plus,

and

it

takes

you

a

greeter

whether

it's

agreed

or

not.

So

it's

either

high

or

bi.

And

now

we

want

to

compose

those

two

services

in

the

sense

that

we

want.

D

The

same

we

got

the

same

two

services

and

basically,

what

we're

specifying

here

is.

We

basically

say:

okay,

our

echo

service

is

on

node

one

and

we're

piping

in

rust,

berlin

and

deutschland,

and

we

basically

get

then

the

echo

result

back

echo

rust,

echo

blah

blah

and

that's

coming

from

a

pier

called

node

one.

D

In

addition,

we

have

a

grid

two

degree

of

services,

one

on

node

two

and

one

on

node.

Three,

the

greater

services

are

the

same

they're

all

same

web

assembly

modules

derived

from

the

same

webassembly

modules,

and

since

we

know

we

have

three

inputs,

we

just

basically

take

two,

the

first

one

and

the

last

one

or

zero

and

two

and

now

we

get

back

the

high

rust

hydrogen

and

that's

one

way

of

doing

it's

kind

of

lame

way

of

doing

it.

D

D

So

if

you

have

again

the

accessories

on

node

1,

it

takes

the

same

inputs,

and

now

we

have

in

this

particular

instance,

I

put

using

just

one

greeting

service

on

node

2

on

pier

2

that

now

just

processes

the

entire

array

in

sequence.

So

it

takes

one

at

a

time

so

iterates

over

it

one

at

a

time

and

that's

basically

the

specification

there

and

then

we

get

the

expected

single

array.

D

We

can

also

repurpose

those

services

into

a

slightly

different

workflow,

different

application,

and

in

this

case

we

want

to

take

advantage

of

the

boolean

capability

of

the

high

and

buy

of

the

service.

We

take

the

same

result:

the

same

service,

it's

on

another

one,

and

now

we

have

a

service

greeting

services

on

again

on

node,

2

and

3,

which

we

used

before,

but

now

we're

basically

piping

in

different

data

data

into

the

services

in

the

hang

on

in

the

greeter

parameter

slot

and

now

we're

getting.

D

Now

the

big

question

is

probably

I'm:

anticipating

is

security,

particularly

in

permissionless

environments

and

peer-to-peer

networks.

So

one

of

the

things

we

have

in

in

aquamarine

is

the

concept

of

security

tetraplate,

because

that's

basically

gives

you

the

ability

to

couple

your

authorization

from

your

origin

and

that

allows

you

to

basically

fundamentally

what

you're

doing,

if

you're

introducing

security,

it's

authentication

and

authorization

as

part

of

your

workflow

you're.

D

So

to

recap,

services

in

in

aquamarine

are

business

logic

units

and

they

basically

are

derived

from

nothing

web

assembly

modules,

particles,

call

services,

exposed

apis

and

provide

the

authentication

credentials

to

services,

protected

methods

and

aqua

choreographs.

The

services

into

applications

independently

from

service

business

logic

concerns,

and

that

gives

you.

This

is

all

part

of

the

aquamarine

toolset,

and

this

is

where,

on

the

virtual

machine

and

an

interpreter

level,.

D

A

D

D

See

you're

separating

you're

separating

the

service

from

the

workflow,

so

a

lot

of

the

concern

of

the

types

actually

is

at

the

service

level

right,

it's

not

so

much

at

the

choreography

of

the

service,

so

you're

you're

type

checking

really

comes

in

at

the

service

consumption

level,

and

that's

where

you

get

your

errors,

that's

where-

and

this

is

why,

in

many

respects,

starting

out

with

rust,

as

opposed

to

other

options

to

write

your

web

assembly

modules

is

actually

super.

Super

helpful.

D

So

one

thing

I

sort

of

skipped

over

what's

a

service

because

it's

not

not

really

part

of

act

marine

itself,

but

what's

a

service

a

service

is,

is

one

or

more

webassembly.

Modules

quote

unquote,

marked

up

by

a

blueprint

that

ties

together

different

modules

into

a

api

into

a

public

api,

and

with

that

we

associate

a

quote,

not

quote-unquote,

but

definitely

a

service

id,

and

this

is

the

unit

of.

D

I

don't

know

if

manipulation

is

the

right

word,

but

but

the

unit

of

reference

in

your

workflow

script,

so

the

script

it

the

workflow

itself

doesn't

do

the

data

checking

that

comes

in

or

the

even

type

checking

this

comes

in

at

the

service

level,

where,

when

the

service

level,

if

your

web

assembly

module,

if

you

will,

I

mean

it's

below

the

service,

gets

the

bad

data.

You

get

your

you

get

your

error

message

back

and

that

then

gets

reported

back

from

the

workflow.

Does

that

answer

the

question.

D

Okay,

so

tetraploids

have

have

a

public

key

and

the

signature

associated

with

them,

and

I

should

come

back

with

another

20

minutes

at

one

point,

but

but

basically

what

happens?

You

verify

the

integrity

of

the

tetrablade

at

every

hop

along

the

way

and

we

get

a

it's.

As

I

said,

it's

like

another

20

minutes,

but

that

verification

ensures

that

not

just

that

the

origin

of

the

tetraplet

is

what

it

should

be,

but

it

also

ensures

that

the

data

is

what

it

was

supposed

to

be.

D

D

However,

it

doesn't

give

you

the.

It

only

gives

you

a

pseudo

identity

if

you

will

and

that's

fine

for

verification.

If

you

need

a

full

identity,

you

probably

would

have

to

bring

in

a

decentralized

identity

server,

for

example,

or

service

that

takes

that

public

key

of

your

peer

client

and

associates

it

with

a

quote-unquote,

verifiable

identity.

Does

that

answer

the

question?

B

D

But

now

it's

a

much

fatter

service.

If

you

will-

and

this

gets

really

really

big-

really

really

quick-

I

mean

you

could

I've,

you

know

you.

Could

I've

taken

entire

libraries

like

sec

p,

for

example,

256

and

and

me,

and

you

know,

added

exposed

apis

to

it

and

made

it

one

service

and

that's

probably

not

necessarily

the

best

way

to

do

it,

and

so

you

do

service

granularity

definition

is

very

important

to

how

you

structure

it,

and

then

it

comes

to

how

you

spread

out

services.

D

So

one

thing

I

didn't

mention

is

services

themselves

can't

call

other

services,

and

this

actually

goes

back

to

towards

the

pi

calculus

ability

to

model

certain

things,

because

once

services

start

nesting

calls

it

doesn't

work

anymore.

You

can't

track

it

anymore.

It

becomes

intractable

and

and

then

you

have

no

expectation

of

of

of

reasoning

whatsoever

for

for

your

topology.

D

So

it

depends

how

many

services

you

have

now

in

terms

of

sort

of

an

auto

dns

to

resolve

your

services.

We've

been

thinking

about

it

and

it's

certainly

at

least

I've

been

thinking

about

it.

We've

been

thinking

about

it.

I've

been

thinking

about

it,

it's

high

on

my

list

and

so

right

now,

if

you

do

it,

you'd

have

to

resolve

it

yourself

in

the

nuts

of

this

future.

Hopefully,

we

have

something

for

you,

as

as

part

of

the

tooling

that

answer

the

question.

A

D

D

So

the

business

model

is

really

around

facilitating

service

developers

to

monetize

their

work.

So

before

I

switch

is,

does

that

answer

the?

So

this

is

nothing

to

do

with

marine

per

se

act.

Marine

is

open

source,

it's

free

to

use

whatever

you

want

to

use

it

for

you,

you

don't

have

to

use

anything

else,

but

if

you

want

to

use

the

stack

and

you

want

to

use

your

your

services

and

you

want

to

monetize

them-

that's

where

fluence

has

additional

tools

and

capabilities

coming

online

in

the

not-so-distant

future

that

help

you

with

that.

A

D

This

to

me

is

totally

unacceptable

and

I

think

it's

it's

it's

exactly

what

stalled

the

early

adoption

of

cloud

development,

because

that's

what

a

lot

of

business,

iit

or

enterprise

I.t

manager

said

would

happen,

and

now

it's

happening

now,

it's

not

as

as

widespread,

obviously,

but

still

I

think,

people

starting

to

realize

that

cloud

infrastructure,

it's

private,

it's

it's,

not

public,

it's

private

and

and

your

subject

and

you

can

be

subject

subjected

to

a

right

variety

of

quote-unquote.

It's

not

censorship!

D

D

D

D

A

C

C

C

But

now

I

can

use

this

color

tools

like

this

yellow

diamond

and

start

to

move

it

on

the

master

image,

and

what

happens

is

that

the

cropping

rectangle

of

the

different

aspect

ratios

changes,

independence

of

the

movement

that

I

make

here

and

if

I

find

a

position

for

this

yellow

diamond,

where

all

of

these

pieces

look

good,

I'm

set

and

done,

I

can

save

and

proceed

sometimes

just

moving.

This

yellow

diamond

is

not

enough

to

get

a

good

solution

or

all

the

images.

C

So

I

take

some

things

that

is

a

bit

a

little

bit

more

complicated.

So

obviously

this

woman

is

the

focus

area,

the

main

topic

of

the

image.

But

if

you

look

on

the

on

the

super

portrait

to

the

ref

to

the

right,

this

image

is

only

telling

part

of

the

story.

We

are

missing

the

groceries.

It's

not

obvious

that

this

is

about

shopping

and

depending

me,

so

I

can

make

more

sophisticated

modifications.

C

C

C

C

We

have

a

front

end,

that

is

the

web

page,

that

you've

just

seen,

and

that's

only

a

few

lines

of

html

in

javascript,

mainly

for

the

animations

and

nothing

else

in

it.

It's

a

pure

front

end

with

no

business

business

logic

at

all,

and

then

we

have

a

rust

rocket

based

backend

running

on

a

separate

server,

and

that

is

doing

the

real

business.

So

now

for

only

cropping

social

media

images,

it

wouldn't

be

necessary

to

make

such

a

sophisticated.

C

So

these

are

the

services

that

are

provided

by

the

backend

we

have.

We

have

various

monitoring

and

debugging

endpoints.

We

have

the

options

for

file

up

and

download,

but

that's

not

the

necessity.

The

whole

system

is

is

made

for

considerably

larger

problems

in

an

industrial

environment

where

we

will

pick

images

by

url

from

somewhere

else.

For

example,

then

we

have

this

cropping

hint

editor.

C

We

call

it

internally.

The

web

client,

that's

this

island

within

the

code,

but

it

is

delivered

by

the

back

end,

usually

embedded

as

an

iframe

in

the

front

end,

and

then

we

have

three

cropping

services

that

either

return

a

single

image

that

has

been

cropped

to

certain

parameters

that

were

passed

by

an

http,

get

request

and

all

the

parameters

in

in

the

url.

C

That

would

be

the

way

if

you

live

crop

images

from

a

website,

for

example,

or

we

could,

we

could

crop

a

whole

area

of

images

or

aspect

ratios

called

internally

bulk

crop,

the

multiple

image

crop

that

returns

a

zip

file.

That

is

what

we

just

used

on

the

cropping

rocks

front

end

and

then

there's

also

a

virtual

crop

which

does

the

cropping

but

does

not

return

an

image

file,

but

rather

data

like

rectangles

positions,

offsets

and

sizes,

so

that

you

can

do

something

with

the

data

in

your

own

front.

C

C

C

C

C

We

couldn't

move

the

red

frame

in

real

time

without

stutters,

because

it

has

to

check

the

positions

of

the

inner

frames

and

push

them

around

and

stuff.

So

I

decided

we

have

to

make

it

a

web

client.

Then

it's

easier

to

embed

into

other

solutions

like

like

dumb

systems

and

did

some

investigation

turned

out

app

assembly

would

do

the

job

or

I

was

just

supposed

to

think

like

this.

C

I

couldn't

imagine

that

javascript

could

be

fast

enough

to

do

everything

that

has

to

be

done

when

I

move

the

mouse

with

the

yellow

diamond

and

I

get

a

mouse

event,

every

every

six

teams

of

a

second

to

do

the

calculations

that

are

necessary,

because

assembly

is

faster

than

script

right-

oh

I'm

kidding,

but

that

got

me

into

web

assembly

that

got

me

into

rust

and

just

to

see

what

this

means.

What

happens

when

I

pick

up

the

yellow

diamond

and

I

move

it

around

with

the

mouse

60

times

a

second.

C

I

have

to

do

everything

that

is

on

the

listed

on

the

right

side

here.

So

I

have

to

calculate

where

my

mouse

is.

Where

this

element

is

going

to

be

this

movable

element,

then

I

have

to

check

the

limits.

For

example,

if

I