
From YouTube: Join Us: or how I learned to stop worrying and love the trait by Coraline Sherratt - Rust KW Meetup

Description

This video was recorded during the Rust KW Meetup in Kitchener-Waterloo, ON, Canada on May 9, 2018.

https://www.meetup.com/Rust-KW/events/250122657/

This talk is a retrospective on specs-rs, specifically exploring how its user-facing API uses traits to build a fast and simple-looking API.

Talk written and presented by: Coraline Sherratt (https://twitter.com/FloraCatz)

Recording: Mark Sherry

A

So this is a specs-rs retrospective. I did a lot of work in the initial bring-up of specs. So this is some of the neat things we worked on and implemented inside of that, and I wanted to share it with you. So before we start off, does anyone know what an ECS is here? A few. Second question: how many people are really proficient with Rust? Have you, like, done macros and stuff like that?

A

How many people have never used Rust at all? All right. You people tell me when I lose you, okay? Because this goes right into the weeds of Rust. Okay, just a heads up. All right, so an ECS is an entity component system, and what an entity component system does is it kind of works like a column-based database. You have entities, so we've got three entities here, and these are literally just an index in the case of specs.

A

Now we have to add a component, so we add a positions component, and now we need to bind some values to some of these entities. So we add these two position vectors to it. Now it's important to note that null values are actually very important in the design of an ECS, because most entities do not have all their components bound, and that's very valid, because how systems will work in an entity component system is they will run through components that have common pieces and they'll want to skip over pieces that are missing.

A

So an important part of an ECS is how data is stored and how it is arranged in memory. So, typically speaking, we have a dense storage table, so we have some occupied slots per entity, per index, and we have some wasted or unused slots. And this is just used because, when we're doing a dense thing, it's basically implemented as a vector, and the reason is because that's just simple pointer math to get to your index. It's just an addition, maybe a multiplication or a bit shift, and you get to the value you're looking for very quickly when you're doing the index lookup. It also keeps the values close together in memory, which allows for the cache lookups to be more efficient, basically.

Not everything has chosen to do these kind of dense tables. The other style is kind of a sparse one, which, despite the name, is actually more densely packed in memory, but the key space is not as dense. So typically you do this with a hash map or a radix tree or a B-tree.
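
As a rough illustration of the two storage styles described here, the following is a minimal Rust sketch. The names `Storage`, `DenseStorage`, and `SparseStorage` are hypothetical and are not the specs API; the sketch only assumes that an entity is a plain index.

```rust
use std::collections::HashMap;

/// Hypothetical storage interface: an entity is assumed to be just an index.
pub trait Storage<T> {
    fn insert(&mut self, entity: usize, value: T);
    fn get(&self, entity: usize) -> Option<&T>;
}

/// Dense style: one slot per entity index, so lookup is plain indexing,
/// but entities without the component still occupy an (empty) slot.
pub struct DenseStorage<T> {
    slots: Vec<Option<T>>,
}

impl<T> DenseStorage<T> {
    pub fn new() -> Self {
        DenseStorage { slots: Vec::new() }
    }

    /// Number of slots allocated, including unused ones.
    pub fn slot_count(&self) -> usize {
        self.slots.len()
    }
}

impl<T> Storage<T> for DenseStorage<T> {
    fn insert(&mut self, entity: usize, value: T) {
        // Grow the vector with empty slots until the index exists.
        if self.slots.len() <= entity {
            self.slots.resize_with(entity + 1, || None);
        }
        self.slots[entity] = Some(value);
    }

    fn get(&self, entity: usize) -> Option<&T> {
        self.slots.get(entity).and_then(|slot| slot.as_ref())
    }
}

/// Sparse style: only occupied entries are stored, at the cost of hashing
/// on every lookup.
pub struct SparseStorage<T> {
    entries: HashMap<usize, T>,
}

impl<T> SparseStorage<T> {
    pub fn new() -> Self {
        SparseStorage { entries: HashMap::new() }
    }
}

impl<T> Storage<T> for SparseStorage<T> {
    fn insert(&mut self, entity: usize, value: T) {
        self.entries.insert(entity, value);
    }

    fn get(&self, entity: usize) -> Option<&T> {
        self.entries.get(&entity)
    }
}
```

Inserting a value for entity 2 into the dense version allocates three slots, two of them unused, while the sparse version holds only the entries that exist.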

A

So these trade off something: they're trading off being more efficient in space for giving much slower access time. So, like, a hash map compared to a vector is, like, orders of magnitude slower, or B-trees and stuff are, like, log n complexity, so much slower to access. So there's no best choice. It really depends on your application, and you'll find that sometimes you'll move around these types for performance tuning later. And so inside of specs, this is what a component trait looks like. So it's pretty simple.

A

We have this thing called an associated type, which we did not go over, but how many people know what this is? What, half of you? Okay, so an associated type is a value that's bound to a trait, basically. So it says when you're using this trait, this value will kind of get bound here. So this trait literally has no methods in it. All it has is this associated type, so that when we bind it to a specific type later, we know that this type is associated with it, and hence it's an associated type.
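
A trait of the kind described here, with no methods and only an associated type, might look roughly like the sketch below. The slide itself is not in the transcript, so every name (`Component`, `VecStore`, `MapStore`, `Position`, `Name`, `storage_name`) is a hypothetical stand-in rather than the actual specs definition.

```rust
use std::collections::HashMap;

/// Hypothetical dense storage backed by a vector.
pub struct VecStore<T>(pub Vec<Option<T>>);

/// Hypothetical sparse storage backed by a hash map.
pub struct MapStore<T>(pub HashMap<usize, T>);

/// A trait with no methods: it only ties a component type to a storage type.
pub trait Component: Sized {
    type Storage;
}

pub struct Position(pub f32, pub f32);
pub struct Name(pub String);

// Each component picks its storage in exactly one place.
impl Component for Position {
    type Storage = VecStore<Position>;
}

impl Component for Name {
    type Storage = MapStore<Name>;
}

/// The storage type is resolved at compile time from the component type alone.
pub fn storage_name<C: Component>() -> &'static str {
    std::any::type_name::<C::Storage>()
}
```

Because `C::Storage` is fixed by the `impl`, any generic code that mentions `Position` can name its storage type without a runtime lookup.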

A

So the advantage of this is the type is always known at compile time, what's associated with it. So the example before, in the earlier presentation with the loggers etc., this basically goes one layer more in detail. Like, it's a bit different, right? Every single time you see, like, a uint, you know it's always going to be stored in this type of vector, or your velocity vector will always be stored in, like, a dense or a sparse or a hash map, etc. So you always know about that.

A

Now we could go without this, and there's actually many ECSs written in Rust, Lua, etc. that do do this. And why did we choose not to do this? Well, the answer is, like we had said before, we'd need to do this, which is basically boxing our position table, which creates a trait object, and then we lose the ability to inline things and we lose the ability to build specific functions. And that's really important later, because there is a whole mix of things that will happen such that, basically, function calling overhead will be the entire sum of our program. It's quite annoying. So we skip over that and we just use static types all the way through. The compiler knows everything. So registering a type is pretty simple. Like this position vector: we've told it it has the VecStorage, which is a type of dense storage like I said, and then we can install it using this.
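
The difference between the boxed approach and the static one can be sketched as follows. This is only an illustration under assumed names (`Table`, `PositionTable`, `sum_dyn`, `sum_static`), not code from specs.

```rust
/// Hypothetical interface over a column of values.
pub trait Table {
    fn sum(&self) -> f32;
}

pub struct PositionTable(pub Vec<f32>);

impl Table for PositionTable {
    fn sum(&self) -> f32 {
        self.0.iter().sum()
    }
}

/// Dynamic dispatch: the concrete type is erased behind a trait object,
/// so the call goes through a vtable and is generally harder to inline.
pub fn sum_dyn(table: &dyn Table) -> f32 {
    table.sum()
}

/// Static dispatch: one copy of this function is generated per concrete
/// type, so the compiler sees the exact `sum` being called.
pub fn sum_static<T: Table>(table: &T) -> f32 {
    table.sum()
}
```

Boxing the table as `Box<dyn Table>` and passing it to `sum_dyn` gives the same result as calling `sum_static` on the concrete type; what changes is how much the compiler knows at the call site.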

A

So the nice advantage of this is that when we access it later, the compiler will always associate the type back. So when we ask for positions, it can look up the trait and then know that it's going to be a vector storage in whatever function we grab data out of the component system later. So there's zero interface overhead when we actually use it, and this will be a big part for when we get into systems, which is now. All right. So systems are the last bit of the entity component system.

A

So how does this all work? How do we go from having this type with a list of tuples in, to actually getting, like, the parameters in? And that's because the system data implements the SystemData trait. Pretty simple, and it's a pretty simple trait. It only does really one thing: it fetches itself out of a resource map. So a resource map is just internal to the engine.

A

It's basically a table of all of the components that you've ever registered, and now you're pulling out each individual table from the column, and then you just return yourself. So in Rust, there's actually no nice way to, like, do a tuple implementation, but there is a really ugly way, and that's exactly what we do here.

A

You'll see this a lot. So this is basically, we've gone through and done the first twenty-six tuples, so it's, yeah, it's really ugly. Internally, this is actually not that complicated. We're just going through and doing the same thing. It's just doing a fetch, fetch, fetch, so the parameter list gets expanded and...
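
The "ugly way" of implementing a trait for tuples is usually a macro that stamps out one impl per arity. The sketch below shows the idea for tuples of up to three elements. The names `Resources`, `Fetch`, and `impl_fetch_for_tuple` are hypothetical, and the resource map is a simplified assumption rather than the engine's real one.

```rust
use std::any::{Any, TypeId};
use std::collections::HashMap;

/// Hypothetical resource map: one boxed value per registered type.
#[derive(Default)]
pub struct Resources {
    map: HashMap<TypeId, Box<dyn Any>>,
}

impl Resources {
    pub fn insert<T: Any>(&mut self, value: T) {
        self.map.insert(TypeId::of::<T>(), Box::new(value));
    }
}

/// Hypothetical stand-in for a "system data" trait: a type that knows how
/// to fetch itself out of the resource map.
pub trait Fetch<'a>: Sized {
    fn fetch(res: &'a Resources) -> Self;
}

/// Base case: a shared reference to one registered resource.
impl<'a, T: Any> Fetch<'a> for &'a T {
    fn fetch(res: &'a Resources) -> Self {
        res.map
            .get(&TypeId::of::<T>())
            .and_then(|boxed| boxed.downcast_ref::<T>())
            .expect("resource was not registered")
    }
}

/// Generates `Fetch` for one tuple arity by fetching each element in turn.
macro_rules! impl_fetch_for_tuple {
    ($($ty:ident),+) => {
        impl<'a, $($ty: Fetch<'a>),+> Fetch<'a> for ($($ty,)+) {
            fn fetch(res: &'a Resources) -> Self {
                ($(<$ty as Fetch<'a>>::fetch(res),)+)
            }
        }
    };
}

// One line per arity; a real library repeats this for many more sizes.
impl_fetch_for_tuple!(A);
impl_fetch_for_tuple!(A, B);
impl_fetch_for_tuple!(A, B, C);
```

With this in place, asking for `(&u32, &String)` expands to two individual fetches, which is the "fetch, fetch, fetch" pattern mentioned in the talk.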

A

It's best to avoid it, but there's these certain circumstances where it's just so much nicer. For the record, all of this ugliness like this is not exposed to the user. It's just hidden in the bowels of the entity component system. All right, so now we'll get to the Join trait, and the Join trait's where things actually start to get super interesting.

A

So this is, once again, a more expanded system. We're getting a WriteStorage of positions and a ReadStorage of velocities, and we're going to act on them. So the key way we act on this is through this little method called join, and what that will do is it will iterate over all of the components that match the pattern. So everything that has a position and has a velocity will get returned, and then we can act on them.

A

It has a nice little for loop, and this is done through the Join trait, and it takes another tuple. So we're gonna see some of that ugliness again, and it obviously returns a tuple of the same kind of shape. So if you've got a position vector, you get a position vector out. If you pass in a mutable reference, you get a mutable reference out, for example, of the internal value.
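
The behaviour described here, visiting only the entities that have every requested component and handing back references of the same shape, can be sketched with plain slices. This is a simplified assumption for illustration: the function `join` below and the `Position` and `Velocity` types are hypothetical and much less general than the Join trait in specs.

```rust
#[derive(Debug, PartialEq, Clone, Copy)]
pub struct Position(pub f32);

#[derive(Debug, PartialEq, Clone, Copy)]
pub struct Velocity(pub f32);

/// Hypothetical two-column join: yields a pair only for indices where both
/// columns hold a value. A mutable column in gives a mutable reference out.
pub fn join<'a, A, B>(
    left: &'a mut [Option<A>],
    right: &'a [Option<B>],
) -> impl Iterator<Item = (&'a mut A, &'a B)> {
    left.iter_mut()
        .zip(right.iter())
        .filter_map(|(l, r)| match (l.as_mut(), r.as_ref()) {
            (Some(l), Some(r)) => Some((l, r)),
            _ => None,
        })
}

/// A tiny "system": add each velocity to the matching position.
pub fn integrate(positions: &mut [Option<Position>], velocities: &[Option<Velocity>]) {
    for (pos, vel) in join(positions, velocities) {
        pos.0 += vel.0;
    }
}
```

Entities that lack either component are skipped, so the loop body never has to deal with missing values.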

A

The actual user-facing part is just the join method. Everything else is pretty much internal to the engine itself. So each row that is fetched is done through this unsafe function. It's unsafe basically for performance optimization reasons. It returns a non-optional value, which, like, if you're going through a hash map, for example, is not legal. So we basically assume that the mask has done its job. I'm gonna get into what this mask does. Well, the mask is used basically to determine if there's an occupied entry in, like, the table. This is pretty straightforward.

A

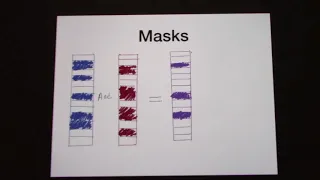

I've got two theoretical components here, right: the blue and the reddish-pink one. All right, and if we AND them together, we get out a new kind of pseudo-column, and that's what's common between the two. And what's nice about this is it creates large gaps. So if we were to run through, let's say, the smallest column, go through each key, and then check every other column to make sure they match, that's pretty slow, because you're doing a lookup on every single column.
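
The mask idea can be shown with ordinary integer bit operations. In this sketch a single `u64` stands in for a component's occupancy mask, which is an assumption made to keep the example short; the function name `common_entities` is hypothetical.

```rust
/// Returns the indices that are set in both masks, lowest index first.
/// Bit `i` of a mask is assumed to mean "entity `i` has this component".
pub fn common_entities(a: u64, b: u64) -> Vec<u32> {
    // One AND yields the pseudo-column of entities present in both.
    let mut mask = a & b;
    let mut out = Vec::new();
    while mask != 0 {
        // Jump straight to the next set bit, skipping any gap in one step.
        out.push(mask.trailing_zeros());
        // Clear the lowest set bit.
        mask &= mask - 1;
    }
    out
}
```

For example, `common_entities(0b1011_0110, 0b0110_1100)` returns `[2, 5]`: only entities 2 and 5 appear in both masks, and the loop never probes the individual columns for the others.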

A

So this is the return type that we're getting out of everything, which, if you go back here, is the type right here. So that's this, pretty straightforward. This is the internal type used in it, that's basically the array of tables. It's not very well named, and in hindsight I probably should have renamed this one. And then finally the mask, and what is this BitAnd thing?

A

And this is where things kind of get weird. BitAnd is this operator, basically another trait, and it has an and function on it, and once again, this works on tuples. So we have another ugly table like this. So internally, this looks like this. This is, by the way, the entire expansion of the macro thing, so we kind of got a left, a right, and then an and function.

A

So this is actually a recursive trait, and this is where things get super cool, and there's this little function called split. This is taken out of another library called tuple_utils, which I also wrote. Basically, this will take a tuple and it will try to split it more or less in half. The left tends to grab the extra value if it's, like, an odd number. So it has this nice little trait: grab the left, grab the right. Internally...

A

Once again, this is completely ugly in the library. We have a bunch of tables where we've just gone through and manually done this. I think I wrote this with a Python script, like, five years ago. But the effect of it is pretty useful, because when we go back to here, we kind of get this recursive and, and we get this recursive value, which means that...

A

So the purpose of all of that, basically, if we get all the way back here, is that we've built this nice type tree. We've built this nice and statement, etc., and now it all gets inlined here. So all of those tuples being wrapped and unwrapped, etc., all comes back to the statement where we're basically saying: split these up, do an and operation on these masks, find all the masks that are common, and then return them as a nice iterator.
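
One way to picture the recursive split-and-AND over a tuple of masks is the sketch below. It is a hand-written approximation for tuples of up to four elements. The names `BitSetLike`, `And`, and `AndAll` are chosen for illustration and are assumptions, not the definitions used in specs or tuple_utils.

```rust
/// Hypothetical minimal bit-set interface.
pub trait BitSetLike {
    fn contains(&self, index: u32) -> bool;
}

impl BitSetLike for u64 {
    fn contains(&self, index: u32) -> bool {
        index < 64 && ((*self >> index) & 1) == 1
    }
}

/// A lazy AND of two bit sets; nesting these forms a tree of types.
pub struct And<A, B>(pub A, pub B);

impl<A: BitSetLike, B: BitSetLike> BitSetLike for And<A, B> {
    fn contains(&self, index: u32) -> bool {
        self.0.contains(index) && self.1.contains(index)
    }
}

/// Combines every mask in a tuple by splitting it into a left and a right
/// half and AND-ing the results.
pub trait AndAll {
    type Value: BitSetLike;
    fn and_all(self) -> Self::Value;
}

impl<A: BitSetLike> AndAll for (A,) {
    type Value = A;
    fn and_all(self) -> A {
        self.0
    }
}

impl<A: BitSetLike, B: BitSetLike> AndAll for (A, B) {
    type Value = And<A, B>;
    fn and_all(self) -> And<A, B> {
        And(self.0, self.1)
    }
}

// Odd length: the left half takes the extra element.
impl<A: BitSetLike, B: BitSetLike, C: BitSetLike> AndAll for (A, B, C) {
    type Value = And<<(A, B) as AndAll>::Value, <(C,) as AndAll>::Value>;
    fn and_all(self) -> Self::Value {
        let (a, b, c) = self;
        And((a, b).and_all(), (c,).and_all())
    }
}

impl<A: BitSetLike, B: BitSetLike, C: BitSetLike, D: BitSetLike> AndAll for (A, B, C, D) {
    type Value = And<<(A, B) as AndAll>::Value, <(C, D) as AndAll>::Value>;
    fn and_all(self) -> Self::Value {
        let (a, b, c, d) = self;
        And((a, b).and_all(), (c, d).and_all())
    }
}
```

Since every level is a concrete generic type, the nested `contains` calls are all statically dispatched, which is what gives the compiler the chance to inline the whole tree into one expression.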

A

So yeah, why did we do this? It allows for very flexible types. So when you go back to the components, right, when you go back here, the WriteStorage, the ReadStorage, you're only acting on the position or the vector. You're asking for what you want; you don't have to have any knowledge of what's happening internally, right? You can just redefine, where the component was defined, how you want it stored.

A

So that gives you very flexible types. They're always going to be defined in one place, and it gives the compiler a lot of information that can be used for specialization, which provides quite a bit of performance advantage. And to the user, it's a very, very simple-looking API, and it's very fast. In fact, these are kind of the latest numbers. When specs came out, by the way, it was super fast compared to everything else.

A

ecs is one of the older, like, probably one of the first entity component systems in Rust, and specs was two or three times faster, and it's kept that lead for a while. Some of the new ones have popped up. Some of them are actually derived from this code base. Like, all the bit sets, etc. were all used in constellation, and in fact they got merged into a library. So yeah. So this is a super fast, super flexible little library, and those slides went a lot faster than I thought. So, any questions? Sir.