From YouTube: Envoy Overview and Envoy Powered API Gateway - Gloo

B

Sure, sure, please. Okay, guys, thank you, everyone, for joining in. It seems there is some issue with the tool today for people joining, so we are having some issues there, but that's okay. We are also live casting on YouTube, at the link I have given, so I think we should be good. Thank you for joining in, guys. Let's get going.

B

Gloo, yeah. So that's me. A quick intro about myself: I am the founder of CloudYuga, and we provide training and consulting in the area of Kubernetes, Docker and so on. I also authored the official Kubernetes course for CNCF, which has been taken by more than 100k participants. I'm also a CNCF ambassador and CKA certified as well. Before starting CloudYuga,

B

I worked for Red Hat for a long time, where I played different roles, and I wrote a book on Docker in 2015, which is about five years back now. Before running this meetup, I also ran the Docker meetup group in Bangalore for some time. Okay, so the agenda for today's session: we'll look at an overview of proxies.

B

We would then see how communication happens between microservices with Envoy, what topologies you have for Envoy, and some of the configurations for Envoy, and then we'll do, I think, six or seven demos which I have planned. So that would be interesting, I believe. Let's get going. So what is a proxy in general? If you look at the term proxy, it means the authority to represent someone else. That's the general term.

B

So what you see on the screen is, for example, two hosts that have to communicate. Host one and host two can communicate without knowing each other if there's a proxy in between: host one communicates with the proxy, the proxy takes the connection on to host two, and vice versa. So the host just sends a request and then somehow it gets a response back.

B

Then we have the reverse proxy. In the previous case, we were trying to hide the client IP, but in this case we do not reveal who the server is. This is called a reverse proxy: the client communicates via this proxy, and the proxy gives the reply back from any one of the servers running behind it, and any of them can respond. So that's a reverse proxy for you: in this case we are hiding the details of the servers.
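
The reverse proxy idea described here can be sketched in a few lines of Python. This is only an illustration, not anything shown in the talk: the backend names (`server-1`, `server-2`) and the round-robin choice are assumptions. The point is that the caller only ever talks to `proxy.handle` and never learns which server answered.

```python
from itertools import cycle


class ReverseProxy:
    """Toy reverse proxy: clients see only the proxy, never the backends."""

    def __init__(self, backends):
        # `backends` maps a hidden server name to a handler function.
        self._backends = backends
        self._names = cycle(sorted(backends))

    def handle(self, request):
        # Pick the next backend in round-robin order and forward the request.
        name = next(self._names)
        return self._backends[name](request)


def make_server(label):
    # Each hypothetical backend echoes the request with its own label.
    return lambda request: f"{label} handled {request}"


proxy = ReverseProxy({
    "server-1": make_server("server-1"),
    "server-2": make_server("server-2"),
})
replies = [proxy.handle("GET /") for _ in range(4)]
print(replies)
```

A real reverse proxy forwards network connections rather than calling functions, but the shape is the same: one public entry point, several hidden servers behind it.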

B

That's why we call it a reverse proxy. So what are the use cases of a reverse proxy? For example, we can use it for load balancing, SSL termination, security, caching, compression and so on. For all those purposes we can use a reverse proxy, and it has been very widely used; in typical internet companies you will see this. It is a very general thing that we do these days. Okay, so what kind of software can you use as a proxy?

B

Some of them you can host yourself, like NGINX, HAProxy and Envoy; these are something you can configure on your own. You can also take it as a service, since some companies provide it as a service. For example, if you are in a country where some sites are banned but you can access the proxy server, then via the proxy you can access the respective back end, I mean the website. You communicate with the proxy, and that proxy takes care of the rest.

B

That's the software point of view. Now, why are proxies important in today's microservices world? Let's try to cover that part. Generally, we assume that if I'm a service, for example a front end, and I want to communicate with the back end or any of the services behind the scenes, then we assume

B

It might be just one hop away: I can just make a call to service B and I would reach service B. But in the real world this might not be true, because service B can be in a different cluster, a different region or a different cloud. It can be anywhere, while service A just wants to communicate with service B.

B

That's the assumption we make, but things come into play in between. There might also be multiple instances of service B, so which one would you connect to, and how do you load balance between them? These are the features to take care of in today's microservices scenario. Apart from this, you also need to handle things like timeouts, retries, authentication, circuit breaking, observability and the other protocols you might have in today's world.

B

So how do you handle all of these things? Say I'm going to communicate with service B, and if service B fails the first time, I will try a couple more times. Those are called retries, and I want to do that. I want to implement a timeout, saying that I need to get the result within a specific number of seconds. So how would I get that as well?
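
To make retries and timeouts concrete, here is a minimal Python sketch of what a caller has to do when there is no proxy doing it for them. Everything in it is hypothetical: `make_flaky_service` stands in for service B, and the timeout is modelled as a deadline the callee checks rather than a real socket timeout.

```python
import time


class ServiceTimeout(Exception):
    """Raised when the (simulated) call misses its deadline."""


def call_with_retries(call, retries=3, timeout_s=1.0):
    # Try the call up to `retries` times; every attempt gets a fresh deadline.
    last_error = None
    for attempt in range(1, retries + 1):
        deadline = time.monotonic() + timeout_s
        try:
            return call(deadline), attempt
        except (ServiceTimeout, ConnectionError) as err:
            last_error = err
    raise last_error


def make_flaky_service(failures):
    # Hypothetical service B that fails `failures` times before succeeding.
    state = {"left": failures}

    def service_b(deadline):
        if state["left"] > 0:
            state["left"] -= 1
            raise ConnectionError("service B unavailable")
        if time.monotonic() > deadline:
            raise ServiceTimeout("service B too slow")
        return "ok"

    return service_b


result, attempts = call_with_retries(make_flaky_service(2), retries=3)
print(result, attempts)
```

Writing this once is easy; writing it consistently in every service and every language is the problem the rest of the talk addresses.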

B

All these things need to be implemented in today's microservices world, so how can we handle them? One way is a language-specific implementation. For example, you can say: okay, I am writing all of my code in Java, and for Java specifically I would use the tools listed here, mostly from Netflix.

B

They have built these tools to provide the respective functionality you are looking for here: timeouts, retries and so on. So you can use this language-specific stuff and achieve these things, and it has happened before; Java has had all these things and we could do it this way. But this does not work in current scenarios, because we write our code in different languages, and we have different platforms to deploy the application, and so on.

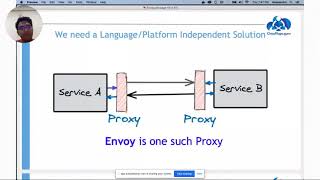

B

So this might not fly in today's world. What we want is something independent of language and platform. I want to have service A and service B, but I want them to communicate in a way that is transparent for them. Service A says: I want to communicate with service B. But in between, we can implement some kind of proxy or layer which gives me all the features I mentioned: timeouts, circuit breaking and so on. So if

B

With the features in place in this proxy, I can transparently put it in front of any service I have, and that's what Envoy is. So what do you do? If you talk in terms of Kubernetes, you might know about sidecar containers. I would deploy a pod for my primary app and, along with my primary app, I deploy a sidecar proxy, and that proxy implements the features without the service in the pod knowing about it.

B

So, basically, you deploy any simple app, but you add the sidecar, and that sidecar takes care of providing all the features like circuit breaking, timeouts and all that stuff. That's what Envoy is, and Envoy is used very extensively in service meshes these days. So let's look at some of the features of Envoy and then we'll dive deep into it.

B

It has an out-of-process architecture, which means your app does not need to worry about anything at all. All the features come built in with Envoy; you just attach it as a sidecar and that's it. Your app need not change anything at all. It just runs plain. Whatever app you have, it will just fit with this.

B

We can have different filters at the L3/L4 layers, which I'll talk about in detail, and then we also have L7 filters. Using these I can identify the network packets, saying that this packet is TCP, or this one is for MySQL, and so on. Based on that, I can detect the kind of TCP packet or network traffic I am getting, apply filters on it, and continue from there.

B

A

B

Topologies in which Envoy can be configured: one simple option is to have it as an ingress and egress. Any traffic coming to your application can come via Envoy; similarly, any traffic going out of the application would go via Envoy. So Envoy can do both ingress and egress for you. Then you can do load balancing with Envoy: if you have multiple replicas of your application, Envoy can load balance between them.

B

You can configure Envoy for your ingress and egress. As I discussed, if you have your network, you can put Envoy on top of it so all the incoming traffic comes via Envoy, and similarly you can have an Envoy on the egress side, so all the outgoing traffic is routed via Envoy as well. Depending on your configuration, you can have a setup in a hybrid mode as well, where you have, of course, ingress and egress.

B

Then you can have load balancing also happening with Envoy, and within a given service you can also deploy an Envoy that handles internal communication between the services. So you can configure Envoy in the different topologies we are seeing here. You can have a multi-tier setup as well, where you get the traffic in through one of the Envoys as ingress and then have multiple Envoys in place.

B

Okay, the architecture of Envoy looks something like this. When you make a connection to your Envoy, you get a worker thread for it, and that worker thread handles the entire connection from in to out. So a thread takes care of that, and then we have something called a listener here.

B

So from this you get into your Envoy, and then you have these listeners here. A listener is nothing but a port number or socket through which you get into your Envoy. Then, with these particular listeners, we'll talk about filter chains. You can have multiple filter chains. For example, you first want to detect the MySQL filters.

B

Okay, are there any MySQL packets there? And you want to say: okay, I want to look at the TCP stuff in that. So you can configure them in different filters, and once those filters have been passed, you send your traffic to clusters. Clusters are nothing but collections of your endpoints. For example, yeah, let's talk about those things in a minute. So a cluster is nothing but a logical collection of one or more endpoints.

B

Those are called upstreams. An upstream is your end thing: for example, if you're running a pod or a container, that becomes the upstream. Your connection comes from downstream, which is your client, and whatever is your serving entity becomes the upstream for you. I'll go into detail on each one of them in a minute.

B

Okay. So let's talk about listeners now. Listeners are nothing but the endpoints to which you will connect. For example, when you configure an Envoy, you can have multiple listeners: one can, let's say, listen for HTTP only, and another listener can listen for TCP. The listener creates the connection and passes the request to the filter chains. Filter chains are like this; I'll talk about this in a minute.

B

Looking at those packets, you can see what's in them and what you would do with that. For example, you are saying: okay, if I am getting an HTTP packet with some host header, I want to change it. Those are the kind of filters you would write here. You look at the network packet, you identify what kind of packet it is, and then you do something with it.
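
The "inspect the packet, then do something with it" idea can be sketched as a chain of small functions applied in order. This is a conceptual sketch in Python, not Envoy's filter API; the filter names, the request dictionary layout and the example hostnames are all assumptions made for illustration.

```python
def rewrite_host(request):
    # Hypothetical filter: rewrite one Host header value to another.
    headers = dict(request["headers"])
    if headers.get("host") == "old.example.com":
        headers["host"] = "new.example.com"
    return {**request, "headers": headers}


def tag_protocol(request):
    # Hypothetical filter: record what kind of traffic this looks like.
    kind = "http" if "host" in request["headers"] else "tcp"
    return {**request, "protocol": kind}


def run_filter_chain(filters, request):
    # Apply each filter in order; each one sees the previous filter's output.
    for apply_filter in filters:
        request = apply_filter(request)
    return request


out = run_filter_chain(
    [rewrite_host, tag_protocol],
    {"headers": {"host": "old.example.com"}, "body": b""},
)
print(out)
```

Order matters in a chain like this: a filter placed later sees whatever the earlier filters changed.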

B

So, for example, here on the left you see there's a filter chain. In this I have two filters: one filter is for MySQL and another filter is for TCP. With the MySQL filter I'll look for MySQL traffic and say what is to be done with those MySQL packets, if there are any, and then for the TCP one, what would I

B

So, for example, you can think of a Kubernetes service as a cluster kind of thing. A cluster would be like that. Here, what we are saying is: I come to the listener, I go over my filter chains, and then I hit two clusters. If you look, this cluster is like a blog. For example, suppose you're on Kubernetes and you deployed a WordPress app, and there is a front end and a back end. The front

B

end is your WordPress application, and the back end is MySQL. So I would have one cluster of type blog, which connects to my blog service. I have one more cluster, called the MySQL cluster, which talks to my MySQL endpoint. So you come in, go through the listener, then the filter chains, and then you come to your clusters.

B

Clusters are independent of listeners, so I can have clusters of different types, and from my filter chain the traffic can go to any one of these clusters. After that I go to the endpoints, which are where I eventually connect. The endpoint is exactly where you go and hit; in this case, the endpoints are WordPress and the DB.
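
The listener, filter chain, cluster and endpoint vocabulary can be written down as a small data model. The Python sketch below is a deliberate simplification and not Envoy's real data structures: each filter is reduced to a (protocol, cluster name) pair, and the hostnames and ports (`wordpress:80`, `db:3306`) are assumptions chosen to match the WordPress example.

```python
from dataclasses import dataclass, field


@dataclass
class Endpoint:
    host: str
    port: int


@dataclass
class Cluster:
    # A cluster is a named, logical collection of one or more endpoints.
    name: str
    endpoints: list = field(default_factory=list)


@dataclass
class Listener:
    port: int
    # Simplified filter chain: (protocol, cluster_name) pairs, first match wins.
    filter_chain: list = field(default_factory=list)

    def pick_cluster(self, protocol, clusters):
        for filter_protocol, cluster_name in self.filter_chain:
            if filter_protocol == protocol:
                return clusters[cluster_name]
        raise LookupError(f"no filter for {protocol}")


# Clusters are defined independently of any listener.
clusters = {
    "blog": Cluster("blog", [Endpoint("wordpress", 80)]),
    "mysql": Cluster("mysql", [Endpoint("db", 3306)]),
}
listener = Listener(8080, [("mysql", "mysql"), ("http", "blog")])
print(listener.pick_cluster("http", clusters).endpoints)
```

Because `clusters` lives outside the `Listener`, several listeners could point at the same cluster, which is the independence described above.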

B

So here I'm going to connect to my WordPress or the DB service, and eventually, at the back end, I have the pods as I typically would. But for Envoy, the endpoint is the service with which I communicate. So my traffic comes to my listener, I go over the filter chains, and then, based on the filter chains,

B

A

B

Let's look at some demos and it will become much clearer. For now, we have talked about a few terms: listeners, filter chains, clusters and endpoints. Now, how do we configure our Envoy to do that? I can always build this entire thing manually, where I start with a listener, define my filter chain, define my clusters, and from there go to my endpoints. So I can do all of this

B

Configuration done manually is one way, or I can do it dynamically, which is what we call xDS. As you would know, we use Envoy heavily with Istio, and I'm not going to write any of this configuration file, which I'll show you in a minute, manually. So there should be some way by which I can configure these things dynamically.

B

We also have one specific filter called the HTTP connection manager, because HTTP is a very important part of our day-to-day life. So they have specifically built an HTTP-based filter, which further has HTTP-only filters. So you have an HTTP connection manager which has HTTP-specific filters as well. I'll talk about that in a minute.

B

So that's this HTTP L7 filter here, and from there I go to the read filters for HTTP, for L7. Then I go to my back-end service, and from the back-end service my traffic comes back: I go through the write filters, come back here, and then again come back through the write filters of the L4 layer and return.

B

So that's all there, and I'm going to go over these demos now. For these demos I connect to Docker, the Docker Desktop running on my workstation, and do the labs there. Okay, let's look at the first demo. We want to build a simple TCP proxy, in which I make a connection to my Envoy. Let me show you the config.

B

A

B

Here, first of all, I am building this statically, so I put a static resource here, because I am not building it dynamically. Now I have a listener here, and in this listener I am saying: accept connections on any of the IP addresses of the host, on port 8080. So I have defined a listener, and then I define a filter chain. Let me define the filter chain.

B

We have defined the filter, in which I am saying I want to look for all the TCP packets. Then, if you look here, we are defining "web": I am saying look at the web cluster, so I'm creating a web cluster here. We are saying any packet coming via this filter has to go to the cluster called web.

B

So here is my only cluster, called web. From here, I have defined my endpoints. What we are saying is: if my traffic comes to this web cluster, I route it to two of my endpoints, either the endpoint called service one or the endpoint called service two. So what's happening is my traffic comes in to my Envoy.
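
The configuration file shown on screen is not captured in this transcript, so the following is a reconstruction under assumptions rather than the speaker's actual file. It builds a static TCP-proxy configuration as a Python dictionary and prints it as JSON, which Envoy can load in place of YAML. The field names follow the Envoy v3 API; the listener name, `stat_prefix`, the endpoint hostnames (`service1`, `service2`), the backend port 80 and the `connect_timeout` value are all assumptions. Only port 8080 and the cluster name `web` come from the talk.

```python
import json


def endpoint(host, port):
    # Helper for one upstream endpoint entry (hypothetical host and port).
    return {"endpoint": {"address": {
        "socket_address": {"address": host, "port_value": port}}}}


config = {
    "static_resources": {
        "listeners": [{
            "name": "listener_0",
            # 0.0.0.0 means any IP address of the host, on port 8080.
            "address": {"socket_address": {
                "address": "0.0.0.0", "port_value": 8080}},
            "filter_chains": [{
                "filters": [{
                    "name": "envoy.filters.network.tcp_proxy",
                    "typed_config": {
                        "@type": "type.googleapis.com/envoy.extensions."
                                 "filters.network.tcp_proxy.v3.TcpProxy",
                        "stat_prefix": "tcp",
                        # Everything passing this filter goes to cluster "web".
                        "cluster": "web",
                    },
                }],
            }],
        }],
        "clusters": [{
            "name": "web",
            "connect_timeout": "0.25s",
            "type": "STRICT_DNS",
            "lb_policy": "ROUND_ROBIN",
            "load_assignment": {
                "cluster_name": "web",
                "endpoints": [{"lb_endpoints": [
                    endpoint("service1", 80),
                    endpoint("service2", 80),
                ]}],
            },
        }],
    }
}
print(json.dumps(config, indent=2))
```

Reading it top to bottom mirrors the walkthrough: one listener, one filter chain with a TCP proxy filter, one cluster, two endpoints.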

B

Okay, so it's building my Envoy configuration there, I think. Let me just do it quickly. Okay, now what I need to do is go and access these URLs from my workstation. So I come to my workstation here.

B

A

B

Okay, I think I'll give it a minute. In this demo, what I wanted to show is that you hit the downstream, you go to a TCP filter, and from there you hit this web cluster, and my traffic switches between two services. I have configured NGINX here, and when I send requests, my connection switches between those two services. That's the first thing I wanted to show. The second example:

B

Yes, let's go over the HTTP one. I'll go to the configs here and look at this Envoy configuration. What we're discussing here is the HTTP filter, using which I want to hit my URL plus /service/1 or /service/2. What we are saying in this case is: I come into my Envoy through the listener, and from there I look at the match here. So what happens is you would look

B

You have this route configuration here, in which, if your prefix is /service/1, you go to the cluster called service one. Similarly, if your match is /service/2, you go to the cluster for service two. So that's how it works: depending on the suffix of the URL, you go to the right cluster, and in the cluster I have the endpoints, which are going to be my service one and service two.
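
The prefix matching just described boils down to "first route whose prefix matches wins". Here is a minimal Python sketch of that rule; the cluster names `service1` and `service2` are assumed spellings, and this is the matching logic only, not Envoy's route configuration format.

```python
# Ordered (prefix, cluster) pairs, as in a route table.
ROUTES = [
    ("/service/1", "service1"),
    ("/service/2", "service2"),
]


def route(path, routes=ROUTES):
    # Return the cluster of the first route whose prefix matches the path.
    for prefix, cluster in routes:
        if path.startswith(prefix):
            return cluster
    return None  # no route matched


for path in ["/service/1", "/service/2/items", "/other"]:
    print(path, "->", route(path))
```

Note that a plain prefix match is not segment-aware: a path such as `/service/12` also starts with `/service/1`, so route order and prefix choice need some care.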

B

So if I come here and hit localhost on 8080, I am going to get this page, and if I hit it again, maybe because of caching I'll not see the change. But if I go to incognito mode and then hit localhost:8080, I get the other page. Depending on which one you hit, you will get that content. So at least one of our demos works. Let me bring it down.

B

Yeah, we have some time left; hopefully we'll finish all the demos. Okay, I think it's not going to work out. Let's continue further. The other example I had is the front proxy. What we're trying to configure is this: I want to get my connection in from the external world via the Envoy, and then I have an Envoy within my application as well, like a sidecar which contains your Envoy. So think about this particular

B

This is your entry point for your network. My traffic comes in to this Envoy here, then I have the filter chains for HTTP, then I go to my app, which contains another Envoy, and from there I go to my Flask application. That's what the traffic flow is going to look like. Let me show the config for that as well.

B

Okay, then, what would happen if I scaled one of my services with Envoy here? In the previous case, if I hit /service/1, I get the output from this particular Flask application, and if I hit port 8080 with /service/2, I get served from here. So these are my two different endpoints for these applications.

B

Now, what I'm saying is I want to scale my service one, so now my service one has three endpoints of its own. If I hit the URL /service/1, I get a reply from any one of these. That is what I want to showcase here; you can try it out with the front proxy scaling.

B

What I want to show next is tracing. As you know, you can have tools like Jaeger and the like, using which you can trace your entire workflow. For example, if you have a microservices-based application and traffic comes into your cluster, you want to track where the packets are, or where my request is going.

B

So there are tools like Jaeger and Zipkin which can help us trace that, but they need some input data to build the graphs for you. For that purpose you would again use Envoy. Envoy has these Jaeger filters, or tracing filters, built in, and with those filters, as the packet comes in, you can put a kind of stamp on it, and that stamp you can follow across the network to see what's happening.
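
The "stamp" idea can be illustrated with a request ID that is minted at the first hop and carried unchanged through the rest. The Python sketch below is conceptual: the hop names are assumptions, and the header name `x-request-id` is used because Envoy uses a header of that name for request identification, not because it was shown in the talk.

```python
import uuid

TRACE_HEADER = "x-request-id"


def stamp(headers):
    # Keep an existing ID so the request can be followed hop to hop;
    # otherwise mint a new one at the edge.
    stamped = dict(headers)
    stamped.setdefault(TRACE_HEADER, str(uuid.uuid4()))
    return stamped


def hop(name, headers, log):
    # Each hop stamps (if needed), records what it saw, and passes headers on.
    headers = stamp(headers)
    log.append((name, headers[TRACE_HEADER]))
    return headers


log = []
headers = hop("front-proxy", {}, log)
headers = hop("service1-sidecar", headers, log)
headers = hop("service1", headers, log)
print(log)
```

A tracing backend can then group the log entries by ID to reconstruct the path a single request took.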

B

The front proxy first. I mentioned there are two kinds of proxies; one is at the front, so this is the front proxy, if you can imagine that. From there, if my traffic comes to /service/1, I go to service one. If my traffic comes to this IP and port on /service/2, I go to that service. That's how traffic is going to come. From here, as I told you, I am going to go to one more Envoy.

B

This is my cluster for my service one, and if I look at this, I go to my service one container on port 8000. If I look at the service Envoy there, I have one more Envoy configuration, in which I am saying that any traffic coming on port 8000 should be taken to my localhost on port 8080.

B

That's the flow of my call. I wanted to give you all the demos, but somehow it's not possible today; maybe I'll record and share them. Okay. The last example I want to give you, without taking too much time, if it's possible... let me just see if I can get it running for you.

B

Okay, so what's happening in this case is I have deployed a WordPress application, and for that WordPress application I'm definitely doing the HTTP one, but I also want to show the MySQL filter, how the MySQL filter can work with our application. If you look at what's happening, I have deployed three containers: a WordPress container, a DB container and an Envoy container.

B

A

B

One listener is on port 8080; the other listener is on some other port, I think 3307. So I send my traffic there, and from there I send the traffic to my DB. What is the benefit of this? I'm going to collect metrics for MySQL as well, and then I can see how my MySQL is behaving. I can have filters like this for MongoDB and so on. I hope this example comes up.

B

I really don't know what's happening here today. Okay, I'll try to see if, towards the end, I can show the demo for that, but that's how I would show the demo for this. I have also configured Grafana and Prometheus for that, so I can show you the plot: if I make a query to my WordPress, I get the Grafana dashboard for that.

B

A

Yeah, you can see behind me, I'm prepared for the worst. All right. First of all, thank you all for having me at this meetup. I'm excited to talk about these technologies and Envoy, and the presentation that you had there about Envoy was perfect. It gave people an idea of what it is, what it takes to configure it, what some of its capabilities are, and so forth.

A

So my name is Christian. I work at a company called solo.io; I've been here for a little over a year and a half. Before that I worked at Red Hat, and before that I worked at an open source integration company called FuseSource, and before that at enterprises and so forth.

A

I did spend some time at zappos.com, where I got to work hands-on and up close with Amazon and Zappos at the time, and got to see what DevOps and microservices and all this stuff was before it became this trend in our industry. This was back in 2012, and from there I built a lot of understanding of how this stuff works at a high web-scale company, and some of the maturity around their automation and processes and so forth.

A

So, through my work at Red Hat, working on the early days of Kubernetes and the very, very early days of the Istio project and so forth, I was bringing this type of infrastructure both into open source and to enterprises and companies to help them adopt it. So when I left Red Hat, I wanted to go to a company that focused exactly on this problem: connecting applications together in a reliable way in cloud environments. And that's what we're doing at Solo.

A

I've written some books here, as you can see, on microservices and Istio. I'm currently writing the Istio in Action book for Manning. I do encourage you, because there were some questions in here and just general, I would say, uncertainty or confusion in the ecosystem around service mesh, API gateway, Envoy,

A

some of the comparisons between them and so forth, and I've done my best to try to share some clarifying information. So if you go to my blog, I've been writing about this stuff; I went back and looked, since the very beginning of 2017, specifically about Envoy and this technology. Click on that.

A

I

also

share

videos

and

and

blogs

and

updates

on

my

book

and

so

forth

on

on

twitter.

So,

but

I'm

happy

to

be

here

talking

to

you,

I,

like

I

said

I

work

at

solo.I.o,

whose

mission

it

is

is

to

build

this.

This

application

networking

infrastructure

to

allow

services

to

talk

over.

You

know

when

deployed

in

cloud

environments.

So

if

you

just

take

a

look,

for

example,

kubernetes

right

kubernetes

is,

you

know,

kind

of

won

the

container

orchestration

wars,

but

what

is

it?

It's

a

foundational

layer

for

deploying

containers.

A

Once

you

put

applications

into

kubernetes,

these

applications

need

to

do

things.

They

need

to

talk

with

each

other.

They

need

to

implement

some

level

of

reliability

and

security

and

so

forth

right

on

the

at

the

application

layer,

and

so

that's

where

technologies

like

envoy

start

to

come

in

and

fill

the

gap

right.

So

it's

a

layer

on

top

of

kubernetes

and

envoy

by

itself,

as

we

saw,

is

very

powerful

but

envoy

itself

isn't

the

final

solution

all

right.

A

So

envoy

is

this.

Is

this

little

piece

that

can

help

build

this

application

networking

abstraction

right?

So

now,

what

is

this

application

networking

abstraction?

Some

of

the

important

pieces

to

this

is

it

needs

to

be

decentralized

right.

We've

seen

in

the

past

application

networking

you

know

kind

of

functionality

in

in

terms

of

let's

say

messaging-based

systems,

message

brokers,

right?

With

message

brokers,

You

could

kind

of

get

a

decoupling

of

the

the

caller

and

the

server

right

you

can

get.

A

So

you

can

get

that

transparency,

so

it's

kind

of

like

service

discovery,

because

you

don't

know

exactly

where

the

server

is.

You

get

things

like,

reliable

to

an

extent,

delivery

retries.

You

know

and

load

balancing

right.

You

give.

You

connect

multiple

consumers

to

a

queue

you

can

get

load

balancing

and

so

forth.

But

it's

highly

centralized.

Same

thing

we

saw

with

the

esbs

that

were

built

on

top

of

messaging

brokers,

often

or

api

gateways

right.

We

said:

let's,

let's

build

apis,

expose

this

on

the

api.

A

You

know

economy

and

do

all

this

stuff.

But

what

ended

up

people

really

doing

is

taking

api

gateway,

putting

in

the

middle

of

their

application

architecture,

because

it

can

do

security

because

it

can

do

some

routing

and

self-service

and

so

forth.

They

put

it

right

in

the

middle

of

this

of

their

application

architecture,

everything

went

through

this

api

management

solution,

and

so

what

we're

trying

to

move

toward

when

we

move

to

cloud

is

decentralized

processes

all

right,

GitOps.

A

These

types

of

workflows

versus

more

highly

centralized

teams

that

run

the

you

want

to

make

a

change.

You

have

to

file

a

ticket

and

so

forth.

So

we

want

to

build

this,

so

the

problems

are

not

all

that

new,

but

the

implementation

for

how

we

build

this

in

a

in

a

highly

decentralized,

highly

ephemeral

cloud

infrastructure

where

nodes

could

be

coming

and

going

pods

coming

up

scaling

and

so

forth.

The

the

the

implementation

needs

to

be

decentralized

and

it

needs

to

be.

A

So

why

envoy?

I

saw

some

questions

on

the

on

the

chat

there.

Why

envoy,

and

the

answer

is

pretty

straightforward,

actually.

The

number

one

thing

is:

the

community.

The

community

has

kind

of

come

around

and

coalesced

around

envoy.

The

innovation

in

layer

seven

application

networking

technology

is

happening

in

the

envoy

community

and

it's

happening

with

input

from

massive

companies

like

google,

like

apple,

like

you

know,

verizon

and

yeah.

A

You

can

see

amazon,

you

can

see

the

some

of

the

people

on

the

on

the

right

hand,

side

of

the

slide

right

so

that

the

innovation

that

we

saw

at

google

is

being

poured

into

envoy,

the

innovation

at

netflix

and

ebay

and

so

forth.

That's

kind

of

the

central

place

where

people

are

coming

together

to

push

on

this

and

we'll

look

at

some

of

the

fruit

of

that

innovation

in

a

second.

A

The

previous

talk,

but

this

is

a

foundational

piece

being

able

to

extend

envoy

and

a

community

that'll

help

you

do.

That

is

incredibly

important

because

we

want

to

support

all

these

different

technologies.

We

want

to

support

all

these

different

use

cases,

but

we

want

to

allow

extension

points

and

apis

and

make

you

know

a

modern

code

base

that

enables

that

that

extension.

A

So,

for

example,

an

example

of

this

innovation

and

extension

in

envoy

that's

happening

right

now

is

around

webassembly,

so

webassembly,

which

kind

of

grew

up

in

the

in

the

browser

is

a

way

of

dynamically

injecting

your

your

custom

code

into

a

target

endpoint

either

the

browser

or,

in

this

case,

envoy.

So

we

can

extend

envoy

by

writing

webassembly.

With

webassembly,

you

can

write

in

any

language

and

it

compiles

down

into

webassembly,

and

then

you

can

deploy

that

into

envoy,

right.

So

you

can

make

you

can

you

all?

A

You

know

some

people

in

telco

have

these

highly

custom

protocols

or

you

need

a

certain

logger

for

your

access

logs

and

so

on.

Extending

envoy

has

been

a

foundational

principle

from

the

beginning

and

you

used

to

have

to

do

that

by

actually

extending

the

C++

code,

but

now

you

can

just

do

it

with

webassembly.

A

The

last

thing,

which

was

definitely

touched

upon

in

the

previous

conversation

previous

talk

was

it

was

built

to

be

dynamic

and

to

change

and

to

be

driven

by

an

api,

not

file

configurations

and

hot

reloads

and

potentially

dropping

connections,

and

you

know,

but

where

we

didn't

have

this

dynamism

20

years

ago,

right

envoy

was

built

from

the

ground

up,

as

we

saw

with

the

different

xds

services.

So

it's

basically

the

envoy

talks

to

an

api

gets

the

information

about

what

services

can

it

route

to?

What

are

the

routing

configs?

A

What

are

the

security

policies

and

so

forth

and

can

change

on

dynamically

right?
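To make the API-driven idea concrete, here is a minimal sketch, not Envoy's actual xDS protocol. It assumes a hypothetical control plane that pushes whole, integer-versioned snapshots; the names `Snapshot` and `DynamicProxyConfig` are made up for illustration. Real xDS splits this across separate discovery services and uses opaque version strings. The point is only that the proxy swaps its routing table in memory, with no file reload and no restart.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Snapshot:
    """One versioned bundle of config pushed by a hypothetical control plane."""
    version: int
    clusters: Dict[str, List[str]]  # cluster name -> endpoint addresses
    routes: Dict[str, str]          # path prefix -> cluster name


class DynamicProxyConfig:
    """Holds the active snapshot and replaces it in memory on each valid push."""

    def __init__(self) -> None:
        self.active: Optional[Snapshot] = None

    def apply(self, snap: Snapshot) -> bool:
        # Ignore stale or duplicate pushes.
        if self.active is not None and snap.version <= self.active.version:
            return False
        # Refuse a snapshot whose routes point at clusters it does not define.
        for cluster in snap.routes.values():
            if cluster not in snap.clusters:
                return False
        self.active = snap
        return True

    def resolve(self, path: str) -> List[str]:
        """Return the endpoints for the longest matching path prefix."""
        if self.active is None:
            return []
        best: Optional[str] = None
        for prefix in self.active.routes:
            if path.startswith(prefix) and (best is None or len(prefix) > len(best)):
                best = prefix
        if best is None:
            return []
        return self.active.clusters[self.active.routes[best]]
```

In use, a control plane would call `apply` every time services, routes or policies change, and in-flight lookups through `resolve` simply see the newest accepted snapshot.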

So

now

we

see

some

of

these

other

things

very

deep,

telemetry

collection

and

so

forth.

So

using

those

principles

as

the

foundation

for

envoy,

we

can

build

pretty

pretty

powerful

networking

technology.

So

let's

take

a

look

at

what

that

what

that

might

look

like,

I

think

I'm

on

this.

I

know

I'm

on

this.

Sorry,

I'm

going

to

bounce

around

a

little

bit

because

I

want.

A

I

want

to

tell

a

story

naturally

and

I'll

use

the

slides

to

help,

but

I

want

to

just

I

want

to

speak

freely

here.

I'm

just

bound

to

things

like

that.

So

what

are

some

of

these

challenges?

What

are

some

of

these

connectivity

challenges?

How

would

envoy

fit

into

this

picture

at

all,

and

this

is

something

that

we

take.

You

know

this

is

core

to

our

our

ethos

and

our

competency

here

at

solo,

which

is

our

background,

is

in

in

envoy,

deploying

and

running

and

managing

envoy

in

production

at

scale.

A

But

some

of

these,

these

networking

challenges

we

talked

about

earlier

right,

a

service

talking

to

another

service

is

very,

is

rarely

that

simple

right,

you're

rarely

talking

directly

to

that

service,

usually

talking

over

the

network

through

multiple

hops,

different

boundaries,

firewalls

and

so

forth,

and

these

networks

are

not

reliable

right.

So

that's

and

that's

not

new

information,

but

so

these

applications

have

to

solve

for

things

like

timeouts,

retries,

circuit

breaking

and

so

forth.
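As a rough illustration of what applications end up writing themselves when no proxy handles it, here is a small sketch of retries with backoff plus a failure-counting circuit breaker. Everything here (`CircuitBreaker`, `call_with_retries`, the thresholds) is hypothetical and is the classic in-application pattern, not how Envoy implements it; Envoy's own circuit breaking is configured as limits on connections, pending requests and retries per upstream cluster. Per-call timeouts are assumed to be enforced inside `fn`, for example by a socket timeout.

```python
import time
from typing import Callable, Optional, TypeVar

T = TypeVar("T")


class CircuitOpenError(Exception):
    """Raised when the breaker is open and the call is rejected immediately."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_after_s: float = 30.0,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_after_s = reset_after_s
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None

    def call(self, fn: Callable[[], T]) -> T:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after_s:
                raise CircuitOpenError("circuit open, failing fast")
            # Cool-down elapsed: allow one trial call (half-open state).
            self.opened_at = None
            self.failures = self.failure_threshold - 1
        try:
            result = fn()
        except Exception:
            self.failures += 1
            if self.failures >= self.failure_threshold:
                self.opened_at = self.clock()
            raise
        self.failures = 0
        return result


def call_with_retries(fn: Callable[[], T], attempts: int = 3,
                      backoff_s: float = 0.0) -> T:
    """Retry fn up to `attempts` times with exponential backoff."""
    if attempts < 1:
        raise ValueError("attempts must be >= 1")
    last_error: Optional[Exception] = None
    for attempt in range(attempts):
        try:
            return fn()
        except CircuitOpenError:
            raise  # do not hammer a dependency the breaker has given up on
        except Exception as exc:
            last_error = exc
            if attempt < attempts - 1:
                time.sleep(backoff_s * (2 ** attempt))
    assert last_error is not None
    raise last_error
```

Every service in every language would need its own copy of this logic, which is the duplication a shared proxy layer is meant to remove.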

A

You

might

want

to

expose

and

you

might

want

to

catalog

and

you

might

want

to

have

searchable

and

and

so

forth.

So

you

know

self-service,

signup

and

documentation

and

automation

around

this

is

important,

and

then

you

take

that

problem

and

say

well,

there's

not

really

just

one

boundary

right.

We

have

maybe

multiple

clusters

of

kubernetes

or

maybe

running

on

premises

or

running

in

a

public

cloud

or

both,

maybe

maybe

in

some

cases,

we've

talked

to

lots

of

lots

of

large

companies

that

are

actually

running

in

multiple

clouds.

A

So

this

is

not

a

well,

let's

just

solve

the

problem

once

and

we

have

it

solved

right.

There's

multiple

areas,

all

right,

so

now

how

do

we?

How

do

we

expand

that

and

be

consistent

when

we

configure?

How

do

we

federate

the

different

services

that

are

running

in

this?

We

can

treat

this

as

a

single

large.

You

know

architecture

and

it

starts

with

envoy

okay.

So

before

I

get

into

this

part

and

gloo,

I

want

to

come

to

this

slide

envoy

right

here.

Envoy

is

incredibly

versatile

and

can

be

run.

A

Alright,

so

maybe

a

group

of

services

that

all

live

and

work

together.

You

know

they

share

an

envoy

when

they

talk

out

to

the

network

or

when

requests

come