
From YouTube: ECDC DAY 1: STARKs for Ethereum by Avihu Levy


A

B

A

Generate... like, our main target is to provide a solution with zeros. Before... of several schools for the blockchain, it was three. And a little background about myself. Can you hear me well? Perfect. About myself: I have a technical background. I did electrical engineering and physics, and I worked eight years as a security researcher, but since 2011 I've been involved in several different blockchain projects, and I've been doing product here. So this lecture will be mainly about different use cases of STARKs for Ethereum, and there is going to be less math, and I...

A

A

Now that you have zero-knowledge proofs, you can do two things. First of all, you don't have to rerun the computation in order to verify it, which is great, and in the case of STARKs and SNARKs, and several other proof systems, you don't even have to spend the same time as the computation. Actually, verifying the computation now takes much less time than the computation itself, and this enables you to do a few cool things.

A

The other idea, the other part: there is zero knowledge and there is proof, and the proof is what I just mentioned. The zero-knowledge part means that you don't have to give the verifier all the inputs of the computation in order to verify. So someone can come to you and say: hey, I know an input to SHA-256, and this input generates an output that starts with fifty zeros. Before, you had to reveal what your input is, and now you don't have to if you're using zero-knowledge proofs. That's, like, the three-sentence explanation.
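To make the SHA-256 example concrete, here is a minimal Python sketch of the statement itself, assuming "fifty zeros" means leading zero bits (the talk doesn't say bits or hex digits); the function names are hypothetical. Checking this predicate directly requires the secret input `x`. A zero-knowledge proof would instead convince the verifier that such an `x` is known without revealing it, which this sketch does not attempt.

```python
import hashlib


def leading_zero_bits(digest: bytes) -> int:
    """Count the leading zero bits of a byte string."""
    count = 0
    for byte in digest:
        if byte == 0:
            count += 8
            continue
        # bit_length() is the position of the highest set bit in this byte.
        count += 8 - byte.bit_length()
        break
    return count


def satisfies_statement(x: bytes, zeros: int) -> bool:
    """The relation: SHA-256(x) starts with at least `zeros` zero bits.
    Evaluating it directly requires knowing the witness x."""
    return leading_zero_bits(hashlib.sha256(x).digest()) >= zeros


def find_witness(zeros: int) -> bytes:
    """Brute-force a witness for a small difficulty (fifty bits is far out of reach here)."""
    nonce = 0
    while True:
        candidate = nonce.to_bytes(8, "big")
        if satisfies_statement(candidate, zeros):
            return candidate
        nonce += 1
```

With a plain proof, the prover would simply hand over `find_witness(...)`'s result; the point of the zero-knowledge part is that the verifier learns only that `satisfies_statement` holds for some secret input.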

A

A

A

Most people in the room are probably familiar with SNARKs. In SNARKs, the verifier runs in fixed time, or almost fixed time, regardless of the size of the computation, so it's actually faster than the computation itself, while the prover running time is longer: there is overhead to run the prover for SNARKs. Some of you also know Bulletproofs. The main drawback of Bulletproofs compared to STARKs and SNARKs is that they're not succinct. That means that both the prover and also the verifier usually take longer than the computation.

A

Then, for a computation where the STARK prover takes one second, the prover for ZK-SNARKs takes about eight seconds. This is actually an experiment done on two machines, one single-core and one multi-core: the comparison between STARKs and SNARKs was done on the same machine, which is the multi-core one, and the comparison between STARKs and Bulletproofs is on the right side. It seems to hold in most cases: you can see the STARK prover is about 10 times faster than the SNARK prover, and 100 times faster than the Bulletproofs one. And when it comes to the verifier time... By the way, the times I mention here for STARKs come from the academic code. This code is available on GitHub, and it's not very well optimized.

A

You can still see that the verifier time for STARKs and SNARKs is about the same, while for Bulletproofs it's something like a thousand times slower. So the drawback is that when you want to use Bulletproofs for anything that should be succinct, it's very hard to do so, even if you want to. There were a few talks about using Bulletproofs for range proofs.

A

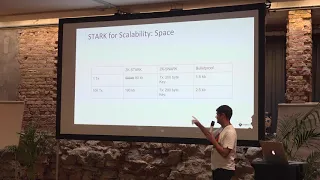

Okay, so another comparison, this time of space, and here you can see the STARK's main drawback: the STARK proofs from the original paper are something like five hundred kilobytes. We did manage, in something like three or four months of work, to reduce this number to 80 kilobytes, and I really hope, especially as I'm doing the product, that we will be able to take this number down by another factor of five to ten, but that's just wishful thinking. And yeah. Okay.

B

A

In SNARKs, the proof size is fixed, at something around two hundred bytes. In Bulletproofs, the size is about 1.5 kilobytes, still much better than STARKs in this category. But what is important to notice is that when you go from proving one shielded transaction to a batch of 10,000 shielded transactions, the proof size only grows to less than 200 kilobytes. So now you can prove 10,000 shielded transactions, and this time you are not paying linearly, not in the verification time and not in the proof size.
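A quick back-of-the-envelope sketch of what "not paying linearly" means for proof size, using the rounded figures quoted above (with 1 KB taken as 1,000 bytes) and assuming, for the comparison only, one SNARK proof per shielded transaction. Both are simplifying assumptions, not measurements.

```python
# Rounded figures quoted in the talk (1 KB taken as 1,000 bytes).
SNARK_PROOF_BYTES = 200            # "something around two hundred bytes"
STARK_BATCH_PROOF_BYTES = 200_000  # "less than 200 kilobytes" (an upper bound)
BATCH_SIZE = 10_000                # shielded transactions covered by one STARK proof


def bytes_per_transaction(proof_bytes: int, transactions_covered: int) -> float:
    """Amortized proof bytes that each transaction carries."""
    return proof_bytes / transactions_covered


# Assumption: one SNARK proof per shielded transaction (no SNARK batching).
snark_per_tx = bytes_per_transaction(SNARK_PROOF_BYTES, 1)
stark_per_tx = bytes_per_transaction(STARK_BATCH_PROOF_BYTES, BATCH_SIZE)
print(f"SNARK: {snark_per_tx:.0f} B/tx, STARK batch: at most {stark_per_tx:.0f} B/tx")
```

Under these assumptions the batched STARK carries at most about 20 bytes per transaction, even though a single STARK proof is far larger than a single SNARK proof.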

A

Of course, it will still be around 200 kilobytes, and it grows logarithmically with the size of the computation. One thing that I didn't explain here is that in SNARKs there is a key generation for every different circuit, for every different NP statement that you want to prove, and the size of the key that the prover holds grows linearly with the size of the computation.

A

So if the size of the key in Zcash, for example, is now 900 megabytes, and I think that in the next version they are going to get it down to something like 40 megabytes, then still, when you want to prove a batch of 10,000 shielded transactions, you have to keep in memory a key which is 10,000 times larger. So this is a major drawback, aside from the trusted setup.

A

A

A

There's much more. I'll be happy to talk about the other use cases later, and also on Sunday, at the sharding day; there are also a few relevant use cases there for STARKs. So, basically, some potential uses of STARKs, and I'm talking now about both the second layer and the base layer. The first thing that you can talk about is that you can build a solution by batching. What I mean here is that, basically, if you think about it... okay. We can talk about scalability in two components. The first one is transmission.

A

What do I mean by this? When you're sending a transaction, most of the time you are sending metadata, or the data that you need to update in the DB, and together with this you are also sending some proof. This proof comes in the form of, for example, a signature, and the signature sometimes takes more than 50 percent of what you are going to transmit.

A

You can save on this, up to a logarithmic saving, with STARKs, and I will get into it in more detail in the next slides. The same goes for computation, because basically, in Ethereum, one of the ideas is that in order to verify your computation I'm going to have to run it. So say somebody sends a transaction, and the transaction costs 500,000 gas.

A

You cannot, like... he cannot put too many of those transactions inside a block, because he expects that each and every node in the network is going to run the same computation to validate that his computation was done correctly. What you could do with STARKs is say: okay, instead of sending a thousand transactions that each spend five hundred thousand gas, I will send one transaction. This transaction will prove that the computation was done correctly for all those thousand computations, and it will only cost what verifying that proof costs.

A

A

So, fast syncing: basically, I'm sure some of you have heard about it. Right now, to synchronize in full trust, to synchronize the blockchain, what you have to do is download all the blocks yourself, run all the computation yourself, get to the final state, and then you trust it. But instead, what you could do, as some of the Ethereum clients are doing with trust, is say: okay, I...

C

A

...the blockchain, but what I'm going to do is download... For example, let's say that I already know block five million in the chain and I want to sync fast. So what I can do is say: okay, let's download the latest block. This latest block will come with a proof that the state transition from our block five million to this block is correct, and then you don't have to download all the blocks in the chain.

A

A

And the use case for privacy is the one of shielded transactions. I'm going to talk about it more in the following slides, since this will be the main topic, but I just want to mention that there were previous attempts to do it on Ethereum, and the main problem is that privacy solutions are very expensive if you try to run them as a second layer. So we want to present an idea here: use STARKs to generate a privacy solution which is possible to use and is not expensive. It has good...

A

So, let's go into a little bit more detail on scalability. As I mentioned, there are two categories: the first one is transmission and the second one is computation. When you think about transmission, you can do a few things, not just the two I'm mentioning here; I'm mentioning these because they are the simpler ones, and I assume that at least some of the audience know them.

A

The sender signs that he sent those coins to the receiver. Now, if you have a thousand of those transactions, the signatures are probably taking more than 50% of the space that you are going to transmit. What you could do instead is say: okay, I have those thousand transactions. There is still the data of sender, receiver and amount, but now I'm going to check those signatures and have a prover generate a proof, and this proof is going to say: for those thousand transactions, I've seen valid signatures. Then this proof is not very...

A

The circuit itself is not very small, but once you generate the proof, it is going to be almost fixed in size, so it doesn't matter how many transactions you are including, and the validation time will be almost instant. So you're basically now able to send more transactions in the same space. Think about Bitcoin, for example, just...

A

A very simple example: in Bitcoin you have a block size of one megabyte. Let's talk about before SegWit, where you have a block size of one megabyte and something like 50% of this block size is signatures. So what you could do to speed up both transmission and verification time is drop all those signatures and have the miner generate a proof that those signatures are valid, and then the proof will weigh much less than five hundred kilobytes. So there is a substantial saving in transmission and also in the verification time of the block.
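The saving can be estimated with simple arithmetic. This hedged Python sketch assumes the proof replaces the signatures one-for-one in block space and ignores everything else in the block; the 100 KB proof size in the second call is a hypothetical value, not a measured one.

```python
def throughput_multiplier(signature_fraction: float,
                          proof_bytes: int,
                          block_bytes: int = 1_000_000) -> float:
    """Factor by which transaction capacity grows when a block's signatures are
    replaced by a single validity proof of `proof_bytes` bytes."""
    if not 0.0 <= signature_fraction < 1.0:
        raise ValueError("signature_fraction must be in [0, 1)")
    # Bytes of non-signature data (sender, receiver, amount, ...) in a full block today.
    data_bytes = block_bytes * (1.0 - signature_fraction)
    # Without signatures, the whole block minus the proof is available for that data.
    return (block_bytes - proof_bytes) / data_bytes


# The talk's rough figures: a 1 MB pre-SegWit block with about 50% signatures.
print(throughput_multiplier(0.5, proof_bytes=0))        # upper bound, proof size ignored
print(throughput_multiplier(0.5, proof_bytes=100_000))  # hypothetical 100 KB proof
```

The first call gives the "about two times" ceiling for removing signatures; any real proof size pulls the factor somewhat below that.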

A

A somewhat familiar use case is the one of stateless clients, and the basic way to implement them as a contract is the following. When you have a payment system, you can either save the balances on chain or save the balances off chain, and if you want to save the balances off chain, you can keep on chain just the root of the state of the accounts. Now, what you could do is say: a thousand people want to send transactions, so they're sending transactions, and they are sending the signatures.

A

...which we already mentioned how we are going to drop, and they are also sending a Merkle path that shows that they do have the value, the balance that they are claiming to have in their accounts, and this Merkle path goes to the state root. Now, the problem with this implementation is that if a thousand transactions send a thousand Merkle paths, or two thousand Merkle paths, then the block is going to be very big, and the transmission costs on the network are big.
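For reference, a minimal sketch of the Merkle path each sender would attach, assuming a binary SHA-256 tree over a power-of-two number of account leaves (the talk doesn't specify the hash or the tree shape, and the helper names are hypothetical). The path holds one sibling hash per tree level, which is why a thousand of them add up quickly.

```python
import hashlib


def h(data: bytes) -> bytes:
    return hashlib.sha256(data).digest()


def merkle_root(leaves):
    """Root over a power-of-two list of leaf hashes."""
    level = list(leaves)
    while len(level) > 1:
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
    return level[0]


def merkle_path(leaves, index):
    """Sibling hashes from leaf `index` up to the root."""
    path = []
    level = list(leaves)
    while len(level) > 1:
        path.append(level[index ^ 1])  # the sibling differs only in the lowest bit
        level = [h(level[i] + level[i + 1]) for i in range(0, len(level), 2)]
        index //= 2
    return path


def verify_path(leaf, index, path, root):
    """Recompute the root from a leaf and its path; even indices are left children."""
    node = leaf
    for sibling in path:
        node = h(node + sibling) if index % 2 == 0 else h(sibling + node)
        index //= 2
    return node == root
```

With a hypothetical tree of 2^20 accounts, each path is 20 × 32 = 640 bytes, so a thousand paths come to about 640 KB before any signatures are counted.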

A

What you could do instead is use the same scheme, but instead of sending Merkle paths, have a proof that you've seen a thousand Merkle paths to this state root, and that the state root is, let's say, A, and now the new state root is B. Then you are not storing the state on the chain anymore, you don't have to do costly updates or stores, and the verification time is very, very fast.
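The on-chain side of this scheme can be modeled as a contract that stores only the current state root and accepts a batch when a single proof attests to the transition from A to B. In this Python sketch the `verify` callable is a hypothetical stand-in for a STARK verifier, and the class name is illustrative; the toy verifier in the tests is deliberately insecure and only exercises the control flow.

```python
from typing import Callable

# Hypothetical verifier interface: verify(old_root, new_root, proof) -> bool.
Verifier = Callable[[bytes, bytes, bytes], bool]


class StateRootContract:
    """Keeps only the root of the accounts' state on chain."""

    def __init__(self, genesis_root: bytes, verify: Verifier):
        self.root = genesis_root
        self._verify = verify

    def apply_batch(self, old_root: bytes, new_root: bytes, proof: bytes) -> bool:
        # The batch must build on the current root; otherwise it is stale.
        if old_root != self.root:
            return False
        # One proof stands in for all the Merkle paths and signatures in the batch.
        if not self._verify(old_root, new_root, proof):
            return False
        self.root = new_root  # a single storage update, however many accounts changed
        return True
```

The design choice is that the chain's storage does not grow with the number of accounts touched: all per-account checking moves into the proof.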

A

So if I need to give an estimation of the savings that you can get: by removing the signatures, you get about two times more throughput, and by removing the Merkle paths (you still have to send some data), you can get something like [inaudible] times more throughput, asymptotically.

A

So I took one point: we actually have a table, and this table is very, very detailed and goes up to pretty large computations. You can see part of it in the STARK paper. If you go to the graph, you will see the prover time, verifier time and proof size, and what you see there was for one shielded transaction. So if you want to find the time it will take for thousands of shielded transactions, the time is almost linear: it's T log T. So it's almost linear.
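The "almost linear" remark can be made concrete. Assuming prover time grows like $t(T) \approx c\,T\log T$ for an execution trace of length $T$, and that a batch of $k$ shielded transactions needs a trace $k$ times as long as a single one ($T_1$), the slowdown relative to one transaction is:

$$
\frac{t(kT_1)}{t(T_1)} = \frac{kT_1\log(kT_1)}{T_1\log T_1} = k\left(1 + \frac{\log k}{\log T_1}\right)
$$

For example, with a hypothetical single-transaction trace of $T_1 = 2^{20}$ steps and $k = 1000 \approx 2^{10}$, the factor is about $1000 \times 1.5 = 1500$, only about 50% above strictly linear.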

A

You can go to the tables in the STARK paper, or you can calculate it yourself from this presentation. But what is the number that I presented? It was for one shielded transaction, okay. So the second use case in scalability is computation. Has anyone heard about probabilistic micropayments? Yeah? Okay, so perfect. So the basic idea is... I like to think about it more as lottery tickets.

A

Basically, the idea is that instead of generating payments where each and every one of them has to be claimed on chain, what two parties can do, if they want to stream payments, is decide that whoever wants to pay is going to give lottery tickets as payments. This lottery ticket has, let's say, a one-in-ten-thousand chance to win, but the payer cannot cheat with the probability of winning of the lottery ticket. So...

A

Let's say side A wants to pay side B in lottery tickets, so he's paying him with one-in-ten-thousand, or one-in-a-hundred-thousand, lottery tickets. The nice thing about this scheme is that the other side knows all the time that he's been paid, but out of ten thousand payments, just one is going to be claimed on chain. So this is the main idea.
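A minimal Python sketch of the lottery-ticket idea, assuming a simple commit-reveal construction (one of several possible designs; the talk doesn't specify one): for each ticket, the payee first commits to a fresh random secret, the payer then picks a random nonce, and the ticket wins if the hash of both falls below a threshold. The payer's signature over the ticket is omitted, and all names are hypothetical.

```python
import hashlib
import secrets

WIN_ODDS = 10_000               # one winning ticket in ten thousand, on average
THRESHOLD = 2**256 // WIN_ODDS  # a ticket wins if its hash is below this value


def new_secret() -> bytes:
    """Fresh per-ticket randomness chosen by the payee."""
    return secrets.token_bytes(32)


def commit(payee_secret: bytes) -> bytes:
    """Published by the payee before the payer picks a nonce."""
    return hashlib.sha256(payee_secret).digest()


def is_winning(payee_secret: bytes, payer_nonce: bytes) -> bool:
    # The payee fixed its secret (via the commitment) before seeing the nonce, and
    # the payer chose the nonce without knowing the secret, so neither side alone
    # can steer the outcome.
    digest = hashlib.sha256(payee_secret + payer_nonce).digest()
    return int.from_bytes(digest, "big") < THRESHOLD


def claim_is_valid(commitment: bytes, payee_secret: bytes, payer_nonce: bytes) -> bool:
    """What an on-chain claim would check: the revealed secret matches the
    commitment and the ticket actually wins."""
    return commit(payee_secret) == commitment and is_winning(payee_secret, payer_nonce)


def face_value(payment_per_ticket: int) -> int:
    """A winner pays WIN_ODDS times the per-ticket amount, so each ticket is
    worth `payment_per_ticket` in expectation."""
    return payment_per_ticket * WIN_ODDS
```

`claim_is_valid` is the per-ticket check whose gas cost the next part of the talk is about.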

A

The reason that I'm mentioning it here is that claiming winning tickets can still be expensive in gas, because there is a lot of computation involved. The cost per ticket can easily go to, let's say, 100 or 200 thousand gas per ticket, and it's really just to prove that the ticket is winning. So what you could do instead is add, to the lottery-ticket verifier contract...

A

You could let a STARK do the verifying, and this STARK would say: okay, I know how to verify a batch, let's say a batch of a thousand lottery tickets. Then what you would do is generate the proof for all the valid tickets: for every hundred or thousand valid tickets, you generate one proof of their validity, together with the number of tickets, and then you can submit one proof to the...

A

...something that is almost fixed in gas cost, and the verifier has validated thousands of tickets for much less than what it would cost to validate them separately. And there are many more use cases here. You can think about some use cases like CryptoKitties, for instance, where each transaction was 300,000 gas; they could maybe implement it differently.

A

B

A

A

...client, and I have a problem, because for every day that I want to sync... Let's say that I wasn't in sync for one month and now I want to sync my light client. Then I have to download about... I took rough numbers here, so I'm not sure they are the correct ones, but let's say that I have to download something like three megabytes of headers per day, just to know what the latest is.

A

What is the latest block header? And for some users, for example if you have your light client on a phone, maybe that's too much. So what you could do instead is have some entity (it can be nodes, but it can also be an entity outside of the blockchain) generate a proof, let's say for every day, just as checkpoints: every day, for one block, you generate a proof that this block has behind it such-and-such an amount of...

C

A

A

...this block specifically has this amount of power behind it. The client can sync much faster, because it only needs to download this block, maybe a few others after it, but it doesn't need to download all the blocks in the blockchain, or the other block headers in the blockchain. A little bit more complicated, from the perspective of the prover, is to do the same for blocks: now what you want to do is take, for example, a series of blocks and prove that they are valid.

A

That means that you want to prove that the computation done in the block is valid. You can do it for every block, you can do it once every thousand blocks, you can even do it recursively, but the point is that now the amount of data that needs to be downloaded and computed in order to validate the latest state with full trust is much, much, much lower, and the verification time will be much faster. Now...

A

A

E

E

They're both non-interactive for good reason, but I now think that there is actually value in interactive proofs as well, in the context of light clients, because the light client could actually pay for the proof, and the proof has to be such that the light client cannot resell it later. The proof has to be unique: it has to be such that it can only be used by somebody who paid for it and not by anybody else, so you basically cannot extract it without revealing some of your secrets, or something like that.

B

A

Yeah, yeah. So, basically, okay, the easiest way for me to think about it is the following: computation is just a trace. Let's say that I put it in memory on the side. Now, okay, there is no memory, so computation is basically a trace. Each line of the trace tells you what the state of the registers is; okay, represent it like this. Then the next step that we are going to do is represent it in, let's call them stages, or rows.

A

So for each row, you're going to create, basically, a constraint. This constraint is going to be a polynomial, and it is going to show that the transition of the computation from the previous row to the next one was done correctly. How is it going to show it? Because it's going to be zero exactly for this transition. So, for example, you can think about an addition: then the constraint is going to be zero only if the result in the next row is the correct result of the addition of...

A

Roughly, that's the idea. So now you have all those constraint polynomials, and they represent your computation. The next part I'm not going to go into, but there are some polynomial tricks to force knowledge of the correct computation, making the constraint zero over the whole computation, and this verification, that you do know the correct polynomials that give zero when you substitute them into the constraint polynomial, is comparatively fast and easy to verify. There is another part that I didn't put there, which is low-degree testing.
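Here is a toy version of the trace-and-constraint idea in Python. It is a sketch under loud assumptions: a tiny prime field (p = 97), a Fibonacci trace (each row is the sum of the two previous rows) standing in for the "addition" example, and divisibility by a vanishing polynomial as one standard way to realize the "polynomial trick" that shows the constraint is zero on every row. It leaves out everything that makes a STARK succinct and secure, including commitments, low-degree testing and zero knowledge; all names are hypothetical.

```python
P = 97                           # toy prime field; real systems use far larger fields
N = 8                            # trace length, a power of two dividing P - 1
OMEGA = pow(5, (P - 1) // N, P)  # 5 generates the nonzero elements mod 97, so OMEGA has order N


def fib_trace(a0: int, a1: int, n: int = N) -> list:
    """Execution trace: one register per row, with row[i+2] = row[i+1] + row[i]."""
    rows = [a0 % P, a1 % P]
    while len(rows) < n:
        rows.append((rows[-1] + rows[-2]) % P)
    return rows


def interpolate(values: list) -> list:
    """Coefficients (lowest degree first) of f with f(OMEGA**i) = values[i], via an inverse DFT."""
    n = len(values)
    n_inv = pow(n, P - 2, P)
    w_inv = pow(OMEGA, P - 2, P)
    return [n_inv * sum(v * pow(w_inv, i * k, P) for i, v in enumerate(values)) % P
            for k in range(n)]


def constraint_poly(f: list) -> list:
    """C(x) = f(OMEGA^2 x) - f(OMEGA x) - f(x), so C(OMEGA**i) = row[i+2] - row[i+1] - row[i]."""
    return [c * (pow(OMEGA, 2 * k, P) - pow(OMEGA, k, P) - 1) % P for k, c in enumerate(f)]


def poly_mul(a: list, b: list) -> list:
    out = [0] * (len(a) + len(b) - 1)
    for i, x in enumerate(a):
        for j, y in enumerate(b):
            out[i + j] = (out[i + j] + x * y) % P
    return out


def vanishing_poly(num_rows: int) -> list:
    """Z(x) = product of (x - OMEGA**i) over the rows where the constraint must hold."""
    z = [1]
    for i in range(num_rows):
        z = poly_mul(z, [-pow(OMEGA, i, P) % P, 1])
    return z


def poly_divmod(num: list, den: list) -> tuple:
    """Long division over F_P; returns (quotient, remainder)."""
    num = list(num)
    quot = [0] * max(len(num) - len(den) + 1, 1)
    lead_inv = pow(den[-1], P - 2, P)
    for shift in range(len(num) - len(den), -1, -1):
        factor = num[shift + len(den) - 1] * lead_inv % P
        quot[shift] = factor
        for j, d in enumerate(den):
            num[shift + j] = (num[shift + j] - factor * d) % P
    return quot, num[:len(den) - 1]


def transitions_valid(rows: list) -> bool:
    """The constraint is zero on rows 0..N-3 exactly when Z(x) divides C(x)."""
    c = constraint_poly(interpolate(rows))
    _, remainder = poly_divmod(c, vanishing_poly(N - 2))
    return all(r == 0 for r in remainder)
```

Changing a single row makes the constraint nonzero at some point of the domain, so the remainder stops being zero; in a real STARK the verifier checks this relation probabilistically at a few random points instead of doing the division itself.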

A

You want to prove, basically, that you are using low-degree polynomials, and not something else, for this equation. But this is the more complicated part. A STARK is basically this, and what it means is that each and every computation you want to check, you have to transform into a constraint system, okay. So, by the way, what they did in the STARK paper: they didn't take a specific statement; they implemented a constraint system for something called TinyRAM.

A

A

F

A

I don't know about that, but I would say something: in SNARKs they are using R1CS, rank-one constraint systems, and in STARKs you can use polynomials of the first degree, but it's actually a saving to use polynomials of higher degrees, and we call them AIRs. I think that the only place I know of where you can read about them is the STARK article, for now. I hope that at some point there will be a DSL or something like that for those constraint systems as well. Thanks.

A

F

A

What we want is to create a contract. This contract will be on Ethereum, and it will enable shielded transfers of value over Ethereum. It can be a shielded transfer of ether; it can also be a shielded transfer of any ERC-20 the contract supports. The way that you can think about it is that there is some contract: if you want to move your tokens or ether to shielded mode, what you do is deposit your ether or your tokens in this contract. The contract has a pool.

A

...of those tokens, and once you've done that, you can privately transfer this value inside the contract. So let's say that Alice puts one ether, or ten DAI, into the contract. Well, the first deposit into the contract will, of course, be public, totally not private, but from then on Alice can transfer that value secretly, or privately, inside the contract, and once she's finished she can, of course, withdraw ether or tokens from the contract.

A

A

So there was one previous attempt... Okay, first, one important question: how many of you know the basics of how Zerocash or Zcash work? The basics? Okay, so I'll give a really short presentation about it. I just wanted to mention that there was already one attempt, and this attempt basically included implementing some precompiled contracts for SNARKs. They were not implemented in a way that lets you run the verification in one contract. It's...

A

I think that if you want to implement shielded transactions on top of this, you have two main problems. The first one is that doing one SNARK verification takes about two million gas, so you wouldn't get far with your throughput there. The second one is that if you want to change the NP statement, like, I don't know... in SNARKs you basically need the trusted setup. So what you can do is use someone else's trusted setup, assuming that you trust the people behind that trusted setup, yeah?

A

So what you could already do is use the exact NP statement used in Sapling in Zcash, and then you don't have to generate your own trusted setup. But even if you change it a little bit, you're going to have to regenerate the trusted setup again. So it's a little bit... Oh, yeah.

A

F

C

A

...using Zcash's, or you can do it yourself, as long as the people that are using your system trust this trusted setup. Then it's possible. Multi-party computation is another topic; I'll be happy to discuss it later. The downside of this... okay, I want to give a really, really short explanation of how Zcash works, because it's crucial to explain shielded transactions.

A

A

This is the first thing to understand: all the commitments are stored in one Merkle tree. So, in order to prove that you have value, you should prove that you control it: that you know a Merkle path from some root in the past to the commitment that you want to use, and you also need to show that you know the secret key that fits the public key behind this commitment. Yeah, it's part of the proof.

A

So the way to do it in zero knowledge is this: when I want to transfer value, instead of saying publicly, hey, I know this commitment with this value and this public key, and I hold the secret key of this public key, instead of doing that, we say: hey, I know some commitment in this tree (you are given the root of the tree). So I know some commitment in this tree, and for this commitment I know the secret key.

A

A

Yeah. So the proof basically proves a few things, and I will repeat this later. It proves that you know the commitment. It proves that you know the secret key that leads to the commitment. And it also proves that the amounts match: on the spender side, the sender and the receiver get the same value, so no value is created or destroyed.

A

Okay, there is one problem left to solve, or many, but one major problem to solve: how does one prevent me from saying, at two separate times, hey, I know a commitment in the tree, and then, five blocks later, saying again, hey, I know a commitment in the tree, and using the same commitment? In other words, how do you prevent double spending? So the way it's being done:

A

There is a unique nullifier related to each commitment, and when I spend the commitment, I'm not going to show which commitment I'm spending, but I am going to reveal the nullifier related to this commitment. Going back from the nullifier to the commitment is hard, or not possible, but part of the proof is to show that this nullifier is related to the commitment that you are spending. So if I show a nullifier one time, I cannot use it again. That's a consensus rule.

D

A

Okay, so again: when I want to spend value, I prove in zero knowledge that I know some commitment that belongs to some root presented before, that I know the secret key that fits this commitment, and I reveal a nullifier that also fits this commitment, and this nullifier I show to the blockchain. So I'm basically saying: hey, I spent some commitment, you don't know which commitment it is, but here's the nullifier related to this commitment, which shows that I can't spend it again.

A

Okay, this is the basic idea. So every time that I want to make a transaction, I present nullifiers for commitments that I keep secret. I'm saying: hey, I know these nullifiers; these are the nullifiers of commitments that I know, which I'm not telling you, and here's a proof that those nullifiers are related to those commitments, that I know the secret keys related to those commitments, and that I know a path from those commitments to some root presented in the past.

A

And: hey Bob, here's your new commitment, with your public key on it and this value. There are ways to do it on chain and ways to do it off chain, but basically... So this is the payment scheme; it's based on zero-knowledge proof verification. Okay, let's go back one slide. So we have three players in this scheme that I'm going to present. The first one is the user: the user wants to pay, he wants to generate the transaction...

A

A

A

The way that you can imagine it: imagine that you want to implement shielded transactions on Ethereum. Let me tell you what you are going to do. You are going to create the logic that stores the Merkle root of the commitments, you are going to create the logic that makes sure that no nullifier is spent more than once, and you are going to create the logic to verify those proofs, right.

A

Once you have those three, you are able to have a contract that runs a shielded transaction system. Okay, so the user and the contract are known, familiar players. What we are adding here is the prover service, and the idea of this prover service is that he doesn't have to be trusted. He also cannot censor transactions, but what he can do is take multiple shielded transactions (he doesn't know to whom those transactions are paid), take a batch of shielded transactions, and generate a proof for all those transactions together.

A

Okay, so what does the sender need to prove? He needs to prove that the input coins, that is, the commitments he is spending, exist and that he owns them, meaning that he knows the private key related to them. He also knows the nullifiers related to them. He also needs to prove that the sum of the inputs equals the sum of the outputs, so that he is not creating or destroying value. And all of this is done in zero knowledge.
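To make the sender's statement concrete, here is a minimal Python sketch that checks, in the clear, the relation a zero-knowledge proof would attest to. The note format is an assumption for illustration, not the construction from the talk: SHA-256 as the hash, `public_key = H("pk", sk)`, `commit = H("cm", pk, value, salt)`, `nullifier = H("nf", sk, salt)`, and a plain set `known_commitments` standing in for Merkle-tree membership. In the real system the prover shows these checks pass without revealing `sk`, the values, or the salts.

```python
import hashlib
from dataclasses import dataclass

VALUE_BITS = 64  # assumed width of a note value


def h(*parts: bytes) -> bytes:
    """Illustrative hash: SHA-256 over the concatenated parts."""
    return hashlib.sha256(b"".join(parts)).digest()


def public_key(sk: bytes) -> bytes:
    return h(b"pk", sk)


def commit(pk: bytes, value: int, salt: bytes) -> bytes:
    return h(b"cm", pk, value.to_bytes(VALUE_BITS // 8, "big"), salt)


def nullifier(sk: bytes, salt: bytes) -> bytes:
    return h(b"nf", sk, salt)


@dataclass
class InputNote:   # secret witness held by the sender
    sk: bytes
    value: int
    salt: bytes


@dataclass
class OutputNote:  # new note for a recipient (or change back to the sender)
    pk: bytes
    value: int
    salt: bytes


def in_range(v: int) -> bool:
    return 0 <= v < 2 ** VALUE_BITS


def statement_holds(inputs, outputs, known_commitments, pub_nullifiers, pub_commitments) -> bool:
    """Checks in the clear what the proof would establish without revealing the witness."""
    if not all(in_range(n.value) for n in inputs + outputs):
        return False  # without this, a negative output could mint value
    for n in inputs:
        # existence + ownership: the sender knows the sk behind a known commitment
        if commit(public_key(n.sk), n.value, n.salt) not in known_commitments:
            return False
    # the published nullifiers are exactly the ones derived from the inputs
    if [nullifier(n.sk, n.salt) for n in inputs] != pub_nullifiers:
        return False
    # the published new commitments are exactly the outputs
    if [commit(o.pk, o.value, o.salt) for o in outputs] != pub_commitments:
        return False
    # no value created or destroyed
    return sum(n.value for n in inputs) == sum(o.value for o in outputs)
```

Only the nullifiers and new commitments are public inputs here, matching the talk; everything inside `InputNote` and `OutputNote` would stay secret.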

A

So that means no data is revealed about the input coins, the values, or the public keys. Everything remains secret, and what goes into the transaction as data are the nullifiers and the new commitments. Any questions so far? Okay, so the part of the contract: as we said, the contract's job is to verify the proof that the shielded transaction is valid. So this is one part; think of it as the on-chain contract.

A

A contract that calls validate gets the proofs and the data, and validates that the proof is correct when run on the data. Besides that, it also needs to do its own part of the work, meaning it needs to verify that the nullifiers haven't been presented yet, so they are not being spent more than once, and it needs to update the relevant state on-chain. So, for instance, if you think about the case of mixers and Zcash, then every time they get new commitments, they need to add them to the Merkle tree.

A

A

A little bit of technical detail: nullifiers are 256-bit values, and the way we chose to implement it is that they are stored on-chain, in the contract's storage, so that future contract calls that receive nullifiers can check whether a given nullifier has been presented before or not. So one way to implement it is to store each nullifier using a single store operation, and checking that a nullifier does not exist yet is another, load, operation.
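The contract's side can be sketched as a toy Python class, assuming a dict in place of contract storage and a hypothetical `verify` callback in place of the STARK verifier. It follows the steps described above: check that the proof refers to a known root, verify the proof, reject any nullifier already in storage (one load each), then store each new nullifier (one store each). The `new_commitments` list is only a placeholder for adding commitments to the Merkle structure.

```python
from typing import Callable, List, Set

Verifier = Callable[[bytes, bytes], bool]  # (proof, public_input) -> accept?


class ShieldedPool:
    """Toy model of the contract logic; a dict stands in for contract storage."""

    def __init__(self, verify: Verifier, initial_root: bytes):
        self.verify = verify                    # hypothetical STARK verifier hook
        self.storage: dict = {}                 # 256-bit key -> value, like storage slots
        self.loads = 0                          # storage reads (load operations)
        self.stores = 0                         # storage writes (store operations)
        self.known_roots: Set[bytes] = {initial_root}
        self.new_commitments: List[bytes] = []  # placeholder for the Merkle structure

    def _load(self, key: bytes):
        self.loads += 1
        return self.storage.get(key)

    def _store(self, key: bytes, value: bytes) -> None:
        self.stores += 1
        self.storage[key] = value

    def submit(self, proof: bytes, root: bytes,
               nullifiers: List[bytes], commitments: List[bytes]) -> bool:
        if root not in self.known_roots:
            return False                        # proof must refer to a root seen before
        public_input = root + b"".join(nullifiers) + b"".join(commitments)
        if not self.verify(proof, public_input):
            return False
        if len(set(nullifiers)) != len(nullifiers):
            return False                        # same nullifier twice in one transaction
        for nf in nullifiers:
            if self._load(nf) is not None:      # one load per nullifier check
                return False                    # already spent
        for nf in nullifiers:
            self._store(nf, b"\x01")            # one store per nullifier
        self.new_commitments.extend(commitments)
        return True
```

All nullifiers are checked before any is written, so a rejected transaction leaves storage untouched.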

A

A

As for the commitments: when the contract gets a proof, it gets a proof that says, "Hey, I know a root, and under this root I know a commitment, but I'm not telling you which commitment it is." So the contract needs to be able to know all the roots from before, but we don't want the contract to store the entire Merkle tree. There are a few ways to do it. I'm presenting one that I like, because I think it's nice, but it's definitely not the only way.
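What the proof asserts, "I know a commitment under this root", is Merkle-tree membership. The sketch below shows that check in the clear, assuming SHA-256 over the concatenation of two child hashes and a power-of-two number of leaves; in the scheme from the talk this check runs inside the zero-knowledge proof, so the contract only ever sees the root.

```python
import hashlib
from typing import List, Tuple


def h(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(left + right).digest()


def build_levels(leaves: List[bytes]) -> List[List[bytes]]:
    """All levels of a Merkle tree, from the leaves up to the single root."""
    assert leaves and len(leaves) & (len(leaves) - 1) == 0, "power-of-two leaves assumed"
    levels = [leaves]
    while len(levels[-1]) > 1:
        prev = levels[-1]
        levels.append([h(prev[i], prev[i + 1]) for i in range(0, len(prev), 2)])
    return levels


def auth_path(levels: List[List[bytes]], index: int) -> List[Tuple[bytes, bool]]:
    """Sibling hashes from leaf to root; the flag is True when the sibling is on the right."""
    path = []
    for level in levels[:-1]:
        sibling = index ^ 1
        path.append((level[sibling], sibling > index))
        index //= 2
    return path


def verify_membership(leaf: bytes, path: List[Tuple[bytes, bool]], root: bytes) -> bool:
    node = leaf
    for sibling, sibling_is_right in path:
        node = h(node, sibling) if sibling_is_right else h(sibling, node)
    return node == root
```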

D

A

We want to get commitments. Let's say we are getting, I don't know, one thousand commitments in a block, and we want to be able to verify later that those commitments exist, even though we don't want to store the whole Merkle tree in memory. Okay, so one way to do it goes by different names. I will call it a forest of Merkle trees, just because it's easier that way, and you implement it in the way that I will explain.

A

A

With the scheme I will show right now, a single commitment costs just two updates on average, and if you have a thousand commitments you still pay something like ten updates on average. So basically the price the contract pays for the commitments stays almost fixed: just a fixed number of updates, no matter how many commitments it receives.

A

A

Notice that now it has in memory just the first commitment. So when you get the second commitment, it knows that it is now going to create a larger Merkle tree, and it's going to store, again, just the root. It doesn't remember c1 and c2 anymore; it just remembers the root. Okay, the next commitment is going to sit here, so it's going to remember it. Want to guess what's going to happen next? Yep.

C

A

A

A

It doesn't have to do all the hard work of storing the entire Merkle tree in its memory. Okay, and it continues the same way: when the fifth commitment comes, it is going to put it in as a root of its own; then the sixth commitment comes, and it will take the previous root and connect them together, and then it doesn't remember the entire state under the root anymore, just the root. Okay, so you get the idea.
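The forest of Merkle trees behaves like a binary counter (the same shape is also known as a Merkle mountain range). Below is a minimal Python sketch under assumed details: SHA-256 for internal nodes, one storage slot per tree height, and the older subtree always hashed on the left. `writes` counts how many slots change, which is how this sketch models the talk's "updates".

```python
import hashlib
from typing import Dict, List, Optional


def h(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(left + right).digest()


class MerkleForest:
    """Keeps only the root of each perfect subtree, one slot per height."""

    def __init__(self) -> None:
        self.slots: Dict[int, Optional[bytes]] = {}  # height -> root kept "on-chain"
        self.writes = 0                              # storage slots changed so far

    @staticmethod
    def _fold(peaks: Dict[int, Optional[bytes]], leaf: bytes) -> None:
        """Add one leaf to an in-memory copy of the peaks, merging equal heights."""
        node, height = leaf, 0
        while peaks.get(height) is not None:
            node = h(peaks[height], node)  # older subtree on the left
            peaks[height] = None           # its children are no longer remembered
            height += 1
        peaks[height] = node

    def append_batch(self, leaves: List[bytes]) -> None:
        peaks = dict(self.slots)           # fold the whole batch in memory first
        for leaf in leaves:
            self._fold(peaks, leaf)
        for height, root in peaks.items(): # then write only the slots that changed
            if self.slots.get(height) != root:
                self.slots[height] = root
                self.writes += 1

    def roots(self) -> List[bytes]:
        """Current roots, smallest tree first."""
        return [r for _, r in sorted(self.slots.items()) if r is not None]
```

Appending one commitment at a time costs about two slot writes on average (2047 writes for 1024 single appends), while a batch is folded in memory and then writes at most one slot per height; a batch of 1000 into an empty forest writes 6 slots, one per set bit of 1000.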

A

C

A

Nullifiers and two commitments; so, transmission costs. I'm talking about STARKs. Yes, so the nullifiers, the commitments, and the roots of the Merkle tree under which you want to prove that you know the commitments are, together, 192 bytes. Now, the STARK proof for one shielded transaction will be 80 kilobytes, so that is going to lead the cost here. The transmission cost in total would be 1.5 million gas, which is pretty high, and then there is the computation cost of running the verifier.

A

C

A

How much, or how exactly, is this compression effective? So when we are talking about 100 shielded transactions, in numbers the proof is going to be a little bit larger, 125 kilobytes. We also have the linear component of the transmission, the nullifiers, commitments and roots, and the computation cost of running the verifier will stay almost the same.
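The amortization argument is simple arithmetic: one proof is shared by the whole batch, while the 192 bytes of public data per transaction grow linearly. A quick check with the figures quoted in the talk, assuming 1 kilobyte = 1024 bytes:

```python
KB = 1024                  # assumption: 1 kilobyte = 1024 bytes
PUBLIC_DATA_PER_TX = 192   # nullifiers, commitments and roots, per the talk


def bytes_per_tx(proof_bytes: int, n_tx: int) -> float:
    """One proof is shared by the batch; the public data is paid per transaction."""
    return proof_bytes / n_tx + PUBLIC_DATA_PER_TX


single = bytes_per_tx(80 * KB, 1)      # one shielded transaction, 80 KB proof
batched = bytes_per_tx(125 * KB, 100)  # 100 transactions, 125 KB proof
print(single, batched, round(single / batched, 1))
```

That is roughly 82 KB per transaction on its own versus under 1.5 KB per transaction in a batch of 100, about a 56-fold reduction in transmitted bytes.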

D

A

So, okay, this is already much better. We still have, of course, as you can see, one issue: putting 14.5 million gas in a block is not possible right now, so there are several ways to solve it. The best way, which we hope to get to soon, though it's not promised, is just to make the proof smaller; this is what we would like, and this is what would happen. But there are a few other options, as follows. We can split the proof across several blocks.

A

A

There are a few improvements which are not quantum-resistant, and they will probably make the verifier a little bit more expensive, but they can cut the length of the proof roughly by a factor of three or four. So this we can maybe already use today. And the third option is to choose interactive proofs, which Alexei mentioned briefly before.

A

What we are talking about here are non-interactive proofs, meaning that you submit a proof and the verifier can verify it immediately. But there is also a variation with a somewhat shorter proof, where you work interactively with a verifier. Generally speaking, in our case that means we just need randomness from an outside source. If there were some random beacon on the blockchain, that is something that would also enable us to make the proof shorter. Okay.
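The difference between the two variants comes down to where the verifier's random challenges come from. Non-interactive STARKs derive them by hashing the prover's own messages (the Fiat-Shamir heuristic), so a prover can recompute them offline and retry; randomness that only appears after the prover has committed, such as a random beacon on the blockchain, removes that option, which is one common explanation of why fewer queries, and so a shorter proof, can suffice. The sketch below only shows the two ways of seeding query positions; the beacon is hypothetical and modulo bias is ignored.

```python
import hashlib
from typing import List


def query_positions(seed: bytes, n_queries: int, domain_size: int) -> List[int]:
    """Expand a seed into query positions in [0, domain_size)."""
    return [
        int.from_bytes(hashlib.sha256(seed + i.to_bytes(4, "big")).digest(), "big") % domain_size
        for i in range(n_queries)
    ]


def fiat_shamir_seed(prover_commitment: bytes) -> bytes:
    # Non-interactive: the seed depends only on data the prover controls,
    # so the prover can compute it (and retry with a new commitment) offline.
    return hashlib.sha256(b"fiat-shamir" + prover_commitment).digest()


def beacon_seed(prover_commitment: bytes, beacon_output: bytes) -> bytes:
    # Interactive-style: mixes in outside randomness that is published only
    # after the commitment is on-chain (hypothetical random beacon).
    return hashlib.sha256(b"beacon" + prover_commitment + beacon_output).digest()
```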

D

B

D

So this is not a problem, but just for my own understanding: if I want to send a shielded transaction and use a prover service, that would decrease the price, but it would likely increase the time I have to wait, if we're already batching a hundred transactions at a time? Correct. Yes.

A

If the proof is small enough to put in one block, then the latency will not be very high, because the prover service can actually do part of the work in parallel. So even if it gets a thousand transactions per second, or four thousand, it wouldn't take that much time to generate the combined proof. Okay, yeah. But from the point of view of the larger proof: if you need to split it across several blocks, then you incur the latency of those several blocks. If you split the proof into, let's say, ten blocks, then you're going to have to wait.
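Splitting a proof across blocks trades gas per block for latency: the proof can only be checked once its last piece has landed. A minimal sketch, assuming one chunk per block and a hypothetical `verify` callback; the chunk size and block time are parameters for illustration, not figures from the talk.

```python
from typing import Callable, Dict, List, Optional


def split_proof(proof: bytes, max_chunk: int) -> List[bytes]:
    """Cut the proof into pieces small enough to post one per block."""
    return [proof[i:i + max_chunk] for i in range(0, len(proof), max_chunk)]


class ChunkedProofBuffer:
    """Collects proof chunks posted in consecutive blocks and verifies once complete."""

    def __init__(self, n_chunks: int, verify: Callable[[bytes], bool]):
        self.n_chunks = n_chunks
        self.verify = verify              # hypothetical verifier hook
        self.chunks: Dict[int, bytes] = {}

    def post(self, index: int, chunk: bytes) -> Optional[bool]:
        """Returns None while chunks are missing, else the verification result."""
        if not 0 <= index < self.n_chunks:
            raise ValueError("chunk index out of range")
        self.chunks[index] = chunk
        if len(self.chunks) < self.n_chunks:
            return None
        proof = b"".join(self.chunks[i] for i in range(self.n_chunks))
        return self.verify(proof)


def latency_seconds(n_chunks: int, block_time_s: float) -> float:
    # one chunk per block: the proof is only checked after the last block
    return n_chunks * block_time_s
```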

D

A

G

A

There is a design of voting systems that works in zero knowledge, and what the design is intended to do is the following. You let a third party count the votes. This third party sees the ballots, but you verify later that he counted the votes correctly, and each user can verify that his vote is there and that the final result is correctly calculated.

D

A

Nobody outside of this third party can see what each and every person voted, but the prover does need to see the votes in this design. So it's possible to do it, for example, with a prover node: everybody sends their vote to this prover node, and it generates the result of the vote and a proof that this result is correct, and lets each and every user check that

A

each vote is actually there. This can be done in zero knowledge comparatively easily, and actually there are several use cases for this. The only drawback, of course, is that you have to reveal your vote at least once, to the prover. In some cases, that's okay.
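A minimal sketch of the voting flow, assuming salted SHA-256 ballot commitments and a prover node that sees every vote in the clear, as the design requires. It covers the publish-and-check part: the node publishes the sorted commitments and the result, and each voter checks that their own commitment is included. The zero-knowledge proof that the result matches the committed votes is not modelled; `tally` simply counts.

```python
import hashlib
from collections import Counter
from typing import Dict, List, Tuple


def ballot_commitment(vote: str, salt: bytes) -> bytes:
    # the secret salt keeps other people from guessing the vote behind a commitment
    return hashlib.sha256(salt + vote.encode()).digest()


def tally(ballots: List[Tuple[str, bytes]]) -> Tuple[List[bytes], Dict[str, int]]:
    """Prover node: sees every (vote, salt) pair, publishes commitments and the result."""
    # sorted so that positions don't reveal the order in which ballots arrived
    commitments = sorted(ballot_commitment(v, s) for v, s in ballots)
    result = dict(Counter(v for v, _ in ballots))
    return commitments, result


def my_vote_is_counted(vote: str, salt: bytes, published: List[bytes]) -> bool:
    """Voter-side check that their own ballot is among the published commitments."""
    return ballot_commitment(vote, salt) in published
```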

E

B

E

I had... it's not really a question. It's probably just another idea that I had while listening to you. When you described this mechanism for updating commitments, as far as I understand, in that scheme you are trying to optimize for the number of storage operations. You're trying to... you're doing