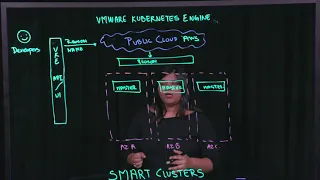

From YouTube: VMware Cloud PKS (formerly VKE) Lightboard SmartCluster

Description

VMware Smart Clusters. Run Kubernetes without Managing Servers or Clusters. VMware Smart Cluster automates the selection of compute resources that constantly optimize resource usage, provide high availability and reduce cost.

A

Welcome to this video. My name is Boskey Savla, and I'm a product marketing manager within VMware. Today we are going to talk about VMware Kubernetes Engine and Smart Clusters. VMware Kubernetes Engine is the SaaS offering from VMware that allows developers and IT operators to consume Kubernetes clusters as a service. Now, specifically, VMware Kubernetes Engine offers Smart Clusters. So what are these Smart Clusters? Let's talk about them now.

VMware Kubernetes Engine, or VKE, runs on a public cloud. It's multi-cloud enabled. So when a developer comes in and wants to deploy applications instead of taking care of a Kubernetes cluster, the first thing that they do is go to the VKE API or UI and ask for a cluster, and that's the Smart Cluster that we are talking about. Now, what do we really mean by that? As developers start coming in and they go to the VKE API or UI, they are going to request a cluster.

A

Now, once you have the region and the name of the cluster, VMware Kubernetes Engine takes over. At this point, it really doesn't want the developer to worry about what the cluster size would be: how many worker nodes do you need, how many master nodes do you need, etc. It just takes care of everything from that point onward, including what instance to provision to make optimal use of resources. So at that point, depending on the region, VMware Kubernetes Engine will talk to that public cloud's endpoint and select the specific region.

A

Now, it's as simple as that. VMware Kubernetes Engine will then give the developer back the API endpoint to talk to this cluster. Now, at the start of a Smart Cluster, there are no worker nodes provisioned. This is intentional: when a cluster is created, we don't start provisioning worker nodes until a workload is deployed.

A

Now it will provision worker nodes as needed. Which means, let's say my app only needs a single worker node that has a specific CPU and memory limit. Depending upon the YAML file that you specified for that application, it detects the worker instances needed to provision, and it will only provision that many worker instances. Now, let's say another application comes in that needs a little bit more space than that worker node has.
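The on-demand behavior described above can be sketched as a small simulation. This is only an illustration under stated assumptions, not VKE's actual placement logic, which the talk does not describe: the node capacity constants, the `PodRequest` and `WorkerNode` types, and the first-fit rule in `provision_for` are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical capacity of one worker instance; the talk does not name instance sizes.
NODE_CPU = 2.0
NODE_MEMORY_GIB = 8.0


@dataclass
class PodRequest:
    name: str
    cpu: float         # CPU cores requested in the application's YAML
    memory_gib: float  # memory requested in the application's YAML


@dataclass
class WorkerNode:
    cpu_free: float = NODE_CPU
    memory_free: float = NODE_MEMORY_GIB
    pods: list = field(default_factory=list)

    def fits(self, pod: PodRequest) -> bool:
        return pod.cpu <= self.cpu_free and pod.memory_gib <= self.memory_free

    def place(self, pod: PodRequest) -> None:
        self.cpu_free -= pod.cpu
        self.memory_free -= pod.memory_gib
        self.pods.append(pod.name)


def provision_for(pods, nodes=None):
    """Place each pod on the first node with room, adding a node only when none fits."""
    nodes = list(nodes) if nodes else []
    for pod in pods:
        if pod.cpu > NODE_CPU or pod.memory_gib > NODE_MEMORY_GIB:
            raise ValueError(f"{pod.name} does not fit on any single node")
        target = next((n for n in nodes if n.fits(pod)), None)
        if target is None:
            # No existing worker has room, so a new one is provisioned.
            target = WorkerNode()
            nodes.append(target)
        target.place(pod)
    return nodes


cluster = provision_for([])  # a new cluster starts with no worker nodes
cluster = provision_for([PodRequest("web", 0.5, 1.0)], cluster)
cluster = provision_for([PodRequest("db", 1.8, 4.0)], cluster)
print(len(cluster))
```

In this sketch the first application gets a single worker, and the second application triggers a second worker only because its CPU request no longer fits in the space left on the first one.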

A

So that way, application developers need not worry about the size of the cluster. What operating system do I need? How many worker nodes do I need? How many master nodes do I need? Do I have high availability within VMware Kubernetes Engine or the cluster that I'm deploying? All of that is taken care of.

Now, let's say the developers start deploying applications. We have the worker nodes and master nodes working for them. Then, as more and more applications get deployed, we keep increasing the worker nodes.

A

It will keep monitoring the Kubernetes scheduler for the instances that are deployed and the pods that are deployed within each worker node. Every 10 minutes or so, it will keep checking whether the load across all these worker nodes is equally distributed. If not, what VMware Kubernetes Engine will do is reprovision the pods to another worker node and retire or remove a specific worker node. That way the cluster is automatically scaling out and scaling in, depending upon the workload that the cluster has, and all of this is transparent to the developer.
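One pass of the periodic check described above might look like the following sketch. It is an assumption-laden illustration rather than VKE's real algorithm: the function name `scale_in`, the CPU-only accounting, the single `capacity` value per node, and the rule of retiring at most one node per pass are all hypothetical.

```python
CHECK_INTERVAL_SECONDS = 600  # "every 10 minutes or so", per the talk


def scale_in(nodes, capacity):
    """Try to empty one worker node by moving its pods onto the others.

    nodes maps node name -> {pod name: CPU request}. Returns a new mapping;
    the input is left unchanged. At most one node is retired per pass.
    """
    nodes = {name: dict(pods) for name, pods in nodes.items()}
    # Consider the least-loaded node first, since it is the easiest to empty.
    for victim in sorted(nodes, key=lambda n: sum(nodes[n].values())):
        free = {
            name: capacity - sum(pods.values())
            for name, pods in nodes.items()
            if name != victim
        }
        if not free:
            break  # never retire the only remaining node
        moves = {}
        for pod, cpu in sorted(nodes[victim].items(), key=lambda kv: -kv[1]):
            target = next((n for n in free if free[n] >= cpu), None)
            if target is None:
                break  # this pod fits nowhere else, so keep the node
            free[target] -= cpu
            moves[pod] = target
        else:
            # Every pod found a new home: reprovision them and retire the node.
            for pod, target in moves.items():
                nodes[target][pod] = nodes[victim][pod]
            del nodes[victim]
            return nodes
    return nodes


before = {"node-1": {"a": 1.0, "b": 0.5}, "node-2": {"c": 0.5}}
after = scale_in(before, capacity=2.0)
print(sorted(after))
```

A real control loop would run a check like this on a timer (here `CHECK_INTERVAL_SECONDS`) and would weigh memory and other constraints as well; the sketch only shows the consolidate-then-retire idea.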

A

The developer is not really thinking about any of this. This is all automatically done for the developer by VKE. Now, once the apps are deployed, we are also scaling them accordingly. Now, say another Kubernetes version comes out and the developer wants to upgrade their cluster to that specific version. So we want to now upgrade.

A

VKE will actually take care of the upgrade for the developer in a rolling fashion for all the master and worker nodes deployed for that cluster. What VKE will do at this point in time is: let's say you have a K8s cluster that has version 1.9 for each of the nodes in it, and the developer wants to upgrade these to 1.10.

A

We will start upgrading, or applying those upgrades, in a rolling fashion. It will first take out this master node and create a new one that is version 1.10, join that working master node to the cluster, make sure that the cluster is in a good state, and then move on to the second master. Similarly, once this master is active, it will delete this one and upgrade this one to the newer version. At all points...

A

Now, at any point in time, let's say the upgrade fails for some reason: VKE will actually roll back all the instances to the original version of the Kubernetes cluster and restore the backup, in case something was lost. So all the upgrades and everything are taken care of within the Smart Cluster.
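The rolling upgrade with rollback described above can be sketched roughly as follows. This is a simplified illustration, not VKE's implementation: the function `rolling_upgrade`, the `upgrade_one` callback, the node names, and the use of an in-memory copy as the "backup" are all assumptions made for the example.

```python
def rolling_upgrade(versions, target, upgrade_one):
    """Upgrade nodes one at a time, masters first; roll everything back on failure.

    versions maps node name -> Kubernetes version.
    upgrade_one(name, target) is a caller-supplied function that replaces one
    node and returns True only if the cluster is healthy afterwards.
    Returns (resulting versions, succeeded).
    """
    snapshot = dict(versions)  # stands in for the backup taken before upgrading
    current = dict(versions)
    # Masters sort before workers; names break ties for a stable order.
    order = sorted(current, key=lambda name: (not name.startswith("master"), name))
    for name in order:
        if not upgrade_one(name, target):
            # Any failure returns every node to its original version.
            return snapshot, False
        current[name] = target
    return current, True


cluster = {"worker-1": "1.9", "master-1": "1.9", "master-2": "1.9"}

upgraded, ok = rolling_upgrade(cluster, "1.10", lambda name, version: True)
print(ok, upgraded)

restored, ok = rolling_upgrade(cluster, "1.10", lambda name, version: name != "worker-1")
print(ok, restored)
```

In the second call the hypothetical `worker-1` replacement fails after both masters have been upgraded, and the function still returns the original 1.9 versions for every node, which mirrors the all-or-nothing rollback the talk describes.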