
From YouTube: WebPerfWG call 2021 02 18


C

So we did a lot of brainstorming about how we could address the issue in the metric. We tried about 200 strategies locally and evaluated how they would rank some pages that a user group had rated, and we wrote a pretty long blog post about this. I can answer questions, but it might be easier to read it. We tried variants of windowing CLS, and different variants of how we would compute statistics about layout shift, like averaging, max, or percentiles.

C

C

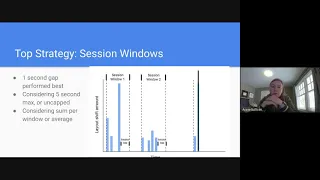

Oh

sorry,

I

have

a

the

wrong

graphic.

A

sliding

window

is

just

a

window

that

slides

over

time,

so

you

would

just

you

know

the

window

will

continually

update

over

time.

I'm

really

the

graphic

is

in

the

the

blog

post,

we're

considering

300

milliseconds

and

1

000

milliseconds

sliding

windows,

and

then

this

is

actually

a

session

window,

so

it

basically

bubbles,

as

you

have

layout

shifts

to

kind

of

contain

them

until

it

hits

a

gap.
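The two windowing strategies described above can be sketched in TypeScript. This is a minimal illustration, not the code the speakers evaluated: shifts are reduced to a `startTime` (ms) and a `value`, mirroring the fields of a `LayoutShift` entry, inputs are assumed to be sorted by time, and the 1,000 ms gap and 5,000 ms cap used for session windows are assumed defaults. The helper names are hypothetical.

```typescript
// Simplified layout shift: `startTime` in ms and the shift `value` (score).
interface ShiftSample {
  startTime: number;
  value: number;
}

// Current behavior: every shift over the page's lifetime is summed.
function cumulative(shifts: readonly ShiftSample[]): number {
  return shifts.reduce((sum, s) => sum + s.value, 0);
}

// Max over sliding windows: the largest total of shifts that fit inside any
// window of length `windowMs`. Two-pointer scan; input sorted by startTime.
function maxSlidingWindow(shifts: readonly ShiftSample[], windowMs: number): number {
  let best = 0;
  let sum = 0;
  let left = 0;
  for (let right = 0; right < shifts.length; right++) {
    sum += shifts[right].value;
    // Drop shifts too old to share a window of `windowMs` with the current one.
    while (shifts[right].startTime - shifts[left].startTime >= windowMs) {
      sum -= shifts[left].value;
      left++;
    }
    best = Math.max(best, sum);
  }
  return best;
}

// Max over session windows: a window keeps absorbing shifts while each one
// arrives less than `gapMs` after the previous shift, and is closed once it
// would span `capMs` or more. Input sorted by startTime.
function maxSessionWindow(
  shifts: readonly ShiftSample[],
  gapMs = 1000, // assumed gap
  capMs = 5000, // assumed cap
): number {
  let best = 0;
  let current = 0;
  let windowStart = -Infinity;
  let lastTime = -Infinity;
  for (const s of shifts) {
    const startNew = s.startTime - lastTime >= gapMs || s.startTime - windowStart >= capMs;
    if (startNew) {
      current = 0;
      windowStart = s.startTime;
    }
    current += s.value;
    lastTime = s.startTime;
    best = Math.max(best, current);
  }
  return best;
}

// Infinite-scroller example: a tiny shift every 500 ms for 10 seconds.
const scroller = Array.from({ length: 20 }, (_, i) => ({ startTime: i * 500, value: 0.0625 }));
console.log(cumulative(scroller), maxSessionWindow(scroller), maxSlidingWindow(scroller, 1000));
// 1.25 0.625 0.125
```

For a scroller that shifts slightly every 500 ms, the cumulative score grows with the length of the visit, while both windowed maxima stay bounded; here the session window is limited only by its cap.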

C

But I want to share some initial findings. As expected, all strategies reduce the correlation with the time spent on the site. And when we ranked sites by CLS and ranked sites by each individual strategy, the sites that rose to the top with the new strategies were all sites that are very long-lived. So all of the variations on the strategies are definitely accomplishing those goals.

C

So we're really looking at a max over a session window or a max over a sliding window, and I wanted to give a quick update and ask for feedback on that. We do have the blog post, and I know some people have already sent in feedback, which I'm really thankful for. We had two questions in particular. The first: if we're still basically using the cumulative strategy, and it's just capped by a window, do people think it would make sense to rename the metric?

C

Yeah, yeah. So if you have something like an infinite scroller, and it's janking a tiny bit on each scroll (think of social sites like Instagram or Twitter, not those specifically), I'm scrolling through my feed, and I'm just scrolling and scrolling and scrolling. That can go on with tiny layout shifts well past five seconds, so we're almost certainly going to have some kind of cap to prevent those sites from scoring too poorly.

C

Yeah, we've looked at other statistics, like the 75th percentile experience, the median experience, things like that. Number one, it's hard for a developer to reason about what exactly we are grading. Number two, we found that only very high percentiles correlated with our simulated user experience. And if we're talking about a 30-second experience and you're saying the 95th percentile session window of five seconds, that's an interpolation, right? So you're essentially saying the max. Yeah.
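The point about percentiles can be checked with a small sketch. It assumes a 30-second visit split into six session windows and uses the nearest-rank percentile definition (one of several; an interpolating definition would land slightly below the max). With so few windows, the 95th percentile is simply the largest window. The sample scores and the function name are made up for illustration.

```typescript
// Nearest-rank percentile: the smallest sample such that at least p% of the
// samples are <= it. With few samples, high percentiles collapse onto the max.
function nearestRankPercentile(values: readonly number[], p: number): number {
  if (values.length === 0) throw new Error("no samples");
  const sorted = [...values].sort((a, b) => a - b);
  const rank = Math.ceil((p / 100) * sorted.length); // 1-based rank
  return sorted[Math.max(rank, 1) - 1];
}

// Hypothetical scores of six five-second session windows in a 30 s visit.
const windowScores = [0.01, 0.02, 0, 0.3, 0.05, 0.04];
console.log(nearestRankPercentile(windowScores, 95), Math.max(...windowScores));
// 0.3 0.3
```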

E

Yeah, I think there are very rare situations where a large sliding window would capture the global maximum, but because of the way the timings are set up, a session window will always capture it as well. And session windows cover variable amounts of time, so really the biggest difference is: is a fixed time slice important, or is it whether the shifts are really closely clumped together? Is a user really intuitively clumping them anyway? So semantically, that's the big difference.

G

So we prefer session windows out of all the options. There's a little concern that if you have a tap and the user is going back and forth, you know, into a product page, back to the category, into a product page, really quickly, you could actually get a bunch of layout shift in a single interaction or in a single group of those interactions, and maybe the window should end when a user tap occurs.

G

We figured, okay, that makes sense, so that's our feedback. The most concrete one is: maybe end your session windows when a tap occurs. I know you get the 500 milliseconds after input, but it may turn out to be a bunch of transitions that go past 500, where the user really logically scrolled or transitioned through five or six pages, and the window just keeps extending because you keep extending the sense of a session.
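The suggestion to end session windows on a tap could look roughly like the following variant. It is a hypothetical sketch: tap timestamps are passed in as a sorted array (in a page they might come from input listeners), and the 1,000 ms gap and 5,000 ms cap defaults are assumptions. The Layout Instability API already flags shifts shortly after input via `hadRecentInput`; this variant additionally splits windows at every tap, however long the transitions take.

```typescript
interface ShiftSample {
  startTime: number;
  value: number;
}

// Hypothetical session-window max where, besides the gap and the cap, any tap
// between two shifts closes the current window.
// Both `shifts` (by startTime) and `tapTimes` must be sorted ascending, in ms.
function maxSessionWindowEndingOnTap(
  shifts: readonly ShiftSample[],
  tapTimes: readonly number[],
  gapMs = 1000, // assumed
  capMs = 5000, // assumed
): number {
  let best = 0;
  let current = 0;
  let windowStart = -Infinity;
  let lastTime = -Infinity;
  let tapIdx = 0;
  for (const s of shifts) {
    // Consume every tap up to this shift. Taps up to the previous shift were
    // consumed earlier, so any tap seen here falls between the two shifts.
    let tapped = false;
    while (tapIdx < tapTimes.length && tapTimes[tapIdx] <= s.startTime) {
      tapped = true;
      tapIdx++;
    }
    const startNew =
      tapped || s.startTime - lastTime >= gapMs || s.startTime - windowStart >= capMs;
    if (startNew) {
      current = 0;
      windowStart = s.startTime;
    }
    current += s.value;
    lastTime = s.startTime;
    best = Math.max(best, current);
  }
  return best;
}

// Shifts every 400 ms; without taps they form one window, a tap at 1 s splits it.
const transitions = [0, 400, 800, 1200, 1600, 2000].map((t) => ({ startTime: t, value: 0.125 }));
console.log(maxSessionWindowEndingOnTap(transitions, []), maxSessionWindowEndingOnTap(transitions, [1000]));
// 0.75 0.375
```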

F

Although I'm not going to get into bikeshedding about it, to me it sounds like you have cumulative layout shift with one-second slices over five seconds, and a max of those. So if that's what's actually going on there, then a short version of something like that... but yeah, I would say definitely rename, because this feels different.

A

We also concluded that it would be web compatible to do that, as well as to fire new paint timing entries and other entries, like paint timing entries and input events. And then the question is: how do we correlate those new paint timing entries, as well as other entries like resource timing entries and the other entries that we have, to that navigation, as opposed to older navigations?

That would work well with the proposed App History API, which is very interesting on that front. That work is super early, but it seems to fit the same model, which is good. So the proposed spec changes to make that happen are what I have in mind: add a navigationId attribute to PerformanceEntry; add a counter to the document, initialized as zero; and then increment that counter every time we hit a new navigation, initially for bfcache, and in the future we may also increment it for soft navigations. Then, when queuing any new performance entry, we simply initialize its navigationId with that variable from the document. That would make every new performance entry easily correlatable to the latest navigation timing entry that preceded it.
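A toy model of the proposed mechanism may help: a counter on the document starting at zero, incremented on each new navigation, and copied onto each entry as it is queued. `navigationId` was only a proposal at this point, and the class and method names below are hypothetical; modelling the restored page as a new entry of type `"navigation"` is also an assumption.

```typescript
// Entry shape with the proposed `navigationId` (not a shipped API).
interface StampedEntry {
  name: string;
  entryType: string;
  startTime: number;
  navigationId: number;
}

class DocumentNavigationState {
  // Starts at zero, so without bfcache support every entry would carry 0.
  private navigationCounter = 0;
  readonly timeline: StampedEntry[] = [];

  // Every queued entry is stamped with the counter's current value.
  queueEntry(entry: Omit<StampedEntry, "navigationId">): StampedEntry {
    const stamped: StampedEntry = { ...entry, navigationId: this.navigationCounter };
    this.timeline.push(stamped);
    return stamped;
  }

  // A new navigation (a bfcache restore now, possibly soft navigations later).
  // The counter is bumped first, so the new navigation entry and everything
  // queued after it share the new id.
  startNewNavigation(url: string, startTime: number): StampedEntry {
    this.navigationCounter++;
    return this.queueEntry({ name: url, entryType: "navigation", startTime });
  }
}

const doc = new DocumentNavigationState();
doc.queueEntry({ name: "https://example.com/", entryType: "navigation", startTime: 0 });
const fcp = doc.queueEntry({ name: "first-contentful-paint", entryType: "paint", startTime: 120 });
const restore = doc.startNewNavigation("https://example.com/", 60000); // e.g. back/forward restore
const fcpAfterRestore = doc.queueEntry({ name: "first-contentful-paint", entryType: "paint", startTime: 60050 });
console.log(fcp.navigationId, restore.navigationId, fcpAfterRestore.navigationId);
// 0 1 1
```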

And just to illustrate that as a code example: let's say we want to grab all the entries for each one of the navigations that happened on the page. We collect all the navigation entries, then we collect all the entries on the page in general, and then simply iterate over the navigations and filter the entries whose navigationId is identical to that navigation timing entry's navigationId, and we can do something with them. That is obviously a simplified version: typically you would probably want to use a PerformanceObserver for some of the entries, but the logic would be similar, and simple filter logic will enable us to split entries based on navigations. So that's all I had as far as slides go, and I would love to hear your feedback on that and whether you think this makes general sense.
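The slide's example, grouping entries per navigation by comparing `navigationId`, might look like the sketch below. It takes plain arrays so it runs outside a browser; the `EntryWithNavId` type and the function name are hypothetical, and `navigationId` is the proposed attribute rather than an existing one. Filtering once per navigation costs O(navigations × entries), which is fine for the handful of navigations a page typically has.

```typescript
// Minimal entry shape with the proposed (hypothetical) `navigationId`.
interface EntryWithNavId {
  name: string;
  entryType: string;
  startTime: number;
  navigationId: number;
}

// Same steps as the slide: take the navigation entries and all entries, then,
// for each navigation, keep the entries carrying the same navigationId.
// The navigation entry itself shares the id, so it lands in its own group.
function entriesPerNavigation(
  navigations: readonly EntryWithNavId[],
  allEntries: readonly EntryWithNavId[],
): Map<EntryWithNavId, EntryWithNavId[]> {
  const groups = new Map<EntryWithNavId, EntryWithNavId[]>();
  for (const nav of navigations) {
    groups.set(nav, allEntries.filter((e) => e.navigationId === nav.navigationId));
  }
  return groups;
}

// In a browser implementing the proposal, the inputs would be
// performance.getEntriesByType("navigation") and performance.getEntries();
// current typings lack navigationId, so they would need a cast.
const nav0 = { name: "https://example.com/", entryType: "navigation", startTime: 0, navigationId: 0 };
const nav1 = { name: "https://example.com/", entryType: "navigation", startTime: 30000, navigationId: 1 };
const allEntries = [
  nav0,
  { name: "https://example.com/app.js", entryType: "resource", startTime: 40, navigationId: 0 },
  { name: "first-contentful-paint", entryType: "paint", startTime: 150, navigationId: 0 },
  nav1,
  { name: "first-contentful-paint", entryType: "paint", startTime: 30040, navigationId: 1 },
];
const grouped = entriesPerNavigation([nav0, nav1], allEntries);
```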

A

Yeah, I would prefer not to proliferate that data to all new performance entries, because that can make them heavier. It's better to just have an ID and treat it as a pointer; then you're able to filter entries based on it and get the navigation type, or whatever other information you want, from the navigation entry directly.

F

I do think this is a way to disambiguate, which was a concern the last time we were talking about bfcache, so I really appreciate the work that went into this. I think this is a way forward for sure, and maybe it could be used for soft navs, so I like that it is there. Is there a default number? I'm just curious about backwards compat, or how this works in the future. If all entries are going to have the same number, then that's maybe not what people are expecting.

A

So what I was thinking is that zero would be the value if we had that navigationId variable or property in the PerformanceEntry IDL today, without bfcache support. It would just be zero all the time, and then it would increment over time. From a backwards compatibility perspective, I doubt that extending the IDL would break anything. I don't think content today is looking for random properties and breaks if it finds them; I would hope not, at least. So I don't think backward compatibility would be an issue, but maybe you're suggesting forward compat issues that I'm missing?

B

So I know the performance timeline interface itself, getEntries and such, is something we've decided to put less focus on, or deprecate, or not use as much. But your example itself using it suggested that maybe there are some use cases where people would want to always just segment by the current navigationId. Would there be any desire, like we have getEntriesByType, to have getEntriesByNavigationId, or a filter that you pass to it, instead of having to do it in JavaScript?

A

Yeah, so I can imagine... So the code I wrote was just, like, I was aiming for something that would fit on a slide. For a PerformanceObserver, I would do something similar, but do the filtering as part of the observer callback and then add each entry to an array with all the entries related to that navigation. Or, even, not go over the navigations at all: just have an array that splits on navigationId, where each cell is another array that contains all the entries fed from the performance observers. So I wouldn't put too much emphasis on this particular example.

Regarding your question about whether we want getEntriesByNavigationId: I generally think that all these getEntriesBySomething methods are a pattern from before. With filters, people can split on whatever dimension they want. And, to be honest, even from an internal implementation perspective, those methods are not significantly more efficient than an external filter written in JavaScript.
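The observer-based alternative, an array indexed by `navigationId` whose cells hold the entries for that navigation, could be sketched like this. The class is hypothetical, and `add` takes a plain array so it can be exercised outside a browser; in a page it would be called from a `PerformanceObserver` callback with `list.getEntries()`, assuming entries carried the proposed `navigationId`.

```typescript
// Minimal entry shape with the proposed (hypothetical) `navigationId`.
interface IdEntry {
  name: string;
  entryType: string;
  startTime: number;
  navigationId: number;
}

// Buckets entries by navigationId as they arrive, so no second pass over the
// navigations is needed. Ids are small integers starting at 0, so a plain
// array of arrays works as the index.
class NavigationBuckets {
  private readonly buckets: IdEntry[][] = [];

  add(entries: readonly IdEntry[]): void {
    for (const entry of entries) {
      const bucket = this.buckets[entry.navigationId] ?? [];
      bucket.push(entry);
      this.buckets[entry.navigationId] = bucket;
    }
  }

  forNavigation(id: number): readonly IdEntry[] {
    return this.buckets[id] ?? [];
  }
}

// Hypothetical browser wiring, if entries carried navigationId:
//   const buckets = new NavigationBuckets();
//   new PerformanceObserver((list) => buckets.add(list.getEntries() as any))
//     .observe({ type: "paint", buffered: true });
const buckets = new NavigationBuckets();
buckets.add([{ name: "first-contentful-paint", entryType: "paint", startTime: 120, navigationId: 0 }]);
buckets.add([
  { name: "first-paint", entryType: "paint", startTime: 30010, navigationId: 1 },
  { name: "first-contentful-paint", entryType: "paint", startTime: 30020, navigationId: 1 },
]);
```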

B

I think that's fair. I was trying to think whether there were any use cases where people would be using the performance timeline and want to split it rather than just using a PerformanceObserver, and in every case I can think of, you would just want to be using the observer and doing your own filtering anyway. So, okay.

A

So maybe it's worthwhile to decouple that, because there's no real need to tie those two together. People can filter now and then move to a newer, shinier API like getEntriesByNavigationId, if that turns out to be something we add in the future. So does it make sense to decouple those discussions? Then potentially we can add that sugar on top.

E

So, speaking of being forward-looking, I think that we absolutely should have a navigationId, and I like this proposal. But to the earlier point about perhaps making it a structure, that's more complicated. Fundamentally, this is about segmenting perf entries based on their logical grouping, and I do think that Benjamin, at least in the past, has asked about visibility, or long periods of idle time, or app...

So, but it's a similar question, right? Navigation entries give you the ability to listen to them and slice the data: by listening to the events, looking at the timeline, looking at the start times, and slicing. So by that argument, you can also do the same thing manually. So this is a convenience thing, but I concede that it's there. There will be a way to raise this question later without having to introduce the more complex tricks out there.

A

Yeah, I guess that wouldn't be super productive. What I suggest as next steps is for me to create PRs on the various specifications related to this, because it's a change that touches on Performance Timeline, HTML, and Paint Timing at least, if not more. So I will open PRs and then maybe send them over to the list for greater scrutiny.

F

Yes, hey. I'm probably wrong here, but I'm just curious: if we have monotonically increasing session IDs, then isn't the largest the one that could be visible, and if it's not the largest, then it's definitely not visible? Or is this whole visibility discussion something we really don't want to have? I'm intrigued by what Michael said. I think, like, this...

A

Yeah, so I think it can... or, like, visibility is not necessarily like that. Users could theoretically click on the back button, then switch to a new tab very quickly, and then this whole bfcache navigation would be invisible for most of its time. Even if only for a small number of milliseconds, it would still be visible. It's...

F

That's fine. I just wanted to note what Michael said there: anything we can do to clarify visibility would be welcome. I feel like this is solving other problems, and that's great, but I like the fact that it might be able to discard a whole bunch of what is visible from the solution set. If we could get that as a bonus, then let's take it, you know. That's all I want to say.

A

I can speak to that. Essentially, right now, for Element Timing we expose the load time, which is identical to the onload event and to what we already expose in Resource Timing, and then we expose the render time for the image. But it's the render time for all the bytes of the image.
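For reference, this is roughly what Element Timing gives a developer for an image: two timestamps and nothing in between. The sketch assumes an entry reduced to `identifier`, `loadTime`, and `renderTime` (attributes of `PerformanceElementTiming`); the helper name is hypothetical. `renderTime` is 0 when it is not exposed, for example for a cross-origin image served without `Timing-Allow-Origin`.

```typescript
// Subset of a PerformanceElementTiming entry; times in ms.
interface ElementTimingSample {
  identifier: string;
  loadTime: number;
  renderTime: number; // 0 when not exposed to the page
}

// Delay between the image finishing loading and its paint of all bytes.
// Returns null when renderTime is unavailable; no intermediate (per-scan)
// paint times exist to report.
function loadToRenderDelay(entry: ElementTimingSample): number | null {
  return entry.renderTime > 0 ? entry.renderTime - entry.loadTime : null;
}

console.log(loadToRenderDelay({ identifier: "hero", loadTime: 800, renderTime: 950 }));
// 150
```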

H

Maybe if it was, sorry, in the case of an app... I'm just imagining an app that has multiple thumbnails; when you click on one, it shows the full image somewhere, right? And it might not have the full high-resolution image loaded in memory yet, so you want to know how long it took to show the, how do we call it, the...

J

There's a weird line where baseline images still draw incrementally, right? So I guess you're separating the incremental painting for progressive images from the incremental painting for baseline images, and not all browsers paint progressively either. But you're almost wanting to define it as an event for each time the entire image area is changed, if you would: as each scan completes, or the time that the entire image dimensions are updated.

I

So I'm from Pinterest, actually, and we have a custom metric called Pinner Wait Time, which includes the load or rendering times of all the images in the viewport. The definition is supposed to be at quality 60 for our progressive JPEGs. So it's not necessarily that we want to monitor every single scan; we just want to be notified of the specific point at which our quality threshold is met.
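The Pinterest use case, being told when an image first reaches a quality threshold rather than receiving every scan, reduces to a first-match search over per-scan records. No current API exposes per-scan paints or a quality estimate, so the `ScanPaint` shape, the 0-100 quality scale, and the function name are entirely hypothetical; the sketch only shows the computation a single-number milestone would encapsulate.

```typescript
// Hypothetical per-scan paint record; nothing like this is exposed today.
interface ScanPaint {
  time: number;    // ms, when this scan was painted
  quality: number; // hypothetical 0-100 estimate of quality reached so far
}

// Paint time of the first scan reaching `threshold`, or null if none does.
// Assumes `scans` are in paint order.
function timeToQuality(scans: readonly ScanPaint[], threshold: number): number | null {
  for (const scan of scans) {
    if (scan.quality >= threshold) return scan.time;
  }
  return null;
}

const scans = [
  { time: 100, quality: 20 },
  { time: 180, quality: 45 },
  { time: 260, quality: 70 },
  { time: 400, quality: 100 },
];
console.log(timeToQuality(scans, 60));
// 260
```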

A

One of the goals for LCP is to be a single number rather than an array of scans. An array can be useful for some scenarios, but may not be useful for everyone. We have a separate issue related to LCP, though I don't remember its number or where it is. Essentially, I think we somewhat converged on good-enough LCP semantics.

A

Okay, yeah, yeah, okay. So you're saying there are parallels between those milestones and the milestones for the full page render. I agree that there are parallels; there are also, like... I guess that depends on the level of detail that we'll want to expose here. If we expose every scan, that goes beyond what we currently expose for the page itself.

A

I think it can be useful for folks who have opinions, or better yet use cases, for this to comment on the issue and say, you know, this is how I would use this. And yeah, Patrick, maybe you want to comment and outline your suggestion of having a more flexible structure that is tied to scans, and then we can see how that expands to... I don't know if this is JPEG-specific or can expand to other image types. Yeah.