►

From YouTube: WebPerfWG TPAC 2020 meetings - October 21 - part 1

Description

No description was provided for this meeting.

If this is YOUR meeting, an easy way to fix this is to add a description to your video, wherever mtngs.io found it (probably YouTube).

A

Okay

and

we're

back

for

the

third

day

of

the

web

performance

extravaganzas

at

this

year's

dpac

and

today

we'll

talk

about

network

diagnostics,

performance,

dot,

measure,

memory,

talk

about

rechartering,

long

task,

attribution

and

finally,

js

self

profiling

and

norm.

Do

you

wanna

kick

off

and

present

the

network

diagnostic

part.

B

B

Okay,

so

let

me

start

just

reintroducing

myself,

I'm

from

microsoft.

I

work

for

excel

online.

Well,

the

development-

and

I

want

to

talk

about

a

proposal.

It's

not

a

spec

or

draft

for

spec

event.

It's

more

about

understanding

the

need

for

a

certain

capability,

I

think,

is

starting

to

be

more

and

more

important.

B

We

have

some

with

the

network,

information,

api

and

some

other

capabilities,

and

in

general

there

are

many

cases

where

application

developers

actually

need

to

be

able

to

optimize

the

the

user

experience

by

understanding

the

network

situation

better

yeah

and

it's

really

hard

to

do

without

existing

with

the

existing

tool.

What

I

want

to

focus

is

not

on

a

general

network

diagnostic

case,

because

that's

a

very

broad

topic,

I'm

going

to

scope

it

down

a

little

bit

and

talk

about

the

last

mile

diagnostics

and

even

scope.

B

B

B

B

However,

the

if

you

notice

there's

a

one

tool

that

pops

out,

which

is

the

pink

tool

which

is

very

popular

and

used

by

many

network

admins

for

initial

troubleshooting,

usually

so

what?

Let's

cover

the

existing

options

that

people

have

when

they're

building

apps

and

they

want

to

understand

the

network

situation,

so

they

can

use

network

error

logging.

It

doesn't

provide

the

granularity

about

what

happens

on

the

local

network,

but

it

does

give

us

some

general

connectivity

informations.

B

B

Other

ideas

is

that

people

are

commonly

used,

is

using

libraries

or

write

their

own

code

to

using

network

calls

and

try

to

detect

connectivity

responses,

some

error

codes

and

so

on.

We've

seen

also

cases

that

we

use

people

using

image

loading

just

to

determine.

If

there's

an

error,

it

has

some

advantages,

but

again

we

cannot

detect

if

there's

some

local

network

connectivity

issues

for

the

user.

B

B

So

network

information

api,

as

I

mentioned

earlier-

it's

not

configurable,

especially

not

the

sensitivity

of

when

it

would

notify

that

we

have

an

connection

up

or

down

so

now.

If

we

extend

it

by

adding

some

way

to

configure

it

and

I'm

not

specifying

any

specific

api

structure

or

interface.

I'm

just

saying

what

the

use

case

would

be.

I

guess

so.

We

could

possibly

configure

the

rtt

round

trip

that

we

may

consider

it

as

a

timeout

or

if

there's

a

few

consecutive,

timeouts

or

long

round

trips.

B

But

if

we

don't

do

that,

because

it's

not

trivial

to

define

the

main

limitation

for

this

approach,

I

guess

is:

we

require

to

actually

agree

on

what

determines

network

connection

up

or

down

or

available,

noisy

or

not

noisy.

The

problem

is,

every

application

might

have

a

different

threshold

or

different

requirements

for

what

is

considered

a

good

connectivity.

I

guess

games

online

gaming

might

require

very

low

latency

in

high

availability.

B

B

A

We've

re,

like

we've,

realized

that

the

current

api

is

at

the

same

time

exposing

too

many

bits

and

not

answering

people's

use

cases

necessarily

or

not

all

of

them

as

you

as

you

stated

out,

and

it's

not

the

first

time.

I'm

hearing

that

you

know

people

run

their

own

network

measurements

which

are

active

and

bad

and

people

shouldn't

be

doing

that,

but

they're

doing

that,

because

the

api

is

not

sufficient.

A

A

C

B

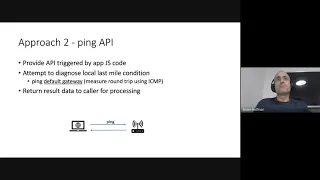

Right,

so

I

think

I

try

to

articulate

that

this

is

just

an

example

of

how

we

could

use

that

ping

api,

whether

it's

a

privacy

problem

or

not.

I

have

a

slide

on

that.

So,

let's

kind

of

let

me

just

quickly,

go

over

the

next

slide

and

then

we

can

have

a

discussion

on

the

privacy

aspects.

Okay,

so

this

is

just

a

proposed

interface.

If

we

go

with

this

ping

idea,

so

it

kind

of

mimics

how

ping

actually

behaves,

I'm

sure

there

are

other

ways

to

formalize

it.

B

And,

alternatively,

if

we

need

more

granular

data,

we

can

actually

capture

each

of

the

ping,

replies

and

add

them

to

performance,

observer

buffer

and

and

then

analyze

them

further.

If

you

want

to

it's

just

an

idea,

we

don't

have

to

decide,

or

it's

just

want

to

hear

what

we

think

about

this

structure.

A

Just

that

yeah,

from

my

perspective,

like

the

the

api

shape

matters

less

than

the

fact

that

you'll

be

exposing

ping

information

up

until

the

default

gateway

which

is

not

currently

accessible.

Unless

people

like

people

can

brute

force

typical

ip

addresses

for

the

default

gateway

and

try

to

get

like,

maybe

maybe

it's

fine

to

expose

it,

because

it's

already

exposed

because

people

can

ping

192,

168,

0,

254

and

they'll

be

right

most

of

the

time

or

I

don't

know

but

yeah.

B

Yeah,

it's

a

tentative

idea

for

an

api

shape.

It

could

be

structure

differently,

so

few

items

that

come

when

we

talk

about

privacy

is:

is

that

an

api

that,

in

order

to

even

activate

it,

we

need

to

have

some

kind

of

a

prompt

to

the

user

for

permission

to

even

do

this

so

ask

the

user

for

approval,

like

some

other

apis,

do

right,

and

how

would

that

look

like

I

mean

the

it

is

possible

to

actually

mimic

what

this

api

proposes

using

existing

technology?

B

B

A

B

B

B

Some

other

techniques,

like

the

network

information

api

rounds,

the

the

round

trip

time

to

25,

millisecond

buckets

it

could

be

even

more

or

less

granular,

maybe

to

three

buckets

like

quick

like

fast,

slow,

medium

or

something

like

that.

Would

that

be

sufficient

to

mitigate

fingerprinting?

I

don't

know

it's.

It

is

something

we'll

have

to

look

into

as

well.

E

I

have

two

questions

here:

one

is:

how

is

it

going

to

help

in

a

vpn

connected

network,

most

of

our

like

a

sales

force.

Most

of

our

customers

are

already

behind

a

vpn,

and

the

vpn

may

not

be

near

to

the

local

territory,

maybe

somewhere

in

a

geographically

separate

location.

So

how

is

it

going

to

help

in

there?

The

second

question

would

be

like

we

already

have

a

round

trip

time

and

how

the

ping

response

time

would

it

would

be

a

yeah.

E

B

Yeah

yeah,

I'm

not

sure,

actually

what

the

answer

regarding

vpn.

Definitely

that

changes

the

routing

table.

So

that

means

it's.

I

actually

tried

to

address

it

in

in

the

next

slide,

because

we

still

have

some

questions

if

we

have

more

than

one

gateway

right

specifically,

if

you

connect

to

ppn,

you

have

more

than

one

gig,

but

your

default

rate

would

probably

go

to

the

to

the

vpn

ip

right

when

you're

connected

and

the

then

comes

the

question.

B

B

That's

much

harder

to

define,

I

guess

and

harder

to

do

securely,

but

that

would

be,

I

guess,

the

ultimate

use

case

much

more

than

the

thing,

but

I

did

the

thing

about

starting

with

the

thing

and

and

just

to

see

what

you

hear.

The

main

feedback

I'm

getting.

I

guess

from

you

have

mostly

is

a

concern

about

privacy.

Am

I

correct.

A

So

concerns

around

privacy

with

exposing

this

new

information

that

is

not

currently

exposed

and

maybe

putting

that

behind

a

prompt

is

good

enough.

If

the

prompt

is,

you

know

explicit

enough

in

outlining

that

you

know

this

is

a

network

diagnostic

capability

that

the

user

is

enabling,

but

personally

I'd

love

to

see.

B

A

B

F

Yes,

my

main

question

is

if

it's

the

responsibility

of

the

web

app

or

if

it's

really

more

of

a

browser,

responsibility

and

browser

diagnostics,

to

to

tell

you

that

your

local

kind

of

like

chrome's

your

network,

is

having

issues

and

retry

kind

of

ui,

but

something

that

works

even

with

offline

spas

or

offline

apps.

Either

a

network

health

in

the

browser

ui

or

something

that's

completely

removed

from

the

web

content.

B

B

C

A

One

comment

from

alex

on

the

chat

is

that

if

this

api

does

move

forward,

it

needs

to

be

async

to

give

room

for

a

prompt

or

some

sort

of

a

you

know,

a

user,

visible

notification

that

enables

it

so

that's

good

feedback

going

back

to

net

info.

I

wonder

if

other

vendors

have

thoughts

and

or

appetite

about

what

a

revamped

version

for

net

info

may

look

like.

That

is

something

that

they

feel

they

can

ship.

A

G

B

A

C

H

H

This

is

a

proposal

which

has

strong

dependencies

on

information

from

the

operator

network,

and

things

like

that.

So

I

thought

I'd

maybe

just

bring

that

up

so

that

so

that

you

you're

aware

there

is

a

similar

kind

of

topic

being

discussed

there.

My

second

comment

is

perhaps

more

orthogonal

to

this

discussion,

but

in

this

topic

I

noticed

that

you're

talking

about

apis

for

network

diagnostics.

H

There

is

an

idea

in

our

interest

group

where

it's

the

other

way

around.

They

are

trying

to

extend

developer

tools

to

emulate

time

variant,

network

conditions

so,

which

means

that

similar

to

you

have

the

heart

race

format.

There

can

be

a

network

trace

format

where

the

network

trace

is

come

taken

from

the

real

world

and

and

you

kind

of

prepare

a

trace,

and

then

you

do

a

play

of

the

trace

and

then

you

kind

of

test

the

web

web

apps.

H

How

does

the

web

app

behave

when

the

network

conditions

changes,

so

this

kind

of

helps

the

web

developer

make

sure

that

his

app

is

able

to

adapt

to

the

varying

network

conditions?

So

I

thought

I

would

just

like

to

bring

that

up

in

this

discussion,

because

they

are

kind

of

connected

to

some

of

the

discussions

here.

I

can

share

two

links

in

the

chat

window.

A

H

D

D

D

D

D

There

are

also

other

ways

to

measure

memory

locally,

so

the

like

our

main

motivational

focus

here

is

to

get

data

from

production

and

look

at

the

aggregated

data,

so

the

we

are

not

aiming

to

make

individual

calls

to

the

api

meaningful

or

like

focus

on

that.

If

you

want

aggregated

data

okay

last

year

I

gave

presentation

to

this

group

and

proposed

apis

there.

D

At

that

point,

there

were

many

unclear

parts,

many

unknowns,

but

what

was

clear

at

that

point

already

is

that

there

is

this

trade

of

space

and

every

decision,

design

decisions

that

we

make.

It

will

move

in

somewhere

in

this

trade

of

space

and,

in

the

meantime,

api

evolved,

and

I

think

it

converged

to

this

point

in

the

trader

space

like

the

security

story,

improved

a

lot.

I

think

the

interface

also

improved.

I

I

will

describe

that

here

before

doing

that,

I'd

like

to

acknowledge

and

and

thank

people

that

helped

and

provided

feedback.

D

D

I

put

a

link

to

a

blog

post

that

describes

how

to

do

like

proper

feature

detection

and

how

to

set

up

randomized

periodic

sampling.

That

will

be

useful

for

like

looking

at

aggregated

data

before

I

describe

okay,

so

it

turns

a

result.

But

before

I

describe

how

the

result

looks

like,

I

want

to

talk

more

about

high-level

properties

of

the

api,

and

I

think

the

best

way

to

do

it

is

to

compare

it

to

the

non-standard

performance.memory

apis

that

you

might

know

so.

The

first

thing

the

scope.

What

exactly

does

the

api

measure.

D

The

like

the

websites

today

are

complex

right,

so

they

can

embed

iframes,

they

can

run

web

workers,

and

these

websites

are

also

running

on

top

of

complex

browsers

and

browsers

have

their

own

heuristics

on

where

to

allocate

particular

iframes

that,

depending

on

origin

and

policy,

they

may

decide

to

put

one

iframe

on

one

hip

on

one

process

and

other

iframes

and

another

heap,

and

in

this

diagram

that

we

have

like,

we

have.

Two

websites

like

this

diagram

shows

a

frame

tree

and

we

have

two

websites.

One

in

green

has

two

iframes

and

another.

D

One

in

white

has

one

iframe,

and

now

what

exactly

the

apis

are

measuring

for

the

new

api,

it

will

measure

the

memory

usage

of

the

website

together

with

iframes

and

workers.

So

this

is

what

web

developers

would

intuitively

expect,

and

this

also

maps

nicely

into

the

spec

where

we

can

define

the

scope

as

browsing

context

group

for

his

agent

clusters.

D

On

the

other

hand,

the

old

api-

it's

very

easy

to

implement

it.

We

just

return

the

heap

size

counter

right,

but

what

exactly

it's

measuring

in

terms

of

the

web?

It's

hard

to

say,

because

it

depends

whether

there

are

some

other

web

pages

that

happen

to

be

sharing

the

same

heap

also

note

that

the

old

api

may

underestimate

the

memory

usage,

because

some

iframes

are

not

accounted,

and

it

can

also

overestimate

the

memory

usage,

because

there

it

accounts

potentially

unrelated

pages.

D

That's

because

we

are

using

cross

region.

Isolation

so

like

to

explain

so.

The

problem

is,

if

we

provide

this

api

to

the

web,

attackers

can

start

using

the

api

and

load

cross-origin

resources

and

get

the

size

of

that

resource

and

from

the

size.

Maybe

some

other

information

may

be

inferred

right,

and

this

is

kind

of

the

side,

channel

attack

and

solution

to.

That

is

that

we

require

that

the

web

page

is

cross

origin

isolated.

D

A

link

to

the

blog

post

that

explains

it

well,

but

the

main

idea

is

that

if

the

website

is

crossaging

isolated,

we

know

that

all

resources

that

were

loaded

they

opted

in

to

be

loaded

in

cross-origin

documents,

and

by

doing

so

they

also

uploaded

it

into

potential

set

channel

information

leaks.

There

will

be

more

discussion

on

this

tomorrow

in

this

session.

D

Okay,

now

going

back

to

the

describe

to

the

result,

like

imagine,

we

called

this

api

and

they

we

have

a

website

that

doesn't

have

any

iframes.

In

that

case,

one

way

how

the

result

could

look

like

is

this:

we

get

the

total

bytes

of

the

web

website

and

then

breakdown,

but

in

this

case

the

browser

chose

to

not

show

any

breakdown.

So

this

is

a

valid

implementation

to

not

provide

any

breakdown.

D

On

the

other

hand,

browser

may

choose

to

provide

breakdown.

In

this

case,

a

breakdown

is

very

trivial

because

there's

only

one

entry,

but

it's

still

useful

to

show

and

explain

the

fields.

So

every

entry

in

the

breakdown

breakdown

is

an

array

and

every

entry

there

describes

some

portion

of

the

memory

and,

like

first

field,

is

a

byte.

How

large

is

this

portion,

then

to

what

window

or

iframe

or

worker?

D

And

similarly,

there

is

a

description

of

types

of

memory

types,

so

here

this

this

part

is

fully

implementation

dependent

like

I

expect

different

browsers

to

have

different

types

here,

for

example,

what

could

these

air

is,

whether

it's

javascript

memory

or

dom

memory,

or

whether

the

memory

belongs

to

detached

iframes

or

array

buffers?

Something

like

that?

D

Okay,

now,

to

a

more

interesting

example,

let's

say

you

have

a

website

with

multiple

iframes

like

one

is

the

same

original

frame.

Another

is

cross-origin

iframe,

then

the

result

could

look

like

one

way

it.

It

could

look

like

this

right.

The

web

browser

managed

to

break

down

an

attribute

memory

to

all

iframes

and

windows.

So

then,

you

have

three

entries

and

the

sizes

add

up

to

the

total

memory

usage

and

then

each

attribution

is

a

list

containing

consisting

of

a

single

entry.

D

What

could

also

happen

is

that

the

browser

may

decide

that

like

if

it

is

impossible

to

distinguish

between

the

same

original,

iframe

and

the

same

window,

and

it

may

choose

to

not

do

that

distinction.

In

that

case,

it

can

group

together

those

two

attributions

and

that's

the

reason

why

we

have

this.

As

a

list

or

array

and

also

perfect

developmentation

would

be

to

group

everything

together

right,

meaning

that

the

browser

cannot

distinguish

between

those

iframes

or

provide

empty

breakdown.

D

But

let's

say

we

have

the

food.com

and

it

embeds

two

iframes

one

is

same

original

frame

and

another

one

is

a

crossover

frame

and

let's

also

assume

that

same

original

frame

redirects

internally

to

another

url,

then

the

results

that

we

will

see

for

the

same

origin.

The

url

field

will

contain

the

most

recent

url

like

of

the

document

of

this.

So

since

it's

redirected

to

the

iframe

after

to

another

url,

it

will

contain

that

url.

D

D

We

are

not

even

saying

that

it

is

a

window

because

the

iframe

itself

could

contain

other

iframes

and

could

start

workers,

so

we

provided

the

scope

as

the

cross-origin

aggregated

sentinel,

and

the

most

useful

data

for

the

for

the

page

is

container

element

because

using

the

container

then

the

original

page.

That's

calling

this

api

can

figure

out

that

this.

This

is

the

iframe

that

retains

that

memory.

A

D

So

in

that

case

providing

the

most

recent

url

is

useful,

but

for

cross

origin

iframes

we

can

only

provide

what

the

main

origin

knows

and

main

origin

knows

the

source

attribute

of

the

iframe

of

attributes

of

the

iframe,

but

the

most

recent

url

is

not

provided

right

yeah.

So

now,

regarding

the

latest

status,

the

api

is

an

origin

trial

in

chrome

effectively.

The

original

trial

is

running

from

85

to

87,

like

from

september

to

january.

D

It

started

earlier

like

in

82

83,

but

we

had

a

buck

in

the

implementation

and

had

to

pause

the

origin

trial.

The

origin

trial

is

running

with

some

differences

to

the

spec

and

what

I

presented

here.

The

main

difference

is

the

security

mechanism

like

since

not

all

users

rolled

out

the

cross-origin

resolution.

D

We

are

relying

on

the

site,

isolation,

the

mechanism

that

the

all

the

api

also

used-

and

this

means

that

it's

the

scope

is

more

limited

like

we

cannot

show

crossover,

cross-site,

iframes

and

only

the

same

site,

iframes

and

additionally,

the

version.

That's

running

in

origin

trial

is

using

simpler,

attribution

format,

then

chrome

87

added

support

for

worker

memory,

and

that

was

not

possible.

This

is

something

new

like

previously.

It

was

not

possible

to

get

measure

memory

of

workers

with

the

old

api

and

the

plans

for

chrome

88

is.

D

This

will

be

the

version

where

we

will

switch

to

the

gating

behind

cross

origin

isolated.

We

will

also,

together

with

that

switch.

We

will

update

the

attribution

format.

We

need

to

sync

with

the

origin

trial

users,

because

this

is

going

to

be

a

breaking

change

and

we

will

add,

support

for

cross-site,

iframes

and

ship.

D

Hopefully,

I

can

give

like

some

some

feedbacks

that

we

received

like

described

feedback

that

we

received

mozilla

folks,

looked

at

the

api

and

provided

very

useful

feedback

main

concerns

there

were

around

interop

and

around

highlighting

that

the

data

returned

or

the

result

is

specific

to

the

browser

so

based

on

the

on

their

suggestion,

we

made

that

we

renamed.

Initially

we

had

the

memory

types

as

simply

types

now

they

are

called

user

agent

specific

types.

D

There

is

one

open

issue:

whether

we

want

to

rename

bytes

into

user

agent-specific.

Bytes,

I

I'm

curious

to

learn

what

this

form

thinks

about

that

for

me

personally,

it

seems

that

obvious

that

bytes

should

be

specific

to

the

browser,

but

maybe

we

want

to

highlight

that

even

more,

but

then,

if

we

do

that,

maybe

we

can

want

to

add

like

additional

objects,

that

just

is

named

user

agent

specific

and

then

the

result

will

become

a

field

of

that

object,

because

all

fields

may

become

user

engine

specific.

D

Then

there

was

one

more

suggestion

to

introduce

dummy

entries

and

randomize

order

of

entries

in

the

breakdown

list.

I'm

also

curious

to

learn

your

opinion

here.

So

the

idea

here

is

to

prevent

users

from

hard

coding

like

specific

indices

in

the

breakdown

like

breakdown,

0

always

means

main

window

or

something

like

that

and

there's.

Another

open

question

is

what

should

be

the

scope

of

the

api

like?

D

Currently,

we

are

going

with

the

scopes

that

is

in

what

developers

would

expect

like

the

whole

web

page

together

with

all

iframes

same

origin

cross

origin,

but

an

alternative

there

is

to

limit

the

like.

Still

look

at

the

browsing

context

group

or

the

web

page,

but

limit

it

to

the

current

process.

It's

it's

better

than

what

we

had

before

with

the

legacy

api,

because

this

would

not

include

unrelated

pages,

but

it

would

be

limited

to

a

process

and

it

would

then

depend

on

the

process

model.

D

Other

feedbacks

that

we

received

from

users

was

that

in

the

origin,

trial

version

promise

may

take

a

long

time

to

resolve,

and

this

is

kind

of

expected

because

the

way

how

we

implement

it

is

default,

the

measurement

into

garbage

collection.

So

the

measurement

actually

happens

with

the

next

garbage

collection.

We

do

it

in

order

to

reduce

the

overhead,

otherwise

the

alternative

would

be

to

iterate

the

heap,

and

that

would

be

very

costly,

but

in

local

like

this

only

affects

production

in

local

testing.

D

D

D

Are

the

sizes

of

objects

that

were

allocated

by

this

browsing

context

group

and

it

depends

on

like

it's

fully

implementation

specific?

What

what

exactly

that

means

and

on

what

heap

these

bytes

are

coming

from.

But

the

high-level

idea

is:

if

you

have

an

object

and

like

we

would

look

at

all

objects

and

then

sum

up

their

sizes.

D

I

C

I

Did

used

to

do

a

little

memory

use

analysis.

One

thing

that

is

very

interesting

is

the

fact,

for

example,

in

many

operating

systems

modern

operating

systems,

at

least

you

have

a

memory

compressor

right,

so

you

end

up

compressing

some

memory,

so

the

actual

bytes

in

the

physical

sense

is

different

from

the

number

of

bytes

that

you

may

see

in

the

view

of

virtual

outer

space.

I

Now.

Another

thing:

that's

important

is

the

distinction

between

dirty

bytes

versus

the

non-dirty

bits

right.

So

if

you

have

a

known

dirty

memory,

that

is

a

map

from

someone

else,

then

that

memory

could

be

purged

by

os,

so

the

cost

of

that

is

different

from

dirty

memory

and

even

for

dirty

memory,

depending

on

what

kind

of

dirty

memory

you

have.

If

it's

a

completely

empty

page,

that's

dirty

like

you,

you

compressor

can

take

care

of

that.

C

I

C

D

So

in

chrome

like,

I

think

it

depends

on

the

implementation

and

what

implementation

chooses

in

chrome.

We

don't

try

to

approximate

what

the

actual

physical

memory

usage

would

be.

We

report

the

sizes

as

the

allocates

and-

and

these

are

the

virtual

like

you

could

describe

it

as

a

virtual

sizes

right

and

if

operating

system

underneath

does

some

optimizations.

That

would

not

be

captured,

and

I

think

that

may

be

useful.

D

I'm

not

sure

how

to

spell

expect

that,

but

there

are

also

fingerprinting

concerns

right

and

it's

useful

to

avoid

exposing

too

much

of

the

system

information

so

and

regarding

the

non-dirty

memory,

I

guess

those

memory

that

was

mapped.

I

guess

that's

mostly

the

shared

memory

right

that

was

existing

before

like

well,

it's

at

startup

of

the

instance

of

webpage

and

also

to

avoid

fingerprinting.

This

kind

of

memory

should

not

be

surfaced,

so

the

idea

is

to

only

surface

the

memory

that

the

webpage

actually

allocates

on

top

of

what

the

baseline

is.

I

Yeah

but

for

example,

if

you,

if

you

have

a

blob

and

map

the

content

of

blob

into

array

buffer,

you

could

imagine

that

one

implementation

of

data

is

just

a

mapped

file

into

memory

right

and

then

you

have

a

cream

memory

versus

30

memory,

so

I

mean

yeah.

I

guess

if

the

definition

of

bias

is

completely

implementation

dependent

like

we

could

do

whatever

right.

I

mean.

D

I

D

I

I

I

G

G

D

D

So

it's

about

the

scope

of

the

api

and

whether

and

it

somehow

relates

to

security

in

a

sense

that

if

you

make

the

scope

very

limited

and

limit

to

the

same

process,

it

should

improve

security

because

we

will

not

get

any

data

from

other

processes,

but

as

a

trade-off

here,

it

would

make

them

the

result

very

much

dependent

on

the

process

model

of

the

browser.

So

if

the

web

page

runs

on

one

device

and

the

same

approach

runs

on

another

device,

we

could

get

very

different

numbers

there.

D

B

But

with

the

gpu

memory

would

be

able

to

expose

the

total

available

gpu

because,

in

contrast

to

the

general

ram,

I

guess

where

the

os

usually

uses

paging

or

any

other

strategies

to

deal

with

memory

stress

once

the

gpu

memory

is,

is

full.

I

guess

bad

things

happen,

and

usually

it's

not

handled

very

well.

So

I

wonder

if

we

can

get

sick

about

the

total

available

gp

memory

and

the

attribution

of

different

parts

of

the

app.

D

So

the

way

how

it

would

work,

it

says

not

implemented

right,

but

the

way

how

I

imagined

it

would

work

is

that

we

would

approximate

the

gpu

memory

like

we

would

not

get

real

gpu

memory,

but

from

the

things

that

we

know

in

this

current

process.

Like

canvas

elements,

we

would

approximate

how

much

gpu

memory

that

uses

and

provides

that

as

an

approximation,

I'm

I

I

think,

providing

the

capabilities

like

actual

the

limit

of

the

gpu

memory.

It

may

be

out

of

scope

of

this

api.

D

B

B

C

C

I

E

D

I

A

A

I

highly

appreciate

your

thoughts

on

the

visibility

of

that

in

terms

of

timelines,

and

if

we

can

do

that,

we

could

potentially

remove

them

from

deliverables,

and

then

there

are

other

specs

that

we

could,

potentially,

you

know,

bring

to

completion,

but

they

are

probably

in

terms

of

timeline

timelines

that

will

most

probably

happen

after

the

rechartering

and

otherwise

for

both

preload

and

resource

hints.

We

talked

about

dismantling

resource

hints

and

then

moving

bits

and

pieces

directly

to

be

integrated

into

html.

A

It's

unclear

to

me

if

we

should

just

remove

them

as

a

deliverable

entirely

or

have

a

section

stating

that

they

are

deliverables

in

transition,

because

we

won't

finish

that

work

before

the

rechartering,

so

karine

on

that

front

as

well.

I

love

your

opinion

and

then

for

everything

else,

all

the

other

specs

that

we

won't

bring

to

completion

or

transition.

A

The

plan

is

to

just

get

them

to

cr

and

then

forevermore

just

include

all

updates

as

a

cr

draft

and

once

in

a

while

run

a

snapshot,

I'm

not

yet

sure

on

what

the

mechanics

of

that

would

be.

But

I

don't

think

we

need

to

concern

ourselves

with

that

right

now

and

other

than

that

there

we

have

a

bunch

of

specs

that

where

we

would

love

a

helping

hand-

and

we

already

mentioned

that

as

part

of

the

intro-

a

bunch

of

specs

where

editors

are

needed.

A

A

So

I

think

that

the

level

of

effort

varies

based

on

the

different

specs,

but

generally

yeah.

Even

if

you

have

a

few

hours

a

week

that

you

could

dedicate

to

this

subject,

it

would

be

highly

appreciated

and

we

would

love

to

help

in

order

to

move

those

spec

forwards,

make

sure

they're,

well

maintained

and

issues

don't

lag

so.

K

A

A

A

A

A

For

both

resource

timing,

l1

and

page

visibility,

l2

l1

is

really

complete

and

l2.

We

have

one

final

issue,

but

we

have

a

good

plan

of

how

to

resolve

it.

So

we

could

potentially

do

that

rather

quickly,

if

if

there

is

a

prospect

of

moving

it

to

rec

before

we

recharter,

otherwise

we

can

just

leave

it

as

a

deliverable

and

you

know

aim

to

get

it

to

wreck

next

quarter.

But.

L

A

Yeah

yeah,

so

we

plan

to

keep

maintaining

both

of

the

so

resource

timing.

L1

will

be

done,

but

resource

timing.

L2

will

not

be

it's

just

that.

Currently

we

have

both

of

them

as

deliverables

in

the

charter,

and

it

would

be

good

to

clean

up

the

l1

bit

and

obviously

we're

still

committed

to

maintaining

and

keeping

l2

as

a

living

standard.

A

L

A

L

L

L

L

L

L

A

L

That's

a

possibility.

I

I

don't

say

that

it's

the

right

thing

to

do,

but

well

we

it

depends

on

the

approach

that

the

working

group

wants

for

versioning

used

levels

for

the

moment,

but

we

didn't

have

the

ability

in

the

process

to

evolve

now

that

we

have

the

ability

to

have

the

living

standard.

Then

that's

different.

Maybe

the

question

is:

do

we

just

enrich

the

apis?

So

it's

it's

never

breaking

up

what

exists,

so

we

don't

really

need

versioning.

In

that

case,

that's

it.

A

G

C

G

L

When,

when

you

enter

proposed

recommendation,

you

have

to

say

that

this

is

going

to

be

a

living

standard,

that

that's

the

first

step

and

once

you

are

in

recommendation

with

that,

you

are

allowed

to

go

through

a

different

process

to

amend

the

recommendation,

which

is

kind

of

combining

the

the

pattern

protection

that

currently

is

done

at

cr

and

the

ac

vote.

That

is

done

as

pr.

So

changing

your

recommendation

should

be

much

quicker.

L

The

other

change

is

that

the

crs

and

the

cr

drafts

and

cr

snapshot

are

distinguished,

whereas

before

some

crs

were

editorial

and

some

crs

were

substantive,

and

so

some

triggered

patent

policy

actions

and

some

did

not.

It

was

quite

confusing,

but

that's

not

related

to

living

standard.

That's

going

to

apply

to

all

specifications.

C

L

L

So

currently,

the

implementation

of

that

process

is

not

entirely

clear,

but

is

there

something

in

the

process

called

last

call

for

review

of

an

amended

recommendation

or

in

purpose

changed?

I

don't

recognize.

There

are

two

different

flavors

of

that

and,

and

that

is

going

to

be

a

kind

of

crpr

mix

of

review.

A

In

just

to

to

clarify

in

the

previous

discussion

when

we

talked

ab

like

we

concluded

that

the

living

standard

variant

of

amended

wreck

is

something

that

will

have

a

relatively

high

overhand,

so

we

prefer

to

go

with

the

cr

draft

version

of

the

living

standard.

So

basically

ip

commitments

happen

in

cr

and

then

drafts

from

that

point.

On

so

get

so

draft

snapshots

will

be

the

you

know,

tip

of

three.

L

So

the

the

difference

also

for

that

is

that

previously,

if

you

wanted

to

add

a

feature

to

a

recommendation,

let's

say

you

add

an

entire

api

to

an

an

api

that

it

already

exists

and

you

want

to

put

add

a

new

one

in

it,

and

and

for

that

you

had

to

go

to

first

public

working

draft

or

not.

It

was

an

entire

new

track.

And

now,

if

your

recommendation

was

marked

as

living

standard,

you

can

incorporate

that

and

directly

go

to

cr

new

publishers.

A

Yeah,

I

think

that

that

piece

will

probably

have

to

see

when

we

get

closer

to

like

an

existing

example

but

yeah.

Maybe

we

could

take

yeah,

try

that

out

with

page

visibility

and

then

move

it

to

rec

as

a

living

standard

and

then

bring

it

back

to

cr

to

add

the

pre-rendering

related

bits

or

something

like

that.

A

L

That

that

does

not

prevent

from

publishing

a

level

three

as

a

first

working

draft.

If

we

change

your

mind

later,

I

think

yeah,

it's

it.

It

is

not

mandatory

that

everything

that

is

related

to

page

visibility

will

be

in

the

living

standard

either.

So

I

think

the

process

is

not

is

not,

does

not

make

impossible

to

take

things

back

and

and

put

them

somewhere

else.

L

M

Hey

you

nick.

This

is

mike

smith

from

w3c,

sorry

to

butt

in

I've

been

in

other

meetings,

but

I

wanted

to

talk

about

one

thing

that

benjamin

asked

specifically

so

first,

I

I

work

for

w3c,

I'm

based

in

tokyo

and

worked

on

a

lot

of

the

transitions,

but

benjamin

asks

this

interesting

question

which

is:

is

it

considered

a

breaking

change?

M

M

If

you've

taken

time

to

review,

another

group

stack

some

other

spec

and

you

end

up

decide

finding

out

after

the

fact

that

they

made

a

change

and

went

ahead

and

transitioned

in

a

way

that

just

invalidated

the

previous

review

that

you

did

yours,

it

doesn't

make.

You

feel

super

great,

so

part

of

this

just

being

considerate

about

the

community

of

people

who've,

taken

time

to

to

review

your

spec

and

give

you

feedback

and

just

consider

would

some

change

that

you

make

be

something

that

they

would

like

to

have

an

opportunity

to

review.

G

But

I'm

still

not

getting

great

answers

as

to

how

disposition

of

comments

work

on

tag

review

of

this

working

group.

That

was

one

of

the

things

I

noticed

in

the

documents

in

general

was

that

that

seems

kind

of

ad

hoc

for

this

group,

and

so

I'm

just

hoping

that

there

is

a

like

a

place

where

these

re-reviews

can

happen

and

that

they're

noted

in

the

dock

and

that

place

is

usually

disposition

of

comments

from

what

I

can

tell.

So

I

just

want

to

make

sure

that

we

track

these

reviews

and

comments.

G

A

G

A

G

A

A

L

So

my

feeling

was

that

if

we

don't

plan

to

republish

them

under

this

group's

responsibility,

then

it

should

be

removed

from

the

normative

deliverable

section-

maybe

put

put

it

somewhere

else

for

because

for

ac

review

of

the

charter,

it

would

be

interesting

for

people

to

know

what

what's

happening

to

those

specs.

So

it's

still

interesting

to

have

wording

on

on

that

front.

L

So

that

we

don't

have

such

a

section

in

in

in

the

charter

template,

we

don't

have

a

section

for

things

that

we

transfer

to

somewhere

else,

so

that

was

going

to

be

more

or

less

freestyle,

spec

section

of

the

charter.

I

was

suggesting,

maybe

under

the

either

other

deliverables

or

in

the

section

where

we

have

liaison

to

other

groups

external

organization.

I

think

it

is.

A

L

B

M

So

yeah

that

happens

with

that's

happened

in

the

past,

of

course,

with

a

number

of

other

things,

but

I

would

just

say

that

when

that

does

happen

along

with

whatever

else

you

do

what

we're

just

talking

about

now.

Please

make

sure,

ultimately

that

sleep

is

aware.

What's

going

on,

because

the

relationship

with

what

we

g,

you

know

we

had

this

thing

where

we

created

a

memo

of

understanding

and

we

have

steps

that

we

are

supposed

to

go

through.