
From YouTube: TPAC 2018 WebRTC meeting - @Tuesday part 3

Description

From 3:30 PM

Yeah, this was briefly mentioned yesterday by Bernard, and earlier today by Peter, so yeah. This should be quick. I know this was discussed last June at the face-to-face, and over meals, right. So my goal is to revive this conversation and focus on a simple use case, to hopefully make some progress. So I'm going to focus on the fully trusted application.

From the mailing list threads, I remember there were three use cases: a fully trusted application, a partially trusted one, and an untrusted one. The one I want to focus on is a fully trusted application with an untrusted SFU. So the application will have access to the keys, and the SFU does not have access to the keys. The SFU can see the metadata, but most of the content would be end-to-end encrypted. The encryption and decryption itself would be done by the application, in JavaScript, and we only focus on audio and video, not text. Next slide.

So what we're going to use is basically frame encryption, with two layers of encryption. The outer encryption is what already happens today at the packet level, SRTP itself, between the clients and the SFUs. The inner encryption would be at the frame level, not the packet level. So basically we encrypt and sign per frame, before packetization, attach the extra MAC and IV, then packetize it and send it over the network.
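A minimal sketch of the sender-side steps just described: encrypt the encoded frame, authenticate it together with the unencrypted metadata, append the IV and MAC once per frame, and only then split it into packets. The keystream cipher below is a toy built from SHA-256 so the example needs only the standard library; it is an assumption for illustration, not the scheme headed for the IETF (which the speaker deliberately does not discuss). The 12-byte IV, 16-byte tag and 1200-byte payload budget are also assumed values.

```python
import hashlib
import hmac
import os

IV_LEN = 12         # assumed IV size in bytes
TAG_LEN = 16        # assumed MAC size (truncated HMAC-SHA256)
MAX_PAYLOAD = 1200  # assumed per-packet payload budget


def _keystream(key: bytes, iv: bytes, length: int) -> bytes:
    """Toy keystream: SHA-256(key || iv || counter) blocks. Not a vetted cipher."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + iv + counter.to_bytes(4, "big")).digest()
        counter += 1
    return bytes(out[:length])


def _mac(mac_key: bytes, metadata: bytes, iv: bytes, ciphertext: bytes) -> bytes:
    # Length-prefix the metadata so the MAC input is unambiguous.
    msg = len(metadata).to_bytes(4, "big") + metadata + iv + ciphertext
    return hmac.new(mac_key, msg, hashlib.sha256).digest()[:TAG_LEN]


def encrypt_frame(enc_key: bytes, mac_key: bytes, frame: bytes, metadata: bytes = b"") -> bytes:
    """Encrypt and sign one encoded frame; the IV and MAC are appended once per frame."""
    iv = os.urandom(IV_LEN)
    ciphertext = bytes(a ^ b for a, b in zip(frame, _keystream(enc_key, iv, len(frame))))
    return ciphertext + iv + _mac(mac_key, metadata, iv, ciphertext)


def decrypt_frame(enc_key: bytes, mac_key: bytes, payload: bytes, metadata: bytes = b"") -> bytes:
    """Check the MAC, then decrypt. Raises ValueError on a short or tampered payload."""
    if len(payload) < IV_LEN + TAG_LEN:
        raise ValueError("payload too short")
    ciphertext = payload[: -(IV_LEN + TAG_LEN)]
    iv = payload[-(IV_LEN + TAG_LEN) : -TAG_LEN]
    tag = payload[-TAG_LEN:]
    if not hmac.compare_digest(tag, _mac(mac_key, metadata, iv, ciphertext)):
        raise ValueError("MAC check failed")
    return bytes(a ^ b for a, b in zip(ciphertext, _keystream(enc_key, iv, len(ciphertext))))


def packetize(payload: bytes, max_payload: int = MAX_PAYLOAD) -> list:
    """Split the already-protected frame into packet-sized chunks."""
    return [payload[i : i + max_payload] for i in range(0, len(payload), max_payload)]
```

On the receiver, `decrypt_frame(enc_key, mac_key, b"".join(packets), metadata)` reverses this after depacketization. Because the metadata is only authenticated, not encrypted, an SFU can still read it.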

This is different from what we have today in, for example, PERC, which double-encrypts at the packet level. This improves bandwidth, because the extra MAC and IV are per frame, not per packet. It's very, very simple to implement, and it's also not RTP-specific, so we can use it for future protocols, e.g. QUIC. I'm not going to discuss the encryption scheme or protocol details here; they will be discussed in the IETF, at IETF 104 in Prague. Next slide.
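The bandwidth argument comes down to simple arithmetic: a per-packet scheme pays the IV-plus-MAC cost on every packet of a frame, a per-frame scheme pays it once. A small sketch, assuming 28 bytes of overhead (a 12-byte IV plus a 16-byte tag) and a 1200-byte payload budget per packet; both numbers are illustrative, not taken from the talk.

```python
import math

EXTRA = 28          # assumed IV (12 bytes) + MAC (16 bytes)
MAX_PAYLOAD = 1200  # assumed per-packet payload budget


def per_frame_overhead(frame_len: int, extra: int = EXTRA) -> int:
    """Inner encryption applied once to the whole encoded frame."""
    return extra


def per_packet_overhead(frame_len: int, max_payload: int = MAX_PAYLOAD, extra: int = EXTRA) -> int:
    """Inner encryption applied per packet: every packet carries its own IV and MAC."""
    packets = math.ceil(frame_len / (max_payload - extra))
    return packets * extra


for label, size in [("audio frame", 160), ("video frame", 5000), ("key frame", 60000)]:
    print(label, per_frame_overhead(size), per_packet_overhead(size))
```

This prints 28 vs 28 bytes for the single-packet audio frame, 28 vs 140 for the 5000-byte video frame and 28 vs 1456 for the 60000-byte key frame: the saving grows with the number of packets per frame, so it matters most for video.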

OK, and yet again, basically the idea is: there are two clients, A and B, and A sends this awesome cat picture. There are multiple key exchanges here on the slide. A key exchange also happens between the two clients, for the inner encryption, but that is still a technical detail, and there is a handshake between each client and the SFUs for the hop-by-hop encryption.

So what happens is: take the encoded frame from the encoder, keep the metadata, encrypt the frame but not the metadata, and then feed it into the regular WebRTC flow, which is packetization into smaller packets. Those packets are in turn SRTP-encrypted and then sent to the SFU using the hop-by-hop key. I hope this is clear. This is how the media goes through the SFUs.

The SFU decrypts the outer encryption, but it's important that it does not see the media. It does some SFU magic, then forwards to the next client, re-encrypting using the hop-by-hop key between the SFU and B. In the final client, you first decrypt the outer layer, reconstruct the encrypted frames, and then decrypt the frames using the end-to-end encryption keys. Next slide, please.
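The path through the SFU can be modeled as two nested layers. In this sketch `seal`/`unseal` are a toy authenticated wrapper (an assumption, standing in for SRTP on the outer layer and for the frame cipher on the inner layer; it even reuses one key for both cipher and MAC, which a real design would not do), packetization is omitted, and the key names are hypothetical. The SFU only holds its hop-by-hop keys, so it can strip and re-apply the outer layer but cannot open the inner one.

```python
import hashlib
import hmac
import os

TAG_LEN = 16


def _xor_stream(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """Toy keystream cipher (SHA-256 in counter mode); illustration only."""
    stream = b"".join(
        hashlib.sha256(key + nonce + i.to_bytes(4, "big")).digest()
        for i in range(len(data) // 32 + 1)
    )
    return bytes(a ^ b for a, b in zip(data, stream))


def seal(key: bytes, data: bytes) -> bytes:
    nonce = os.urandom(12)
    body = nonce + _xor_stream(key, nonce, data)
    return body + hmac.new(key, body, hashlib.sha256).digest()[:TAG_LEN]


def unseal(key: bytes, blob: bytes) -> bytes:
    body, tag = blob[:-TAG_LEN], blob[-TAG_LEN:]
    if not hmac.compare_digest(tag, hmac.new(key, body, hashlib.sha256).digest()[:TAG_LEN]):
        raise ValueError("authentication failed")
    return _xor_stream(key, body[:12], body[12:])


E2E_KEY = os.urandom(32)    # inner layer: known only to clients A and B
HOP_A_SFU = os.urandom(32)  # outer layer: client A <-> SFU
HOP_SFU_B = os.urandom(32)  # outer layer: SFU <-> client B


def client_a_send(frame: bytes) -> bytes:
    return seal(HOP_A_SFU, seal(E2E_KEY, frame))  # inner first, then outer


def sfu_forward(packet: bytes) -> bytes:
    inner = unseal(HOP_A_SFU, packet)  # outer layer removed; media stays opaque
    # ...selection / forwarding decisions would happen here, on metadata only...
    return seal(HOP_SFU_B, inner)      # re-protect for the next hop


def client_b_receive(packet: bytes) -> bytes:
    return unseal(E2E_KEY, unseal(HOP_SFU_B, packet))
```

`client_b_receive(sfu_forward(client_a_send(frame)))` returns the original frame, while anything the SFU sees after removing the outer layer is still ciphertext.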

So what do we need to change here? The pipeline shown is a typical WebRTC flow. What we need to inject are the boxes at the bottom: between the encoder and packetization we insert an encryptor, and between depacketization and the decoder we insert a decryptor. Next slide, please!
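Where the two new boxes sit can be shown as a pair of pipelines with optional hooks. The `encode`, `packetize`, `depacketize` and `decode` callables are hypothetical placeholders for the real WebRTC stages; the sketch only captures the ordering, with the hook running on whole encoded frames after the encoder and before the packetizer on send, and after the depacketizer and before the decoder on receive.

```python
from typing import Callable, List, Optional

Stage = Callable[[bytes], bytes]


def send_path(raw: bytes, encode: Stage, packetize: Callable[[bytes], List[bytes]],
              encryptor: Optional[Stage] = None) -> List[bytes]:
    frame = encode(raw)
    if encryptor is not None:
        frame = encryptor(frame)  # injected between encoder and packetizer
    return packetize(frame)       # SRTP would then protect each packet


def receive_path(packets: List[bytes], depacketize: Callable[[List[bytes]], bytes],
                 decode: Stage, decryptor: Optional[Stage] = None) -> bytes:
    frame = depacketize(packets)  # runs after SRTP has been removed
    if decryptor is not None:
        frame = decryptor(frame)  # injected between depacketizer and decoder
    return decode(frame)
```

With no hooks set, both paths behave exactly like the unmodified pipeline, which is the property that keeps the change small.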

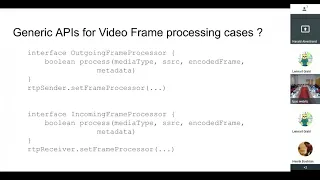

So really we need two interfaces, a frame encryptor and a frame decryptor, each a simple function. Encrypt takes a media type, either audio or video, and the source SSRC.

Another proposed amendment is that you could make it more generic: not specific to encryption, but just a general interface that processes the encoded media before sending it and processes the encoded frame after receiving it. In this case we would have an outgoing frame processor and an incoming frame processor, each with a simple process function, and you can just set them on the RTP sender and the RTP receiver.
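The two interface shapes on this slide can be contrasted in a short sketch. All class and method names are hypothetical, not a real browser or libwebrtc API; the encrypt call is assumed here to receive the media type, an SSRC and the encoded frame. The adapter shows why the generic version is a superset: an encryptor is just one kind of frame processor.

```python
from abc import ABC, abstractmethod
from enum import Enum


class MediaType(Enum):
    AUDIO = "audio"
    VIDEO = "video"


class FrameEncryptor(ABC):
    """Encryption-specific interface (hypothetical)."""

    @abstractmethod
    def encrypt(self, media_type: MediaType, ssrc: int, frame: bytes) -> bytes: ...


class FrameProcessor(ABC):
    """Generic interface: any transform of an encoded frame (hypothetical)."""

    @abstractmethod
    def process(self, frame: bytes) -> bytes: ...


class EncryptorAsProcessor(FrameProcessor):
    """Adapts the specific interface to the generic one."""

    def __init__(self, encryptor: FrameEncryptor, media_type: MediaType, ssrc: int):
        self.encryptor, self.media_type, self.ssrc = encryptor, media_type, ssrc

    def process(self, frame: bytes) -> bytes:
        return self.encryptor.encrypt(self.media_type, self.ssrc, frame)


class Sender:
    """Stand-in for an RTP sender with an outgoing frame processor slot."""

    def __init__(self) -> None:
        self.outgoing_frame_processor = None

    def send_encoded(self, frame: bytes) -> bytes:
        if self.outgoing_frame_processor is not None:
            frame = self.outgoing_frame_processor.process(frame)
        return frame


class XorEncryptor(FrameEncryptor):
    """Toy 'cipher' keyed by the low byte of the SSRC; not real encryption."""

    def encrypt(self, media_type: MediaType, ssrc: int, frame: bytes) -> bytes:
        return bytes(b ^ (ssrc & 0xFF) for b in frame)
```

Setting `sender.outgoing_frame_processor = EncryptorAsProcessor(XorEncryptor(), MediaType.VIDEO, 0x0F)` turns `b"\x01\x02"` into `b"\x0e\x0d"`; a receiver would mirror this with an incoming processor.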

You can still do things: if you select, say, the top three audio streams, you can avoid forwarding the lower-volume ones, because the audio level can be in the metadata. If you have access to the metadata, you can see: OK, this is a listener, not the person speaking, so we won't forward them. So you can do such things without decrypting the content itself.
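A sketch of that SFU behavior: choose which audio streams to forward using only the audio level carried in the unencrypted metadata. It assumes an RFC 6464-style level (0 to 127, expressed as -dBov, so a smaller value means louder); the `Packet` class is a hypothetical stand-in, and the payload is treated as opaque bytes that are never inspected.

```python
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Packet:
    ssrc: int
    audio_level: int  # 0..127, value is -dBov: smaller means louder
    payload: bytes    # end-to-end encrypted; the SFU never looks inside


def select_forwarded(packets: List[Packet], n: int = 3) -> Set[int]:
    """Pick the n loudest SSRCs, using each SSRC's loudest level in this window."""
    loudest: Dict[int, int] = {}
    for p in packets:
        current = loudest.get(p.ssrc)
        if current is None or p.audio_level < current:
            loudest[p.ssrc] = p.audio_level
    ranked = sorted(loudest, key=lambda s: (loudest[s], s))
    return set(ranked[:n])


def forward(packets: List[Packet], n: int = 3) -> List[Packet]:
    chosen = select_forwarded(packets, n)
    return [p for p in packets if p.ssrc in chosen]
```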

The idea behind it, if I understand it correctly, is this: let's say you have an application that uses a third-party platform as a service to provide an SFU to relay media, right. The only thing that SFU does is look at the header and possibly clone the packet to send it to multiple people, but there is no real media processing. You can do the copying and everything, use the frame-marking RTP header extension, drop frames for SVC, and so on, without touching the media at all.
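The relay-only SFU described here can be sketched as a function that reads only header fields. The `temporal_id` field stands in for the kind of layer information a frame-marking header extension can convey; the class, field and subscriber names are hypothetical. Packets are immutable, so "cloning" for several receivers is just reusing the same object, and the payload is never touched. A real SFU would also rewrite sequence numbers after dropping packets; that is left out.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class RtpPacket:
    seq: int
    temporal_id: int  # header-level layer information (assumed field)
    payload: bytes    # end-to-end encrypted media; opaque to the SFU


def fan_out(packet: RtpPacket, subscribers: Dict[str, int]) -> Dict[str, RtpPacket]:
    """Forward to each subscriber whose maximum temporal layer admits this packet."""
    return {name: packet for name, max_tid in subscribers.items() if packet.temporal_id <= max_tid}
```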

So in the case where there is no media processing, which is the scope of this discussion (an SFU that only relays, possibly with SVC support), if you do not trust a media server provided by a third party, then the security WebRTC provides natively, DTLS-SRTP, is not sufficient; hence the need for end-to-end encryption. You have a secondary, well, a third key pair, which is only between Alice and Bob. The media server doesn't have access to it.

In my time slot next, one of my slides actually shows how you could use WHATWG streams on senders and receivers to do the same thing. And if you combine that with the worklet version of transform streams, you could potentially do the encryption and decryption off the main thread, and also have it available for other processing purposes.
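Running the transform off the main thread can be illustrated outside the browser with a worker thread fed through queues. This is only an analogy (a Python thread standing in for a worker or worklet, and two queues standing in for the writable and readable sides of a transform stream); it is not the WHATWG Streams API, and `run_transform_off_main_thread` is a hypothetical helper.

```python
import queue
import threading
from typing import Callable, List, Tuple

_DONE = object()  # sentinel: end of the frame stream


def run_transform_off_main_thread(
    frames: List[bytes], transform: Callable[[bytes], bytes]
) -> Tuple[List[bytes], str]:
    """Apply `transform` to each frame on a worker thread; return outputs and the worker's name."""
    inbox: queue.Queue = queue.Queue()   # plays the role of the writable side
    outbox: queue.Queue = queue.Queue()  # plays the role of the readable side
    worker_name: List[str] = []

    def worker() -> None:
        worker_name.append(threading.current_thread().name)
        while True:
            item = inbox.get()
            if item is _DONE:
                outbox.put(_DONE)
                return
            try:
                outbox.put(transform(item))
            except Exception as exc:  # hand errors back instead of leaving the reader blocked
                outbox.put(exc)
                return

    thread = threading.Thread(target=worker, name="frame-transform-worker")
    thread.start()
    for frame in frames:
        inbox.put(frame)
    inbox.put(_DONE)

    results: List[bytes] = []
    while True:
        item = outbox.get()
        if item is _DONE:
            break
        if isinstance(item, Exception):
            thread.join()
            raise item
        results.append(item)
    thread.join()
    return results, worker_name[0]
```

The caller only writes frames in and reads frames out; where the transform runs is an implementation detail, which is the property the worklet-based transform streams would provide.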

I don't know what the protocol is, although I'll look for the IETF contribution. But are you assuming that any private key material associated with the protocol, in the handshake for setting up the encryption keys, will be implemented and fully contained within the browser, or are you planning to rely on some sort of platform mechanism?

It's entirely possible that you could write the encryption entirely in script, using the Web Crypto API to do the key handling. But as far as I understand, the proposal here is that we don't require any such thing. Whether it does real encryption, or XOR, or ROT13, or whatever, that's entirely up to the application. Is that correct?

Yes, correct.

No, because there might be a decision to be made. Apparently there's some work in the IETF, right? So to me, if there's an IETF solution, there's a decision to be made about whether it should be implemented in JavaScript or in the browser, and since it's related to security, usually you do not want people to write their own crypto algorithms.

What we're talking about behaves like a transform stream, and in the particular use case we have here, the transform stream is going to execute JavaScript, which is probably executing WASM. Other cases could do other things, including a standardized encryptor that could be implemented entirely within the browser, but I think that's not part of the proposal at this time.

In Stockholm we already spoke about opening up the pipeline a little bit, right, to put arbitrary transforms in there. This is just a variation of an arbitrary transform. It might be duplicating other stuff: some people send media over WebSocket, like Zoom, instead of using WebRTC, and so on. Is there any reason to actually reject the proposal because we might, in the future, do the same thing inside the browser? I understand security is a little bit sensitive, but if this is what the application wants to do, and it fits one of the designs we have previously agreed to discuss, why reject it? What I heard was, you know, let's not do it because we might do work in the future; that's the way I perceived it.

So my prediction was wrong. I agree with Dr. Alex in the sense that we should add a mechanism for doing arbitrary transforms, and then, if applications want to use that for end-to-end encryption, they can. And later, if we feel it's worth building something standardized in the IETF into the browser, because that will help people do it right, or lower the barrier to entry, or whatever, then we can also do that. Yeah.

I think it's good that you start with something very focused. It's very cheap because something already exists, but we might go further, and then there might be a longer-term thing. I was not at all saying that we should refuse that proposal. I think we should consider it as a use case, and somehow as a goal or direction, so that we create a model that will comply with it at some point, or that will resolve most of the issues of this use case.

I hope we can conclude on this use case by adopting it, because at the end of the face-to-face in Stockholm it wasn't clear that we were. I think it would be progress if we adopted that use case, whether it ends up being a specific mechanism, say the IETF version, or a generic mechanism for processing frames.

At the last face-to-face, in Stockholm, there was confusion over whether we wanted a use case where we trust the JavaScript application but not the server, versus one where we trust neither. This one is: we trust the application, but not the server. And whether or not that's the use case we're adopting, and whether or not it involves an API that assumes IETF documents built into the browser, you're still trusting the JavaScript.

I think, as Youenn mentioned, we haven't fully had the discussion yet of whether that requires exposing raw network data, I guess. And so I think I'd like to agree on that: we should support end-to-end encryption in the application. Maybe we could take it to the list too, so I get some time to confer with my folks.

But exposing frame data should really be the primary question to be answered, because if the application wants to implement its own end-to-end protocol in order to encrypt, it has the capability to do that with what's available in the browser of the day.

You skipped ahead. OK, the title says I'm talking about media over QUIC, but it's more like bring-your-own-transport, so it could be media over other things too. So, next slide.

The first thing I want to bring up is that people are already doing it. For example, there are already people sending live media over HTTP over QUIC and putting it into MSE, and they're basically asking us for a better server push: they would like to use QuicTransport to push chunks of media and feed them into MSE.

There are also people already doing live media over SCTP data channels and putting it into MSE. There are two examples that recently had some details published. I reached out to one of them, and their specific request was that they would like to switch from SCTP to QUIC because of the pain of having SCTP on their server.

The last is that people are already sending media over WebSockets, which was brought up earlier today, and they're not even using WebRTC at all; they're using WebAssembly to do the encode and decode for both audio and video. I haven't talked to them, but my proposition would be that QuicTransport would be better than WebSocket, assuming we didn't have the requirement for ICE and you could do client-server. At the very least, you could avoid the head-of-line blocking and congestion control problems that WebSocket has. So, next slide.
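The head-of-line blocking point can be made concrete with a toy delivery-time model. Assume messages (say, media frames) are sent every 10 ms, each arrives after a fixed 50 ms one-way delay, and one of them is lost and only arrives 100 ms later via retransmission; these timings are assumptions for illustration. Over a single ordered stream (as with WebSocket over TCP) everything behind the lost message waits for it; with one independent stream per message, which QUIC allows, only the lost message is late. Congestion control is not modeled.

```python
from typing import List


def arrival_times(n: int, interval: float, delay: float, lost: int, rtx_delay: float) -> List[float]:
    """When each message reaches the receiver; message `lost` arrives late via retransmission."""
    times = [i * interval + delay for i in range(n)]
    times[lost] += rtx_delay
    return times


def delivered_single_ordered_stream(arrivals: List[float]) -> List[float]:
    """One ordered byte stream: nothing is released before all of its predecessors."""
    released, latest = [], 0.0
    for t in arrivals:
        latest = max(latest, t)
        released.append(latest)
    return released


def delivered_independent_streams(arrivals: List[float]) -> List[float]:
    """One stream per message: each message is released as soon as it arrives."""
    return list(arrivals)


arrivals = arrival_times(5, interval=10.0, delay=50.0, lost=1, rtx_delay=100.0)
print(delivered_single_ordered_stream(arrivals))  # [50.0, 160.0, 160.0, 160.0, 160.0]
print(delivered_independent_streams(arrivals))    # [50.0, 160.0, 70.0, 80.0, 90.0]
```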

You can already do all these things, although somewhat poorly. On the decode side, you can already use MSE, which is pretty good, and on the encode side you can already use WebAssembly and either Web Audio or canvas. This is what Zoom is doing. On the Web Audio side it's pretty good, because audio encode and decode in WebAssembly is not too bad, but on the video side, video encode with WebAssembly is not so good.

So basically, you can already do the things I'm talking about today; I'm just talking about making it so that the red box is not so red, and actually so that all the boxes are more green.

So, next slide. People are already planning on doing video over QUIC. I'm a member of the Second Screen Working Group, and, as I mentioned earlier today, we're considering doing media over QUIC for streaming purposes.

There's also already a mailing list in the IETF for talking about sort of next-gen real-time media, and someone has already proposed the idea of doing video over QUIC. libwebrtc, the implementation of WebRTC that is widely used, is adding support for media over QUIC; we have some slides with more detail about that later on.

At the moment, it only supports mobile, because there's no Web API for this. The team working on it has already made an experimental mobile client to see how well this works, and the conclusion is that it works pretty well.

But web support is a problem, because there's nothing in the web platform that can do this, or that would do it well, referring to my boxes from before. So I have some proposals for how to fix that. Next slide.

There are two proposals. One is to adopt QuicTransport, which we already have a spec for but which has not been adopted by the working group. The second is to add WHATWG streams support to RTCRtpSender and RTCRtpReceiver for encoded media, something we were just talking about. Next slide.

There are already people who have wanted to start an incubation group to make a version of WebSockets that runs over QUIC, basically covering the client-server use case but not the peer-to-peer use case: essentially a version of QuicTransport without the ICE transport underneath. Some people would even prefer this to be outside of our working group. The TAG, for example: the TAG review asked why this is in the WebRTC Working Group, since it is more general than RTC. So I feel that this is going to move forward one way or the other.

To be clear, I'm not proposing any web spec about media over QUIC. I'm proposing a QuicTransport for doing data over QUIC, and a mechanism for transforming audio and video frames from the RTP sender and receiver; I'll get to that in a sec. Those two combined could do media over QUIC, but there's no spec here about media over QUIC.

I don't think it's within the charter of the IETF QUIC working group to think about media over QUIC. I think there are people independently in the IETF who have thought about media over QUIC; I'm one of the co-authors of a spec that talks about RTP over QUIC, so there are definitely people thinking about it. Whether it becomes part of the QUIC working group, part of a BoF, or part of something else remains to be seen.

It's possible that there might be changes to QUIC later that make it more useful for transferring data that contains video frames, but that doesn't prevent anyone from using the current version of QUIC for transporting video frames, whether or not the spec says anything about it.

QUIC is a congestion-controlled protocol over UDP, and it has some security properties. So from a networking perspective, I think if and when the QUIC working group in the IETF finishes it (it's probably going to go to the IESG), then when it does, it can be used for anything, I guess. The question here is: do we want to use it beyond the scope of whatever the QUIC working group has delineated it for? I think if it's a congestion-controlled protocol, we can use it for anything.

HTTP was used for video streaming even though it was not initially designed for that: HLS, for example, uses HTTP for video, and no one initially thought of HTTP as a way to transport video. It's not the same use case, but it allows us to experiment beyond what's possible today.

I think QUIC for transferring data sounds very interesting in and of itself. I think being able to transform frames sounds very interesting on its own. I think having the encoder/decoder available on its own sounds very interesting. And if you take all of these together, it sounds very, very interesting. I don't know the details, but I think this is interesting and it has potential, so I second that.

To answer one of the questions: the second half of the proposal is transport-agnostic. So there's a transport proposal here and a transport-agnostic proposal, but I want to know whether this working group is going to adopt QuicTransport or not, because if it doesn't, we should proceed somewhere else.

Proposal two has nothing to do with transport, just media. The gist of it is that RTCRtpSender and RTCRtpReceiver can include a mixin that has two new methods on the sender side and two new methods on the receiver side. It allows you to read encoded frames from the sender and write encoded frames to the receiver, and then read feedback from the receiver, such as a keyframe request, and write that feedback into the sender.
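The shape of proposal two (encoded frames out of the sender and into the receiver, feedback such as key-frame requests out of the receiver and into the sender) can be sketched as a small model. All names here (`read_encoded_frame`, `write_feedback`, and so on) are hypothetical placeholders, not the proposed Web IDL, and encoding is faked; the application in the middle is free to carry the frames over any transport and to drop some of them.

```python
from collections import deque
from dataclasses import dataclass
from typing import Deque, List, Optional

FRAME_STEP = 3000  # assumed 90 kHz RTP clock at 30 fps


@dataclass
class EncodedFrame:
    timestamp: int
    key_frame: bool
    data: bytes


class SenderModel:
    def __init__(self) -> None:
        self._next_ts = 0
        self._force_key_frame = True  # the first frame is a key frame

    def read_encoded_frame(self, raw: bytes) -> EncodedFrame:
        """Encoded frames come OUT of the sender."""
        frame = EncodedFrame(self._next_ts, self._force_key_frame, raw)
        self._force_key_frame = False
        self._next_ts += FRAME_STEP
        return frame

    def write_feedback(self, message: str) -> None:
        """Feedback goes INTO the sender."""
        if message == "key-frame-request":
            self._force_key_frame = True


class ReceiverModel:
    def __init__(self) -> None:
        self.decoded: List[EncodedFrame] = []
        self._feedback: Deque[str] = deque()

    def write_encoded_frame(self, frame: EncodedFrame) -> None:
        """Encoded frames go INTO the receiver; a gap before a delta frame asks for a key frame."""
        expected = self.decoded[-1].timestamp + FRAME_STEP if self.decoded else None
        if not frame.key_frame and frame.timestamp != expected:
            self._feedback.append("key-frame-request")
            return
        self.decoded.append(frame)

    def read_feedback(self) -> Optional[str]:
        """Feedback comes OUT of the receiver."""
        return self._feedback.popleft() if self._feedback else None
```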

I'm also a little confused about the wording here, but I think my main objection is this: it's less about adding WHATWG streams to the sender and receiver than about adding a way to get data out of and into senders and receivers, right? It's a way to get encoded frames out of the sender and into the receiver.

Yes, right.

So I'm not sure we should expose that on the main thread. That would be my concern.

If you go to the next slide and look at the example, this entire thing can be off the main thread, assuming the transform stream function call is something that gives you a transform stream that runs off the main thread, using any of the mechanisms we thought could be possible. So the pipeThrough in the middle there, where it goes to either the serializer or the parser, would happen off the main thread.
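The chain on that slide (frames from the sender, through a serializer, over the transport, through a parser, into the receiver) can be sketched with Python generators. `pipe_through`, the 13-byte header (8-byte timestamp, 1-byte key-frame flag, 4-byte payload length) and the in-memory `rechunk` "transport" are assumptions for illustration, not the format from the slide. `rechunk` deliberately splits the byte stream at arbitrary points so the parser has to reassemble frames.

```python
import struct
from typing import Callable, Iterable, Iterator, Tuple

Frame = Tuple[int, bool, bytes]  # (timestamp, is_key_frame, payload)
HEADER = struct.Struct("!Q?I")   # timestamp, key flag, payload length (13 bytes)


def serialize(frames: Iterable[Frame]) -> Iterator[bytes]:
    for ts, key, payload in frames:
        yield HEADER.pack(ts, key, len(payload)) + payload


def parse(chunks: Iterable[bytes]) -> Iterator[Frame]:
    """Reassemble frames from an arbitrary chunking of the byte stream."""
    buf = b""
    for chunk in chunks:
        buf += chunk
        while len(buf) >= HEADER.size:
            ts, key, length = HEADER.unpack_from(buf)
            if len(buf) < HEADER.size + length:
                break  # wait for the rest of this frame
            yield ts, key, buf[HEADER.size:HEADER.size + length]
            buf = buf[HEADER.size + length:]


def rechunk(stream: Iterable[bytes], size: int) -> Iterator[bytes]:
    """Toy transport: re-splits the byte stream into fixed-size chunks (buffers it all, for brevity)."""
    data = b"".join(stream)
    for i in range(0, len(data), size):
        yield data[i:i + size]


def pipe_through(source: Iterable, *stages: Callable[[Iterable], Iterable]) -> Iterable:
    for stage in stages:
        source = stage(source)
    return source
```

For example, `list(pipe_through(frames, serialize, lambda s: rechunk(s, 5), parse))` yields the original frames; each stage only sees an iterable, so where the stages run is independent of how they are wired.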

I don't know if that's really worth it, because I've talked to several people about how important it is to them. The people who are using SCTP to put things into MSE are doing it on the main thread, and they said it's not a problem for them. So even though we think it's a problem (and I totally agree that we should come up with a good solution for it, which is why I presented so much about it), there are people who think running on the main thread is acceptable.

I think that if it's easy to use a transform stream off the main thread, and we're basing this on WHATWG streams, and all the examples people read are like "here, get this transform stream, get this, and plug it in", so that they see this line of code as the example, then the obvious path to take will end up being the one where you are off the main thread, rather than having it throw an exception because you didn't do it right.

Maybe I'm missing a piece, but the generic TransformStream, with an uppercase T, is pretty much just a JavaScript construct. I mean, it's a class, but it's a JavaScript construct that doesn't automatically move anything off the main thread. It lets JavaScript write classes that act as transform streams, but it's still not a browser object whose processing the browser could then move off the main thread.

No, what I was saying is that if we have an implementation of transform streams off the main thread, using a worklet or a worker or whatever we've talked about before, then it should be something like five extra lines of code to get one of those instead of a classic main-thread transform stream, right.

So yeah, I think this is interesting. I think it's one of the options. I also believe it's one of the lower-priority items: when we sorted all the priorities, it sounded like this was at the lower end of the list, at least from what Youenn mentioned. So I think it may be a little early to say one way or the other, but I'd like some time to look at it.

Maybe workers are fine with regard to garbage collection and video. Maybe they're not. Maybe the worklet way is better. I think a lot of experimentation is needed to know which approach is going to work better, and I'm worried that focusing on the API first might be pre-selecting one or the other.

Expressions of interest so far, right? Yeah, I have someone working on that already, experimenting, but I think this is the stage where we are experimenting. There will be a meeting of the QUIC working group at the end of January in Tokyo, with a working group meeting, an interoperability hackathon, and things like that. So maybe that would be a good place to hack on an Internet-Draft and on the web API. I understand there is an existing implementation in libwebrtc, but that is in the Chrome code base, and in libwebrtc standalone as well.

One more comment: I think what might be useful is to get feedback from the WHATWG Streams group, because I know, for instance, that AudioWorklet right now does not rely on readable streams at all to pass messages back and forth. Have they thought about this use of transform streams to offload work from the main thread, and exactly how that will work? It's still a bit of new territory, so it would be good to get feedback from them on whether that's an approach they would sanction. Yeah.

I think the way we are doing this will actually make it easier for web applications and for browsers: the way we are integrating it will make it easier for browsers to implement the proposed APIs Peter was talking about, if we decide to go with these APIs. We also think that for applications today, the cost of doing both native and web apps is pretty high, so if we have similar building blocks in libwebrtc and on the web, that will reduce the cost.

So why are we so passionate about QUIC? I think Peter has talked about it many times in the past, but I want to reiterate it: we didn't start this because of the web; we started it because we want QUIC as a media transport. We think it's a much simpler stack; if you're familiar with RTP, I don't think you will disagree. There are a few obvious benefits, like 0-RTT and how easy it is to set up encryption, and we see many opportunities for quality improvements that we have actually prototyped locally.

We see opportunities for low-overhead retransmissions, and it's very easy to add extensions and features. Did you ever try to add extensions to RTP, or implement improved retransmissions, or anything in the RTP world? It's very hard. Also, there is the QUIC data channel and the QUIC community, and of course we want to use the same path. So, quickly, here is how we are doing it today.

If you are familiar with libwebrtc, the code is very RTP-aware, even the media parts of the code, so we are actually refactoring it so that between the transport part and the encoders there is this media interface layer, which is strikingly similar to Peter's proposal: here is an encoded frame, send an encoded frame, receive an encoded frame, send me a key frame, and so on, but inside the native code. So it will be transport-agnostic.

Actually, we will implement legacy RTP on the same interface, and then in the future connect any transport. There's a link at the bottom; you may not see it, but you can look at the interfaces. Currently we are also doing the data transport implementation: for now we will use the existing WebRTC data channel API over QUIC, so that applications can migrate without any code change. But in the long term, of course, we want to use something like the QuicTransport Peter was talking about, and then send the media through the same QuicTransport interface.

So what is this media transport, actually? What is this middle layer? In our prototype implementation it's QUIC per frame: we define a wire format and implement serialization and deserialization, then send it over QUIC. We currently use protobufs, which are super easy for serialization and extensions. We have some extra retransmission control on top of QUIC, because we need different handling for audio and video here.

Adaptation and bandwidth allocation is an interesting topic, because I think in the long term you want this media transport to tell the encoders what the bitrate, frame rate, etc. should be. Right now we keep the adaptation inside the send stream, but we will probably move it inside the transport later. It is a pluggable component, so we think there's no need to standardize it.
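A pluggable adaptation component of the kind described might look like the following: the transport reports an estimated available bitrate, and a swappable allocator decides how much each send stream (and so each encoder) gets. The interface, the two policies, and the convention that stream ids starting with "audio" are audio are all hypothetical illustrations, not the libwebrtc design. The policy can be replaced without touching the transport or the wire format, which is the argument for not standardizing it.

```python
from abc import ABC, abstractmethod
from typing import Dict


class BitrateAllocator(ABC):
    @abstractmethod
    def allocate(self, available_bps: int, streams: Dict[str, int]) -> Dict[str, int]:
        """streams maps stream id -> maximum useful bitrate; returns target bitrates."""


class ProportionalAllocator(BitrateAllocator):
    """Scale every stream down by the same factor when the link is too small."""

    def allocate(self, available_bps: int, streams: Dict[str, int]) -> Dict[str, int]:
        total = sum(streams.values())
        if total <= available_bps:
            return dict(streams)
        return {sid: cap * available_bps // total for sid, cap in streams.items()}


class AudioFirstAllocator(BitrateAllocator):
    """Satisfy audio streams first (ids starting with 'audio'), then share the rest among video."""

    def allocate(self, available_bps: int, streams: Dict[str, int]) -> Dict[str, int]:
        result: Dict[str, int] = {}
        remaining = available_bps
        audio = {s: c for s, c in streams.items() if s.startswith("audio")}
        for sid, cap in audio.items():
            result[sid] = min(cap, remaining)
            remaining -= result[sid]
        video = {s: c for s, c in streams.items() if s not in audio}
        result.update(ProportionalAllocator().allocate(remaining, video))
        return result
```

With streams `{"audio0": 64_000, "video0": 1_000_000, "video1": 500_000}` and 600 kbps available, the audio-first policy keeps audio at 64 kbps and splits the remaining 536 kbps two to one between the video streams.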

So we have some challenges. Congestion control was one of the biggest issues for us: we found that the existing QUIC congestion control is not suited for real-time. We did not give up, but we decided we should try to integrate the congestion control from libwebrtc, which works well today with real-time, and plug it into QUIC. But the question is what to do next: standardize it in QUIC, keep it pluggable, or should we fix BBR? I think it will depend on our success.

SDP is something we kind of said, OK, we'll do later, but it's an issue for us. Currently we assume that the application would negotiate QuicTransport itself, but we should decide what to do going forward. One option is just not to use the PeerConnection API for QUIC; I mean, we can cheat, but cheating is not nice. We can also add a QUIC media transport option to SDP, but I think maybe we need to standardize the protocol itself.

If we make QUIC a real part of PeerConnection... FEC is not currently supported by QUIC, and I think eventually it will be needed for good-quality media. And just a general question: what is next for libwebrtc? Is PeerConnection the connection model for native, or do we want to have building blocks? We're thinking exactly as I said in the beginning: it would be really nice to have some similarities between web and native, and to give more control to the apps.

I think they did not consider real-time scenarios. When we think about how FEC should be implemented, it should be very well aware of the media: for example, for audio and video it would be very different, and there is some feedback in WebRTC, like the jitter buffer size, that you want to take into account.

D

The reason for that was basically that, when you're sending packets inside a QUIC stream, the only time it would help you is during tail loss. But at that point you have so little data in your buffer; it was only at the tail end of that stream, or connection, I forget which, that you would see the benefit. In our case, I guess it depends a lot on what type of strategy you're going to use to send your frames.

D

Are you going to send a group of pictures over a stream, or are you going to send a single frame or a single packet? That's totally up to you as an application developer to decide. And the use of FEC is more for long-standing sessions. So if you're only going to send one frame, or less than a frame, over a stream, it's probably not going to be beneficial. You'd want to do it in the application then. Yeah.
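
The frame-to-stream strategies described here can be illustrated with a small Python sketch. `EncodedFrame`, `group_into_streams`, and `bytes_per_stream` are hypothetical names, and each inner list merely stands in for one QUIC stream; no real QUIC API is used. It shows how a one-stream-per-frame mapping leaves each stream with far less data than a one-stream-per-group-of-pictures mapping, which is the situation in which the speaker expects stream-level FEC to be least useful.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class EncodedFrame:
    seq: int
    is_keyframe: bool
    size_bytes: int


def group_into_streams(frames: List[EncodedFrame],
                       per_gop: bool) -> List[List[EncodedFrame]]:
    """Map frames to (hypothetical) QUIC streams.

    per_gop=False: every frame gets its own stream.
    per_gop=True: a new stream starts at each keyframe, so a whole
    group of pictures shares one stream.
    """
    if not per_gop:
        return [[f] for f in frames]
    streams: List[List[EncodedFrame]] = []
    for f in frames:
        if f.is_keyframe or not streams:
            streams.append([])  # open a new stream at a GOP boundary
        streams[-1].append(f)
    return streams


def bytes_per_stream(streams: List[List[EncodedFrame]]) -> List[int]:
    """Total payload carried by each stream."""
    return [sum(f.size_bytes for f in s) for s in streams]
```

With a keyframe-delta-delta-keyframe-delta sequence, the per-GOP mapping yields two streams of a few kilobytes each, while the per-frame mapping yields five streams, most of them carrying under a kilobyte.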

E

You know, it becomes difficult for the application to buffer that many frames to make that effective in that case. And so we're back to actually saying no, we don't want to give the application that capability; we want to push it down into the implementation, where buffer management is probably better handled, but...

D

L

D

And I think there's a lot there when we talk about statistics, both from the receiver side and gathering statistics from the local side. Those are the points of presence I would be really interested in: being able to get these statistics, and maybe not having the QUIC transport figure these things out itself. Maybe there can be input coming from the application to tell the receive side, you know, "do not send NACKs for these." Yeah, completely, so saying...

A

One is that, on the web part, for the WebRTC QUIC API we have a decision to be taken to the lists on whether to adopt the document, and I hear the working group encouraging the proponents to turn the streams-based slideware into a proposal so that we can actually take a position on adopting it or not. Apart from this, are there any decisions that need to be taken based on this segment? And thank you for the very interesting information.

A

One thing we've heard loud and clear over the last few days is that people want WebRTC 1.0 to be finished, as in stop wiggling, and they want implementations to be interoperable, which means testing enough to make sure that they are. So that's the high-level bit. We also heard a number of things indicating that people want to use real-time media things in web browsers that are not within the current WebRTC 1.0 specs, and that they are going to do so no matter what we say about it.

A

So if we want to have it standardized within the WebRTC working group, we'd better get on with it, and also get WebRTC 1.0 done, right? That's a hard combination to match up, but we'll have to do it somehow. The chairs will go off and contemplate that. Now, concrete things: the decisions that we made. We have a number of issues that were looked at, and we said:

A

Okay, we decided by consensus: getUserMedia functions require a secure context. For screen share, "full screen is in front" is non-normative. Respectful constraints will be merged. We will not allow URL whitelisting and blacklisting for screen share. We have a proposal for removing the surface type constraints, and...

A

We had a consensus decision, now verified and duly recorded, to stop saying anything about track ID mapping into SDP, or out of SDP for that matter. By consensus: rename RTCDtlsTransport.transport to RTCDtlsTransport.iceTransport, and currentDirection is set when setRemoteDescription(answer) is done. Clarifications to codec preferences: accepted, as said. Codec preferences remain on the transceiver, and [inaudible] has promised to write tests. Use the order in an answer as the preference; don't make life complicated. And make the codec payload type read-only.

A

The selected candidate pair needs further work. Then the non-decisions, things we didn't take a decision on: nobody came up with anything, and we need to discuss. We discussed codec payload type in the transceiver, and Bernard promised specifically to discuss that now. If you see anything in this quick review that you think is recorded wrongly...

B

M

A

Prabhat, and then back; then it should have changed. No, I did so.

We will create per-spec web-platform-tests directories so that everything gets linked up to the spec where it's specified. We will aim for a simulcast virtual interim in January, sorting out simulcast things; that doesn't mean we'll hit it, but it means we will aim for it. And we will aim for a hackathon, that's the idea, in March in Prague.

A

We will consult with the CDN folks on whether it's needed or not, or how it should work when it's needed. And we will use the Network Information API to represent network information instead of defining our own type. That may cause heartburn down the road if the WebRTC ICE spec gets to be considered for Candidate Recommendation before the Network Information spec gets some kind of formal status; we will think about that then. On SVC: we will adopt this document now; that's to be verified on the mailing lists.

A

We need a consultation with Web Audio on what they need. We need a consultation with the machine learning community group about what they need on audio. We did not make a decision to adopt my draft for a non-Web Audio API; from the feedback, it seems very possible we'll just drop it.

A

J

A

Working on that draft: I think it's one of those cases where, yeah, we have a grand vision of the bright, shining streams palace in the sky, and we have a number of thoughts that might lead there, but all of them seem to need this step. So why not just take it, and keep working on this? But we don't have a decision on it yet.

A

J

A

We expose IP addresses only if they have been seen in an ICE candidate. That is, if it was an mDNS-camouflaged name address, then we show the mDNS camouflage; if it was an IP address, we show the IP address; and if we didn't get it through an ICE candidate, we don't show it. We will copy the size stats to outbound-rtp and remove them from the sender stats. We will not remove them from the track stats.
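
The address-exposure rule just stated can be sketched in Python as follows. This illustrates the decision as stated; it is not browser code. `connection_address` and `address_for_stats` are hypothetical helpers. The candidate strings follow the ICE `candidate-attribute` field order (foundation, component ID, transport, priority, connection address, port, `typ`, candidate type). Checking the mDNS name before the raw IP is a conservative choice made for this sketch.

```python
from typing import Iterable, Optional


def connection_address(candidate: str) -> str:
    """Return the connection-address field of an ICE candidate string.

    Accepts "candidate:..." or "a=candidate:..." and assumes the standard
    field order: foundation, component, transport, priority, address, port, ...
    """
    if candidate.startswith("a="):
        candidate = candidate[2:]
    if candidate.startswith("candidate:"):
        candidate = candidate[len("candidate:"):]
    return candidate.split()[4]


def address_for_stats(real_ip: str, mdns_name: Optional[str],
                      candidates: Iterable[str]) -> Optional[str]:
    """Decide what address, if any, stats may report for an endpoint.

    real_ip: the endpoint's actual IP address.
    mdns_name: the mDNS hostname it was camouflaged as, if any.
    candidates: ICE candidate strings the application has already seen.
    """
    seen = {connection_address(c) for c in candidates}
    if mdns_name is not None and mdns_name in seen:
        return mdns_name  # surfaced as mDNS: keep the camouflage
    if real_ip in seen:
        return real_ip  # the raw IP already appeared in a candidate
    return None  # never seen in a candidate: do not expose it
```

For example, a host candidate gathered as `<uuid>.local` lets stats report that name but never the private IP behind it.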

A

I

A

K

So one thing that makes service workers different from workers, and makes them a particular motivation for this, is that in a service worker you need to manage network changes, the lifetime, and so on. With simple workers, you could just keep it on the main thread; you're not in a service worker. So I think that's the reason for the motivation. Exactly so. Even though it's not only for workers, the service worker adds an additional constraint, which makes that option more appealing than some of the other options that are also appealing in other contexts. Exactly so.

A

I

Service workers are a subset of workers: if it works for service workers, it should work for other workers, but if it works for web workers, it doesn't necessarily work for service workers. So putting service workers here makes it a little clearer what we're trying to achieve. Yeah.

A

So, QUIC: on the basis of the QUIC and bring-your-own-transport presentation, the working group will ask the list whether we should adopt the WebRTC QUIC API document. I noted a clear majority in the room indicating that they wish to adopt, and a couple of strong voices saying we should not. And the working group encourages Peter to turn the streams-based slideware into a proposal that can actually be evaluated.

A

Progress towards our next goal, and what is that next goal? I see kind of two groups of goals, which are somewhat orthogonal. One is pushing the specs of WebRTC