
From YouTube: WEBRTC WG interim 2021-12-14

Description

See also minutes at https://www.w3.org/2021/12/14-webrtc-minutes.html

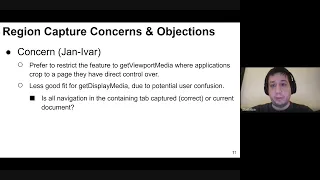

04:26 Call for Adoption of Region Capture

20:30 Call for Adoption of Media Capture Transform

29:10 WebRTC Encoded Transform - key frame request API

43:15 Issue #2698 / PR #2704 - add PAC timer; "new"→"failed"

47:35 Issue 47: RTP Header Extension Encryption

56:00 Issue 69: ptime is mentioned but not actually settable

59:21 WebRTC-NV Use Cases CfC

1:00:00 Low-latency P2P Broadcast

1:12:11 Decentralized Internet

1:21:40 Reduced Complexity Signaling

1:30:36 requirements N30, N31, N32, N33

1:43:04 requirements N34, N35, N36, N37, N38, N39

A

A

C

B

A

Okay, thank you. Thank you, Ben. All right, a little bit about the code of conduct: we operate under the W3C Code of Ethics and Professional Conduct, so it's fine to be passionate, but let's try to keep things cordial and professional. A little bit about the meeting: we are still being recorded, so be on your best behavior.

A

I don't think we'll need a sense of the room, but if we do, we can use polls. Just a reminder about document status: just because something's in the repo doesn't imply it's been adopted. That requires a call for adoption, which we're going to be talking about. Editor's drafts don't necessarily represent working group consensus; we have an example of that here.

A

Okay, so here's what we have. We're going to talk about the calls for adoption, the transform, as we said, and finally get into the use cases. So, call for adoption. And actually, Harald, if you could also look at the time: we're going to try to run this to about 8:30 Pacific, so the half of the hour. Okay, so the first one is Region Capture.

A

C

Sure. Well, what the slide conveys as well is basically that we think this is a good feature, but our preference would be to restrict it to getViewportMedia, which we owe a standards document on, but we have some consensus about how to proceed there, so that's good. That's a use case where this makes a lot of sense to us, because the application has full and direct control over the page, and they have...

C

We can imagine applications and use cases where cropping, and not capturing the entire document, makes a lot of sense. It seems a less good fit for getDisplayMedia, mostly because we feel getDisplayMedia has some security concerns, in that it's not always clear to users the scope of what they're capturing, and we worry that this cropping feature exacerbates the misunderstanding.

C

Further, in that you're not just cropping a part of one document, you're actually cropping the tab container and all navigation on that page. And is that clear? It's already unclear in getDisplayMedia sometimes. Sorry about the dog. But we worry that this is the wrong foundation to build a document cropping tool on top of.

D

D

A

E

E

B

G

Yeah, I'm Tim Panton, I'm in the queue. I wanted to ask: I'm trying to understand what the perceived risk is of doing this. Now, you're saying that there's potential user confusion, but surely this just reduces exposure. The user has already agreed to capture this thing, and the only thing this does is reduce it. I don't see what the risk is there.

C

I know, that's a good question. It's hard to explain, but let me try again: the distance between what a user may think they're capturing and what they're actually capturing increases here. I think a lot of people would already be surprised to learn what happens when they share a presentation like this. We're looking at a set of slides right now; I think Bernard is presenting. If Bernard were to type a new URL in this tab and go to a different page...

C

He's not actually... and his entire user interface experience is that, you know, you want to present, you click yes, and you get these thumbnails to pick from, and there's my slides. I see my slides and I pick those. But you're not actually capturing the slides; you're capturing the tab container that happens to contain that Google Docs slide document right now. So we're capturing one level up.

C

F

C

...of that, that sort of builds on the user's understanding that, oh, you're capturing a document, and now you can even capture a subpart of that document. It becomes even more confusing. I think you still have the gotcha of the back button: if you hit the back button now, not only are you no longer broadcasting that small part of your page, but, I'm guessing, cropping would turn off, and you will not...

E

E

G

So I'm still not feeling that there's a bigger risk here. I mean, yes, I understand the risk of the back button, but I don't see how this increases it, or, if it does, it's because we presented the region wrong somehow. There's actually no need to tell the user which region you're cropping to.

C

C

A

C

A

A

C

H

C

C

E

I would like to re-ask Tim's question, because the proposal does not address getViewportMedia right now, and does not address getDisplayMedia for any type of capture, but rather only for self-capture, in which case the back button would just stop the capture anyway. So it seems like any concerns about lulling the user into a false sense of security might apply if we one day try to expand this proposal to cross-tab capture, but they do not apply to self-capture.

E

F

F

F

C

E

C

But my concerns... I believe there are unaddressed concerns in getDisplayMedia, and I see the value in getViewportMedia. I'm surprised to learn that. So you're saying this would only work for self-capture? But so far, self-capture with getDisplayMedia still allows capture; it doesn't terminate if I then navigate, right? So are you saying that if the user uses this cropping feature, then the capture will terminate?

E

E

D

Okay, I'm in the queue. I wanted first to state that, yes, the only current use is self-capture, which is something we dislike, and we'd prefer people not go there. So that's the first thing. And if we had to do something like that, my guess is that we would probably not design an API where we are trying to define an opaque object that would be allowed to be transferable and so on.

D

D

A

D

So there's the PR there, which is just a rough proposal. We need, as a working group, to put in the effort to actually make sure that the streams spec and streams implementations will be ready, so that's something we need to put in the document. And also, more generally, this work is the first one that is trying to push for streams for image manipulation.

A

D

F

D

A

Right. Oh, sorry, I just wanted to point out that, you know, I understand the push for consistency, but actually it doesn't feel that bad when you're writing code. I actually kind of like the Shape Detection API. I'm not even sure how it would work as a transform stream, because basically you're giving it the individual video frame (although it's an ImageBitmap) and getting back a face detection. If this were in a stream, I'm not sure how it would work.

A

It actually gives you a bit more control than streams would. I mean, I understand the consistency stuff is maddening, especially when you have problems with things like memory allocation, trying to get everybody to use the same model, and I think we're kind of stuck there. But I'm not sure that we have to have strict consistency.

A

C

C

A

C

...run over this one. Just to comment: I agree about consistency; I think that's going to take time. I think the best we can do is to push good APIs that use streams. And for WebCodecs, it's easy to shim streams on top of WebCodecs, and I'm happy to push for them to use promises as well. They had some good arguments against that which I want to mention: for audio, there's not always a one-to-one mapping between calls to encode and callbacks.

A

C

But this slide is: I want to give an update on progress since last month in the WHATWG. They've been very responsive to our needs in this area. I want to call out that there's one PR already for avoiding buffering, and that's to make a high-water mark of zero work, basically by inventing a new method, still being bikeshedded, so that the readable side of a transform can call a function.

C

Currently it's, you know, releaseBackpressure or markReady, to basically tell the writable side, "I'm ready for a packet now", so it would work without buffers. So there's a yay on that. And there's another PR from Youenn (thank you), providing an explainer that suggests a new stream type, tentatively called "transfer", to help clean up objects that are both transferable and closable, which are two concepts that hopefully might eventually end up in Web IDL, and maybe in the streams spec for now.

C

But basically, the first use case for this would be video frames. So you can now make a transform stream that accepts a video frame and a controller, and you can access the video frame until you enqueue it to the controller, at which point the video frame is transferred. Think move semantics, if you're from C++. So it's move semantics for chunks, basically, and you can write code that takes care of closing the frame.

C

But after it's been enqueued, you don't need to close it anymore, because you don't own it anymore, and you also can't access it, which means you avoid problems like A passing to B and then A mutating what was passed. A transform stream has both a readable side and a writable side, so there's a type field for that, and I'm also showing a zero high-water mark. So that's the current state of things. It's just an explainer at the moment, so we're still gathering feedback from other participants on that.

C

A

Yeah, I just had one question about the code you're showing here, Jan-Ivar, the e here. You just try the controller.enqueue; that's really only an error in enqueue, and enqueue itself is very unlikely to blow up. So I don't think you're catching much of an error here. It's not like it's an encoding error or anything that you're catching, right?

C

Right. No, actually, I struggled with whether that should be inside or outside the try, but it's the line ahead, the comment that says "JS": you can imagine there's some code right above that is accessing the video frame. If any of that JavaScript code were to fail, the proper pattern would be to remember to close the video frame in that case. But once it's been enqueued, as the red comment below says, you just cannot access the video frame here.

C

The remaining code doesn't need to close the video frame anymore. So this is sort of to solve the lifetime issues of video frames by having more explicit lifetime controls, which gets a little more complicated than using garbage collection, of course. But okay, if that's what we have to do here...
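The ownership rule discussed in this exchange (the frame is usable until it is enqueued, must be closed if processing fails first, and needs no closing afterwards) can be modelled without any browser APIs. This is a minimal sketch under stated assumptions, not the code from the slide or the explainer: `FakeFrame`, `FakeController`, and `transform` are hypothetical stand-ins for a closable, transferable frame and a controller whose enqueue takes ownership.

```typescript
// Hypothetical stand-in for a closable, transferable media frame.
class FakeFrame {
  private state: "open" | "closed" | "detached" = "open";
  constructor(private readonly pixels: number[]) {}

  // Reading is only legal while this handle still owns the data.
  read(): number[] {
    if (this.state !== "open") {
      throw new TypeError(`frame is ${this.state}`);
    }
    return this.pixels;
  }

  // Releasing is a no-op once the frame is closed or moved away.
  close(): void {
    if (this.state === "open") this.state = "closed";
  }

  // Move semantics: hand the data to a new handle and detach this one.
  transfer(): FakeFrame {
    const moved = new FakeFrame(this.read());
    this.state = "detached";
    return moved;
  }

  get isOpen(): boolean {
    return this.state === "open";
  }
}

// Hypothetical controller whose enqueue() takes ownership of the frame.
class FakeController {
  readonly queue: FakeFrame[] = [];
  enqueue(frame: FakeFrame): void {
    this.queue.push(frame.transfer());
  }
}

function transform(
  frame: FakeFrame,
  controller: FakeController,
  process: (f: FakeFrame) => void
): void {
  try {
    process(frame);            // application code touching the frame may throw
    controller.enqueue(frame); // ownership moves downstream here
  } catch (e) {
    frame.close();             // still ours if processing failed, so release it
    throw e;
  }
  // Past this point the caller no longer owns the frame: nothing to close.
}
```

In this model, reading the original handle after `transform` returns throws, which mirrors the "cannot access the video frame here" comment mentioned in the discussion.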

D

Okay, I think we already discussed this topic at a meeting. So basically, there are some needs for controlling video encoders, either on the receive side or on the send side, and the most important thing is to be able to generate a keyframe on the send side. It might be when you're changing your encryption keys, like you're using SFrame and you're switching to a new key. You probably need to generate a new keyframe at that point, so it would be very handy if JavaScript could say...

D

"Okay, generate a keyframe for me, please." The second case is maybe on the receive side: you have, like, multiple peers, and you might want to switch from one peer to the other, and then you start subscribing, and you want to ask the other guy on the other side to actually trigger keyframes.

D

The second API (next slide) is also exposed on the script transformer and is only meaningful on the receiver side. So let's say that at some point you want to send a keyframe request: you want the user agent to send a full request for you. So you call transformer.sendKeyFrameRequest. There are no parameters, because it's up to the other side to know exactly which encoder to actually use, and it's promise-based, as an error mechanism as well.
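The shape of the receiver-side call described here (no parameters, a promise as the error channel) can be sketched with a mock. Everything below is illustrative: `KeyFrameRequester`, `FakeReceiverTransformer`, and `onSubscribed` are hypothetical names, and rejecting for audio is an assumption made for the example, not something the PR is confirmed to specify.

```typescript
// Hypothetical interface mirroring the call named in the discussion.
interface KeyFrameRequester {
  sendKeyFrameRequest(): Promise<void>;
}

// Mock receiver-side transformer; a real user agent would signal the sender.
class FakeReceiverTransformer implements KeyFrameRequester {
  requestsSent = 0;
  constructor(private readonly kind: "audio" | "video") {}

  sendKeyFrameRequest(): Promise<void> {
    if (this.kind !== "video") {
      // Assumption for this sketch: the call rejects when there is no video.
      return Promise.reject(new Error("key frames only exist for video"));
    }
    this.requestsSent += 1;
    return Promise.resolve();
  }
}

// Example trigger: the application starts subscribing to a stream and
// asks the remote side for a fresh keyframe to start decoding from.
function onSubscribed(transformer: KeyFrameRequester, log: string[]): void {
  transformer.sendKeyFrameRequest().then(
    () => log.push("requested"),
    (err: Error) => log.push(`rejected: ${err.message}`)
  );
}
```

Because the call takes no arguments, the caller cannot pick an encoder or layer; as stated in the discussion, that choice is left to the sending side.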

D

D

Yeah, I think so. I was not sure exactly what the purpose of a timestamp was in the transformer case, because in the transformer case you're grabbing frames, so you know exactly which one will be a keyframe, and its timestamp. And on the sender side, I was not sure what the use of the timestamp would be either. So that's your...

F

D

F

F

I got a little bit confused in my head about whether there was a case where you could get a free keyframe ahead of the one you asked for, so that you would not start encrypting with the right key on the right keyframe. That's why I added a timestamp: just to have a unique identifier for the keyframe that is a result of your request.

F

D

D

...need to define that, and it's not really defined in the PR. My rough proposal would be that, yes, you can have cases where you're requesting a keyframe and the user agent, a millisecond before, decided, "Oh, it's been five minutes, so now I need to generate a keyframe." Then the keyframe request arrives, and you generate the second keyframe, like, three frames after the first keyframe. I'm not sure it matters, but maybe it matters, so we need to get into that.

D

F

D

Yeah, so my guess is that you would resolve the promise first, then synchronously enqueue the frame. Then, in terms of promises, if you did a then() and so on, it's the generateKeyFrame promise callback that would be called first, and then the read-chunk callback that would be called second, maybe in the same event loop task.

E

F

D

Yes, so there are additional issues, or small details, like when the API rejects; for audio senders and receivers, it does not make real sense. And to me, with generateKeyFrame, for instance, on the sender side, you should not drop frames. It should not... the next frame should not be, like, a keyframe. I think, maybe, we... I'm not quite sure, but that's something we can discuss.

E

A

So I would say I generally support this work, and I do understand Harald's concern now. My personal view is that the promise should resolve relative to the new keyframe, not a previous one, because that's a little bit confusing, and there might be use cases, as you once said, that are a little bit different from the encrypted transform case.

A

G

Yeah, I'm a little bit nervous about the timestamp. Maybe we can cover this when we get down to the detail later, but I just wanted to flag up that some SFUs, as opposed to asking the encoder to generate a new keyframe, will send you one that they've cached recently, which would have...

A

D

F

A

I don't think it changes the process much. Okay, so I guess the question is... I think we can leave this up to the editors, Harald. They can merge it, and then we do the CfC. It might be easier to do the CfC if you have text that someone can read, rather than a PR. Okay, so the next step is to merge into the Encoded Transform draft and then run a CfC.

A

Okay, so we can put that down: run a CfC on the preview, and then see what we get. All right, I'll let you have a little bit of extra time. We're now going to get into the WebRTC-PC and WebRTC Extensions PRs. First, Jan-Ivar will talk about the PAC timer, and then we'll get into a few Extensions issues and PRs.

C

Yeah, okay. So there's an issue filed here. This came up when we were doing Content Security Policy, but there's actually a general problem here: there's an RFC, RFC 8863, that talks about a PAC timer, which is a way to update the ICE agent to wait a minimum amount of time before declaring ICE failure, even if there are no candidate pairs and all checking has failed. This delay provides enough time for the discovery of peer-reflexive candidates.

C

And so the first thing the PR does is to add language that says the condition for "failed" is all these other things (we've finished gathering, we've received an indication that there are no more remote candidates, we've finished checking all pairs, and all pairs have either failed or lost consent) and either zero local candidates were gathered or the PAC timer has expired. With that change, we're now mentioning the PAC timer. But there's an exception there, because the PAC timer is by default...

C

...I think, 39.5 seconds. So it's a 40-second wait. But (I think Justin Uberti pointed this out) if you have generated zero local candidates, you know for a fact that you're not going to get a peer-reflexive candidate, because there's nothing for the STUN server to hit. So in that case we can skip the wait and go to "failed" immediately. That has some advantages, in that we get an instant failure path in the common situation where both... well, it's not common.
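The "failed" condition as described in this part of the discussion can be written down as a small predicate. This is a sketch of the rule as stated by the speaker, not the spec text: the `IceSnapshot` fields and the function name are hypothetical, and the 39.5-second default is the figure quoted in the discussion.

```typescript
// Hypothetical snapshot of what an ICE agent knows at some instant.
interface IceSnapshot {
  gatheringFinished: boolean;
  remoteCandidatesEnded: boolean; // indication of no more remote candidates
  allPairsChecked: boolean;
  allPairsFailedOrLostConsent: boolean;
  localCandidateCount: number;
  msSinceStart: number;
}

// Default PAC timer quoted in the discussion ("I think 39.5 seconds").
const PAC_TIMER_MS = 39500;

function shouldDeclareFailed(
  s: IceSnapshot,
  pacTimerMs: number = PAC_TIMER_MS
): boolean {
  const nothingLeftToTry =
    s.gatheringFinished &&
    s.remoteCandidatesEnded &&
    s.allPairsChecked &&
    s.allPairsFailedOrLostConsent;
  const pacExpired = s.msSinceStart >= pacTimerMs;
  // Exception: with zero local candidates there is no point in waiting.
  return nothingLeftToTry && (s.localCandidateCount === 0 || pacExpired);
}
```

The zero-local-candidates branch is what gives the instant failure path; in every other case the agent keeps waiting until the timer expires.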

C

A

A

A

...if you support it. And then you have an RTP header encryption policy in the configuration, which can be "negotiate", which says send unencrypted if the other side doesn't support it, or "require", where if the other side doesn't support it, you fail. And then we have an attribute, encryption negotiated, that tells you whether you've negotiated it or not on that transceiver.
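The two policy values described here reduce to a small decision table. The sketch below is illustrative only: the type and function names are hypothetical, and the policy strings "negotiate" and "require" are taken from the spoken description rather than from a checked spec.

```typescript
// Policy values as named in the discussion.
type HeaderEncryptionPolicy = "negotiate" | "require";

// Possible results of negotiating RTP header extension encryption.
type HeaderEncryptionOutcome = "encrypted" | "unencrypted" | "fail";

function resolveHeaderEncryption(
  policy: HeaderEncryptionPolicy,
  remoteSupportsEncryption: boolean
): HeaderEncryptionOutcome {
  if (remoteSupportsEncryption) {
    return "encrypted"; // both sides support it, so it is used
  }
  // Remote side lacks support: "negotiate" falls back, "require" fails.
  return policy === "negotiate" ? "unencrypted" : "fail";
}

// Hypothetical helper playing the role of the per-transceiver
// "encryption negotiated" attribute mentioned in the discussion.
function encryptionNegotiated(outcome: HeaderEncryptionOutcome): boolean {
  return outcome === "encrypted";
}
```

For example, `resolveHeaderEncryption("require", false)` yields `"fail"`, while the same remote capability under `"negotiate"` yields `"unencrypted"`.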

A

So here's the rub. The IETF cryptex spec marks this as BUNDLE category TRANSPORT, and that means that if the cryptex attribute is present, it must be in all m-lines of the bundle group, but the m-lines don't all need to be identical when you're not bundling. So Harald brought up the following issue, which is that this potentially creates an interop problem with non-browsers, because it's TRANSPORT, not IDENTICAL, and without IDENTICAL we can't reject the following offer, which would be cryptex on audio but not video.

A

I would note that I actually brought up this same issue when I reviewed the cryptex document. I didn't understand this particular use case, because what you're saying here is that you can't receive cryptex on video, but why would you not be able to do that if you can do it on audio? It didn't make sense to me, because the code has the ability to do it. Why would you decide not to do it on video?

A

A

A

Yeah, I didn't understand that use case, Harald, because if your middlebox does cryptex for audio, why couldn't it do it for video? It's a different story whether the thing behind the gateway can do it or not, but why would the middlebox care? It doesn't have to send cryptex, you know, to the video conference or whatever; it just has to do it on its own side.

F

A

A

G

F

F

B

E

A

A

All right, thank you. So, back to issue 69. This was about ptime. Originally, the adaptivePtime description, which was in section nine, mentioned setting ptime, but the ptime attribute itself wasn't defined. So what we did in PR 89 was remove the ptime reference, so as not to have it refer to something that didn't exist. And this issue said: hey, should we add this back and actually add ptime to the document, instead of having a dangling reference?

A

And so the question really is: are the working group participants supportive of adding ptime, and if we add it, is anybody actually interested in implementing it? Just for reference, this is the PR. It's not particularly complicated: it basically adds a ptime attribute to RTCRtpEncodingParameters (you know, the duration of media represented by a packet, in milliseconds), adds back the reference to ptime in adaptivePtime, and basically says you can't set both if you do adaptivePtime.
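The constraint mentioned at the end of this turn (ptime and adaptivePtime cannot both be used) can be sketched as a validation function. The interface and function names below are hypothetical, and the positivity check on ptime is an added assumption for the example; only the mutual-exclusion rule comes from the discussion.

```typescript
// Hypothetical subset of encoding parameters relevant to this issue.
interface EncodingSketch {
  ptime?: number;          // duration of media per packet, in milliseconds
  adaptivePtime?: boolean; // let the user agent adapt the packet duration
}

// Returns an error message, or null when the combination is acceptable.
function checkPtimeSettings(enc: EncodingSketch): string | null {
  if (enc.ptime !== undefined && enc.adaptivePtime === true) {
    return "ptime and adaptivePtime cannot both be set";
  }
  // Assumption for this sketch: a packet duration must be positive.
  if (enc.ptime !== undefined && !(enc.ptime > 0)) {
    return "ptime must be a positive number of milliseconds";
  }
  return null;
}
```

A fixed 20 ms packet duration passes, as does `adaptivePtime: true` on its own; combining the two is reported as an error.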

A

A

A

A

Okay, so let's talk about the results of the CfC. It was one of the more complicated CfCs that we'll ever have, hopefully. It ended yesterday, and here are the results on the use cases. We had three use cases: low-latency peer-to-peer broadcast, decentralized internet, and reduced complexity signaling.

A

My other question was: DRM on the web today is transport-independent, so was the requirement already met, or is there something else that we need in order to meet it? And I guess Harald, who also expressed support, said he interpreted broadcast as semi-simultaneous unidirectional delivery of the same audio/video content to a thousand-plus users. And then Jan-Ivar said he thought it was too broad, and that it was confusing what broadcast meant.

A

I think Tim also responded that it was multiple unidirectional real-time streams, with multiple viewers watching a concert or sports event or church service. We have seen that implemented with the data channel in particular, and some of these do use DRM that already exists. And so then Jan-Ivar asked whether this requires DRM support in the WebRTC media stack or in the data channel, something beyond what we already have.

G

So yeah, I think this was badly phrased, and I didn't actually mentally include data channel use cases here, but maybe that's an omission that I shouldn't have made. I don't know, I'm not sure yet. I think the only objection I have to some of these is the "beyond a thousand": there are smaller churches who might still want to do this.

G

G

F

A

Bernard. Yeah, I also support adopting the use case, and I agree with a bunch of what Harald has said. I will say what I think the use case is, which I think is close to what's been suggested here. I've seen this. You know, Tim, you mentioned the concert, the sporting event, that kind of thing; I think that's actually the core of it.

A

The big question in my mind is the latency. You use the term "low latency", and I think that's the right term, because I think you're not talking about an ultra-low-latency case here, like a gaming scenario. I think you're talking about something which doesn't have quite the latency requirement even of conferencing. I'm also thinking of a very large company meeting, like a hundred thousand people in a company, and what I've seen happen here is basically...

A

It can be done a bunch of ways, but basically one way is to use the data channel. Think of it as a data channel to a gateway, and then branching out with peer-to-peer caching. With the data channel, you can get to that kind of hundred-thousand scale more easily than you could with WebRTC. I've also seen DRM supported for this, but it doesn't require anything new. The way it's done is: you send containerized media and get the keys with EME. It looks exactly like the DRM in HLS.

A

The thing here is: the complexity comes in if you want to use WebCodecs, and there is discussion in the Media Working Group about how that would work, because then it wouldn't be containerized. But we don't have to worry about that in WebRTC. I think we should mostly focus on the transport. And with respect to some of Jan-Ivar's questions...

A

This is done with the latency lowered by changing the congestion control on the server, so there's nothing in the RTCDataChannel that has to change, and since it's unidirectional, it wouldn't be both directions anyway. So at least I think I understand my interpretation of the use case, and maybe it's close to what Tim and Harald had in mind. Hopefully, yeah.

C

Yeah, so I'm glad I'm hearing a lot of ways in which this could be improved. The use case could be clarified a lot. I think the difference is that I think we should object to the use case until those improvements have been made, so it's clear what we're committing to. And also, WebRTC is being used for a lot of things, but it still has a scaling problem, so I think we should be careful about what we actually commit to.

A

C

Right, it should support a thousand or anything like that, as far as sending media over data channels. But yeah, to clarify what's on the slide: what I said about DRM was that I just want to make it clear that I worry that the use case is so broad that it might lead people to believe that we're asking for a lot of these things. So I want to clarify that I do not think we should support DRM in the WebRTC media stack or data channel.

C

Right, we're not adding DRM-specific features. You can send media over data channels today. As you pointed out, if you're going server to client, you can even send low-latency media. You just can't upload low-latency media from client to server, because that would require... well, you can still do that, but you're still bound by the congestion controller.

A

E

A

A

A

I

And that's the unmute. Yep, there we go. So, you know, also on Jan-Ivar's comment on the data channels: this mentions peer-to-peer, but Bernard was talking about how, well, you can do real time if you modify the server's congestion control, but that wouldn't be peer-to-peer. Just a note.

A

I

A

G

So I think that peer-to-peerness is orthogonal to whether one end is a browser or not. There are a bunch of devices out there that aren't servers, but also aren't browsers, that might be the origin of this. You might put a super-duper webcam in your church and have your 700 parishioners watch it. There are no servers involved; it's peer-to-peer. But yes, you can mess with the congestion control algorithm in that super-duper webcam.

G

G

F

A

G

So, the use case. Well, yeah, again I'm slightly struggling with dragging out the use case, and I think it would be good to get some expert input on this. But the feedback I've had from people who are in the decentralized messaging space is that they want to be able to effectively receive messages in the background when the user currently doesn't have a tab open, and that is why we're in the service worker.

G

B

I

I

And then, if we do put it in there, that's going to open up some interesting additional security, privacy, et cetera stuff that will need to be thought about, as well as resource usage in the browser. Ironically, I'm now on the workers and storage team at Mozilla and have been working on service workers, so...

A

G

G

I

Yeah, one thing: as I'm thinking about it, there are some real concerns here once you have this stuff running entirely headless, without any user-visible control. Effectively, service workers are totally hidden from the user. I mean, in theory, yes, you can get to them if you know exactly what to look at in a browser, but effectively the user never knows that they're there, which means once one gets installed, it can be doing anything forever.

I

I

G

G

A

So, in terms of next steps, could we actually try to get the decentralized people at the working group to make the case and maybe discuss this? Because I personally feel like I don't understand this space well enough to even understand the motivation entirely, and certainly with ICE, I think it's gone over my head. I don't quite understand.

G

G

C

I was just going to say, maybe another way to approach it is to compare it to fetch. I understand you can use fetch in service workers today, so I'd say, as a devil's advocate: what are the differences between asking for peer-to-peer on one end and, on the other end, going back to the decentralized people and asking, can you somehow solve your use case using fetch?

F

F

A

Okay, and then we have reduced complexity signaling. Harald mentioned that the existing requirement is too simplistic due to the abuse possibilities outlined in RFC 8827, section 6.3. Then Jan-Ivar asked, how is this different from WISH? Tim said WISH is for ingest; this would be for egress. And then Jan-Ivar asked whether it was the IETF, the new URI formats for the IETF, and.

F

What these are supposed to guard against and why it's not a problem in this case. I mean, in the WISH case, I think the answer is: hey, what's announcing the URL is the server, and servers are big boys. They can look out for themselves, which might not be the case for a peer-to-peer connection.

G

Now, how that, without having to drag in a bunch of infrastructure, a bunch of JavaScript, I think it feels to me like that's achievable. Now, how you do it and how it could be done, I don't yet know. And just the other distinction with WISH is that WISH is a negotiation.

G

G

F

F

E

A

G

But I think, to Harald's point about the server being a big boy, the assumption maybe then is that one end of this is a server. Maybe that's the restriction that allows us to write this: that one end of this is a server, and therefore there are attributes you already know, perhaps from having talked to it before or something. So it's a more stable place, and it can look after itself in some respects. Yeah.

G

A

F

So I would say that the use case should just be adopted with a caveat saying that security guarantees for each one must be taken into consideration. But the way it looked currently was that it said the answer is that you're right; what was the question? And I think the question needs to be, it needs to be.

F

A

F

G

I mean, I don't want to dive into the detail of this, but I think the strong point, as you said, Bernard, was that if we already have some sort of exchange of certificates that both sides know, then there's at least somewhere to stand and maybe validate things from, or, I don't know, use that pivot point to build the rest of it.

A

A

A

So we're trying to summarize here the comments on the individual requirements, and a bunch of them were questions about whether we actually could do this already or whether we needed new stuff to do it. So for N30, it said: ability to re-establish media after an interruption. And my question was: do we need new APIs for this? As an example, you know, if your media gets.

A

A

Difficult fish. So yeah, that was the question about N30: did it have an API requirement, or was there some underlying protocol thing here without an API? And then N31 was to play media during an interruption. And I guess the question was: couldn't you just download the hold music before the interruption, cache it, and then play it? It wouldn't be real time, obviously, but you'd have stored it. You.

A

I

A

So N32 was about parking connections, and my distant memory of SIP is that you do have parking there. So could you just do that with the existing SIP signaling? Do you need anything new in the API? And then the long-term connection re-established without access to external services: I think that gets into what we were just discussing, and my question was, is there some kind of protocol thing you need there? Like, basically, your SIP signaling server is no longer accessible.

A

G

I'll clarify the hold music one, because I think it's probably the one that is the least understood and the easiest to explain. The issue here is that the browsers don't tell you when you've been interrupted in a way that allows you to do anything sensible. If you get a GSM interruption, or the user puts something in the foreground, you don't know, and what's even worse, the other party you're talking to has absolutely no idea.

B

G

And I think it would be really nice to be able to, you know, have hold music or whatever fits for the far end to receive. So it's basically a mute state that carries the idea that it might be retrievable, and somehow that is sent to the far end, and the far end can play comfort noise or whatever it is that it needs to do.

G

Well, so I think there are two parts. The first part is that the near end needs to know what's happening. If the near end knew what was happening, then it could probably send something over the data channel and we could work around it that way. But what it does at the moment, as far as I can tell, is it just.

G

I should probably defer to Youenn or something, but as far as I can tell, what it does at the moment is it just mutes the stream. It mutes the audio stream, right, and possibly the video stream, depending on the implementation. What I'm asking is: if that mute is sent, can we not do something else as well? But okay.

B

I

A

I

Okay, so let's assume there's some way you can find out from the OS or whatever. Second of all, if we find that out, then we would need to define some sort of event. Once we define some sort of event that an application can get, then the application can do whatever it wants. It can switch to sending hold music. It can just send a data channel message to the other side. It's entirely within the existing APIs.

I

It can be handled. The only thing I think that is needed is some sort of notification that some sort of interruption of media by the operating system has occurred. And then you have to figure out, you have to worry about things like: the user just hits the mute button on their mic. Does that constitute an interruption?
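The workaround discussed above (learn about the interruption locally, then tell the far end over a data channel) could be sketched roughly as follows. This is only an illustration under stated assumptions: it uses the existing `mute` and `unmute` events on a track as a stand-in for the interruption notification being asked for, and the `InterruptionMessage` shape and the `signalInterruptions` helper are hypothetical names, not part of any WebRTC specification. As the discussion notes, these events do not say why the track was muted, which is the gap being raised.

```typescript
// Hypothetical application-level message; not defined by any WebRTC spec.
interface InterruptionMessage {
  type: "interrupted" | "resumed";
  trackId: string;
}

// Minimal structural types so the sketch also runs outside a browser.
// In a browser, a MediaStreamTrack and an RTCDataChannel fit these shapes.
interface TrackLike extends EventTarget {
  readonly id: string;
}
interface ChannelLike {
  readonly readyState: string;
  send(data: string): void;
}

// Forward local mute/unmute events to the far end over a data channel.
// Returns a function that removes the listeners again.
function signalInterruptions(track: TrackLike, channel: ChannelLike): () => void {
  const notify = (type: InterruptionMessage["type"]) => () => {
    // Skip silently if the channel is not usable right now.
    if (channel.readyState !== "open") return;
    const message: InterruptionMessage = { type, trackId: track.id };
    channel.send(JSON.stringify(message));
  };
  const onMute = notify("interrupted");
  const onUnmute = notify("resumed");
  track.addEventListener("mute", onMute);
  track.addEventListener("unmute", onUnmute);
  return () => {
    track.removeEventListener("mute", onMute);
    track.removeEventListener("unmute", onUnmute);
  };
}
```

On receiving an `interrupted` message, the far end could choose to play hold music or comfort noise locally. Whether a user pressing a mute button should produce the same message is exactly the open question raised in the discussion, and this sketch does not try to answer it.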

C

A

Right, so anyway, I think for this one the thing to do, Tim, would be to revise it, just to clarify what we mean by interruption. Certainly, I think there was a misunderstanding about that. And also for you to review Youenn's PR to see if that's a foundation for it or whether there's an additional requirement.

G

A

G

I think, though, we probably need to come up with some limitations, like that the two have already met, that N33 is only achievable if... well, no, actually it's kind of reviving it, so they already know each other. So there are some things that you can pre-assume, potentially.

I

G

I

G

I

I

A

So you don't always need a media server to do a conference. You can do that today using a mesh. And then there was a whole bunch of comments on autoplay being messy, and I certainly sympathize with that. There were questions about the autoplay attribute, and actually I wanted to point out.

Jan-Ivar

said