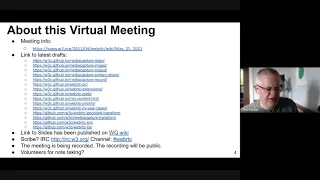

From YouTube: WebRTC WG meeting May 25, 2021

B

B

B

B

Yeah, I think that's okay. Does Dom have any objection to that? Okay, all right. So this is the agenda. Just a little bit of a warning: we're gonna do stricter time control. Harald will be the bouncer, so we're gonna give a warning two minutes before your time is up, and then once the time has elapsed, we will move on to the next item.

B

D

Thank you very much. Can everybody hear me? I've just joined from my other account. Yeah, perfect. So this is about Capture Handle. Capture Handle is a way for two applications running in two different tabs to communicate, but to communicate just the bit of information needed to jumpstart the rest of their communication. It also assumes that they're already communicating in one direction; let me get to that in a second. So, let's assume that we've got one VC application.

D

It could be Google Meet or, let's call it, VC Max. So that's a fictional application that we have, and it's using getDisplayMedia to capture another tab that's running an application like Microsoft PowerPoint, OpenOffice, Office 365, or anything else. Let's just call it the presentation application. So, two applications: a VC app capturing a productivity suite. Next slide, please.

D

So now, let's assume that the presentation application has all sorts of APIs for getting a message saying: hey, I want you to change to the next slide, or I want you to change to the previous slide, go to full screen, leave full screen, anything we can imagine. What exactly it has is out of scope; let's just assume that it has previous and next slide.

D

But the very minimum that we can assume is needed is a session ID. When I start capturing something with getDisplayMedia, I don't generally know what I'm capturing; it's up to the user to decide. I might know that I captured a tab, but I don't know which tab, who's running there, etc. So that's what I want to focus on: how can we safely discover a reasonable amount of information to allow collaboration, if collaboration is possible, between these two applications?

D

So one hacky way of doing that, which you could do right now without any changes to any browser, is that the application that thinks, hey, I might be captured, could just show a QR code, and whoever ends up capturing it could just read that QR code, extract whatever information it wishes out of that, and then use it. So, for example, it could get a session ID and then, through some shared backend, it could try to authenticate and say: hey, I want to start communicating with this session.

D

D

Second, it's not efficient, because when you capture something you have to continuously scan and try to read this QR code, which of course doesn't have to be a full-blown QR code. It could be something a lot more minimal and less user-visible. It could just be a couple of pixels, but you still need to scan them at every iteration. And it's also ripe for disruption, even unintended, just by somebody else

D

drawing to that particular part of the screen. And last of all, when you're capturing, you always need to take into account that, hey, whatever I think I'm reading, none of it is verified, right? I need to verify all of it out of band somehow. For example, I could verify a session ID by trying to open a communication channel with whoever has that session, and they could display, you know, a challenge and a response, or anything like that. Next slide, please.

D

So what I'm suggesting is that we say: okay, if this is already possible, let's try to make it better. First of all, of course, this needs to be opt-in, right? The application would not, like in some previous suggestions (actually not my suggestions, but never mind), just always expose its title or anything like that. Rather, it would have to say: hey,

D

I want to expose, and here are the things I want to expose, and here's who I'm willing to expose them to. So there are two things it could expose: it could expose its origin, and it could expose a self-selected string, which would often be a session ID of some sort that's meaningful to it. And the next thing is, it needs to be able to say: hey, I want these origins, or maybe any origin, to be able to read the information I'm exposing. And I'll get back to

D

why that is useful a bit later. And then the capturing app will say: hey, here's what I'm capturing. So I'm capturing meet.google.com, I'm capturing slides.google.com or any other thing, and here's the session ID that it claims. Now, one benefit here is that at least part of this information is automatically verified: the origin. The browser can guarantee that you cannot expose somebody else's origin. So, basically, when you're exposing, you just say: do I want to expose my origin,

D

yes or no? You don't self-report the origin. And then, once you know that the session ID or anything else is coming to you from a verified origin, you could choose to trust it or not trust it. So, for example, if one Microsoft application captures another Microsoft application, maybe it chooses to say: okay, whatever information is delivered in the handle, we deem that to be trustworthy. Or not; it's up to them. Next slide, please.

D

So here is just one illustration of how this could be used. Let's say that you're on the captured side and you're some kind of presentation application. So you call setCaptureHandleConfig, meaning: that's my capture handle, that's what I would like to show. You could say exposeOrigin: true; you could say false.

D

Then the handle can be anything. It could be a session ID or it could be any other string. Maybe here we see a human-readable string that says: hey, go here to understand what kind of API you could use with this particular ID, and here's the ID as part of it. And that could be parsed on the capturing side. And then we say permittedOrigins is any origin, just to make it simple. Next slide, please. Thank you. And then, on the capturing side, we could say: okay, I called getDisplayMedia,
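As a rough illustration of the captured side described here, the following TypeScript sketch builds the kind of config object the speaker mentions. The field names follow the spoken description (`exposeOrigin`, `handle`, `permittedOrigins`); the handle layout (`slides-api-v1;session=...`), the `"*"` wildcard for "any origin", and the helper names are assumptions made for this example, not a confirmed API surface.

```typescript
// Hypothetical shape of the config described in the talk.
interface CaptureHandleConfig {
  exposeOrigin: boolean;
  handle: string;
  permittedOrigins: string[]; // ["*"] is used here to mean "any origin"
}

// Build the config a presentation app might expose for a given session.
// The "key;session=<id>" layout is an illustrative convention only.
function buildCaptureHandleConfig(sessionId: string): CaptureHandleConfig {
  return {
    exposeOrigin: true,
    handle: `slides-api-v1;session=${sessionId}`,
    permittedOrigins: ["*"],
  };
}

// Feature-detect before calling: the method may not exist in a given browser.
function exposeCaptureHandle(config: CaptureHandleConfig): boolean {
  const mediaDevices = (globalThis as any).navigator?.mediaDevices;
  if (typeof mediaDevices?.setCaptureHandleConfig !== "function") {
    return false; // Not supported here; nothing is exposed.
  }
  mediaDevices.setCaptureHandleConfig(config);
  return true;
}
```

Keeping the config construction separate from the browser call makes the opt-in explicit: an application that never calls `exposeCaptureHandle` exposes nothing.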

D

I got something. Missing from this example is the part where you say: okay, is this a tab? And then, if it's a tab: okay, is it exposing a handle? And once I see it's exposing a handle, I could say: okay, is it one of several collaborating applications I know? And if it is, then I'm gonna parse the handle in a certain way, extract the session ID, and then run whatever code I want. Like, maybe I create an adapter for previous and next with whatever API.
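The capturing-side checks just described (is a handle exposed, is the origin a known collaborator, can a session ID be extracted) could be sketched as below. The `CaptureHandle` shape, the allow-list contents, and the `key=value;...` handle layout are all assumptions made for illustration.

```typescript
// Hypothetical shape of what the capturer reads from the captured tab.
interface CaptureHandle {
  origin?: string; // present only if the captured app chose to expose it
  handle: string;
}

// Origins this capturer knows how to collaborate with (illustrative value).
const KNOWN_COLLABORATORS = new Set(["https://slides.example.com"]);

// Returns the session ID if the handle comes from a known collaborator and
// contains a "session=<id>" part; otherwise returns null.
function extractSessionId(captured: CaptureHandle | null): string | null {
  if (!captured || !captured.origin) return null;
  if (!KNOWN_COLLABORATORS.has(captured.origin)) return null;
  for (const part of captured.handle.split(";")) {
    const [key, value] = part.split("=");
    if (key === "session" && value) return value;
  }
  return null;
}
```

Only after this returns a non-null ID would the capturer go on to build something like the previous/next adapter; the session ID itself still has to be validated with the collaborating service.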

D

So one thing to note here is that you could actually even drop the exposing-the-origin part if you wanted to, because if your ID is sufficiently unique, then you could just get a value and go and talk to whichever server the only collaborating application, or the small number of collaborating applications, has, and say: hey, does this ID belong to you, by any chance? And then you could use that to establish communication.

D

D

The captured side says: hey, here's what I would like to expose. And then the capturing side says: hey, I would like to either get notifications whenever the handle changes, which can happen if you navigate, and it can happen if you just load a different slide deck in the presentation software, or anything like that; or I could read the current one at whichever time I wish.

D

D

Thank you very much. So then we want to think: okay, are there any privacy or security concerns with an API of this shape? And my argument is no, and I think that is because of the following. First, it's opt-in, so you could very easily just not use it. Second, it's user-driven, so it hardly ever takes effect. It's only when the user actually starts display capture, and we don't allow

D

So now I just need to make sure that everybody is looking at the second presentation, because soon they're gonna shift out of alignment with one another. So next, it's more robust than steganography. That's not really a privacy or security issue, but it's good to have that, right? Once you start relying on the mechanism, you would like it to be robust.

D

D

Next, you could use a handle that's kind of partially opaque. So you could write, in a very understandable form, exactly what you are: I'm Wikipedia article XYZ. Or you could have something that's only understandable if you have access to another API. The last thing is: hey, this gives us the ability to rely on the origin, because you cannot spoof it. And one more thing is, you could kind of worry that, hey,

D

can I attack the capturer by spamming it with events? And the answer is no, because it's going to cost you as much as it costs the capturer, which you don't even know exists. And the capturer could avoid that by just not registering to read your handle, and it could always deregister that handler. Next slide, please. Yes, so previous slide, please. And that was what I had to say. I will shift the mic over to Jan-Ivar later, but first I wonder if there is any other input from people.

F

This is good. So, a few thoughts there. First, the name might be a bit too generic. When people think about capture, they're thinking about camera and microphone, or maybe that's my bias there. This is very specific to screen capture, so maybe we could bikeshed a name that has more focus and more meaning.

F

I would start very simple: always expose the origin, and if people start to come to us saying, hey, I want to not show my origin, then we can talk with them. Otherwise, I'm not exactly sure whether we want this event mechanism. But other than that, if we have a one-time blob of data that is exposed from the captured page to the capturing page, I think it's probably fine.

F

I would say that in most cases, navigation will probably not happen. And also, if you have tight coordination between one and the other, you might be navigating to another collaborating destination anyway, so you might be able to reconcile things in some way. It's something that is doable without this event mechanism anyway. So I think

A

D

So it's not controlled by the app in any way. The user just moves it, and it could be to another collaborating or non-collaborating site, and the browser does not know, right? So the browser needs to say: hey, this capture handle no longer applies. You don't have to keep calling me; I'll give you an event, so you know it no longer applies, and maybe something new is going to apply in a second, or maybe not.

F

Maybe that's something we could discuss. In that case, if you really want to go with an event, I'm not sure that settings is the right way of doing things. Maybe we should invent something new, because it does seem a bit odd that you have settings that will change and then you need an event somewhere else, so it seems like a really new mechanism. If we want to go with settings, I would go with something that is not mutable and be done with it.

D

I'm not sure I understood, because settings was for if you want to read the capture handle at the moment. And then, if you want to register an event handler, the event handler is on the track, not on the settings, and when you get an event, the event includes the new capture handle. So basically, every time the capture handle changes, you get it with the event, so you don't actually have to call getSettings at any time.
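The event-based pattern described here (a handler on the track, with the new handle delivered in the event) might look like the sketch below. The event name `capturehandlechange`, the `captureHandle` field, and the use of a plain `EventTarget` as a stand-in for a track are assumptions for illustration only.

```typescript
// Hypothetical event carrying the new handle (null when none applies).
class CaptureHandleChangeEvent extends Event {
  readonly captureHandle: string | null;
  constructor(captureHandle: string | null) {
    super("capturehandlechange");
    this.captureHandle = captureHandle;
  }
}

// Subscribe to handle changes on a track-like EventTarget.
// Returns a function that removes the listener again.
function watchCaptureHandle(
  track: EventTarget,
  onChange: (handle: string | null) => void,
): () => void {
  const listener = (event: Event) => {
    onChange((event as CaptureHandleChangeEvent).captureHandle);
  };
  track.addEventListener("capturehandlechange", listener);
  return () => track.removeEventListener("capturehandlechange", listener);
}
```

Because the handle arrives with the event, the capturer never needs to poll, and the returned function lets it deregister whenever it stops caring about updates.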

E

A

D

F

C

D

Not necessarily. So, for example, if you're capturing another tab that happens to be in the same origin, you could use a BroadcastChannel. So this does not really talk about how you're going to use the session, just how you discover what you've captured, and then you establish communication in a completely orthogonal way. I just aim to give you a strong enough way that it would apply to as many scenarios as possible.

E

A

A

E

E

E

It seems like we're introducing multiple solutions that are perhaps competing, and this solution would then enshrine more the idea that... what does it say about the working group and what it intends to signal? What is the intended API that people should use for capturing web services? And specifically on the API: in your earlier slide, you said the discussion scope is discovery of the generic bare essentials.

E

I also think this API could be smaller. I agree you should always include the origin, and maybe even have a browser-produced ID to lessen... I'm not very keen on having another communication channel, potentially, where we have to validate a lot of long inputs. If we have an unlimited string that JavaScript can insert into any API, it's going to be abused one way or the other.

D

Sure. So, first, it does not have to be unlimited, right? Implementation-wise, we've limited it to, I think, 1024 characters, and we could discuss what the right limit should be and whether it should be throttled. Maybe you're not allowed to change it more than, you know, a certain number of times; maybe there's a leaky bucket until the next update.
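To make the length limit and throttling idea concrete, here is a minimal sketch of a rate limiter for handle updates. It uses a token-bucket formulation, a close relative of the leaky bucket mentioned above. The 1024 limit is the figure quoted in the discussion; the capacity, refill rate, and class name are made-up values for illustration, not anything a browser is known to implement.

```typescript
const MAX_HANDLE_LENGTH = 1024; // limit quoted in the discussion

// Token-bucket limiter: each accepted update spends one token, and tokens
// refill at a fixed rate up to a cap.
class HandleUpdateLimiter {
  private readonly capacity: number;
  private readonly refillPerMs: number;
  private tokens: number;
  private lastMs: number;

  constructor(capacity: number, refillPerSecond: number, nowMs: number) {
    this.capacity = capacity;
    this.refillPerMs = refillPerSecond / 1000;
    this.tokens = capacity;
    this.lastMs = nowMs;
  }

  // Returns true if the new handle is accepted at time nowMs.
  // Note: string length here counts UTF-16 code units.
  tryUpdate(handle: string, nowMs: number): boolean {
    if (handle.length > MAX_HANDLE_LENGTH) return false;
    const elapsed = Math.max(0, nowMs - this.lastMs);
    this.tokens = Math.min(this.capacity, this.tokens + elapsed * this.refillPerMs);
    this.lastMs = nowMs;
    if (this.tokens < 1) return false;
    this.tokens -= 1;
    return true;
  }
}
```

Passing the clock in as a parameter keeps the sketch deterministic; a real implementation would read a monotonic clock instead.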

D

E

No, no, I think I phrased myself poorly. What I meant with getViewportMedia was rather an approach that relied on better security foundations, like site isolation and opt-in to capture. That, to me, is the main thing that I think we had a breakthrough on in the working group: that we could provide better-integrated web presentations in a safer way. And I would like an API like this to work in conjunction with that, but I would perhaps want to limit it to site isolation and opt-in capture.

D

Then I think that once we have getViewportMedia, we can discuss whether this API still applies and how we could move off of it if we believe it to be detrimental. But right now, I think that it is very useful, and I don't really see how it could be abused. I'm open to listening, but right now, especially the suggestion of having a browser-assigned ID, which makes everything less powerful and requires an additional

A

E

So yes, this is my crystal ball slide, which you should never contribute to the internet, because it's a prediction, and in two years we'll see: it'll be out there and be either totally correct or totally false. So I'm doing a crystal ball moment of what it would look like in two years' time.

E

Excuse me. This means they can capture themselves using getViewportMedia, with permission from the user. And the use case there is: the user clicks the button in the presentation program to join an integrated meeting, and that's the starting point; they're actually in the presentation program. But the same sites can also register for preferential positioning and treatment in getDisplayMedia.

E

I just used slightly different words here, you know, "register intent: presentation", because that's what the API really is. What getDisplayMedia has is a powerful picker that's built into the browser, while getViewportMedia is great when you're already on the permission page and integrated meetings.

E

D

So one thing that I've not written down, and I think I've not said before, is that if we look at it from the VC application's point of view, I don't think that they would ever agree to let go of being able to capture an arbitrary tab. They would never be able to say: okay, we can only capture something that collaborates with us, calls getViewportMedia on our behalf, and then shares it with us. That is way further out than 2023, I think.

D

D

So, on the intent thing, I have to say, it sounds like just an invitation to have applications jockey for position, potentially even by making false claims, saying: I'm a presentation slash video game slash productivity suite, whatever, please put me... or whatever. So I don't think that we should rely on that in order to determine position in the picker in any way. That's number one. And for the browser-assigned ID, I would say that I just don't see why it's necessary.

D

I know why it's detrimental, and that is that it forces another translation step, right? It also means that you have to have collaboration over a cloud, whereas if you have an arbitrary string, you might be able to say all you want to say, and that's it. Like, you could say: hey, I'm a bank; try to warn the user that he might not have really wanted to capture me.

D

He's probably made a mistake. Or you could say, you know: I'm not gonna give you my session ID, but here's an API that you could use. There could be a lot of things that you could say that would be useful without a shared backend. So first, you're blocking all of those. For the ones that do have a shared backend, you're enforcing an additional step, whereas maybe you could talk to each other with a BroadcastChannel. Now, no, that's not good enough.

D

Actually, the BroadcastChannel, I guess you could use if you are same-origin; you could ask, like: who is that? So I take back that particular argument, sorry. And also, with the browser-assigned ID, you're no longer exposing the origin, unless you also meant to add that, which maybe you have. So maybe you meant to say: okay, first you have to opt in before you get the browser-assigned ID, and then you get both the browser-assigned ID as well as the origin.

E

E

D

E

No, I just meant to say that we can keep that ordering. That's fine. But I don't see anything wrong with it: if web pages have gone through the effort of site-isolating themselves and opting into capture, those are inherently safer to share. I see nothing wrong with giving that group, that bulk as a whole, preferential treatment over sites that haven't gone through that. I don't think that's going to lead to jockeying as far as position within that group.

A

F

I would just mention, now that I understand a bit more of the proposal, that the idea that you can update the session ID is really like a one-way message channel. And we already have a construct, MessageChannel, which is both ways, and we have WindowProxy, we have the Service Worker Clients API. We have a lot of these APIs that allow one context to talk with another. So I would look at these constructs and see whether we should try to use them or not.

D

I'm sorry, but I think that is a misunderstanding, because all of the existing mechanisms, like peer connection, etc., assume that you already know who you want to be talking to. Whereas here, you've got an existing channel: you're basically getting all of the pixels off of whomever you're capturing, but you don't know who that is. So they can send you messages, like embedded QR codes, etc., but you don't know who that is.

F

E

Well, I think this is an interesting problem that the working group should definitely try to solve. But I think the issues are: what security properties should there be, what's the scope of the solution for identifying, and how narrow does it need to be? And anything else... oh yeah, I think we should all just always expose the origin. I don't know where the idea came from to hide the origin.

B

F

A

B

D

Slide, thank you. So, let's imagine a post-COVID world in which we also have cheap and instantaneous travel, and therefore, instead of meeting remotely as we are right now, we're sitting in one room. Maybe all of our companies got acquired by the same company, and now we're all working together and sitting in a room, and we take turns presenting to a shared screen that's on the wall, and there is a PA system, a set of speakers, whatever.

D

So it would be nice if, when we start capturing a tab, we could also say: hey, kindly stop relaying your audio to the local speakers; this is being captured and we're doing something useful with that. So what I'm proposing here is a new constraint called suppressLocalAudioPlayback, modulo bikeshedding on the name, of course. And if we go to the next slide, we can see the current PR state, modulo

D

some new comments that have just come in and that I have not been able to address yet. Thank you, Jan-Ivar. So basically, with this constraint, we say: no longer play the audio of the captured tab over the speakers. But one thing that we might want to consider is: okay, what if there are multiple capturers? In which case we say
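For illustration, a capturing application might express the proposed constraint roughly as follows. The constraint name `suppressLocalAudioPlayback` comes from the talk; placing it under the `audio` member of the options, and the helper names, are assumptions of this sketch rather than something the discussion settles.

```typescript
// Build getDisplayMedia-style options with the proposed constraint.
function buildCaptureOptions(suppressLocalPlayback: boolean) {
  return {
    video: true,
    audio: { suppressLocalAudioPlayback: suppressLocalPlayback },
  };
}

// Start capture if the API is available; resolves to null otherwise.
// Whether to suppress local playback depends on the application's use case.
async function startTabCapture(suppressLocalPlayback: boolean): Promise<unknown> {
  const mediaDevices = (globalThis as any).navigator?.mediaDevices;
  if (typeof mediaDevices?.getDisplayMedia !== "function") return null;
  return mediaDevices.getDisplayMedia(buildCaptureOptions(suppressLocalPlayback));
}
```

In the shared-room scenario from the talk, the application would pass `true` so the captured tab's audio is not also played through the local speakers.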

D

E

To clarify, the reason there wasn't actually to prevent the site from knowing it's captured, because it would actually be able to read this from the setting itself. My concern was more about how it would be implemented, because I worry that, without that clarification, there are two potential ways the browser could implement it. One would be to basically mute audio from the tab, while the document is none the wiser that this is happening, and that's the one I think we want.

E

E

D

E

D

E

F

F

F

My main question would be whether the user agent could not already do that in, like, most cases or not. That's the question I have. For instance, maybe suppressLocalAudioPlayback should be true by default, meaning that if the page is starting capture and you have a prompt, and in the prompt you say, yeah, I want the audio to be captured, maybe the user agent should be smart enough to say: oh, I will suppress your local audio playback. Or maybe

F

the user agent will wait to suppress local audio playback based on what the capturing page will do. If it's playing this local track, then it will suppress. Or maybe the user agent could provide UI to control that, either on the getDisplayMedia prompt or on the tab icon, where you can mute and unmute.

F

E

So I can jump in a little bit and say that Firefox does actually have a mute icon in the tab, and this is why the PR says the user agent should stop relaying, because we imagine that, since double muting states are a bad idea, it could actually flip this state, and users, if they wanted to, could flip this state manually. But I think it's still useful to have a constraint, to have the application provide its intent here.

D

I would like to answer Youenn. So, Youenn, you said three different things. Why not just change the default behavior? To that I would say that would not really work, because you could be capturing something just in order to record it and not in order to share it. So you could imagine that in some cases you would want to suppress local audio playback, but sometimes not, so you cannot just change that. To add any UI elements, I think, is a very open question.

D

I think that we already have a very big kind of picker, and it's gonna be too difficult. I don't think that any user agent's UX people are going to agree to that. I think that's a bad idea. Even if you were to convince them that it's a good idea, you would probably want to influence the default state when the picker comes up, or maybe you want to... I don't think that

A

F

F

B

D

B

D

Thank you. So I would say that, sure, it's true that even after the decision is made, the user needs to be made aware, and potentially even be able to reverse that decision. But I don't think that the heuristic that says, hey, let's observe what the capturing page does and decide based on that, is going to lead to a consistent end-user experience.

F

D

F

So the user will be shown a picker, and the user will click on "share audio", and in some cases the audio will disappear and in some cases the audio will not disappear. That's potentially problematic to users. They will not understand why it's disappearing and why it's not disappearing, and it's the capturing web page that controls that now. So that's why I'm a little bit hesitant to actually go down that road.

D

That is true, but you could show the user, like, a flashing mute sign or something that shows: hey, the reason this tab is now muted is because... And that is better than offering them a choice, because offering a choice adds cognitive load, whereas if you just say, hey, this happened, this is why, that might be something that UX people might be more amenable to. But yes, I agree that we could continue discussing this on GitHub.

E

D

D

Of course, there is an implicit decision here that getViewportMedia does not already do some cropping, and I'll get back to that implicit assumption in a second. But assume that we have getViewportMedia that lets you capture the entire tab, everything on it. And what now? So, applications can comprise multiple parts, and those multiple parts can be cross-origin from one another, and they can also kind of shift in size and location. Especially if you change the size of the window, then the browser decides to move things around.

D

D

Yes, so let's assume that we've got this particular collaboration between a productivity suite displaying presentations and the video conferencing application that draws the talking heads on the side. And so, when you capture, let's say you're using the "share me" button that calls getViewportMedia, what you want to capture is just the part that does the presentation. Moreover, you might only want to capture part of that, because maybe there are some speaker notes that are also embarrassing if discovered.

D

D

Number one is performance, because the browser, instead of having to keep on handing over very big frames to the application, might hand over frames that are a quarter of the size. So why not? And the other reason is that it's very difficult, almost impossible, for two cross-origin frames to communicate their size and location in the viewport when you resize the window, and make sure that you never mis-crop even a single frame.
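The geometry behind both arguments can be sketched in a few lines. The `Rect` type and function names below are hypothetical; the point is only that the crop is the intersection of the target with the viewport, and that a quarter-sized crop carries a quarter of the pixels.

```typescript
interface Rect { x: number; y: number; width: number; height: number; }

// Intersect the target's rectangle with the viewport; null if nothing is visible.
function cropToTarget(viewport: Rect, target: Rect): Rect | null {
  const left = Math.max(viewport.x, target.x);
  const top = Math.max(viewport.y, target.y);
  const right = Math.min(viewport.x + viewport.width, target.x + target.width);
  const bottom = Math.min(viewport.y + viewport.height, target.y + target.height);
  if (right <= left || bottom <= top) return null;
  return { x: left, y: top, width: right - left, height: bottom - top };
}

// Fraction of the full-frame pixels that remain after cropping.
function pixelFraction(viewport: Rect, crop: Rect): number {
  return (crop.width * crop.height) / (viewport.width * viewport.height);
}
```

An application doing this itself would have to learn the target's rectangle and recompute the crop on every layout change, which is the part the speaker argues is nearly impossible to get right across origins without mis-cropping a frame.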

D

D

So there have been some discussions between me and Jan-Ivar about: okay, but who needs to? Maybe we don't need to crop; maybe getViewportMedia itself is gonna crop. Whichever frame you call getViewportMedia from, you only capture that frame. But I would like to remind everybody that we're not talking about element-level capture, because you would still be cropping.

D

You would still only be capturing whatever the user can see, so occluded content is not going to be captured, and the occluding content is still going to be captured. So essentially, what we would be promoting here is a pattern whereby I have an invisible frame or div or whatever behind all of the content.

D

They

don't

care

about

that

and

they

might

not

even

think

that

it's

so

terrible,

but

we

would

like

to

teach

them

to

do

better.

So

I

think

that

a

more

developer-friendly

solution

would

be

something

that

would

allow

them

to

crop

the

capture

to

an

arbitrary

target,

but

in

a

way

that

cannot

be

used

incorrectly.

Next

slide,

please.

D

So

assuming

for

the

sake

of

argument

that

we

agree

on

all

the

previous

points,

the

question

is

okay.

How

do

we

indicate

which

arbitrary

target

we

would

like

to

crop

to,

and

then

there

are

two

or

three

options

I

could

think

of.

Number

one

is:

just

give

a

DOM

node

reference,

right,

like

many

other

APIs,

but

the

problem

is

that

this

would

not

work

cross-origin.

D

I

just

apply

that

and

I

capture

to

capture

myself

only

so

that

works

and

the

other

one

I've

already

gone

over,

and

that

is

that

we're

not

talking

about

element

level

capture,

that's

another

tool,

it's

a

useful

tool

and

we

probably

want

to

support

both

eventually,

but

I'm

only

talking

about

the

current

tool

right

now

so

and

then

the

last

thing

that

I

thought

that

we

could

discuss

before

I

ask

for

feedback

next

slide.

Please

is

how

do

we,

assuming

that

we

use

an

id

we,

whichever

id

we

end

up

using?

D

Do

we

want

to

say?

Okay

as

soon

as

capture

starts,

we

say

crop

to

this,

in

which

case

we

don't

need

to

have

some

kind

of

barrier

to

say

like

okay

and

now

cropping

started.

You

just

know

that

cropping

was

there

all

along.

So

you

start

cropping

and

you

can

use

the

very

first

frame,

but

then

it

might

be

a

bit

more

difficult

to

say.

D

E

All

right,

so

I

have

some

feedback

on

the

you're

totally

right

that

the

security

properties

are

for

the

entire

page,

but

I

don't

necessarily

see

that

as

a

problem,

and

I

definitely

don't

see

it

as

a

problem

with

only

one

of

these

solutions,

because

the

only

difference

I

see

here

is

whether

you

postMessage

an

ID

or

you

postMessage

the

track.

It

doesn't

really

seem.

E

I

mean

the

same

security

properties

apply

and

as

far

as

a

security

issue,

I

think

it's

already

understood

that

an

iframe

will

not

be

able

to

call

this

method

without

having

that

permission

delegated

from

the

top

page.

So

the

top

page

remains

in

control,

and

I

think

we

just

need

to

document

these

security

properties,

and

that's

just

true

with

either

solution

like

just

because

I

captured

to

a

specific

element,

doesn't

give

me

any

security

properties,

because

that

element

can

be

behind.

E

It

can

be

invisible,

can

be

all

kinds

of

things,

and

also

this

idea

that-

and

I

know

we

should

also

say

we

have

agreement,

which

is

good-

that

no

one's

proposing

coordinates,

which

I

love

so

so

at

least

there's

that.

But

I

do

worry

that

an

element

whenever

you

re-layout,

the

element

can

move

and

when

you

define

what

happens

to

the

capture.

E

This

is

why

I

think

it's

better.

I

think

it

would

be

more

conservative

to

tie

it

to

an

iframe,

because

we

already

changed

the

size

of

capture

when

the

window

changes

the

dimensions,

and

I

think

that

makes

sense

for

iframes

as

well.

If

we

go

down

to

individual

elements,

that

seems

a

little

over

specific

to

me.

D

Size

I

want,

and

so

let's

take

a

step

back.

So,

let's

suppose,

for

the

sake

of

argument

that

posting

a

track

or

posting

id

is

the

same.

You

are

still

ending

up

with

a

much

more

restrictive

API.

If

you

say

that

whenever

you

call

getViewportMedia,

you

automatically

only

get

the

iframe.

What

if

I

don't

want

to

get

the

iframe?

What

if

I

want

to

get

the

entire

tab?

D

Yes,

in

which,

but

that

still

assumes

that

there

is

an

iframe

or

top

level

that

is

the

same

size

and

for

some

reason

you

have

to

communicate

with

it.

Even

though,

when

you,

it

could

be

that

you

are

embedding

a

page

that

you

don't

want

to

communicate

with.

Like

you,

you

just

want

to

give

it

the

permission

and

that's

it

like

I'm

just

hosting

a

site

on

Glitch

and

whatever,

and

it's

like

here's

the

application.

I

don't

need

to

know

what

you

are.

D

E

F

D

F

D

I

think

that

what

I

said

was

more

along

the

lines

of

like

let's

provide

only

this

tool,

and

it

also

gives

you

the

functionality

of

the

other

tool.

I

say

what

I

said

was

like:

if,

if

you

have

an

id,

if

you

only

crop

to

an

id,

you

could

always

crop

to

self,

because

you

yourself

have

an

id

that

you

can

crop

to

which

is

kind

of

a

hack.

F

So

maybe

we

could

at

least

here

get

a

consensus

on

that,

and

then

we

can

discuss

exactly

how

we

could

do

that

and,

for

instance,

whether

we

want

to

have

this

very

dynamic

or

whether

it's

at

the

capturing

start

that

we

want

to

say

hey.

This

is

the

element

of

the

iframe,

the

scope

where

we

want

to

capture

and

it

will

not

change,

and

once

we

have

had

those

discussions,

then

we

can

figure

out

maybe

the

particular

API.

F

C

A

F

E

D

But

that

means

that

whenever

I

want

to

iframe

some

VC,

I

need

to

set

up

a

very

elaborate

collaboration

with

it,

where

basically

it

sends

me

a

message

saying:

hey,

I

want

you

to

capture

everything

on

my

behalf.

Oh,

I'm

sorry.

I

want

you

to

capture

the

interesting

part

on

my

behalf

and

send

it

over

where

or

assuming

you

don't

want

to

crop.

Okay,

so

it

might

not

even

be

a

VC,

so

you're

embedding

something

that

you

want

to

allow

to

capture

now.

D

The

outline

of

my

argument

is

this:

first,

I'm

trying

to

say,

hey

getViewportMedia

should

always

get

the

entire

viewport

and

not

a

cropped

version

just

to

itself.

Unless

we

add

something

so

the

default

needs

to

be

get

the

entire

viewport,

and

if

I

convince

you

of

that,

then

it

would

be

easier

to

convince

you

that

okay,

now,

we

need

to

also

add

cropping

on

top

of

that.

So

for

the

first

argument

of

the

entire

viewport

is

what

you

should

get

by

default

from

getViewportMedia.

D

I

claim

that

when

you

iframe

something

when

you

embed

something-

and

you

give

it

permission-

that's

not

a

lot

of

work

and

that's

a

reasonable

amount

of

work

to

expect

of

the

application.

But

if

now

you

say,

okay,

but

every

time

you

embed

something

and

give

it

permission,

you

also

need

to

commit

to

set

up

some

kind

of

API

between

you,

a

postMessage-based

API

for,

if

you

ever

want

to

capture,

send

me

this

message

and

I'll

capture

and

send

it

back.

E

A

E

A

D

F

Okay,

so

going

to

another

world

where

data

is

no

longer

it's

encoded,

so

we're

shifting

to

WebRTC

encoded

transform

and

SFrame.

So

there

we

are

talking

about

the

SFrame

transform,

which

is

implementing

the

SFrame

algorithm

natively,

and

SFrame

processing

can

generate

errors

like

you

do

not

have

the

key

ID,

or

the

message

that

you

receive

has

an

error,

like

parsing

fails,

or

you're

decrypting

and

you're

validating

the

authentication

tag,

and

it's

not

correct.

F

So

there

are

a

bunch

of

errors

there

and

since

it's

a

native

transform

it's

good

if

there's

a

hook

provided

so

that

JavaScript

can

register

to

various

events

and

say:

hey,

oh

there's

a

missing

key

id.

Maybe

I

should

do

something

or

oh

there's

a

lot

of

authentication

errors,

so

maybe

there's

a

potential

attack.

What

should

I

do?

Maybe

I

should

drop

the

connection,

so

I

think

it's

useful

to

surface

these

errors

to

the

JavaScript

application.
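
As an illustration of what an application might do with surfaced authentication errors, here is a minimal sketch of one possible policy. Everything in it is an assumption made for illustration: the `AuthFailureMonitor` class, the threshold of 3 failures, and the 1000 ms window do not come from the meeting or from any specification. It counts failures in a sliding time window and signals when the application may want to react, for example by dropping the connection.

```typescript
// Hypothetical policy helper: counts authentication failures that occurred
// within the last `windowMs` milliseconds.
class AuthFailureMonitor {
  private timestamps: number[] = [];
  private readonly maxFailures: number;
  private readonly windowMs: number;

  constructor(maxFailures: number, windowMs: number) {
    this.maxFailures = maxFailures;
    this.windowMs = windowMs;
  }

  // Record one failure observed at time `nowMs`.
  // Returns true when more than `maxFailures` fall inside the window.
  recordFailure(nowMs: number): boolean {
    this.timestamps.push(nowMs);
    // Forget failures that have fallen out of the window.
    this.timestamps = this.timestamps.filter((t) => nowMs - t < this.windowMs);
    return this.timestamps.length > this.maxFailures;
  }
}

// Tolerate up to 3 failures per second (assumed numbers).
const monitor = new AuthFailureMonitor(3, 1000);

// Four failures in quick succession, then one isolated failure much later.
const decisions = [0, 100, 200, 300, 5000].map((t) => monitor.recordFailure(t));
```

Only the fourth failure of the burst trips the threshold; the isolated failure at 5000 ms does not. In an application, a handler for the transform's error event would call `recordFailure` with the current time.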

F

Here's

another

question,

which

is

whether

the

native

transform

by

itself

should

have

a

default

behavior

in

case,

for

instance,

of

authentication

error

for

now,

there's

no

default

behavior,

so

it

will

continue

working

and

it's

up

to

the

JavaScript

to

actually

do

something,

but

that's

something

we

could

continue

discussing

in

the

future.

So

the

proposal

yeah.

So

the

question

that

we

are

trying

to

solve

here

is

whether

we

should

surface

to

JavaScript

these

errors

and

how

we

could

do

so.

F

So

the

proposal

is

to

expose

error

event

handlers

on

the

SFrame

transform

object.

These

events

can

be

used

if

the

SFrame

transform

is

used

standalone,

so

you're

assigning

the

SFrame

transform

to

the

sender.transform,

for

instance,

and

in

case

well

there

it

would

probably

be

receiver.transform,

but

anyway,

it's

the

same

so

in

case

you're

missing

a

key

ID,

then

you

do

some

javascript

that

will

handle

the

unknown

key.

So

probably,

in

that

case

it

will

register

a

new

key

and

processing

will

continue

on

the

right

side.
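
The missing-key flow described above can be sketched with a small stand-in object. This is not the browser API: `MockSFrameTransform`, `SFrameErrorInfo`, the `errorType` strings, and the `keyServer` map are all hypothetical names chosen for this example. The sketch only shows the shape of the idea, namely that the transform reports an unknown key ID, the JavaScript handler registers the key, and later frames are processed normally.

```typescript
// All names below are hypothetical stand-ins, not the real browser API.
type SFrameErrorType = "keyID" | "authentication" | "syntax";

interface SFrameErrorInfo {
  errorType: SFrameErrorType;
  keyID?: number;
}

class MockSFrameTransform {
  private keys = new Map<number, string>();

  // Error hook that the application can assign.
  onerror: ((e: SFrameErrorInfo) => void) | null = null;

  setKey(keyID: number, key: string): void {
    this.keys.set(keyID, key);
  }

  // Pretend to process a frame tagged with `keyID`.
  // Returns true if a key is available, false after reporting an error.
  processFrame(keyID: number): boolean {
    if (!this.keys.has(keyID)) {
      if (this.onerror) {
        this.onerror({ errorType: "keyID", keyID });
      }
      return false;
    }
    return true;
  }
}

const transform = new MockSFrameTransform();

// Stand-in for wherever the application obtains keys (assumed).
const keyServer = new Map<number, string>([[7, "key-for-7"]]);

transform.onerror = (e) => {
  if (e.errorType === "keyID" && e.keyID !== undefined) {
    const key = keyServer.get(e.keyID);
    if (key !== undefined) {
      transform.setKey(e.keyID, key); // register the new key
    }
  }
};

const first = transform.processFrame(7);  // unknown key: handler runs
const second = transform.processFrame(7); // key now registered
```

In this mock the first frame is lost and the second succeeds. A real application would most likely fetch the key asynchronously, so several frames could fail before the key is in place.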

F

B

Yeah,

I

have

a

question,

Youenn:

how

does

this

interact

with

NACKing?

So

you

know

you're

say