From YouTube: WebRTC WG meeting 2023-05-16

A

A

A

So today we're going to cover a number of things: use cases, some WebRTC extensions and media capture extensions issues and PRs, and some new work, ICE controller and RTP transport. We also have a bunch of future meetings that are listed below. All right. The slides are up on the wiki and, just a reminder, we're being recorded and the recording will be made public. Do we have a note taker?

B

A

A

I think you all have figured out how things work, but we do run a tight speaker queue, so raise your hand to get into it and lower it to get out of it. We will mute you if you jump the queue, so please don't do that. And tell us your full name before you speak, so we can get it in the minutes. All right. I don't think we'll use polls today, but if we do, you know what it does. All right.

A

So, just about document status. We have to say this every meeting, and sometimes it gets confusing, and we will be talking about use cases. The point is that editor's drafts do not represent consensus, whereas working group drafts do. It's possible to merge PRs that lack consensus if a note is attached, and we'll be dealing with a use case document where that's been done liberally, which is maybe not such a great idea. We'll see.

A

C

I mean, it's been cited recently in new features, so it is something that crops up, and so it has a use. Can it be improved? Well, almost certainly. I think there's quite a lot wrong with it at the moment, and the purpose of these 20 minutes, or whatever it is, is to look for guidance and come to some sort of agreement about how we might improve it and what we think it's for. Next slide, please.

C

It's kind of a random example, but RFC 7478 is really dated. It isn't what we're doing with WebRTC. I mean, it sort of is, but it isn't, and it talks about things that I haven't talked about for 10 years, like telephony terminals, so it's out of date in some ways. And then, if you look at the NV use cases document as well, it talks about things that aren't actually use cases. Funny hats isn't, in my view, a use case.

C

It's a feature on an existing use case. But the requirements for funny hats are still valid and useful and, what's more, the resulting API points are fantastically popular and useful way beyond the hats, or even beyond video conferencing. There are users outside video conferencing. So that tells me that maybe there's something wrong with the way that we're treating this document. And it happens the other way around, too.

C

Like

section

3.9

and

we'll

talk

about

the

status

of

the

rest

of

the

document

in

a

minute

I,

it's

they've

got

no

consensus,

but

it's

already

been

done

by

wish

and

whip

effectively

and

so

like

we,

we

find

ourselves

in

a

weird

position

of

like

this

document

sort

of

almost

like

it's

an

orphan

or

something

I,

don't

know

it's

kind

of

been

overtaken

by

standards

and

events

and

and

things

and

I

and

I

think

that's

a

shame,

because

it's

still

got

a

use

next

slide.

Please

so

I

must

thank

I.

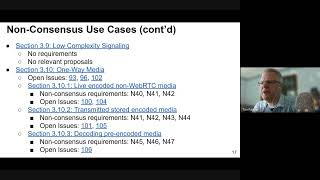

C

Think

Bernard

for

for

filling

out

this.

The

details

of

this

I

don't

intend

to

kind

of

go

through

a

huge

amount

of

this

full

status

in

in

a

lot

of

depth,

but

I

think

it's

worth

like

running

through

it

quickly,

so

that

we've

got

a

sense

of

where

we

are

with

it.

Yeah

we've

changed

the

name

because

Envy

isn't

what

we're

doing

anymore.

This

is

extended

use

cases

and

there

are

nine

consensus

use

cases,

but

some

of

them

have

non-consensus

requirements.

There

are

seven

non-consensus

use

cases

in

there.

C

There are 31 open issues of varying ages, but 18 of them have been open for two years, so it's not in a particularly healthy state from that point of view. And that raises the questions: what should we do with non-consensus use cases that aren't progressing? What should we do with non-consensus requirements? And what do we do with use cases where there are no requirements or proposals?

C

Next slide, please. So, just to dive into a few examples here; I'm probably not going to read the whole thing. We've got multi-party gaming, for which the requirements have consensus and there are some proposals. We've got the mobile calling service, for which there are non-consensus requirements, but the use case has consensus.

C

We have video conferencing with a central server which, to be honest, is the core use case that everyone's using WebRTC for. All the requirements have consensus, and there are relevant proposals. Next slide, please. And then we've got more use cases with consensus, say the file sharing, where there's a proposal, and it's got the required...

C

I mean, I'm not even sure that some of these others are, but it's in a good state from the point of view of the paperwork. And then the machine learning one is interesting, because it effectively has a bunch of the same requirements as funny hats, but it's a different use case. And, Bernard?

A

Yeah, that's a weird one, Tim, because it really begs the question of what these use cases are for. One thing that adds some confusion, and maybe Dom or someone can explain the W3C process: in the IETF we have an applicability statement document and a use case document, and they're not the same. So in the IETF there's no requirement that the use cases cover everything that the standard can do, right? The applicability statement will tell you what the standard is inappropriate for, right?

A

But I don't know whether there is such an idea in the W3C. The reason we have machine learning here, even though it doesn't give you any new requirements, is that people said: well, if you don't put it in, people will think it can't be used for that. But in the IETF that's considered an inappropriate argument, and an argument for another kind of document, which is the applicability statement.

C

A

C

So yeah, here we go. We've got more use cases with consensus, like Don't Pown My Video Conferencing, and there's a sort of semi-theme here, which is that video conferencing tends to get consensus and other things tend not to, though that's probably not statistically accurate. That one has got encoded transform as a proposal, and that's on the go. Next slide.

C

But we have no consensus on the use case, we've got a ton of open issues on it, and the requirements are in a non-consensus state. So we're in the weird situation of almost saying "we don't think you should be doing this" when people are out there doing it, which I think is not a positive thing. Next slide, please. So, stepping back, I thought:

C

C

C

Where have we got to? Maybe to some extent the document is a progress meter, but it is also a yardstick to see whether the changes that people are bringing to us are suitable, whether they fit what we've said we're trying to do. And I think it's also desirable to have a way of decoupling the scenarios from the requirements. You know, it's very easy to dive in with requirements or even the solution.

C

We've been doing this a long time and the world has changed, and I think that we need some kind of forward direction on this. I'm happy in a minute for people to bring up other things to add to this; I don't think it's exclusive at all. It's just what I came up with. Next slide, please.

C

And then the other hat that I could put on, and did, is that of a developer who's building on WebRTC every day, using the APIs, consuming them. What I want from a document like this is confidence that my API usage will continue to be supported, and a guide to what I might be able to use in the near future: what sort of features are coming down the pipe that might be sensible to put in my product roadmap.

C

And a place, potentially, to ask for new API features, a place to say: hey, I've got this, you know, aquarium with turtles in it, and I need this in it. So you have a place to raise that discussion and what features you might need to meet the turtle use case, and a way to know what is possible to do now.

C

Now, I realize that's kind of difficult, but it's a changing API space, and I think a top-level guide that says "these are things that work" is pretty desirable. And again, feel absolutely free in a minute to come up with other things to add to this list, because I don't claim that it's exclusive. Next slide, please.

C

So the reason all this matters was brought home to me very firmly recently, when I was in a Slack chat and this floated past. It wasn't addressed to me; I just happened to be in the chat. It says: "We've got an answer from the WebRTC team. They basically confirmed that they're doing what they're doing intentionally, since they believe the tech is meant for two-way audio conversations."

C

You know, there are other APIs coming along that are filling in the gaps in the other spaces, and I think P2P is the thing that WebRTC uniquely does. I think we should try to bring a little bit of emphasis back to that, which is actually already in RFC 7478. And I think we should take out use cases that are now met by other standards, things that WebTransport or WebCodecs or whatever does.

C

We should be removing the use cases and their associated requirements if they're already done somewhere else. I do think, though, that we need to include use cases that have no requirements but that extend RFC 7478, particularly things like the IoT stuff, which is dear to my heart. It doesn't even crop up in RFC 7478, but it's relevant and interesting and a good use case for this technology, I think. And then, more practically, on a document level:

C

C

So then I think we should remove use cases that don't add new requirements, with the exception of the ones that extend RFC 7478. I think there's a little tension there, and there's a discussion to be had about how that requirement balance goes. I think proposed API changes should probably all include changes to the use case doc.

C

Like, why are we changing the API if there's no use case for it? So I think we need to tie this into the process a little deeper, and that also comes back to what the relationship is between this document and the explainers. I think explainers are very useful, but maybe this document should contain pointers to explainers, so that it becomes much more of a living document.

C

And I think the other thing that we've struggled with in this document is where the input comes from. I think, if we can, we need to find a way of broadening that. I'm happy to use webrtc.nu as a feed-in from developers, if that's thought to be useful, or we could have some other shape that allows us to bring stuff in here. So we now have a queue. Oh, next slide; I think this is the discussion point slide.

C

A

A

I do actually think that the peer-to-peer aspect is important, because it comes up a lot and it's been a confusing point in other use cases and other working groups as well. What I see from developers is that they often disagree with the way other working groups have done the use cases. As an example, take streaming use cases: many of them actually require peer-to-peer operation. Game streaming is a good example. I've talked to game streamers, and it's very popular in WebRTC.

A

In fact, all the major game streaming services use WebRTC, and many use it not just client-server but also peer-to-peer. So what I hear coming up from developers is that if it's peer-to-peer, they're forced to use WebRTC, and a lot of things that other groups think are client-server are actually peer-to-peer. So I think that one is a really good one.

A

Certainly, when you say "met by other standards", I would like to see wide usage, because there are people who claim, for example, "we're the best for game streaming". When I talk to developers, they basically say: nope, I'm not interested in that stuff, I want to use WebRTC. So I think it's not just that the use cases are met by other standards, but that the other standards are actually being used. And then, on what to do if they don't get consensus, I do agree with this.

A

As you said, some of the things have been lying around for two years. Some of that is people asking for stuff, but some of the stuff lying around for a long time is just issues that have not been answered. I think you have a really great point that leaving these things in the document is probably not a great idea. And, you know, you can always have another PR to clean it up.

A

But if it's lying in there with no consensus, and if the requirements and the issues aren't getting fixed, just rip it out, I think. But there is a very big question that I'd like to try to get some guidance on here, which is: are we saying that if there isn't a use case for it, that means it can't be done? Obviously people don't think so. Like you said, Tim, they're doing it anyway, so do they need our blessing?

C

A

D

C

No, totally agree there. But when somebody comes up with a change to the API, say we want to change the API shape in some way, and it removes the ability to do that, then there's no way of saying from the document point of view: hey, but we agreed that this was something we wanted to do.

C

E

Yeah, so one of the things that surprised me in our handling of this document has been the problems we've been having with consensus on use cases. The most recent example I personally encountered was the one-way use cases, where I claim that we have developers who are eager to do them, and the working group says: no, we don't have consensus that these are valid use cases.

E

That seems bizarre to me. And an even worse one is the one that's been hanging around forever, or was kicked out: conferencing with trusted JavaScript. That's what everyone's doing, and we don't have consensus on the use case, so we delete it from the use case document. That's nuts, right? So I have a problem with the distance between the use cases document and the use cases that I need to support in the real world.

C

F

C

G

Yes, so a lot of good points. Thank you, Tim. I definitely agree this needs a cleanup, and I like a lot of the points you're proposing here. One thing I don't really see mentioned is scope. I think we should be clear about the scope for this, and that this is only for this working group. You mentioned removing things that have other standards, and I think it's useful to look at the history of this document.

G

Next Version was supposed to be WebRTC 2.0, and it was an important point in our lives, where we had finished 1.0 and were deciding what we were going to do next. And there wasn't a monolithic 2.0; instead, it was more of an unbundling of WebRTC into other specs. So that's why a lot of use cases are in there.

G

You mentioned WISH: does the fact that WISH exists mean the use case stays in, or that it goes out? I think it's important for the document to serve our process, the W3C process, which is that we're not supposed to document everything for web developers; other websites can do that. I think the purpose of these use cases is to drive our discussions in the working group and on GitHub. So when someone opens an issue, they would say: "Hey, I have a problem solving this."

G

D

I just had a quick point. I think I agree with removing use cases met by other standards when they have wide adoption in the other places. My only thought was that I hope this doesn't mean we end up accidentally siloing, where you can't have peer-to-peer or those other things just because it happened to be in another standard. Do you think there's space for a use case for integration between WebRTC and these other standards, in this kind of light?

H

In terms of scope, I would echo what's been said, which is that this document is for us. We are the main users, and the driver is to add new things or new features. So that's the scope I agree with. However, it's not very successful, and other working groups and other bodies are using explainers, which is a bit better, because you can have the use case.

H

C

I think I like that, and I think the relationship with explainers is probably central to the solution. The question that's still unclear to me is what remains in a use case document. Is it just a headline use case and a pointer to an explainer, and a list of those? I think that's actually possibly a doable thing, but we need to think about what that will be, and I'm happy to have this conversation on the list. Now, Peter, you're back in the queue somehow.

C

So my proposal is to take this to the list. I want input from people, and I'm prepared to put some time into doing some of this, but I want to make sure that we understand what it is that we're trying to achieve. I want to broaden the scope a little more than just formally for the W3C, but maybe we'll discuss that elsewhere.

A

What I'd like to do, Tim, is to continue this discussion in next month's meeting. I will try to create some PRs to address the proposals that were discussed here, and then we can talk about the merit of the individual PRs. But basically, a bunch of removal PRs sounds good for next month. Thank you.

I

Yes, this is a process problem we have, because the WebRTC extensions spec, in order to implement the header extension API, is trying to modify the JSEP RFC, and Mozilla's Eric Rescorla objected to that, which is valid. We are currently in the state that RFC 8829bis, the successor to RFC 8829, is in the RFC Editor's queue, so it's close to publication. Justin Uberti agreed to make a small adjustment to the text, which will hopefully get into that new RFC once it gets published. There's a discussion in progress on the IETF list.

I

E

E

E

I

Okay, the next slide is about the question of when keyframes are generated. WebRTC is very light on that; it doesn't even mention the term keyframe at all, and we have issues from that, because keyframe generation is a side effect of some API calls. For example, setParameters may cause keyframes to be generated when you change the scaleResolutionDownBy factor, but that may not be true in some cases for some codecs.

I

So the proposal to solve that is to allow requesting a keyframe explicitly when you call setParameters. That makes this implicit thing that we have, the implicit ability to cause a keyframe, explicit. In terms of semantics, that is going to be similar to the RTCP FIR message defined in an RFC: at the earliest opportunity, the encoder is asked to generate a keyframe.
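The semantics described above can be sketched with a small mock. This is only an illustration under stated assumptions: `MockSender`, `MockLayerEncoder`, `EncodingUpdate` and the `requestKeyFrame` flag are hypothetical names made up for this example, not the API shape in the PR or in any browser.

```typescript
// Hypothetical model for illustration only; not the real RTCRtpSender API.
type FrameType = "key" | "delta";

interface EncodingUpdate {
  rid: string;
  scaleResolutionDownBy?: number;
  // Hypothetical flag standing in for the explicit keyframe request.
  requestKeyFrame?: boolean;
}

class MockLayerEncoder {
  scaleResolutionDownBy = 1;
  // The first frame of a stream has nothing to reference, so it is a keyframe.
  private keyFramePending = true;

  requestKeyFrame(): void {
    this.keyFramePending = true;
  }

  // A pending request is honored at the earliest opportunity: the next frame.
  encodeNextFrame(): FrameType {
    if (this.keyFramePending) {
      this.keyFramePending = false;
      return "key";
    }
    return "delta";
  }
}

class MockSender {
  private layers: Record<string, MockLayerEncoder> = {};

  constructor(rids: string[]) {
    for (const rid of rids) {
      this.layers[rid] = new MockLayerEncoder();
    }
  }

  setParameters(updates: EncodingUpdate[]): void {
    for (const update of updates) {
      const layer = this.layers[update.rid];
      if (layer === undefined) {
        throw new Error("unknown rid: " + update.rid);
      }
      if (update.scaleResolutionDownBy !== undefined) {
        layer.scaleResolutionDownBy = update.scaleResolutionDownBy;
      }
      // In this model only the explicit flag triggers a keyframe.
      if (update.requestKeyFrame === true) {
        layer.requestKeyFrame();
      }
    }
  }

  encodeNextFrame(rid: string): FrameType {
    const layer = this.layers[rid];
    if (layer === undefined) {
      throw new Error("unknown rid: " + rid);
    }
    return layer.encodeNextFrame();
  }
}
```

In this model, changing a parameter alone never produces a keyframe, and the application opts in per layer. Real encoders may still emit keyframes for their own reasons, which the mock ignores.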

I

B

G

Oh, thank you, sorry about that. So, looking at the PR, I see it's a request-keyframe boolean, which is a bit odd in that it's not really a parameter, is it? So why not just make it a method? I guess this is bikeshedding, though. I don't necessarily have a problem with the proposal, other than that I understand there already is an API for this, so I'll let others talk to that. But yeah, it seems like...

G

I

G

G

C

I

E

E

I don't like the concept of having setParameters set something that's not a parameter. I would rather, following the tradition of setLocalDescription, which suddenly generated candidates, say: okay, we'll make a new call, something like "set parameters and send keyframes", which guarantees that they do so asynchronously. But setParameters should, in my opinion... I mean, getParameters should return...

K

It might be a better compromise to make it work better with getParameters. That would require some read validation, but I don't think that's necessarily a really hard thing to do. One thing that I see that might be an issue is that the symmetric API on a receiver would be very different, because we don't have setParameters on receivers. So do you have any idea about what we should do there, if we wanted to add an API that does similar things?

I

I

K

L

G

Yeah, so quickly, I forgot one thing to ask. Just because the browser's not doing the right thing doesn't mean we need to jump to a JS API. A JS API should only be needed if the browser can't figure this out. So I think we should first look at whether, maybe, when changing active, the browser should send a keyframe, and we could standardize that instead of relying on applications to, you know, fix my browser. Thanks.

H

Yeah, two things. The first thing is that in encoded transform there's a sender/receiver API and there's a transform API, and they are different from each other, so I guess they partly solve different issues. I guess this one is mostly targeting the sender API, and it makes sense to me that it's synchronized with setParameters.

H

So we could bikeshed there, I guess. If the user agent's default behavior is not good, then maybe it could be a policy that applies throughout the connection, if you do not want to provide it per setParameters call. But I guess the transform API itself would still remain, or are you suggesting to remove it?

J

Next, thanks. Yeah, I think this makes sense to me. It seems like there are two reasons you're going to want to deactivate the top layer: either the person left the call, or they're switching down. So it's like two semantically different setParameters calls. But I feel like most of the time, almost all the time, you're going to want to send a keyframe when you're removing a higher layer, except for that last-subscriber-leaving-the-call case.

J

And I think, by extension, this means that when the sender throttles an upper layer due to bandwidth constraints, we should also send a keyframe in that scenario, although that doesn't involve an API change. Since most of the time we could almost always send a keyframe, this might not even require an API change.

I

F

G

L

Sure, can I go? I'll try to be fast. We all know and love scaleResolutionDownBy. It lets you do something like this: you capture a 720p track, you apply some expensive video effects, and then you send simulcast as follows, two-layer simulcast. But if the server tells you that 720p is not needed, you can deactivate the top layer and you'll just send the 360p; you just scale the resolution down by two. The question is now: why are we applying expensive video effects on our 720p track if we're only sending 360p?

L

L

You can do this, but it's racy. If you change the size, you will probably send the wrong resolution on the wrong layer, and you will generate keyframes unnecessarily. You can try to work around this and deactivate layers to avoid the jumping up and down, but that will also generate more keyframes and, going by what people talked about on the previous slides, maybe even more keyframes.

L

So how about we add a scaleResolutionDownTo API, where you specify the resolution you want to send rather than a relative term? If you say "I want to send 360", then you'll send 360, and it doesn't matter if the track changes size, because the encoder just sends 360. There exists a similar API in libwebrtc, so maybe we can experiment. Thoughts, interest? Peter.
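The difference between the relative and the absolute form can be sketched with two pure functions. This is a minimal sketch, not the proposed API: `scaleDownBy`, `scaleDownTo` and the `Size` type are hypothetical helpers, and the "fit inside the target, keep the aspect ratio, never upscale" rule is an assumption made for the example.

```typescript
interface Size {
  width: number;
  height: number;
}

// Relative scaling: the output depends on whatever size the input frame has.
function scaleDownBy(input: Size, factor: number): Size {
  return {
    width: Math.floor(input.width / factor),
    height: Math.floor(input.height / factor),
  };
}

// Absolute target (assumed semantics): fit inside the target box,
// preserve the aspect ratio, and never upscale.
function scaleDownTo(input: Size, target: Size): Size {
  const scale = Math.min(
    1,
    target.width / input.width,
    target.height / input.height,
  );
  return {
    width: Math.round(input.width * scale),
    height: Math.round(input.height * scale),
  };
}

const target: Size = { width: 640, height: 360 };

// The track is captured at 720p and later reconfigured to 360p.
const before: Size = { width: 1280, height: 720 };
const after: Size = { width: 640, height: 360 };

// With a factor of 2, the sent size follows the input: 640x360, then 320x180.
const relativeBefore = scaleDownBy(before, 2);
const relativeAfter = scaleDownBy(after, 2);

// With an absolute target, both inputs are sent as 640x360.
const absoluteBefore = scaleDownTo(before, target);
const absoluteAfter = scaleDownTo(after, target);
```

With the relative factor, the application has to change the track size and the factor together, which is where the race comes from. With an absolute target the encoder output stays put while the input changes. Under these assumed semantics, a portrait input measured against a landscape target shrinks far more than a landscape one, so the shape of the target value matters.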

F

F

L

L

Yeah, so I think there are probably more discussions to be had on a potential pull request, like portrait mode and stuff like that as well. But the general idea is: do whatever we already do with scaleResolutionDownBy, but remove this dependency on the input frame, to avoid the race. Gotcha.

H

L

K

I think an API like this would be great. I don't really agree with a single value for scaleResolutionDownTo. As mentioned in other places, there's a problem of orientation: if you have a portrait or landscape capture, that value will have a very different meaning, so we might want to talk about the API shape there.

K

There's also something that Peter said, which is: what if you feed frames that are smaller, when lots of the requested resolutions are bigger? Maybe we want an extra mechanism as well, to be able to stop sending a layer if the frame size is too small, to prevent having many different simulcast layers that are the same size, if I ask for 1080p, 720 and 360 and I feed 360.

L

H

L

L

L

The original issue filed talked about how calling this excessively means an excessive number of objects created on the GC pile, which is not ideal for performance. There's been a lot of talk about whether the API should be asynchronous or synchronous, and whether it should return a dictionary, i.e. a copy, or an interface, i.e. a reference to the same object. So there are two main API shapes that have been discussed. There are more, too, but I'm trying to keep it simple.

L

We have await track.getStats() returning a dictionary, or track.audioStats or track.videoStats returning an interface. Next slide. So, first of all, the question is how big of a problem garbage collection is. The intended use case is to poll stats once per second per track. MediaStreamTrack is not accessible from real-time threads, so arguably, should real-time performance be a requirement or not? And Jan-Ivar mentioned GC nurseries, for the case where you do call this a lot.

L

Yeah

because

I'll

try

to

Encompass

the

the

counters

to

what

you

could

do,

but

anyway,

so

we're

only

talking

about

local

capture

tracks.

So

it's

not

a

lot

of

tasks

130

per

seconds,

if

you

have

an

audio

and

video

track,

but

what

I

want

to

at

least

people

to

have

in

their

back

of

their

their

mind,

is

because,

typically

when

it,

when

we

add

stats

eventually,

someone

asks

for

more

stats.

So

next

slide

humor

me:

will

you

what,

if

we

start

to

have

what?

L

If

someone

wants

stats

that

are

also

available

on

remote

tracks,

for

a

video

conferencing

use

case,

you

could

end

up

with,

you

know,

do

some

math

here,

you

could

have

2,000-plus

tasks

per

second,

and

what

I'm

kind

of

fearing,

whether

that's

valid

or

invalid

I'll

let

you

decide,

what

I'm

fearing

is

that

we're

in

a

situation

where

we

need

to

do

IPC

in

order

to

grab

stats,

so

fear

next

slide.

L

So

the

two

main

things

I'm

concerned

about.

Yes,

one

is

the

excessive

task

posting

for

apps

that

are

only

occasionally

interested

in

stats,

and

the

other

thing

I'm

concerned

about

is,

if

there's

cross-process

metric

collection

and

unnecessary

IPC.

So

next

slide,

it's

been

pointed

out

that

the

first

problem

can

be

avoided

with

the

mutex

and

that's

true

as

long

as

you

make

sure

to

cache

the

stats

and

clear

it

in

the

next

task

execution

cycle.

L

For

the

second

problem,

next

slide,

it

has

been

proposed

that

you

can

piggyback

the

metrics

update,

because

it's

only

a

few

bytes

of

counters;

you

can

piggyback

on

other

IPC

messages

and

what

I'm

not

very

comfortable

with

in

that

solution

is

that

it

assumes

IPC

happens

anyway.

L

L

But

at

the

end

of

the

day

this

is

what

we're

talking

about,

or

some

version

of

it.

Open

to

suggestions,

but

we

need

to

decide:

synchronous

or

asynchronous,

interface

or

dictionary.

I

don't

think

there's

a

huge

difference

personally,

but

you

know

your

mileage

may

vary

and

I

would

like

input

on

if

my

concerns

are

valid

or

invalid.

And

on

the

next

slide,

this

is

the

last

slide

before

we

get

to

the

queue.

L

I

have

three

proposals:

proposal

A,

promise

getStats;

proposal

B,

interface;

and

proposal

C

is,

you

know,

let's

make

everyone

happy:

we

have

a

synchronous

API,

but

we

just

say

that

it

should

be

the

latest

snapshot.

So

you

could

do

batch

updates

if

performance

ever

becomes

a

problem.

Yes,

let's

go

to

the

queue.

Youenn.
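
The three proposals can be compared side by side in a small TypeScript sketch. Everything here is illustrative: `TrackSketch`, `FrameCounters`, `latestStats`, `publishSnapshot` and the counter names are assumptions made for this example, not names from any specification; only `getStats` and `videoStats` echo the shapes mentioned in the discussion.

```typescript
// Illustrative counters; the field names are assumptions for this sketch.
interface FrameCounters {
  deliveredFrames: number;
  totalFrames: number;
}

// Stand-in for a local capture track; this is not the real MediaStreamTrack.
class TrackSketch {
  // Counters updated in place on every captured frame.
  private live: FrameCounters = { deliveredFrames: 0, totalFrames: 0 };
  // Copy exposed by proposal C; refreshed only when publishSnapshot() runs.
  private snapshot: FrameCounters = { deliveredFrames: 0, totalFrames: 0 };

  onFrame(delivered: boolean): void {
    this.live.totalFrames += 1;
    if (delivered) this.live.deliveredFrames += 1;
  }

  // Proposal A: asynchronous; resolves with a copy (dictionary-like).
  getStats(): Promise<FrameCounters> {
    return Promise.resolve({ ...this.live });
  }

  // Proposal B: synchronous; always the same object (interface-like).
  get videoStats(): Readonly<FrameCounters> {
    return this.live;
  }

  // Proposal C: synchronous, but only promises "the latest snapshot".
  get latestStats(): Readonly<FrameCounters> {
    return this.snapshot;
  }

  // The implementation decides when to batch-update the snapshot.
  publishSnapshot(): void {
    this.snapshot = { ...this.live };
  }
}
```

With proposal A, a once-per-second poll would await `getStats()` inside a timer callback; with B or C it is a plain property read, and C leaves the update frequency to the implementation.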

H

H

I

don't

think

that

this

use

case

here

is

targeted

at

that,

because

the

name

of

the

API

is

getStats,

and

stats

are

like,

yeah,

from

time

to

time,

like

every

second,

you

want

to

get

some

results,

but

you

don't

want

to

get

them

like

every

event

loop

task.

So

that's

why

I

would

tend

to

go

with

a

promise-based

getStats,

and

if,

in

the

future

we

need

like

real-time

information

like

that,

then

we

will

be

free

to

add

additional

APIs.

H

And

that's

something

that

is

already

being

done

in

webrtc-pc,

for

instance,

you

can

get

stats

about

frames

being

received,

and

you

can

also

have

requestVideoFrameCallback

or

mediacapture-transform

if

you

want

to

know

precisely

at

what

time

the

video

frame

is

being

received,

and

these

are

two

different

APIs,

and

this

is

fine.

So,

proposal

A.

L

G

G

But

at

the

same

point

you

mentioned

queuing

a

lot

of

tasks,

for

example,

and

using

a

mutex.

You

also

don't

have

to

use

a

mutex.

You

can

also

do

lockless

implementations

of

this,

so

there

are

ways

to

implement

this

that

don't

require

locking,

and

in

fact

the

W3C

design

guide

says

we

should

not

lock

in

a

getter,

so

lockless

would

be

one

option.

I

think

it

comes

down

to

use

cases

if

the

use

case

really

is

just.

L

Is

the

ergonomics,

I

don't

see

a

big

difference

whether

you

put

the

await

keyword

in

front

of

it

or

not,

and

secondly,

like

I

guess

my

concern

is

one

month

from

now

someone

says:

oh,

what

about

these

other

metrics

and

then

we've

painted

ourselves

into

a

corner,

which,

again

with

proposal

C,

you

could

probably

get

around,

but

I

just

don't

see

why.

Yes,

Youenn.

H

Yeah

also

to

mention

that

with

a

promise-based

approach,

it's

very

clear

that

you

call

the

API

and

you

will

gather

the

result.

If

you

have

a

synchronous

API,

then

you

have

to

identify

what

is

the

frequency

at

which

it

will

be

updated,

and,

for

instance,

in

the

media

element

currentTime,

the

use

case

is

that

it

will

be

queried

to

do

synchronization

with

other

data,

for

instance,

so

you

have

to

update

it

very

frequently,

but

in

an

implementation

like

that,

maybe

Safari

would

say.

H

Oh

the

use

case

is

that

so

we

will

be

very

lazy

and

do

it

like

once

every

second

or

so,

and

other

browsers

will

not

do

that

and

then

you

start

to

have

like

different

behaviors

and

then

the

spec

will

have

to

say:

okay,

you

have

to

update

it

at

that

kind

of

frequency

and

so

on,

and

it

starts

to

not

be

great,

I

think.

So

that's

why,

with

a

promise-based

approach,

the

contract

is

a

bit

clearer

as

well

and

fits

the

use

case.

G

So

I'd

just

like

to

quote

Oprah

to

say

we

should

make

decisions

out

of

love,

not

out

of

fear,

because

we

can

all

play

the

fear

game

like:

what

if

in

the

future,

someone

will

actually

need

to

read

these

stats

to

do

real-time

audio

processing,

and

then

we

would

have

wished

we

had

a

you

know

an

attribute,

API,

so

well.

I

think

we

should.

G

J

H

Another

question

would

be

if

we

have

to

design

it

as

a

real-time

API,

would

we

feel

confident

that

the

synchronous

API

will

be

the

best

choice

or

not,

and

I

don't

know

so

we

would

have

to

think

about

it

precisely

without

having

the

exact

real-time

use

cases

that

we

are

trying

to

think

of.

So

that's

why

it's

also

fuzzy

to

try

to

address

these

use

cases

while

we

do

not

really

have

the

precise

information.

G

G

L

G

E

G

M

Okay

and

I

would

like

to

continue

our

discussion

on

the

ICE

controller/WebRTC

ICE

APIs.

So

to

recap

the

discussion

thus

far:

at

the

previous

interim

meeting,

Peter

and

I

proposed

a

set

of

improvements

to

the

API

that

will

incrementally

allow

applications

to

control

ICE

to

an

increasingly

greater

extent.

M

There

was

a

positive,

possibly

cautiously

positive

response

to

it,

so

I've

gone

ahead

and

I

would

like

to

start

the

discussion

on

the

first

of

those

set

of

improvements,

so

I've

written

up

an

issue

on

the

webrtc-extensions

repo.

I'll

try

and

keep

this

brief,

so

we

have

enough

time

for

discussion,

but

the

first

increment

is

basically

to

prevent

the

removal

of

a

candidate

pair.

B

M

So

there's

a

couple

of

different

approaches

to

this.

We've

talked

about

these

a

bit

before,

but

to

summarize,

we

can

do

a

cancelable

event,

so

this

is

when

the

ICE

agent

has

decided

to

remove

a

candidate

pair,

but

before

that

removal

actually

happens,

there

will

be

an

event

that

the

application

can

cancel

and

prevent

the

removal

from

taking

place,

and

the

other

is

the

ICE

agent

continues

to

do

what

it

does.

M

The

application

just

provides

inputs

to

the

ICE

agent

by

setting

certain

attributes

on

a

candidate

pair.

And

to

reiterate,

in

either

of

those

cases,

the

existing

behavior

does

not

change.

It

is

only

when

the

application

takes

some

steps

to

change

the

behavior

that

anything

different

happens.

So

on

the

next

slide,

going

a

bit

more

into

cancelable

events.

M

So

yeah

the

basic

idea

is

the

ICE

agent

when

it

decides

that

it

wants

to

remove

a

candidate

pair,

it

stops,

it

pauses

that

action

temporarily

and

lets

the

application

know

that

it's

about

to

remove

a

candidate

pair,

and

then

it

waits

for

that

event

to

finish

dispatch.

If

the

application

calls

preventDefault()

on

the

event,

the

ICE

agent

does

not

remove

that

candidate

pair.

It

can

come

back

and

propose

removing

that

pair

at

a

later

time.

That's

completely

fine.

M

This

is

the

way

other

events

work

in

the

case

of

touch

or

form

submit

events.

So

this

is

an

established

pattern

in

some

use

cases.

On

the

left

is

what

the

API

proposal

looks

like:

on

RTCIceTransport

there's

a

new

event

that

can

be

fired

when

the

candidate

removal

is

being

proposed,

and

then

on

the

right

is

how

an

application

would

use

it:

in

the

event

listener,

call

preventDefault().
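
The cancelable-event flow described above can be sketched in TypeScript. This is a minimal model under stated assumptions, not the proposed API: `IceTransportSketch`, `PairRemovalEventSketch`, `addRemovalListener` and `proposeRemoval` are hypothetical names, and the event class only mimics the `preventDefault()` pattern of DOM events rather than using the real `Event` type.

```typescript
// Hypothetical names throughout; none of them come from a published spec.
interface CandidatePairSketch {
  local: string;
  remote: string;
}

// Mimics a cancelable DOM event: listeners may call preventDefault().
class PairRemovalEventSketch {
  defaultPrevented = false;
  constructor(readonly pair: CandidatePairSketch) {}
  preventDefault(): void {
    this.defaultPrevented = true;
  }
}

type RemovalListener = (event: PairRemovalEventSketch) => void;

class IceTransportSketch {
  pairs: CandidatePairSketch[] = [];
  private listeners: RemovalListener[] = [];

  addRemovalListener(listener: RemovalListener): void {
    this.listeners.push(listener);
  }

  // Called when the agent has decided to remove a pair.
  // Returns true if the pair was removed, false if the application vetoed it.
  proposeRemoval(pair: CandidatePairSketch): boolean {
    const event = new PairRemovalEventSketch(pair);
    // The agent pauses the removal until every listener has run.
    for (const listener of this.listeners) listener(event);
    if (event.defaultPrevented) return false; // kept; may be proposed again later
    this.pairs = this.pairs.filter((p) => p !== pair);
    return true;
  }
}
```

An application that wants to keep, say, its relay pairs alive would register a listener that calls `preventDefault()` only for those pairs; with no listener registered, removal proceeds exactly as before.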

M

So

on

the

left

again

is

the

API

proposal,

so

RTCIceTransport

can

have

an

event

that

is

fired

when

a

candidate

pair

is

added,

and

the

application

can

set

the

removable

flag

on

that

to

true

or

false.

By

default

it's

true,

and

if

this

is

false,

then

the

ICE

agent

does

not

remove

the

candidate

pair.

The

flag

could

be

set

either

in

the

event

listener,

or

it

could

be

set

at

a

later

time

as

well.

We

can

discuss

a

bit

towards

the

end.
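
For comparison, the attribute-based alternative can be sketched the same way. Again this is only a model under assumptions: `FlagTransportSketch`, `RemovablePairSketch`, `onPairAdded`, `addPair` and `tryRemove` are hypothetical names; the only details taken from the discussion are a `removable` flag that defaults to true and an event fired when a pair is added.

```typescript
// Hypothetical stand-in for a candidate pair carrying the proposed flag.
class RemovablePairSketch {
  // Per the discussion, the flag defaults to true.
  removable = true;
  constructor(readonly id: string) {}
}

type PairAddedListener = (pair: RemovablePairSketch) => void;

class FlagTransportSketch {
  pairs: RemovablePairSketch[] = [];
  private listeners: PairAddedListener[] = [];

  onPairAdded(listener: PairAddedListener): void {
    this.listeners.push(listener);
  }

  // Adds a pair and notifies listeners, which may set the flag right away.
  addPair(id: string): RemovablePairSketch {
    const pair = new RemovablePairSketch(id);
    this.pairs.push(pair);
    for (const listener of this.listeners) listener(pair);
    return pair;
  }

  // The agent only consults the flag; it never waits on the application.
  tryRemove(pair: RemovablePairSketch): boolean {
    if (!pair.removable) return false;
    this.pairs = this.pairs.filter((p) => p !== pair);
    return true;
  }
}
```

The design difference from the cancelable event is that the agent never pauses: the application states its preference ahead of time, in the listener or at any later point, and the agent reads it whenever it considers a removal.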

M

Since

the

last

ice