From YouTube: W3C WEBRTC meeting 2022-10-18

B

So today we're going to cover encoded transform, media capture extensions, webrtc-pc, WebRTC extensions and capture handle. We have two meetings before the end of the year: one is November 15th and the other is December 6th, so please keep those in mind. I guess that's a total of only four hours together other than this meeting, so if we need other meetings, it's probably a good time to think that through and plan it.

B

We operate under the W3C Code of Ethics and Professional Conduct. We're all passionate about improving WebRTC, but let's try to keep it cordial and professional. We'll be managing the queue; I guess the speakers, or Harald, would you do that? Type +q and -q in the Google Meet chat if you want to get into or out of the speaker queue, and please use headphones or an echo-cancelling speakerphone. You'll have to handle your own microphone.

B

Obviously, wait until you get called on, and give us your full name. I'm not sure we'll use polls, but if we do, we can gauge a sense of the room. Just a note about document status. There have been some misunderstandings: just because something is in the repo doesn't mean it's been adopted. That's a separate thing; we use a CfA (call for adoption) process. Editor's drafts may not represent consensus, but the working group drafts do. All right.

B

So here's the agenda for today. We have encoded transform with Harald; then media capture extensions, briefly, with Henrik; webrtc-pc items carried over from TPAC, which Jan-Ivar will handle; Florent will do some more WebRTC extensions issues; and then we'll have capture handle. We'll try to manage the time so that we can give everyone their allocation. All right, so Harald, you have the floor.

A

We did manage to assign AIs (action items) for, I think, explaining the use cases and architectural descriptions, none of which have been executed on, I think. But there are a number of other issues that were in the [unclear], so in the interest of making progress on all issues, let's take the other ones here. Next.

A

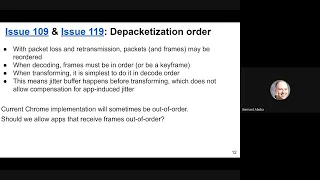

[The issue] is that packets don't arrive in order on the network. Sometimes they're lost, sometimes we ask for retransmissions, and that means that some frames might turn up at the API before frames that should have come earlier. Decoders want frames in order because they depend on each other, so there's a reordering step in front of the decoder. And when you transform things, it's of course simplest

A

if you can get them in decode order, because that makes things kind of obvious. But this means that you have to have a jitter buffer in front of the transform, and that means again that if your transform introduces jitter, there's nothing that compensates for that afterwards. The current Chrome implementation has the jitter buffer after the transform, which means that we sometimes get frames out of order.

B

Because certainly, you know, WHATWG streams can handle frames that are out of order. I assume they'll be reassembled, they'll just be out of order; is that the idea? They'll be complete frames, they're just not in the right order. Right. Yeah, I mean, you could handle the jitter buffer, I guess, as kind of a transform stream that you could offer to the app. Is that what you're thinking?

A

We can say frames arrive in whatever order they arrive in. We can say the browser must reorder frames so that they arrive in order, including waiting for the lost frame, which doesn't sound good. Or we could have a flag that lets the app control which of the first two alternatives it wants.

F

...cases where having out of order would be a benefit. We know that out of order is a potential footgun. If we see use cases where out of order would be good to have, then we should try to find a solution there. But if we do not have use cases for out of order, given we know it's a footgun and web developers will not account for it, we should stick with in order, like we have currently in the spec.

C

Yeah, I guess instead of picking one or the other, it could be always in order, but with some kind of developer-configurable timeout, after which you simply assume the frame is lost. That way the developer has control over how much delay they might introduce, without having to re-implement the reordering all the time either.
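
A minimal sketch of the "always in order, but with a developer-configurable timeout" idea described above. Everything here is hypothetical and for illustration only: `OrderedReleaseBuffer`, `SeqFrame`, `seq` and `timeoutMs` are invented names, not encoded-transform API, and sequence numbers are assumed to start at 0 without wraparound.

```typescript
// Hypothetical types for illustration; not part of any WebRTC specification.
interface SeqFrame {
  seq: number; // position in decode order (assumed to start at 0, no wraparound)
  payload: string;
}

class OrderedReleaseBuffer {
  private pending: SeqFrame[] = [];
  private nextSeq = 0;
  private gapSinceMs: number | null = null;
  private timeoutMs: number;

  constructor(timeoutMs: number) {
    this.timeoutMs = timeoutMs;
  }

  // Buffer a frame, then return every frame that may now be released in order.
  push(frame: SeqFrame, nowMs: number): SeqFrame[] {
    const isLate = frame.seq < this.nextSeq;
    const isDuplicate = this.pending.some((f) => f.seq === frame.seq);
    if (!isLate && !isDuplicate) {
      this.pending.push(frame);
      this.pending.sort((a, b) => a.seq - b.seq);
    }
    return this.drain(nowMs);
  }

  // Release in-order frames; once a gap has lasted timeoutMs, assume the
  // missing frames are lost and resume from the oldest buffered frame.
  drain(nowMs: number): SeqFrame[] {
    const released: SeqFrame[] = [];
    while (this.pending.length > 0) {
      const head = this.pending[0];
      if (head === undefined) break;
      if (head.seq === this.nextSeq) {
        this.pending.shift();
        released.push(head);
        this.nextSeq += 1;
        this.gapSinceMs = null;
        continue;
      }
      if (this.gapSinceMs === null) this.gapSinceMs = nowMs;
      if (nowMs - this.gapSinceMs < this.timeoutMs) break;
      this.nextSeq = head.seq; // give up on the missing frames
      this.gapSinceMs = null;
    }
    return released;
  }
}
```

With a 50 ms timeout, frames arriving as 0, 2, 1 are released as 0, then 1 and 2 together; if frame 3 never arrives, a buffered frame 4 is released once the gap has lasted 50 ms. The timer here starts when the gap is first observed, which is only one of several possible choices.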

F

It might be quite difficult to have both options, so we should try to just have one and not two, I think. In general, the potential issue might be if the transform is sometimes taking a few milliseconds and sometimes taking a long time, and then it's true that a jitter buffer afterwards might be beneficial. But in practice, for what we are thinking of, like decryption, or getting some metadata and then passing the data to the decoders and so on, it should be fairly stable and fairly linear.

F

Maybe [unclear]. So currently the Chrome and Safari implementations, since they have the same back end, are out of order. So the question is: we have implementations that are not matching the specification, so is Chrome planning to switch to in order or not? That's also a good question, because if...

H

Yes, I just wanted to make the point that unless the transform has side effects, I don't see why it would matter much. We're talking about taking too long if we have a jitter buffer ahead of time, but, I mean, if you're just decoding, it shouldn't really matter, unless there are transform use cases that are time dependent in other ways, where maybe it's rerouting the information somewhere else or something. So use cases would definitely be appreciated.

I

So the first proposal agrees with the consensus at TPAC that the return value should be empty, but it also allows the application to pass any subset of the RIDs into generateKeyFrame, which, if you want key frames for just two layers, avoids splitting that up into two calls from the main thread to the encoder.

I

The use case is very similar to the one-ended transform that you talked about at TPAC. We have a custom decoder that relies on the RTP sequence number to detect losses in the audio. For audio, it's relatively easy to add that to incoming frames; for video it's much more complicated, and it is more complicated for outgoing frames as well.

F

So getting back to the previous issue of in order or out of order, this would expose that. If people start to use the sequence number to say, hey, this packet has been lost, and we are not doing in order, then we might receive it later on and they might be confused and so on. I think for this particular issue it's fine to expose it, but the more we expose that kind of stuff, the more in order might be better.
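
To illustrate the concern, here is a small sketch of loss detection based on 16-bit RTP sequence numbers, assuming packets may be delivered out of order. `seqDelta` and `SeqLossTracker` are hypothetical helpers written for this example, not part of any WebRTC API; the point is that a packet first counted as missing may still turn up later.

```typescript
// Signed distance from `from` to `to` in 16-bit sequence space (-32768..32767).
function seqDelta(from: number, to: number): number {
  const d = (to - from) & 0xffff;
  return d >= 0x8000 ? d - 0x10000 : d;
}

type Arrival = "first" | "in-order" | "gap" | "late";

// Hypothetical tracker: remembers which sequence numbers are presumed lost.
class SeqLossTracker {
  private highest: number | null = null;
  private missing: number[] = [];

  onPacket(seq: number): Arrival {
    const highest = this.highest;
    if (highest === null) {
      this.highest = seq;
      return "first";
    }
    const delta = seqDelta(highest, seq);
    if (delta <= 0) {
      // Not newer than the newest packet seen: reordered, retransmitted or
      // duplicated. If it was presumed lost, it is no longer missing.
      this.missing = this.missing.filter((m) => m !== seq);
      return "late";
    }
    // Everything skipped between the previous highest and this packet is
    // presumed lost for now.
    for (let i = 1; i < delta; i++) {
      this.missing.push((highest + i) & 0xffff);
    }
    this.highest = seq;
    return delta === 1 ? "in-order" : "gap";
  }

  presumedLost(): number[] {
    return this.missing.slice();
  }
}
```

Feeding it 65534, 65535, 1 reports a gap and lists 0 as presumed lost; when 0 then arrives it is classified as late and removed from the list, which is exactly the case an application has to account for if delivery is not in order.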

D

All right, let's talk about track frame rates. With track.getSettings().frameRate you can tell the configured frame rate, but you can't tell the actual frame rate. And, well, what is the actual frame rate? That can mean different things depending on where in the pipeline you measure it. The camera may produce fewer frames because of the lighting conditions, where the frames are never even created, or a frame may be dropped prior to being delivered to the sink.

D

For example, if you have performance issues, or it can be dropped later. But I think all frame counters are of interest: what was produced, and what I call emitted from the track, if we want both the camera frame rate and we want to know about frame drops. There are some APIs already that measure frame rates at different points.

D

So, for example, RTCPeerConnection getStats has a frame counter, which I think is a bit underspecified, but it's implemented as the input frames to WebRTC. Or you could look at the HTML media element playback metrics to get the frames being rendered, I guess. But there are issues with these APIs as alternatives, and that is that the measurements are happening later in the pipeline. So if a frame is dropped as soon as it's been produced, it's likely not going to be covered here. And also ergonomics.

D

I think it's bad if a non-WebRTC use case would be forced to use an RTCPeerConnection, like a dummy peer connection, just for the sake of getting a metric from a track, if it's track specific. That's my argument. So there's one more slide; I'll go to the slide with the proposal, and then we can do questions.

D

The frames captured, you know, produced by the camera, for example, and frames emitted, which are the frames that weren't dropped. When I say that it's emitted, I mean that it's being passed over to a sink, so it's a frame that was neither dropped, muted, disabled nor discarded. And the asterisk is: frame

D

drops could still happen later. For example, if you're passing it to WebRTC, the frame could get dropped after encoding but before sending, or whatever; if you're rendering it, it might get dropped before getting rendered, because reasons. But that's outside the scope of this API, which is only concerned with the path from the source to the track. And let's go to questions. So, thank you, and Youenn is first.
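
As a rough illustration of how an application might use the two counters proposed above, the sketch below derives frame rates from two snapshots. It assumes hypothetical counters named `framesCaptured` and `framesEmitted`, taken together with a timestamp; no such track API had been adopted at the time of this meeting, and the names and shapes here are invented for the example.

```typescript
// Hypothetical snapshot of the proposed per-track counters.
interface TrackFrameCounters {
  timestampMs: number;
  framesCaptured: number; // frames produced by the source, e.g. the camera
  framesEmitted: number;  // frames actually handed over to sinks
}

interface TrackFrameRates {
  capturedFps: number;
  emittedFps: number;
  notEmitted: number; // captured but dropped or discarded before reaching a sink
}

// Compare two snapshots and derive rates over the interval between them.
function frameRatesBetween(
  earlier: TrackFrameCounters,
  later: TrackFrameCounters
): TrackFrameRates {
  const seconds = (later.timestampMs - earlier.timestampMs) / 1000;
  if (seconds <= 0) {
    throw new RangeError("snapshots must be taken at increasing times");
  }
  const captured = later.framesCaptured - earlier.framesCaptured;
  const emitted = later.framesEmitted - earlier.framesEmitted;
  return {
    capturedFps: captured / seconds,
    emittedFps: emitted / seconds,
    notEmitted: captured - emitted,
  };
}
```

Comparing `capturedFps` with the configured `frameRate` setting would show the low-light case (the camera produces fewer frames than configured), while `notEmitted` would show frames lost between the source and the track's sinks.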

F

Yes, so I think that all APIs that are using a MediaStreamTrack will allow you to get the number of frames that you are actually receiving. If you're using mediacapture-transform, you will get the count of frames that you're using; if you're using WebRTC, it's the same; if you're using a video element, it's the same. So this part is solved.

F

So maybe it's fine to have it. I'm not sure I understand the difference between frames captured and frames emitted, and it seems to me that it might be very specific to a given pipeline. In the spec, we have a source and we have sinks, and the source, if it's producing a frame, will emit it, in practice. So I'm not sure it will be very easy to specify frames emitted in a web-compatible, interoperable way.

D

That might make sense, actually, because frames captured is the big missing piece in my mind. Frames emitted is a nice bonus, but like you said, if WebRTC is your sink, you can look at the input frame rate, and that should be the same. More questions? Jan-Ivar.

H

Yes, so I would say for frames captured, I think that makes some sense, because the use case is a camera in low lighting, and the current constraint does not give you those measurements, which I guess we could revisit; that would be one option. For frames emitted, I share Youenn's concern,

H

which is that I think that's implementation defined, and I'm not sure that's actually observable. Some concerns I heard about when it might produce lower numbers would include: some implementations might not produce a frame if the sink doesn't want it. So then you have a downstream issue, where you're not measuring upstream. And this is the problem with track, right? It's supposed to represent the source, but it's also a modifier, and I...

H

If the problem is that, you know, you set a frame rate so it's decimating frame rates, hopefully user agents are good enough at decimating that there's not going to be that much difference from the configured value. But for low light, it makes more sense. As for a new getStats method, yeah, maybe, or maybe we could put it as a constraint, but...

A

So I joined the queue to say that I think that frames emitted makes some sense, because it's consistent across the APIs. But I can see the argument that this is the same value as what you would have exposed from other APIs. Well, if it's the same value, that would be nice to expose too. But let's consider frames captured accepted, then, and frames emitted is still up for discussion, not adopted.

H

Simulcast: the story continues. So we've got four issues to discuss. Next slide. So this is the same slide, a spillover slide from last TPAC; this was the one we choked on quite early. This had to do with RID length. If you put in 17-character RIDs, most browsers will choke on it, except Firefox, and this spec relies on other specs to define things, in the IETF.

H

RFC 8851 still allows 256 octets, but in practice people use single-character RIDs, because anything bigger bloats the RTP header. So I think this working group would still like to try to limit RIDs to 16 characters for web compat at this point. Next slide. We had some progress, because there were some other differences: an erratum was published, I should say, on RFC 8851, where minus and underscore are no longer valid characters, so yay. On restricting the length, the feedback we got from

H

the people who posted this erratum was that restricting the size to 16 instead of 255 might be hard to justify as an erratum, but it might be doable in a bis. And for people who are not familiar with IETF lingo, don't feel bad, I don't know what a bis is either. I'm sure it'll be great, and it'll tell you that if you go with 16-plus characters, you'll discover a path of pain, and it's...

H

The slide is basically then to say that the next step that we think is the way to go is to try a bis, to say less than 16 characters. And there's a remaining question of the empty string, which I think we can just fix in webrtc-pc, and basically say, as a JavaScript input API, if we get an empty string, you have not provided a RID.
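
A minimal sketch of the input checks being discussed, under stated assumptions: a 16-character cap (the limit proposed in this meeting, not something RFC 8851 itself requires), alphanumeric characters only (following the erratum mentioned above, which drops minus and underscore), and an empty string treated as "no RID provided". `classifyRid` and `MAX_RID_LENGTH` are hypothetical names, and this is not the webrtc-pc validation algorithm.

```typescript
// Limit discussed in the meeting; RFC 8851 itself allows longer values.
const MAX_RID_LENGTH = 16;

type RidVerdict = "absent" | "ok" | "too-long" | "invalid-character";

// Classify a rid value supplied by the application.
function classifyRid(input: string | undefined): RidVerdict {
  // An empty string is treated the same as not providing a rid at all.
  if (input === undefined || input === "") return "absent";
  if (input.length > MAX_RID_LENGTH) return "too-long";
  // Alphanumeric only: "-" and "_" are rejected, following the erratum.
  if (!/^[A-Za-z0-9]+$/.test(input)) return "invalid-character";
  return "ok";
}
```

Under these assumptions `classifyRid("hi")` is accepted, a 17-character value is rejected as too long, and `"a-b"` is rejected for its character set. Whether the boundary is "at most 16" or "fewer than 16" was not pinned down in the discussion; the sketch uses "at most 16".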

H

Well, I suppose we could make a decision that we don't allow more than 16 characters in our API. There was some pushback last meeting about specifying limitations on RIDs that weren't reflected in IETF specs. So I guess we're trying to do the right thing in this case, but I'm open to other options.

J

It should be possible to update Chrome to support all the characters that we want and as many characters as we want. It was not done originally because we didn't have two-byte header extensions, and so we felt like it was safer to just, you know, allow up to 16 characters. But now that has shipped in Chrome, and it should be possible to do if we think that's the right thing. And if people don't want to use it, which they probably shouldn't, then they don't have to use it.

F

It seems to me, if we're going with 16 characters, we should add a note in our webrtc-pc spec that, hey, this is the current limitation and we are trying to push it to the IETF RFC, and when the RFC is updated, then we can remove the note. But still, it's good to describe it somewhere, and it's easier in webrtc-pc right now, so it makes sense to do it in webrtc-pc first, if we can do it.

C

Well, but it can't be non-normative if there is going to be an error when you do more than 16. Again, personally, I feel that limiting it at 16 is absolutely the right thing to do, but it is a normative behavior if one browser is going to accept 17 and the other isn't. My sense is that the protocol saying it's legal to send 256 characters doesn't mean that any implementation should let developers do something silly like that.

C

So limiting what we allow in addTransceiver, I don't think goes against the protocol. I fully agree that in terms of what we accept from SDP, we have to, and I hope we already allow for that, because that's what the protocol asks. But in terms of our API, I don't think there is anything bad in saying: such a silly idea, we are not going to let you do that. There is no point.

H

All right, we're out of time on this issue, I think, so next issue. The spec says right now that setRemoteDescription with an offer to receive simulcast, it has actually always said that it overwrites sendEncodings, but with our latest update we've relaxed it a little bit to clarify that it only overwrites sendEncodings that don't have RIDs in them. That's an improvement on what was considered a bug before.

H

So this seems better. If you get an offer to receive simulcast, now you're doing simulcast, and you have your previous unicast settings overwritten. So that would mean that if you roll it back, it would have to undo that overwrite, basically by overwriting again. Now, rollback of setRemoteDescription is rare.

H

It's not used in perfect negotiation. So the principle of least astonishment would seem to be that if you roll back the overwrite, it undoes the overwrite, basically. And that means it would also undo any modifications you had done after the overwrite, if that makes sense. Because JavaScript can call these methods in lots of interesting orders, and one of them is: you get an offer to receive simulcast, you then call setParameters to modify your attributes, and then you roll it back...

H

So our setParameters API is a read-modify-store API, in that you read settings, then you modify them, and you set them asynchronously. So there's a time gap here, where this process of reading and storing back may race with the negotiation methods. Specifically: a remote offer to receive simulcast overwrites the RID-free sendEncodings array, which we just discussed; a rollback of the above, which we just agreed to; and also answers may prune all but one encoding from sendEncodings.

H

So what happens when this races with setParameters? What outcome would we want? We'd probably want the result we would have gotten had setParameters been allowed to complete first. So the proposal is to let setParameters complete first. We do a similar thing for addTrack, when addTrack is racing with setRemoteDescription.

H

So this picture shows adding a third sentence here that basically says: if any promises from setParameters methods on senders associated with the connection are not settled, then abort these steps and start the process over, which is language similar to bullet 2 above, which we do for addTrack. Yeah.
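
The ordering rule in that proposed sentence can be modelled, very roughly, as below. This is a synchronous toy model written for illustration, not the webrtc-pc algorithm: `NegotiationSketch` and its methods are hypothetical, promises are replaced by explicit "settle" callbacks, and "start the process over" is modelled as re-running the deferred step once nothing is outstanding.

```typescript
// Toy model: the final steps of applying a remote description are deferred
// while any setParameters() call is still unsettled.
class NegotiationSketch {
  private unsettledSetParameters = 0;
  private deferred: Array<() => void> = [];
  readonly log: string[] = [];

  // Record that a setParameters() call started; the returned function
  // stands in for its promise settling.
  beginSetParameters(label: string): () => void {
    this.unsettledSetParameters += 1;
    let settled = false;
    return () => {
      if (settled) return;
      settled = true;
      this.unsettledSetParameters -= 1;
      this.log.push("settled " + label);
      if (this.unsettledSetParameters === 0) this.runDeferred();
    };
  }

  // If anything is unsettled, abort for now and start over later.
  applyRemoteDescription(label: string): void {
    if (this.unsettledSetParameters > 0) {
      this.deferred.push(() => this.applyRemoteDescription(label));
      return;
    }
    this.log.push("applied " + label);
  }

  private runDeferred(): void {
    const steps = this.deferred;
    this.deferred = [];
    steps.forEach((step) => step());
  }
}
```

If a remote offer arrives while one setParameters call is outstanding, the log ends up as "settled ..." followed by "applied ...", that is, the outcome one would have seen had setParameters been allowed to complete first.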

D

Like, the SDP modifies the parameters, but because you're doing setParameters again, I mean, the setParameters is probably a modification of an earlier getParameters. So to do this correctly, you'd have to wait until the SDP is applied, then call getParameters again, and then do setParameters, but that requires a manual restart rather than applying it again. So I'm not sure what would change in the [unclear].

H

So these are steps before we run the success callback, so the success callback would not happen at this point. Basically, our intent was to try to wait until the setParameters calls have settled. We don't want to try to reapply setParameters afterwards; we want to get them all done ahead of time so that there are no outstanding setParameters calls, and solve the race that way, which seems to be close to what would have happened.

H

Well, it will never fail. So if you do setParameters, if you have simulcast right now and you modify simulcast, you'll get a promise to say your simulcast parameters were set, and shortly after, you know, there'll be an answer or something that removes those encodings again and you're back to unicast. So, okay, that seems to fit our model. All right.

H

So this relaxation allows for validation and satisfies JSEP, while simultaneously maintaining an existing spec invariant that layer pruning from encodings only happens in answers. This is different from implementations that actually expose layer removal in have-remote-offer. The problem is that all those implementations also fail to roll back that information.

H

Yes, so basically the idea is to go this far but no further, because by waiting for the answer, the nice thing is JSEP already ensures that answers are within the envelope of the offer. So when an SFU gives the browser an offer, the browser is in full control of the answer and can deal with removal at that time, and I think that makes sense. It protects these existing invariants, and it gives us the nice behavior that things can start out as simulcast until you get an offer to receive simulcast.

H

So, sorry, things can start out as unicast and can be promoted to simulcast, at which point layers can be reduced back to one, which effectively brings you back to unicast, but now it has a rid member in it, which means it can't be promoted back to simulcast. So it's a one-way street, basically: you can add as many layers as you want on the initial negotiation of simulcast, and then you can prune layers down and turn yourself back to unicast, but you can't restart. There's no loophole that you can...

J

So at the moment we have RTCDataChannel, which is transferable. The send algorithm mentions that we need to check the max message size of the channel's associated RTCSctpTransport to know if we can send a message. It is a slot on the RTCSctpTransport, and it can be updated during renegotiation, so asynchronously.

J

So that's point one. Point two is that we can transfer a data channel at any point before it's used, which means that the SCTP transport might not be created yet. It's perfectly fine to create a new peer connection and create a data channel directly, even before doing negotiation. So we don't have a value for max message size that is available to copy into the transferred object.

J

So we have an issue there. We have an object that has a value that is going to be updated, max message size on the transport when it's created, and an object that is in a different thread, and they will need to have some communication to get updated about the value. Or we need to agree that send is going to be blocking, hopping threads to read the max message size, or...

J

something like that. So a solution that I would think of would be to add language to update the "announce a data channel as open" algorithm, to make sure that during that algorithm we notify the object on the worker thread that there is a value for max message size; well, the first value, if it's been transferred before. And then the data channel could live on with its own value, which is never going to be updated.

J

We asked people at TPAC, and they didn't seem to think that it was something that was needed. We need to make some checks about that, but it doesn't seem to be a very compelling feature that people rely on. At the moment, in any case, in some current implementations, if you try to send a message that is bigger than max message size,

J

you will terminate the peer connection, or the data channel, and it will return an error because the message [unclear] at the lower level. So people already need to make sure that they don't send too big a message, because otherwise they can get an error. So it shouldn't be too much of a problem.
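
One way an application can avoid sending oversized messages is to split the payload itself before calling send. The sketch below is a plain helper written for illustration: `splitForSend` is a hypothetical name, and the `maxMessageSize` argument is assumed to be whatever value the application last read from the SCTP transport (or was otherwise told about).

```typescript
// Split a payload into chunks no larger than maxMessageSize bytes.
// The chunks are views onto the original buffer, not copies.
// An empty payload yields no chunks.
function splitForSend(payload: Uint8Array, maxMessageSize: number): Uint8Array[] {
  if (!(maxMessageSize > 0)) {
    throw new RangeError("maxMessageSize must be a positive number");
  }
  const chunks: Uint8Array[] = [];
  for (let offset = 0; offset < payload.length; offset += maxMessageSize) {
    chunks.push(payload.subarray(offset, offset + maxMessageSize));
  }
  return chunks;
}
```

The receiving side would need its own framing to reassemble the chunks, which is left out here. Note also that if a renegotiation lowers the limit after the value was read, chunks sized against the old value could still be rejected, which is the mismatch discussed next.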

J

Yeah, we still have the issue of a renegotiation that would change the value. With a renegotiation that changes max message size, we would have a mismatch between what the transport is configured for and what the data channel is allowing, and possibly the transport will have a smaller message size, which means that some messages might be rejected.

J

Sorry, the value would be the same for all data channels from the same transport, so it was considered not necessary when we didn't consider transferring data channels, because you would have access to the peer connection and then the SCTP transport, and read the value from there. For simplicity, and considering data channel transfer, it might be easier to have the value also readable on the data channel, knowing that if you haven't negotiated, you might have an undefined value.

H

Yeah, it's not clear from the slides whether you're proposing just language to update internal slots, or new API. I thought I heard exposing something in an event, and I think design principles usually prefer that we don't add data in the event exposed to JavaScript, but instead have it be a property on the target, event.target.

J

Right, yeah, what I considered is adding internal slots and updating them in the open event. Then, when you get your data channel's open event, the max message size slot for the data channel would have the proper value, and possibly an accessor to read it.

J

We have an extension of the interface to be Transferable, and the HTML spec says that we should have a [[Detached]] internal slot on transferable platform objects, which we don't have. We have instead an [[IsTransferable]] slot, which is a little bit different: it is needed to guard against transferring a data channel after we have already started sending on it. So there is a little bit of overlap.

J

So I suggest that we add the [[Detached]] slot to match the HTML specification, that we update the algorithms that we describe regarding data channel transferability to actually use it, and that we also keep [[IsTransferable]] to prevent transferring data channels on which we have called send. So effectively it would be just internal specification updates, but the behavior would probably be the same where data channel transfer has been implemented.

H

...if [[Detached]] is true, then abort these steps, and we haven't added that yet. So there's a question for the group here: how should these main-thread objects that are left behind work? I think whether they're garbage collected or not, they wouldn't be, because you could still have strong references to them, and that seems like a secondary question. The primary question to me is: how do these objects behave? What happens if you read their attributes, and those kinds of things.

J

In this specific case, since the current state in the specification is closed, if you try to call any function on it, there are only two functions, close and send. Close will say: if it's already closed, don't do anything, it's done. And send will throw an InvalidStateError if you are in the closed state, which matches what a [[Detached]] slot would do, if it was true, on most of our APIs.
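
The behavior just described for the object left behind on the main thread can be modelled as below. This is a toy class for illustration, not the real RTCDataChannel: `LeftBehindChannelSketch` and `markTransferred` are hypothetical, and only the two methods mentioned above are modelled.

```typescript
type SketchChannelState = "connecting" | "open" | "closing" | "closed";

// Toy stand-in for the data channel object that remains after a transfer.
class LeftBehindChannelSketch {
  readyState: SketchChannelState = "open";
  sent: string[] = [];

  // Model the transfer: the object left behind reports "closed".
  markTransferred(): void {
    this.readyState = "closed";
  }

  // send() fails with InvalidStateError unless the channel is open.
  send(data: string): void {
    if (this.readyState !== "open") {
      const error = new Error("channel is not open");
      error.name = "InvalidStateError";
      throw error;
    }
    this.sent.push(data);
  }

  // close() on an already closed channel does nothing.
  close(): void {
    if (this.readyState === "closed") return;
    this.readyState = "closed";
  }
}
```

After `markTransferred()`, calling `close()` is a silent no-op and calling `send()` throws an error named InvalidStateError, which is the same outcome an explicit [[Detached]] check would give.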

K

We're here to discuss capture handle again, and a couple of extensions that I would like us to make. So just a quick reminder for people who have forgotten, or were not here when we discussed this previously: capture handle is a mechanism by which a captured page sets some kind of string, and maybe also exposes its origin, to whichever other tab might end up capturing it.

K

So, for example, I could say, "hey, ABC", and also expose my origin, and then if anybody ends up capturing me, they get to read the "ABC". Why this is interesting is that I could, for example, say, "hey, my IP address is XYZ, and if you want to communicate with me, try there." Meet and Slides, for example, could use that for Meet to detect, "hey, I'm capturing a Slides presentation; how about I start doing interesting things?" Next slide, please.
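What the capturing side gets to read can be illustrated with a small model. The field names `handle`, `exposeOrigin` and `permittedOrigins` are modelled on the Capture Handle proposal's configuration; the function `observeCaptureHandle`, the interface names and the example origins are hypothetical, and the browser's real behavior is not reproduced here.

```typescript
// Shape of what the captured page sets (modelled on the proposal's config).
interface CaptureHandleConfigLike {
  handle: string;
  exposeOrigin: boolean;
  permittedOrigins: string[];
}

// Shape of what a capturing page would observe.
interface CaptureHandleLike {
  handle: string;
  origin?: string;
}

// Hypothetical model: what a capturer at `capturerOrigin` would see when it
// captures a page at `captureeOrigin` that has set `config`.
function observeCaptureHandle(
  config: CaptureHandleConfigLike,
  captureeOrigin: string,
  capturerOrigin: string
): CaptureHandleLike | null {
  const permitted =
    config.permittedOrigins.indexOf("*") !== -1 ||
    config.permittedOrigins.indexOf(capturerOrigin) !== -1;
  if (!permitted) {
    return null; // this capturer is not allowed to read the handle
  }
  const observed: CaptureHandleLike = { handle: config.handle };
  if (config.exposeOrigin) {
    observed.origin = captureeOrigin; // origin is shared only on request
  }
  return observed;
}
```

A capturer on a permitted origin reads both the string and, if exposed, the origin; any other capturer reads nothing.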

K

So some of the things that you could do with this: you could remote-control a presentation, but, unlike Capture Actions, for example, you could also remote-control anything, right? We're already talking about a new mechanism that would allow you specifically to control a presentation more easily, but if you can actually start communicating, in any way that you choose, with whatever you're capturing, you could do anything; you could send any type of message.

K

K

So the reason that I would like us to discuss this is that I think there are a couple of gaps in the APIs as currently specified, and that we need to plug those gaps. First of all, it is unstructured, right? Anybody can choose to structure their data in any way they want.

K

So, for example, let's take Slides. Slides could say, "hey, here's my IP", or it could say, "hey, my IP is here", or, you can imagine, it could structure it in any way. Another problem is that you can actually only work with strings. So if we go back two slides, please (thank you), we will notice that there is JSON.stringify here and JSON.parse, and while that is often sufficient, sometimes it's not, for example for crop targets. Three slides forward, please. So I think that is two.
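The strings-only limitation can be seen in a small sketch. `OpaqueToken` is a hypothetical stand-in for an object such as a crop target that carries no serializable fields; `HandlePayload` and `roundTripThroughString` are likewise illustrative names, not part of any API.

```typescript
// Stand-in for an opaque object such as a crop target: no enumerable fields.
class OpaqueToken {}

interface HandlePayload {
  sessionId: string;
  cropTarget: OpaqueToken;
}

// Serializes the payload to a string and parses it back, as a page would
// have to do when the only transport is a string.
function roundTripThroughString(payload: HandlePayload): { wire: string; received: any } {
  const wire = JSON.stringify(payload);
  return { wire, received: JSON.parse(wire) };
}

const result = roundTripThroughString({
  sessionId: "abc",
  cropTarget: new OpaqueToken(),
});
console.log(result.wire);
console.log(result.received.cropTarget instanceof OpaqueToken);
```

The plain string field survives the round trip, but the opaque object comes back as an empty plain object that is no longer an instance of its original class.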

K

So the video conferencing tool doesn't know where to look for that information. Specifically, the way that we want to use this is with something called content hint, right? There is a way that a capturer, somebody that's transmitting video or audio, can say, "hey, in the case of an imperfect network, prefer to degrade resolution, or prefer to degrade frame rate", right? So, in the case of static content, you would prefer to degrade frame rate first, right? In the case of motion,
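The trade-off described here can be sketched as a small mapping. The hint values `"motion"`, `"detail"` and `"text"` are the video values of the `contentHint` attribute on `MediaStreamTrack`; the `CapturedContent` categories and the `suggestContentHint` function are hypothetical and exist only for illustration.

```typescript
// Video values accepted by MediaStreamTrack.contentHint ("" means no hint).
type VideoContentHint = "" | "motion" | "detail" | "text";

// Hypothetical classification of what the captured page is showing.
type CapturedContent = "static-slide" | "video" | "animation" | "unknown";

// Picks a hint so that, on an imperfect network, the less important
// dimension is degraded first.
function suggestContentHint(content: CapturedContent): VideoContentHint {
  switch (content) {
    case "static-slide":
      return "detail"; // keep resolution, let frame rate drop first
    case "video":
    case "animation":
      return "motion"; // keep frame rate, let resolution drop first
    default:
      return ""; // no preference expressed
  }
}
```

In a browser, the capturer would assign the result to the captured video track's `contentHint`; the open question in the discussion is how the capturer learns which category applies.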

K

K

So, as you can see, we don't really know where to look for the content, and that gives us problem number one: structured versus unstructured. Tightly coupled applications don't really care whether it's structured or unstructured; they impose their own structure, right? If Meet sees that it's capturing Slides, it knows which protocol Slides uses, but other applications don't. Next slide, please.

K

This slide is for other arguments that have come up for this specific use case. I think this is not really needed right now, because I'm just trying to explain the difference between structured and unstructured, but, if need be, we can go back to that, and people can refer back to it if necessary. Next slide, please. So, as mentioned, that's one use case, right: capture handle and content hint. Another one is crop targets. Next slide, please.

K

K

So for that we actually have a mechanism called Region Capture, and that mechanism allows you to say, "hey, I want to crop the video to this content area." The problem is that it only works for self-capture right now, or for same-origin tabs, since same-origin tabs can transmit a CropTarget over a BroadcastChannel.

K

Others can't. So how would this be useful? We've got a simple mock application here. Imagine that you're a video conferencing application. The first thing you do is you say, "hey, whatever you captured, does it have any crop targets?" If it has any crop targets, you can cycle through them, produce one frame of each one, and present them to the user as thumbnails. And now the user can either say, "hey, I actually want to capture the entire thing", transmit

K

K

be able to do if we could actually transfer crop targets. Next slide, please. As mentioned, it's not stringifiable. Also, if it were stringifiable, we would still run into the original problem of, "okay, great, it's a string; where do I find it among all of the other strings?" Next slide, please.

K

So what I think we should do is impose some additional structure on the capture handle. We would say, "hey, there are a couple of fields that are always there. You can use them or not use them. If you want to use them, here's a place where you could put the crop targets." And each crop target, you know, we can annotate with a name; we can annotate it with metadata.
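One possible shape for such a structured handle is sketched below. Nothing here is specified: `StructuredCaptureHandle`, `NamedCropTarget`, `listCropTargetNames` and `findCropTarget` are hypothetical names, and the generic parameter `T` stands in for whatever crop-target type the platform would supply.

```typescript
// Hypothetical annotated crop target: a name plus optional free-form metadata.
interface NamedCropTarget<T> {
  name: string;
  target: T;
  metadata?: Record<string, string>;
}

// Hypothetical structured handle: the free-form string stays available,
// and crop targets get a well-known place of their own.
interface StructuredCaptureHandle<T> {
  handle: string;
  cropTargets: NamedCropTarget<T>[];
}

// A loosely coupled capturer can enumerate targets without knowing the
// captured application's private protocol.
function listCropTargetNames<T>(h: StructuredCaptureHandle<T> | null): string[] {
  if (h === null) {
    return [];
  }
  return h.cropTargets.map((entry) => entry.name);
}

// Looks up a crop target by its annotated name.
function findCropTarget<T>(h: StructuredCaptureHandle<T> | null, name: string): T | undefined {
  if (h === null) {
    return undefined;
  }
  for (const entry of h.cropTargets) {
    if (entry.name === name) {
      return entry.target;
    }
  }
  return undefined;
}
```

The design point is that the well-known `cropTargets` field answers "where do I look?" for applications that have never agreed on a protocol, while `handle` keeps serving tightly coupled pairs.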

K

K

H

K

What about the message port? There's a very simple answer: it's not structured, which means that, yeah, it's going to work just great for Meet and for Slides. What about Meet and Zoom? I'm sorry, Zoom and Slides? What about Meet and Word? Meet and PowerPoint? All of those combinations are not going to work.

H

So, as someone who pushed for set capture actions, I would actually suggest we use a message port here, because it feels to me like we're getting too much into the space of specific applications, and I'm not sure we want to be in the business of specifying all the different things that an application might need.

H

Yeah, I agree there's some benefit, in that it could allow innovation across presentation products and video conferencing products. But it's still not clear to me how far we need to go to cover all the use cases, and I also believe I gave this feedback back in February; that's issue 11.

E

H

G

H

K

Read some of my responses to these arguments, delivered in the past, so that people who are here would also know my counterarguments. Counterargument number one: a message port requires that the capturer inform the capturee of the presence of the capturer, right? The moment I send you a message saying, "hey, what's your suggested content hint?", you immediately know that I'm capturing you, because that's the only way you could get that message. That's number one. Number two: you've mentioned that the capture

K

handle itself is a message port, but unidirectional. That is correct, but that is okay, and the reason that it is okay is that it's very useful. For example, right now we're seeing Google Slides on our screens, and each slide is going to be slightly different, right? So setting the handle only at the beginning of the capture is not really useful. What happens if the next slide shows a video? What happens if the next slide shows a GIF?

K

So we actually need to be able to change the capture handle. Now, I agree that sometimes we do want a message port, and that's why I've got a slide on it. I think a message port is going to be very useful, but I think it's an orthogonal use case. The use case here is when you've got tightly coupled applications: two applications that know each other, agree on a protocol, know when and how to send each other messages, and know what the messages need to be structured like.

H

K

I'm making three different suggestions here. Suggestion number one is that I want to be able to transfer region capture, I'm sorry, crop targets, over the capture handle itself. Right now, it's a string. We might want to change it to something other than a string. Then it would, for example, be a dictionary, and that could include crop targets. That's going to be suggestion number one. That's going to work for me.

K

Suggestion number two: okay, so that works great for tightly coupled applications. What about loosely coupled applications? They also want to transfer crop targets. So how about we also designate a place where crop targets usually go? If you want, you could still put crop targets right in the normal handle, but everybody knows that, hey,