►

From YouTube: WEBRTC WG interim 2021-10-14

Description

See also the minutes at https://www.w3.org/2021/10/14-webrtc-minutes.html

03:24 The Streams Pipeline Model (Youennf)

44:11 Altnerative Mediacapture-transform API

1:28:53 Mediacapture Transform API

1:49:07 Wrap up and next steps

B

Okay,

this

is

the

w3c

webrtc

working

group

meeting.

We

abide

by

the

w3c

patent

policy,

and

only

people

in

companies

listed

on

the

page

are

allowed

to

make

substantive

contributions

at

this

meeting.

We're

going

to

cover

some

issues

with

what

wg

streams

and

then

talk

about

the

media

capture

transform

api

so

a

little

bit

about

the

meeting.

These

are

links

to

the

specs.

The

slides

are

up

on

the

wiki.

B

B

B

So

if

you

want

to

get

into

the

queue

type

plus

queue

and

then

when

you

want

to

leave

type

minus

q

in

in

the

google

me

chat,

so

we'll

be

using

that,

of

course

use

headphones

and

wait

for

the

microphone

access

to

be

granted.

Before

speaking,

it's

also

helpful

to

state

your

full

name

just

for

the

recording,

although

we

can

probably

recognize

you

by

now.

I

don't

think

we'll

be

doing

any

poll

we'll

be

using

the

google

me

pull

mechanism,

but

we

may

take

polls

in

the

chat.

B

Okay,

just

a

few

notes

on

document

status,

just

because

something's

in

the

wpc

repo

doesn't

mean

it's

been

adopted

by

the

working

group.

That

requires

a

formal

call

for

adoption

and

just

a

reminder

that

editor

straps

don't

represent

working

group

consensus,

but

working

group

graphs

do

and

we

can

merge

brs

without

consensus.

But

as

long

as

we

have

a

note,

okay,

so

here's

what

we're

going

to

try

to

get

done

today,

uns

going

to

talk

a

little

bit

about

the

streams

pipeline

model

and

some

of

the

issues

that

are

under

discussion

there.

B

B

So

we'll

I'll

give

a

warning

two

minutes

before

time

is

up

and-

and

we

will

move

on

once

we

get

to

the

timeline

okay,

so

until

8

30

we'll

have

u.n

present

on

some

of

the

streams

issues

that

he's

found

in

that

around

their

discussion.

In

the

what

wg

streams

working

group

you

and

you

have

the

floor,

sure.

B

D

D

D

Javascript

to

connect

sources

and

things

or

you

can

use

transform

streams,

so

the

example

shows

shows

there

is

like

the

angle

that

everybody

would

like

to

to

have,

which,

which

is

go

from

the

camera

up

to

the

network,

with

just

streams.

So

as

we

as

we'll

see,

we

will

just

focus

on

a

video

pipeline

here

and

we

we

want

to

discuss

the

issues

around

threading

and

video

frame,

interaction

with

streams,

and

so

let's

look

at

threading

first,

so

next

slide.

D

D

D

But

in

the

web

audio

case,

the

spec

clearly

states

that

the

audiograph

is

set

up

in

the

main

thread,

but

it

runs

in

the

audio

thread

and

the

sec

is

very

clear

about

that

and

all

implementations

are

abiding

to

it

and

the

nice

things

is

of

course

the

audio

thread

is

a

high

priority

thread.

So

you

get

very

good

performances

there

in

our

case

we're

using

a

generic

approach,

streams

and

the

situation.

D

If

we

wanted

to

not

assume

that

we

would

need

to

optimize

pipe

2

and

pipe

through

operations

and

maybe

next

slide,

we

can

illustrate

that

it's

it's

not

easy.

So

the

example

one

there

is

the

case

where

you're

doing

the

funny

hat

thing

from

a

camera

to

an

html

media

element.

So

let's

say:

there's

a

native

transform

there

and

you

can

pipe

two,

the

media

element

in

some

ways.

So

there's

no

javascript

and

there

you

have

a

call

to

play

through

and

a

call

to

pipe

too.

D

So

from

reading

the

spec

from

this

example,

we

don't

know

whether,

where

will

flow,

the

video

frames,

it

might

be

my

infrared,

it

might

be

a

background

thread,

it's

really

up

to

the

user

agent,

and

that

makes

things

really

complicated

from

a

web

developer.

Basically,

that

actually

wants

to

get

guarantees

and

the

worst.

B

D

B

B

D

D

If

you

go

with

example,

3,

which

is

using

t

after

the

native

transform

and

using

pipe

2

there,

it's

really

unclear

where

video

frames

will

will

actually

flow.

Will

it

will

it

be

optimized

by

the

user

agent?

Or

will

it

not

be

it's?

We

cannot

predict

that.

So

that's

why.

I

think

that

the

safest

assumption

we

can

consider

is

that

the

thread

wears

down

the

setup

is

also

the

thread

where

it's

done.

D

So

one

potential

related

idea

is

to

transfer

the

stream

to

worker,

for

instance,

to

get

rid

of

main

thread

issues

and

currently

to

transfer

stream.

You

it's

specified

in

the

spec.

As

you

take

the

stream,

you

want

to

transfer

you

pipe

to

in

a

special

identity,

transform

that

will

actually

send

the

content

over

to

the

worker

thread

or

the

worker

context.

D

D

I

also

actually

tried

chrome

last

week

and

the

current

implementation

around.

That

era

is

not

compliant

on

a

number

of

points

as

well,

and

the

second

issue

really

is

that

light

pipe

2

and

python.

It's

very

hard

to

predict

whether

the

optimization

the

transfer

optimization

will

actually

kicking

on

or

not,

and

the

fact

that

it

kicks

in

is

really

important

from

a

birth

point

of

view.

So

again,

this

next

slide

for

some

examples,

so

example,

one

is

the

typical

case.

Where

chrome

will

optimize

the

thing

you

have

a

camera

for

a

stream.

D

You

transfer

it

to

work.

It's

working

fine.

Now,

let's

say

that,

for

the

purpose

of

the

example

we

add

a

native

transform

between

the

camera

and

the

stream

will

actually

transfer

there.

We

don't

know

what

will

happen,

maybe

it

will

be

optimized,

maybe

not

example.

Three

is

again

the

same.

Let's

say

that

you

have

a

camera,

readable

stream.

Let's

say

you

t

and

you

transfer

one

of

the

teeth.

What

will

happen

in

terms

of

optimizations?

We

we

don't

know,

and

the

same

applies

to

non-camera

streams.

D

Will

peer

connection,

get

display,

media

or

get

viewport

media

be

optimized

or

not.

We

we

don't

really

know

it's.

The

spec

doesn't

say

anything

and

let's

say

you

transfer

a

media

stream

track

like

get

report

media

to

another

frame,

and

you

then

take

a

stream

that

you

transfer

to

worker

will

be

optimized.

We

we

don't

know

and

from

a

web

developer

perspective,

there's

no

way.

You

know

whether

it

will

be

optimized

or

not.

This

is

very

different

from

web

audio.

D

Where

you

have

a

spec,

you

know

it

will

it's

guaranteed

that

it

will

run

in

a

background

thread

in

an

optimal

thread.

So

that's

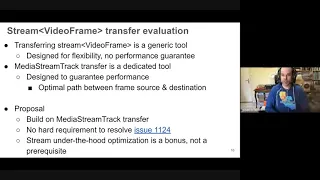

why

next

slide?

If

we,

if

we

look

at

the

idea

of

using

transferring

streams

for

our

purpose,

we

we

see

that

it's

really

a

generic

tool

like

strings

are

it's

designed

for

flexibility

and

it's

working

fine,

but

we

we

cannot

guarantee

performance.

D

D

We

should

go

with,

which

is

to

build

on

mediation,

track

transfer,

and

then

we

we

have

no

hard

requirement

to

re,

resolve

the

github

issue

to

actually

unable

to

have

a

spec,

compliant

optimized,

video

frame

of

optimized

stream,

video

frame

transfer

mechanism,

and

still,

if

with

that,

we

have

like

optimization

like

type

two

is

optimized.

Python

is

optimized.

It's

it's

a

bonus,

it's

great.

D

D

But

in

our

case,

where

we

have

a

video

media

pipeline,

it's

sort

of

different

first,

you

might

not

want

to

process

all

frames

if

there's

a

one

second

or

video

frame,

for

instance,

you

might

prefer

to

skip

it

and

get

the

freshest

frame,

for

instance,

and

that's

what

media

stream

track

processor

is

is

doing.

Actually,

if

you

do

not

process

frames

fast

enough,

it

will

drop

frames

so

that

you

next

time

you

will

get

the

freshest

frame

and

to

to

have

that

and

to

also

reduce

buffering.

D

The

second

pipeline

on

on

the

silver

second

below

is

the

one

where

we

are

doing:

processing

sequential,

which

has

a

cost

of

course,

but

it

has

also

some

benefits

in

in

our

case,

and

I

think

that

in

general

we

it's

safer

to

to

get

the

second

pipeline

model.

For

me

for

video

frames,

and

the

first

issue

is

that

the

second

pipeline

cannot

be

built

currently

with

streams.

D

You

can

only

basically

build

the

the

first

pipeline

next

slide,

and

so

this

is

the

real

issue

because,

as

I

said,

syncs

are

really

hoping

to

get.

The

freshest

video

frames

and

also

video

frames

are

big

and

scary

sources,

and

also

it's

not

very

clear

from

the

web

developer

perspective.

What

happens

because

the

buffering

is

sort

of

sort

of

hidden

to

to

your

application?

D

D

Next

slide,

and

it's

it's

also

a

bit

a

bit

sad,

like

generally

for

streams,

the

buffering

you

you

don't

really

care

about

it,

because

the

idea

is

that

streams

will

handle

it

for

you

for

back

pressure.

But

in

our

case

for

me,

the

pipeline,

maybe

some

limited

buffering,

might

be

good.

If

you

have

two

long

tasks

in

the

pipeline,

like

you're

doing

encoding

and

funny

hat

thing,

then

you

might

want

to

have

like

doing

the

encoding

and

funny

hat

thing

is

sort

of

important

to

to

reduce

delay,

but

nothing

else.

D

D

B

D

B

D

So

how

should

we

proceed

there,

because

I

I

only

have

like

until

10

minutes?

Why

don't

we

finish

the

slides

and

then

we'll

we'll

take

the

questions

if

that

that

works?

Yeah,

okay,

so

let's

go

with

t.

As

we

know,

mediastreamtrack

have

built

in

support

for

multiple

consumers

and

t

is

the

way

to

do

this.

Multiple

consumer

thing

with

strings

a

typical

example

in

in

this.

D

In

the

slides

there

is

we

we

do

some

effects

on

the

video

and

then

we

we

key

the

the

end

results

to

do

both

rendering

and

encoding

in

parallel,

and

the

use

case

might

be

doing

analytics

in

parallel

to

rendering,

for

instance,

and

t

is

valid

api

it's

already

used

for

the

stream,

so

we

should

be

able

to

use

it

and

we

should.

We

should

not

be

in

a

place

where

we

would

say.

Oh

no,

please

not

your

st.

We

will

find

something

else.

We

we

should

be

able

to

use

this

so

next

slide.

D

As

we

discussed

this

a

couple

of

times.

We

know

that

t

is

broken

if

a

stream

is

steered

in

branch,

one

and

branch,

two,

the

same

object,

so

the

same

frame

f

will

be

presented

to

both

branches,

and

if

one

branch

is

closing

f,

then

the

overbranch

is

only

getting

a

frame

which

is

already

closed,

so

cannot

cannot

really

use

it.

D

The

potential

solution

here

is

to

use

a

structured

clone

as

discussed

in

issue

1156,

and

it

should

be

fairly

straightforward

to

adult

I

mean

if

you

compared

to

the

other

case

with

buffering

there.

It's

stretcher

cone

equals

true

and

we,

I

hope

we

know

what

structured

clone

means.

So

that's

that's

kind

of

okay.

D

D

So

are

we

good

not

really?

We

need

more

more

chance,

more

changes

to

key

than

just

structure

clone,

because

if

you

apply

structure

clone,

then

you

create

hidden

buffering

and

if

the

branches

are

not

consuming

at

the

same

pace,

you

end

up

into

issues.

The

typical

issue

will

be

that

the

camera

will

run

and

run

out

of

buffers

and

branch.

D

One

will

starve

of

data

because

range

two

is

not

consuming

enough

of

the

data

and

the

typical

solution

in

in

typical

real-time

processing

flow

of

video

frames

is

to

drop

frames

as

needed.

Like

media

frame

track.

Processor

is

doing

that

way.

Branch

one

can

continue

at

its

space

and

branch.

Two

can

get

fresh

frames

as

well.

D

There

can

solve

this

issue

in

a

couple

of

lines,

so

it

should

be

possible

to

to

solve

in

a

in

a

good

way,

but

since

streams

have

a

model

and

the

model

is

not

aligned

with

the

goal

there,

it

it

makes

things

more

difficult

than

than

it

should

be

and

yeah,

but

that's

an

issue

that

we

we

need

to

solve.

Somehow

if

we

want

to

use

strings

there.

D

D

B

D

What

possibly

one

possibility

would

be

to

go

with

some

form

of

dedicating

handling

in

the

stream

of

video

frames

object

like

a

subclass

or

something

like

that

where

we

would

have

built-in

video

frame

memory

management.

Let's

say

that

you

call

you

enqueue

a

video

frame,

then

the

video

frame

will

be

cloned

and

when

you

remove

it

from

the

queue

from

the

internal

queue,

you

will

call

close.

D

So

we

need

a

solution.

I

don't

know

what

we

can

do

there,

but

it's

certainly

something

that

we

we

should

do,

and

I

have

two

minutes

for

the

conclusion.

So

that's

good

next

slide.

So

yeah

next

slide,

so

we

we

need

yeah.

We

need

to

solve

those

issues,

buffering

t

and

lifestyle

management

specifically

for

video

frames,

a

stream

studio

frame.

D

D

So

next

slide

yeah,

so

yeah

and

my

point

is

that

we

need

to

solve

those

issues

or

have

a

very

good

confidence

that

we'll

solve

these

issues

before

selecting

the

api

model,

because

if

we

select

a

model,

it

means

that

we

we

want

developers

to

go

into

that

model.

So

we

need

to

be

very

sure

that

it's

a

good

model

and

also

since,

if

we

pick

a

model,

we

won't,

we

are

sure

of

it.

D

We

if

we

use,

if

we

select

streams

which

has

its

own

benefits,

we

should

exam

streams,

integration

with

exiting

a

new

apis,

and

that

means

video

decoder,

video

encoder.

I

mentioned

barcode

decoder

and

face

detector,

which

are

also

existing

apis

or

at

least

proposals,

and

it

does

not

seem

that

we

are

going

into

that

direction.

D

E

I

think

the

idea

is

that

if

you

specify

a

transform

stream,

you

should

be

able

to

specify

a

transform

stream

with

a

zero

high

water

mark,

which

means

no

buffering,

and

then

the

transform

stream

will

automatically

call

release

back

pressure

when

something

downstream

from

it

reads

from

it.

I

think

that's

the

main.

What

we're

trying

to

solve

is

the

concept

of

bufferless

transform

streams,

which

would

be

helpful,

and

the

other

thing

was

about

dynamic

buffering.

E

It's

true

that

the

the

convenience

feature

of

providing

high

water

marks

is

a

static

one

that

you

set

up

at

the

time

of

the

pipe,

and

you

have

to

tear

down

the

pipe

again,

but

nothing

prevents

javascript

to

write,

transforms

to

implement

their

own

buffering,

which

could

be

dynamic

and

they

can

even

drop

frames.

They

just

can't

have

a

zero

high-water

mark

to

be

totally

buffer-less

today

and

that's

what

we're

trying

to

solve

in

that

issue

and

on

the

third

and

last

on

the

stream

reliance

on

a

government

collection.

E

D

Yeah,

I'm

thanks.

I'm

I'm

not

totally

optimistic

in

solving

that

at

the

source

level.

Honestly,

I'm

I'm

looking

forward

to

the

solution

there,

which,

if

we

have

a

solution,

then

I

I'll

be

happy

to

remove

my

concerns,

but

so

far

I

do

not

see

how

it

will

work.

So

my

my

understanding

is

that

and

more

generally

with

lifetime

management,

there's

no

api

contract

right.

D

D

It

really

depends

what

what

happens

so,

what

you

should

try

is,

for

instance,

on,

like

let's

say

you

have

a

render,

which

is

a

transform

stream.

Let's

say

it's

at

the

end

of

a

pipeline.

Let's

say

that

you

add

a

one

second

delay

and

you

look

at

you

look

at

which

video

frame

you

will

get

and

so

on,

and

you

will

see

that

things

will

will

not

be

will

not

be

great

there.

It's

true

that

what

what

you

happen

to

have

is

like.

B

D

B

D

To

it

and

if

you're

adding

that

to

the

fact

that

maybe

it

will

go

out

of

process

and

so

on,

maybe

five

is

not

enough

and

it's

on

devices

that

have

10

frames.

Maybe

maybe

some

devices

will

have

a

lower

capacity

and

will

have

a

smaller

number

of

buffer

buffer

frames,

and

then

you

will

end

up

in

two

glitches

where

video

frames

will

like

the

camera,

will

stop

producing

until

you

release

frames

and

you

will

have

frame

rate

that

is

decreasing

and

changing

and

that's

that's.

D

B

In

that

issue,

so

one

thing

I

noticed

by

the

lack

of

streams

integration

within

web

codecs

is

that

right

there

are

actually

two

cues

here:

there's

the

encoder

queue

and

then

there's

the

streams

queue,

and

so

you

kind

of

have

to

manage

these

two

cues

yourself

and

in

web

codecs.

There's

no

is

really

the

the

cue

is

not

transparent

at

all.

You

have

to

kind

of

keep

track

of

it

yourself

with

the

pending

output

counter.

B

B

B

D

B

D

A

Yeah,

so

the

discussion

is

discussion,

not

just

questions.

I

will

notice

a

couple

of

observations.

One

is

that

the

web

codex

did

have

a

stream

based

api

for

a

while,

after

we

developed

the

media

stream

check

processor

and

made

this

change

track

generator.

They

said

we

don't

need

to

have

another

one,

so

they

dropped

them

so,

and

we

have

had

actually

very

few

reports

on

people

having

having

trouble

with

these

so-called

issues.

A

On

the

comment

of

t,

I

had

had

a

fun

experience

of

reading

the

the

cl

that

added

the

tea

to

the

specification

and

all

the

people,

all

the

things

that

people

were

worried

about

with

tea

when

they

added

it

to

the

specification

were

in

fact

the

same

thing.

We're

worried

about

t

is

a

bad

design

and

it's

fairly

trivial

to

write

like

this

much

javascript

and

have

the

tea

you

want,

and

the

tea

you

want

is

actually

quite

dependent

on

on

your

application.

A

One

of

the

nasty

pieces

of

tea

that

you

didn't

mention

is

that

it

doesn't

respect

high

water

mark

on

the

on

the

downstreams,

which

stunned

me

when

I

discovered

that

so

t

is

bad.

I

realize,

on

the

contract

point

I'm

just

taking

trying

to

take

off

the

point

so

that

we

get

to

it

within

the

next

five

minutes.

Are

the

contact

points

yeah?

A

I

think

it's

natural

to

say

that

a

downstream

either

have

to

call

close

or

pass

the

thing

on

to

something

that

the

frame

onto

something

that

calls

close

for

video

frames

and

and

and

that

and

that

they

shouldn't

depend

on

upstream

to

do

anything.

We

do

have

a

problem

with

disrupted

pipelines,

as

you

say,

in

that

disrupted

pipelines,

don't

seem

to

have

a

good

method

of

letting

the

user

define

what

should

happen

to

what's

in

the

pipeline

when

it's

being

disrupted.

A

D

We

have

two

minutes:

two

minutes

yeah.

I

agree

with

you

that

tea

is

bad

and

we

can

try

to

salvage

it.

It

will

be

difficult,

and

this

is

this-

is

due

to

the

use

of

strings.

As

you

said,

you

can

use

javascript

to

do

your

own

key

and

it

will

be

done

in

a

much

better

way

and

that's

why

promise

based

callbacks

is.

Is

the

right?

It's

right

approach.

D

If

you

actually

want

to

use

t,

because

that's

what

we

will

use,

you

will

use

promising

promise

based

callbacks

internally

and

you

will

asynchronously

iterate

over

the

stream.

So

in

that

case,

why

should

we

even

use

things

on

the

closed

case

and

on

other

issues

that

you

that

you

were

seeing

they're,

not

fatal?

D

D

If

we

do

not

have

proposals,

then

what

should

we

do?

It

just

means

to

me

that

we

I'm

not

confident.

I

want

proof

that

reads

will

be

solved

and

I

want

proof

before

we

actually

decide.

Otherwise

we're

not

in

a

good

place,

and

all

of

that

is

because

we

select

streams

which

has

a

defined

model

which

sort

of

match,

but

not

entirely,

and

I

want

it

to

match

entirely

and

so

that

it's

the

perfect,

perfect

thing

and

then

I'll

review.

E

Callbacks

may

solve

that

very

easily

because

you,

but

the

problem

is

that

a

promised

callback

also

doesn't

work.

There

are

areas

where

streams

work

better,

for

instance,

with

audio

which

we're

not

talking

about

yet

where,

for

instance,

you

encode

some

audio

that

doesn't

mean

there's

a

one-to-one

between

encode

and

getting

a

callback.

Now

streams

can

handle

that,

but

at

the

cost

of

losing

track

of

it's

not

a

simple

input

output,

one

to

one.

D

I

will

I

welcome

pros

and

cons

between

promise-based

callbacks

and

streams

callbacks,

it's

true

and

streams.

It's

true

that

there

I

only

showed

the

issues

I

have

with

strings

and

I

I'll

be

happy

to

also

get

a

list

from

you,

our

geneva

or

lab

about

promise

based

callbacks

and

to

identify

what

are

the

issues

and

then

we

can

compare

issues

of

both

models.

E

And

unmute

all

right.

Thank

you

for

that

setup.

You

covered

more

threading

than

I

anticipated,

so

this

is

great.

So

the

first

off.

Why

are

we

here?

Well,

threading

is

a

big

issue,

and

I

wanted

to

highlight

the

build

on

what

you

and

just

said

that

today,

the

status

quo

in

in

specs

and

the

cross

browsers

is

that

the

real-time

media

pipeline

is

off

the

main

thread.

E

That

means

that

the

midi

stream

track

interface

is

purely

a

control

surface

on

the

main

thread,

but

that

media

flows

in

parallel

either

on

the

dedicated

media

thread

or

other

threads

multiple

threads.

So

when

you

call

get

user

media,

you

get

a

track

back

and

you

attach

that

track

to

appear

connection

you're

pretty

much

done.

E

So,

as

I

illustrate

here,

you

can

have

a

media

thread

where

camera

frames

move

through

a

downscaler

to

an

rdp

sender,

and

it

goes

out

on

the

network

and

the

main

thread

is

not

doing

anything

it

doesn't

get

in

the

way

of

that

I

mean

that's

important.

Media

delivery

never

ever

blocks

on

the

main

thread

next

slide

now.

E

So

here

again,

the

media

thread

has

a

send

where

the

bits

go:

sender

encodes

it

and

it

sends

it

to

a

javascript

encrypt

function,

that's

only

exposed

on

worker

and

it

sends

it

out

over

the

network

and

again,

nothing

blocks

the

main

thread

and

that's

for

encoded

video,

not

even

raw

video.

So

since

we

recognize

the

importance

of

this

for

encoded

media,

we

should

be

even

more

concerned

about

exposing

raw

uncoded

media

to

main

thread,

because

it's

a

lot

more

data

next

slide.

E

So

the

premise

here

is

that

the

main

third

is

bad,

and

so

how

do

we

back

that

up?

Well,

chrome

dev

summit

in

2019

had

an

excellent

presentation

by

cirma,

which

is

basically

the

main

thread

is

overworked

and

underpaid,

and

I'm

quoting

literally

here,

there's

a

link.

If

you

can

follow

the

youtube

where

therma

says

we

are

setting

ourselves

up

for

failure

here,

we

have

no

control

over

the

environment.

Our

app

will

run

in

the

main

thread

is

completely

unpredictable.

E

E

E

The

video

is

gonna

stutter.

So

on

high-end

devices

you

can

have

you

know,

90

frames

per

second

120

and

even

some

devices

with

144

frames,

whether

we're

less

than

seven

milliseconds

of

a

deadline.

To

finish

what

we're

doing

and

then

so.

The

point

here

is

contention

on

the

main

thread

is

common

and

unpredictable,

and

that

leads

to

intermittent

that

can

lead

to

intermittent

missed

deadlines

and

video

stutter.

E

E

In

contrast,

contention

on

a

dedicated

worker

thread

is

relatively

non-existent

because

it

is

a

controlled,

dedicated

environment

next

slide

now,

so

we

mentioned

that

web

codecs

recently

had

a

decision

to

expose

to

window

and

here's

what

they

wrote.

They

wrote

there

is

consensus

and

we

agree

that

media

processing

in

general

should

happen

in

a

worker

context.

E

E

That

means

it's

non-standard,

because

there's

no

consensus

and

google,

unfortunately

has

shifted

in

m94.

So

there's

a

time

limit

here.

I

think

for

us

to

try

to

standardize

something,

or

this

becomes

the

factor

standard.

Now.

Why

wasn't

it

adopted?

My

my

thoughts

on

this?

Is

that

the

problems

I

had

with

it?

I

should

say.

B

E

Also

ergonomics-wise.

These

apis

are

thread

couple

to

main

thread,

which

is

very

unfriendly

to

workers

which

I'll

show

in

subsequent

slides.

So

we

need

to

standardize

an

api

without

these

problems

and

reclaim

the

url

so-

and

this

also

was

designed

before

media

stream

track

was

transferable,

which

we

just

had

a

call

for

consensus.

That

was

positive,

so

we

can

rethink

the

api

next

slide.

E

E

Does

it

post

message

it

to

the

main

thread

to

ask

it

to

create

a

media

stream

track

processor

and

post

message

to

readable

back,

or

does

it

access

a

readable

on

that

track?

The

former

makes

no

sense.

It

shouldn't

have

to

go

back

to

main

thread

to

get

access,

and

this

also

doesn't

necessarily

get

us

off

main

thread

in

all

cases.

No

process

next

slide.

E

Does

it

have

to

post

message

to

ask

main

thread

to

create

a

generator

and

post

message

that

writeable

and

maybe

a

track

phone

back,

or

does

it

call

some

api

to

get

a

writable

and

a

track

again?

The

former

makes

no

sense

that

workers

should

be

able

to

create

a

track

directly

without

having

to

ask

main

thread

to

do

it,

and

this

points

out

that

mediaseam

track

generator

is

a

weird

object.

E

E

So

these

questions

are

getting

a

little

harder,

but

apologies.

So

this

is

very.

This

is

a

more

difficult

question,

but

very

relevant

one

that

that

euan

already

posed.

How

does

a

worker

send

processed

video

frames

say

over

web

transport

without

and

also

maintaining

a

self

view

that

has

high

resolution,

even

though

the

transport

might

need

to

drop

frame

rate?

So

we

mentioned

t

already

and

all

these

issues.

E

Another

option

would

be

to

clone

the

original

track.

But

then,

if

we're

doing

like

a

video

replacement

like

me

in

the

sky

here,

you

have

to

do

that

processing

twice

and

that's

undesirable,

or

do

you

post

message,

constraints

to

the

main

thread,

create

a

generator

apply

constraints

to

a

clone

from

the

generator

create

a

media

stream

track

processor?

From

that

clone

and

post

message,

the

generator

is

writable

and

the

the

producer

is

readable

back

to

the

worker.

E

E

And

I

also

want

to

talk

about

that

sentence.

I

I

rushed

through

here,

post

messaging,

immediately,

retract

processes,

readable

or

a

gender

is

writable

back

or

transferring

them

at

all

to

a

worker,

and

that

this

actually

violates

the

intent

of

what

wg's

transferable

streams

which

which

have

tunnel

semantics

they're

a

special

kind

of

identity.

Transformer

me,

which

has

a

the

writable

side

in

one

realm

and

the

readable

side

in

the

motherboard

to

implement

cross-thread

transforms.

E

So

an

alternative

proposal

and

here's

a

link

to

issue

1559,

which

also

has

a

spec

document

and

more

details

you

could

look

at.

I

would

encourage

you

to

look

at

after

this

presentation

since

I'll

be

going

through

the

basics

here,

the

goals

are

to

align

the

api

with

transferable

medium

tracks,

for

simpler

api

surface

and

remove

the

aforementioned

blockers

to

standardization,

by

exposing

real-time

media

pipeline

to

workers

and

not

main

thread,

and

also

encourage

user

workers

by

making

it

simple

friendly

to

workers

and

the

default,

and

also

discourage

usage

on

the

main

thread.

E

This

proposal

also

starts

with

video

to

in

order

to

reach

an

agreement

and

because

we're

trying

to

present

an

api

to

the

working

group

that

will

be

adopted,

and

we

do

so

by

removing

exposure

on

the

main

thread,

which

is

what

mozilla

and

safari

want.

We

focus

on

video

for

now,

which

is

safari,

has

been

interesting

and

we're

also

using

we're

still

using

streams,

which

is

what

chrome

mozilla

prefer.

E

That

means,

if

you

see

a

medium

stack

on

main

media

stream,

track

on

main

thread.

You

won't

see

this

attribute,

but

if

you

post

messages

to

a

worker-

and

you

look

at

it

there-

you

will

have

this

readable

attribute,

so

I'm

showing

the

javascript

only

the

worker

side

of

it

here.

You

went

showed

example

slides

earlier,

where

that

includes

the

post

messaging,

so

here

a

worker.

This

is

a

sort

of

read-only

example

where

we're

just

reading

and

we're

sending

it

over

web

transport

where

we

get

a

track

in

the

worker.

E

We

await

we

basically

just

pipe

it's

readable

through

some

kind

of

a

wrapper

for

web

codex,

and

we

also

have

to

transform

it

to

serialize

it,

which

also

means

chunking

it

and

stuff

for

you

know,

and

it

this.

This

might

not

be

how

you

would

send

things

over

web

transport,

but

this

is

a

simple

example

right,

so

it

says

sync

and

that's

it.

The

key

here

is

that

the

attribute

is

only

exposing

workers

and

that

keeps

data

off

the

main

thread

next

slide,

so

a

little

more

complicated.

E

Let's

say

we

want

to

read

and

write.

We

want

to

basically

have

a

self

view

here

or

show

local

view

only

and

direct

your

attention

again

to

the

blue

box

in

order

to

so

the

previous

slide

was

basically

the

stem

track

processor,

and

this

slide

is

made

into

a

generator

where

we

need

to

put

data

back

into

a

track,

put

humpty

dumpty

back

together

again,

so

we

exposed

only

on.

E

So

what

we

want

to

do

here

this

is

an

example

from

web

codex

that

I

modified

it's

basically

a

crop

example

where

you

use

offscreen

canvas

to

crop

some

video,

that's

basically

the

simplest

example

of

modifying

bits

that

actually

works.

So

we,

the

worker,

gets

the

track.

We

create

a

video

source

object

and

we

post

message

the

sources

track

back

to

main

thread

where

it

can

be

assigned

to

a

source

object,

and

I

know

what

we

do

the

same.

E

As

on

the

previous

slide,

we

pipe

the

readable

through

a

transform

stream,

which

is

shown

below

as

a

crop,

and

then

we

pipe

it

to

the

sources

writable,

and

that's

it

that's

it.

This

aligns

better

with

the

media

capture

main

spec,

which

separates

sources

from

its

track,

and

this

makes

it

easy

to

extend

the

source

interface

later.

The

source

critically

stays

in

the

worker,

while

its

track

may

be

transferred

cleanly

to

the

main

thread,

and

this

is

also

extremely

clean

and

simple,

with

how

interacts

with

track

clone

and

even

structure

cloning.

E

E

All

right

process

video

and

apply

constraints,

so

an

issue

we

have

is

to,

if

we're

going

to

do

any

kind

of

processing

like

say

we're,

cropping

a

video

you

might

want

to

have

a

self

view

of

that.

That

has

a

high

frame

rate,

but

you

might

also

want

to

lower

the

frame

rate

depending

on

where

you're

sending

in

so

here's

an

example

that,

where

you're

sending

over

a

peer

connection,

which

is

basically

you

send

it

to

the

worker,

do

the

processing

send

the

track

back

and.

E

Sorry,

this

one:

yes,

so

you

assign

the

so

when

you

get

the

track

back

to

main

thread,

you

assign

it

to

you

admit

you

take

a

clone,

basically

and

assign

it

to

the

source

object

and

then

on

the

track.

You're

sending

you

can

now

apply

constraints

and

you

can

have

a

lower

left

resolution

and

lower

frame

rate

on

what

you're

sending

so

you

can

send,

for

instance,

30

frames

per

second,

while

the

self-view

doesn't

start

to

stutter,

but

it's

still

a

good

60

frames

per

second.

If

that's

what

the

camera

source

was.

E

Now

that

works

great

for

peer

connection,

but

what,

if

you're

sending

over

web

transport?

Well

with

web

transport?

We

really

wanted

to

encourage

you

to

use

open

the

web

transport

in

the

worker.

So

this

is

the

same

example

basically,

and

it

shows

how

you

can

how

media

stream

tracks

now

are

useful.

Also

in

the

worker.

Again,

we

receive

a

track.

We

get

a

source

from

it.

E

We

clone

that

source

and

we

send

the

original

source

back

to

main

thread

for

self

view

at

60

frames

per

second

and

then

now

we

have

the

clone,

which

is

called

send

track.

In

this

example,

we

can

apply

constraints

to

it.

Do

anything

we

want,

just

as

if

we

were

on

main

thread

and

now

we

can

open

a

web

transport

connection

and

we

can

read

from

that

send

track.

E

So

what

we're

actually

reading

from

we're

doing

two

things

here:

we're

reading

from

the

track

readable,

sending

it

through

the

cropping

and

piping

it

to

the

source

writable

and,

at

the

same

time,

we're

reading

from

the

send

track

readable,

which

is

the

processed

downscaled

data

and

sending

it

out

to

web

transport

I'm

using

pipe

through

here,

which

is

a

bit

clever

thing

an

earlier

example.

I

had

a

promise

all,

and

these

are

really

two

operations

that

are

separate,

but

it

shows

you

that

you

can

natively

downscale

and

that

medicine

tracks

apply

constraint.

E

E

E

E

E

Well,

you

can

actually

do

that

because

every

readable-

and

this

applies

to

any

streamspace

proposal-

you

could

use

408

on

that

readable

and

you're,

basically

being

called

back

you're

getting

your

async

function

is

going

to

be

called

then,

and

that

means

that

you

can

basically

operate.

This

is

basically

a

promise.

Callback

is

what

I'm

trying

to

say

and

here's.

What

un

wanted

to

show

you

think

is

that

you

can

now

do

operations

like

encode

and

you

can

guarantee

that

frame

close

will

be

called.

E

And

appendix

b,

the

next

line

and

there's

also,

I

added

the

slide

only

if

people

want

to

have

more

than

one

readable

from

a

track

but

enter

so

far.

It

seems

like

people

are

more

than

happy

to

suggest

cloning.

The

track

for

these

things,

which

came

up

to

avoid

t,

for

example.

So

I

don't

think

this

is

that

critical,

but

I

want

to

pull

it

up,

throw

it

out

there

and

I

think,

that's

it.

I'm

going

to

skip

the

last

slide

and

we

can

move

to

discussion.

B

A

A

The

the

examples

where

you

have

posting

a

message

to

the

main

thread

to

to

do

get

a

message,

stream

check,

generator

and

and

get

that

to

get

generate

the

stream

and

post

it

back.

That's

just

wrong,

I

mean

obviously

mstg

and

nstp

were

designed

to

be

available

in

the

same

context

as

the

trackish

content.

It

is

available

in

so

as

soon

as

so

as

soon

as

track

is

available

on

workers,

msdp

and

msctg

obviously

need

to

be

there

too.

A

E

E

Which

raised

the

question

of,

but

so

I

would

still

say,

though,

that

the

model

that

people

seem

to

so

it's

your

suggestion

that

people

should

so.

There

are

two

ways

to

do

things

now

right,

so

you

can

now

either

create

you

have

your

track

on

main

and

you

create

a

media

stream

track

processor

there

and

you

transfer

it's

readable

to

the

worker

or

you

transfer

the

mediasting

track

to

the

worker

and

you

create

a

major

stream

track.

Processor.

There.

A

A

A

D

D

I

think

that

in

general,

in

media

capture

main,

we

have

the

concept

of

a

source

and

we

have

a

concept

of

a

track

and

we

have

we're

defining

the

relationship

between

the

source

and

the

track

and

the

fact

that

we

are

introducing

introducing

a

video

track

source.

A

javascript

object

that

represents

a

track

source

is

a

good

thing

and

it's

very

similar

to

readable

stream.

D

You

can

have

a

native,

readable

stream,

but

you

can

also

have

a

readable

stream

with

the

javascript

source

and

the

javascript

source

is

also

an

object

that

abides

to

specific

things,

and

so

I

think

we

should

go

there.

It

will

be

easier

to

extend

things

if

we

think

if

we

think

so,

we

remove

some

edge

cases,

so

it

will

be

much

cleaner.

D

So

that's

why

I

I

would

tend

to

go

with

this

way.

As

I

said,

there's

no

surprise

for

me

to

prefer

not

relying

on

transferable

streams

there.

I

think

that

we

should

rely

on

media

stream

track

transfer,

which

will

be

more

reliable,

which

will

be

a

typed

way

of

transferring,

and

since

it's

typed

they

are

like

requirements

that

that

will

be

fulfilled

while

with

streams

you

don't

know

whether

these

same

requirements

will

be

fulfilled

because

things

are

generic

objects.

So.

E

D

D

I

actually

want

30

frames

per

second

to

the

video

track

source

and

we

have

no

guarantee

there

there's

nothing

there

and

it's

due

because

there's

no

back

pressure

on

the

writable

stream

and

if

we

start

to

introduce

back

pressure

on

the

writable

stream,

which

we

might

want

to

do,

it

would

be

a

good

exercise

to

do

then

we're

in

a

good

situation,

because

promise-based

callbacks

have

that

and

we

will

not

be

able

to

have

that

with.

We

may

just

interact

as

video

track

sources,

so

we

should

be

able

to

so.

D

B

B

E

E

Dropper,

it's

the

four

lines

from

the

bottom

or

five

lines

from

the

bottom.

You

have

to

have

a

transform

string.

Basically

that

drops

frames,

so

that

would

be

a

way

that

of

solving

the

t

problem.

But

this

is

why

we're

here.

So

yes,

so

it's

true

that

using

a

track

to

and

apply

constraints

is

a

workaround

for

this.

Basically,

if

we

cannot

solve

the

d

problem.

B

Yeah

yeah

a

bunch

looking

through

these

and

that

these

didn't

make

sense

to

me

right

because,

basically

you

would,

I

think

you

wouldn't

post

message

to

the

main

thread,

because

you

basically

create

the

track

processor

on

the

main

thread

and

and

transfer

the

stream

or

and

what

you're

saying

you

would

just

transfer

the

track.

So

I

don't

these

slides,

don't

matter

yeah,

I

don't

think

they're

distinctions

between

the

two

apis

right.

E

B

B

E

So

harold

is

right

there,

a

lot

of

similarities

between

the

apis,

but

the

difference

is

that

mediastimate

processor

creates

a

new

class

on

the

producer

side

and

it

where,

as

mike

on

yuan's

proposal,

does

not

create

a

new

object.

In

that

case,

we

just

add

it

readable

to

the

track,

which

we

think

is

sufficient

and

simpler,

because

a

readable

already

has

locking

semantics

and

once

it's

locked,

you

know

that

it's

being

consumed,

we

don't

need

a

new

object

there.

B

E

Anyway,

so

yes,

so

so-

and

the

other

thing

I

want

to

mention

is

that

media

stream

track

generator.

It's

a

bit

of

an

odd

duck

in

that

it's

it's

just.

It's

also

a

track

and

that's

where

you

and

I

feel

like

that's

where

we

should

have

the

separate

api,

not

on

the

pro

producer

side

and

that's

a

cleaner

model

that

fits

neatly

with

the

sources

and

syncs

model

in

media

capture

as

far

as

main

thread

areas

where