From YouTube: SES-mtg: minimally invasive module rewrite

Description

No description was provided for this meeting.

A

Okay, so I'm going to start from the top, for the sake of the recording and for anybody who joined after I started explaining this. I'm starting over in particular because we have some new people here, notably John-David Dalton, who created esm, the canonical module-to-executable-script rewriter for Node. So I'm going to start over from scratch, explaining first what the goals are and then what the code is that we're seeing on the screen.

A

So at Agoric, together with Salesforce, we created a Realms shim, and then we created an SES shim on top of the Realms shim. The Realms shim and the SES shim are things we can security-review and gain confidence in, largely by virtue of their being very small.

A

That starts with the Program production versus the Module production. There are two start productions in the ECMAScript grammar. There's Program, which is what scripts consist of and which determines the nature of the strings you can feed to the eval function, and there's Module. The syntactic difference is that modules can have top-level import and export statements and scripts cannot. (Sorry, I'll refer to programs just as scripts, which is what everybody does.)

A

Both modules and scripts can now also contain dynamic import expressions, but let's postpone worrying about those. If we can deal with import statements, it will be much clearer what we need to do for import expressions. Since modules are not evaluable by eval, there's no way for us to safely run module code without parsing and rewriting it.
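A quick way to see this constraint, sketched here assuming a Node-like host: indirect `eval` parses its argument as a script, so a top-level `import` or `export` statement is a `SyntaxError` before anything runs. The helper name `parsesAsScript` is ours, for illustration only.

```javascript
// Returns true if `source` is accepted by eval (script goal), false if it is
// rejected with a SyntaxError. Note: accepted sources are also executed.
function parsesAsScript(source) {
  try {
    (0, eval)(source); // indirect eval: global scope, script goal
    return true;
  } catch (err) {
    if (err instanceof SyntaxError) return false;
    throw err;
  }
}

console.log(parsesAsScript('const x = 1; x + 1;')); // true
console.log(parsesAsScript('import { x } from "./dep.js";')); // false
console.log(parsesAsScript('export const x = 1;')); // false
```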

A

We therefore want to maintain the layering: the result of the parsing and rewriting is fed into the existing mechanism as an evaluable script, so that even if our parsing and rewriting is wrong, it's still the case that every evaluable script is only given the authority it needs.

Hold on, Jeff can't get in; it's demanding authorization or something. What does he need to do? Saleh, you're the Zoom expert.

A

So what we want is that, when modules are rewritten to evaluable scripts, a mistake in the rewrite can't cause a module to have more authority than it should have. However, there's the other security problem: if the rewrite changes the semantics of the module, then the modules you write might not execute with the meaning you think they have. That applies here at Agoric and with all of the packages, including esm.

A

The rewrite never changes anything inside a function's source text. Function source texts can always be preserved exactly as they were originally, so there are no changes there, and the reason I can get away with that is that import and export statements never occur within function bodies. The place where we will no longer be able to get away with it is dynamic import expressions, but right now we're rejecting those.

A

So when we allow them through the rewrite, we will at least not be breaking any code that we're currently accepting. Still, module code with dynamic import expressions is a problem: they can happen inside a function, and I have no idea what we can do about that other than change the source code of the function.

Here's what SES does with them. The only way to do it safely without parsing, without lexing, and without tokenizing is to adopt a conservative rule. We want to be sure to reject all valid import expressions, but without accurately tokenizing the source you also have to reject some programs you should have accepted. SES uses a regex, and the regex will find what look like import expressions even when they occur inside literal strings or comments, and will reject those programs as well.
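A minimal sketch of such a conservative rule is below. The pattern is an illustrative assumption, not the regex SES actually ships: it flags the word `import` followed by `(` or by the start of a comment (which could hide the `(`), skips property accesses like `obj.import(...)`, and makes no attempt to tell whether the match sits inside a string or a comment.

```javascript
// Illustrative conservative pattern (an assumption, not SES's exact regex):
// "import" as a whole word, not preceded by "." (so obj.import(...) is fine),
// followed by optional whitespace and then "(" or a comment opener.
const IMPORT_EXPR_PATTERN = /(^|[^.])\bimport\s*(?:\(|\/[/*])/;

function rejectImportExpressions(source) {
  const match = IMPORT_EXPR_PATTERN.exec(source);
  if (match !== null) {
    throw new SyntaxError(`possible import expression at index ${match.index}`);
  }
  return source;
}

function isAccepted(source) {
  try {
    rejectImportExpressions(source);
    return true;
  } catch (err) {
    if (err instanceof SyntaxError) return false;
    throw err;
  }
}

console.log(isAccepted('const important = 1;')); // true
console.log(isAccepted('obj.import(1);')); // true
console.log(isAccepted('import("./mod.js");')); // false
console.log(isAccepted('import /* sneaky */ ("./mod.js");')); // false
console.log(isAccepted('const s = "call import(x) later";')); // false (a false positive)
```

The last case is the kind of false rejection described above: the program is valid and harmless, but recognizing that would require tokenizing.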

A

I didn't know that it would reject all of those. It makes sense, because it's a regex; that's pretty cool. So yes, given that it's conservative in that way, this is exactly right, I guess. The difference is that what's accepted here is essentially all valid program source text other than things that seem to contain an import expression.

A

And also, `import.meta` can only appear in modules, not in scripts. But again, it can appear inside functions, so it has the same problem for us. In any case, for just import and export statements, which are the thing that's pervasive right now, I wanted a rewrite that didn't change function source text at all and was minimally invasive overall for the module source text: essentially, it just rewrites the import and export statements. Saleh came up with a very nice rewrite that met all of the constraints I've just stated.

A

The rewriting that we've been discussing in these meetings, and that Saleh and I wrote, uses the with-on-a-proxy trick for creating the scopes that hold the rewritten import and export variables. You have to do a `with` on a proxy per module, because each module has its own unique namespace of imported variable names.
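For readers unfamiliar with the trick, here is a minimal sketch of the general technique, not the rewriter's actual code. It assumes sloppy-mode evaluation via `new Function`, because `with` is a syntax error in strict code, and the helper name `makeModuleScopeProxy` is hypothetical. The proxy's `has` trap claims only this module's names, so every other identifier falls through to the enclosing scopes, and the `set` trap lets the module layer observe assignments to a live binding.

```javascript
// Hypothetical helper: a per-module scope object for use with `with`.
function makeModuleScopeProxy(bindings, onAssign) {
  return new Proxy(Object.create(null), {
    // Only this module's names are "in" the scope; every other identifier
    // (Math, console, ...) falls through to the enclosing scopes.
    has(_target, name) {
      return typeof name === 'string' && Object.prototype.hasOwnProperty.call(bindings, name);
    },
    // `with` also reads Symbol.unscopables; returning undefined means "none".
    get(_target, name) {
      return typeof name === 'string' ? bindings[name] : undefined;
    },
    // Assignments to module-level names land here, e.g. to update live bindings.
    set(_target, name, value) {
      bindings[name] = value;
      onAssign(name, value);
      return true;
    },
  });
}

// `with` is a syntax error in strict code, so the evaluator is built with
// new Function, whose body is sloppy unless it says otherwise.
const evaluateInScope = new Function(
  'scope',
  'source',
  'with (scope) { return eval(source); }',
);

const assignments = [];
const scope = makeModuleScopeProxy({ x: 88 }, (name, value) => {
  assignments.push([name, value]);
});

const result = evaluateInScope(scope, 'x = 99; x + Math.max(1, 2)');
console.log(result, assignments); // 101, and assignments is [['x', 99]]
```

Because `has` only answers for one module's names, each module needs its own proxy, with its own `bindings` and `onAssign`, which is the per-module cost described above.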

A

However, by far the typical case is that an exporting module does not reassign an exported variable. In those cases, it's as if the exported variable had been declared const. Actually declaring it const is not as common, but it's still reliably, lexically analyzable that the module doesn't assign to it. Oh, I'm sorry, I think there's one thing I didn't make clear.

A

It's the fact that the rewritten string is itself an evaluable script that allows us to partition the risk: the module support is a layer on top of the evaluable-string support, and the evaluable-string layer's security does not depend on the module layer being correct.

Okay, so, all of that said: the importing module, if you're not doing an intermodule analysis, cannot tell whether an imported variable is a live binding or not. It cannot tell whether the exporting module reassigns it.

A

So you want the rewritten import to be efficient whether or not the exporter reassigns the variable on the exporting side. In the typical case, where it doesn't, you really want it to be a plain lexical variable on the exporting side. The remaining case is that the rewriter observes that the exporting side is exporting a variable that it does reassign. In that case, the rewrite here uses the variable but does not declare it, and the metadata is fed into the proxy. So for these modules, the with-on-a-proxy trick is essential.

A

There's a reserved-identifier constraint at the module level: the module rewrite reserves all identifiers beginning with `$h_` for itself. So the parser needs an additional rule: if such an identifier appears in the source text fed into the rewriter, that's additional grounds for rejection. Fancier things can be done, but I think practically we're just going to stay with that simple rule until and unless it gets us into trouble.
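In the same conservative spirit, the rejection rule can be sketched without tokenizing at all: reject the source if the reserved prefix appears anywhere, even inside a string or comment. The helper name is hypothetical, and this sketch only checks the literal spelling `$h_`; a real implementation would also have to consider identifiers written with Unicode escapes, such as `\u0024h_`, which denote the same name.

```javascript
// Hypothetical conservative check for the rewriter's reserved prefix.
const RESERVED_PREFIX = '$h_';

function checkReservedIdentifiers(source) {
  const index = source.indexOf(RESERVED_PREFIX);
  if (index !== -1) {
    throw new SyntaxError(`reserved prefix ${RESERVED_PREFIX} found at index ${index}`);
  }
  return source;
}

checkReservedIdentifiers('export const total = 1;'); // accepted
try {
  checkReservedIdentifiers('let $h_exports = {};');
} catch (err) {
  console.log(err.message); // reserved prefix $h_ found at index 4
}
```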

A

Good, I like that. I hereby say that I plan to do that, unless there's a problem. Michael FIG is not on this call, so I'll definitely coordinate with him, because I want to use the same reserved identifiers in what I'm doing as he uses in Jessica, his compiler for Jessie.

A

So you look up the name of the module in there, and then you look up the name of the exported variable, and what you're getting there is a binding to the observe method that we see down below. The `.x` there is giving us the observe function of an instance of the RW export that's being created here. What you're passing into that observe function is an arrow function that updates the lexical variable, in this case named `y`, because that's what the import statement says to rename it to. The arrow function simply takes whatever it's invoked with and updates that lexical variable.
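The shape being described can be sketched as follows. Everything here is a hypothetical reconstruction from the description, not the code on screen: `makeRWExport` stands in for the RW export, `$h_imports` for the import table keyed by module name and then export name, and the import being rewritten is `import { x as y } from 'exporter';`.

```javascript
// Hypothetical stand-in for the "RW export": it holds the current value and
// notifies every registered observer whenever the exporter reassigns it.
function makeRWExport(initialValue) {
  let value = initialValue;
  const observers = [];
  return {
    get: () => value,
    set: newValue => {
      value = newValue;
      for (const observer of observers) observer(newValue);
    },
    observe: observer => {
      observers.push(observer);
      observer(value); // deliver the current value right away
    },
  };
}

// Exporting side of `export let x = 88;` (x is reassigned later).
const xExport = makeRWExport(88);

// Hypothetical import table: module name -> export name -> observe function.
const $h_imports = new Map([['exporter', { x: xExport.observe }]]);

// Importing side, rewritten from `import { x as y } from 'exporter';`
let y;
$h_imports.get('exporter').x(newValue => {
  y = newValue;
});

console.log(y); // 88
xExport.set(99); // what the exporter's `x = 99;` turns into
console.log(y); // 99
```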

A

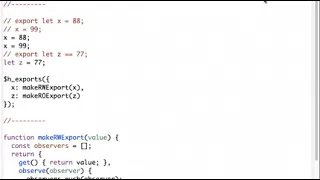

So, you know, ask questions as you need to, so you can follow what I'm doing. Sorry. The case over here: we've got an original source text, which is `export let x = 88; x = 99;`, so `x` is an exported variable that is reassigned. Then down below we also have `export let z = 77;`, which is an exported `let` variable where it's statically visible that it's not reassigned.

A

We would rewrite the variable that's not reassigned in the obvious way, by just removing the `export`. Then there's another endowment called `$h_exports`, where we create a description of every lexical variable that we're exporting. I'm just covering the cases that I'm showing, but obviously all these data structures have to cover all of the cases possible with ECMAScript modules. In any case, for the RW export, the get and set here would also be the getter and setter for the property on the module's exports object.

A

That way, the module exports object itself also has its semantics preserved. Then over here we see make-read-only-export for the `z` case. It's an object with the same getter, which just returns the value. The observe function just invokes the observer with the value, but it doesn't store the observer, because it knows that the value will never be updated. So even the state-tracking object itself is very cheap in the case of a variable that's not reassigned, and we still get to use the two kinds of export object together. If we want, for the exports object in this case, I suppose there's enough metadata on the exporting side that we can just create a non-writable, non-configurable data property with the value of `z` on the module object.
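A hypothetical sketch of the read-only case and of the exports object follows; the names are assumptions, not the code on screen. The read-only export never stores observers because its value cannot change, and the exports object gets a live accessor for the reassigned `x` but a plain non-writable, non-configurable data property for `z`. This sketch exposes only a getter for `x`, a simplification of the get/set pair mentioned above.

```javascript
// Read-only export: the value never changes, so an observer is invoked once
// with the value and is not retained.
function makeReadOnlyExport(value) {
  return {
    get: () => value,
    observe: observer => observer(value),
  };
}

// Hypothetical rewritten exporting module for:
//   export let x = 88; x = 99;   (reassigned, so it stays live)
//   export let z = 77;           (never reassigned)
let x = 88;
const zExport = makeReadOnlyExport(77);

const moduleExports = Object.create(null);
// x: accessor, so importers always see the current value.
Object.defineProperty(moduleExports, 'x', {
  get: () => x,
  enumerable: true,
  configurable: false,
});
// z: enough is known statically to use a frozen data property.
Object.defineProperty(moduleExports, 'z', {
  value: zExport.get(),
  writable: false,
  enumerable: true,
  configurable: false,
});
Object.preventExtensions(moduleExports);

x = 99; // the module body's reassignment
let observed;
zExport.observe(value => {
  observed = value;
});
console.log(moduleExports.x, moduleExports.z, observed); // 99 77 77
```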

B

The piece of this story that I... I won't say I don't follow it; I follow it, but I kind of lost the thread of something from a long way back. We started out by saying the most important thing is that we don't want to have a parser and rewriter as part of our TCB, and then you added a parser to do the rewrite. So explain that, right?

A

But that's a crucial thing to get clear on, for all of us to understand: it's very much the hard constraint that's shaping everything else. Here, we want to build the overall SES system in two layers. When I say two layers, I'm not distinguishing between the Realms layer and the SES layer. Those are actually already two layers, but let's just collapse them into one.

A

So the hard guarantee here is this. Consider the SES evaluable scripts, the declarative, manifest-driven mechanisms, and all of the scaffolding around evaluating the scripts that represent modules. Whatever limitations on authority those non-module mechanisms express, which are expressed purely at the lower layer, a buggy parser at the heart of the higher layer cannot cause those authority limitations to be exceeded.

B

Passed into the... excuse me, being passed into the evaluable string that SES evaluates? So I'm making a much stronger statement than that. Well, that's good to say. I'm not a hundred percent sure I buy that, because the question is: what if you have multiple modules that are going through this?

A

Under some conditions, yes, and those conditions will occur frequently enough to be important. So first of all, let me just make an observation that I'm then going to throw away: the kinds of bugs I expect a buggy parser to introduce shouldn't be introducing much danger along these lines. But that's based purely on intuition, and it might be a buggy intuition. Okay, so.

B

They were at least trying, or at least... right. And so we are not worrying about the case where the parser is explicitly malicious, because if we were concerned about that, then we'd be just as concerned about the implementer of SES being explicitly malicious. So.

A

So, yes, that was the observation I wanted to make and then throw away. Okay. I think all of that's true and it's important, but it's hard to make a case that it's true, so we should remain skeptical and worried. But let's start by analyzing the worst case, right.

A

It doesn't smell that bad. It smells, but not that bad. Okay, so let's go ahead and examine... actually, before I examine the worst case, I want to talk about another case that works out well, and that I think will be realistic in many circumstances: the parser runs server-side, so that what arrives in the client is only evaluable strings.

A

You can't cause something that was transformed through a correct parser to misbehave. You can only cause code to misbehave if you were able to decide which parser it goes through. Okay, so those are both cases where the worries are real, but they're contained. Now let's take the hard case, which is that everything is going through an SES-side parser, but the parser itself is malicious. The parser itself is, let's say, run as a transformer under SES.

A

The parser itself is malicious, but it has no ability to cause any effects on its own. All it can do is take in strings and output strings, or potentially not terminate, so all of its attacks can only be by outputting malicious strings. We don't give it any ability to attack by any other means, right.

A

So in that case, yes: at the lower layer, we write through the manifest configuration language combined with evaluable scripts. We write a setup script. Actually, let's just take evaluable scripts, because everything we can say through any kind of declarative manifest language at the lower layer, where there are no loaders and no modules, we can just say directly as an evaluable script.

A

Any references will get wrapped and unwrapped going through the membrane, so both of these subgraphs can affect the outside world and both of these subgraphs can communicate with each other. Then, let's say, the setup script now revokes the membrane. I will make the claim that, even with this malicious parser transforming all of the module code that has been loaded into each of these compartments, this reduces to the already-solved problem of just loading malicious code into the two compartments and controlling what they have access to and how they can interact.

B

Possibly not. But what if they had the ability to use the authorities they had been legitimately granted, prior to the revocation of the membrane, to establish a membrane-bypassing covert channel of some kind through the external world? Well, they also could have done that just by dint of being malicious to begin with. But this feels different.

A

Okay, so it is different, which is... take the creator of an application. For example, let's go back to the event-stream incident. The creator of the cryptocurrency wallet wants to be able to make use of modules whose source code the wallet's author can read, and reason about reliably and statically to the extent that one can reason reliably and statically about JavaScript, which is actually quite high. The degree to which you can do safe static reasoning about JavaScript, in particular in the SES context, is very much underestimated.

A

They have made decisions to trust something which is not trustworthy. Yes, they have: they have become vulnerable under the wrong assumption that the module means what its source code says it means. So I think that covers the qualifications well, and I think that, with all of those qualifications stated, we're still doing a wonderful thing by partitioning the risk into layers in this way.

A

The answer is... well, I'll make the case that, for current platforms, until something like the Realms shim gets adopted into the platform, we're stuck with current platforms and have to do all of this by shimming. In order to collapse the layers, you would still need the source code for a parser and rewriter.

B

Nevertheless, up until this point we had a place to stand where we could make an argument about a sort of perfectibility at the semantic level, even if the implementation might fall short of that. Now it feels like this mechanism (not the partitioning into two layers, which I think is a good and worthy thing, but just the introduction of a rewriter) undermines this story about something which is perfectible, right.

A

Regexes are fast, but yes, it does introduce a linear performance cost, because it has to scan the entire string. And depending on the complexity analysis of regexes, which I'm completely ignorant of, it's conceivable that the particular regex, as written, happens to have greater-than-linear overhead. But the problem it's solving shouldn't. There should be a regex that somebody who knows what they're doing could write that has only linear overhead and is very fast. Okay.

A

I'm not expecting there to be a problem with the regex scanning. However, there is an interesting thing about the conservativeness of the rule. You would think that now that we've got a full parser at the higher level, we no longer need to be conservative at the lower level. But of course, at that point we would be breaking our partitioning of risk. We can, however, regain accuracy in a bizarre way, at the sacrifice of some of our other goals. I'm just going to mention this for completeness; I'm not recommending that we do it. The parser and rewriter could recognize, in the source, any strings that this conservative regex would falsely reject and, if there are any, rewrite them into something that is semantically equivalent but wouldn't get rejected.
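As an illustration of that (explicitly not recommended) idea, a rewriter that already knows a string literal's value could re-emit it with the sensitive characters escaped, so the literal evaluates to the same string but no longer contains the text the conservative scans look for. This is a hypothetical sketch: `toRegexSafeLiteral` is an invented name, the import pattern is an assumed illustrative one rather than SES's, and the HTML-comment tokens `<!--` and `-->` are included because conservative scanners may reject those too.

```javascript
// Hypothetical illustration: re-emit a string value as a JS string literal
// that evaluates to the same string but avoids text that conservative
// scanners reject (`import(`-like sequences and HTML-like comment tokens).
function toRegexSafeLiteral(value) {
  return JSON.stringify(value)
    .replace(/import/g, '\\u0069mport') // \u0069 is "i" once evaluated
    .replace(/<!--/g, '\\u003C!--') // \u003C is "<"
    .replace(/-->/g, '--\\u003E'); // \u003E is ">"
}

// Illustrative conservative pattern (an assumption, not SES's exact regex).
const IMPORT_EXPR_PATTERN = /(^|[^.])\bimport\s*(?:\(|\/[/*])/;

const original = 'call import(x) later <!-- not a comment -->';
const literal = toRegexSafeLiteral(original);

console.log(IMPORT_EXPR_PATTERN.test(`const s = ${JSON.stringify(original)};`)); // true
console.log(IMPORT_EXPR_PATTERN.test(`const s = ${literal};`)); // false
console.log(new Function(`return ${literal};`)() === original); // true
```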

A

A JavaScript engine is allowed, when processing script source code but not when processing module source code, to recognize `<!--` and `-->` and then, and this is so incredibly bizarre, treat them as line comments: everything is commented out, starting from either the HTML open or the HTML close, to the end of that line, which has nothing to do with the HTML comment rule. I have no idea what the story is; I couldn't explain it.

E

Okay, so a very, very, very long time ago, back when IE6 was just gaining in popularity, browsers had some interesting things going on with what was placed inside of script tags, everything from CDATA to other things. But the key point here is that the earlier HTML parsers would actually place the text contained within script tags into the render pipeline, so people started putting HTML comments around the code inside the script tag.

A

You know: less-than followed by the unary not of a pre-decrement of something, or a post-decrement followed by greater-than. Both are perfectly valid. So the result is that you can write a single JavaScript source text that parses and executes one way when parsed with this rule, and parses and executes another way on a platform without this rule, and both of them succeed. There are no errors in either case; they just mean two different things. That means any analysis you did under one assumption can be violated by someone who writes the code so that it has another meaning under the other assumption, if they can ever cause the result to be executed under the other assumption. So what I've done in my branch is, in the same way that I reject anything that looks like an import expression, to reject anything that looks like an HTML-like comment.
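The divergence can be demonstrated in an engine that implements Annex B, which this sketch assumes (V8, and so Node, does). The first function body is parsed with script rules, so `<!--` starts a line comment; the second function spells out by hand what the same characters mean under module rules, where `<!--` is just `<`, `!`, `--`.

```javascript
// Script interpretation: `<!--` begins an HTML-like line comment (Annex B),
// so the second line is just `var r = 3`.
const scriptMeaning = new Function(
  'var y = 2;\nvar r = 3 <!-- y\nreturn [r, y];',
);

// Module interpretation of the same characters, written out explicitly:
// 3 < !(--y), so y becomes 1, !1 is false, and 3 < false is false.
function moduleMeaning() {
  let y = 2;
  const r = 3 < !--y;
  return [r, y];
}

console.log(scriptMeaning()); // logs [3, 2]
console.log(moduleMeaning()); // logs [false, 1]
```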

A

Yep. And in particular, for rewriting modules to evaluable scripts: the character sequence might appear inside module source text meaning one thing and then, if preserved when rewriting the module into an evaluable string, mean something else. That literal sequence of characters would be preserved, and remember, very much part of the goal of the rewrite I'm showing is to preserve exactly the source text of functions.

A

It could, only in the sense that the bizarre parsing rule for scripts is in Annex B. So on V8, and therefore in the browser, in Chrome, in Node, and in all the others, it will do it. But I don't know that we have this problem on XS, because XS might very well have chosen not to implement this Annex B bizarreness.

A

One more thing about this form of rewrite. One of the things we're used to from all of the packagers is that a set of modules can be packaged together into one evaluable string by using lexical scoping, or IIFEs (immediately invoked function expressions), and then doing a bunch of renaming to hook up the scopes and all that. Looking at this rewrite, it's tempting to think that we could take each module individually, wrap it in some kind of function, and still have the entire set of modules compiled together go into one evaluable string. I think that is not the case, except in a very trivial sense. I'll come back to that.

I think that's not the case here because we have to do the with-on-a-proxy trick per module: they each need their own lexical scope, their own import and export variables and, in particular, their own assignment trap handlers for the live bindings. So the trivial sense in which we can still package them is this: we take each of the rewritten modules and encase it in a literal string, and then the packaged-together form is just the source code of a map from module names to literal strings, which are the source texts of the modules. But we still keep each module's source text as a separately evaluated string. We don't try to create one evaluation context across a set of packaged-together modules.
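The trivial packaging described here can be sketched as follows. The module names and "rewritten" sources are placeholders; the point is only that the bundle is the source text of a map from names to string literals, and that each string is still evaluated on its own. Indirect `eval` is used purely as a stand-in for a per-module confined evaluator; it provides no confinement.

```javascript
// Placeholder rewritten module sources (assumed, for illustration only).
const rewrittenModules = {
  'lib/math.js': 'const twice = n => n * 2; twice(21);',
  'main.js': 'const greeting = "hello"; greeting.toUpperCase();',
};

// Package: the source text of an object literal mapping names to literals.
function packageModules(modules) {
  const entries = Object.entries(modules).map(
    ([name, source]) => `  ${JSON.stringify(name)}: ${JSON.stringify(source)}`,
  );
  return `({\n${entries.join(',\n')}\n})`;
}

const bundleSource = packageModules(rewrittenModules);

// Unpacking the bundle yields the original strings unchanged...
const unpacked = (0, eval)(bundleSource);

// ...and each one is still evaluated separately. Indirect eval is only a
// stand-in here; it is NOT a confined evaluator.
const results = Object.fromEntries(
  Object.entries(unpacked).map(([name, source]) => [name, (0, eval)(source)]),
);
console.log(results); // { 'lib/math.js': 42, 'main.js': 'HELLO' }
```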

C

Yes, of course. We're currently working on the strategy around this. One of the things we're trying to come up with is a format for URL-addressable modules. So in the browser you could have a module specifying an import, and that import will be dynamically resolved all the way back to the server, or to the caching layers of the browser, including the bytecode cache.

C

So we're looking at options for that. Per-module approaches in that case are very interesting, because if we properly sanitize the importing environment, we can benefit not only from the file cache but also from the bytecode cache, as long as we don't have an evaluator in between the importing URL and the execution context. So there's a lot of work we're doing on that; we're embarking on it right now.

D

What else do I do? I handle eval, so I handled the eval case: I send the string into a compiler to detect dynamic import through eval, because user code has done that for feature detection of modules. I do a few other things. I also have to detect variables that are not available in the module format.

D

So I have to do things like look for `__dirname`, `__filename`, `arguments`, and some other things, to ensure that they aren't being accessed or, if they are, that they throw the appropriate errors, unless they've been assigned globally or locally. So I do a little bit more of that. A lot more of the Node-centric stuff is really where esm does a little bit more work.

D

Yeah, so that is super similar. I've even thought about the optimization you have here, where you have a read-write versus read-only export, and I think at some point I did that. I have some form of that optimization in place still, or I need to revisit it again. But yeah.

D

All of that looks really similar. One other thing I did is that when I did these transforms, I did them in line, so I didn't have to deal with shifting source positions. That helps for source maps, because I also dynamically generate source maps for things like the Chrome inspector, so whenever you launch that, it still shows the ESM code there and not the instrumented code.

A

Okay, great. I'm glad you raised that, because I hadn't really thought about it. This rewriter, of course, like any rewriter, really has to generate source maps in order to be usable. I suppose another thing about the source-map mechanism, as opposed to similar things in ELF: the source-map mechanism does not include variable renaming; it's just a textual position remapping.

D

I also jam a lot of my transforms onto line zero or line one, depending on whether you're zero-indexed or not, so that's usually my worst line. The other thing I end up having to do is that whenever you throw an error, you want a descriptive error message. In Node there is a line decorator, which shows you the line of code with a caret at the position where the error occurred, so I have some workarounds for that as well.
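The line-preserving idea can be illustrated with a small sketch. It assumes a hypothetical `prependOnFirstLine` helper and a prelude that contains no line terminators, so everything the rewriter adds lands on the first line and every later line keeps its original line number; only columns on the first line shift.

```javascript
// Hypothetical helper: put all injected code on the first line so every
// later line keeps its original line number (only line-1 columns shift).
function prependOnFirstLine(source, prelude) {
  if (/[\r\n\u2028\u2029]/.test(prelude)) {
    throw new Error('prelude must not contain line terminators');
  }
  return prelude + source;
}

// 1-based line number on which `needle` first appears, or -1.
function lineOf(text, needle) {
  const index = text.indexOf(needle);
  return index === -1 ? -1 : text.slice(0, index).split('\n').length;
}

const original = 'const a = 1;\nconsole.log(a);\nthrow new Error("boom");';
const rewritten = prependOnFirstLine(original, '/* injected prelude */ ');

console.log(lineOf(original, 'throw'), lineOf(rewritten, 'throw')); // 3 3
```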

D

The same ideas are there. I can talk about how I decorate or clear a stack, and how I handle parse errors versus other errors. I also track a lot of metadata and save it, so I know how to avoid parsing every time, and I store a lot of metadata around; for example, for a file I have a transforms bitwise flag.

D

That lets me know how many transforms I have and what types of transforms I have, and I save that metadata, along with the V8 code-cache compilation, in a big blob, which helps for re-evaluation of functions and such.

So I'm kind of going down the rabbit hole in terms of optimization, caching, metadata, error decorating, and things like that. I've even got a way to avoid the toString issue, which exposes some of the transforms. Right now I'm selectively fixing it for things like Puppeteer, which calls toString on functions.
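A transforms bit flag of the kind described might look like the sketch below. The flag names and the metadata record are hypothetical, not esm's actual format; the point is that one integer records which kinds of transform were applied to a file and, by counting set bits, how many.

```javascript
// Hypothetical transform kinds, one bit each.
const Transform = Object.freeze({
  IMPORT: 1 << 0,
  EXPORT: 1 << 1,
  DYNAMIC_IMPORT: 1 << 2,
  EVAL: 1 << 3,
  CJS_VARIABLES: 1 << 4, // e.g. __dirname / __filename guards
});

function hasTransform(flags, kind) {
  return (flags & kind) !== 0;
}

// Count the set bits, i.e. how many distinct transforms were applied.
function countTransforms(flags) {
  let count = 0;
  for (let bits = flags; bits !== 0; bits &= bits - 1) count += 1;
  return count;
}

// Metadata that could be cached alongside the compiled code for a file.
const fileMeta = {
  path: 'src/main.js',
  transforms: Transform.IMPORT | Transform.EXPORT | Transform.EVAL,
};

console.log(hasTransform(fileMeta.transforms, Transform.EVAL)); // true
console.log(hasTransform(fileMeta.transforms, Transform.DYNAMIC_IMPORT)); // false
console.log(countTransforms(fileMeta.transforms)); // 3
```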

A

Great, great. It sounds like the stuff you've done that we need exceeds the total size of the thing that's different, so maybe the right thing for us to do is to actually just start with esm and insert these things: create a branch of esm that uses this translation. Yeah.

A

Both to Bradley and to JDD: there's also a bunch of work you're doing on Node so that Node modules and ECMAScript modules have a principled coexistence, and I have not really followed in depth the semantics of how they coexist there. Is there anything about this rewrite scheme that would make any of that more difficult?

A

Yeah, I think... I mean, let's put it another way. Suppose what we wanted to build around this transform is not just user-level support for safe ECMAScript modules, but also user-level support for CommonJS modules, which by themselves would have been much easier for us (we wouldn't need a rewrite). And then we wanted to be able to simultaneously support both kinds of safe modules, with their coexistence mirroring the semantics of their coexistence on Node. I don't know how coherent a question that was.

D

I think you'll be able to do that. Whatever Node comes up with, it's going to be simpler than what I've had to deal with in terms of interop, which is a more seamless kind of interop. With Node, right now there are various flags and package.json configs and ways to do it, but I think it should be fine for what you're currently doing. Okay. I don't see a problem with what you have and then moving to what Node has. Okay, well.

E

There have been efforts to try to think about how to support them directly. There is a proposal in the modules working group that does something very similar; they're doing it through package.json, not through import maps. Most likely it'll be a different mechanism with similar capabilities. Part of the problem is just that, in import maps, you're expected to have full control over the entire URL space when you're doing it. Mm-hmm.

A

That's great. I tried to think about some kind of compositional... So, you know, the manifest that we've been using in the legacy to-do example (I mean the thing that we're just using to flesh out the ideas): ninety percent of what it's saying actually could be said with import maps. When we arrived at that manifest, you know, we went through designs that had both containment (hierarchical containment) and then a DAG of dependencies, and that turned out to be very, very hard to explain. So we dropped the containment. But then, if we have the DAG of dependencies, we don't have compositional policy; we just have a single flat global policy. This compositional import renaming, if that works, that's really quite wonderful. What if package A and package B both depend on package C, and A...
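The difference between a single flat global policy and per-package import remapping can be sketched as a resolver that consults an importer-scoped table before the global one. This is a minimal illustration, loosely modeled on the split between top-level `imports` and `scopes` in the import maps proposal, except that the hypothetical scopes here are keyed by package name rather than URL prefix; `Policy`, `resolve`, and the package and file names are made up. It shows one ingredient of compositional policy (per-importer wiring), not a full design.

```typescript
type Remap = Record<string, string>;

interface Policy {
  global: Remap; // flat policy: applies to every importer
  perPackage: Record<string, Remap>; // overrides keyed by the importing package
}

// Resolve a bare specifier as seen from a given importing package.
// Package-scoped entries win; otherwise fall back to the global table;
// anything not granted by either is refused.
function resolve(policy: Policy, importer: string, specifier: string): string {
  const scoped = policy.perPackage[importer];
  if (scoped && Object.prototype.hasOwnProperty.call(scoped, specifier)) {
    return scoped[specifier];
  }
  if (Object.prototype.hasOwnProperty.call(policy.global, specifier)) {
    return policy.global[specifier];
  }
  throw new Error(`${importer} may not import ${specifier}`);
}

// Packages a and b both depend on c, but a is wired to an attenuated c.
const policy: Policy = {
  global: { c: "c@1.0.0/index.js" },
  perPackage: { a: { c: "c-attenuated@1.0.0/index.js" } },
};
```

Under a purely flat policy, both `a` and `b` would necessarily get the same `c`; the scoped table is what lets two dependents of the same package be wired differently.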

A

Yeah, the explanation, and also kind of the attractiveness for adoption, which is sort of the flip side of explanation. It was solving a problem people didn't know they had yet, and they wouldn't know they had it until they got far enough with flat policy to realize they needed something compositional and not flat. But it sounds like people already realize that, so that's great.

A

One of the things that's awkward in terms of moving a lot of the things that we want about modules into the ECMAScript standard is that the ECMAScript standard knows about modules, but it's never heard of packages. And a lot of the declarative rewriting that we would like to express for doing least-authority wiring would naturally want to talk about packages. It sounds like this compositional import rewriting and remapping policy already is thinking in terms of packages.

A

Yeah, if we were trying to introduce into the ecosystem a new modularity concept like packages, then I would very much agree. The community wouldn't be ready to adopt a new concept if they didn't already have it. But in the existing ecosystem they already have the concept of packages: people already structure software around the grouping of modules into packages, for most modules.

E

You are forming something, but maybe I can rephrase it; maybe that'll clarify a bit. Currently, the way we've been approaching all these imports is as a module-to-module mapping, so directly that we are not providing any sort of scoping mechanism to group different modules together for their overall...

Let's call that other module B. The output doesn't actually show you what package... what the package of A attempts to get; it shows you that A, the module, imports B, the module, rather than stating that A is trying to import the package of B. And the package of B could have more authority in it than B itself has. Okay.
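E's distinction between "A imports module B" and "A imports the package of B" can be made concrete by comparing the authority reachable through one module with the authority held anywhere in its package. A minimal sketch with hypothetical data: `ModuleInfo`, the capability names, and the two helpers are illustrative only, and transitive imports are ignored to keep the comparison visible.

```typescript
interface ModuleInfo {
  pkg: string; // package the module belongs to
  authority: string[]; // capabilities this module itself uses (hypothetical)
}

// Authority granted if the importer is wired to exactly one module.
function moduleAuthority(mods: Record<string, ModuleInfo>, id: string): Set<string> {
  return new Set(mods[id].authority);
}

// Authority granted if the importer is wired to the whole package of that module.
function packageAuthority(mods: Record<string, ModuleInfo>, id: string): Set<string> {
  const pkg = mods[id].pkg;
  const result = new Set<string>();
  Object.keys(mods).forEach((m) => {
    if (mods[m].pkg === pkg) mods[m].authority.forEach((cap) => result.add(cap));
  });
  return result;
}

// Package b contains a harmless entry module and a helper that touches the file system.
const modules: Record<string, ModuleInfo> = {
  "b/index.js": { pkg: "b", authority: [] },
  "b/fs-helper.js": { pkg: "b", authority: ["fs"] },
  "a/main.js": { pkg: "a", authority: [] },
};
```

Here `moduleAuthority(modules, "b/index.js")` is empty while `packageAuthority(modules, "b/index.js")` contains `"fs"`, which is the sense in which the package of B can carry more authority than B itself.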

C

And I'm not sure if this is relevant or not, but there is talk of a new Binary AST standard for ECMAScript. That's a whole other method of loading something at some point. You have to wonder whether we're starting to have too many ways of doing things. This was the problem with modules having both CommonJS and this other module format, I forget which. Now we might run into just too many ways to load things, period.

E

There is an attempt that's currently stalled due to family things, but the browser vendors and Node have a desire to make sure that their REPLs adhere to the same semantics. Well, apparently all of them are doing code transforms in order to make things appear to work, like top-level await, that should not work.

E

So browsers already invalidate that, actually, in some ways, due to their weird preamble when they run scripts. Oops, I cannot speak to that very well, but Domenic has pointed that out a couple of times. Mm-hmm. I think that is due to the history of roughly trying to do the same-origin policy and iframes being able to access each other's globals.

A

I'll just mention, by the way, speaking of the Node REPL, something that Agoric has now stubbed its toe on twice: when we run JavaScript code from the Node REPL, promises that get created have this additional, mysterious own property called domain, and domain seems to have as its value... It leads to this whole other object graph of objects we don't recognize, many of which look like they are probably security holes, so...
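A quick way to notice a host attaching unexpected own properties to ordinary objects, such as the domain property described above, is to list their own keys with `Reflect.ownKeys`, which includes both string and symbol keys. A minimal sketch: `unexpectedOwnKeys` is a hypothetical helper, and which keys appear depends entirely on the host; the claim that the Node REPL adds domain comes from the discussion, not from this code.

```typescript
// Own property keys (strings and symbols) present on a value beyond an allowlist.
function unexpectedOwnKeys(value: object, allowed: PropertyKey[] = []): PropertyKey[] {
  return Reflect.ownKeys(value).filter((k) => allowed.indexOf(k) === -1);
}

// An ordinary promise normally has no own properties at all.
const p = Promise.resolve(1);
const extras = unexpectedOwnKeys(p);
if (extras.length > 0) {
  // In a hardened environment this is a red flag: the host has attached
  // extra state that is reachable from an ordinary promise.
  console.warn("promise has unexpected own properties:", extras.map(String));
}
```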

E

...values in these two agents. So A and B have created a cycle with each other, and we cannot figure out when it is safe to garbage collect both at the same time, because if we strongly hold on to it in one, it keeps it alive in the other, which is fine. But that means that, to keep it alive in the other agent, it strongly holds on to it. Right, right.
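E's problem can be reproduced with a small simulation, assuming each agent collects only locally and treats any object the other agent still references as a root (distributed reference counting). The `Agent` class, `link`, `collect`, and the object ids are hypothetical. An acyclic cross-agent reference is reclaimed, but a cycle that spans both agents is not, because neither agent can see the whole cycle.

```typescript
interface RemoteRef {
  agent: Agent; // agent that owns the referenced object
  id: string; // object id within that agent
}

class Agent {
  name: string;
  exportCounts = new Map<string, number>(); // local id -> references held by the other agent
  localRoots = new Set<string>(); // ids held by local variables
  objects = new Map<string, RemoteRef[]>(); // local id -> remote objects it references

  constructor(name: string) {
    this.name = name;
  }

  allocate(id: string): void {
    this.objects.set(id, []);
    this.localRoots.add(id);
  }

  // Live if locally rooted or still referenced from the other agent.
  isLive(id: string): boolean {
    return this.localRoots.has(id) || (this.exportCounts.get(id) || 0) > 0;
  }
}

// A strong reference across the boundary: `to` must keep `toId` alive for `from`.
function link(from: Agent, fromId: string, to: Agent, toId: string): void {
  from.objects.get(fromId)!.push({ agent: to, id: toId });
  to.exportCounts.set(toId, (to.exportCounts.get(toId) || 0) + 1);
}

// Each agent frees objects that are not live; freeing an object releases its
// cross-agent references. Repeat until nothing changes.
function collect(agents: Agent[]): void {
  let changed = true;
  while (changed) {
    changed = false;
    agents.forEach((agent) => {
      Array.from(agent.objects.keys()).forEach((id) => {
        if (agent.isLive(id)) return;
        agent.objects.get(id)!.forEach((ref) => {
          ref.agent.exportCounts.set(ref.id, (ref.agent.exportCounts.get(ref.id) || 0) - 1);
        });
        agent.objects.delete(id);
        changed = true;
      });
    });
  }
}

// x in A references y in B, and y references x back: a cross-agent cycle.
const a = new Agent("A");
const b = new Agent("B");
a.allocate("x");
b.allocate("y");
link(a, "x", b, "y");
link(b, "y", a, "x");
a.localRoots.delete("x");
b.localRoots.delete("y");
collect([a, b]); // both x and y survive: each is kept alive only by the other
```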

A

You know, without thinking about what your implementation constraints are, just, I mean, fantasize for a moment. In Java, I remember there is the issue of classes versus loaders. A class would keep its loader alive (the loader from which the class was loaded), and the loader would keep alive all classes loaded by that loader, where in Java a class (I mean a top-level class) is basically the Java form of a module instance.

And you could dynamically create loaders, but a loader and all its classes together... Oh, and the instances would keep the class alive, but of course nothing magic was keeping the instances alive. But if the loader, all of its classes, and all of the instances of those classes were all garbage together, then the entire group would disappear.
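The Java arrangement A describes works because a tracing collector reclaims cycles: a loader and its classes point at each other, yet the whole group goes away once nothing outside it is reachable. A minimal mark-and-sweep sketch over a hypothetical object graph; node names are illustrative and this is not a JVM or SES API.

```typescript
type Graph = Record<string, string[]>; // node -> nodes it strongly references

// Mark phase: everything reachable from the roots.
function reachable(graph: Graph, roots: string[]): Set<string> {
  const marked = new Set<string>();
  const stack = roots.slice();
  while (stack.length > 0) {
    const node = stack.pop()!;
    if (marked.has(node)) continue;
    marked.add(node);
    (graph[node] || []).forEach((next) => stack.push(next));
  }
  return marked;
}

// Sweep phase: every node that was not marked is garbage, cycles included.
function garbage(graph: Graph, roots: string[]): string[] {
  const live = reachable(graph, roots);
  return Object.keys(graph).filter((n) => !live.has(n));
}

const heap: Graph = {
  app: ["instanceOfA"], // the application holds one instance from loader1
  loader1: ["ClassA", "ClassB"], // a loader keeps its classes alive
  ClassA: ["loader1"], // each class keeps its loader alive
  ClassB: ["loader1"],
  instanceOfA: ["ClassA"], // an instance keeps its class alive
  loader2: ["ClassC"], // a second group nobody outside refers to
  ClassC: ["loader2"],
  instanceOfC: ["ClassC"],
};

// loader2, ClassC and instanceOfC are garbage together; the loader1 group
// survives because one of its instances is still held by app.
const swept = garbage(heap, ["app"]);
```

The contrast with the two-agent case is that here one collector sees the entire graph, so the loader/class cycle is not an obstacle.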

Can we do something finer-grained than a realm? In particular, just have a... once.

We basically create one frozen realm and then, having created one frozen realm, since it's completely stateless, we just want to reuse it forever, or we want to have the ability to reuse it forever. And then, while we're reusing it, we create all of these different little module subgraphs that are isolated from each other. They're genuinely isolated, and because they're genuinely isolated, even if they refer to each other with imports and exports and all sorts of other things, when the subgraph as a whole is not used anymore, then the whole thing can disappear.

E

Let's say we have a loader, an SES loader that only generates valid SES. Okay. And let's say on our main thread we call out to the loader saying we want to load a file. Okay, so the file gets loaded by SES and sent back. It generates the module reference on the main agent, and the main agent now keeps the SES loader alive because it has a reference to that module.

So that means that module is keeping the SES loader alive, all right, due to just how you have to track things realistically to ever support cycles and live bindings. Any time the SES loader could regenerate references to that module, it needs to keep that module alive, and that is not something we can solve.

A

So it's basically, you know, as I was describing with Java: the modules point to their loader and the loader points to the modules, but when the whole thing is within the view of