►

From YouTube: Apache Cassandra and DataStax Enterprise Explained with Peter Halliday at WildHacks NU

Description

Speaker: Peter Halliday, Senior Software Engineer at DataStax

A

Excellent,

this

is

actually

as

a

percentage

much

larger

than

usual.

How

many

of

you

have

heard

about

Cassandra

before

okay,

good,

so

we're

going

to

preach

to

the

choir

a

little

bit?

My

name

is

Peter

had

all

day

I

work

at

data

sets

and

I'm

on

a

tee,

nuh

senior

software

engineer,

that

is

on

a

team

for

a

for

Oksana

we're

going

to

get

into

what

that

is

in

a

little

bit.

But

what

that

means

is

it

wasn't

too

long?

A

Actually,

until

I

was

where

you

are

I

graduated

with

a

master's

degree

in

june

2013

from

Cornell

University

with

a

specialty

in

distributed

systems

and

I

got

hired

datastax

working

on

distributed

systems

programming

in

Python

programming

enclosure,

which

is

a

functional

base

language

on

the

JVM.

If

you

are

interested

in

any

of

those

kind

of

topics,

you

can

certainly

geek

out

with

me

after

the

but

we're

going

to

learn

a

little

bit

about

Cassandra

and

we're

going

to

you

know.

I've

already

asked

a

question

about

six

Cassandra,

but

I'm

not.

A

Some

of

you

might

not

know

that

you

are

using

the

Thunder

already

actually

how

many

of

you

guys

have

filed

your

taxes

with

TurboTax.

Okay,

you

use

a

patch

of

Cassandra.

If

you

ever

play

call

of

duty,

if

you've

played

call

of

duty,

you've

used

Apache

Cassandra,

have

you

ever

done

something

an

Instagram

or

Spotify?

What

about

Netflix?

So,

as

you

can

see,

a

theme

of

these

is:

if

you

have

a

large

amount

of

data,

then

you

might

want

to

consider

a

Pecha

Cassandra.

So

what

is

a

budget

Cassandra?

A

It's

a

massively

scalable,

no

SQL

database,

no

SQL

databases.

You

know

a

lot

of

people

are

starting

to

Paula

a

post,

relational

database.

This

is

unstructured

data

that

and

usually

it's

stuff,

that

you

want

to

distribute

all

over

the

world.

It's

so

it's

big

data.

It's

multiple

data!

Centers!

You

want

no

single

point

of

failure.

You

want

continuous

availability

without

compromising

performance,

so

we

cut

across

a

bunch

of

like

I

mentioned

a

lot

of

different

kinds

of

customers.

A

Here

are

an

example

of

a

bunch

of

workloads

that

our

customer

segments

that

we

have

that

utilize

cassandra

and

anything

from

you

know

ebay,

using

a

ecommerce

to

fraud,

detection

with

air

to

their

networks

to

sensor

data.

You

know,

I

mentioned

call

of

duty

online

games

a

lot

of

different

kinds

of

data.

This

isn't

just

you

know

online

websites.

A

They

use

Cassandra

for

a

lot

of

very

important

reasons.

One

is

they

have

data

centers

all

over

the

world

and

their

customers

all

over

the

world.

They

want

this

data

replicated

automatically.

They

want

a

consistent

linear

scalability.

They

want.

They

want

to

guard

against

failure.

Failure

is

going

to

happen,

and

so

they

want

something

plus

they

want

a

masterless

architecture.

You

know

no

slam

against.

A

You

know

our

friends

at

Microsoft

and

Oracle,

but

a

lot

of

those

relational

technologies,

including

MongoDB,

have

a

single

point

of

failure

in

this

mike

in

this

master,

server

and

you'll

see

as

I

introduce

the

architecture

of

Cassandra

that

we

get

around

that

so

a

little

history

lesson.

Whenever

you

talk

about

Cassandra,

you

really

have

to

give

a

hat

tip

to

the

Google

big

cake

table

paper

and

the

Amazon

dynamo

paper.

If

you're

into

distributed

systems

or

databases,

those

are

two

papers

that

are

publicly

available

that

you

should

read

up

on.

A

There

was

a

engineer

at

Facebook

that

read

those

papers

and

decided

to

program

a

database

Cassandra

for

a

use

case

of

their

messages,

their

inbox

app

at

the

time,

and

so

they

created

Cassandra

and

they

open

sourced

it

and

gave

it

to

the

Apache

foundation,

one

of

our

co-founders

Jonathan

Ellis

of

kind

of

discovered

around

that

time,

and

he

is

currently

serving

as

the

chairman

of

the

Apache

foundation

project.

This

is

an

open

source

project.

We

took

aspects

of

both

of

their

of

you

know:

I

Amazon,

dynamo

and

Google

BigTable,

combined

it

into

both.

A

So

in

order

to

talk

a

little

bit

about

some

of

the

terminology

of

Apache

Cassandra,

so

that

we

and

then

we're

going

to

dive

into

like

a

read

and

write

takes

both

on

the

cluster

level

on

the

node

level.

So

what

is

it

Cassandra

note?

Cassandra

node

is

just

you

know,

a

server,

that's

running

Cassandra

software

and

it

could

be

real

or

it

could

be

virtual

in

some

cloud

instance

somewhere.

So

Cassandra

cluster

is

a

group

of

these

nodes

that

are

working

together.

A

data

center

is

obviously

a

group

of

clusters.

A

A

So

the

Cassandra

cluster

allows

you

to

take

data

and

spread

it

all

around

the

cluster.

So

we're

going

to

talk

a

little

bit

about

how

that

is

partitioned.

These

are

two

there's

two

partitioning

strategies.

We're

going

to

talk

about.

One

is

the

random

partitioning,

which

is

the

default

and

recommended

strategy.

This

strategy

takes

a

hash

key

and

assigns

that

randomly

basically

equally

around

the

cluster.

The

other

is

an

order

partitioning

which

allows

you

to

sort

it

in

order

in

in

an

order

way

that

you

would

define.

This

is

like

many

things,

you'll

learn.

A

This

is

configurable.

All

these

choices

are

configurable,

so

indebted

partitioning

I

talked

a

little

bit

about

in

the

random

partitioning

that

we

take

your

data

and

you

assign

some

sort

of

partition.

Key

that

partition

key

is

applies

a

hashing

algorithm

that

by

default

we

use

a

murmur

three

partitioner

which

takes

the

partition

key

and

creates

a

hash

out

of

it.

A

A

For

example,

it

is

a

number

that's

assigned

to

them,

and

the

hash

algorithm

means

that

it's

assigned

a

bunch

of

numbers

that

it's

responsible

for

from

the

token

value

to

one

more

than

the

token

value

of

the

previous

node,

so

its

able

to

decide

on

which

know

that

data

is

owned.

So

that's

how

data

is

partitioned

in

Cassandra.

That's

we

get

that

from

the

Amazon

dynamo

paper.

The

second

part

that

we

get

from

amazon

is

the

replication

strategy,

and

one

of

the

central

parts

to

the

replication

strategy

is

what's

called

replication

factor.

A

Replication

factor

is

how

many

copies

of

the

data

do

you

want

to

have.

This

is

something

that's

also

configurable

replication

factor

of

one

obviously

is

just

one

copy

of

the

data.

We

don't

recommend

that

you

could

have

a

reputation

vector

to

we

recommend

three.

This

is

something

that

is

controlled

at

the

keyspace

level,

so

each

key

space

you

can

choose

a

different

replication

factor,

there's

two

kinds

of

strategies:

this

will

control

which

replicas

in

your

cluster

will

get

that

data.

We're

going

to

talk

about

the

simple

strategy

and

the

network

topology

strategy.

A

Again

these

are

configurable.

So

in

this

example,

the

first

node

has

a

simple

strategy.

Disk

cluster

has

a

simple

strategy

or

replication

factor

of

two,

and

the

first

note

is

probably

the

owner

of

that.

That's

the

one

that

has

done

assignment

token

and

simple

strategy

means

the

next

replica

will

be

the

one

that

will

get

the

second

copy.

If

you

have

a

reputation

of

factor

of

three

the

one

after

that

will

get

that

third

party,

so

that's

simple

strategy,

it's

pretty

simple!

It's

the

default

strategy!

A

Another

useful

one

is

network

topology

and

if

you

have

multiple

data

centers,

which

cassandra

is

optimized

for

out

of

the

box,

love

doing

anything,

you

can

control

the

copies

ASAP.

So

you

can

say

if

you

have

a

data

center

in

London,

you

have

a

data

center

in

new

york.

You

want

to

make

sure

you

want

to

have

two

in

one

location,

three

in

the

in

the

other

and

we'll

show

how

that

happens

on

the

right

level

in

a

couple

screens,

but

network

topology

is

isn't

just

useful.

A

If

you

have

multiple

data

size,

let's

say

you

have

a

bunch

of

racks

in

the

same

data

center

and

one

of

the

rafts

go

down

because

you

have

a

switch

problem.

This

happens

all

the

time

in

the

real

world.

You

can

use

network

topology

to

make

sure

that

the

data

is

stored

on

separate

tracks

and

that

way,

you're

dedicating

to

survive.

That

kind

of

failure,

so

that

you

might

be

asking

yourself

I,

can

tell

you

are

actually

how

does

piss

on

don't

know

about

Rex,

it's

a

EST.

A

No,

actually

it's

this

thing

called

the

stitches,

so

snitches

is

a

bunch

of

our

code

that

basically

informs

it

tells

on

each

other

about

the

topology

of

your

application

and

there's

several

snitches

that

you

can

use

again.

This

is

configurable

based

on

your

application,

if

you're

running

it

easy

to,

for

example,

there's

a

special

easy

turn

snitch

that

we

can

use.

For

example,

you

can

see

the

last

one

ec2

multi,

beacon

snitch.

This

can

be

used

to

make

sure

that

you

have

Shepherd

data

in

separate

regions.

A

So

if

one

region

goes

down,

you

can

survive

that

kind

of

region.

So

we

don't

just

tell

on

each

other.

The

note

don't

just

telling

each

other

about

the

network

topology.

It

uses

gossip

to

inform

the

other

nodes

based

on

what

other

nodes

are

down.

That

information

which

notes

are

down

and

which

nodes

are

up

is

spread

through

gossip,

along

with

other

messages

that

are

spread

through

gossip,

so

another

sort

of

technology

I

mean

a

terminology.

Is

load

balancing

and

so,

like

I

said,

we

have

a

masterless

architecture.

A

You

can

read

and

write

from

any

node.

So

the

strategy

that

the

client

uses

to

connect

to

the

nodes

are

one

of

these

three

strategies,

and

this

is

something

as

a

developer

you

get

to

choose.

You

can

say

that

you

want

to

use

the

round

robin

strategy,

and

that

means

it's

going

to

connect

to

a

random,

neither

the

local

data

center

or

the

remote

data

center.

In

this

case,

it

just

so

happens

to

use

local,

but

if

you

have

a

remote,

it

might

take

a

little

bit

longer

for

your

of

connection.

A

So

we

often

advise

to

use

the

DC

aware

their

data

center

way

around

robbing,

and

what

that

does

is

it

gives

preference

to

the

local

data

center,

which

will

be

faster,

but

if

there's

a

failure,

then

it

will

automatically

switch

to

the

next

data

center.

So

you

can

survive

that

kind

of

failure

and,

lastly,

like

I

said

there's

token

aware,

because

we

use

because

the

client

knows

the

kind

of

petitioner

that

you

use

you're

using

this

hashing

algorithm.

We

know

what

requests

you

should.

A

In

the

token

aware

strategy

we

know

which

node

you

should

be

connected

to

and

this

optimizes

for

faster

connections-

and

this

gives

you

more

power

as

a

developer,

to

choose

the

strategy

that

best

fits

your

applications

use.

So.

Lastly,

last

part

of

terminology-

we're

not

going

to

get

into

a

lot

is

virtual

nodes.

A

If

you're

interested

in

this,

you

can

certainly

talk

to

me

after

or

you

can

look

at

planet

Cassandra

for

details

on

this

too.

So,

let's

get

into

reading

and

writing

on

a

cluster

level

like

I

said

this

is

them.

This

is

a

location

independent

of

that.

So

you

don't

have

to

worry

about

connecting

to

one

particular

master

in

this

case,

and

whites

are

automatically

partitioned

and

they're

automatically

replicated

for

you

there's

nothing

that

your

application

needs

to

do

so

in

writing

data.

A

In

this

case,

it's

not

one

of

the

relatives,

so

the

replicas

whoa

overeager

there

yeah

the

coordinator

for

the

updates

to

the

replicas

and

the

replicas

acknowledged

that

the

data

was

written

back

to

the

coordinator

and

a

coordinator

sends

a

successful

response

to

the

client.

But

that's

not

the

real

world

right,

like

accidents

kind

of

happen.

So

when

only

two

new

boots

respond,

what

should

happen?

Well,

that's

really

up

to

you

as

a

developer.

A

What

should

happen

and

that's

what

I'm

going

to

do

something

called

blight

consistency,

so

you

might

have

heard

of

a

term

called

eventual

consistency.

Apache

Cassandra

Dennis

X.

We

prefer

something

called

a

tunable

consistency

that

as

a

developer,

you

get

to

choose.

How

consistent

do

you

want

your

data?

Do

you

want

to

have

strong

consistency

or

you

out?

We

consistently

consistency.

This

is

something

that

is

tunable

by

you.

Kurt

a

patient

/

read

/

write

across

multiple

data

center

operations,

we're

talking

about

for

consistency

levels.

A

One

of

them

is

any

so

that

means,

if

you

write

as

long

as

it

happens,

to

one

of

the

replicas

it

works.

That's

any

corn

is

a

majority.

A

majority

across

all

the

games

and

local

forum

is

the

just

at

local

data.

Centers,

just

a

forum

and

the

quorum

is

define

it

as

51%

or

more

for

all.

That's

the

strongest

consistency

very

similar

to,

like

you

know,

more

of

a

relational

model.

So

an

example

is

in

the

failed

notes

from

earlier.

A

If

you,

if

you

can

face

questions

to

the

end,

if

that's

okay,

so

in

the

failure

example,

will

this

successfully

succeed

if

you

have

a

quorum?

Well,

yeah,

of

course,

because

the

forum

is

a

majority,

whereas

if

you

have

two

failures,

obviously

that's

not

going

to

succeed

right

because,

like

that's,

not

a

majority

Oh

as

an

aside,

there's

actually

a

solution

to

this,

because

we

have

one

there's,

actually

a

configuration

setting

that

allows

settings

if

you're

using

a

data

sex

driver.

A

So

in

the

download

scenario,

how

does

the

node

eventually

learn

of

the

day

the

the

node

learns

of

the

data,

because

the

coordinator

stores

in

memory

a

hint

of

the

data

for

that

node?

And

when

the

note

comes

back

online,

so

the

data

is

replayed

to

the

hints

that

is

replayed

to

that

node

and

there's

actually

we're

going

to

talk

about

in

read

path

in

just

two

slides

or

so

we're

going

to

talk

about

another

scenario

in

case

that

note

doesn't

get

that

data

so

about

reading

gate.

It's

very

similar.

A

A

client

connects

to

a

random

know

that

node

becomes

the

coordinator,

and

that

coordinator

sends

the

read

request

to

the

replicas,

the

replicas.

It's

Emma

data

that

and

the

data

gets

sent

back

to

the

client.

So

in

the

case

of

the

failure,

I

talked

about

in

the

right

path

where

one

of

them

failed.

So

when

the

read

comes

in

that

we

might

be

stale,

maybe

because

it

didn't

get

that

hint

for

some

reason

right.

In

that

case,

how

does

the

you

are

duplicate

nodes?

How

do

you

dispute?

How

do

you

settle

the

dispute?

A

The

coordinator

sees

that

one

of

the

nodes

have

old

data

and

it

sends

the

new

data

back

to

the

plan

and

then

issues

a

request

of

to

the

out-of-date

node

to

refresh

their

data

from

one

of

the

other

nodes,

and

so

that

that's

another

method

that

we

repair

itself,

there's

also

a

percentage

chance

that

this

will

happen.

Randomly

there's

a

configuration

option

that

will

let

you

tunas

the

percentage

chance

that

a

reed

repair

will

be

done

automatically.

So

the

cluster

is

really

designed

to

help

repair

itself.

A

This

is

also

a

procedure

that

you

can

have

done

manually

on

the

cluster.

Obviously

there's

some

performance

in

back

to

this.

If

you

want

to

do

this

on

a

cluster

wide

level,

so

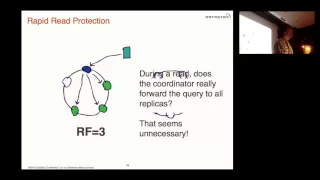

you

might

be

thinking

to

yourself

it's

kind

of

inefficient

right.

You

have

replication

factor

of

three

and

a

reed

consistency

of

two.

Why

do

you

send

three

read

requests

out?

Well,

the

answer

is

we

don't

really

we

send

two

out

automatically

and

if

those

two

don't

come

back

quickly,

then

we

sent

out

an

additional

one.

A

We

can

be

play

these

commit

logs

and

make

sure

that

data

isn't

lost

and,

at

the

same

time,

it's

written

to

these

men

tables

which

are

in

memory

versions

of

these

SS

tables

and

when

mm

tables

filled

up,

then

it's

written

to

disk

in

a

permanent

fashion

called

an

SSD

table.

These

ads

us

tables

are

immutable

and

can't

be

changed

and

the

only

way

to

change

them

is

through

what

we

call

compact

and

so

like.

A

As

you

can

see,

as

data

is

moved

around

the

cluster

and

becomes

less

relevant,

we

could

have

duplicate

copies

of

data

or

we

can

have

data.

That's

in

the

SS

tip

tables

that

that

cluster

is

no

longer

responsible

for

so

compaction

would

does.

Is

it

creates

new

SS

tables

from

the

old

SS

tables

and

then

deletes

the

old

SS

tables?

So

this

is

the

way

that

knows

update

these

SS

tables.

So

that's

what

happens

on

a

right?

It

read

is

a

little

bit

simpler

depending

on

your

application.

A

The

first

thing

we

do

is

look

in

memory.

Look

in

these

men

tables.

If

you

have

a

high

right

application,

these

might

actually

still

be

in

memory

and

then

it's

very

quick.

Otherwise

we

look

at

what's

called

a

bloom

filter,

I'm

not

going

to

get

into

what

a

bloom

filter

is,

but

if

you're

a

student

I

definitely

highly

encourage

you

to

look

up

on

Wikipedia

about

a

bloom

filter,

but

basically

it's

a

high

probability

index

that

allows

you

to

tell

with

high

probability

whether

something

is

in

one

of

these

SS

tables

or

not.

A

So

you

don't

have

to

scan

through

all

the

SS

tables,

and

so

then

we

look

in

the

bloom

filter

and

if

we

still

can't

find

it,

there

is

a

chance

it

still

on

the

SS

tables,

and

then

we

do

a

sikh,

obviously

across

the

SS

status.

So

usually,

if

it's

in

the

database

that's

caught

in

the

bloom

filter

and

it's

returned

rather

quickly,

and

so

that's

what

that's

the

repast.

A

So

this

Apache

Cassandra

and

I'm

going

to

talk

very

quickly

about

what

makes

datasets

different

datastax

is

a

company

that

supports

Apache

Cassandra

and

what

makes

us

different

as

a

company

as

as

opposed

to

companies

like

mondo

or

or

Oracle

or

sequel.

Server

is

a

couple

things.

One

is

Dennis

X

enterprise,

which

is

a

production,

a

certified

production

version

of

apache

cassandra.

This

is

something

that

we've

tested

internally

and

it

is

its

run

with

customers,

like

I,

said

like

eBay

and

Netflix,

and

it's

what

they

use.

A

It's

used

across

a

variety

of

use

cases

not

just

knee

Road

segments

of

the

market

is

integrated,

oltp,

integrated,

analytics,

integrated

search,

it

has

in-memory,

OLTP

and

analytics.

It

has

strong

data

protection.

It

has

management

tools,

we'll

talk

about

several

of

those.

We

also

saw

a

lot

of

different

languages

and

drivers.

In

many

cases

our

developers

are

the

ones

who

are

writing

these

drivers,

some

of

which

tastes

like

a

ruby

and

no

Jas

which

are

very

new

and

Python

our

open

source.

You

can

use

these

currently

for

free.

A

Other

versions

of

these

are

available

as

a

data

sex

customer

and

so

one

feature

that

you

should

use

data

sex

enterprise

for

security.

We

have

like

a

sex

security

as

a

part

of

Apache

Cassandra.

You

can

use

username

passwords,

you

can

use,

object,

permission

management,

so

don't

allow

a

user

a

gel

to

do

a

delete

on

this

table

because

he's

a

dumbass

or

you

might

have

default

of

client

encryption

between

a

certain

nose.

A

But

if

you're

an

enterprise

customer

you

want

real

enterprise

security,

which

is

things

like

external

authentication

which

allows

you

to

connect

to

Kerberos

or

Active

Directory

ldap.

You

want

a

tres,

you

want

get

a

quick

encryption

for

the

data,

that's

at

rest,

so

that

people

don't

look

at

your

data

in

the

SS

tables.

Unless

it's

encrypted,

you

want

data

auditing.

Those

are

all

features

that

are

part

of

data

steps.

Enterprise.

A

Another

feature

is

absent,

I

told

you

I

was

going

to

talk

about

the

product

and

responsible

for

like

as

a

hacker.

We

all

love

our.

You

know

our

tools

that

are

on

the

command

line,

but

when

you

have

clusters

that

are

like

thousands

of

nodes

at

hundreds

of

nodes,

you

often

want

a

browser-based

or

something

that

you

can

do

large-scale

operations

across

the

cluster.

This

all

ops

center

also

allows

you

to

do

backups

of

your

cluster

it

even

with

replication,

like

people

can

do

dumb

things

like

delete

old

tables

and

delete,

holds

key

spaces.

A

You

want

to

be

able

to

backup

from

events

like

that.

Opscenter

allows

you

to

do

that.

You

also

want

to

be

able

to

do

things

like

alerting.

So

when

people

like

you

have

certain

spikes

or

certain

other

things,

you

can

be

alerted

of

events

like

that

on

one

of

the

common

of

scenarios

for

using

ops

center.

We

use

this

at

internally.

Data

sex

is

what's

called

provision,

so

let's

say

you

want

to

in

Amazon

ec2.

A

You

want

to

create

10

notes,

you

can

click

on

the

create

new

cluster

button

and

add

in

the

IPS

of

the

machines

that

you've

already

spun

up

and

add

in

the

either

the

connection

or

the

SSL

key.

It

will

automatically

install

automatically

optimally,

configure

these

machines

and

then

suddenly

you

have

a

10

note,

plus

tur.

And,

alternatively,

if

you

have

ec2

of

credentials,

you

can

just

log

in

to

your

ec2

credentials,

and

it

will

automatically

do

all

of

that

for

you.

So

that's

app

center.

A

A

It

won't

have

all

of

the

abilities

of

DSC,

but

one

of

the

big

uses

that

people

use

apache

a

bit

of

sex

and

prices

integrated

search

for

that

we

use

a

technology

called

solar

and

I'm

not

going

to

get

into

those

of

the

search

and

the

analytics

in

fine

detail.

You

have

questions,

please

let

me

know,

but

one

of

the

benefits

of

this

is

it

allows

you.

A

Instead

of

setting

up

a

separate

of

solar

instance,

we

optimally

configure

these

integrated

for

textual

geospatial

of

faceting

searches

of

a

you

know

in

the

cluster,

the

Cassandra

cluster,

and

you

can

actually

segregate

these

so

that

the

search

nodes

are

run

in

a

separate

data

center

so

that

your

application

doesn't

load

down

your

cassandra.

Your

customer,

facing

up

to

sign

their

data.

Also

search

is

one

thing

that

your

application

might

need.

It

also

might

need

analytics

if

you're

doing

no

SQL

so

that

you

don't

use

joins.

A

You

might

have

to

use

something

like

Hadoop,

which

is

what

we

use

for

integrated

batch

analytics

and,

if

you're,

using

the

integrated

Hadoop.

It's

probably

because

you

don't

have

your

own

hood,

or

do

you

want

to

avoid

the

single

point

of

failure

in

to

do?

There

is

a

name

server,

has

a

single

point

of

failure,

and

also

HDFS.

If

you

haven't

connect,

if

you

haven't

tried

to

configure

HDFS,

it's

kind

of

a

pain,

and

so

maybe

you

want

to

avoid

that

by

using

our

integrated

approach.

A

This

is

something

that

we

call

bring

your

own

food

and,

lastly,

what

it's

kind

of

the

new

thing,

which

is

spark

real-time,

Hadoop

I,

mean

real

time

analytics,

and

this

is

a

wig

of

data

section,

a

price

of

4.5,

and

this

allows

you

to

do

things

in

a

virtually

real

time.

So

this

kind

of

offering

we

think

is

very

compelling,

as

opposed

to

our

competitors,

which

have

single

points

of

failure

and

don't

have

linear

scalability

or

when

you

look

at

the

benchmarks.

Our

performance

outlays.

A

Those

performance

as

well

as

developers,

I,

want

to

say

two

things.

One

is,

you

know:

I

encourage

you

to

use

us

for

the

hackathon

or

four

projects

on

you

can

go

to

planet.

Kisan

org,

you

can

go

to

get

sexcom,

look

at

customer

use,

cases

look

at

tutorials

and,

lastly,

we

have

a

booth

upstairs

we

are

hiring

and

you

are.

We

do

have

student

internships,

please

come

up

and

give

us

your

resume

drop

by

and

talk

to

us

a

little

bit,

I'm

going

to

be

up

there

for

a

little

bit.