From YouTube: Lightning Talk: WAMR, Intel - Le Yao, Intel

Description

Lightning Talk: WAMR, Intel - Le Yao, Intel

This session introduces WAMR, a high-performance, small-footprint WebAssembly runtime, in Envoy, and the advantages of building Envoy with this Wasm runtime.

A

Hello, everyone, and welcome to my topic about WAMR in Envoy. I'm Le Yao from Intel, a cloud orchestration software engineer, and today I would like to introduce WAMR in Envoy from three aspects. First, I will give a brief explanation of Envoy Wasm. Then I will introduce the WebAssembly Micro Runtime, WAMR, which is developed by Intel. Finally, I will present the benefits we can get from this work.

A

We all know WebAssembly is a stack-based, sandboxed virtual machine. Another project is the Proxy-Wasm SDK. It exports the sandbox API, declares the references to the Envoy API, and encapsulates both the Envoy API and the sandbox API, so we can use the SDK to develop our Wasm filter and implement the interfaces defined in the Envoy Wasm filter. Then we can integrate this filter into the Envoy service mesh.
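As a rough illustration of the layering described above, the following Python sketch models a host that exposes a small API to a filter, and a filter that implements a callback the host invokes. It is not the Proxy-Wasm ABI or SDK: `HostApi`, `AddHeaderFilter`, `on_request_headers`, and `run_filter` are hypothetical names chosen only for this example.

```python
class HostApi:
    """Hypothetical stand-in for the API a proxy exposes to a sandboxed filter."""

    def __init__(self, headers):
        # Header names are stored in lower case so lookups are case-insensitive.
        self._headers = {name.lower(): value for name, value in headers.items()}
        self.log_lines = []

    def get_header(self, name):
        return self._headers.get(name.lower())

    def set_header(self, name, value):
        self._headers[name.lower()] = value

    def log(self, message):
        self.log_lines.append(message)

    def headers(self):
        return dict(self._headers)


class AddHeaderFilter:
    """Hypothetical filter: it only talks to the proxy through HostApi."""

    def __init__(self, host):
        self.host = host

    def on_request_headers(self):
        # Read request data through the host API, then mutate it the same way.
        path = self.host.get_header(":path")
        self.host.log(f"request for {path}")
        self.host.set_header("x-wasm-filter", "hello")
        return "continue"


def run_filter(headers):
    """Play the role of the proxy: build the host API and invoke the callback."""
    host = HostApi(headers)
    action = AddHeaderFilter(host).on_request_headers()
    return action, host.headers(), host.log_lines
```

Calling `run_filter({":path": "/status"})` runs the callback once. In a real deployment the filter would be compiled to a Wasm module and the host side would be provided by the proxy and its Wasm runtime.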

A

This slide shows an overview of the WebAssembly Micro Runtime, WAMR. As we can see here, WAMR supports many CPU architectures, and under the platform support listed here, it also supports many platforms. It has a successful community as well.

A

WAMR's target is to be the smallest and fastest standalone Wasm runtime, with a modular design that covers uses from low-end devices to the cloud. It supports an interpreter, ahead-of-time (AOT) compilation, and just-in-time (JIT) compilation. WAMR itself is a C-based implementation, with LLVM-based compilation.
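To make the interpreter mode concrete, here is a toy stack machine in Python. It is only a sketch of how a stack-based interpreter evaluates instructions and is not how WAMR is implemented. The opcode names are borrowed from WebAssembly's `i32.const`, `i32.add`, and `i32.mul`, but the tuple encoding and the `run` function are invented for this example.

```python
MASK32 = 0xFFFFFFFF  # keep results within 32 bits, like Wasm i32 arithmetic


def run(program):
    """Interpret a list of (opcode, *operands) tuples on an operand stack."""
    stack = []
    for instruction in program:
        op, *args = instruction
        if op == "i32.const":
            # Push an immediate value.
            stack.append(args[0] & MASK32)
        elif op == "i32.add":
            # Pop two operands, push their wrapped sum.
            right, left = stack.pop(), stack.pop()
            stack.append((left + right) & MASK32)
        elif op == "i32.mul":
            # Pop two operands, push their wrapped product.
            right, left = stack.pop(), stack.pop()
            stack.append((left * right) & MASK32)
        else:
            raise ValueError(f"unknown opcode: {op}")
    return stack.pop()


# (2 + 3) * 4 expressed as stack instructions.
PROGRAM = [
    ("i32.const", 2),
    ("i32.const", 3),
    ("i32.add",),
    ("i32.const", 4),
    ("i32.mul",),
]
```

An interpreter walks the instructions one by one like this at run time. AOT and JIT compilation instead translate the same instructions to native machine code, before loading or while running respectively.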

A

One advantage is that it has a small footprint and low memory consumption. The numbers for the runtime core are shown here, and there is a self-implemented ahead-of-time module loader. It also supports Intel SGX, with no need for an SGX LibOS as with other solutions. It can manage applications using its application framework, and it is very easy to port to a new platform.

A

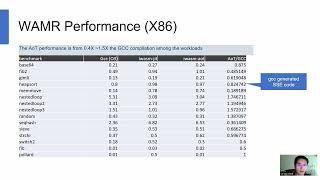

Here we give the WAMR performance numbers that we tested on the x86 architecture and platform, compared with native code from the GCC compiler. We can see that the ahead-of-time performance ranges from 0.4 to 1.5 times that of the GCC compilation across the workloads. These are the benchmarks, and these are the performance numbers. The baseline is GCC-generated SSE code.
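One way to read a figure such as "0.4 to 1.5 of GCC" is as a ratio of run times. The talk does not state the formula, so the sketch below assumes that relative performance is native run time divided by AOT run time, where a value above 1.0 means the AOT build was faster. The timings in the usage note are hypothetical and only illustrate the arithmetic.

```python
def relative_performance(native_seconds, aot_seconds):
    """Return AOT performance relative to native, assuming higher is better.

    Assumption: performance is the inverse of run time, so the ratio is
    native_seconds / aot_seconds.
    """
    if native_seconds <= 0 or aot_seconds <= 0:
        raise ValueError("run times must be positive")
    return native_seconds / aot_seconds
```

With hypothetical timings, `relative_performance(2.0, 5.0)` gives a ratio of 0.4 (AOT slower than native) and `relative_performance(3.0, 2.0)` gives 1.5 (AOT faster than native).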

A

So, I have given you a brief introduction to WAMR. Why do we bring WAMR into Envoy? Because the existing Wasm runtimes may increase the binary size, and their performance numbers were not ideal in our tests. So we brought WAMR into Envoy and ran some performance tests to measure this advantage.

A

Also, the binary size based on the V8 runtime is larger than with WAMR. Using WAMR to build the binary, we can get the Envoy binary and decrease its size by about 50, and the stripped size by about 10. As for high performance, we use Nighthawk to test the performance: it generates some HTTP requests to an Envoy gateway and gets the performance numbers.
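The measurement loop described above (send HTTP requests to a gateway, then report a number) can be sketched with the Python standard library. This is not a load generator such as Nighthawk and it does not involve Envoy: a throwaway local HTTP server stands in for the gateway, and `OkHandler`, `measure`, and `demo` are hypothetical names for this example.

```python
import threading
import time
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class OkHandler(BaseHTTPRequestHandler):
    """Stand-in for the gateway: answers every GET with a tiny 200 response."""

    def do_GET(self):
        body = b"ok"
        self.send_response(200)
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, *args):
        # Silence the default per-request logging.
        pass


def measure(url, total_requests):
    """Send requests sequentially; return (successful count, requests per second)."""
    # An empty ProxyHandler bypasses any proxy configured in the environment.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    ok = 0
    start = time.perf_counter()
    for _ in range(total_requests):
        with opener.open(url, timeout=5) as response:
            if response.status == 200:
                ok += 1
    elapsed = time.perf_counter() - start
    return ok, total_requests / elapsed


def demo(total_requests=20):
    """Start the local server on a free port, run the measurement, then stop it."""
    server = HTTPServer(("127.0.0.1", 0), OkHandler)
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        url = f"http://127.0.0.1:{server.server_port}/"
        return measure(url, total_requests)
    finally:
        server.shutdown()
        server.server_close()
```

A dedicated load generator would additionally control concurrency and request rate and report latency percentiles. This sketch only shows the basic shape of the measurement.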