►

From YouTube: Managing Local Storage with Kubernetes

Description

Most machines have storage devices attached to them and applications are used to consuming storage directly. Kubernetes will soon support local storage devices as a consumable resource. In this talk, we will explore the design principles for managing local storage in kubernetes. We will talk about some of the issues faced with local storage in Borg and present how Kubernetes will avoid those issues. We will present a recommended strategy for provisioning storage in datacenters that are powered b

A

All

right,

good

afternoon,

everyone

how's

everyone

doing

great

awesome.

My

name

is

Michelle

I

am

a

software

engineer

at

Google,

I

developed

mainly

on

kubernetes

and

I

work

on

the

kubernetes

storage,

subsystem

and

I'm

here

today

to

talk

to

you

about

local

storage

management

in

kubernetes.

This

has

been

an

area

I've

been

focusing

on

and

I'm

very

excited

to

be

here

today

to

share

our

progress

with

you.

A

A

A

A

A

A

The

containers

in

a

pod

share

the

same

networking

namespace

and

they

can

also

do

standard

IPC

mechanisms

to

communicate

with

each

other.

You

can

also

share

data

between

the

containers

through

shared

volumes,

pods

have

a

managed

lifecycle,

the

user.

As

a

user,

you

will

create

a

pod,

you'll

delete

a

pod,

and

the

system

is

going

to

take

care

of

managing

that

lifecycle

for

you.

So

if

one

of

your

containers

in

your

pod

dies,

then

this

is

the

system

will

automatically

restart

those

containers

for

you.

A

If

you

want

to

scale

your

pods,

then

the

system

will

take

care

of

that

as

well.

An

example

of

a

pod

is

a

data,

polar

and

a

webserver.

So

in

the

picture

on

the

right,

you

see

two

containers

in

this

pod,

one

container

is

only

focused

on

pulling

content

into

the

shared

volume

and

in

the

second

Taner

in

this

second

container.

It

is

only

serving

that

content

to

the

consumers.

A

So

now,

I'll

also

give

a

brief

overview

about

storage

in

kubernetes.

There

are

two

main

types

of

storage.

There

is

a

ephemeral

storage

here

in

ephemeral

storage.

The

data's

lifetime

is

the

same

as

the

pods

lifetime.

What

this

means

is,

if

the

pod

dies

or

restarts

or

gets

moved

to

another

node,

then

the

data

in

the

ephemeral

storage

also

gets

deleted

along

with

the

pod.

For

that

purpose,

ephemeral

storage

is

mostly

used

for

the

stateless

use

cases

for

caching

and

for

scratch

space

ways.

You

can

use

ephemeral

storage

in

kubernetes.

A

Today

you

can

access

it

through

two

different

layers.

You

can

access

it

through

the

container

laid

container

layer

through

the

writable

layer

or

at

the

pod

level.

You

can

access

it

through

empty

durval

Yume's,

which

let

you

share.

The

ephemeral

storage

between

containers

in

a

pod,

the

opposite

of

the

ephemeral

storage

is

persistent

storage.

Here

the

data

lifetime

is

independent

of

the

pods

lifetime.

So

if

the

pod

dies

which

starts

or

moves

to

another

node,

then

that

data

still

persists

until

it

is

explicitly

deleted

by

the

user.

A

A

So

what

do

I

mean

by

local?

What

is

local

storage?

As

seen

from

the

kubernetes

point

of

view

here,

a

storage

is

accessible

from

only

one

node.

You

can

compare

as

an

example

of

local

storage.

You

can

think

of

a

PCI

attached

disk

and

compare

that

to

network

storage

like

NFS,

cluster

or

Ceph,

or

you

can

access

those

or

you

can

access

those

volumes

from

any

node

in

the

cluster.

A

B

A

So

why

would

you

actually

use

storage,

local

storage?

There's

a

lot

of

trade-offs?

Like

I

mentioned,

you

have

less

availability,

you

don't

have

fault

tolerance,

so

why

would

you

use

these?

The

main

reasons?

Why

are

related

to

some

cost

benefits

in

certain

situations?

You

can

save

some.

You

can

save

a

lot

of

operational

costs

by

using

local

storage

if

it

can

fit

your

use

case

correctly,

so

I'm

going

to

go

over

those

cost

benefits

in

more

detail.

A

The

first

benefit

you

can

get

is

performance.

High

performance

SSDs

are

becoming

more

important

to

scaling

critical

applications

to

get

that

same

performance

through

network

storage.

It

requires

more

infrastructure.

You

need

supporting

networking,

networking

switches

and

the

networking

pipeline

to

be

able

to

funnel

all

that

data

back

and

forth

between

all

the

nodes.

So

there

is

more

infrastructure

cost

there.

A

Configuring

and

maintaining

network

storage

systems

and

its

supporting

infrastructure

can

incur

more

operator

cost.

You

need

experts

for

each

of

these

systems

and

they

have

to

know

how

to

debug

problems.

They

have

to

be

able

to

scale

the

system

they

have

to

be

able

to

change

the

system

as

your

business

needs

change

and

also

as

your

business

grows.

A

The

third

factor

is

utilization.

If

your

applications

can

directly

access

some

local

discs,

then

that

is

less.

That

is

less

requirements

on

your

remote

capacity

and

can

thus

lower

your

operational

costs.

This

is

especially

important

for

the

on-premise

and

bare

metal

environments

where

local

discs

are

abundant.

A

A

The

main

use

cases

that

are

that

are

suitable

for

persistent

local

storage

all

have

to

do

around

the

idea

of

data

gravity.

This

is

the

idea

that

the

data

the

data

set,

your

application

is

handling

is

so

large

that

you

need

your

application

and

your

storage

to

be

co-located

in

order

to

achieve

the

best

performance.

A

A

This

is

all

about

performance,

so

you

really

want

to

access

those

fast

SSDs,

but

why

do

you

actually

need

the

persistence

part

as

a

cache

you're,

just

temporarily,

holding

some

data?

So

it's

okay.

If

the

application

dies?

Why

can't

you

actually

just

use

ephemeral

storage?

Well,

in

this

case,

your

data

set

is

so

large

that

if

you

had

to

reload

that

cache

every

time

your

application

crashed

or

we

started,

then

you

can

incur

a

high

startup

latency.

A

So

if

you

were

able

to

persist

that

cache

data-

and

your

application

happened

to

restart

then

can

pick

up

where

it

last

left

off

and

you'll

save

a

lot

of

startup

time

there

and

Indian.

If

the

actual

note

or

the

entire

storage

fails,

it's

okay,

because

it's

just

a

cache

and

the

actual

real

data

source

that

it

is

based

off,

is

persisted

on

some

more

reliable

media.

So

you

can

always

go

back

to

the

backup

in

those

failures

and

re

O's.

A

A

Second

use

case

is

for

container

rising,

distributed

data

stores.

Examples

of

these

data

stores

include

Cassandra,

cluster

and

SEF,

and

there's

many

many

many

others

here.

These

systems

are

all

about

leveraging

the

local

disks

and

being

able

to

expose

those

local

disks

in

a

distributed

layer

that

can

be

accessed

across

the

whole

cluster.

A

A

Okay,

so

now

that

you

understand

some

of

these

use

cases,

how

do

you

actually

use

a

incubu

Nettie's

today?

Well,

the

solution

is

not

very

good.

Great

kubernetes

has

a

great

story

for

persistent

remote

storage,

but

the

story

for

local

storage

is

pretty

bad

today.

The

only

way

to

access,

persistent

local

storage

is

through

what

we

call

a

host

path

volume.

This

is

where,

in

your

application,

spec,

you

specify

the

path

to

the

volume.

The

local

volume

that

you

want

to

use.

A

This

mechanism

has

a

lot

of

problems

which

I

am

going

to

demonstrate

shortly

shortly

and

because

of

all

these

problems,

people

who

use

these

host

pathologies

such

as

the

Gloucester

and

Saif

provisioners.

They

have

to

write

custom,

schedulers

and

operators

to

be

able

to

deal

with

the

to

be

able

to

deal

with

some

of

these

issues

and

that's

not

a

sustainable

model,

and

it

is

actually

a

it's.

It's

becoming

a

barrier

to

entry

for

a

lot

of

storage

solutions.

A

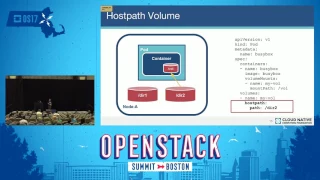

So,

let's

look

at

whose

path

volumes

and

how

you

use

it

on

the

left.

You

see,

I

have

a

node

and

it

has

two

local

directories

on

it:

director,

t1

and

directory

two

and

on

the

right

is

my

pod

spec

for

my

application

and

at

the

bottom

of

it

is

where

I'm

specifying

my

host

path

volume

here,

I'm

specifying

this

path

directory

and

then,

when

my

pod

launches,

then

the

system

will

go

and

mount

directory

into

my

container

and

I

can

access

it.

Great.

That's

pretty

simple

right!

A

Well,

there's

a

lot

of

problems

with

this

approach

that

I'm

going

to

go

for

now.

The

first

problem

is

portability

in

my

application.

Spec

I

have

put

a

path

to

some

volume

in

it,

and

this

is

a

problem

because

in

kubernetes

your

container

or

your

pod

can

be

scheduled

to

any

node

in

the

cluster.

So

how

do

I

actually

know

that

whatever

node

I

got

scheduled

on

exists?

A

A

Some

people

try

to

get

around

some

of

these

problems

by

putting

node

names

into

this

spec

and

that

kind

of

makes

a

problem

worse

because

now

I've

tied

my

to

specific

nodes

and

I've,

essentially

now

doing

manual

scheduling,

which

is

kind

of

defeating

the

purpose

of

kubernetes,

because

kubernetes

is

supposed

to

magically

schedule.

Everything

for

you.

A

So

the

second

problem

kind

of

related

is

accounting

because

I'm

specifying

a

path

in

my

pod,

spec

I,

don't

know

if

there's

any

other

application

running

in

this

cluster

that

also

specified

that

same

path.

So

I

can't

tell

if

I'm

the

only

one

using

this

volume

in

order

to

solve

that

kind

of

problem.

Again,

you

need

to

do

some

sort

of

manual

scheduling

or

coordination.

Where

you're

you

make

sure

that

your

two

different

applications

don't

use

the

same

paths

and

it

gets

really

complicated,

really

fast

along

the

same

lines.

A

I

don't

know

when

to

delete

my

volume

either.

If

there's

other

applications

that

are

sharing

a

directory,

I

need

to

coordinate

with

them.

Send

them

an

email.

Hey

are

you

done?

Can

I

delete

stuff?

So,

overall

you

can

see.

This

is

not

a

very

scalable

solution

and

the

third

and

perhaps

most

serious

problem

is

security.

A

Here,

the

user

is

the

one:

that's

specifying

the

path

to

this

value

on

this

node,

but

what

prevents

a

malicious

user

from

specifying

any

path

and

potentially

being

able

to

read

anyone's

data

being

able

to

corrupt

the

system,

or

you

know,

delete

system

files

and

causing

all

sorts

of

havoc

on

the

node.

For

that

reason,

a

lot

of

administrators

disable

host

Pat

values

by

default.

So

if

you

are

working

in

one

of

these

clusters,

where

you

can't

even

use

hose

volumes,

then

you're

out

of

luck,

you

can't

even

use

local,

persistent

storage.

A

A

What

we

are

looking

at

right

now

is

to

be

able

to

use

an

existing

feature

that

exists

in

kubernetes

today,

and

that

is

the

persistent

volumes

feature.

This

feature

works

very

well

for

remote

storage,

and

the

challenge

that

we

are

tackling

today

is

how

to

take

this

feature

that

has

been

designed

for

remote

storage

systems

and

adapt

it

to

local

storage

and

its

specific

characteristics.

A

So

now

I'm

going

to

talk

about

the

solution

that

we

are

designing

and

walk

through

example,

use

case

as

well,

and

how

to

use

it

so

to

give

some

background

about

persistent

volumes,

this

feature

allows

you

to

separate

the

details

of

how

storage

is

implemented

in

the

cluster

from

how

it

is

consumed

by

the

user.

It

sets

boundaries

between

the

cluster

administrator

and

the

user.

It

does

this

through

two

API

objects.

The

first

API

object

is

the

persistent

volume.

A

A

A

It

is

in

your

pod

spec,

where

you

specify

the

persistent

volume

claim

and

then

the

system

will

find

a

matching

persistent

volume

to

bind

claim

and

through

this

level

of

indirection.

That

is

how

your

pod

ends

up

mounting

specific

volumes

in

the

cluster

by

having

these

two

different

objects.

Now

the

user

story

is

portable

and

cluster

agnostic.

In

your

persistent

ball

Youm

claim

now

you

don't

specify

any

details

about

the

underlying

cluster.

A

You

don't

specify

anything

about

nodes,

you

don't

specify

anything

about

specific

paths

on

those

nodes,

and

so

this

is

a

great

model

for

us

to

follow

for

using

local

storage.

A

related

topic

is

storage

classes.

This

is

another

API

object

in

kubernetes.

It

represents

a

collection

of

persistent

volumes

that

have

similar

properties

and

the

name

of

the

storage

class

is

defined

by

the

administrator.

So

it's

completely

up

to

them

what

they

want

to

name

it

and

what

that

name

means

as

an

example.

A

A

In

my

example,

I'm

kind

of

implying

at

the

storage

classes

have

speed

differences

in

the

underlying

storage,

but

it

doesn't

have

to

be

that

I

could

also

do

the

same

thing

with

other

features

like

encryption,

I

could

say:

I

can

have

a

storage

class

called

purple

that

will

have

my

encrypted

storage

in

it,

and

a

storage

class

called

orange

that

has

my

non

encrypted

storage.

So

the

name

and

the

definition

of

what

is

contained

in

the

storage

class

is

completely

up

to

the

administrator

to

decide.

A

So

the

persistent

volumes

that

are

part

of

a

storage

class

can

be

statically

or

dynamically

provisioned.

So

in

when

you

statically

provision

a

persistent

volume

you,

the

administrator,

is

pre.

Creating

those

volumes

with

a

specific

purpose

for

dynamically

provisioned

volumes,

what

this

means

is

you

can.

The

administrator

does

not

have

to

actually

do

the

provisioning.

A

The

administrator

just

has

to

leave

some

instructions

about

how

to

provision

the

specific

storage

and

then,

when

a

pods

request

comes

in

that's

when

the

storage

will

actually

get

provisioned

with

the

exact

requirements

of

the

user,

so

those

specific

storage

parameters,

the

instructions

on

how

to

create

volumes

on

demand.

Those

instructions

are

contained

in

the

storage

class.

A

Again,

what

storage

class

allows

us

to

do

is

to

separate

the

details

of

the

cluster

in

the

district

cluster

implementation,

from

the

user's

request

for

storage,

the

details

of

what

the

underlying

storage

system

is

and

how

to

what

parameters

it

accepts,

and

all

of

that

is

contained

in

the

storage

class

and

on

the

user

side.

All

they

have

to

specify

is

a

name

so

to

look

at

an

example

here,

I

have

two

different

clusters

and

they

both

implement

a

storage

class

call

circle.

A

Okay.

So

how

will

persistent

volumes

and

storage

classes

solve

these

problems

with

hose

path?

Let's

look

back

at

the

host

path

problems

that

I

mentioned.

The

first

problem

was

portability

where

in

host

path,

you

are

specifying

specific

paths

on

specific

nodes,

and

that

makes

the

application

spec

not

portable.

A

So

persistent

volumes

can

solve

that

problem

because

of

the

two

objects

the

persistent

volume

claim

and

the

persistent

volume

allows

you

to

abstract

cluster

details

from

the

user

details.

So

you

can

keep

the

cluster

details

in

the

persistent

volume

object

and

you

can

create

the

portable

details

into

the

persistent

volume

claim,

object,

and

then

storage

class

will

add

on

on

top

of

that,

to

allow

users

to

request

specific

classes

of

properties

and

specific

generic

properties

of

the

underlying

storage.

A

The

second

problem

that

we

had

with

host

pad

volumes

was

accounting.

We

weren't

sure

if

there

were

multiple

applications

using

the

same

directory

or

not,

and

we

weren't

sure

when

it

is

when

it's

safe,

to

delete

a

volume.

So

again

the

persistent

volume

claims

and

persistent

volumes

have

a

direct

one-to-one

mapping

and

they

have

a

well-defined

managed

life

cycle

and

kubernetes.

A

So

since

these

are

API

objects,

you

go

and

create

and

delete

them

like

any

other

API

object,

and

then

the

state

machine

is

controlled

and

managed

by

the

system

and

the

third

problem

with

host

path

was

security.

Allowing

the

user

to

specify

any

path

is

a

very

dangerous

mechanism.

So

persistent

volumes

will

solve

this

problem

because

it's

a

administrator,

it's

a

non

namespace

object

that

can

only

be

created

by

administrators

with

administrator

privileges.

So

hopefully

you

guys

can

trust

your

administrators

and

trust

that

they

provision

the

correct

storage

on

your

in

your

cluster

okay.

A

So

those

are

the

reasons

or

that

is

how

the

new

model

of

using

persistent

volume

claims

and

persistent

volumes

is

going

to

solve

the

old

problems

of

accessing

local

storage.

So

let's

look

at

an

example

of

how

you

would

actually

use

this

new

model.

In

my

example,

I'm

going

to

take

a

typical

application

development,

workflow

I'm,

a

developer

and

I'm,

going

to

write

this

new

application

I'm

going

to

develop

it

and

test

it

on

my

laptop

and

once

I'm

done

with

that,

then

I'm

ready

to

deploy

this

application

into

production.

A

A

Here

we

have

our

laptop

cluster

and

the

administrator

is

provisioning,

a

persistent

volume

for

my

laptop

cluster

here

at

the

here's,

my

persistent

all

you

and

at

the

bottom

you

see

I'll

specify

my

storage

class

name,

I'm,

going

to

name

the

storage

class

local

fast,

and

then

here

is

where

all

the

details

of

the

actual

cluster

implementation

go.

It's

going

to

have

the

node

name

for

the

specific

node

that

I'm

on

and

then

it's

going

to

have

the

path

to

the

directory

where

this

volume

exists.

A

So

similarly

I'm

going

to

as

an

administrator

I'm

going

to

define

the

same.

A

similar

persistent

volume

on

my

production

cluster

here,

I'm

going

to

use

the

same

storage

class

name

local

fast.

But

now

the

details

are

different.

My

node

is

going

to

be

my

my

production,

node

and

the

path

is

going

to

point

to

a

real

SSD

disk.

A

So

that's

at

the

administration

level.

Now,

let's

go

back

to

the

developer

level

or

the

user

level

as

a

developer.

I'm

writing

my

application

and

I

have

my

pods

back

here.

This

is

the

same

pod

spec

that

I

used

for

host

path.

The

host

path

example,

but

now

I've

replaced

host

path

at

the

bottom,

with

persistent

volume

claim

and

I

specify

the

name

of

this

claim

and

on

the

right

is

my

persistent

volume

claim

object.

A

You

can

see

here

it's

requesting

some

generic

parameters

like

storage

capacity,

and

it's

also

going

to

request

a

storage

class

name

of

local

fast.

This

was

the

same

name

that

I

that

the

administrator

used

to

provision

those

volumes

in

so

now,

I'm

I've

developed

my

application

on

my

laptop

I've

run,

my

application

deployed

the

spec

and

it's

tested

and

it's

ready

to

go

into

production.

A

I

don't

have

to

change

anything.

This

pots

back

is

completely

portable.

There

are

no

details

in

here

about

what

node

to

go

to

or

what

path

my

storage

is

at.

So

I

can

take

this

pots

back

and

deploy

it

on

any

cluster,

and

as

long

as

that,

cluster

has

some

storage

with

the

storage

classname

local

fast,

then

my

application

will

go

and

the

pod

will.

A

A

A

The

same

thing

happens

in

the

production

cluster.

As

at

the

user

level,

the

persistent

volume

is

exactly

the

same.

Nothing

has

changed.

It's

requested

the

same

capacity

in

the

same

storage

class.

The

difference

is

the

actual

persistent

volumes

underneath

so

now

in

my

production,

cluster

I'll

be

using

real

disks

compared

to

my

laptop

cluster,

which

was

just

some

directory

under

temp.

So

so,

basically,

this

is

the.

A

This

is

the

magic

behind

persistent

volume

claims

and

persistent

volumes.

It's

able

to

provide

a

separation

between

the

user

and

a

cluster

administrator

and

allows

the

allows

the

applications

back

to

be

portable

so

that

you

can

go

and

publish

your

application

spec

and

anyone

can

pick

it

up

and

run

your

spec

across

any

of

their

clusters.

A

So,

in

summary,

accessing

local

storage

is

going

to

become

easier

by

using

the

new

model

of

local

persistent

volumes.

This

is

going

to

solve

the

current

issues

of

portability,

accounting

and

security.

In

some

circumstances,

using

local

storage

can

reduce

your

operational

and

data

center

costs

and

what

I?

What

I

see

most

important

is

that

local

storage

is

going

to

become

a

building

block

for

many

high-performance

and

distributed

applications,

especially

distributed

storage

systems,

to

be

able

to

move

over

to

kubernetes

and

make

the

kubernetes

experience

a

lot

better.

A

So

if

you

are

interested

in,

if

you

are

interested

in

learning

more

about

this

topic,

I've

clewd

included

a

lot

of

links

here

to

learn

more

about

kubernetes

and

storage

in

general.

We

also

have

the

actual

design

document

and

proposal

on

github.

So

we

would

love

to

hear

all

the

feedback

that

you

have

about

the

design

and

the

user

model,

and

we

also

want

to

hear

about

all

your

use

cases

as

well,

so

please

feel

free

to

reach

out

to

me

or

anyone

on

these

storage

SIG.

C

A

D

A

A

So

a

lot

of

scheduler

changes

have

to

go

into

there

to

make

the

scheduler

more

topology

aware

and

some

of

the

actual

future

direction

that

we

can

take

from

there

is

if

we

can

define

a

way

to

to

specify

topology

constraints

in

a

generic

manner,

then

we

can

apply

it

to

more

than

just

local

storage,

so

we

could

so

with

local

storage.

The

storage

is

constrained

by

a

single

node,

but

you

can

imagine

that

we

could

potentially

constrain

it

to

a

rack

or

we

can

constrain

it

to.

E

A

A

Being

able

to

expose

a

block

device

to

the

container

is

another

feature

that

the

storage

sig

is

starting

to

look

into,

and

it's

actually

it's

pretty

relevant

for

local

storage,

but

it

can

also

be

relevant

for

remote

storage

too.

So

we're

kind

of

treating

these

two

features

we're

treating

block

access

as

kind

of

a

separate

generic

feature.

That

not

only

applies

to

local

storage,

but

it's

definitely

related,

and

it's

also

on

the

radar

so

yeah

there

are

people

in

the

storage

sig

that

are

actually

starting

to

look

at

this.

A

E

F

I

have

a

question

on

the

you

demonstrated

with

the

persistent

volumes

and

claims

using

predefined

or

static

persistent

volume.

Aha,

so

can

you

give

a

use

case

of

using

them?

We've

tried

them

with

dynamically

allocated

volume

and

that's

very

clear,

but

once

you

you

tie

a

part

to

a

volume,

is

during

a

locality

very

preserved.

Yes,.

A

Sir

yeah,

so

the

main

goal

here

for

local

volumes

is

once

you

bind

the

persistent

volume

to

a

persistent

volume.

That

also

is

local.

Then

your

pod

will

always

get

scheduled

to

the

node

that

that

local

volume

is

on.

So

if

you

have

to

restart

your,

if

you

have

to

restart

your

pod,

then

that

pod

would

always

get

scheduled

to

the

correct

node

so

that

you

would

land

with

your

correct

data

on

it.

A

This

can

also

be

used

for

in

general,

for

like

producer-consumer

use

cases

where

you

could

have

one

pod,

that

is

producing

some

data,

and

it's

going

to

write

it

to

some

local

storage

and

then

later

you

might

have

a

a

consumer

pod

come

later,

and

it's

going

as

long

as

it

uses

the

same

persistent

volume

claim.

Then

it

will

also

get

routed

to

the

same

node

where

your

data

exists.

Okay,.

F

A

B

A

So

that's

the

that's

kind

of

like

the

failure

policy

of

what

happens.

If,

if

the

pod

encounters

some

sort

of

type

of

failure,

it

needs

to

recover.

I

can

see

applications

that

totally,

if

like,

if

you

have

a

caching

application,

and

you

really

don't

care

about

the

data.

If

your

node

goes

down,

then

it's

okay.

If

your

pod

gets

rescheduled

to

another

node,

but

then

there's

also

some

other

applications

where

you

know

that

data

is

really

important

and

you

actually

really

want

it

and

can't

live

without

it.

A

So

you

don't

want

that

pod

to

get

rescheduled

to

another

node.

If

it

fails,

you

might

need

to

do

might

want

to

do

some

manual

recovery

in

that

case,

so

I

mean

they're,

both

valid

use

cases.

So

what

we're

planning

on

doing

is

following

the

taints

and

toleration

x'

design

I,

don't

know

if

you're

familiar

with

that

design.

A

But

basically

what

can

happen

is

we

can

taint

a

persistent

volume

and

say

that

you

know

this

persistent

volume

is

no

longer

as

accessible

because

of

some

reason

it

died

or

the

node

is

not

there

anymore

and

as

a

pod,

you

can

specify

toleration.

X'

for

specific

taints,

so

you

can

say

my

pod

can

tolerate

node

failures

in

that

case.

A

In

that

case,

if

you

can

tolerate

the

node

failures,

then

you're,

then

that

tells

the

system

that

we

can

reschedule

your

pod

somewhere

else

with

a

new

volume

of

you

know

blank

slate,

basically

or

if

you

want

the

other

way

you

can

say

I

cannot

tolerate

node

failures,

and

in

that

scenario

then

we

would,

you

know,

just

keep

your

pod

in

pending

states.

While

the

note

is

down.