
From YouTube: SimPEG Meeting February 28, 2017

Description

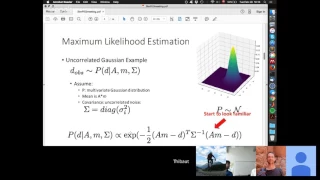

SimPEG meeting on Feb 28. Thibaut Astic presents on Designing objective functions: a probability approach

C

Okay, everybody. Not that much from me today: I'm working on it, but I still have some numerical issues to figure out, so today I'm mostly focused on showing you [unclear] so far for my project. Basically, I tried to unfold the assumptions we are making about objective functions, and the main motivation for that was to design a framework where I can work with a target under a conductive and chargeable overburden. When I do a [unclear] inversion, it sees nothing, as we can see in the middle slices. So that's my model, and that's what I get from the inversion, compared with what I see in my real model. I'm using these new data [unclear].

C

The main goal here is to show that if we know, for example, the petrophysical properties of the structures, we don't have to put an artificial distance or depth weighting into our inversion. [unclear], so I don't see anything. But we see that [unclear].

C

The conductivity and the chargeability can be much higher than what I have in my model, and if you had that, I could say it would be detectable in DC/IP field data. So we have the overburden conductivity here, the sphere conductivity, and [unclear] somewhere around. In my forward simulation, there was a change of at least ten to fifteen percent in the data due to the sphere, so it is detectable.

C

The thing is: can we recover that in the inversion? That's the motivation and the beginning of what I'm going to show you today. So let's get moving. I tried to unfold it from a probability point of view. For some of you there is nothing too crazy here, but for me it was really an eye-opener on all the assumptions we've been making with our objective function.

C

So the first thing is that we have our observed data, and we are basically going to consider it as a realization of a multivariate probability distribution. So the observed data is a realization of a distribution P of d_obs given my physics, my model, and some covariance.

C

[unclear] The other axis would be [unclear], and then on the other side we have the probability density. So here [unclear]: you see that I put it shifted here, and it's actually a bit skewed, so my maximum probability will be right here, around two for this one and [unclear] for this one.

C

So this should start to look familiar at some point. Yes. As I said before, we want to find the model m that maximizes the probability of getting this data, so I'm looking for the argmax over m of this probability. A way to do that: if I want to maximize the Gaussian likelihood, it's equivalent to minimizing the negative of the log-likelihood, because the negative log will be convex.
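
A minimal numerical check of that equivalence, on a made-up one-parameter linear problem (`G`, `m_true` and `sigma` are hypothetical values, not from the talk). Because the logarithm is monotonic, the model that maximizes the likelihood on a grid is the same one that minimizes the negative log-likelihood:

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical toy problem: one model parameter m, linear forward operator G,
# independent Gaussian noise with standard deviation sigma.
G = np.array([1.0, 2.0, 3.0])
m_true, sigma = 1.5, 0.1
d_obs = G * m_true + sigma * rng.standard_normal(G.size)

# Evaluate every candidate model on a grid.
m_grid = np.linspace(0.0, 3.0, 3001)
residuals = np.outer(m_grid, G) - d_obs          # shape (n_grid, n_data)

# Gaussian log-likelihood of the observed data for each candidate model.
log_like = (-0.5 * np.sum((residuals / sigma) ** 2, axis=1)
            - G.size * np.log(np.sqrt(2.0 * np.pi) * sigma))
likelihood = np.exp(log_like)
neg_log_like = -log_like

i_max = np.argmax(likelihood)     # maximum likelihood
i_min = np.argmin(neg_log_like)   # minimum negative log-likelihood
print(m_grid[i_max], m_grid[i_min])
```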

C

So if I now want to minimize the negative log-likelihood, I just drop the exponential here, and the minus times minus here gives a plus, so I'm looking for the minimum of the L2 norm of F(m) minus d, weighted by the covariance matrix here. So we get back basically what we usually use, but we just derived it from a coherent probabilistic point of view, saying: okay, I'm assuming that my noise is [unclear].
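
Written out under the assumption of Gaussian noise with data covariance $\mathbf{C}_d$ and a forward operator $F$ (the symbols and the factor of one half are notation chosen here for illustration), the likelihood and its negative log are:

$$
p(\mathbf{d}^{obs}\mid\mathbf{m}) = \frac{1}{\sqrt{(2\pi)^{N}\det\mathbf{C}_d}}\exp\!\left(-\frac{1}{2}\big(F(\mathbf{m})-\mathbf{d}^{obs}\big)^{\top}\mathbf{C}_d^{-1}\big(F(\mathbf{m})-\mathbf{d}^{obs}\big)\right)
$$

$$
-\log p(\mathbf{d}^{obs}\mid\mathbf{m}) = \frac{1}{2}\big\lVert\mathbf{W}_d\big(F(\mathbf{m})-\mathbf{d}^{obs}\big)\big\rVert_2^2 + \text{const},\qquad \mathbf{W}_d^{\top}\mathbf{W}_d=\mathbf{C}_d^{-1}
$$

The constant (the log of the normalization) does not depend on $\mathbf{m}$, so minimizing the negative log-likelihood is the usual weighted least-squares data misfit $\phi_d$.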

C

So now, instead of looking for the probability of the data given m, we're looking for the probability of our model given our physics, our data, and our covariance. But that one is much harder to choose, because at first we don't know anything about the model. This approach of maximizing the probability of your model parameters is what people usually call maximum a posteriori (MAP) estimation.

C

So in this expression I have a normalizing term here that we don't need to carry: it's just the probability of the data over the whole space of all possible other things. It's just a normalization term that we usually compute at the end: we compute the probability of things, and at the end we just make sure that the sum of everything is equal to one. So it's not something we care about, and it doesn't depend on our model either. So if I want to maximize this, it's equivalent to maximizing just the term on top. Now I have this probability of m, and this one is open: I need to make some assumptions for it. One assumption we can make is that my m is also a realization of a Gaussian distribution, with mean m_ref and covariance one over beta. This looks familiar again, and we do the same with this one.
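
In symbols, as a sketch with notation assumed here ($\mathbf{W}_m$ is a model weighting matrix and $\mathbf{m}_{ref}$ a reference model; neither is named in the transcript): Bayes' rule, the MAP estimate that drops the normalizing term $p(\mathbf{d}^{obs})$, and a Gaussian prior whose covariance is scaled by $1/\beta$:

$$
p(\mathbf{m}\mid\mathbf{d}^{obs})=\frac{p(\mathbf{d}^{obs}\mid\mathbf{m})\,p(\mathbf{m})}{p(\mathbf{d}^{obs})},\qquad
\mathbf{m}_{\text{MAP}}=\arg\max_{\mathbf{m}}\;p(\mathbf{d}^{obs}\mid\mathbf{m})\,p(\mathbf{m})
$$

$$
p(\mathbf{m})\propto\exp\!\left(-\frac{\beta}{2}\,(\mathbf{m}-\mathbf{m}_{ref})^{\top}\mathbf{W}_m^{\top}\mathbf{W}_m(\mathbf{m}-\mathbf{m}_{ref})\right),
\qquad \mathbf{C}_m=\frac{1}{\beta}\big(\mathbf{W}_m^{\top}\mathbf{W}_m\big)^{-1}
$$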

C

So the exponential is going to drop. I didn't write out the intermediate steps, but when I minimize the minus log of my probability of m, I get back my data-fitting term from before and my regularization term, assuming [unclear]. So from probability we got back Tikhonov regularization, and for me it was actually really nice to develop that.
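
Taking the negative log of likelihood times prior, and assuming Gaussian noise with $\mathbf{W}_d^{\top}\mathbf{W}_d=\mathbf{C}_d^{-1}$ and a Gaussian prior with mean $\mathbf{m}_{ref}$ and covariance $(\beta\,\mathbf{W}_m^{\top}\mathbf{W}_m)^{-1}$ (notation assumed here), gives the familiar Tikhonov objective function:

$$
-\log\big[p(\mathbf{d}^{obs}\mid\mathbf{m})\,p(\mathbf{m})\big]
=\underbrace{\frac{1}{2}\big\lVert\mathbf{W}_d\big(F(\mathbf{m})-\mathbf{d}^{obs}\big)\big\rVert_2^2}_{\phi_d(\mathbf{m})}
+\beta\,\underbrace{\frac{1}{2}\big\lVert\mathbf{W}_m(\mathbf{m}-\mathbf{m}_{ref})\big\rVert_2^2}_{\phi_m(\mathbf{m})}
+\text{const}
$$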

C

It's drawn from a Gaussian distribution, and we have actually made tons of assumptions to get here. So L2 and Gaussian are very intertwined: it's the same thing, just from two different points of view. And one thing that was nice to look at, too, is that when we do beta cooling in our inversion, that's what we actually do at first, when beta is high [unclear].
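
To see what beta does, here is a sketch on a random linear problem (all sizes and values are made up). For a linear forward operator, the minimizer of $\phi_d + \beta\phi_m$ has a closed form. A very large beta returns essentially the reference model, because the prior dominates; as beta is cooled, the data misfit decreases:

```python
import numpy as np

def map_estimate(G, d_obs, Wd, Wm, m_ref, beta):
    """Minimize 0.5*||Wd (G m - d_obs)||^2 + beta * 0.5*||Wm (m - m_ref)||^2 for linear G."""
    A = G.T @ Wd.T @ Wd @ G + beta * (Wm.T @ Wm)
    b = G.T @ Wd.T @ Wd @ d_obs + beta * (Wm.T @ Wm) @ m_ref
    return np.linalg.solve(A, b)

rng = np.random.default_rng(1)
n_data, n_model, sigma = 20, 10, 0.05
G = rng.standard_normal((n_data, n_model))
m_true = rng.standard_normal(n_model)
d_obs = G @ m_true + sigma * rng.standard_normal(n_data)

Wd = np.eye(n_data) / sigma      # W_d^T W_d = C_d^{-1} for independent noise
Wm = np.eye(n_model)             # simplest smallness weighting
m_ref = np.zeros(n_model)

betas = [1e6, 1e4, 1e2, 1e0]     # a crude beta-cooling schedule
models, phi_ds = [], []
for beta in betas:
    m = map_estimate(G, d_obs, Wd, Wm, m_ref, beta)
    phi_d = 0.5 * np.sum((Wd @ (G @ m - d_obs)) ** 2)
    models.append(m)
    phi_ds.append(phi_d)
    print(f"beta={beta:.0e}  |m - m_ref|={np.linalg.norm(m - m_ref):.3f}  phi_d={phi_d:.2f}")
```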

C

Okay, so we got back what we all already knew, but, as I said, I found it really nice to derive it from this point of view and to understand the assumptions behind it. So what can we do with it? First thing: you can think of it as just using a different probability distribution.

C

So if you use a Laplace probability distribution, you get back your L1 norm, which you can use successfully if, for example, you want to recover a sparse model in some basis. That can be just in the model space, but if you want your model to be sparse in Fourier, in wavelets, etc., that would work the same.
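
A quick check of that correspondence with `scipy.stats` (the location and scale values are arbitrary): the negative log of a Laplace density is an absolute value, i.e. an L1 term, plus a constant, just as the negative log of a Gaussian is a squared, L2 term plus a constant:

```python
import numpy as np
from scipy.stats import laplace, norm

m = np.linspace(-3.0, 3.0, 13)   # candidate model values
m_ref, b, s = 0.5, 1.2, 0.8      # arbitrary reference value and scales

# Laplace: -log p(m) = |m - m_ref| / b + log(2 b)
laplace_offset = -laplace.logpdf(m, loc=m_ref, scale=b) - np.abs(m - m_ref) / b

# Gaussian: -log p(m) = (m - m_ref)^2 / (2 s^2) + log(s sqrt(2 pi))
gauss_offset = -norm.logpdf(m, loc=m_ref, scale=s) - (m - m_ref) ** 2 / (2 * s**2)

print(laplace_offset)  # the same constant for every m
print(gauss_offset)    # the same constant for every m
```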

C

Another distribution that is really cool is the Student's t-distribution. It's like a Gaussian but with heavier tails, so it's very nice for dealing with outliers: for example, if you know that most of your data are good, but you also have a lot of outliers that are hard to detect, and enough outliers that they basically skew your distribution. I should have made a figure for that.

C

But imagine that you have, even in 1D, a case with tons of data points that follow almost a linear trend, but then a lot of very high values on top. With the L2 norm, the fit will just end up in the middle, whereas [with the Student's t] the outliers are the ones that don't get fit. [With L2] you can actually end up throwing away all the good data.
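
The 1D line-fitting case described above can be sketched as follows. The data are synthetic (a line, with every fifth point pushed far upward), and the Student's t scale and degrees of freedom are arbitrary choices. The Student's t fit simply minimizes its negative log-likelihood with `scipy.optimize.minimize`, starting from the least-squares answer:

```python
import numpy as np
from scipy.optimize import minimize

rng = np.random.default_rng(3)
x = np.linspace(0.0, 10.0, 100)
y = 2.0 * x + 1.0 + 0.1 * rng.standard_normal(x.size)   # true slope 2, intercept 1
y[::5] += 15.0                                           # every 5th point: a very high outlier

# L2 (Gaussian) fit: ordinary least squares
slope_l2, intercept_l2 = np.polyfit(x, y, deg=1)

def student_t_nll(params, nu=2.0, scale=0.5):
    """Negative log-likelihood of Student's t residuals, dropping constant terms."""
    slope, intercept = params
    r = (y - (slope * x + intercept)) / scale
    return 0.5 * (nu + 1.0) * np.sum(np.log1p(r**2 / nu))

# The Student's t misfit is non-convex, so start from the least-squares fit.
res = minimize(student_t_nll, x0=[slope_l2, intercept_l2])
slope_t, intercept_t = res.x
print(f"L2:        slope={slope_l2:.3f}  intercept={intercept_l2:.3f}")
print(f"Student-t: slope={slope_t:.3f}  intercept={intercept_t:.3f}")
```

The least-squares line is dragged upward by the outliers, while the Student's t line stays on the bulk of the data.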

C

So yeah, I have tons of points that [unclear] along this line, but I also have [unclear] on top of it. Just looking at it, I would like to fit this line, right? But if I use an L2 norm, that is supposed to give me the least-squares [solution], and then I say: oh, so all the other ones are outliers [unclear].

C

So [unclear]: basically, in the end, the outliers push you out because you use the square. [unclear] The L1 just looks at the absolute value of your residual, [unclear]. This one looks at the log of the square of the residual.
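
To make the comparison concrete, here are the three penalties evaluated on growing residuals, each written as a negative log-density with constants dropped ($\nu = 1$ is an arbitrary choice for the Student's t). The square grows fastest, the absolute value linearly, and the Student's t only logarithmically, which is why a few huge residuals dominate an L2 fit but barely affect a Student's t fit:

```python
import numpy as np

def l2_penalty(r):
    return 0.5 * r**2                                # Gaussian

def l1_penalty(r):
    return np.abs(r)                                 # Laplace

def student_t_penalty(r, nu=1.0):
    return 0.5 * (nu + 1.0) * np.log1p(r**2 / nu)    # Student's t

r = np.array([0.1, 1.0, 10.0, 100.0])
for name, penalty in [("L2", l2_penalty), ("L1", l1_penalty), ("Student-t", student_t_penalty)]:
    print(f"{name:10s}", np.round(penalty(r), 3))
```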

D

Yeah, yeah, no, I totally agree. I mean, I think they can. I want to do this when they're re-weighting after a few [unclear], right? [unclear] started implementing it with the UBC codes. I don't know, it's just another layer on top of it, and it needs to mean something: it has to give you something out of it. I guess it would work, yeah.

C

This one would be, as I said, for when you have good data but also some very noisy data, [unclear], and L2 is not able to sort it properly. I consider it another tool you can use if you have this type of thing.

C

This one is a small slide for very complex things that I tried to look at. People in machine learning call it structured sparsity, and the basic idea is that you apply different norms to different parts of your model. You can even compose the different norms, and you can even put the same parameter in different norms.

C

Then you can deal with dependencies between your model parameters. For example, [if one parameter is zero,] then make m2 zero, and if both are nonzero, then we put them in the L2 norm, and stuff like that. So you can actually impose structure in our model with that. I haven't seen it done in geophysics yet, but I'm [still exploring] that; I tried to read some [papers]. It's something very new: I read a thesis from, like, 2015.
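
One common instance of structured sparsity is the mixed L2,1 ("group") penalty: an L2 norm inside each group of parameters, summed across groups like an L1 norm. Whole groups are driven to zero together, while parameters in a nonzero group are penalized in the L2 sense. A sketch with made-up groups; as mentioned in the talk, the same parameter may appear in several groups:

```python
import numpy as np

def group_norm_penalty(m, groups):
    """Mixed L2,1 penalty: L2 norm within each group, summed (an L1 norm) across groups."""
    return sum(np.linalg.norm(m[idx]) for idx in groups)

m = np.array([0.0, 0.0, 3.0, 4.0, 0.0, 1.0])

# Disjoint groups: {m0, m1}, {m2, m3}, {m4, m5}
disjoint = [[0, 1], [2, 3], [4, 5]]
print(group_norm_penalty(m, disjoint))      # 0 + 5 + 1 = 6.0

# Overlapping groups: parameter m2 belongs to both groups
overlapping = [[0, 1, 2], [2, 3]]
print(group_norm_penalty(m, overlapping))   # 3 + 5 = 8.0
```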

C

Is this [unclear]? Yes, that's kind of what it is, but it's been used for machine learning applications before. Actually, I just made a very random [example], where one parameter is drawn from a Gaussian and the other parameter is drawn from a Laplace. So that's something you can do with this. There are some things that people do with this, but I haven't dived into it that much. What I've been working on more these days is the prior that we talked about so far. We think that if we use a single Gaussian or a single Laplace, we get L2 or L1. But in my case, for example, I have tons of petrophysical data, and my goal would be to make my inversion drive the model to values that fall into these bins defined by my petrophysical data.

So the approach I chose for now is to define my prior as a sum of probability distributions: Gaussian, Laplace, or whatever you like, but a Gaussian is good enough. I can divide it as many times as I want, and if I put in [enough] Gaussians, I can fit basically any type of distribution. So that's quite nice.
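
A minimal sketch of such a mixture prior for a single physical property per cell. The three classes and their weights, means, and standard deviations are made-up stand-ins for what would be fitted to petrophysical measurements; the negative log of the mixture density plays the role of $\phi_m$:

```python
import numpy as np
from scipy.stats import norm

# Hypothetical petrophysical classes for one property (e.g. log-conductivity);
# in practice these parameters would be fitted to measured samples.
weights = np.array([0.6, 0.3, 0.1])
means = np.array([-3.0, -1.0, 0.0])
stds = np.array([0.2, 0.3, 0.2])

def gmm_pdf(m):
    """Mixture density sum_k w_k N(m; mu_k, sigma_k^2), evaluated for each cell value."""
    m = np.atleast_1d(m)[:, None]                  # shape (n_cells, 1)
    return np.sum(weights * norm.pdf(m, loc=means, scale=stds), axis=1)

def gmm_neg_log_prior(m):
    """Negative log-prior, assuming cells are independent draws from the mixture."""
    return -np.sum(np.log(gmm_pdf(m)))

grid = np.linspace(-6.0, 3.0, 9001)
print(np.sum(gmm_pdf(grid)) * (grid[1] - grid[0]))                      # ~1.0
print(gmm_neg_log_prior(np.array([-3.0])), gmm_neg_log_prior(np.array([-2.0])))
```

A cell value at a class mean is cheap; a value between classes is expensive, which is what pushes the recovered model toward the petrophysical classes.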

C

Because the sum is now inside the log, it's more painful to handle; that's where some of the issues are, which I will show later. [unclear] The key point is that with that I can fit any parametric distribution, and it's easily scalable. That's not a big deal in 1D, but you can fit and you can go to many dimensions, as long as your data keep up with the dimension. [With a histogram], past four dimensions it's probably hopeless. For example, in 1D it's basically a histogram: if you want 10 bins, you can start with 10 samples. But in 2D you want n bins for each of the two parameters, so you need a hundred; in three dimensions, with a three-dimensional parameter like a [unclear] physical property, you will need more than a thousand, and it just scales and scales. So imagine DC/IP data where I have resistivity and 22 IP time windows: if I want to do a fitting on all these parameters, I would need [far more samples] to start doing a good job.
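
The arithmetic behind that argument, as a rough sketch: a histogram with a fixed number of bins per axis needs exponentially many bins as dimensions are added, whereas a Gaussian mixture with full covariance matrices has a parameter count that grows only quadratically with dimension. The 10 bins and 5 components below are arbitrary; 23 dimensions corresponds to resistivity plus 22 IP time windows:

```python
def histogram_bins(n_bins_per_axis, n_dims):
    """Number of cells in a regular histogram over n_dims properties."""
    return n_bins_per_axis ** n_dims

def gmm_n_params(n_dims, n_components):
    """Free parameters of a Gaussian mixture with full covariances:
    means + symmetric covariances per component, plus weights summing to one."""
    per_component = n_dims + n_dims * (n_dims + 1) // 2
    return n_components * per_component + (n_components - 1)

for d in [1, 2, 3, 23]:
    print(f"d={d:2d}  histogram bins={histogram_bins(10, d):.3g}  GMM params={gmm_n_params(d, 5)}")
```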

C

[unclear] is actually important and scalable, but mathematically, with this formulation, it actually encompasses k-means and Parzen windows. They are just special cases: k-means, for example, would be putting your covariance matrices all the same and just proportional to the identity, and Parzen windows is like: you have 500 data points, I'm going to fit 500 Gaussians, so it's going to fit perfectly.
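
A small numerical illustration of the k-means special case, with made-up means and points: with equal weights and a shared covariance $\sigma^2\mathbf{I}$, the most probable class for every point is simply its nearest mean, and as $\sigma$ shrinks the soft class probabilities approach the hard, one-hot assignments of k-means. (A Parzen-window estimate is the other extreme: one identical kernel centred on every sample.)

```python
import numpy as np
from scipy.special import softmax

def responsibilities(x, means, sigma):
    """Class probabilities for an equal-weight GMM with shared covariance sigma^2 * I."""
    sq_dist = np.sum((x[:, None, :] - means[None, :, :]) ** 2, axis=2)
    return softmax(-0.5 * sq_dist / sigma**2, axis=1)

rng = np.random.default_rng(2)
means = np.array([[0.0, 0.0], [3.0, 0.0], [0.0, 3.0]])
x = rng.uniform(-1.0, 4.0, size=(200, 2))
nearest = np.argmin(np.sum((x[:, None, :] - means[None, :, :]) ** 2, axis=2), axis=1)

mean_max_resp = {}
for sigma in [2.0, 0.5, 0.05]:
    r = responsibilities(x, means, sigma)
    same_as_kmeans = np.all(r.argmax(axis=1) == nearest)
    mean_max_resp[sigma] = r.max(axis=1).mean()
    print(f"sigma={sigma}: argmax == nearest mean: {same_as_kmeans}, "
          f"mean max probability = {mean_max_resp[sigma]:.3f}")
```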

C

There are some tricks behind that, but I don't need to go into them right now. So that was an early result, but it's not quite there yet, like this one. I admit that some of my gradients were wrong or something, but it was still beginning to give something nice, in a way that you see here: I didn't change the scale, I just get the same scale for my parameters back, with the same survey, by putting this non-local information into my inversion. Before, the sphere was invisible and the inversion was putting a very conductive [layer] stuck on top. Now I still get some highs at the surface, but I start to distinguish [the sphere]. So that's kind of the idea.

C

This was an early result, and now my code is all broken, but it was encouraging at that point, and I'm still working on it. So now, to show what my objective function looks like: that's what it looks like, and [unclear]. My data-fitting term is still the same, but now, instead of a norm, I have the negative log of a sum of weighted exponentials.
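
Written out, as a sketch assuming $N_c$ independent cells, each with a vector $\mathbf{m}_i$ of $p$ physical properties, a $K$-component Gaussian mixture with weights $w_k$, means $\boldsymbol{\mu}_k$ and covariances $\boldsymbol{\Sigma}_k$, and a trade-off parameter $\beta$ (this notation is assumed here, not taken from the slides):

$$
\phi(\mathbf{m}) = \frac{1}{2}\big\lVert\mathbf{W}_d\big(F(\mathbf{m})-\mathbf{d}^{obs}\big)\big\rVert_2^2
-\beta\sum_{i=1}^{N_c}\log\left(\sum_{k=1}^{K}\frac{w_k}{\sqrt{(2\pi)^{p}\det\boldsymbol{\Sigma}_k}}\exp\!\Big(-\frac{1}{2}(\mathbf{m}_i-\boldsymbol{\mu}_k)^{\top}\boldsymbol{\Sigma}_k^{-1}(\mathbf{m}_i-\boldsymbol{\mu}_k)\Big)\right)
$$

With $K = 1$ the second term reduces to a weighted L2 smallness term plus a constant, which recovers the Tikhonov case.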

C

I put the probability here: it's always positive, but it can get as close to zero as we want. That means I take the log of something that is always positive but can get close to zero, maybe very, very fast too. So that causes me some numerical issues, because I take the log of something very close to zero, so sometimes it can blow up. That's why, even if it's infinitely differentiable, the gradient and the Hessian can get very big very fast. That's one of the problems I'm facing right now.

And the last point, I don't know if it's a problem or not: in most cases, this thing, the probability density, is going to be less than 1, so my negative log is going to be positive. But we talked about probability, and we should not be talking about probability; we should be talking about probability density. A probability stays between 0 and 1, but a probability density does not, so this term can sometimes be more than one. So I get something in my objective function that is negative, as you see here, which should not be a problem.
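
Both points can be reproduced in a few lines, using a made-up two-class mixture with narrow classes. Far from every class mean each density underflows to zero, so the naive log returns infinity, while evaluating the log of the sum with `scipy.special.logsumexp` stays finite. (This is a standard remedy, not necessarily what the speaker's code does.) The second part shows a density larger than 1, which makes the negative log-prior negative:

```python
import numpy as np
from scipy.special import logsumexp
from scipy.stats import norm

weights = np.array([0.5, 0.5])
means = np.array([0.0, 1.0])
stds = np.array([0.05, 0.05])   # narrow classes

def neg_log_mixture_naive(m):
    return -np.log(np.sum(weights * norm.pdf(m, loc=means, scale=stds)))

def neg_log_mixture_stable(m):
    # log(sum_k w_k N_k(m)) computed as logsumexp_k(log w_k + log N_k(m))
    return -logsumexp(np.log(weights) + norm.logpdf(m, loc=means, scale=stds))

far = 10.0   # every class density underflows to exactly 0.0 here
with np.errstate(divide="ignore"):
    naive_far = neg_log_mixture_naive(far)
stable_far = neg_log_mixture_stable(far)
print(naive_far, stable_far)   # inf versus a large but finite number

# A density is not a probability: a narrow Gaussian is larger than 1 at its mean,
# so the negative log-prior is negative there.
print(norm.pdf(0.0, scale=0.05), neg_log_mixture_stable(0.0))
```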

C

If you really want, you can just shift it by a constant to make sure everything stays positive; it just bothers you. It's just an annoying detail when you look at your log results and see that your phi_m is negative. And I haven't included this one in the presentation, but in the same way we can include the smallness, etc. We can include the gradient [terms] in addition to that thing. And one reason I mentioned that I was really interested in the composite objective function is that, for instance, beta cooling comes very naturally when we have a model drawn from a Gaussian distribution, but with this one I don't have anything like that. That doesn't mean I don't want to put anything.

C

I would probably put some weights, like [unclear], or something like another alpha, but I would definitely use another beta, with a different beta strategy. I don't know, for example, what [unclear] did at CSM: I don't think he used this framework to derive k-means. He basically took the objective function and added the objective function you usually use for k-means, which is a special case of this.

C

Once you get some simplifications, you just add a term to your objective function, so we still have the smallness and derivative terms, etc. I think maybe it helps; maybe it also stabilizes the problem when this term becomes nasty. But I will definitely use a composite [objective function].

I will do beta cooling on the smallness [unclear], but for this term I will try to make its beta stay the same, because there is nothing appearing on that side that [calls for it]: mathematically speaking, in that framework, I don't see any reason to do cooling. Maybe numerically, but we'll see that later. So that's just an example. That's my model here, and I just have five Gaussians to fit it, and that's quite nice: [unclear] in blue.

C

Right now, how I do that is I created a new regularization class [unclear]. So now in the inversion, in the eval function of the regularization, it evaluates the negative log-likelihood, and the gradient and Hessian of the negative log-likelihood. Okay.
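
A hypothetical sketch of the three pieces such a regularization needs: the value, gradient, and Hessian of the negative log-prior. It assumes a 1D Gaussian mixture applied independently to every cell, so the Hessian is diagonal. The class and method names are invented for illustration and are not the SimPEG `Regularization` API:

```python
import numpy as np
from scipy.special import logsumexp

class GaussianMixturePrior:
    """Hypothetical sketch (not the SimPEG API): phi_m(m) = -sum_i log sum_k w_k N(m_i; mu_k, s_k^2)."""

    def __init__(self, weights, means, stds):
        self.log_w = np.log(np.asarray(weights, dtype=float))
        self.means = np.asarray(means, dtype=float)
        self.var = np.asarray(stds, dtype=float) ** 2

    def _log_terms(self, m):
        # log(w_k * N(m_i; mu_k, var_k)), shape (n_cells, n_classes)
        diff = m[:, None] - self.means
        return self.log_w - 0.5 * np.log(2.0 * np.pi * self.var) - 0.5 * diff**2 / self.var

    def _resp_and_slope(self, m):
        log_terms = self._log_terms(m)
        # class responsibilities gamma_ik, normalized with logsumexp for stability
        gamma = np.exp(log_terms - logsumexp(log_terms, axis=1, keepdims=True))
        slope = (m[:, None] - self.means) / self.var   # derivative of -log N_k w.r.t. m_i
        return gamma, slope

    def __call__(self, m):
        """Negative log-prior (value)."""
        return -np.sum(logsumexp(self._log_terms(m), axis=1))

    def deriv(self, m):
        """Gradient: sum_k gamma_ik (m_i - mu_k) / var_k."""
        gamma, slope = self._resp_and_slope(m)
        return np.sum(gamma * slope, axis=1)

    def deriv2_diag(self, m):
        """Diagonal of the Hessian; it can be negative between classes (non-convex prior)."""
        gamma, slope = self._resp_and_slope(m)
        mean_slope = np.sum(gamma * slope, axis=1)
        return np.sum(gamma / self.var, axis=1) - (np.sum(gamma * slope**2, axis=1) - mean_slope**2)

prior = GaussianMixturePrior(weights=[0.6, 0.4], means=[-2.0, 0.5], stds=[0.3, 0.4])
m = np.array([-1.8, -0.5, 0.7])
print(prior(m), prior.deriv(m), prior.deriv2_diag(m))
```

Computing the responsibilities through `logsumexp` keeps the gradient and Hessian finite even when every class density underflows.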

A

Awesome. And then the other pull request I wanted to go over (and maybe I'll let Rowan jump in briefly here too) is pulling out the mesh class, so now it's in the discretize package, and there's no mesh inside of SimPEG anymore. This is on dev right now, but I would like to get it down to master soon-ish. So all the meshing changes can be done strictly in discretize. And then Rowan updated the Richards stuff.

E

Yeah. I mean, it works better now, so you can invert for any of the different empirical parameters. I might give a talk about that at a later time, because I think it has some applications to IP, and any time we want to do a non-linear mapping that depends on the model or on the fields. I'm sure we'll be getting into more of those sorts of things, especially as we get into joint inversions as well.

E

Yeah, I could prepare something for the next couple of weeks. Yeah. And then there's that one other thing: numpy, scipy, and scipy.sparse are no longer being exported by `from SimPEG import *`. So if you're relying on those, you'll probably have to add the other imports at the top of various files.
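
For scripts that relied on those names, a minimal fix is to import them explicitly at the top of the file. The aliases below (`np`, `sp`) are the common conventions and are an assumption about what a given script used:

```python
# Previously available implicitly through `from SimPEG import *`; now imported explicitly.
import numpy as np
import scipy.sparse as sp

# Example use in a typical script: a sparse diagonal matrix built from a NumPy array
W = sp.diags(np.ones(5))
print(W.shape)
```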

E

Yeah, I think it's pretty simple. I don't think there are any breaking changes other than the numpy/scipy thing. The discretize package is exactly the same. It sounds like Joe is working on some speed improvements, especially on the tree mesh side of things. I don't have an idea of when that sort of stuff will land, though.

E

And this sort of allows people to jump in at different levels. For example, Joe is jumping in on the discretize side of things, which is finite volume with derivatives in mind. That's what that package is for: it doesn't have anything to do with inverse theory or inverse frameworks. You just want to take a derivative with respect to your properties on the mesh. Yeah.

D

The same, yeah. It's the same old; it's just [unclear] bug hunting. Yeah. One thing, though, that would be nice to change is the print statement when we're solving: can we print at the end of everything, after the directives are applied? Or, I should say, before the directives are applied.

E

I don't have any strong preferences without seeing it one way or the other. Don't let it hold it up. Sweet. And then also, on the printing, I've been looking at some other table stuff; there's some good stuff out of astropy that we could possibly rely on, or ask them to break it out or something like that. I don't know, for print to file... Yeah, print to file and console logging, which is similar to what [unclear] talked about last week or two weeks ago.

E

Yeah. So there's some maintenance there, because our iteration printers are terrible. [unclear] They do the trick, but especially as we're going to this composite objective function stuff, it is sort of difficult to see all of the various pieces at once.

A

And from talking with Doug and Seogi, it sounds like MT is one of the things that has been quite important, both in Indonesia and in India. So getting some of those examples built up, and having [unclear] on it (we'll just call him out), would have a big impact. So that's exciting.