
Description

Watch the playback of a hands-on GitLab CI workshop to learn how GitLab CI can fit in your organization!

We will kick things off by going over the differences between CI/CD in Jenkins and GitLab, syntax requirements, the advantages of using GitLab, and how you can achieve the same outcomes in GitLab. Getting started with CI/CD in GitLab takes a lot less time than it tends to in Jenkins, and your users can stay in a single platform.

We will then dive into how to build simple GitLab pipelines and work up to more advanced pipeline structures and workflows, including security scanning and compliance enforcement.

The goal for today is to give you a chance to understand some of the differences between Jenkins and GitLab CI/CD, and then to dive into creating simple pipelines, with some of the more advanced features included, so you get a taste of how easy these are to get started with.

My name is Steve Graham, and I'm a customer success engineer on the Scale team here at GitLab. I've included my LinkedIn profile link there if any of you would like to get in touch. Just for awareness: tomorrow you're going to get an email with a link to the slide presentation used today and a link to the recording we're making, so you can review either one or share them with your peers.

Let's talk about some of the platform advantages GitLab has. One of the biggest is reduced tool switching. Regardless of your subscription, pipelines run in the same project where developers make their commits and merge requests, and all pipelines are viewable from a project's Pipelines page. Pipelines can also be used to enable environments in projects, which is a way to track which releases, commits, or tags went out to which environments, whether that's production or staging.

Ultimate subscriptions also allow for the creation and enforcement of compliance framework pipelines. If you have to comply with HIPAA, PCI, or something along those lines, you can label a project appropriately and enforce pipelines on it, provided you have an Ultimate subscription.


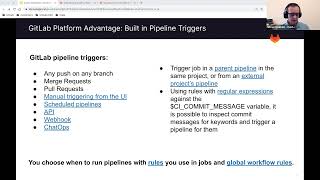

Another advantage of GitLab is built-in triggers. You don't have to build your triggers yourself the way you do in Jenkins; they're built into GitLab: any push on any branch, and merge requests (what other tools call pull requests). Pipelines can be triggered manually from the UI if you want to do that, and, very much like Jenkins, you can schedule pipelines.

There's also an API, and you can run a pipeline from the API. You can also run pipelines from webhooks, and from ChatOps if you want to take that route. An additional thing to think about is that a job in a parent pipeline can trigger a child pipeline in the same project. That child is an independent pipeline, triggered during the course of a pipeline run in your project.
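A minimal sketch of a parent pipeline triggering a child pipeline in the same project. The job names and the `ci/child-pipeline.yml` path are hypothetical; `trigger:include` and `strategy: depend` are standard GitLab CI keywords.

```yaml
stages:
  - build
  - test

build-app:
  stage: build
  script:
    - echo "Building the application"

# Trigger job: starts a separate child pipeline defined in another file
# of the same repository (the file path is hypothetical).
run-child-pipeline:
  stage: test
  trigger:
    include: ci/child-pipeline.yml
    strategy: depend   # the trigger job mirrors the child pipeline's final status
```

Without `strategy: depend`, the trigger job is marked successful as soon as the child pipeline is created.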

You can also use rules with regular expressions against the `CI_COMMIT_MESSAGE` variable: it's possible to inspect the commit message for keywords and trigger a pipeline based on them. A really important note here is that GitLab CI/CD includes the concept of rules, and rules decide whether a job runs. They can be put in a job.
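For example, a job-level rule can inspect `CI_COMMIT_MESSAGE` with a regular expression. This sketch assumes a hypothetical `[docs]` keyword and a hypothetical build script:

```yaml
build-docs:
  script:
    - ./scripts/build-docs.sh   # hypothetical script
  rules:
    # Run only when the commit message contains the keyword "[docs]"
    - if: $CI_COMMIT_MESSAGE =~ /\[docs\]/
```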

They can also be applied to the entire pipeline as workflow rules, which regulate when to run a pipeline and when not to. For example, you may not want to run a pipeline on a feature branch until it becomes a merge request, and only at that point start running pipelines on it. If you want to, you can also run a pipeline on the merge commit when that merge request lands in the default branch.
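A sketch of workflow rules for the scenario just described: no pipelines for plain feature-branch pushes, pipelines for merge requests, and pipelines on the default branch (which covers the merge commit).

```yaml
workflow:
  rules:
    # Create pipelines for merge requests...
    - if: $CI_PIPELINE_SOURCE == "merge_request_event"
    # ...and for commits on the default branch, such as merge commits.
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
    # Anything else (e.g. a push to a feature branch without an MR) matches
    # no rule, so no pipeline is created.
```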

Another advantage is pipeline template repositories. This is a big one, and I can tell you that a ton of our customers are using them right now. DevOps teams can maintain common job files or entire pipelines in centralized projects for use in other projects, and when those templates are pulled in, they run with the downstream project's variable context.

It's something you can take a look at later if you want. With pipeline template repositories, projects can include pipeline files from other repositories in their own pipeline files by using the `include` keyword with the `project` syntax. This also serves projects that don't want to maintain pipelines at all. We've all got circumstances like that, where developers just don't want to pay attention to DevOps; it's not part of their mindset, and they want to stay focused on their code.
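A sketch of a consuming project pulling jobs from a central template repository. The project path, ref, and file names are hypothetical:

```yaml
include:
  - project: my-group/ci-templates   # hypothetical central template project
    ref: main                        # pin a branch, tag, or commit SHA
    file:
      - /templates/build.yml         # hypothetical template files
      - /templates/test.yml
```

Pinning `ref` to a tag lets the DevOps team change templates without silently changing every consumer's pipeline.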

Pipelines run independently of each other, and each job gets a fresh environment. What you think of as a stage in Jenkins, with steps enumerated below it that run for that particular stage, is typically called a job in GitLab. GitLab does have the concept of stages, but stages are meant to contain jobs; they don't have steps enumerated in them.
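As a rough sketch of the mapping, a Jenkins `stage('Build') { steps { sh 'npm ci'; sh 'npm run build' } }` becomes a job whose `script` holds the steps, while the stage only groups jobs. The npm commands are assumptions for illustration:

```yaml
stages:
  - build
  - test

build-app:        # job: the unit that holds the steps
  stage: build    # stage: only groups jobs and orders them
  script:
    - npm ci
    - npm run build

unit-test:
  stage: test
  script:
    - npm ci      # each job starts in a fresh environment, so install again
    - npm test
```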

GitLab runs a cleanup after each job to ensure a clean working environment for the next job, so you no longer need to add cleanup functionality to jobs where it may be required today. We also let you create manual jobs with optional approvals. This is a really neat feature: let's say, for example, you want to run this pipeline, but then go through an approval process before you release to production. In pipelines for protected branches, only users who are allowed to push or merge can run the manual job, and if the job deploys to a protected environment, only users allowed to deploy to that environment can run it. By the way, jobs can declare an environment, including the production environment.
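A sketch of a manual production deployment that declares its environment. The deploy script is hypothetical. Who may press the play button, and any required approvals, are configured by protecting the `production` environment in the project's CI/CD settings rather than in the YAML.

```yaml
deploy-production:
  stage: deploy
  script:
    - ./scripts/deploy.sh production   # hypothetical deploy script
  environment:
    name: production                   # tracked on the project's Environments page
  rules:
    # On the default branch, wait for someone to press the play button.
    # On other branches no rule matches, so the job is not created.
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
      when: manual
```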

What you'd think of as an agent in Jenkins, we would think of as a runner. Runners simply pick jobs up and run them. As for post jobs: we do have an `after_script` capability in a job that you can think of as similar to a Jenkins `post` section. But a `post` section in Jenkins is typically something you use to do some cleanup on the agent.

If you need to do that, it's supported with additional stages, but again, runners run cleanup of their own. So depending on what you need to accomplish there, you can add some additional stages. For us, stages are a categorization method we put jobs into; think of a stage as a container for jobs. It's not a literal container, though. It's just a place we put jobs into, and GitLab displays the jobs by stage and runs the stages sequentially.
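If you do need Jenkins-`post`-style cleanup beyond what the runner does, one sketch is an extra stage with a job that runs regardless of earlier failures. The stage name and teardown script are hypothetical:

```yaml
stages:
  - build
  - test
  - cleanup

unit-test:
  stage: test
  script:
    - npm test

teardown:
  stage: cleanup
  when: always                  # run even if jobs in earlier stages failed
  script:
    - ./scripts/teardown.sh     # hypothetical cleanup script
```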

When you think about stages in Jenkins today, those have steps enumerated underneath them. In GitLab, we use the `script` keyword for that. `script` is an array of commands that are executed in a shell on the runner, so if the job is running in a Linux container, it's typically running in Bash.

So just be aware that there are some variations there. With respect to your environment, we use the `variables` keyword; that's where you set up the variables that are going to be available. Variables can be set globally for all jobs, or individually for jobs if you want to do that. Jenkins options simply map to job keywords, and those keywords change the way jobs behave.
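A sketch of global versus job-level variables. `NODE_ENV` and its values are assumptions for illustration:

```yaml
variables:
  NODE_ENV: "test"            # global: available to every job

build-app:
  stage: build
  variables:
    NODE_ENV: "production"    # job-level value wins for this job only
  script:
    - echo "Building with NODE_ENV=$NODE_ENV"

unit-test:
  stage: test
  script:
    - echo "Testing with NODE_ENV=$NODE_ENV"   # uses the global value
```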

You can prefill variable values in your `.gitlab-ci.yml` file, so it's possible to define the variables that show up on the manual Run pipeline page and have them prefilled if you want to take that route. You can also provide a list of options for each of those variables, similar to what Jenkins does. As for triggers and cron: because GitLab is tightly integrated with Git, SCM polling options for triggers are not needed.
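A sketch of a prefilled variable with a list of options for the Run pipeline page. `DEPLOY_TARGET` and its values are hypothetical, and the `options` list assumes a recent GitLab version that supports it:

```yaml
variables:
  DEPLOY_TARGET:
    value: "staging"          # prefilled on the Run pipeline page
    options:                  # shown as a dropdown; the value must be one of these
      - "staging"
      - "production"
    description: "Where a manually run pipeline deploys to."
```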

We support cron syntax for scheduled pipelines: you can go into a project in GitLab and schedule pipelines to run whenever you want them to. The last of these is tools. I've looked at tools in Jenkins; there are only a few of them available, and they seem very Java-oriented right now. GitLab does not support a separate `tools` directive.
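The schedule itself (a cron expression such as `0 2 * * *`) is created in the project UI. In the YAML, a job can be limited to scheduled runs, and its toolchain comes from the container image. A sketch with a hypothetical report script:

```yaml
nightly-report:
  image: node:20     # tools come from the job's image, not a `tools` directive
  script:
    - ./scripts/nightly-report.sh              # hypothetical script
  rules:
    - if: $CI_PIPELINE_SOURCE == "schedule"    # only for pipeline-schedule runs
```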

It's really important that you have a working GitLab.com account. You need to be registered at GitLab.com because we're going to provision an Ultimate subgroup for you under our GitLab Learn Labs. This lets you kick the tires, if you will, and play around with the Ultimate features. If you've got an Ultimate subscription, it's a direct equivalent, but everything we're going through in this workshop today is covered in all of our tiers.

We'll look at pipelines that follow a very linear order for any specific job, and then we're going to go through rules and failures and how to deal with those. Then we'll set up SAST scanning which, by the way, is available at every single tier of GitLab: regardless of which GitLab tier you have, SAST scanning is available to you. We're also going to talk about how to deal with artifacts, and at the very end, we'll talk quickly through what you would have to do to transfer a project.

When you click Redeem invitation code, you'll need to enter the code we've given you and then click Redeem and create account. It's actually not creating an account for you at GitLab.com, but it is going to provision this subgroup for you. The very next thing you'll need is your GitLab username: everything except the @ symbol.

Then you can click My group to go directly to your group. You're going to end up at a group that looks like this. Yours will have a different set of letters and numbers here; that's just a hash value generated from the registration code and your GitLab username.

But if you happen to land here instead, something's gone wrong, so go back and do it one more time. Nine times out of ten when this happens, somebody has included the @ symbol in their username when they entered it. So go back, put in the invitation code again, put in your GitLab username, click Provision training environment, and hopefully you'll end up back here.

If I click Provision training environment, I'm going to see this page. Again, you'll really want to make a note of this in case you want to come back to it over the next four days, so you have a way to get back to it quickly. When you're ready, just click My group. As you can see, it opens the group in a separate tab, and this particular sequence of letters and numbers is mine; it's specific to me.

Before fully pushing out the application, your team wants to test a few different types of pipelines to see what fits their needs best. The first task your product manager gives you is to create a simple pipeline that builds and tests the racing application. You're going to need to navigate to this URL. Chris, would you mind putting that in for us?

Again, we're working in split-screen mode today so that I can demonstrate this to you. The downside, of course, is that it squeezes my screen between these two windows, which condenses things so you don't see your normal layout as we go, but I'll show you how to work around that if you need to.

Once you click the Fork button, you'll have to pick a namespace to fork the project into. You want to pick the group you just provisioned in the previous step. That's an Ultimate subgroup, so it has all our features available in it. That's the place.

There are a couple of things that are important to realize here. We have a `before_script`: commands that execute before the `script` statement does. `script` you can think of as steps; it equates to the steps you would run in your stages in Jenkins. The `before_script` is typically used to pull in libraries, things like that.

People use it when they need to install things along those lines. But if that's what you'd be using it for, we really recommend that you start building containers of your own, and you can store them in GitLab. GitLab has a container registry, so you can push your images there and consume them from your pipelines. Then `script` you can think of as the classic steps you would have in your stages in Jenkins. We also have an `after_script`, and it has some implications worth knowing.

It runs in a separate shell after the `before_script` and `script` statements. Also, if something fails in the `before_script`, that fails your job: if any individual command there returns anything other than zero, say it returns a one, that causes the job to fail.
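A sketch of the three script sections together, using a prebuilt image from the project's container registry instead of installing tools in `before_script`. The image name is hypothetical; `CI_REGISTRY_IMAGE` and `CI_JOB_STATUS` are predefined variables.

```yaml
unit-test:
  # Hypothetical image built by your team and pushed to the project's registry
  image: $CI_REGISTRY_IMAGE/node-build:latest
  before_script:
    - npm ci                 # a non-zero exit code here fails the job
  script:
    - npm test               # the classic Jenkins "steps"
  after_script:
    # Runs in a separate shell after before_script and script, even if they failed
    - echo "Job finished with status: $CI_JOB_STATUS"
```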

GitLab runners run all the jobs you define in a pipeline. You can think of runners as the classic agents or nodes in Jenkins. They can be tagged so that specific jobs run only on specific runners, which means you can take the time to set up a runner that looks a lot like a classic Jenkins agent if you want to.
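A sketch of pinning a job to tagged runners. The tag and script are hypothetical; a job is picked up only by runners that carry every tag it lists.

```yaml
build-on-dedicated-runner:
  tags:
    - linux-large            # hypothetical runner tag
  script:
    - ./scripts/build.sh     # hypothetical build script
```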

These won't be in your forked project, because when you fork a project, it doesn't bring issues over; it only brings the code. So we're going to go to Issues. All the steps we're going through today, including the two optional ones we won't cover today but that you have four days to work through if you want, are listed at the top here. We're going to go to the simple pipeline, and we can skip step one because we already did it.

That was forking our project, so now I'm going to create a simple pipeline. First, click the project overview in the top left of the screen, which is where we are right now. Now that we have our project imported, take a look at the `.gitlab-ci.yml` file. You can see we've got a very simple pipeline defined here: it just has a build app job and a unit test job.
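The workshop project's actual file isn't reproduced in this transcript, but a two-job pipeline of that shape might look like the following sketch. The `node:20` image and npm commands are assumptions:

```yaml
stages:
  - build
  - test

build app:
  stage: build
  image: node:20
  script:
    - npm ci
    - npm run build

unit test:
  stage: test          # starts only after every job in the build stage has finished
  image: node:20
  script:
    - npm ci
    - npm test
```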

You can see a pipeline is running now, and if I click View pipeline, we can take a look at it. The build app job takes a little while to run, so I'm not going to wait on it very long. Just for awareness, build app has to finish first: as you can see, the unit test isn't running yet, and it won't run until build app is done.

Now it's telling us to view the job by using the left-hand navigation to go to Build > Pipelines. Let me show you what that looks like: when we go to Build > Pipelines, it takes us to a similar page. To show this the way it looks on GitLab itself, if we just click the running pipeline, we'll be able to take a look at it.

After you show off your simple pipeline to the team, they love it, but they're wondering if you can speed up the process a little. You decide to show off your skills by creating a pipeline with a different execution order, as well as a large directed acyclic graph, to show what's really possible.

The code quality job doesn't need the results of the build; it executes directly on the code itself, so it can run in parallel with the build. But we want to keep code quality in the test stage because it just makes sense: it's a test. So we keep it in the test stage, and we have the ability to separate the execution order from the stage order.

We can adjust the job execution order for pipeline efficiency, and this is a very big deal. What you're seeing at the bottom of that code quality job is the `needs` keyword with an empty array. That empty array means there are no dependencies for this job, so it's eligible to start running as soon as the pipeline fires up. What we'll see when we put that in is that, at the beginning of the pipeline execution, build app and code quality both go active right away.
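A sketch of a code quality job that stays in the `test` stage but starts immediately because of `needs: []`. The image and lint command are assumptions standing in for the workshop's real check:

```yaml
code quality:
  stage: test
  needs: []          # no dependencies: starts as soon as the pipeline starts
  image: node:20     # assumed image
  script:
    - npm ci
    - npx eslint .   # assumed lint command
```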

Here's an example of a directed acyclic graph pipeline that uses `needs` to run as fast as possible. In this case, `build_a`, `test_a`, and `deploy_a` run in series, but they don't wait for anything that has to do with `build_b`. So `test_a` runs as soon as `build_a` is done, `deploy_a` runs as soon as `test_a` is done, and the same is true for the B series here.
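A sketch of that directed acyclic graph, with job names reconstructed from the walkthrough and placeholder `echo` commands:

```yaml
stages: [build, test, deploy]

build_a:
  stage: build
  script: echo "build a"
build_b:
  stage: build
  script: echo "build b"

test_a:
  stage: test
  needs: [build_a]     # starts as soon as build_a finishes, ignoring build_b
  script: echo "test a"
test_b:
  stage: test
  needs: [build_b]
  script: echo "test b"

deploy_a:
  stage: deploy
  needs: [test_a]
  script: echo "deploy a"
deploy_b:
  stage: deploy
  needs: [test_b]
  script: echo "deploy b"
```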

It's actually possible to build stageless pipelines in GitLab if you want to. To be very frank with you, this isn't something I adhere to or like to do. But if you take the time to define the `needs` for every single job in your pipeline, it's going to run faster.

So why is this useful? A stageless pipeline makes your pipeline more efficient: you configure the execution order implicitly, it's faster to write, and it's a more efficient pipeline with less cycle time. Now, to be very frank: if you just take the time to define `needs` on every single job, so that every job knows when it can run and what its dependencies are, you're going to get just as fast a pipeline even if you're using stages.
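A minimal stageless sketch. It assumes a GitLab version that allows `needs` between jobs in the same stage. The job names and commands are placeholders.

```yaml
# No `stages:` list and no `stage:` keys: every job defaults to the `test` stage,
# so `needs` alone determines the execution order.
lint:
  needs: []
  script:
    - echo "lint"

compile:
  needs: []
  script:
    - echo "compile"

package:
  needs: [compile]     # waits for compile only, not for lint
  script:
    - echo "package"
```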

In this challenge, we're going to build off the simple pipeline we created in the first track and show how you can modify the execution order and create a directed acyclic graph. Coming in from the last track, you should be on the pipeline page. If you navigated away, just go back to your project and use the left-hand navigation to go to Build > Pipelines. By the way, let's take a quick look at the unit test here.

All right, these are pending right now, which means they're waiting on a runner. This one got access to a runner; these two didn't. I'll have to check the runner capacity when we're done today and make sure everything's okay, but ordinarily we would see code quality and unit test start immediately here.

If we click the Visualize tab, we'll be able to see this. You can see our original build app, unit test, and code quality jobs are still in here, but now we've got all these subsequent jobs and stages tied together with `needs`, and this is a directed acyclic graph.

If you don't put a rule in a job, it's going to run for every single trigger you impose on your project. A job is included in a pipeline if a rule evaluates to true. So it's possible to write a rule that has a test in it, an "if this equals that" kind of thing, with a `when: on_success`, `when: delayed`, or `when: always` clause; when the test passes, that job gets included. If no rule is defined at all, the job is included with the default `when` clause.

Rules evaluate whether and when the job runs. In this particular case, we're showing a really simple rule at the top. Remember that `when: on_success` is the default in GitLab: if you don't specify it, GitLab assumes `when: on_success` is what you want to happen. Note that the `rules` block always evaluates before the script runs. The rules are evaluated in GitLab itself; the script (the steps, if you will) runs on the runner once the job is actually up and running.

So if `CI_PIPELINE_SOURCE` is this particular source, we want this job to run: this job will only run when the pipeline is kicked off from the web form. Some things to think about with this rule syntax, with respect to clauses: we can use `if`, which is just an evaluation. We can use `changes`, which looks for changes in a specific file or in a directory of files. We can also check whether a file even exists, using the `exists` keyword.
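A sketch of the `if` and `changes` clauses. The job names, file paths, and script are hypothetical:

```yaml
web-only-job:
  script:
    - echo "Started from the Run pipeline form"
  rules:
    - if: $CI_PIPELINE_SOURCE == "web"   # pipeline started from the UI form

docs-lint:
  script:
    - ./scripts/lint-docs.sh             # hypothetical script
  rules:
    - changes:                           # a specific file or a whole directory
        - README.md
        - docs/**/*
```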

So if a `Dockerfile` exists, this particular build job builds our container. The operators for `if` statements are `==` and `!=`, which are what you'd expect from programming. The tilde operators, `=~` and `!~`, are regular expression matches, so you can use regular expressions in rules in GitLab jobs. And then `&&` and `||` are what you think they are.
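A sketch combining `exists` with the comparison, regex, and logical operators. The `my-group/sandbox` path is hypothetical, and the `echo` stands in for a real container build:

```yaml
build-container:
  script:
    - echo "Building the container image"   # placeholder for the real build
  rules:
    - exists:
        - Dockerfile                         # only if the file is in the repo

release-check:
  script:
    - echo "Release branch outside the sandbox project"
  rules:
    # =~ is a regex match; && and != behave as in most languages
    - if: $CI_COMMIT_BRANCH =~ /^release\// && $CI_PROJECT_PATH != "my-group/sandbox"
```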

The delay for `when: delayed` could be minutes, hours, or a day, whatever you need it to be. Then among the `when` options is `always`: inside a rule, it means we want to run this particular job unconditionally if this rule matches. If it's a standalone `when` clause on the job itself and not inside a rule, `when: always` just means the job is always going to run. `when: never` is a good example of a negating rule: say we have a job that we never want to run for a merge request.
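A sketch of `when: delayed` inside a rule and a standalone `when: always`. The scripts are hypothetical:

```yaml
smoke-test:
  script:
    - ./scripts/smoke-test.sh       # hypothetical script
  rules:
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
      when: delayed
      start_in: 30 minutes          # could also be e.g. "2 hours" or "1 day"

collect-logs:
  when: always                      # standalone: runs even if earlier jobs failed
  script:
    - ./scripts/collect-logs.sh     # hypothetical script
```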

We can check whether it's a merge request, and if it is, we put in a `when: never` clause to make sure the job doesn't run in that circumstance. Again, `on_success` just means everything that precedes this job succeeded: it either passed or was allowed to fail. Then `on_failure` is a special use case: if something fails in your pipeline and you want to run a job to do some inspection and check some things out, that's the case for it.
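A sketch of an `on_failure` inspection job. The `diagnose` stage name is an assumption:

```yaml
stages:
  - build
  - test
  - diagnose

unit-test:
  stage: test
  script:
    - npm test

inspect-failure:
  stage: diagnose
  when: on_failure     # runs only when a job in an earlier stage has failed
  script:
    - echo "A previous job failed; collecting diagnostics"
```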

`when: manual` is another special use case. When you create a job with the `when: manual` clause, it becomes a manual job with a play button on it, and anybody who is a developer and able to run a pipeline can click that job to run it, unless it's on a protected branch.

So when is a job not created in the pipeline? A job is not included in your pipeline if none of the rules defined for it evaluate to true. By the way, if you look at these rules here, GitLab goes through them sequentially, and the first one that matches wins. If it finds a match here, that rule wins and GitLab doesn't evaluate any other clauses.

So bear that in mind. If a rule evaluates to true and has a `when: never` clause, the job is not going to be included, and you can see that's the case here. If `CI_PIPELINE_SOURCE` equals `merge_request_event`, we don't want to run this particular job, and if the pipeline source is a scheduled pipeline, we don't want to run this job either. The default for everything else is `when: on_success`.
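A sketch of the negating-rule pattern described here. Only the job name and `echo` are placeholders:

```yaml
regular-job:
  script:
    - echo "Runs for pushes, web runs, API triggers, and so on"
  rules:
    # Rules are checked top to bottom; the first match wins.
    - if: $CI_PIPELINE_SOURCE == "merge_request_event"
      when: never
    - if: $CI_PIPELINE_SOURCE == "schedule"
      when: never
    - when: on_success    # catch-all: no `if`, so it always matches
```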

So this job will execute in any pipeline where `CI_PIPELINE_SOURCE` is not set to `merge_request_event` or `schedule`. In that case it falls through to the last rule, `when: on_success`, which matches because it has no `if` statement, so there's no evaluation. Christopher, I apologize for that. That may be a problem with the way we've got the runners provisioned in this particular group right now, and I'll look into it as soon as we're done with our workshop today.

Then this rule evaluates to true, and in that case the job is a manual job. So again, anybody who's eligible to run that pipeline can run this manual job, unless it's a pipeline running on a protected branch.
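A sketch of a catch-all rule that makes a job manual instead of automatic. The job name and script are hypothetical. `allow_failure: true` is added because a manual job defined in `rules` otherwise defaults to blocking the pipeline until someone runs it.

```yaml
deploy-review:
  script:
    - ./scripts/deploy-review.sh    # hypothetical script
  rules:
    - if: $CI_PIPELINE_SOURCE == "schedule"
      when: never
    - when: manual                  # everything else: a play button instead of auto-run
      allow_failure: true           # don't leave the pipeline blocked on this job
```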

Next up: variable sources and their processing order, in other words, precedence for variables. There are a lot of different places you can put variables. GitLab ships with a very long list of predefined environment variables; search for "GitLab predefined variables" and you'll find the whole list. It's a monster. You also have deployment variables.

Then we have inherited variables, which are variables passed along from other jobs. You can also set up environment-scoped variables in your project so that they're only available to a specific environment, if you want to do that. Then there are instance-level variables: if you're self-hosted, it's possible for someone to set variables at the instance level, and they cascade down to all the groups and all the projects. Then come group-level variables.
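One common source of inherited variables is a dotenv report written by an earlier job. A sketch, with `BUILD_VERSION` and `build.env` as hypothetical names:

```yaml
build-app:
  stage: build
  script:
    # Write KEY=VALUE lines to a dotenv file
    - echo "BUILD_VERSION=1.0.$CI_PIPELINE_IID" >> build.env
  artifacts:
    reports:
      dotenv: build.env

deploy-app:
  stage: deploy
  needs: [build-app]       # inherits BUILD_VERSION from the dotenv report
  script:
    - echo "Deploying version $BUILD_VERSION"
```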

Then CI/CD pipeline trigger variables, scheduled pipeline variables, and manual pipeline run variables have the highest precedence here. Precedence goes from bottom to top on this list, and it's a good thing to keep around as a cheat sheet. When you get the link to this presentation deck tomorrow, grab this particular slide and stash it somewhere.

And the jobs are stuck again. All right, we're going to have to come back and check this later, but we'll be looking for a code quality job that fails but is allowed to fail, so it gets the orange circle with the exclamation point in it. They're still queued, still pending. Okay.
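A sketch of a job that is allowed to fail, which is what produces the orange warning icon instead of a failed pipeline. The image and lint command are assumptions:

```yaml
code quality:
  stage: test
  image: node:20          # assumed image
  allow_failure: true     # a failure shows as a warning and does not fail the pipeline
  script:
    - npm ci
    - npx eslint .        # assumed lint command
```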

It's really important for me to say this: templates are zero percent magical. There's nothing magic about them at all. We run open core, and our Community Edition is open source, so in either case you can go and look at these jobs. You'll see they're just executing the rules and job properties included in those specific templates. Templates are always brought into a GitLab pipeline through an `include` statement in the project's `.gitlab-ci.yml` file.

Now, the four types of includes; this is important to understand. First, `template`, the one on the upper left there. It references content provided by GitLab engineering: it ships with GitLab, and it's not something you build for other teams to consume. For material your DevOps crew builds out and maintains for other people, you use the include file capability, which I call include project. You specify the project by its path from the root of your GitLab instance.

That's everything that follows the domain name and the slash after it. Then you give the path to the particular template file in that project, and that's how you include files from a centralized template repository. You also have the ability to break pipelines out: you might have some very complex pipelines, or multiple independent pipelines that you've defined in separate files.

A

You can just use the local keyword. It just means, you know, look in this particular project, and you give it the path to the file. Then you also have the ability to include remote. Now, this one's kind of an odd circumstance, but let me just explain it real quickly. If we include remote, we're going to give it a full URL path to somewhere else, completely off our server, and that project has got to be publicly viewable; there's no way to authenticate to it.
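
A minimal sketch of the four include types described above, side by side in one .gitlab-ci.yml. Only the SAST template name is a real GitLab template; the group, project, file paths, and URL are hypothetical placeholders.

```yaml
include:
  # template: ships with GitLab and is maintained by GitLab engineering
  - template: Security/SAST.gitlab-ci.yml

  # project: a shared file in another project on the same instance,
  # addressed by its path from the instance root (hypothetical names)
  - project: my-group/ci-templates
    ref: main
    file: /templates/build.gitlab-ci.yml

  # local: a file in this same project (hypothetical path)
  - local: /ci/deploy.gitlab-ci.yml

  # remote: a full URL off this server; it must be publicly reachable
  # because authentication is not supported (hypothetical URL)
  - remote: https://example.com/ci/lint.gitlab-ci.yml
```

Pinning ref on the project include keeps consumers on a known version of the shared templates while the DevOps team iterates on the default branch.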

A

So you can see the two examples shown in the code down below. The variables are being declared in the global namespace here: SECURE_ANALYZERS_PREFIX is being changed, along with the analyzer version, and SECRET_DETECTION_EXCLUDED_PATHS is empty in this particular case. Then we're overriding the image for the secret analyzer as well.
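
A minimal sketch of that kind of override, assuming the Secret Detection template is included and its job is named secret_detection. The registry host and the custom image are hypothetical placeholders, not GitLab-provided values.

```yaml
include:
  - template: Security/Secret-Detection.gitlab-ci.yml

# Global variables, read by the included template's job.
variables:
  # Hypothetical internal mirror of GitLab's security analyzer images
  SECURE_ANALYZERS_PREFIX: "registry.example.com/security-products"
  # Empty string: no paths are excluded from the scan
  SECRET_DETECTION_EXCLUDED_PATHS: ""

# Redeclaring the template's job merges these keys into it,
# here replacing the analyzer image with a hypothetical custom one.
secret_detection:
  image: "registry.example.com/custom/secrets-scanner:1.0"
```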

A

You can configure the SAST language scanner for Node.js. It's important to realize that every single one of our security tests supports a very broad range of languages. You can just let them run with the defaults, but then they have to go through every single one of their options and see whether they can determine which language they need to be testing for. We can actually configure it to do just Node.js if we want to.

A

We know our app is Node.js, so let's tell the job it only needs to use the Node.js scanner by excluding all the rest. The SAST template decides which language scanners to skip using SAST_EXCLUDED_ANALYZERS. That's a variable we can set to list all the analyzers that we don't want to run, so I can set the exact scanners to exclude by defining this variable in my .gitlab-ci.yml file.
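
A minimal sketch of excluding analyzers globally. SAST_EXCLUDED_ANALYZERS takes a comma-separated list; the analyzer names below are examples, and which analyzers exist (and which one covers Node.js) depends on your GitLab version, so check the SAST documentation before copying the list.

```yaml
include:
  - template: Security/SAST.gitlab-ci.yml

variables:
  # Comma-separated list of SAST analyzers that should NOT run.
  # Example names only; match them to the analyzers your version ships.
  SAST_EXCLUDED_ANALYZERS: "bandit, brakeman, flawfinder, gosec, spotbugs"
```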

A

Now, in this particular case, I want you to notice that the code shared on the lower right here redeclares that SAST job and adds a variable to it. That's one way you can do this if you want to. Any time a job has been predefined, you can still declare it in your .gitlab-ci.yml file and override any of its properties there.
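
A minimal sketch of redeclaring the template's predefined sast job to add a job-level variable. SAST_EXCLUDED_PATHS is used here as an illustrative choice because the analyzers read it at scan time; the path list itself is hypothetical.

```yaml
include:
  - template: Security/SAST.gitlab-ci.yml

# Same name as the template's job, so these keys are merged into it.
# The analyzer jobs extend `sast`, so they inherit the variable too.
sast:
  variables:
    SAST_EXCLUDED_PATHS: "spec, test, tests, tmp"   # hypothetical paths
```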

A

If you go to the jobs page, so that you're seeing the jobs in a pipeline listed out in rows, the same thing is true there, except that you're downloading the artifacts for that specific job. You're still going to get an archive file, because a job can leave multiple artifacts if it wants to. And if you go to a specific job...

A

So a best practice is to declare your artifacts with an expiration on them. What will happen is, once they've been uploaded to GitLab, GitLab will start a countdown. In this particular case it's one hour, as you see specified here in the job, so that artifact will expire in one hour and then be deleted. It is possible to keep it, though. This is a good practice to engage in across the board, with all your DevOps engineers and the people who maintain their own pipeline files in their projects.
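
A minimal sketch of a job that declares an artifact with a one-hour expiration. The job name, npm scripts, and dist/ path are hypothetical; expire_in is the real artifacts keyword.

```yaml
build:
  stage: build
  script:
    - npm ci
    - npm run build     # assumes a "build" script that writes to dist/
  artifacts:
    paths:
      - dist/
    expire_in: 1 hour   # countdown starts once the artifact is uploaded
```

Depending on the project's setting for keeping artifacts from the most recent successful jobs, GitLab may retain the latest artifacts on a ref past expire_in, so check that setting if an artifact outlives its timer.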

A

But if, for some reason, somebody needs to keep one and knows it's going to expire in an hour, maybe because they need to pass it through some review after some odd behavior was observed during the job, they go to the job page. Again, what you're looking at is the job page, and you're seeing the job log at the bottom of the screen there. If they click the Keep button over on the right-hand side, that's going to keep that artifact indefinitely.

A

There will be a tree expand icon. Now, if we click on that, it's going to show all the files that are being brought in for this particular pipeline configuration. Now, notice that we can click on this one right here; it's going to open up a new tab, and it's going to take us right to the code for the SAST job so that we can take a look at it.

A

You can inspect it. If we wanted to, we could copy it, but that's not a best practice, because these are being updated all the time, and you don't want to be responsible for maintaining your own SAST job when somebody else is willing to do it. But this is neat, because you can go through and actually take a look at it.

A

Notice we've got two jobs here in this particular case. The SAST job is a job that's then extended by other jobs. The SAST job itself doesn't actually run; it's just extended by other jobs depending on what the configuration is, and you can see that nodejs-scan-sast is included in there and semgrep-sast is included in there.

A

That's going to be used to spawn all the different SAST jobs that are capable of being run, and we're going to override that particular SAST job so that all the SAST jobs move to the security stage. We're also going to set it so that it can run immediately, because SAST jobs, again, run on code. They don't need a build artifact to run against.
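
A minimal sketch of that override, assuming (as described above) that every analyzer job extends the template's sast job and does not set its own stage. The security stage name is this pipeline's own choice, not something GitLab predefines.

```yaml
include:
  - template: Security/SAST.gitlab-ci.yml

stages:
  - build
  - security
  - test

# Overriding the base job moves every analyzer job that extends it.
sast:
  stage: security
  needs: []   # start immediately; SAST scans source, not build artifacts
```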

A

So only transfer this project when you're done, and when you transfer the project, if you don't have an Ultimate license, you might lose some capabilities, just for awareness. Everything that we've been through today is built into every single tier of GitLab, so this doesn't actually apply to the specific workshop we've done so far today, but it could apply to the optional parts.

A

One of the things I want to share with you is number six: optional security and compliance. This is a really good chance to get your hands on and play around with some of GitLab's security and compliance tooling. You're going to be in an Ultimate subgroup. It's going to have all of our features available in it, and you're going to be able to set up our full security and compliance capabilities.

A

Now, that's going to involve setting up compliance, setting up a compliance pipeline, or at least using one in a project, and then going through setting up security policies that trigger approvals for certain types of scan failures. You're also going to be able to look at how you can generate a bill of materials, so you can generate an SBOM to share with vendors if you need to do that in compliance work. And the one at the top has got some very complex, multiple workflows in it.

A

Now, this project, which by the way is one that I maintain, is just a way to stub out pipelines without any job logic at all. So it has the advantage of being able to run very, very quickly using a shell-based runner instead of a Docker runner like what we're accustomed to, and using that shell runner, it's just gonna...

A

In the pipelines page, it's got several pipelines defined here. Now, we're using workflow rules on this particular project to define the names of the pipelines that are going to run based on, you know, matching a particular set of rules. And notice I'm using emojis here that are supported by UTF-8, so you have the ability to use those if you need to. These are all independent pipelines that are capable of being run in this particular project.
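
A minimal sketch of naming pipelines with workflow rules, on GitLab versions that support workflow:name. The variable name and the pipeline names (emojis included) are hypothetical; the predefined variables in the conditions are real.

```yaml
variables:
  PIPELINE_NAME: "Default pipeline"   # fallback when no rule sets a name

workflow:
  name: "$PIPELINE_NAME"
  rules:
    - if: $CI_PIPELINE_SOURCE == "merge_request_event"
      variables:
        PIPELINE_NAME: "🔍 Merge request pipeline"
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
      variables:
        PIPELINE_NAME: "🚀 Default branch pipeline"
    - when: always   # still run other pipelines, under the fallback name
```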

A

There is a README that goes through the basics of it, but in the pipelines folder, I've got several different pipeline job files that are being included with the local include directive, and there's a README in there too that goes through what these are and how they work. So just be aware, there are really good examples here.
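
A minimal sketch of keeping independent pipelines in separate files and pulling each one in with a local include, gated by include-level rules. The file paths are hypothetical; CI_PIPELINE_SOURCE is a predefined variable available when includes are evaluated.

```yaml
include:
  # Each independent pipeline lives in its own file (hypothetical paths)
  - local: pipelines/merge-request.gitlab-ci.yml
    rules:
      - if: $CI_PIPELINE_SOURCE == "merge_request_event"
  - local: pipelines/nightly.gitlab-ci.yml
    rules:
      - if: $CI_PIPELINE_SOURCE == "schedule"
```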

A

So please do take the time. Again, you've got 48 to complete the work for this workshop. We generally only provision two days for workshops, but in this particular case we want you to have time to go through the optional security and compliance step, if you want to take a look at our security and compliance tooling, and then to be able to inspect this other project that I just shared with you. Again, those are enumerated as optional steps six and seven in the source project.

A

That's it for the day. We've finished up just a little bit early, but I appreciate everybody's time, and please feel free to reach out to us if you have any questions. But again, if you qualify for working with