
Description

Watch the playback of a hands-on GitLab CI workshop to learn how it can fit in your organization!

We will kick things off by going over the differences between CI/CD in Jenkins and GitLab, syntax requirements, advantages to using GitLab, and how you can achieve the same outcomes in GitLab. Getting started with CI/CD in GitLab will take a lot less time than tends to be required for Jenkins, and your users can stay in a single platform.

We will then dive into how to build simple GitLab pipelines and work up to more advanced pipeline structures and workflows, including security scanning and compliance enforcement.

A

So you can get a sense of how to start translating your pipelines over to GitLab, and we also want to give you a chance to start playing with GitLab and with pipelines for yourself. During the course of today's workshop, you're going to get provisioned an Ultimate subgroup under the GitLab Learn Labs namespace at gitlab.com, and that's going to give you access to all the features GitLab has available. So you can start to play around, and we would encourage you to do that.

A

We're going to have some fairly specific things we're going to do during the course of the workshop today. There are some optional things that you can pursue, and we'll be talking about those as we go along, but they're up to you. If you want to do them, you can, and if you don't, that's okay too. But we want to encourage you, once you get this subgroup provisioned, to go ahead and play around, do what you want to do, and experiment.

A

A

So today we're going to be talking, again, about the differences between Jenkins and GitLab with respect to how you configure pipelines. And by the way, for whatever it's worth, GitLab is super easy to get started with pipelines in. For one, you're not in a programming language like you are in Jenkins. We use declarative YAML, so you're essentially setting up a configuration file for your pipelines and putting commands in there that represent the steps you want to run, for what we call jobs but what in Jenkins are traditionally called stages.

A

So let's go ahead and get going. My name is Steve Graham; I'm a customer success engineer. And by the way, I've got one of my peers on here, Chris guytart. Chris is one of our senior customer success managers; in fact, he's the only one. Chris is a rock star, and he's here to help out and give us a sense of what makes sense.

A

So let's go ahead and start diving in. Again, tomorrow you're going to be getting an email with a link to the slide presentation used today and a link to the recording we're making, and that gives you the opportunity to review it or share it with your peers. If your account qualifies for customer success engineer engagements (these are the smaller accounts at GitLab), you should expect one of our customer success engineers to contact you next week to inquire about any questions you might have and potential enablement sessions for your team, and to assist with any clarifications that may help you in your conversion to GitLab CI and CD.

However, if you have an assigned customer success manager, you're not going to be hearing from a customer success engineer. So if you've got a regular customer success manager that you have the opportunity to meet with, please reach out to them for the same thing.

A

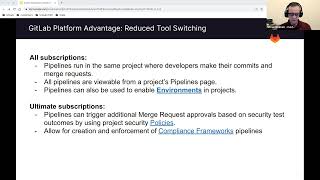

We're going to start by talking about some of the GitLab platform advantages. One of the primary ones is reduced tool switching. So people are having to make their commits in GitLab and then go over to Jenkins and either manually run a pipeline, or maybe you've got a trigger set up there that just fires a pipeline for them. With GitLab, pipelines all run in the same project where developers make their commits and merge requests, and all pipelines are reviewable from a project's pipelines.

A

It's going to go through setting up these policies, and it also goes to the next bullet point, which is that your security teams can create compliance framework pipelines that they can enforce on projects if they want to. So you can actually run pipelines that do testing in these downstream projects if you want to.

Now, this is the next thing: you don't have to build triggers into your pipeline for GitLab. GitLab's just got them built in, and you regulate these triggers with rules, either in your jobs or in your global workflow rules. Rules are an evaluation: they're evaluating maybe the branch that you're making the commit on, or whether it's a merge request. There are several other things.
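
As a rough illustration of the two places rules can live, here is a minimal sketch. The job names and echo commands are made up for this example; `workflow`, `rules`, and the `CI_*` variables are standard GitLab CI keywords and predefined variables.

```yaml
# Global workflow rules decide whether a pipeline is created at all.
workflow:
  rules:
    # Create a pipeline for merge requests...
    - if: $CI_PIPELINE_SOURCE == "merge_request_event"
    # ...and for commits on the default branch. Nothing else starts a pipeline.
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH

unit_test:                 # hypothetical job name
  stage: test
  script:
    - echo "Running unit tests"

deploy_app:                # hypothetical job name
  stage: deploy
  script:
    - echo "Deploying"
  rules:
    # Job-level rule: only add this job when the commit is on the default branch.
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
```

With this sketch, a merge request pipeline would contain only `unit_test`, while a commit to the default branch would contain both jobs.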

A

So any push on any branch will trigger a pipeline. A merge request will trigger a pipeline; pull requests trigger pipelines. You can manually trigger pipelines from the UI: on the Pipelines page there's a Run pipeline button that you can use to manually trigger one, if you want to take that route. We also have the ability for you to schedule pipelines. It's done differently than it is in Jenkins, but it uses a very cron-like syntax, and you can set up pipelines to run. And you can also... oh, Chris, are you seeing my screen?

A


Now, it's also possible, using rules with regular expressions against the CI_COMMIT_MESSAGE variable, to inspect the commit message. (Jocelyn, yes, this meeting is being recorded.) It's actually possible to inspect the commit message, look for keywords, and trigger a pipeline for them.
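
A minimal sketch of that commit-message check might look like the following. The job name and the `[run-integration]` keyword are assumptions made up for this example; `CI_COMMIT_MESSAGE` and the `=~` regular-expression operator are part of GitLab's rules syntax.

```yaml
integration_test:          # hypothetical job name
  stage: test
  script:
    - echo "Running the long integration suite"
  rules:
    # Add this job only when the commit message contains the keyword
    # "[run-integration]" (a made-up convention for this sketch).
    - if: $CI_COMMIT_MESSAGE =~ /\[run-integration\]/
```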

A

A

The users who are triggering the pipelines have the ability to pull these pipeline files into their project. There are a couple of different ways that can happen, but all they have to have is read access to the upstream repository that has the pipeline definitions in it. So your DevOps teams can maintain these projects that have template repositories in them, and they don't have to worry about everybody being able to commit to them.

A

So, the pipeline template repositories: one of the things to note about these is that when you pull these files in and run them as pipelines in a downstream project, they're going to use that downstream project's variable context. You have the ability (and we'll be talking about this more as we go through the workshop today) to set variables in a whole host of different ways, and the variable context that they're going to run in is the downstream project's, not the upstream's.

And again, in order for someone to be able to run that pipeline, they've got to have read access to that centralized repository, but that's all they have to have. This centralized repository can have a ton of different pipelines in it if it needs to. It can contain multiple pipeline definitions for different conditions that exist in the downstream pipelines, or for different types of projects. Maybe you've got Python projects and Node.js projects, and you create independent pipelines.

A

So projects can include pipeline files from external repositories in their own pipeline files. They can create their own .gitlab-ci.yml file and then use an include statement to include the upstream project's pipeline files if they want to. But for projects that don't want to maintain one (and we all know these exist; there are some developers who just don't want to engage in pipeline development at all), it's actually possible for you to go into project settings and delineate...
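
The include statement described above could be sketched roughly as follows. The project path, branch, and file name are placeholders invented for this example; `include:project` with `ref` and `file` is the standard GitLab syntax for pulling a file from another project you can read.

```yaml
include:
  - project: my-group/pipeline-templates        # hypothetical upstream project
    ref: main                                   # branch, tag, or commit SHA to read from
    file: /templates/python.gitlab-ci.yml       # hypothetical file in that project

# Jobs defined locally are merged with whatever the included file defines.
smoke_test:                                     # hypothetical job name
  stage: test
  script:
    - echo "Project-specific smoke test"
```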

A

A

Anytime you bring in a file that's included into your project, using the include syntax in the .gitlab-ci.yml file, it's going to run in the context of your pipeline, of your project itself, so you don't even have to pass those variables to the included file. It's already running in that context. Pipeline jobs run independently of each other, and they have a fresh environment for every single one. This is unlike a Jenkins agent, where the stages run sequentially.

A

A

You can do that with dotenv files, which are an artifact that the upstream job would leave behind and that the downstream job would then require, using the needs or the dependencies keyword, and then it can evaluate it in the context of its runtime. And by the way, GitLab runs a cleanup after every single job to ensure a clean working environment for the next job. The idea is that the runners are highly disposable and highly portable, and runners can run for any project.
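
The dotenv hand-off mentioned above might be sketched like this. The job names, the file name `build.env`, and the variable `BUILD_VERSION` are assumptions for illustration; `artifacts:reports:dotenv` and `needs` are standard keywords.

```yaml
build_app:                       # hypothetical job name
  stage: build
  script:
    # Write KEY=value pairs to a file; BUILD_VERSION is a made-up variable.
    - echo "BUILD_VERSION=1.2.3" >> build.env
  artifacts:
    reports:
      dotenv: build.env          # GitLab loads this file as variables for later jobs

deploy_app:                      # hypothetical job name
  stage: deploy
  needs:
    - job: build_app
      artifacts: true            # fetch the dotenv report from build_app
  script:
    - echo "Deploying version $BUILD_VERSION"
```

Because each job starts from a fresh environment, the variable only reaches `deploy_app` through the artifact; nothing is shared through the runner's working directory.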

A

A

If you will, they're going to have a play button on them. All manual jobs are going to have a play button on them, by the way, but any developer can run these manual jobs. So the way that you get around that is you use things like protected branches. In pipelines on protected branches, only users who are allowed to push or merge to that protected branch can run them.

A

A

You can also add deployment approvals. Now, these are independent of who can click on the Run button. You can add two or three approvals if you think that's appropriate, or maybe one if that's appropriate, and you can delineate who the users are that have to approve it. But you can also pick the people who are allowed to run the jobs in protected environments too.
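
For orientation, a manual deployment job tied to an environment could look roughly like the sketch below. The job name and command are invented; note that branch protection, environment protection, and required approvals are configured in the project's settings rather than in this file, so the YAML only names the environment.

```yaml
deploy_production:               # hypothetical job name
  stage: deploy
  script:
    - echo "Deploying to production"
  environment:
    name: production             # protect this environment in project settings
  rules:
    # Offer the job only on the default branch, and wait for someone to press play.
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
      when: manual
```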

A

Now let's talk about some of the differences in syntax here, just for a few minutes. Jenkins agents are what we call runners in GitLab. Now, they're a very different kind of concept. Agents tend to be highly customized for projects; that's not unusual at all. Runners tend to be highly disposable, so they can be reused over and over again.

You have the ability in Jenkins to create a post set of steps if you need to, or a post job. You can support that with additional stages in GitLab if you need to do it. But remember, the GitLab runners run cleanup jobs already, so if you're using that to do some cleanup, it's not needed in GitLab. And then Jenkins stages, which I tend to see as something that we would call jobs in GitLab, because they tend to have steps enumerated underneath them.

A

We actually have a keyword we call stages too, but a stage to us is a container for jobs. So when you see a GitLab pipeline, you'll see vertical columns, and jobs will be listed in each one of the columns, and those are stages to us. So just be aware of that difference. And then when you delineate the steps in a Jenkins stage, that would become a script in a job in GitLab's pipelines.
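
To make the terminology mapping concrete, here is a small sketch. The job names and commands are invented for the example, and the comments describe the rough Jenkins counterpart as an analogy, not an exact equivalence.

```yaml
stages:            # GitLab stages: the columns in the pipeline graph
  - build
  - test

compile:           # a GitLab job; roughly what a Jenkins stage block would hold
  stage: build
  script:          # each array entry is one shell command, like Jenkins steps
    - echo "Compiling"
    - echo "Packaging"

unit_test:
  stage: test
  script:
    - echo "Running unit tests"
```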

A

So script is an array in YAML, and you can put individual commands in there so that those all get executed. And then environment: to us, this is just variables. Variables can be declared in a job, if you want to declare them specific to a job, or they can be declared globally for an entire pipeline if you want to take that route. Either one is sufficient to get the job done. And then the options that you have in Jenkins...
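
A short sketch of the two variable scopes described above; the variable names, values, and job names are all made up for illustration.

```yaml
variables:
  DEPLOY_REGION: "us-east-1"     # global: visible to every job (hypothetical name)

build_app:
  stage: build
  script:
    - echo "Region is $DEPLOY_REGION"

unit_test:
  stage: test
  variables:
    LOG_LEVEL: "debug"           # job-level: visible only inside unit_test
  script:
    - echo "Region is $DEPLOY_REGION, log level is $LOG_LEVEL"
```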

A

A

Now, with respect to triggers and cron: GitLab is tightly integrated with Git, so SCM polling options for triggers are not needed, and we support a syntax for scheduling pipelines. We're not going to go through that today. But by the way, when you get a copy of this particular deck that I'm working off of today, you'll see that there's a ton of links in it that you can follow to look at the various things and how they work at GitLab.

For awareness, if you're looking at this stream that I'm on right now, the links that are underneath the Jenkins column go to the GitLab documents that describe how to migrate from Jenkins to GitLab, and those tend to explain these differences that we're talking about here. The ones on the left go to our feature pages, so that you can look at those directly and understand how to work with that specific feature.

A

And this is the last of these. With respect to tools: I've looked at the tools in Jenkins, and there are only a few of them right now. They primarily support Java. We don't have any kind of tools directive in GitLab. Best practice in GitLab is to create containers of your own that already have these libraries preloaded in them, and then you can store those in GitLab and consume them in your pipelines if you want to. So it's a very easy and convenient way for you to stub out these containers, create your own Dockerfiles, whatever you need to do, and store them in GitLab during the course of the run of the pipeline.

With respect to input: it's similar to the parameters keyword. It's not needed, because a manual job can always be provided runtime variable entry. Now, GitLab does support a when keyword. It's used to indicate when a job should run. This is specific to jobs in this case: when a job should run, or whether it should run in case of failure, which is a special use case that we'll talk about more as we get going. Most of the logic for controlling pipelines can be found in our very powerful rules system, which is linked here in this document.
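
As a small illustration of the failure case, here is a sketch using `when: on_failure`. The stage name `notify` and both job names are assumptions for this example; `on_failure` makes a job run only when at least one job in an earlier stage has failed.

```yaml
stages:
  - test
  - notify                       # hypothetical extra stage

unit_test:
  stage: test
  script:
    - echo "Running unit tests"

report_failure:                  # hypothetical job name
  stage: notify
  script:
    - echo "An earlier job failed; sending a notification"
  when: on_failure               # skipped when everything before it succeeded
```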

A

Once that subgroup has been provisioned under our GitLab Learn Labs namespace (again, it's an Ultimate subgroup with all of our features available to it), you're going to be the owner of it. So you'll have complete access to do everything you need to do in there. You're going to have access to this workshop environment, this subgroup that you're going to be creating today, for four days. We routinely set these up so that they're available for two days for most of our workshops.

A

A

This is our agenda for today. We're going to go through lab setup, then I'm going to go through setting up a simple pipeline. Next we're going to move on to execution order and directed acyclic graphs, which is a unique feature in GitLab that's pretty exciting. Then we're going to talk about rules and how to deal with failures, and we're going to deal with instantiating GitLab SAST jobs, which are available regardless of subscription.

A

So this is available all the way down to our free tier, and if you want to set up SAST, which is just static application security testing, you can. We're also going to talk about artifacts: how to delineate them and then how to require them. And at the very end, we're going to talk about how to transfer the project. We won't touch on that for very long, but it's available as a step in our process today.
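
For reference, the SAST jobs mentioned in the agenda are usually enabled by including a GitLab-maintained template. The sketch below assumes a project that has no other pipeline configuration yet; the template path is the one GitLab's documentation uses, and its jobs expect a `test` stage to exist.

```yaml
stages:
  - test                                  # the SAST jobs run in the test stage by default

include:
  - template: Jobs/SAST.gitlab-ci.yml     # GitLab-maintained SAST template
```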

A

So let's go ahead and get started. Today, you're officially part of a brand new startup that is creating a public leaderboard for the hit new racing game, Tanuki Racing. We always like to take a chance to put our logo out there. Your company has recently swapped over to using GitLab for CI and CD and has tasked you with learning about different pipeline capabilities.

A

So please don't miss out on the next step, since the group you request will have full access to all of our new AI features for four days. Now, just for awareness: if you have a namespace with a subscription on it at gitlab.com, you can request that AI be enabled in that namespace, and it is enabled in this particular namespace.

A

To register for this workshop and to provision it, again, you'll need to have a gitlab.com account. Once you get there, you're going to get a page that looks like what we're seeing on the right side of my screen. You're going to need to click on Redeem invitation code, and then you're going to be using this registration code that's displayed on the screen, which Chris will be pasting in in just a minute.

A

Now, once you click on that redeem button, you're going to get this, and this is where you get to paste in your invitation code, and you're going to click on Redeem and create account. And by the way, in the very next step you're going to need your username at gitlab.com. What's really important here is that we don't want to include the @ symbol. If you go to gitlab.com while you're logged in and click on your avatar in the header, you'll be able to see your username displayed down below that.

A

A

The GitLab URL that you see displayed down here: really, either bookmark that or copy it out to a document you may be keeping notes in. But if you forget it, you can come back and go through this process again, and you will get provisioned exactly the same one. It's not going to create a new subgroup for you, because the subgroup that it creates is named with a hash value that's a combination of this registration code and your username at GitLab.

When you're ready, you can just click on My Group and it'll take you to your group, and that's going to look a lot like this. Now, you can see "my test group", a dash, and then a weird string. That's just a hash that follows the dash, and it's unique to you.

A

So it's going to be different than what you're seeing on the screen right now. If by chance you end up with a 404, something went wrong, and you'll need to go back and start over again. Most commonly, what happens is people put in the @ symbol with their username when they're entering it in the appropriate field.

A

Let's take a quick minute and go through what we just talked about. By the way, I'm going to be using a split screen today, just so that I can show you multiple things at once. You can see we've got this; I'm at gitlabdemo.com. I'm not logged in, by the way; I've just hit the URL. I'm going to redeem the invitation code now. I'm going to capture this real quickly; bear with me.

A

And you're going to get this page. Again, seriously, bookmark this URL when you follow it, or copy it into a document that you may be keeping notes in. When you're ready, just click on My Group, and it's going to send you to your group that's already been provisioned for you. And again, you're the owner of this group, so you can do anything you want to with it.

A

Let's go back to our agenda real quickly. Next, we're going to talk about setting up a simple pipeline. We're at this point, and we're probably going to end up just a little bit shorter than the two hours we've got provisioned today, so we'll just keep going here. Before fully pushing out the application, your team wants to test a few different types of pipelines to see what fits your needs best. The first task your product manager gives you is to create a simple pipeline that builds and tests the racing application.

A

A

Hopefully you've got a large screen and can work with two regular-size windows. If you don't, and you need to use the split-screen approach, just do whatever your operating system requires to do that. Again, we're going to be working in split screens today.

This source project that we're going to be working from has got the instructions in it. We're going to fork it, and when we fork it, it's going to copy the code, but it's not going to copy the instructions that are in the issues, so just be aware. We're going to start by going to this source project and clicking on the Fork button in the upper right. Then you're going to have to select your provisioned Ultimate group under GitLab Learn Labs.

A


And then, as soon as your project gets forked, you're going to need to remove the fork relationship. This will keep it from potentially creating merge requests going into the original project, which you're not going to have access to create a merge request in. So we're going to go to Settings, go to General, scroll down to the Advanced section, expand that, then scroll down to Remove fork relationship and click on that button to remove the fork relationship.

A


We've got test A and test B in the test stage, and then we've got a deploy job in the deploy stage. Jobs run independently, sometimes on different runners. Again, it's possible to subvert this: if you want to use tagged runners and you only have one runner, that's possible to do if you really want to do it. But discardability of jobs is super important in GitLab if you want to follow best practices. So jobs run independently, sometimes on different runners.

A

All jobs in a stage have to complete successfully before proceeding to the next stage, so the build job has got to be completely done before test A and test B are going to start to run. By the way, there are ways to subvert that, which we're going to talk about. And then the deploy job can't run until test A and test B are done.
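
The stage ordering described above can be sketched as follows. The job names and echo commands are placeholders; the point is that the two test jobs share a stage and so can run at the same time, while each stage waits for the previous one.

```yaml
stages:
  - build
  - test
  - deploy

build_app:
  stage: build
  script:
    - echo "Building"

test_a:                # test_a and test_b start only after build_app succeeds
  stage: test
  script:
    - echo "Running test A"

test_b:
  stage: test
  script:
    - echo "Running test B"

deploy_app:            # starts only after both test jobs succeed
  stage: deploy
  script:
    - echo "Deploying"
```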

A

Now, what we've got here in the code block is a job that we're calling production, and that's the way it's going to show up inside of our stage. By the way, you can see the stage is "deploy function" there, but it's up to you to name your stages arbitrarily, anything you want to. This particular job has a before_script.

A

Now, before_scripts are most commonly used to pull in libraries and things like that that might be needed. In that kind of scenario, your best bet is really to start creating your own Docker images: create a Dockerfile, build it, store it in GitLab, and then pull it down to run your pipelines in. But you do have this before_script capability.
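
The two approaches contrasted above might be sketched like this. The Python image, `requirements.txt`, `pytest`, and the custom image name `ci-python` are all assumptions chosen for the example; `CI_REGISTRY_IMAGE` is a predefined variable pointing at the project's container registry.

```yaml
# Option 1: install dependencies at the start of every job run.
test_with_before_script:
  stage: test
  image: python:3.12
  before_script:
    - pip install -r requirements.txt     # assumes the project has this file
  script:
    - pytest

# Option 2: use an image you built in advance with the dependencies baked in.
test_with_custom_image:
  stage: test
  image: $CI_REGISTRY_IMAGE/ci-python:latest   # hypothetical image in the project's registry
  script:
    - pytest
```

The second form trades a one-time image build for shorter job start-up, which is the trade-off the speaker is recommending.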

A

The important thing to realize about the before_script is that it runs in the same shell as the main script, and this is what you think of as steps right now in Jenkins. So it's going to do the same thing. It's a YAML array, which you can tell by looking at these dash symbols that follow the declaration of the YAML component.

A

This would typically be something that's going to do some cleanup, whatever you need to do after running your steps, and it runs in a separate shell. So if you have a command that fails in that particular section, that's not going to fail your job in the pipeline.
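
Assuming the passage above is describing the after_script keyword, which is what the separate-shell behavior matches, a minimal sketch would be the following. The job name and commands are invented for illustration.

```yaml
unit_test:
  stage: test
  script:
    - echo "Running unit tests"
  after_script:
    - echo "Cleaning up"
    # after_script runs in its own shell; a failing command here
    # does not change the job's pass/fail status.
    - exit 1
```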

A

Now, GitLab runners: they run all the jobs you define in a pipeline. Again, they can be tagged so that specific jobs will be run on certain runners, and you might have a real use case for that. Your team might be developing firmware, and you might want to set up a shell-based runner that has all the libraries loaded to be able to load firmware onto an attached device that's hanging off of USB.
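
Routing a job to a particular runner is done with the tags keyword. In this sketch the tag `firmware-usb`, the script `flash.sh`, and the binary path are hypothetical; the tag would need to match one assigned to the shell runner when it was registered.

```yaml
flash_firmware:                        # hypothetical job name
  stage: deploy
  tags:
    - firmware-usb                     # only runners carrying this tag pick up the job
  script:
    - ./flash.sh build/firmware.bin    # hypothetical flashing script and artifact path
```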

A

Its operation, you know, it takes what it takes, right? But the jobs are typically picked up within five seconds or so, depending on how busy the runner fleet is for you. If you're self-managed, you're going to be managing your own runners. If you're not self-managed and you're on gitlab.com using the shared runners, it's going to be subject to the availability of the runners, but we've got pretty good availability in our runner fleets there.

A


The default name for these pipeline files is .gitlab-ci.yml. You can change that in your project settings if you want to. I recommend that you don't, just because this particular name is very well understood in GitLab. You can see that we've got two stages here: we've got a build and a test stage.

A

We've got the rest of this, and these are all global directives here. Image just sets a default image for our jobs to run in; that's a Docker image. Then we're delineating a cache key to be used on our runners. And then we've got a build app job defined and we've got a unit test job defined, and that's all we've got in our pipeline right now.
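
The workshop's actual file isn't reproduced in the transcript, so the following is only a guess at its overall shape based on the description above. The image, cache key, cache path, job identifiers, and commands are all assumptions.

```yaml
stages:
  - build
  - test

image: alpine:latest             # default image for every job (hypothetical choice)

cache:
  key: $CI_COMMIT_REF_SLUG       # one cache per branch (hypothetical key)
  paths:
    - vendor/                    # hypothetical directory to cache

build_app:
  stage: build
  script:
    - echo "Building the application"

unit_test:
  stage: test
  script:
    - echo "Running unit tests"
```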

A

So in the unit test job, we want to use the after_script keyword to echo out that the build has completed. This is just to play around with the after_script, so you get a chance to see that it can execute commands as well. To edit the pipeline, we need to click Edit in pipeline editor, and then you can see that we're actually using the pipeline editor in this case. We do have a Web IDE if you want to use it, but the pipeline editor has some real advantages.

A

A

So now it's giving us an image of what the unit test job should look like, and that is in fact what mine looks like. Once you've added the code, you can click Commit changes. Let's go ahead and do that. That's going to immediately trigger our pipeline; you can see "Checking pipeline status" up at the top of the screen here.

In just a minute it's going to give me a link to the pipeline, so I can go take a look at it if I want to. Let's go ahead and do that real quickly. What you can see is that the build app job is running right now. The unit test job has this grayed-out circle with a dot in the middle, which just means it's not eligible to run yet. It's not going to be eligible to run until the build app job is done.

A

We're not going to wait for this pipeline to finish; we can go back and check it later if we want to. What the instructions are telling us to do, if you want to do this for yourself, is to click on any of these jobs, and you'll be able to see the job log.

A

So let's talk about some concepts real quickly. We can adjust the execution order for pipeline efficiency if we want to. In this pipeline that you're seeing down here, you can see that these two jobs have got the grayed-out circles with the dots in the middle, which just means they're not available. Jobs in the test stage execute after all jobs in the build stage are completed.

But our desired state is this: we want to add a code quality job, and it does not need the results of the build, so it can execute in parallel. The code quality job, specifically the one that we ship with GitLab, has the ability to execute directly on the code. It doesn't require the build at all. And we want to keep the code quality job in the test stage, so we just want to subvert the processing order here.

A

So we use needs with an empty array. We declare needs, which ordinarily is an array that we would put job names into, and we just make it empty, and that means that this job is eligible to run just as soon as the pipeline starts. That way, at the beginning of the pipeline execution, both jobs are in the running state.
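
A sketch of the empty needs array follows. The `code_quality` job here is a stand-in with an echo command, not the job GitLab ships, and the other job names are also assumptions; only the `needs: []` line is the point.

```yaml
stages:
  - build
  - test

build_app:
  stage: build
  script:
    - echo "Building the application"

unit_test:
  stage: test                    # waits for the whole build stage, as usual
  script:
    - echo "Testing the build"

code_quality:                    # stand-in job for illustration
  stage: test                    # still displayed in the test column
  needs: []                      # no dependencies: starts as soon as the pipeline does
  script:
    - echo "Scanning the source code"
```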

A

Now, we have the ability to do advanced needs, and this gets into directed acyclic graphs, where you'll literally see lines going through a pipeline showing dependencies between jobs. We use the needs directive, in this particular case, to allow jobs to run just as soon as the jobs they depend on have run.

A

So in this particular case we're doing multiple builds. We've got build A and build B. This might be iOS and Android. Test A is eligible to run as soon as build A is done. It doesn't have to wait for build B. And deploy A is eligible to run just as soon as test A is completed, and you can see the same thing in the sequence of jobs for build B. It's actually possible for you to build stageless pipelines now.
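
A sketch of that build A / build B layout, assuming hypothetical job names such as build_a and test_a and placeholder echo commands:

```yaml
stages:
  - build
  - test
  - deploy

build_a:
  stage: build
  script:
    - echo "Build A (for example, iOS)"

build_b:
  stage: build
  script:
    - echo "Build B (for example, Android)"

test_a:
  stage: test
  needs: [build_a]   # starts when build_a finishes, without waiting for build_b
  script:
    - echo "Test A"

test_b:
  stage: test
  needs: [build_b]
  script:
    - echo "Test B"

deploy_a:
  stage: deploy
  needs: [test_a]    # starts when test_a finishes, even if the B chain is still running
  script:
    - echo "Deploy A"

deploy_b:
  stage: deploy
  needs: [test_b]
  script:
    - echo "Deploy B"
```

Each chain (A and B) proceeds at its own pace, which is where the reduction in cycle time comes from.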

A

That allows the needs keyword to be used in the same stage, and by the way, that works without a stageless pipeline too. Previously this could only be used between jobs in different stages. We've recently made changes to that in GitLab, in our 15.x stream, and so now jobs can be dependent on jobs that are in the same stage.
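
One way a stageless pipeline could look, as a sketch with made-up job names. No stages list and no stage keys are declared, so every job lands in the default test stage and the order comes only from needs:

```yaml
compile:
  script:
    - echo "Compiling"

lint:
  needs: []            # nothing to wait for
  script:
    - echo "Linting"

package:
  needs: [compile]     # same stage as compile, ordered only by needs
  script:
    - echo "Packaging"
```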

A

So why is this useful? Stageless pipelines make your pipeline more efficient, and they implicitly configure the execution order. It's faster to write more efficient pipelines with less cycle time. Now, to be very frank, even if you put all your jobs into stages but then take the time to create these needs dependencies all the way through for every single job, you're going to get the same effect.

A

So first, let's talk about execution order. If you're coming right from the last track, you should still be on the pipeline page, but if you navigated away, you can just go back to your project, use the left-hand navigation menu to go through to Build > Pipelines, and pick the hyperlink for the most recent pipeline.

A

And by the way, you're seeing a very odd layout here. That's because my screen is so narrow. But you can see that our pipeline, which is the only one that I've run in this project, has passed. We click on this passed status, which by the way could be failed, or it could be a warning, depending on the jobs we've got in there.

A

They ran sequentially, and then the thing we were supposed to do from the last step was to look and find this echo that we put into the after_script. So we added the after_script, and you can see the command listed right here: echo "build app job has run". And "build app job has run" has just been echoed out.
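
For reference, an after_script like the one being inspected might look roughly like this. The job name and the exact echo text are assumptions reconstructed from the recording:

```yaml
build_app:
  stage: build
  script:
    - echo "Building the application"
  after_script:
    # Runs after the script section, whether the script succeeded or failed.
    - echo "build_app job has run"
```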

A

A

Now, the instruction is to go through Build > Pipeline editor, but it's also possible to do that here: we can edit this file in the pipeline editor. Being that this is the pipeline file for this project, it's the only one that's going to show us the option to edit in the pipeline editor. But let's go ahead and follow the path it's telling us to take and go to Build > Pipeline editor.

A

A

A

B

A

A

And you can see that build app is still running, but now code quality is already done and the unit test is running right now. Now, we put in a very simple code quality job. We just put a stub job in there. It's not the actual code quality test that ships with GitLab. We just put it in there so we could illustrate how this works.

A

A

A

We're going to create a new deploy stage that we're going to put a job into, and then we're going to add a directed acyclic graph to this pipeline. That's fairly extensive, so we're going to capture all the code that's in this next section down, and we're going to append that onto our pipeline.

A

A

Now our job is pending, which just means it's waiting for a runner to pick it up, but you can see that build B, build A, and build app are running right now. If we take just a minute... and you can see several jobs fired off all at once there. So the next set of runners checked in, picked up the jobs that were available, and started running them.

A

If we click on Needs up here at the top, we can actually see what is graphically represented as a directed acyclic graph. Now, it's not going to include any jobs that don't have needs defined in some way and some dependency chain to show. But you can see that test A is dependent on build A, and deploy A is dependent on test A and build A.

A

So let's move on to the next step. We're going to talk about rules and failures. Rules are just a way... and by the way, rules have several different applications in GitLab. You can put them in jobs. If you want to put your rules in jobs, you can absolutely do that, and that's the most common way of building pipelines so that jobs have independence. You might have half a dozen rules in any specific job, so it can execute under certain...

A

...certain evaluated circumstances, and maybe it even has a default that just always runs under some circumstances. But you also have the ability to deal with failures. Now, you might have a test, or something along those lines, that is stuck in your pipeline. It's some test that your team has put together, and they just really need to get through it.

A

A

All right, so as you come back to the team and show them your new pipeline, you notice that one of your test jobs is failing. Now, the normal default behavior is that if a job fails, it's going to stop the pipeline. Any jobs that would be eligible to run after that job fails, and that haven't already started running, are not going to be eligible to execute after that.

A

So after taking a look into the job, it's determined that you don't actually need to enforce it passing, but you still want to be able to see the test results. And again, this is by clicking on the job name and going through to the job log. This section will show you how to use rules and allow_failure clauses in your GitLab pipelines. So, allowing job failure, and what we're seeing on the left here is...

A

We can see there's a unit test at the top, and it's got allow_failure: true. The default for GitLab is allow_failure: false, so you don't have to put allow_failure in there at all if you want your jobs to stop your pipelines. But if you want, for some reason, to tolerate a failed job, you need to put in allow_failure: true.
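
A small sketch of a job that is allowed to fail. The job names and the deliberately failing command are assumptions for illustration only:

```yaml
unit_test:
  stage: test
  script:
    - exit 1               # simulate a failing test
  allow_failure: true      # the job shows a warning, and later jobs still run

deploy_app:
  stage: deploy
  script:
    - echo "Still runs even though unit_test failed"
```

Leaving allow_failure out is the same as setting it to false for a job like this, in which case the failure would stop the pipeline.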

A

A

A

A

So again, you can actually have jobs that don't have rules at all. They're going to run for every single pipeline trigger, unless you're using workflow rules, where you can actually shut the pipelines down if you want to. But this job can just have when: on_success, when: delayed, or when: always, and it'll be included in every pipeline. If no rule is defined and no when clause is specified, remember that when: on_success is the default, so you can just create the job and it's going to run in every pipeline.
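
To make that concrete, here is a hedged sketch. The job names are invented, and the workflow rule that skips tag pipelines is only one example of shutting pipelines down:

```yaml
workflow:
  rules:
    - if: $CI_COMMIT_TAG
      when: never          # do not create pipelines for tags at all
    - when: always         # create a pipeline in every other case

plain_job:
  # No rules and no when clause: behaves as when: on_success.
  script:
    - echo "Included in every pipeline that workflow allows"

report_job:
  stage: .post
  when: always             # runs even if an earlier job failed
  script:
    - echo "Always runs at the end"
```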

A

So if we want to talk about what a rule looks like, it's certainly possible to do an evaluation in the context of a rule. I want you to notice that rules is an array. It's got these dashes following it on separate lines in this particular case. And by the way, rules always evaluate prior to the script. Rules run in GitLab itself, and then, if the job is eligible to run in that particular pipeline, it gets added to the queue of jobs that are eligible and ready for runners to pick up.

A

So in this particular case, we're looking at the pipeline source variable, CI_PIPELINE_SOURCE, and if that equals web, this job is going to run. Now notice it doesn't have any other rules, so it's going to have to match that specific circumstance for this job to run. But the important thing to realize about this is that if statements can reference variables. GitLab has a very, very long list of predefined variables that look at things like: is this branch the default branch? Is this a merge request? Is it a pull request?

A

Is this being triggered by the API? Is it being triggered by the manual pipelines page, which is what this particular one is here? Web refers to the manual pipelines page. We've been going through to Build > Pipelines and looking at that page, and there's a Run pipeline button at the upper right. If we were to click on that and run a pipeline, this rule would be true, so this job will only run when the pipeline is kicked off from the web form.
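
The rule being described would look something like the following sketch, where the job name and script are assumptions:

```yaml
web_only_job:
  script:
    - echo "Started from the Run pipeline form"
  rules:
    # rules is an array; this single entry must match for the job to be added.
    - if: $CI_PIPELINE_SOURCE == "web"
```

Because there is no other rule to fall back on, the job is left out of pipelines started by a push, a schedule, or the API.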

A

A

Maybe you only want to run them on your default branch. Maybe you only want to run them in a merge request. This is the way for you to make sure that happens. So the if clause lets us evaluate an expression. This can be if a variable equals some value, and there are lots of options on that. And then we have the ability to look for changes in the code too.
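
A few rule shapes along those lines, sketched with hypothetical job names and a hypothetical docs/ directory:

```yaml
default_branch_job:
  script:
    - echo "Only on the default branch"
  rules:
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH

merge_request_job:
  script:
    - echo "Only in merge request pipelines"
  rules:
    - if: $CI_PIPELINE_SOURCE == "merge_request_event"

docs_job:
  script:
    - echo "Only when files under docs/ changed"
  rules:
    - changes:
        - docs/**/*
```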

A

So if the commit has changes to a specific file, or to a set of files in a certain subdirectory, that's a